Detecting Faces in Grayscale Images

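1. Knowledge-based methods: encode rules about what a face looks like

2. Feature-invariant approaches: find the nose or eyes; detect features that stay invariant (edges, shape, texture …)

3. Template matching methods: compare a face template against the image

4. Appearance-based methods: machine learning; the face model is learned from example images, so training data is needed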

Face Recognition Challenges:

1. Inter-class similarity (telling apart different people who look alike)

2. Intra-class variability (the same person under different expressions, pose, illumination, and accessories)

Face Recognition Techniques:

1. Feature methods: pure geometry

2. Holistic methods: use the whole face (correlation, PCA, LDA)

3. Hybrid methods: EBGM, LFA

Here comes the Hero:

PCA: Principal Component Analysis.

A face image defines a point in the high-dimensional image space.

Face images can be projected into an appropriately chosen subspace spanned by eigenfaces, and classification can then be performed by computing similarity in that subspace.

Geometric interpretation:

PCA maximizes variance: it projects the data onto the directions along which the data varies the most. In other words, it minimizes the correlation between the retained components.

Covariance matrix:

1. It represents the relation between dimensions.

2. The elements on the diagonal are the variances of each dimension; the others are the covariances between two dimensions.

Diagonalizing the covariance matrix (so that every entry off the diagonal is 0) therefore yields new dimensions that are uncorrelated: the variance is concentrated along each new dimension, and the redundancy introduced by correlation with other dimensions is removed.

Then we choose the dimensions with the largest eigenvalues to construct the projection matrix; these eigenvectors are the coordinate axes of the new low-dimensional space.

Finally, we project the original data into the new low-dimensional space.
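The steps above can be sketched on a toy data set. This is only an illustration under assumptions that are not in the original notes: the 2-D data is synthetic, and all variable names (X, Xc, C, Wp, Y, Cnew) are made up for the example.

```matlab
% Toy PCA sketch on synthetic 2-D data (hypothetical data and names).
rng(0);
n  = 500;
z  = randn(2, n);
X  = [2 0; 1.5 0.5] * z;                 % correlated data, one sample per column

Xc = X - repmat(mean(X, 2), 1, n);       % remove the mean of each dimension
C  = (Xc * Xc') / (n - 1);               % covariance matrix (2,2)

[V, D] = eig(C);                         % diagonalize the covariance matrix
[lambda, idx] = sort(diag(D), 'descend');
V = V(:, idx);                           % eigenvectors ordered by eigenvalue

k  = 1;                                  % keep the direction of largest variance
Wp = V(:, 1:k);                          % projection matrix (2,k)
Y  = Wp' * Xc;                           % projected data (k,n)

% In the rotated basis the covariance is diagonal: off-diagonals are ~0
Cnew = cov((V' * Xc)');
```

After running it, Cnew(1,2) should be zero up to rounding, and the variance of Y should match lambda(1), the largest eigenvalue.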

Project description: You are given three sets of 100 facial images: training.zip, probe.zip, and gallery.zip.

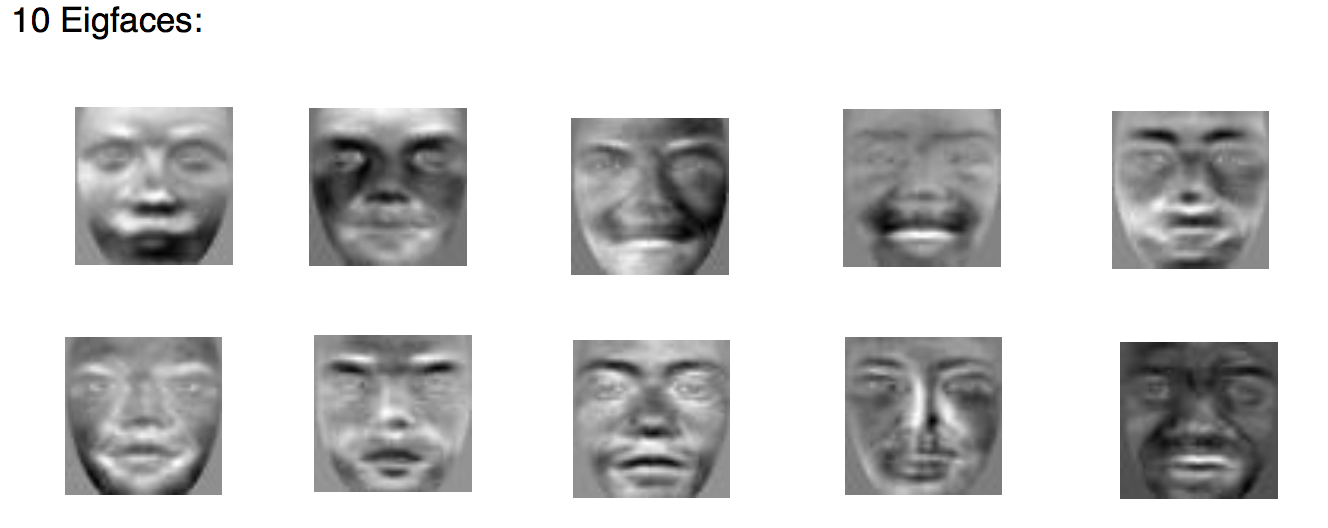

(i) Write source code that computes the eigen-faces (i.e., basis images) using the 100 face images in the training set. Show the eigen-faces corresponding to the top 10 eigen-values.

Each face is 50*50 pixels, flattened into a (2500,1) column vector, so the data matrix T for all 100 faces is (2500,100).

Step 1:

Compute the average face, then subtract it from every original face so that each pixel (dimension) has zero mean across the 100 faces. We get

A (2500,100)
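A minimal sketch of step 1, assuming the images have already been loaded into T as double-precision columns. Random values stand in for the real images here, and meanface is a name introduced only for this example.

```matlab
% Step 1 sketch. T is a random placeholder for the real (2500,100) image matrix.
% With real data each column would be one flattened 50x50 image, for example
% T(:,i) = reshape(double(img), [], 1), where img is a hypothetical loaded image.
rng(0);
T = 255 * rand(2500, 100);

meanface = mean(T, 2);                        % average face (2500,1)
A = T - repmat(meanface, 1, size(T, 2));      % mean-centered faces (2500,100)

% Every pixel (row of A) now has zero mean across the faces
maxRowMean = max(abs(mean(A, 2)));
```

The same meanface has to be kept and subtracted later from any probe or gallery image before projecting it.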

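Step 2: Compute the eigenvalues and eigenvectors.

L=A'*A; % L is (100,100), much cheaper to decompose than the (2500,2500) matrix A*A'

[V,d1]=eig(L); % columns of V are the eigenvectors; V is (100,100)

d=diag(d1);

[dsort,idx]=sort(d,1,'descend'); % keep the sort order so the eigenvectors can be reordered to match

top10d=dsort(1:10);

eigvector=V(:,idx(1:10)); % eigenvectors of the 10 largest eigenvalues; eigvector is (100,10)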
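A short derivation of why the small (100,100) matrix is enough. The symbols $v_i$, $\lambda_i$ and $u_i$ are introduced here for the explanation and do not appear in the original notes. If $v_i$ is a unit eigenvector of $A^{\top}A$ with eigenvalue $\lambda_i > 0$, then

$$
A^{\top}A\,v_i = \lambda_i v_i
\;\Longrightarrow\;
A A^{\top}\,(A v_i) = \lambda_i\,(A v_i),
$$

so $A v_i$ is an eigenvector of the large matrix $A A^{\top}$ with the same eigenvalue. Its length follows from

$$
\lVert A v_i \rVert^{2} = v_i^{\top} A^{\top} A\, v_i = \lambda_i ,
$$

which means $u_i = \lambda_i^{-1/2} A v_i$ has unit length. This is where the factor of eigenvalue to the power $-1/2$ in step 3 comes from.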

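Step 3: Project and show the eigen-faces corresponding to the top 10 eigen-values.

Eigface=zeros(size(A,1),10);

for i=1:10

Eigface(:,i)=A*eigvector(:,i)*(top10d(i,1).^(-1/2)); % map back to image space and scale to unit length

end

Eigface is (2500,10); each column is one eigenface, which can be reshaped to (50,50) for display.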
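Steps 2 and 3 can be checked end to end with a short script. This is a sketch under assumptions: A is filled with random numbers instead of real faces, and orthoErr and eigErr are names made up for the sanity checks. The plotting calls are left as comments so that the script also runs without a display.

```matlab
% Sketch of steps 2 and 3 on placeholder data (random A instead of real faces).
rng(0);
A = randn(2500, 100);
A = A - repmat(mean(A, 2), 1, 100);       % mean-centered columns

L = A' * A;                               % small (100,100) matrix
L = (L + L') / 2;                         % enforce exact symmetry before eig
[V, D] = eig(L);
[dsort, idx] = sort(diag(D), 'descend');
top10d    = dsort(1:10);
eigvector = V(:, idx(1:10));

Eigface = zeros(2500, 10);
for i = 1:10
    Eigface(:, i) = A * eigvector(:, i) * top10d(i)^(-1/2);
end

% Sanity checks: the columns are orthonormal and are eigenvectors of A*A'
orthoErr = norm(Eigface' * Eigface - eye(10), 'fro');
u = Eigface(:, 1);
eigErr = norm(A * (A' * u) - top10d(1) * u) / top10d(1);

% Display (uncomment when a figure window is available):
% for i = 1:10
%     subplot(2, 5, i);
%     imagesc(reshape(Eigface(:, i), 50, 50)); colormap gray; axis image off;
% end
```

Both orthoErr and eigErr should be close to zero. With real face data the reshaped columns look like ghostly faces; with the random placeholder they are just noise.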

(ii) Select the top 30 eigen-faces and compute the eigen-coefficients of all the images in the dataset.

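W=Eigfaces'*double(A); % training-set coefficients

W is (30,100), where Eigfaces is the (2500,30) matrix holding the top 30 eigenfaces, built in the same way as in step 3.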
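A sketch of how the coefficients might be computed for the training set and for one new image. The data is random placeholder data, and the names x, w, Ahat and relErr are hypothetical. The point to note is that a new image must have the training mean subtracted before it is projected.

```matlab
% Eigen-coefficient sketch with the top 30 eigenfaces (placeholder data).
rng(0);
T = 255 * rand(2500, 100);                 % stand-in for the training images
meanface = mean(T, 2);
A = T - repmat(meanface, 1, 100);

L = A' * A;
L = (L + L') / 2;                          % enforce exact symmetry before eig
[V, D] = eig(L);
[dsort, idx] = sort(diag(D), 'descend');
k = 30;
Eigfaces = A * V(:, idx(1:k)) * diag(dsort(1:k).^(-1/2));   % (2500,30)

W = Eigfaces' * A;                         % training coefficients (30,100)

% Coefficients of a new image: subtract the TRAINING mean first
x = 255 * rand(2500, 1);                   % stand-in for a probe/gallery image
w = Eigfaces' * (x - meanface);            % (30,1)

% How much of the training data the 30 coefficients retain
Ahat   = Eigfaces * W;                     % reconstruction in image space
relErr = norm(A - Ahat, 'fro') / norm(A, 'fro');
```

Increasing k lowers relErr at the cost of a longer coefficient vector per face.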

(iii) Using the probe and gallery sets, plot the ROC curve indicating the EER summarizing the matching performance using these coefficients as well as the score distributions.

Use the eigenfaces to compute the coefficients of each set, then calculate the distance (Euclidean, Manhattan, or Minkowski) between every probe image and every gallery image.
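A sketch of the matching experiment, under assumptions that must be checked against the real data: the coefficients G and P are synthetic, and probe i is assumed to show the same person as gallery i (the actual identity labels of the files are not given in these notes). All names here are hypothetical.

```matlab
% ROC / EER sketch on synthetic coefficients.
% ASSUMPTION: probe column i and gallery column i belong to the same person.
rng(0);
nId = 20; k = 30;
G = randn(k, nId);                   % gallery coefficients (placeholder)
P = G + 1.0 * randn(k, nId);         % probe coefficients (placeholder)

p = 2;                               % 2 = Euclidean, 1 = Manhattan, other p = Minkowski
Dist = zeros(nId, nId);
for i = 1:nId
    for j = 1:nId
        Dist(i, j) = norm(P(:, i) - G(:, j), p);
    end
end

isGenuine = logical(eye(nId));
genuine   = Dist(isGenuine);         % same-person scores
impostor  = Dist(~isGenuine);        % different-person scores

thr = sort(Dist(:))';                % candidate thresholds
FAR = zeros(size(thr)); FRR = zeros(size(thr));
for t = 1:numel(thr)
    FAR(t) = mean(impostor <= thr(t));   % impostors wrongly accepted
    FRR(t) = mean(genuine  >  thr(t));   % genuine pairs wrongly rejected
end

[~, tEER] = min(abs(FAR - FRR));     % operating point where FAR is closest to FRR
EER = (FAR(tEER) + FRR(tEER)) / 2;

% Plots (uncomment when a figure window is available):
% figure; plot(FAR, 1 - FRR); xlabel('FAR'); ylabel('1 - FRR');
% figure; hist(genuine); figure; hist(impostor);
```

Because smaller distances mean better matches, a pair is accepted when its distance is at or below the threshold. The EER is the error rate at the threshold where false accepts and false rejects balance.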

In a nutshell,

Reduce the dimension of the data from N^2 (the pixels of an N*N image) to M (the number of eigenvectors kept):

1. Subtract the average face.

2. Compute the projection onto the face space.

3. Compute the distance in the face space between the face and all known faces.
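The three steps can be sketched as a nearest-neighbour lookup. Everything here is synthetic and hypothetical: known stands in for the known faces, and query is a noisy copy of known face 42, so the lookup should recover that index.

```matlab
% Recognition sketch: subtract mean, project, find the closest known face.
rng(0);
nPix = 2500; nKnown = 100; k = 30;
known = 255 * rand(nPix, nKnown);            % placeholder known faces
meanface = mean(known, 2);
A = known - repmat(meanface, 1, nKnown);

L = A' * A;
L = (L + L') / 2;                            % enforce exact symmetry before eig
[V, D] = eig(L);
[dsort, idx] = sort(diag(D), 'descend');
U = A * V(:, idx(1:k)) * diag(dsort(1:k).^(-1/2));   % face-space basis (2500,30)
Wknown = U' * A;                             % coefficients of known faces (30,100)

query = known(:, 42) + 5 * randn(nPix, 1);   % noisy copy of known face 42

wq    = U' * (query - meanface);             % steps 1 and 2
dists = sqrt(sum((Wknown - repmat(wq, 1, nKnown)).^2, 1));   % step 3, Euclidean
[~, best] = min(dists);                      % index of the closest known face
```

In practice a threshold on the smallest distance is also useful, so that a face far from every known face can be rejected as unknown.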

Problems with eigenfaces:

No distinction between inter-class and intra-class variability (LDA does better here)

Different illumination

Different head pose

Different alignment

Different facial expression

LDA

1. maximizes the between-class scatter

2. minimizes the within-class scatter

Reduces the dimension of the data from N^2 to P-1, where P is the number of people (classes).
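A small sketch of the LDA idea on synthetic data, showing why at most P-1 dimensions come out. The data, the class count P = 3, and all names (Sw, Sb, Wlda, Y) are assumptions made for illustration. The feature dimension is kept low here so that the within-class scatter is invertible; for raw face images it is singular, which is why PCA is commonly applied first.

```matlab
% LDA sketch: maximize between-class scatter relative to within-class scatter.
rng(0);
P = 3; nPer = 20; d = 5;                 % classes, samples per class, feature dimension
centers = 4 * randn(d, P);
X = []; y = [];
for c = 1:P
    X = [X, repmat(centers(:, c), 1, nPer) + randn(d, nPer)];
    y = [y, c * ones(1, nPer)];
end

mu = mean(X, 2);
Sw = zeros(d); Sb = zeros(d);
for c = 1:P
    Xc  = X(:, y == c);
    mc  = mean(Xc, 2);
    Xc0 = Xc - repmat(mc, 1, size(Xc, 2));
    Sw  = Sw + Xc0 * Xc0';                           % within-class scatter
    Sb  = Sb + size(Xc, 2) * (mc - mu) * (mc - mu)'; % between-class scatter
end

[V, D] = eig(Sb, Sw);                    % generalized problem Sb*v = lambda*Sw*v
[lambda, idx] = sort(real(diag(D)), 'descend');
Wlda = real(V(:, idx(1:P-1)));           % only P-1 useful directions
Y = Wlda' * X;                           % projected data (P-1, P*nPer)

nUseful = sum(lambda > 1e-8 * lambda(1));
```

Sb is built from P class means that sum (weighted) to the global mean, so its rank is at most P-1; nUseful should therefore come out as 2 for three classes.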
