Problem Description:

Develop a program to tone map HDR images using point-processing and region-processing filters.

First Simple Tone Mapping

Your first tone mapping operator will gamma correct the image in the log space of its luminance. This is an example of a point-processing technique. Doing so requires three steps:

1. First construct the luminance data for the image. You can use whatever operator you want (e.g. YUV, xyY, etc.). The simplest is Lw = (20.0 R + 40.0 G + B) / 61.0 for each pixel.

2. Next, compute Ld = Lw^γ (γ is the gamma value) for each pixel. However, instead of using the powf() function, it is more efficient to do this in log space with multiplication. To do so, compute log(Lw) for each pixel, then perform log(Ld) = γ * log(Lw). Finally compute Ld = exp(log(Ld)).

3. Finally, compute Cd = (Ld / Lw) * C for each color channel C = {R, G, B}. This scales each color of each pixel relative to the target display luminance and the input world luminance.
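
The three steps above can be sketched as follows. This is only a minimal illustration, not the code used for the results in this post: the `Rgb` struct, the function names, and the small `eps` guard against log(0) are assumptions made for the example.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

struct Rgb { float r, g, b; };  // hypothetical pixel type for this sketch

// Step 1: luminance Lw = (20R + 40G + B) / 61.
inline float luminance(const Rgb& p) {
    return (20.0f * p.r + 40.0f * p.g + p.b) / 61.0f;
}

// Steps 2 and 3: gamma correct the luminance in log space,
// then rescale every color channel by Ld / Lw.
void gammaToneMap(std::vector<Rgb>& pixels, float gamma) {
    const float eps = 1e-6f;  // assumption: avoids log(0) on black pixels
    for (Rgb& p : pixels) {
        float Lw = std::max(luminance(p), eps);
        float logLd = gamma * std::log(Lw);  // log(Ld) = gamma * log(Lw)
        float Ld = std::exp(logLd);
        float scale = Ld / Lw;
        p.r *= scale;
        p.g *= scale;
        p.b *= scale;
    }
}
```

Calling `gammaToneMap(pixels, 0.1f)` would correspond to the gamma value shown for the result below.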

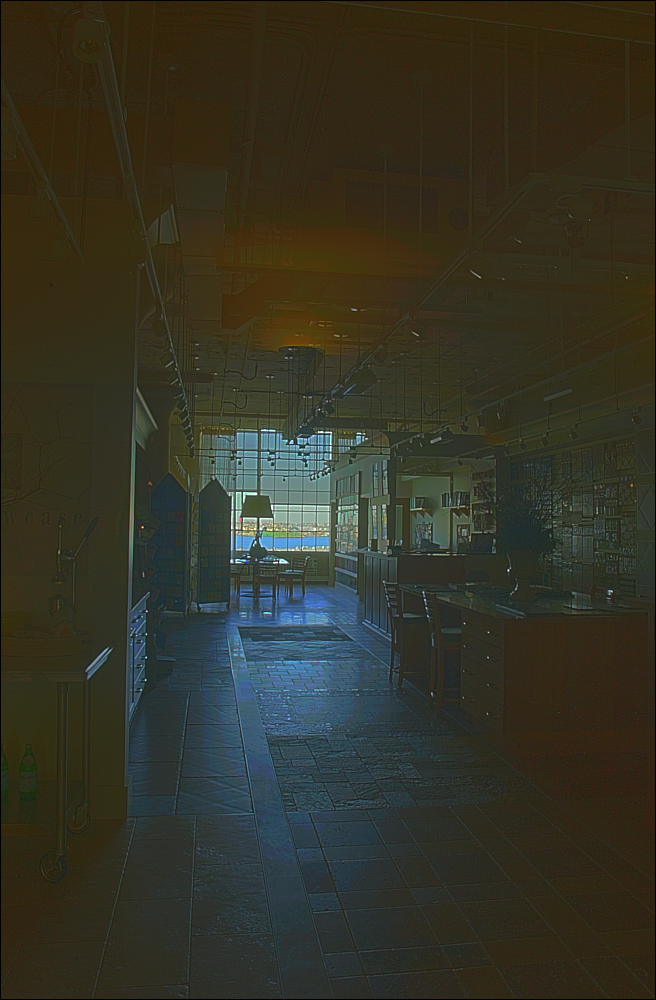

Original image:

After:

gamma = 0.1

Second Tone Mapping with Convolution

Section 10.2 of Szeliski describes a very simple tone mapping operator which separately processes the low-pass (B) and high-pass (S) data of the log(Lw) channel. We will build these two channels using convolution.

First, you will need to implement a convolution operator, g, that performs low-pass smoothing by doing log(Lw) ⊗ g. g can be anything you like, but I suggest using a box filter of varying sizes (even 5×5 will improve the tone map, but up to 20×20 may do better). Later you may want to experiment with tent or Gaussian filters. The basic process you will perform, for each pixel, is the following:

1. B = log(Lw) ⊗ g

2. S = log(Lw) - B

3. log(Ld) = γ * B + S

4. Ld = exp(log(Ld))

5. Cd = (Ld / Lw) * C

Note that Szeliski incorrectly multiplies S by γ in step 3.

Setting γ in this situation can be tricky to understand. In general, the idea is that you want to preserve some contrast threshold c. On Durand’s website (near the bottom) he suggests using a γ, called the “compression factor”, set relative to the minimum and maximum of B. Specifically, γ = log(c) / (max(B) - min(B)). I found that c ∈ [5, 100] worked well. He also suggests subtracting an absolute scale from the formulation. I found that both of these changes improved my results.
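
The compression factor can be computed directly from the range of B. The sketch below also subtracts an absolute scale, under the assumption that the scale is γ · max(B), so that the brightest base value maps to log(Ld) = 0. That reading should be checked against Durand's page. The function names and the fallback for a flat base layer are likewise assumptions for this example.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// gamma = log(c) / (max(B) - min(B)). B must be non-empty.
float compressionFactor(const std::vector<float>& B, float c) {
    auto mm = std::minmax_element(B.begin(), B.end());
    float range = *mm.second - *mm.first;
    // Assumption: a perfectly flat base layer gets no compression.
    return range > 0.0f ? std::log(c) / range : 1.0f;
}

// log(Ld) = gamma * B + S - gamma * max(B).
// The last term is the assumed absolute scale.
std::vector<float> compressWithScale(const std::vector<float>& B,
                                     const std::vector<float>& S,
                                     float c) {
    float gamma = compressionFactor(B, c);
    float scale = gamma * *std::max_element(B.begin(), B.end());
    std::vector<float> logLd(B.size());
    for (std::size_t i = 0; i < B.size(); ++i) {
        logLd[i] = gamma * B[i] + S[i] - scale;
    }
    return logLd;
}
```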

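void ContoneMap(rgba_pixel **image) // -c mode
{
    // Low-pass filter the log luminance; the result B is assumed to end up in temp_buffer.
    convolve(conFilter, Lw);

    // Allocate Ld and S as contiguous HEIGHT x WIDTH arrays with row pointers.
    float **Ld = new float*[HEIGHT];
    Ld[0] = new float[WIDTH*HEIGHT];
    for (int i=1; i<HEIGHT; i++) {
        Ld[i] = Ld[i-1] + WIDTH;
    }
    S = new float*[HEIGHT];
    S[0] = new float[WIDTH*HEIGHT];
    for (int i=1; i<HEIGHT; i++) {
        S[i] = S[i-1] + WIDTH;
    }

    // Find the range of B. Start from the first sample: log values can be
    // negative, so a fixed starting value such as max = 0 can give a wrong range.
    float min = temp_buffer[0][0], max = temp_buffer[0][0];
    for (int row=0; row<HEIGHT; row++) {
        for (int col=0; col<WIDTH; col++) {
            if (temp_buffer[row][col] < min) min = temp_buffer[row][col];
            if (temp_buffer[row][col] > max) max = temp_buffer[row][col];
        }
    }
    cout << max << endl;
    cout << min << endl;

    // Compression factor for the contrast threshold c (requires max > min).
    gam = log(c) / (max - min);
    cout << "gam= " << gam << endl;

    for (int row=0; row<HEIGHT; row++) {
        for (int col=0; col<WIDTH; col++) {
            // Lw is assumed to hold log luminance and lw the linear luminance.
            S[row][col] = Lw[row][col] - temp_buffer[row][col];             // detail layer
            Ld[row][col] = exp(gam*temp_buffer[row][col] + S[row][col]);    // exp(gam*B + S)
            // Scale each color channel by Ld / lw.
            image[row][col].r = image[row][col].r * Ld[row][col] / lw[row][col];
            image[row][col].g = image[row][col].g * Ld[row][col] / lw[row][col];
            image[row][col].b = image[row][col].b * Ld[row][col] / lw[row][col];
            image[row][col].a = 1.0;
        }
    }

    // Ld is local to this function, so release it here.
    delete[] Ld[0];
    delete[] Ld;
}

Using a box filter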

size 7

Using Gaussian filters

Advanced Extension

Modify your convolution-based tone mapper to act like a bilateral filter. The idea is that when you tone map with convolution, you will create halos based on how big of a window you convolve against. These halos are the result of crossing edges in the image.

To do so, you will modify your convolution to produce a non-linear operator. Durand suggests quite a few options for this, and you are welcome to experiment with your own. One option I found: multiply each term by a weight w = exp(-clamp(d^2)), where d is the difference in log(Lw) between the center pixel of the convolution and whichever other pixel j you are summing.

This mode uses bilateral filtering to tone map the image. Each weight of the filter is determined by differences in the log-space luminance of the image.
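
A minimal sketch of such an edge-aware filter is shown below. It assumes the exponent is negative, so that pixels whose log luminance differs from the center get less weight, and it assumes the clamp limits d² to a hypothetical upper bound `maxD2`. The flat row-major layout, the function name, and the renormalization by the sum of weights are choices made for this example, not necessarily what the program in this post does.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Edge-aware box filter over log(Lw).
// Each neighbor j is weighted by w = exp(-min(d*d, maxD2)),
// where d = logLw[j] - logLw[center].
std::vector<float> bilateralBase(const std::vector<float>& logLw,
                                 int width, int height,
                                 int radius, float maxD2) {
    std::vector<float> B(logLw.size());
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            float center = logLw[y * width + x];
            float sum = 0.0f;
            float wsum = 0.0f;
            for (int dy = -radius; dy <= radius; ++dy) {
                for (int dx = -radius; dx <= radius; ++dx) {
                    int yy = y + dy;
                    int xx = x + dx;
                    // Assumption: neighbors outside the image are skipped.
                    if (yy < 0 || yy >= height || xx < 0 || xx >= width) continue;
                    float v = logLw[yy * width + xx];
                    float d = v - center;
                    float w = std::exp(-std::min(d * d, maxD2));
                    sum += w * v;
                    wsum += w;
                }
            }
            // wsum >= 1 because the center pixel always has weight 1.
            B[y * width + x] = sum / wsum;
        }
    }
    return B;
}
```

The returned B replaces the plain convolution result in step 1. Across a strong edge the weights fall toward zero, so the base layer stays sharp there and the halos shrink.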
