1、Failed to start namenode.

Reformatting Hadoop with the command below fails; the log output follows.

hdfs namenode -format

Formatting using clusterid: CID-dd578a63-1547-43fb-a4c4-4d058a9ab002

2021-12-06 18:43:13,572 ERROR namenode.NameNode: Failed to start namenode.

java.lang.NullPointerException

at java.io.File.<init>(File.java:277)

at org.apache.hadoop.hdfs.server.namenode.NNStorage.setStorageDirectories(NNStorage.java:320)

at org.apache.hadoop.hdfs.server.namenode.NNStorage.<init>(NNStorage.java:179)

at org.apache.hadoop.hdfs.server.namenode.FSImage.<init>(FSImage.java:167)

at org.apache.hadoop.hdfs.server.namenode.NameNode.format(NameNode.java:1206)

at org.apache.hadoop.hdfs.server.namenode.NameNode.createNameNode(NameNode.java:1673)

at org.apache.hadoop.hdfs.server.namenode.NameNode.main(NameNode.java:1783)

2021-12-06 18:43:13,587 INFO util.ExitUtil: Exiting with status 1: java.lang.NullPointerException

2021-12-06 18:43:13,619 INFO namenode.NameNode: SHUTDOWN_MSG:

Check hdfs-site.xml

This error is caused by a misconfigured hdfs-site.xml. Check carefully that the <name>dfs.namenode.shared.edits.dir</name> property is filled in correctly; a wrong value there is the usual cause.
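To see which value is actually written in the file, a small helper can print the `<value>` that belongs to a given `<name>`. This is only a sketch: `get_hadoop_prop` is a hypothetical helper name, and it assumes each `<name>` and `<value>` element sits on a single line, as in typical Hadoop site files.

```shell
# Print the <value> of one property from a Hadoop *-site.xml file.
# Sketch only: get_hadoop_prop is a hypothetical helper, not part of Hadoop.
# Assumes <name>...</name> and <value>...</value> each fit on one line
# and that the <value> follows its <name>.
# Usage: get_hadoop_prop FILE KEY
get_hadoop_prop() {
  awk -v key="$2" '
    index($0, "<name>" key "</name>") { found = 1 }
    found && match($0, /<value>.*<\/value>/) {
      # Strip the <value> (7 chars) and </value> (8 chars) tags.
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$1"
}
```

For example, assuming the installation lives in /usr/local/hadoop: `get_hadoop_prop /usr/local/hadoop/etc/hadoop/hdfs-site.xml dfs.namenode.shared.edits.dir`. On a node with Hadoop on the PATH, `hdfs getconf -confKey dfs.namenode.shared.edits.dir` should print the value Hadoop itself resolves.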

The correct configuration is as follows:

2、Failed to add storage directory

Check the logs

2021-12-06 18:58:13,948 WARN org.apache.hadoop.hdfs.server.common.Storage: Failed to add storage directory [DISK]file:/usr/local/hadoop/hadoopdata/datanode

java.io.IOException: Incompatible clusterIDs in /usr/local/hadoop/hadoopdata/datanode: namenode clusterID = CID-5cc3c497-58d4-4d06-a19d-71bdb88b13ce; datanode clusterID = CID-79134119-10c9-4381-a6a5-b4854ddb81b0

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.run(BPServiceActor.java:829)

at java.lang.Thread.run(Thread.java:748)

2021-12-06 18:58:13,953 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: Initialization failed for Block pool <registering> (Datanode Uuid a1081773-99a9-4a1a-a536-e5951fd35212) service to master/192.168.57.110:9000. Exiting.

java.io.IOException: All specified directories have failed to load.

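This typically happens after the NameNode has been reformatted: it receives a new clusterID, while the DataNode still holds the old one. Delete the current directory under the DataNode's data directory. Note that this also removes the blocks stored on that DataNode.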

[root@hadoop-slave data]# pwd

/usr/local/hadoop-2.10.0/data/dfs/data

[root@hadoop-slave data]# ls

current

[root@hadoop-slave data]# rm -rf current

[root@hadoop-slave data]#
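Before deleting anything, the mismatch reported in the log can be confirmed by reading the `clusterID=` line from the `current/VERSION` file on each side. The sketch below assumes that layout; `cluster_id` and `same_cluster` are hypothetical helper names, and the storage directories passed in are whatever your configuration points to.

```shell
# Print the clusterID recorded in a storage directory's current/VERSION file.
# Sketch only: both helper names are hypothetical.
# Usage: cluster_id STORAGE_DIR
cluster_id() {
  sed -n 's/^clusterID=//p' "$1/current/VERSION"
}

# Compare a NameNode and a DataNode storage directory.
# Prints both IDs and returns 0 only when they are non-empty and equal.
# Usage: same_cluster NAMENODE_DIR DATANODE_DIR
same_cluster() {
  nn_id=$(cluster_id "$1")
  dn_id=$(cluster_id "$2")
  echo "namenode clusterID = $nn_id"
  echo "datanode clusterID = $dn_id"
  [ -n "$nn_id" ] && [ "$nn_id" = "$dn_id" ]
}
```

If the NameNode and DataNode run on different hosts, run `cluster_id` on each host and compare the two strings by hand.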

Restart, then verify that jps lists six processes

bash /usr/local/hadoop/sbin/stop-all.sh

bash /usr/local/hadoop/sbin/start-all.sh

[root@master logs]# jps

53089 NameNode

54099 Jps

53445 SecondaryNameNode

53224 DataNode

53832 NodeManager

53691 ResourceManager
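The same check can be scripted instead of counting lines by eye. The sketch below reads `jps` output on standard input and prints every expected daemon that is absent; `missing_daemons` is a hypothetical helper name, and the daemon list is simply the five processes shown in the output above (Jps itself is the sixth line).

```shell
# Read `jps` output on stdin and print each expected daemon that is missing.
# Sketch only: missing_daemons is a hypothetical helper; the list below
# matches the single-node output shown above and may differ on your cluster.
missing_daemons() {
  jps_out=$(cat)
  for name in NameNode SecondaryNameNode DataNode ResourceManager NodeManager; do
    # Exact match on the second column, so NameNode does not match
    # SecondaryNameNode.
    printf '%s\n' "$jps_out" \
      | awk -v n="$name" '$2 == n { ok = 1 } END { exit !ok }' \
      || echo "$name"
  done
}
```

Typical use is `jps | missing_daemons`; empty output means all five daemons are running.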

3、Errors during testing

3.1、Could not find or load main class org.apache.hadoop.mapreduce.v2.app.MRAppMaster

Test:

./bin/hadoop jar ./share/hadoop/mapreduce/hadoop-mapreduce-examples-*.jar pi 3 3

Result:

Fix: edit mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.application.classpath</name>
    <value>$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*</value>
  </property>
  <property>
    <name>yarn.app.mapreduce.am.env</name>
    <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
  </property>
  <property>
    <name>mapreduce.map.env</name>
    <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
  </property>
  <property>
    <name>mapreduce.reduce.env</name>
    <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
  </property>
</configuration>

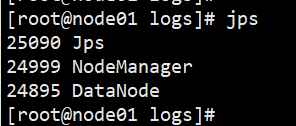
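Because the classpath above resolves relative to HADOOP_MAPRED_HOME, it can help to confirm that the jar containing MRAppMaster really exists under that directory. The sketch below assumes the class ships in a jar named `hadoop-mapreduce-client-app-*.jar` under `share/hadoop/mapreduce`; `find_mr_app_jar` is a hypothetical helper name.

```shell
# Print the MapReduce ApplicationMaster jar(s) under a Hadoop install directory.
# Sketch only: find_mr_app_jar is a hypothetical helper. Returns non-zero
# when no matching jar is found.
# Usage: find_mr_app_jar HADOOP_HOME
find_mr_app_jar() {
  found=1
  for jar in "$1"/share/hadoop/mapreduce/hadoop-mapreduce-client-app-*.jar; do
    # When the glob matches nothing it stays literal, so test for a real file.
    if [ -f "$jar" ]; then
      echo "$jar"
      found=0
    fi
  done
  return "$found"
}
```

For example: `find_mr_app_jar "$HADOOP_HOME" || echo "MRAppMaster jar not found under $HADOOP_HOME"`. If nothing is printed, the value that HADOOP_MAPRED_HOME points to is probably not the real installation directory.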

3.2、No NodeManager after the nodes start

bash /usr/local/hadoop/sbin/stop-all.sh

bash /usr/local/hadoop/sbin/start-all.sh

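After the restart, the NodeManager process is missing on the nodes.

Overwrite workers with slaves

Hadoop 3.x reads the list of worker hosts from etc/hadoop/workers rather than etc/hadoop/slaves. In the etc/hadoop directory under the Hadoop installation directory, rename the slaves file to workers; after that the NodeManager logs are generated. The file lists one host per line: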

Node1

Node2
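The overwrite can be done with a plain `cp`; the sketch below wraps it so that an existing workers file is backed up first. `slaves_to_workers` is a hypothetical helper name, and the install directory argument is an assumption about your layout.

```shell
# Copy etc/hadoop/slaves over etc/hadoop/workers under an install directory,
# keeping a backup of any existing workers file as workers.bak.
# Sketch only: slaves_to_workers is a hypothetical helper.
# Usage: slaves_to_workers HADOOP_HOME
slaves_to_workers() {
  conf_dir="$1/etc/hadoop"
  if [ ! -f "$conf_dir/slaves" ]; then
    echo "no slaves file in $conf_dir" >&2
    return 1
  fi
  if [ -f "$conf_dir/workers" ]; then
    cp "$conf_dir/workers" "$conf_dir/workers.bak"
  fi
  cp "$conf_dir/slaves" "$conf_dir/workers"
}
```

For example, `slaves_to_workers /usr/local/hadoop`, followed by the stop-all.sh and start-all.sh restart shown earlier.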
