Reposted from: https://blog.csdn.net/u012348774/article/details/112123638

Code from: mmflow

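1. Model

To address this problem, PWCNet replaces the cascade of stacked networks with multi-scale features; its overall structure is as follows. PWCNet first extracts multi-level features with a CNN, then estimates optical flow starting from the lowest resolution. At each level it upsamples the lower-resolution flow to the next higher resolution, builds a cost volume, and predicts the flow at the current resolution, progressively arriving at the flow at the final resolution.

For the coarse-to-fine part of the flow estimation there is a more detailed diagram below; the following sections describe each module in turn.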

1. encoder

PWCNetEncoder(
  (layers): Sequential(
    (0): BasicConvBlock(
      (layers): Sequential(
        (0): ConvModule(
          (conv): Conv2d(3, 16, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (1): ConvModule(
          (conv): Conv2d(16, 16, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (2): ConvModule(
          (conv): Conv2d(16, 16, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
      )
    )
    (1): BasicConvBlock(
      (layers): Sequential(
        (0): ConvModule(
          (conv): Conv2d(16, 32, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (1): ConvModule(
          (conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (2): ConvModule(
          (conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
      )
    )
    (2): BasicConvBlock(
      (layers): Sequential(
        (0): ConvModule(
          (conv): Conv2d(32, 64, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (1): ConvModule(
          (conv): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (2): ConvModule(
          (conv): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
      )
    )
    (3): BasicConvBlock(
      (layers): Sequential(
        (0): ConvModule(
          (conv): Conv2d(64, 96, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (1): ConvModule(
          (conv): Conv2d(96, 96, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (2): ConvModule(
          (conv): Conv2d(96, 96, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
      )
    )
    (4): BasicConvBlock(
      (layers): Sequential(
        (0): ConvModule(
          (conv): Conv2d(96, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (1): ConvModule(
          (conv): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (2): ConvModule(
          (conv): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
      )
    )
    (5): BasicConvBlock(
      (layers): Sequential(
        (0): ConvModule(
          (conv): Conv2d(128, 196, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (1): ConvModule(
          (conv): Conv2d(196, 196, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
        (2): ConvModule(
          (conv): Conv2d(196, 196, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
          (activate): LeakyReLU(negative_slope=0.1, inplace=True)
        )
      )
    )
  )
)
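The printed structure is regular enough to rebuild in a few lines. The following is a minimal sketch, assuming plain PyTorch rather than mmflow's own `ConvModule` and `BasicConvBlock` classes; the names `basic_conv_block` and `PyramidEncoder` are hypothetical and only mirror the layer shapes shown in the printout.

```python
import torch
import torch.nn as nn


def basic_conv_block(in_ch: int, out_ch: int) -> nn.Sequential:
    """Three 3x3 conv + LeakyReLU(0.1) layers; only the first conv has stride 2."""
    layers = []
    for i in range(3):
        layers += [
            nn.Conv2d(in_ch if i == 0 else out_ch, out_ch, kernel_size=3,
                      stride=2 if i == 0 else 1, padding=1),
            nn.LeakyReLU(negative_slope=0.1, inplace=True),
        ]
    return nn.Sequential(*layers)


class PyramidEncoder(nn.Module):
    """Hypothetical stand-in for the printed encoder (not mmflow's class)."""

    def __init__(self, channels=(16, 32, 64, 96, 128, 196)):
        super().__init__()
        in_chs = (3,) + tuple(channels[:-1])
        self.blocks = nn.ModuleList(
            [basic_conv_block(i, o) for i, o in zip(in_chs, channels)])

    def forward(self, img):
        # Return the output of every block: one feature map per pyramid level.
        feats = []
        x = img
        for block in self.blocks:
            x = block(x)
            feats.append(x)
        return feats


if __name__ == "__main__":
    with torch.no_grad():
        for f in PyramidEncoder()(torch.zeros(1, 3, 256, 256)):
            print(tuple(f.shape))
```

Each block halves the spatial resolution, so a 256x256 input yields six feature maps from 128x128 (16 channels) down to 4x4 (196 channels). The same encoder is applied to both images to obtain feat1 and feat2.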

2. Multi-scale optical flow estimation

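- Warp feat2 with the optical flow from the previous scale

- Compute the cost volume between feat1 and the warped feat2

- Concatenate four features: (corr_feat_, _feat1, upflow, upfeat)

- Pass the concatenated features through the dense conv block, which outputs the optical flow at the current scale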
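The steps above can be sketched in plain PyTorch. This is an illustrative version under stated assumptions, not mmflow's implementation: the function names `warp`, `cost_volume` and `decoder_input` are hypothetical, the search range `max_disp=4` is taken from the PWC-Net paper rather than from this post, and the per-level flow scaling applied before warping is omitted for brevity.

```python
import torch
import torch.nn.functional as F


def warp(feat, flow):
    """Backward-warp feat (N, C, H, W) with flow (N, 2, H, W) given in pixels."""
    _, _, h, w = feat.shape
    ys, xs = torch.meshgrid(
        torch.arange(h, dtype=feat.dtype, device=feat.device),
        torch.arange(w, dtype=feat.dtype, device=feat.device),
        indexing="ij",
    )
    # Sampling positions: pixel grid shifted by the flow (channel 0 = x, 1 = y).
    x = xs.unsqueeze(0) + flow[:, 0]
    y = ys.unsqueeze(0) + flow[:, 1]
    # grid_sample expects coordinates normalised to [-1, 1].
    grid = torch.stack(
        (2 * x / max(w - 1, 1) - 1, 2 * y / max(h - 1, 1) - 1), dim=-1)
    return F.grid_sample(feat, grid, mode="bilinear",
                         padding_mode="zeros", align_corners=True)


def cost_volume(feat1, feat2, max_disp=4):
    """Correlate feat1 with shifted copies of feat2 -> (N, (2d+1)^2, H, W)."""
    _, _, h, w = feat1.shape
    padded = F.pad(feat2, (max_disp,) * 4)  # zero-pad width and height
    costs = []
    for dy in range(2 * max_disp + 1):
        for dx in range(2 * max_disp + 1):
            shifted = padded[:, :, dy:dy + h, dx:dx + w]
            # Channel-wise mean of the product is the matching cost.
            costs.append((feat1 * shifted).mean(dim=1, keepdim=True))
    return torch.cat(costs, dim=1)


def decoder_input(feat1, feat2, upflow, upfeat):
    """Build the concatenated input of the dense conv block for one level."""
    warped = warp(feat2, upflow)                           # step 1: warp feat2
    corr = F.leaky_relu(cost_volume(feat1, warped), 0.1)   # step 2: cost volume
    return torch.cat((corr, feat1, upflow, upfeat), dim=1)  # step 3: concat
```

With a search range of 4, the cost volume has 9 x 9 = 81 channels. At the coarsest level there is no previous flow, so the cost volume would be computed directly between feat1 and feat2.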

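Final optical flow output

The final flow is obtained by applying one more context conv network to the features output by the last dense conv block.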

ContextNet(
  (layers): Sequential(
    (0): ConvModule(
      (conv): Conv2d(565, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
      (activate): LeakyReLU(negative_slope=0.1, inplace=True)
    )
    (1): ConvModule(
      (conv): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(2, 2), dilation=(2, 2))
      (activate): LeakyReLU(negative_slope=0.1, inplace=True)
    )
    (2): ConvModule(
      (conv): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(4, 4), dilation=(4, 4))
      (activate): LeakyReLU(negative_slope=0.1, inplace=True)
    )
    (3): ConvModule(
      (conv): Conv2d(128, 96, kernel_size=(3, 3), stride=(1, 1), padding=(8, 8), dilation=(8, 8))
      (activate): LeakyReLU(negative_slope=0.1, inplace=True)
    )
    (4): ConvModule(
      (conv): Conv2d(96, 64, kernel_size=(3, 3), stride=(1, 1), padding=(16, 16), dilation=(16, 16))
      (activate): LeakyReLU(negative_slope=0.1, inplace=True)
    )
    (5): ConvModule(
      (conv): Conv2d(64, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
      (activate): LeakyReLU(negative_slope=0.1, inplace=True)
    )
    (6): Conv2d(32, 2, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
  )
)
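The sketch below rebuilds the printed ContextNet in plain PyTorch and checks where its 565 input channels could come from. The helper `make_context_net` is hypothetical, and the channel breakdown is an assumption based on the PWC-Net paper (search range 4, densely connected layers with 128, 128, 96, 64 and 32 output channels), not something stated in the printout.

```python
import torch
import torch.nn as nn

# (out_channels, dilation) of the six ConvModules, read off the printout.
CONTEXT_CFG = [(128, 1), (128, 2), (128, 4), (96, 8), (64, 16), (32, 1)]


def make_context_net(in_ch: int = 565) -> nn.Sequential:
    """Dilated 3x3 conv stack; padding equals dilation so H and W are kept."""
    layers = []
    for out_ch, dil in CONTEXT_CFG:
        layers += [
            nn.Conv2d(in_ch, out_ch, kernel_size=3, stride=1,
                      padding=dil, dilation=dil),
            nn.LeakyReLU(negative_slope=0.1, inplace=True),
        ]
        in_ch = out_ch
    layers.append(nn.Conv2d(in_ch, 2, kernel_size=3, stride=1, padding=1))
    return nn.Sequential(*layers)


# Assumed origin of the 565 input channels at the finest decoded level:
corr_ch = (2 * 4 + 1) ** 2                 # 81 cost-volume channels
level_in = corr_ch + 32 + 2 + 2            # + feat1 (32) + upflow + upfeat
dense_out = 128 + 128 + 96 + 64 + 32       # outputs of the dense conv layers
assert level_in + dense_out == 565

# Each 3x3 conv with dilation d widens the receptive field by 2 * d.
receptive_field = 1 + 2 * (sum(d for _, d in CONTEXT_CFG) + 1)
```

Under these assumptions the receptive field of the stack is 67 x 67 pixels at that level's resolution, which is how the dilated convolutions gather context without downsampling. In the PWC-Net paper the two-channel output of this network is used to refine the flow estimated by the decoder.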

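3. Training

3.1 Two-stage training

The PWCNet authors also recognized that training is very troublesome, and discussed it in considerable detail. The paper therefore splits training into two stages: a pre-training stage and a fine-tuning stage.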

- loss函数

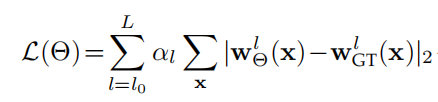

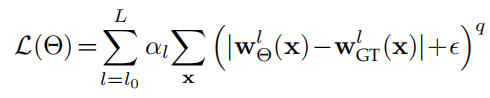

PWCNet在预训练阶段使用了2范数,加快收敛速度,公式如下

在优化训练阶段使用了1范数,并在一定程度上去除外点,提升光流质量,公式如下
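Since the two formulas appear only as images here, the following restates them in LaTeX as given in the PWC-Net paper, to the best of my reading. The symbols are the paper's, not this post's: $\mathbf{w}_\Theta^{l}$ is the flow predicted at pyramid level $l$, $\mathbf{w}_{\mathrm{GT}}^{l}$ the corresponding ground truth, $\alpha_l$ the per-level weight, $\gamma$ the weight-decay coefficient, $\epsilon$ a small constant, and $q < 1$ the exponent that reduces the penalty on outliers.

```latex
% Pre-training stage: multi-scale L2 loss
\mathcal{L}(\Theta) = \sum_{l=l_0}^{L} \alpha_l \sum_{\mathbf{x}}
  \left\lVert \mathbf{w}_\Theta^{l}(\mathbf{x}) - \mathbf{w}_{\mathrm{GT}}^{l}(\mathbf{x}) \right\rVert_2
  + \gamma \lVert \Theta \rVert_2

% Fine-tuning stage: robust L1-based loss
\mathcal{L}(\Theta) = \sum_{l=l_0}^{L} \alpha_l \sum_{\mathbf{x}}
  \left( \left\lvert \mathbf{w}_\Theta^{l}(\mathbf{x}) - \mathbf{w}_{\mathrm{GT}}^{l}(\mathbf{x}) \right\rvert + \epsilon \right)^{q}
  + \gamma \lVert \Theta \rVert_2
```

Here $\lvert\cdot\rvert$ denotes the L1 norm. Because $q < 1$, a large error contributes less than it would under a plain L1 loss, which is the outlier suppression mentioned above.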

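Summary

- The cost volume module, which computes the correlation feature

- Multi-scale upsampling: the flow from the previous scale is used to warp the features at the current scale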
