1. RTMP message parsing

1.1 RTMP Message format overview

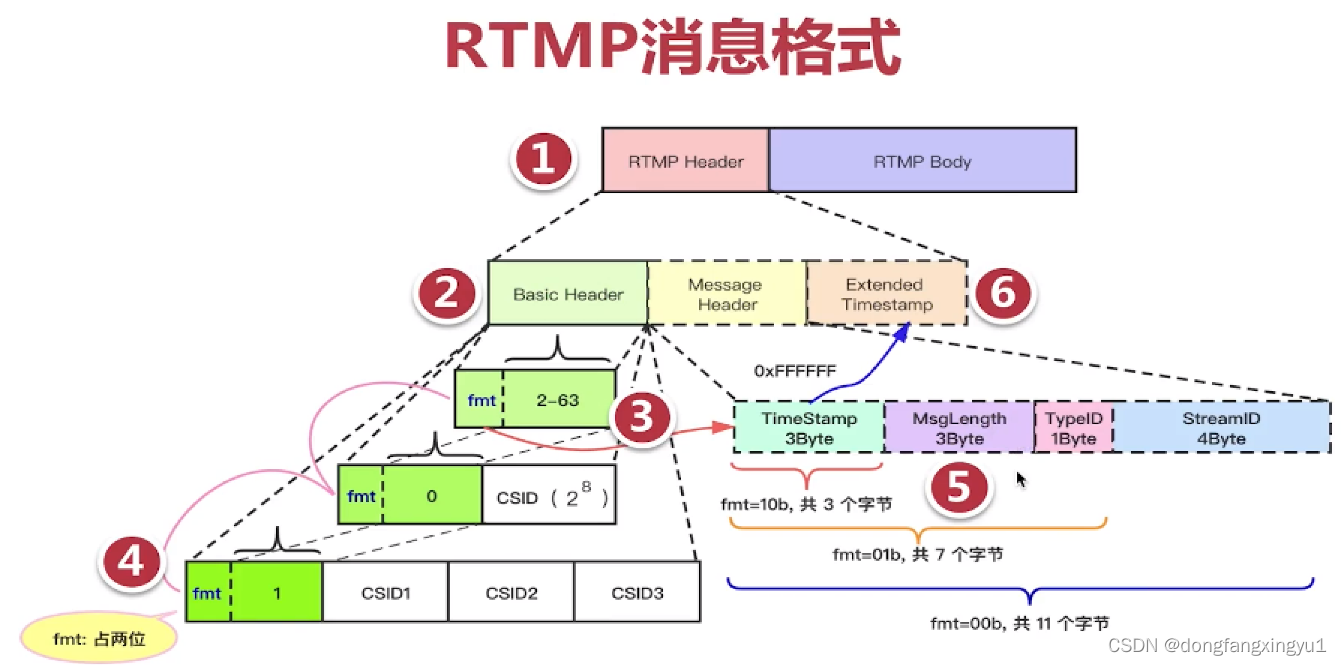

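For upper-layer users, the RTMP protocol defines a Message data structure that carries audio/video frame data and protocol control commands. Its format is as follows: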

```
 0 1 2 3 4 5 6 7 0 1 2 3 4 5 6 7 0 1 2 3 4 5 6 7 0 1 2 3 4 5 6 7
+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
|  Message Type |                Payload length                 |
+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
|                           Timestamp                           |
+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
|                           Stream ID                           |
+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
|                        Message Payload                        |
+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
```

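Message Type (1 byte): the message type ID. Types fall into three broad groups: protocol control messages, audio/video data frames, and command messages.

Payload Length (3 bytes): the number of bytes in the message payload, stored big-endian.

Timestamp (4 bytes): the timestamp of the message, stored big-endian.

Stream ID (4 bytes): the message stream ID. In packet captures it usually takes only two values: 0 for signaling and 1 for audio/video data. (The Adobe RTMP specification's message-header figure gives this field 3 bytes; inside a Type 0 chunk message header it is carried as 4 bytes, little-endian.)

Message Payload: the message body.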
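Here is a minimal parsing sketch for the fields listed above. It assumes the field widths from the Adobe RTMP specification's message-header figure: type 1 byte, length 3, timestamp 4, stream ID 3, all big-endian, for 11 bytes in total. `RtmpMsgHeader` and `parse_rtmp_message_header` are hypothetical names. On the wire, these fields actually travel inside chunk headers, which are described in the next section.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical in-memory view of an RTMP message header. */
typedef struct {
    uint8_t  type;       /* Message Type ID */
    uint32_t length;     /* payload length in bytes (24-bit) */
    uint32_t timestamp;  /* 32-bit timestamp */
    uint32_t stream_id;  /* message stream ID */
} RtmpMsgHeader;

/* Read an n-byte big-endian unsigned integer (n <= 4). */
static uint32_t read_be(const uint8_t *p, int n)
{
    uint32_t v = 0;
    for (int i = 0; i < n; i++)
        v = (v << 8) | p[i];
    return v;
}

/* Parse type(1) | length(3) | timestamp(4) | stream id(3).
 * Returns the bytes consumed (11), or -1 if the buffer is too short. */
static int parse_rtmp_message_header(const uint8_t *buf, size_t len, RtmpMsgHeader *h)
{
    if (len < 11)
        return -1;
    h->type      = buf[0];
    h->length    = read_be(buf + 1, 3);
    h->timestamp = read_be(buf + 4, 4);
    h->stream_id = read_be(buf + 8, 3);
    return 11;
}
```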

1.2 RTMP Chunk format overview

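A single RTMP Message can be very large. This is especially true of a video frame: the 3-byte Payload Length allows up to 16 MB.

Suppose such a message were written to the connection in one piece. Every smaller message queued behind it, such as audio or control commands, would then have to wait until it was completely sent. TCP already splits data to fit the MTU and retransmits only the lost segments, so the concern here is not retransmission efficiency. The concern is that large, low-priority messages would block small, high-priority ones.

For this reason, the RTMP designers split Messages inside the RTMP protocol itself. The unit of this split is called a Chunk (data block), and chunks belonging to different messages can be interleaved on the wire.

The chunk size defaults to 128 bytes. Each side can change the chunk size it sends with and notify the receiver through an RTMP protocol control message (Set Chunk Size). The RTMP data actually seen on the network therefore always has the following chunk layout: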

```
+--------------+----------------+--------------------+------------+
| Basic Header |   Msg Header   | Extended Timestamp | Chunk Data |
+--------------+----------------+--------------------+------------+
   1~3 bytes     0/3/7/11 bytes     0 or 4 bytes
```

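Note: the Msg Header contains a 3-byte timestamp field. The Extended Timestamp field is present only when that field is set to 0xFFFFFF, that is, when the timestamp (or timestamp delta) does not fit in 24 bits.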
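To make the chunking overhead concrete, here is a small calculation sketch. It assumes the sender emits one fmt=0 chunk (a 1-byte Basic Header plus an 11-byte Msg Header) followed by fmt=3 continuation chunks of 1 byte each. Like FFmpeg's `ff_rtmp_packet_write`, it repeats the 4-byte Extended Timestamp in every continuation chunk when one is used. The function names are hypothetical.

```c
#include <stdint.h>

/* Number of chunks for a message of msg_len bytes: ceil(msg_len / chunk_size),
 * with a header-only chunk for an empty payload. */
static uint32_t chunk_count(uint32_t msg_len, uint32_t chunk_size)
{
    if (msg_len == 0)
        return 1;
    return (msg_len + chunk_size - 1) / chunk_size;
}

/* Total bytes on the wire, assuming a 1-byte Basic Header (CSID 2..63).
 * ext_ts != 0 means the timestamp needs the 4-byte Extended Timestamp. */
static uint32_t wire_bytes(uint32_t msg_len, uint32_t chunk_size, int ext_ts)
{
    uint32_t ext   = ext_ts ? 4 : 0;
    uint32_t n     = chunk_count(msg_len, chunk_size);
    uint32_t first = 1 + 11 + ext;          /* fmt=0: Basic + Msg Header */
    uint32_t cont  = (n - 1) * (1 + ext);   /* fmt=3 Basic Headers */
    return first + cont + msg_len;
}
```

For example, a 300-byte message at the default 128-byte chunk size needs 3 chunks and 314 bytes on the wire.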

1.2.1 Basic Header

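The Basic Header, also referred to as the chunk header, comes in 3 formats (1 to 3 bytes) so that chunk stream IDs (CSIDs) of different ranges can be encoded. An implementation should use the shortest format that can hold the CSID, to minimize the extra data the Basic Header adds.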

```
Format 1 (1 byte):
+-----------------+----------------+
| format (2 bits) | CSID (6 bits)  |
+-----------------+----------------+

Format 2 (2 bytes):
+-----------------+----------------+--------------------------+
| format (2 bits) |   0 (6 bits)   | extended CSID (8 bits)   |
+-----------------+----------------+--------------------------+

Format 3 (3 bytes):
+-----------------+----------------+--------------------------+
| format (2 bits) |   1 (6 bits)   | extended CSID (16 bits)  |
+-----------------+----------------+--------------------------+
```

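In practice, for convenience, RTMP implementations always read the first byte of the Basic Header. They then use its leading 2-bit format field and its trailing 6-bit CSID field to determine the actual layout.

First, check the low 6 bits (CSID) of the first byte:

When the low 6 bits are 0, the Basic Header is 2 bytes and CSID = 64 + the second byte, giving the range [64, 319].

When the low 6 bits are 1, the Basic Header is 3 bytes and CSID = 64 + the second byte + the third byte × 256, giving the range [64, 65599].

When the low 6 bits are 2~63, the Basic Header is 1 byte. This covers the vast majority of RTMP chunks.

CSID = 2 carries low-level protocol messages. Message Types 1, 2, 3, 5 and 6 are chunk-layer protocol control messages, and type 4 is the user control message.

CSIDs 3~8 are used for connect, createStream, releaseStream, publish, metadata and audio/video data.

(The CSIDs used here vary considerably between implementations such as FFmpeg and OBS.)

Beyond that, additional CSIDs add little, since the Message Type already distinguishes the content. Users can still use larger CSIDs for private protocol extensions.

Real deployments need only a few CSIDs, so the Basic Header is almost always 1 byte.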
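The decision procedure above can be written as a small decoder. This sketch assumes the whole Basic Header is already in a buffer, and `parse_basic_header` is a hypothetical name. The 3-byte form stores the CSID offset little-endian (the second byte is the low byte). This matches FFmpeg's use of `bytestream_put_le16` when writing that form.

```c
#include <stddef.h>
#include <stdint.h>

/* Decode a chunk Basic Header. On success, stores fmt and csid and returns
 * the header length (1, 2 or 3); returns -1 if buf is too short. */
static int parse_basic_header(const uint8_t *buf, size_t len, int *fmt, uint32_t *csid)
{
    if (len < 1)
        return -1;
    *fmt = buf[0] >> 6;              /* top 2 bits */
    uint32_t low = buf[0] & 0x3F;    /* low 6 bits */

    if (low == 0) {                  /* 2-byte form: CSID 64..319 */
        if (len < 2)
            return -1;
        *csid = 64 + buf[1];
        return 2;
    }
    if (low == 1) {                  /* 3-byte form: CSID 64..65599 */
        if (len < 3)
            return -1;
        *csid = 64 + buf[1] + ((uint32_t)buf[2] << 8);
        return 3;
    }
    *csid = low;                     /* 1-byte form: CSID 2..63 */
    return 1;
}
```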

1.2.2 Msg Header

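Next, the 2-bit format field determines the layout of the Msg Header that follows.

format = 00: the Msg Header is 11 bytes. This is the most expensive form. It is used for the first chunk sent on a chunk stream, and, according to the specification, also whenever the timestamp goes backwards. Only in this form is the timestamp an absolute time. In later chunks, the Msg Header either carries no timestamp or only a delta relative to the previous chunk. The 4-byte msg stream id is stored little-endian.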

```
|<--Basic Header-->|<----------------------- Msg Header ---------------------->|
+--------+---------+-----------+----------------+--------------+---------------+
| format |  CSID   | timestamp | message length | message type | msg stream id |
|   00   |         |           |                |              |               |
+--------+---------+-----------+----------------+--------------+---------------+
  2 bits    6 bits    3 bytes        3 bytes          1 byte         4 bytes
```

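format = 01: the Msg Header is 7 bytes. The 4-byte msg stream id is omitted, meaning this chunk belongs to the same message stream as the previous chunk sent on this chunk stream. The timestamp field holds a delta.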

```
|<--Basic Header-->|<--------------- Msg Header -------------->|
+--------+---------+-----------+----------------+--------------+
| format |  CSID   | timestamp | message length | message type |
|   01   |         |           |                |              |
+--------+---------+-----------+----------------+--------------+
  2 bits    6 bits    3 bytes        3 bytes          1 byte
```

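format = 10: the Msg Header is 3 bytes. Compared with format = 01, it also omits the 3-byte message length and the 1-byte message type, which are taken from the previous chunk. Only the timestamp delta remains.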

```
|<--Basic Header-->|<-Msg Header->|
+--------+---------+--------------+
| format |  CSID   |  timestamp   |
|   10   |         |              |
+--------+---------+--------------+
  2 bits    6 bits     3 bytes
```

format = 11: the Msg Header is 0 bytes. This chunk's Msg Header is identical to that of the previous chunk on the same chunk stream.

```
|<--Basic Header-->|
+--------+---------+
| format |  CSID   |
|   11   |         |
+--------+---------+
  2 bits    6 bits
```
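A sketch of how a receiver might apply the four formats. It tracks per-chunk-stream state so that fmt 1 and 2 can add a timestamp delta and inherit omitted fields. `ChunkState` and `parse_chunk_msg_header` are hypothetical names. The sketch simplifies fmt=3: it treats a fmt=3 chunk as a pure continuation with nothing to read, and assumes the previous header on the chunk stream did not use an Extended Timestamp.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical per-chunk-stream state carried over from the previous chunk. */
typedef struct {
    uint32_t timestamp;  /* absolute timestamp of the current message */
    uint32_t length;     /* message length */
    uint8_t  type;       /* message type ID */
    uint32_t stream_id;  /* message stream ID */
} ChunkState;

static const int msg_header_len[4] = { 11, 7, 3, 0 };   /* indexed by fmt */

static uint32_t rd_be24(const uint8_t *p)
{
    return ((uint32_t)p[0] << 16) | ((uint32_t)p[1] << 8) | p[2];
}

static uint32_t rd_be32(const uint8_t *p)
{
    return ((uint32_t)p[0] << 24) | rd_be24(p + 1);
}

/* Parse the Msg Header (plus any Extended Timestamp) after a Basic Header
 * with format fmt, updating st. Returns bytes consumed, or -1 if too short. */
static int parse_chunk_msg_header(int fmt, const uint8_t *buf, size_t len, ChunkState *st)
{
    int n = msg_header_len[fmt & 3];
    if (n == 0)
        return 0;                              /* fmt=3: inherit everything */
    if (len < (size_t)n)
        return -1;

    uint32_t ts = rd_be24(buf);                /* timestamp or delta */
    int total = n + (ts == 0xFFFFFF ? 4 : 0);
    if (len < (size_t)total)
        return -1;
    if (ts == 0xFFFFFF)
        ts = rd_be32(buf + n);                 /* Extended Timestamp */

    if (n >= 7) {                              /* fmt 0 and 1 */
        st->length = rd_be24(buf + 3);
        st->type   = buf[6];
    }
    if (n == 11) {                             /* fmt 0: stream id is little-endian */
        st->stream_id = (uint32_t)buf[7] | ((uint32_t)buf[8] << 8) |
                        ((uint32_t)buf[9] << 16) | ((uint32_t)buf[10] << 24);
        st->timestamp = ts;                    /* absolute */
    } else {
        st->timestamp += ts;                   /* fmt 1 and 2: delta */
    }
    return total;
}
```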

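1.3 Audio/video data frames

Once the connection between an RTMP client and server is established and publishing or playback begins, the MetaData describing the audio/video encoding is always sent first. The audio/video data frames follow:

1) Audio/video data always uses FLV tag encapsulation: tagHeader (1 byte, plus a few codec-specific bytes for AAC and AVC, described below) + tagData (the encoded frame).

2) For H.264 video, the first video message must be the AVC sequence header carrying the SPS and PPS. I frames and P frames follow.

3) For AAC audio, the first audio message must be the AAC sequence header. The AAC-encoded audio data follows.

The MetaData is essentially a set of string-keyed properties (the AMF-encoded onMetaData script data) describing the audio/video codec IDs, width, height and so on. Using H.264 and AAC as examples, let us take a first look at how audio/video data is encapsulated in RTMP chunks: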

Message Type = 8: Audio message

```
  protocol layer               encapsulation layer
+-------------------+--------------------+------------------+
| RTMP Chunk Header | FLV AudioTagHeader | FLV AudioTagBody |
+-------------------+--------------------+------------------+
```

FLV AudioTagHeader

```
+-------------+-------------+-------------+-------------+     +---------------+
| SoundFormat |  SoundRate  |  SoundSize  |  SoundType  |  +  | AACPacketType |
+-------------+-------------+-------------+-------------+     +---------------+
    4 bits        2 bits        1 bit         1 bit          1 byte (AAC only)
 codec format  sample rate  8 or 16-bit  mono/stereo
```

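If the audio format is AAC (SoundFormat = 10, i.e. 0x0A), the AudioTagHeader above gains one extra byte, AACPacketType, which describes the type of the AAC packet that follows:

AACPacketType = 0x00: the following data is the AAC sequence header.

AACPacketType = 0x01: the following data is AAC audio data.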
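Decoding the AudioTagHeader is plain bit masking. Here is a sketch with hypothetical names. It treats SoundFormat 10 as AAC and reads the extra AACPacketType byte only in that case. The SoundRate codes follow the FLV specification: 0 = 5.5 kHz, 1 = 11 kHz, 2 = 22 kHz, 3 = 44 kHz.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct {
    int sound_format;     /* 4 bits: 10 = AAC */
    int sound_rate;       /* 2 bits: 0=5.5k, 1=11k, 2=22k, 3=44 kHz */
    int sound_size;       /* 1 bit: 0 = 8-bit, 1 = 16-bit samples */
    int sound_type;       /* 1 bit: 0 = mono, 1 = stereo */
    int aac_packet_type;  /* 0 = sequence header, 1 = raw data, -1 if not AAC */
} AudioTagHeader;

/* Returns the header length (1, or 2 for AAC), or -1 if buf is too short. */
static int parse_audio_tag_header(const uint8_t *buf, size_t len, AudioTagHeader *h)
{
    if (len < 1)
        return -1;
    h->sound_format    = buf[0] >> 4;
    h->sound_rate      = (buf[0] >> 2) & 0x03;
    h->sound_size      = (buf[0] >> 1) & 0x01;
    h->sound_type      = buf[0] & 0x01;
    h->aac_packet_type = -1;
    if (h->sound_format != 10)
        return 1;
    if (len < 2)
        return -1;
    h->aac_packet_type = buf[1];
    return 2;
}
```

The common first byte 0xAF decodes to AAC, 44 kHz, 16-bit, stereo.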

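The AAC sequence header is defined by AudioSpecificConfig. The simplified AudioSpecificConfig is 2 bytes long:

```
+----------------------+---------------------------+-------------------+----------------+
| AAC Profile (5 bits) | sampling-rate idx (4 bits)| channels (4 bits) | other (3 bits) |
+----------------------+---------------------------+-------------------+----------------+
```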

For RTMP publishing and playback, this AAC sequence header packet must be sent before the first audio data packet.
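Here is a sketch of unpacking those 2 bytes. It covers only the simplified 2-byte form shown above. It does not handle the escape values for object type 31 or sampling index 15. The rate table is the standard AAC sampling-frequency-index table, and the names are hypothetical.

```c
#include <stdint.h>

typedef struct {
    int object_type;     /* 5 bits: 2 = AAC LC */
    int freq_index;      /* 4 bits: index into aac_rates */
    int channel_config;  /* 4 bits */
    int sample_rate;     /* Hz, or -1 for an index this sketch does not map */
} AacConfig;

static const int aac_rates[13] = {
    96000, 88200, 64000, 48000, 44100, 32000, 24000,
    22050, 16000, 12000, 11025, 8000, 7350
};

/* Parse the 2-byte AudioSpecificConfig: 5 + 4 + 4 + 3 bits. */
static void parse_aac_config(const uint8_t cfg[2], AacConfig *c)
{
    c->object_type    = cfg[0] >> 3;
    c->freq_index     = ((cfg[0] & 0x07) << 1) | (cfg[1] >> 7);
    c->channel_config = (cfg[1] >> 3) & 0x0F;
    c->sample_rate    = c->freq_index < 13 ? aac_rates[c->freq_index] : -1;
}
```

For the common config bytes 0x12 0x10, this yields object type 2 (AAC LC), index 4 (44100 Hz) and 2 channels.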

Message Type = 9: Video message

```
  protocol layer               encapsulation layer
+-------------------+--------------------+------------------+
| RTMP Chunk Header | FLV VideoTagHeader | FLV VideoTagBody |
+-------------------+--------------------+------------------+
```

FLV VideoTagHeader

```
+------------+---------+     +---------------+     +-----------------+
| Frame Type | CodecID |  +  | AVCPacketType |  +  | CompositionTime |
+------------+---------+     +---------------+     +-----------------+
    4 bits     4 bits            1 byte                 3 bytes
  frame type  codec ID         (AVC only)             (AVC only)
```

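Frame Type = 1 denotes an H.264 key frame (including IDR frames). Frame Type = 2 denotes an H.264 inter (non-key) frame.

CodecID = 7 denotes AVC.

With AVC (i.e. H.264) encoding, the header gains a 1-byte AVCPacketType field, which describes the type of the AVC packet that follows. It also gains a 3-byte CompositionTime field:

AVCPacketType = 0: the following data is the AVC sequence header, and CompositionTime is 0.

AVCPacketType = 1: the following data is one or more AVC NALUs, and CompositionTime carries the signed composition time offset (PTS - DTS, in milliseconds).

AVCPacketType = 2: AVC end of sequence (usually not needed).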
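Here is a sketch that decodes the AVC VideoTagHeader described above. For AVCPacketType = 1, it also walks the length-prefixed NALUs in the tag body. It assumes 4-byte big-endian NALU lengths (lengthSizeMinusOne = 3, the usual case). It sign-extends CompositionTime, which the FLV specification defines as a signed 24-bit value. All names are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct {
    int frame_type;       /* 1 = key frame, 2 = inter frame */
    int codec_id;         /* 7 = AVC */
    int avc_packet_type;  /* 0 = seq header, 1 = NALU, 2 = end of seq */
    int32_t cts;          /* composition time offset in ms */
} VideoTagHeader;

/* Parse the 5-byte AVC VideoTagHeader; returns 5, or -1 if too short or not AVC. */
static int parse_video_tag_header(const uint8_t *buf, size_t len, VideoTagHeader *h)
{
    if (len < 1)
        return -1;
    h->frame_type = buf[0] >> 4;
    h->codec_id   = buf[0] & 0x0F;
    if (h->codec_id != 7 || len < 5)
        return -1;
    h->avc_packet_type = buf[1];
    int32_t v = (buf[2] << 16) | (buf[3] << 8) | buf[4];
    h->cts = (v & 0x800000) ? v - 0x1000000 : v;   /* sign-extend 24 -> 32 bits */
    return 5;
}

/* Walk NALUs prefixed by 4-byte big-endian lengths. Stores up to max
 * nal_unit_type values in types[] and returns the NALU count, or -1 if a
 * length or NALU is truncated. */
static int walk_nalus(const uint8_t *body, size_t len, int *types, int max)
{
    size_t off = 0;
    int count = 0;
    while (off < len) {
        if (len - off < 4)
            return -1;
        uint32_t n = ((uint32_t)body[off] << 24) | ((uint32_t)body[off + 1] << 16) |
                     ((uint32_t)body[off + 2] << 8) | body[off + 3];
        off += 4;
        if (n == 0 || n > len - off)
            return -1;
        if (count < max)
            types[count] = body[off] & 0x1F;   /* H.264 nal_unit_type */
        count++;
        off += n;
    }
    return count;
}
```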

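FLV VideoTagBody

```
AVCPacketType = 0:
+-------------------------------+
| AVCDecoderConfigurationRecord |
+-------------------------------+

AVCPacketType = 1:
+-----------+--------+-----------+--------+-----
| NALU size |  NALU  | NALU size |  NALU  | ...
+-----------+--------+-----------+--------+-----
   4 bytes              4 bytes
```

For AVCPacketType = 0, the body is the AVCDecoderConfigurationRecord itself, which contains the SPS and PPS. For AVCPacketType = 1, the body holds one or more NALUs. Each NALU is preceded by a big-endian size field whose width is lengthSizeMinusOne + 1 bytes, as declared in the AVCDecoderConfigurationRecord. In practice, this is almost always 4 bytes.

2. FFmpeg's implementation of the RTMP protocol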

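In libavformat/rtmpproto.c, a macro instantiates one URLProtocol for each RTMP flavor (rtmp, rtmpe, rtmps, rtmpt, rtmpte, rtmpts) and wires up the callbacks below. The trailing backslashes are macro line continuations.

```c
const URLProtocol ff_##flavor##_protocol = {     \
    .name           = #flavor,                   \
    .url_open2      = rtmp_open,                 \
    .url_read       = rtmp_read,                 \
    .url_read_seek  = rtmp_seek,                 \
    .url_read_pause = rtmp_pause,                \
    .url_write      = rtmp_write,                \
    .url_close      = rtmp_close,                \
    .priv_data_size = sizeof(RTMPContext),       \
    .flags          = URL_PROTOCOL_FLAG_NETWORK, \
    .priv_data_class= &flavor##_class,           \
};
```

2.1 Analysis of rtmp_open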

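1. TCP connection (ffurl_open_whitelist)
2. RTMP handshake (rtmp_handshake, or rtmp_server_handshake in listen mode)
3. RTMP connect (gen_connect)
4. Post-connect processing (the get_packet loop), for example:
   - setting chunk_size
   - setting WINDOW_ACK_SIZE
   - handling invoke results, and so on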
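Step 2 is hidden inside rtmp_handshake. To give a rough idea of what goes on the wire, here is a sketch of building C0 and C1 for the plain handshake described in the RTMP specification. C0 is a single version byte (3). C1 is 1536 bytes: a 4-byte time, 4 zero bytes and 1528 random bytes. FFmpeg's rtmp_handshake goes further and imprints an HMAC-SHA256 digest into C1 (the Flash Player style handshake), which this sketch does not attempt. `build_c0c1` is a hypothetical helper, and `rand()` is only a placeholder for a real random source.

```c
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

#define RTMP_HANDSHAKE_SIZE 1536   /* size of C1/S1/C2/S2 */

/* Fill out[] with C0 (1 byte) followed by C1 (1536 bytes). */
static void build_c0c1(uint8_t out[1 + RTMP_HANDSHAKE_SIZE], uint32_t epoch_ms)
{
    uint8_t *c1 = out + 1;
    out[0] = 3;                                   /* C0: RTMP version 3 */
    c1[0] = (uint8_t)(epoch_ms >> 24);            /* C1: 4-byte time, big-endian */
    c1[1] = (uint8_t)(epoch_ms >> 16);
    c1[2] = (uint8_t)(epoch_ms >> 8);
    c1[3] = (uint8_t)epoch_ms;
    memset(c1 + 4, 0, 4);                         /* 4 zero bytes */
    for (int i = 8; i < RTMP_HANDSHAKE_SIZE; i++) /* 1528 "random" bytes */
        c1[i] = (uint8_t)rand();
}
```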

```c
static int rtmp_open(URLContext *s, const char *uri, int flags, AVDictionary **opts)
{
    RTMPContext *rt = s->priv_data;
    char proto[8], hostname[256], path[1024], auth[100], *fname;
    char *old_app, *qmark, *n, fname_buffer[1024];
    uint8_t buf[2048];
    int port;
    int ret;

    if (rt->listen_timeout > 0)
        rt->listen = 1;

    rt->is_input = !(flags & AVIO_FLAG_WRITE);

    av_url_split(proto, sizeof(proto), auth, sizeof(auth),
                 hostname, sizeof(hostname), &port,
                 path, sizeof(path), s->filename);

    n = strchr(path, ' ');
    if (n) {
        av_log(s, AV_LOG_WARNING,
               "Detected librtmp style URL parameters, these aren't supported "
               "by the libavformat internal RTMP handler currently enabled. "
               "See the documentation for the correct way to pass parameters.\n");
        *n = '\0'; // Trim not supported part
    }

    if (auth[0]) {
        char *ptr = strchr(auth, ':');
        if (ptr) {
            *ptr = '\0';
            av_strlcpy(rt->username, auth, sizeof(rt->username));
            av_strlcpy(rt->password, ptr + 1, sizeof(rt->password));
        }
    }

    if (rt->listen && strcmp(proto, "rtmp")) {
        av_log(s, AV_LOG_ERROR, "rtmp_listen not available for %s\n",
               proto);
        return AVERROR(EINVAL);
    }
    if (!strcmp(proto, "rtmpt") || !strcmp(proto, "rtmpts")) {
        if (!strcmp(proto, "rtmpts"))
            av_dict_set(opts, "ffrtmphttp_tls", "1", 1);

        /* open the http tunneling connection */
        ff_url_join(buf, sizeof(buf), "ffrtmphttp", NULL, hostname, port, NULL);
    } else if (!strcmp(proto, "rtmps")) {
        /* open the tls connection */
        if (port < 0)
            port = RTMPS_DEFAULT_PORT;
        ff_url_join(buf, sizeof(buf), "tls", NULL, hostname, port, NULL);
    } else if (!strcmp(proto, "rtmpe") || (!strcmp(proto, "rtmpte"))) {
        if (!strcmp(proto, "rtmpte"))
            av_dict_set(opts, "ffrtmpcrypt_tunneling", "1", 1);

        /* open the encrypted connection */
        ff_url_join(buf, sizeof(buf), "ffrtmpcrypt", NULL, hostname, port, NULL);
        rt->encrypted = 1;
    } else {
        /* open the tcp connection */
        if (port < 0)
            port = RTMP_DEFAULT_PORT;
        if (rt->listen)
            ff_url_join(buf, sizeof(buf), "tcp", NULL, hostname, port,
                        "?listen&listen_timeout=%d",
                        rt->listen_timeout * 1000);
        else
            ff_url_join(buf, sizeof(buf), "tcp", NULL, hostname, port, NULL);
    }

reconnect:
    // Step 1: TCP connection
    if ((ret = ffurl_open_whitelist(&rt->stream, buf, AVIO_FLAG_READ_WRITE,
                                    &s->interrupt_callback, opts,
                                    s->protocol_whitelist, s->protocol_blacklist, s)) < 0) {
        av_log(s , AV_LOG_ERROR, "Cannot open connection %s\n", buf);
        goto fail;
    }

    if (rt->swfverify) {
        if ((ret = rtmp_calc_swfhash(s)) < 0)
            goto fail;
    }

    rt->state = STATE_START;
    // Step 2: RTMP handshake (send C0, C1, C2; receive S0, S1, S2)
    if (!rt->listen && (ret = rtmp_handshake(s, rt)) < 0)
        goto fail;
    if (rt->listen && (ret = rtmp_server_handshake(s, rt)) < 0)
        goto fail;

    rt->out_chunk_size = 128;
    rt->in_chunk_size  = 128; // Probably overwritten later
    rt->state = STATE_HANDSHAKED;

    // Keep the application name when it has been defined by the user.
    old_app = rt->app;

    rt->app = av_malloc(APP_MAX_LENGTH);
    if (!rt->app) {
        ret = AVERROR(ENOMEM);
        goto fail;
    }

    //extract "app" part from path
    qmark = strchr(path, '?');
    if (qmark && strstr(qmark, "slist=")) {
        char* amp;
        // After slist we have the playpath, the full path is used as app
        av_strlcpy(rt->app, path + 1, APP_MAX_LENGTH);
        fname = strstr(path, "slist=") + 6;
        // Strip any further query parameters from fname
        amp = strchr(fname, '&');
        if (amp) {
            av_strlcpy(fname_buffer, fname, FFMIN(amp - fname + 1,
                                                  sizeof(fname_buffer)));
            fname = fname_buffer;
        }
    } else if (!strncmp(path, "/ondemand/", 10)) {
        fname = path + 10;
        memcpy(rt->app, "ondemand", 9);
    } else {
        char *next = *path ? path + 1 : path;
        char *p = strchr(next, '/');
        if (!p) {
            if (old_app) {
                // If name of application has been defined by the user, assume that
                // playpath is provided in the URL
                fname = next;
            } else {
                fname = NULL;
                av_strlcpy(rt->app, next, APP_MAX_LENGTH);
            }
        } else {
            // make sure we do not mismatch a playpath for an application instance
            char *c = strchr(p + 1, ':');
            fname = strchr(p + 1, '/');
            if (!fname || (c && c < fname)) {
                fname = p + 1;
                av_strlcpy(rt->app, path + 1, FFMIN(p - path, APP_MAX_LENGTH));
            } else {
                fname++;
                av_strlcpy(rt->app, path + 1, FFMIN(fname - path - 1, APP_MAX_LENGTH));
            }
        }
    }

    if (old_app) {
        // The name of application has been defined by the user, override it.
        if (strlen(old_app) >= APP_MAX_LENGTH) {
            ret = AVERROR(EINVAL);
            goto fail;
        }
        av_free(rt->app);
        rt->app = old_app;
    }

    if (!rt->playpath) {
        int max_len = 1;
        if (fname)
            max_len = strlen(fname) + 5; // add prefix "mp4:"
        rt->playpath = av_malloc(max_len);
        if (!rt->playpath) {
            ret = AVERROR(ENOMEM);
            goto fail;
        }

        if (fname) {
            int len = strlen(fname);
            if (!strchr(fname, ':') && len >= 4 &&
                (!strcmp(fname + len - 4, ".f4v") ||
                 !strcmp(fname + len - 4, ".mp4"))) {
                memcpy(rt->playpath, "mp4:", 5);
            } else {
                if (len >= 4 && !strcmp(fname + len - 4, ".flv"))
                    fname[len - 4] = '\0';
                rt->playpath[0] = 0;
            }
            av_strlcat(rt->playpath, fname, max_len);
        } else {
            rt->playpath[0] = '\0';
        }
    }

    if (!rt->tcurl) {
        rt->tcurl = av_malloc(TCURL_MAX_LENGTH);
        if (!rt->tcurl) {
            ret = AVERROR(ENOMEM);
            goto fail;
        }
        ff_url_join(rt->tcurl, TCURL_MAX_LENGTH, proto, NULL, hostname,
                    port, "/%s", rt->app);
    }

    if (!rt->flashver) {
        rt->flashver = av_malloc(FLASHVER_MAX_LENGTH);
        if (!rt->flashver) {
            ret = AVERROR(ENOMEM);
            goto fail;
        }
        if (rt->is_input) {
            snprintf(rt->flashver, FLASHVER_MAX_LENGTH, "%s %d,%d,%d,%d",
                     RTMP_CLIENT_PLATFORM, RTMP_CLIENT_VER1, RTMP_CLIENT_VER2,
                     RTMP_CLIENT_VER3, RTMP_CLIENT_VER4);
        } else {
            snprintf(rt->flashver, FLASHVER_MAX_LENGTH,
                     "FMLE/3.0 (compatible; %s)", LIBAVFORMAT_IDENT);
        }
    }

    rt->receive_report_size = 1048576;
    rt->bytes_read = 0;
    rt->has_audio = 0;
    rt->has_video = 0;
    rt->received_metadata = 0;
    rt->last_bytes_read = 0;
    rt->max_sent_unacked = 2500000;
    rt->duration = 0;

    av_log(s, AV_LOG_DEBUG, "Proto = %s, path = %s, app = %s, fname = %s\n",
           proto, path, rt->app, rt->playpath);
    if (!rt->listen) {
        // Step 3: send the RTMP connect command to the server
        if ((ret = gen_connect(s, rt)) < 0)
            goto fail;
    } else {
        if ((ret = read_connect(s, s->priv_data)) < 0)
            goto fail;
    }

    do {
        // Step 4: post-connect processing of the server's replies
        ret = get_packet(s, 1);
    } while (ret == AVERROR(EAGAIN));
    if (ret < 0)
        goto fail;

    if (rt->do_reconnect) {
        int i;
        ffurl_closep(&rt->stream);
        rt->do_reconnect = 0;
        rt->nb_invokes   = 0;
        for (i = 0; i < 2; i++)
            memset(rt->prev_pkt[i], 0,
                   sizeof(**rt->prev_pkt) * rt->nb_prev_pkt[i]);
        free_tracked_methods(rt);
        goto reconnect;
    }

    if (rt->is_input) {
        // generate FLV header for demuxer
        rt->flv_size = 13;
        if ((ret = av_reallocp(&rt->flv_data, rt->flv_size)) < 0)
            goto fail;
        rt->flv_off  = 0;
        memcpy(rt->flv_data, "FLV\1\0\0\0\0\011\0\0\0\0", rt->flv_size);

        // Read packets until we reach the first A/V packet or read metadata.
        // If there was a metadata package in front of the A/V packets, we can
        // build the FLV header from this. If we do not receive any metadata,
        // the FLV decoder will allocate the needed streams when their first
        // audio or video packet arrives.
        while (!rt->has_audio && !rt->has_video && !rt->received_metadata) {
            if ((ret = get_packet(s, 0)) < 0)
                goto fail;
        }

        // Either after we have read the metadata or (if there is none) the
        // first packet of an A/V stream, we have a better knowledge about the
        // streams, so set the FLV header accordingly.
        if (rt->has_audio) {
            rt->flv_data[4] |= FLV_HEADER_FLAG_HASAUDIO;
        }
        if (rt->has_video) {
            rt->flv_data[4] |= FLV_HEADER_FLAG_HASVIDEO;
        }

        // If we received the first packet of an A/V stream and no metadata but
        // the server returned a valid duration, create a fake metadata packet
        // to inform the FLV decoder about the duration.
        if (!rt->received_metadata && rt->duration > 0) {
            if ((ret = inject_fake_duration_metadata(rt)) < 0)
                goto fail;
        }
    } else {
        rt->flv_size = 0;
        rt->flv_data = NULL;
        rt->flv_off  = 0;
        // rtmp_write skips the FLV file header (9 bytes) + PreviousTagSize0 (4 bytes)
        rt->skip_bytes = 13;
    }

    s->max_packet_size = rt->stream->max_packet_size;
    s->is_streamed     = 1;
    return 0;

fail:
    av_freep(&rt->playpath);
    av_freep(&rt->tcurl);
    av_freep(&rt->flashver);
    av_dict_free(opts);
    rtmp_close(s);
    return ret;
}
```
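The largest part of rtmp_open derives `app` and `playpath` from the URL path. The sketch below reproduces only the default branch, so the splitting rule is easy to test in isolation. It leaves out the `slist=` query, the `/ondemand/` prefix, a user-supplied app, and the `mp4:`/`.flv` playpath rewriting. `split_app_playpath` is a hypothetical name, not an FFmpeg function.

```c
#include <stdio.h>
#include <string.h>

/* Simplified app/playpath split following rtmp_open's default branch.
 * Outputs are NUL-terminated and truncated to their buffer sizes. */
static void split_app_playpath(const char *path, char *app, size_t app_size,
                               char *play, size_t play_size)
{
    const char *next = *path == '/' ? path + 1 : path;
    const char *p = strchr(next, '/');
    const char *fname;
    size_t app_len;

    if (!p) {                            /* "/app": no playpath */
        snprintf(app, app_size, "%s", next);
        if (play_size)
            play[0] = '\0';
        return;
    }
    const char *c = strchr(p + 1, ':');  /* e.g. "mp4:dir/file" */
    fname = strchr(p + 1, '/');
    if (!fname || (c && c < fname)) {    /* "/app/name" */
        fname = p + 1;
        app_len = (size_t)(p - next);
    } else {                             /* "/app/instance/name" */
        fname++;
        app_len = (size_t)(fname - 1 - next);
    }
    snprintf(app, app_size, "%.*s", (int)app_len, next);
    snprintf(play, play_size, "%s", fname);
}
```

As in the original code, the `:` check keeps a playpath such as `mp4:dir/file.mp4` from being mistaken for an application instance.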

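2.2 Analysis of rtmp_write

rtmp_write receives the byte stream produced by the FLV muxer. It strips the FLV file header, each 11-byte FLV tag header and each 4-byte PreviousTagSize. It then rebuilds an RTMP packet from every tag and sends it with rtmp_send_packet.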

```c
static int rtmp_write(URLContext *s, const uint8_t *buf, int size)
{
    RTMPContext *rt = s->priv_data;
    int size_temp = size;
    int pktsize, pkttype, copy;
    uint32_t ts;
    const uint8_t *buf_temp = buf;
    uint8_t c;
    int ret;

    do {
        if (rt->skip_bytes) {
            // skip the FLV file header (first call) or a 4-byte PreviousTagSize
            int skip = FFMIN(rt->skip_bytes, size_temp);
            buf_temp       += skip;
            size_temp      -= skip;
            rt->skip_bytes -= skip;
            continue;
        }

        if (rt->flv_header_bytes < RTMP_HEADER) {
            // accumulate the 11-byte FLV tag header, which may span several calls
            const uint8_t *header = rt->flv_header;
            int channel = RTMP_AUDIO_CHANNEL;

            copy = FFMIN(RTMP_HEADER - rt->flv_header_bytes, size_temp);
            bytestream_get_buffer(&buf_temp, rt->flv_header + rt->flv_header_bytes, copy);
            rt->flv_header_bytes += copy;
            size_temp            -= copy;
            if (rt->flv_header_bytes < RTMP_HEADER)
                break;

            pkttype = bytestream_get_byte(&header);
            pktsize = bytestream_get_be24(&header);
            ts = bytestream_get_be24(&header);
            ts |= bytestream_get_byte(&header) << 24;
            bytestream_get_be24(&header);
            rt->flv_size = pktsize;

            if (pkttype == RTMP_PT_VIDEO)
                channel = RTMP_VIDEO_CHANNEL;

            if (((pkttype == RTMP_PT_VIDEO || pkttype == RTMP_PT_AUDIO) && ts == 0) ||
                pkttype == RTMP_PT_NOTIFY) {
                if ((ret = ff_rtmp_check_alloc_array(&rt->prev_pkt[1],
                                                     &rt->nb_prev_pkt[1],
                                                     channel)) < 0)
                    return ret;
                // Force sending a full 12 bytes header by clearing the
                // channel id, to make it not match a potential earlier
                // packet in the same channel.
                rt->prev_pkt[1][channel].channel_id = 0;
            }

            //this can be a big packet, it's better to send it right here
            if ((ret = ff_rtmp_packet_create(&rt->out_pkt, channel,
                                             pkttype, ts, pktsize)) < 0)
                return ret;

            rt->out_pkt.extra = rt->stream_id;
            rt->flv_data = rt->out_pkt.data;
        }

        // copy the tag body straight into the outgoing RTMP packet
        copy = FFMIN(rt->flv_size - rt->flv_off, size_temp);
        bytestream_get_buffer(&buf_temp, rt->flv_data + rt->flv_off, copy);
        rt->flv_off += copy;
        size_temp   -= copy;

        if (rt->flv_off == rt->flv_size) {
            rt->skip_bytes = 4; // PreviousTagSize follows every FLV tag

            if (rt->out_pkt.type == RTMP_PT_NOTIFY) {
                // For onMetaData and |RtmpSampleAccess packets, we want
                // @setDataFrame prepended to the packet before it gets sent.
                // However, not all RTMP_PT_NOTIFY packets (e.g., onTextData
                // and onCuePoint).
                uint8_t commandbuffer[64];
                int stringlen = 0;
                GetByteContext gbc;

                bytestream2_init(&gbc, rt->flv_data, rt->flv_size);
                if (!ff_amf_read_string(&gbc, commandbuffer, sizeof(commandbuffer),
                                        &stringlen)) {
                    if (!strcmp(commandbuffer, "onMetaData") ||
                        !strcmp(commandbuffer, "|RtmpSampleAccess")) {
                        uint8_t *ptr;
                        if ((ret = av_reallocp(&rt->out_pkt.data, rt->out_pkt.size + 16)) < 0) {
                            rt->flv_size = rt->flv_off = rt->flv_header_bytes = 0;
                            return ret;
                        }
                        memmove(rt->out_pkt.data + 16, rt->out_pkt.data, rt->out_pkt.size);
                        rt->out_pkt.size += 16;
                        ptr = rt->out_pkt.data;
                        ff_amf_write_string(&ptr, "@setDataFrame");
                    }
                }
            }

            if ((ret = rtmp_send_packet(rt, &rt->out_pkt, 0)) < 0)
                return ret;
            rt->flv_size = 0;
            rt->flv_off = 0;
            rt->flv_header_bytes = 0;
            rt->flv_nb_packets++;
        }
    } while (buf_temp - buf < size);

    if (rt->flv_nb_packets < rt->flush_interval)
        return size;
    rt->flv_nb_packets = 0;

    /* set stream into nonblocking mode */
    rt->stream->flags |= AVIO_FLAG_NONBLOCK;

    /* try to read one byte from the stream */
    ret = ffurl_read(rt->stream, &c, 1);

    /* switch the stream back into blocking mode */
    rt->stream->flags &= ~AVIO_FLAG_NONBLOCK;

    if (ret == AVERROR(EAGAIN)) {
        /* no incoming data to handle */
        return size;
    } else if (ret < 0) {
        return ret;
    } else if (ret == 1) {
        RTMPPacket rpkt = { 0 };

        if ((ret = ff_rtmp_packet_read_internal(rt->stream, &rpkt,
                                                rt->in_chunk_size,
                                                &rt->prev_pkt[0],
                                                &rt->nb_prev_pkt[0], c)) <= 0)
            return ret;

        if ((ret = rtmp_parse_result(s, rt, &rpkt)) < 0)
            return ret;

        ff_rtmp_packet_destroy(&rpkt);
    }

    return size;
}
```

Call chain: rtmp_send_packet → ff_rtmp_packet_write
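rtmp_write relies on two fixed layouts. The first is the 11-byte FLV tag header it parses into pkttype, pktsize and ts: type 1 byte, size 3, timestamp 3 + 1, stream ID 3. The second is the AMF0 string it prepends for onMetaData. The sketch below decodes the tag header the same way; the fourth timestamp byte is TimestampExtended, the upper 8 bits. It also shows why the code reserves 16 bytes for `@setDataFrame`. `FlvTagHeader`, `parse_flv_tag_header` and `amf0_string_size` are hypothetical names.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

typedef struct {
    uint8_t  type;       /* 8 = audio, 9 = video, 18 = script data */
    uint32_t data_size;  /* payload size (24-bit) */
    uint32_t timestamp;  /* 24-bit timestamp | TimestampExtended << 24 */
    uint32_t stream_id;  /* always 0 in FLV files */
} FlvTagHeader;

/* Returns 11 on success, -1 if the buffer is too short. */
static int parse_flv_tag_header(const uint8_t *b, size_t len, FlvTagHeader *t)
{
    if (len < 11)
        return -1;
    t->type       = b[0];
    t->data_size  = ((uint32_t)b[1] << 16) | ((uint32_t)b[2] << 8) | b[3];
    t->timestamp  = ((uint32_t)b[4] << 16) | ((uint32_t)b[5] << 8) | b[6];
    t->timestamp |= (uint32_t)b[7] << 24;
    t->stream_id  = ((uint32_t)b[8] << 16) | ((uint32_t)b[9] << 8) | b[10];
    return 11;
}

/* AMF0 string: 1 type byte (0x02) + 2-byte length + the characters.
 * "@setDataFrame" has 13 characters, hence the 16 extra bytes. */
static size_t amf0_string_size(const char *s)
{
    return 1 + 2 + strlen(s);
}
```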

ff_rtmp_packet_write

Splits an RTMP message into chunks of at most chunk_size bytes and sends them.

```c
int ff_rtmp_packet_write(URLContext *h, RTMPPacket *pkt,
                         int chunk_size, RTMPPacket **prev_pkt_ptr,
                         int *nb_prev_pkt)
{
    uint8_t pkt_hdr[16], *p = pkt_hdr;
    int mode = RTMP_PS_TWELVEBYTES;
    int off = 0;
    int written = 0;
    int ret;
    RTMPPacket *prev_pkt;
    int use_delta; // flag if using timestamp delta, not RTMP_PS_TWELVEBYTES
    uint32_t timestamp; // full 32-bit timestamp or delta value

    if ((ret = ff_rtmp_check_alloc_array(prev_pkt_ptr, nb_prev_pkt,
                                         pkt->channel_id)) < 0)
        return ret;
    prev_pkt = *prev_pkt_ptr;

    //if channel_id = 0, this is first presentation of prev_pkt, send full hdr.
    use_delta = prev_pkt[pkt->channel_id].channel_id &&
        pkt->extra == prev_pkt[pkt->channel_id].extra &&
        pkt->timestamp >= prev_pkt[pkt->channel_id].timestamp;

    timestamp = pkt->timestamp;
    if (use_delta) {
        timestamp -= prev_pkt[pkt->channel_id].timestamp;
    }
    // values that do not fit in 24 bits go into the Extended Timestamp
    if (timestamp >= 0xFFFFFF) {
        pkt->ts_field = 0xFFFFFF;
    } else {
        pkt->ts_field = timestamp;
    }

    // choose the chunk format; RTMP_PS_* (0..3) is the fmt value, named after
    // the 1-byte Basic Header + Msg Header size: 12, 8, 4 or 1 bytes
    if (use_delta) {
        if (pkt->type == prev_pkt[pkt->channel_id].type &&
            pkt->size == prev_pkt[pkt->channel_id].size) {
            mode = RTMP_PS_FOURBYTES;
            if (pkt->ts_field == prev_pkt[pkt->channel_id].ts_field)
                mode = RTMP_PS_ONEBYTE;
        } else {
            mode = RTMP_PS_EIGHTBYTES;
        }
    }

    // Basic Header: 1, 2 or 3 bytes depending on the CSID
    if (pkt->channel_id < 64) {
        bytestream_put_byte(&p, pkt->channel_id | (mode << 6));
    } else if (pkt->channel_id < 64 + 256) {
        bytestream_put_byte(&p, 0               | (mode << 6));
        bytestream_put_byte(&p, pkt->channel_id - 64);
    } else {
        bytestream_put_byte(&p, 1               | (mode << 6));
        bytestream_put_le16(&p, pkt->channel_id - 64);
    }
    // Msg Header: 11, 7, 3 or 0 bytes
    if (mode != RTMP_PS_ONEBYTE) {
        bytestream_put_be24(&p, pkt->ts_field);
        if (mode != RTMP_PS_FOURBYTES) {
            bytestream_put_be24(&p, pkt->size);
            bytestream_put_byte(&p, pkt->type);
            if (mode == RTMP_PS_TWELVEBYTES)
                bytestream_put_le32(&p, pkt->extra);
        }
    }
    // Extended Timestamp
    if (pkt->ts_field == 0xFFFFFF)
        bytestream_put_be32(&p, timestamp);

    // save history
    prev_pkt[pkt->channel_id].channel_id = pkt->channel_id;
    prev_pkt[pkt->channel_id].type       = pkt->type;
    prev_pkt[pkt->channel_id].size       = pkt->size;
    prev_pkt[pkt->channel_id].timestamp  = pkt->timestamp;
    prev_pkt[pkt->channel_id].ts_field   = pkt->ts_field;
    prev_pkt[pkt->channel_id].extra      = pkt->extra;

    if ((ret = ffurl_write(h, pkt_hdr, p - pkt_hdr)) < 0)
        return ret;
    written = p - pkt_hdr + pkt->size;
    while (off < pkt->size) {
        int towrite = FFMIN(chunk_size, pkt->size - off);
        if ((ret = ffurl_write(h, pkt->data + off, towrite)) < 0)
            return ret;
        off += towrite;
        if (off < pkt->size) {
            // continuation chunk: 1-byte fmt=3 Basic Header (assumes CSID < 64)
            uint8_t marker = 0xC0 | pkt->channel_id;
            if ((ret = ffurl_write(h, &marker, 1)) < 0)
                return ret;
            written++;
            if (pkt->ts_field == 0xFFFFFF) {
                uint8_t ts_header[4];
                AV_WB32(ts_header, timestamp);
                if ((ret = ffurl_write(h, ts_header, 4)) < 0)
                    return ret;
                written += 4;
            }
        }
    }
    return written;
}
```
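The header-compression decision at the top of ff_rtmp_packet_write can be isolated into a small pure function. This sketch uses a hypothetical `PrevChunk` struct in place of RTMPPacket and returns the chunk format directly. The logic mirrors the use_delta/mode code above: a full header when there is no usable history, then fmt 1, 2 or 3 depending on what changed.

```c
#include <stdint.h>

/* Hypothetical per-chunk-stream history; csid == 0 means "nothing sent yet". */
typedef struct {
    int      csid;
    uint32_t stream_id;
    uint32_t timestamp;   /* absolute timestamp of the previous message */
    uint32_t ts_field;    /* value previously written in the 24-bit field */
    uint8_t  type;
    uint32_t size;
} PrevChunk;

/* Return the chunk format (0..3) and store the 24-bit timestamp field value. */
static int choose_fmt(const PrevChunk *prev, uint32_t stream_id, uint32_t ts,
                      uint8_t type, uint32_t size, uint32_t *ts_field)
{
    int use_delta = prev->csid && stream_id == prev->stream_id &&
                    ts >= prev->timestamp;
    uint32_t t = use_delta ? ts - prev->timestamp : ts;

    *ts_field = t >= 0xFFFFFF ? 0xFFFFFF : t;
    if (!use_delta)
        return 0;                      /* full 11-byte Msg Header */
    if (type != prev->type || size != prev->size)
        return 1;                      /* delta + length + type */
    if (*ts_field != prev->ts_field)
        return 2;                      /* delta only */
    return 3;                          /* same delta as last time */
}
```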
