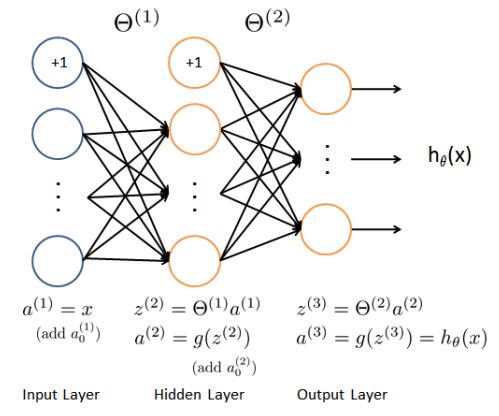

Model representation

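As an example model, the neural network has three layers: an input layer, a hidden layer, and an output layer.

In handwritten digit recognition, each input is the pixel values of a 20x20 image, so the input layer has 400 units, not counting the bias unit (fixed at 1).

The second layer has 25 units. The output layer has 10 units, one per digit class; the digit 0 is represented by label 10.

The training set is again denoted by X and y.

The parameters between the layers are Theta1 and Theta2.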
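The layer sizes above determine the shapes of Theta1 and Theta2. The following is a minimal sketch, assuming the common convention that each weight matrix has one row per unit in the next layer and one column per unit in the current layer plus the bias unit, and assuming a sigmoid activation. The zero-valued data and weights are placeholders used only to check that the dimensions agree.

```matlab
% Layer sizes as stated in the text
input_layer_size  = 400;   % 20x20 pixels
hidden_layer_size = 25;
num_labels        = 10;

% Assumed convention: rows = units in next layer, cols = units in this layer + bias
Theta1_size = [hidden_layer_size, input_layer_size + 1];   % 25 x 401
Theta2_size = [num_labels, hidden_layer_size + 1];         % 10 x 26

% Forward pass on placeholder data, only to confirm the shapes are compatible
X      = zeros(5, input_layer_size);      % 5 dummy examples
Theta1 = zeros(Theta1_size);
Theta2 = zeros(Theta2_size);

a1 = [ones(size(X, 1), 1), X];                               % add bias column: 5 x 401
a2 = [ones(size(X, 1), 1), 1 ./ (1 + exp(-a1 * Theta1'))];   % sigmoid (assumed) + bias: 5 x 26
a3 = 1 ./ (1 + exp(-a2 * Theta2'));                          % output layer: 5 x 10

disp(size(a3));
```

Each row of a3 holds one score per label, so the predicted label for an example would be the column index of the largest entry in its row, with index 10 standing for the digit 0.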

Load data and set parameters

The data file is in .mat format and stores X and y directly.

fileName = 'xxx.mat';

load(fileName, 'X', 'y');

m = size(X, 1);

input_layer_size = 400; % 20x20 Input Images of Digits

hidden_layer_size = 25; % 25 hidden units

num_labels = 10; % 10 labels, from 1 to 10

% (note that we have mapped "0" to label 10)

Visualize data

Randomly select 100 data points and visualize them.

randperm(n) returns a random permutation of the integers 1 to n.
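To illustrate why randperm suits this sampling step, here is a small sketch on toy data. The values of m and k and the toy matrix are hypothetical and chosen only for illustration. Taking the first k entries of the permutation gives k distinct row indices, that is, a random sample without replacement.

```matlab
m = 10;                 % hypothetical number of examples
k = 3;                  % hypothetical sample size

sel = randperm(m);      % random ordering of the integers 1..m
sel = sel(1:k);         % first k entries: k distinct random row indices

X = (1:m)' * [1 1];     % toy data: row i is [i i]
sample = X(sel, :);     % the k randomly selected rows

disp(sample);
```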

sel = randperm(m);

sel = sel(1:100);

displayData(X(sel, :));

The displayData function

function [h, display_array] = displayData(X, example_width)

%DISPLAYDATA Display 2D data in a nice grid

% [h, display_array] = DISPLAYDATA(X, example_width) displays 2D data

% stored in X in a nice grid. It returns the figure handle h and the

% displayed array if requested.

% Set example_width automatically if not passed in

if ~exist('example_width', 'var') || isempty(example_width)

example_width = round(sqrt(size(X, 2)));

end

% Gray Image

colormap(gray);

% Compute rows, cols

[m n] = size(X);

example_height = (n / example_width);

% Compute number of items to display

display_rows = floor(sqrt(m));

display_cols = ceil(m / display_rows);

% Between images padding

pad = 1;

% Setup blank display

display_array = - ones(pad + display_rows * (example_height + pad), ...

pad + display_cols * (example_width + pad));

% Copy each example into a patch on the display array

curr_ex = 1;

for j = 1:display_rows

for i = 1:display_cols

if curr_ex > m,

break;

end

% Copy the patch

% Get the max value of the patch

max_val = max(abs(X(curr_ex, :)));

display_array(pad + (j - 1) * (example_height + pad) + (1:example_height), ...

pad + (i - 1) * (example_width + pad) + (1:example_width)) = ...

reshape(X(curr_ex, :), example_height, example_width) / max_val;

curr_ex = curr_ex + 1;

end

if curr_ex > m,

break;

end

end

% Display Image

h = imagesc(display_array, [-1 1]);

% Do not show axis

axis image off

drawnow;

end

Train parameters

Randomly initialize Theta

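The initial values of $\Theta^{(l)}$ are usually drawn at random from the range $[-\epsilon_{init}, \epsilon_{init}]$. Values in this range keep the parameters small and make learning more efficient. One effective strategy is to set $\epsilon_{init} = \frac{\sqrt{6}}{\sqrt{L_{in} + L_{out}}}$, where $L_{in}$ and $L_{out}$ are the numbers of units in the layers adjacent to $\Theta^{(l)}$.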
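The initialization strategy above can be sketched as follows. This is an illustrative sketch rather than the article's own code: the names L_in, L_out, and W are assumptions, and the sizes are taken from the first layer of the model described earlier (400 inputs, 25 hidden units). With those sizes, epsilon_init comes out to about 0.12.

```matlab
L_in  = 400;   % units feeding into the layer, excluding the bias unit
L_out = 25;    % units in the layer being computed

% epsilon_init = sqrt(6) / sqrt(L_in + L_out)
epsilon_init = sqrt(6) / sqrt(L_in + L_out);

% rand returns values in (0, 1); scale and shift them into
% (-epsilon_init, epsilon_init). The extra column is for the bias unit.
W = rand(L_out, 1 + L_in) * 2 * epsilon_init - epsilon_init;

disp(size(W));
```

The same lines, with L_in = 25 and L_out = 10, would give an initial matrix of the right shape for the second set of weights.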

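This article presents a neural network model implemented in MATLAB for handwritten digit recognition. The model has three layers (input, hidden, and output) with 400, 25, and 10 units respectively. After the data is loaded, a random subset is visualized. The parameters are then trained by randomly initializing the weights and defining the cost function and the backpropagation algorithm. Finally, fmincg is used for optimization, and a prediction function is implemented.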
