特征选择指的是按照一定的规则从原来的特征集合中选择出一小部分最为有效的特征。通过特征选择,一些和任务无关或是冗余的特征被删除,从而提高数据处理的效率。

文本数据的特征选择研究的重点就是用来衡量单词重要性的评估函数,其过程就是首先根据这个评估函数来给每一个单词计算出一个重要性的值,然后根据预先设定好的阈值来选择出所有其值超过这个阈值的单词。

根据特征选择过程与后续数据挖掘算法的关联,特征选择方法可分为过滤、封装和嵌入。

(1)过滤方法(Filter Approach):使用某种独立于数据挖掘任务的方法,在数据挖掘算法运行之前进行特征选择,即先过滤特征集产生一个最有价值的特征子集。或者说,过滤方法只使用数据集来评价每个特征的相关性, 它并不直接优化任何特定的分类器, 也就是说特征子集的选择和后续的分类算法无关。

(2)封装方法(Wrapper Approach):将学习算法的结果作为特征子集评价准则的一部分,根据算法生成规则的分类精度选择特征子集。该类算法具有使得生成规则分类精度高的优点,但特征选择效率较低。封装方法与过滤方法正好相反, 它直接优化某一特定的分类器, 使用后续分类算法来评价候选特征子集的质量。

一般说来, 过滤方法的效率比较高, 结果与采用的分类算法没有关系, 但效果稍差;封装方法占用的运算时间较多, 结果依赖于采用的分类算法, 也因为这样其效果较好。

(3)嵌入方法(embedded Approach):特征选择作为数据挖掘算法的一部分自然地出现。在数据挖掘算法运行期间,算法本身决定使用哪些属性和忽略哪些特征,如决策树C4.5分类算法。

如果将过滤方法和封装方法结合,就产生了第四种方法:

(4)混合方法(Hybrid Approach):过滤方法和封装方法的结合,先用过滤方法从原始数据集中过滤出一个候选特征子集,然后用封装方法从候选特征子集中得到特征子集。该方法具有过滤方法和封装方法两者的优点,即效率高,效果好。

根据特征选择过程是否用到类信息的指导,即其评估函数是否依赖类信息,特征选择可分为监督式特征选择(需要依赖类信息的有监督特征选择,只能用于文本分类)、无监督式特征选择(不需要依赖类信息的无监督特征选择,用于文本分类和文本聚类)。

(1)监督式特征选择(supervised feature selection):使用类信息来进行指导,通过度量类信息与特征之间的相互关系来确定子集大小。

(2)无监督式特征选择(unsupervised feature selection):在没有类信息的指导下,使用样本聚类或特征聚类对聚类过程中的特征贡献度进行评估,根据贡献度的大小进行特征选择。

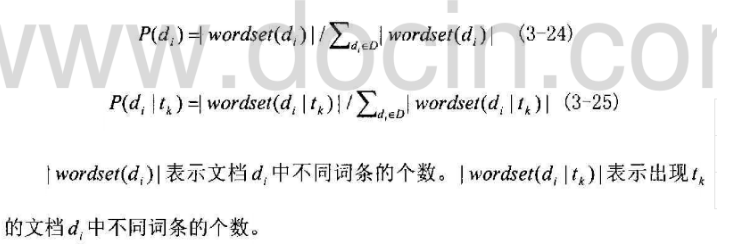

下面,我先介绍有监督特征选择方法IG(Information Gain信息增益)和CHI(X2 statistic卡方统计)及它们相应的Java实现。由于这两者需要的数据是相同的:clusterMember类别成员类,特征所在文本集,DF集,需要做的事情是相同的:进行特征选择得到特征重要性集,所以我写了一个抽象父类Supervisor:

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Set;

/**

*

* @author Angela

*/

public abstract class Supervisor {

protected Map<Integer,List<String>> clusterMember;

protected Map<String,Set<String>> featureIndex;

protected Map<String,Integer> DF;

protected int textNum;

protected Set<String> featureSet;

protected Map<String,Double> featureMap;

/**

* 构造函数,初始化各个数据

* @param clusterMember 类别成员

* @param featureIndex

* @param DF

*/

public Supervisor(Map<Integer,List<String>> clusterMember,

Map<String,Set<String>> featureIndex,Map<String,Integer> DF){

this.clusterMember=clusterMember;

textNum=0;

for(Map.Entry<Integer,List<String>> me: clusterMember.entrySet()){

textNum+=me.getValue().size();

}

this.featureIndex=featureIndex;

this.DF=DF;

featureSet=DF.keySet();

featureMap=new HashMap<String,Double>();

}

/**

* 进行特征选择,得到featureMap

* @return 特征-重要性集

*/

public abstract Map<String,Double> selecting();

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

IG

import java.util.List;

import java.util.Map;

import java.util.Set;

/**

*

* @author Angela

*/

public class IG extends Supervisor{

public IG(Map<Integer,List<String>> clusterMember,

Map<String,Set<String>> featureIndex,Map<String,Integer> DF){

super(clusterMember,featureIndex,DF);

}

/**

* 计算文本集的熵

* @return 总熵

*/

public double computeEntropyOfCluster(){

double entropy=0;

double pro;

double temp;

for(Map.Entry<Integer,List<String>> me:clusterMember.entrySet()){

pro=(double)me.getValue().size()/textNum;

temp=pro*Math.log(pro);

entropy+=temp;

}

return -entropy;

}

/**

* 计算特征的条件熵

* @param f 特征

* @return 特征f的条件熵

*/

public double computeConditionalEntropy(String f){

double ce=0;

double df=DF.get(f);

double nf=textNum-df;

Set<String> text=featureIndex.get(f);

double proOfCF1;

double proOfCF2;

double value1=0;

double value2=0;

for(Map.Entry<Integer,List<String>> me:clusterMember.entrySet()){

int count=0;

List<String> member=me.getValue();

int size=member.size();

for(String path: member){

if(text.contains(path)){

count++;

}

}

double temp1=0;

double temp2=0;

if(count!=0&&count!=size){

proOfCF1=count/df;

temp1=proOfCF1*Math.log(proOfCF1);

proOfCF2=(size-count)/nf;

temp2=proOfCF2*Math.log(proOfCF2);

}

value1+=temp1;

value2+=temp2;

}

ce=(df*value1+nf*value2)/textNum;

return ce;

}

/**

* 进行特征选择,计算所有特征的IG,得到featureMap

* @return 特征-重要性集

*/

@Override

public Map<String,Double> selecting(){

double e=computeEntropyOfCluster();

for(String f: featureSet){

double ce=computeConditionalEntropy(f);

double value=e+ce;

featureMap.put(f, value);

}

return featureMap;

}

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

CHI

这里我选择的是计算CHI总和

import java.util.List;

import java.util.Map;

import java.util.Set;

/**

*

* @author Angela

*/

public class CHI extends Supervisor{

public CHI(Map<Integer,List<String>> clusterMember,

Map<String,Set<String>> featureIndex,Map<String,Integer> DF){

super(clusterMember,featureIndex,DF);

}

/**

* 计算特征的CHI值

* @param feature 特征

* @return 特征f的CHI值

*/

public double computeCHI(String feature){

double macro_chi=0;

double pf=DF.get(feature);

double nf=textNum-pf;

Set<String> text=featureIndex.get(feature);

for(Map.Entry<Integer,List<String>> me:clusterMember.entrySet()){

List<String> member=me.getValue();

double pc=member.size();

double nc=textNum-pc;

double value1=pf*nf*pc*nc;

double pf_pc=0;

for(String path: member){

if(text.contains(path)){

pf_pc++;

}

}

double pf_nc=pf-pf_pc;

double nf_pc=pc-pf_pc;

double nf_nc=nc-pf_nc;

double value2=Math.pow(pf_pc*nf_pc-pf_nc*nf_nc,2);

double chi=pc*value2/value1;

macro_chi+=chi;

}

return macro_chi;

}

/**

* 进行特征选择,计算所有特征的CHI,得到featureMap

* @return 特征-重要性集

*/

public Map<String,Double> selecting(){

for(String feature: featureSet){

double value=computeCHI(feature);

featureMap.put(feature, value);

}

return featureMap;

}

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

然后我们写一个方法来将特征选择后的结果保存到arff文件中:

public static void supervisor(String segmentPath,String DFPath,

int num,String TFIDFPath,String savePath){

Map<String,Integer> DF=Reader.toIntMap(DFPath);

Map<String,Integer> subDF=MapUtil.getSubMap(DF, 3, 3000);

Map<String,Set<String>> dataSet=Reader.readDataSet(segmentPath);

Map<String,Set<String>> featureIndex=

GetData.getFeatureIndex(dataSet, subDF.keySet());

Map<Integer,List<String>> clusterMember=

GetData.getClusterMember(segmentPath);

Supervisor sv=new CHI(clusterMember,featureIndex,subDF);

Map<String,Double> featureMap=sv.selecting();

Set<String> featureSet=MapUtil.getSubMap(featureMap, num).keySet();

Map<String,Map<String,Double>> tfidf=

GetData.getTFIDF(TFIDFPath, featureSet);

List<String> labelList=GetData.getLabelList(TFIDFPath);

String[] labels=new String[labelList.size()];

labelList.toArray(labels);

//写出arff文件,进行测试

Writer.saveAsArff(tfidf, featureSet, labels, savePath);

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

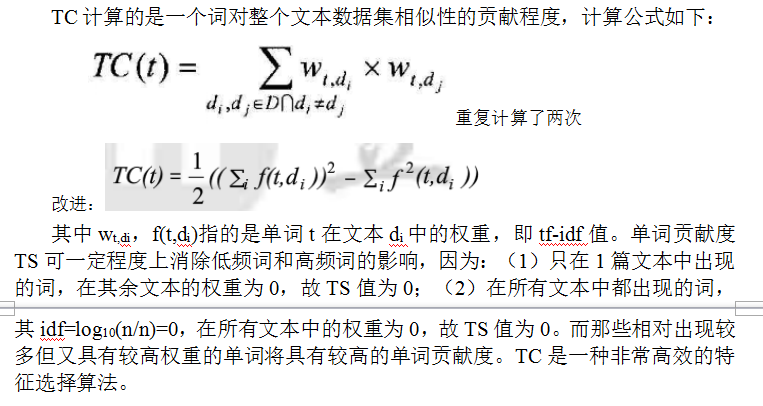

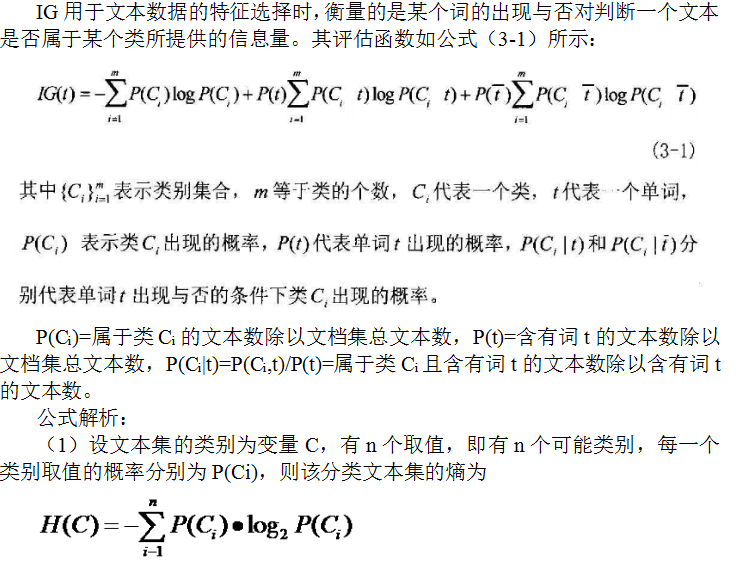

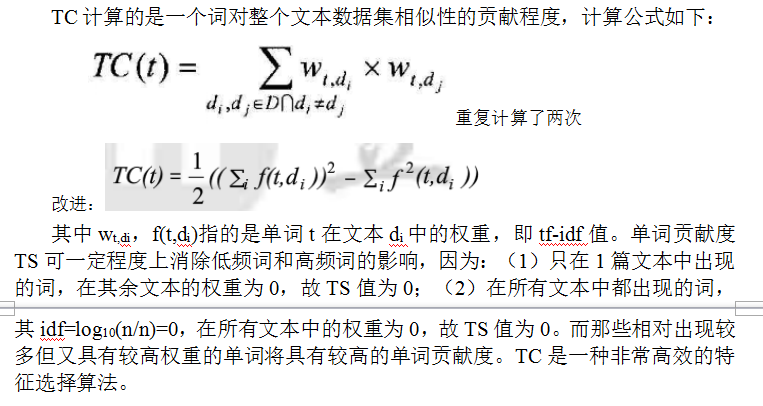

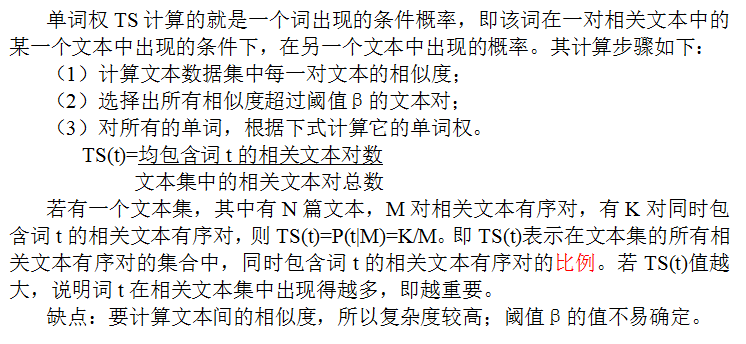

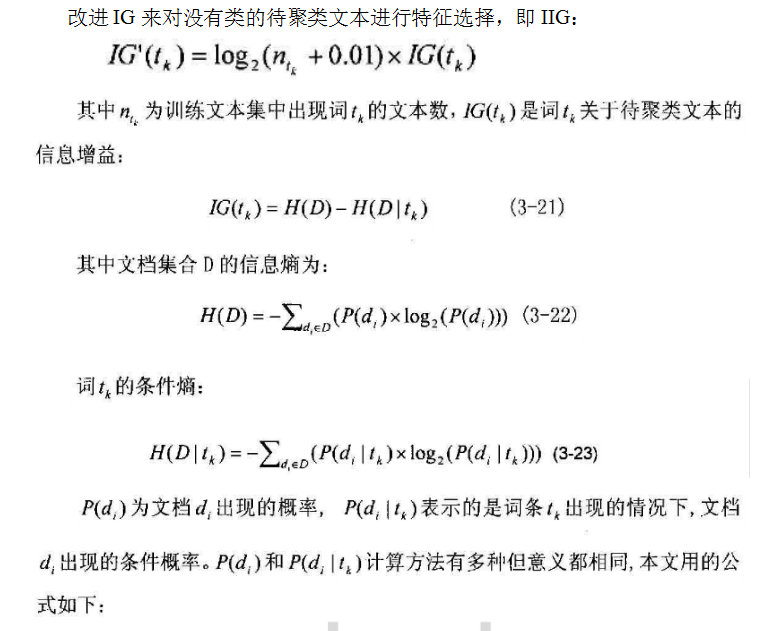

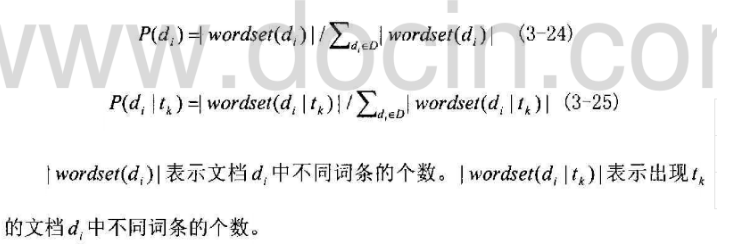

下面,介绍无监督特征选择方法TC(Term Contribution单词贡献度)、TS(Term Strength单词权)、IIG(Improved Information Gain改进的用于无监督的IG)及它们各自的Java实现。三者的分类效果都很好,但TS的时间复杂度是N*N(N为文本集的文本总数),花费的时间高于TC和IIG。

TC

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Set;

import utility.GetData;

import utility.MapUtil;

import utility.Reader;

import utility.Writer;

/**

*

* @author Angela

*/

public class TC {

/**

* 计算特征的TC值

* @param index 包含有某个特征的所有文本及在文本中的权重的集合

* @return 该特征的TC值

*/

private static double computeTC(Map<String,Double> index){

double tc=0;

double value1=0;

double value2=0;

for(Map.Entry<String,Double> me: index.entrySet()){

double value=me.getValue();

value1+=value;

value2+=value*value;

}

tc=(value1*value1-value2)/2;

return tc;

}

/**

* 进行特征选择,计算所有特征的TC贡献度,得到featureMap

* @return 特征-重要性集

*/

public static Map<String,Double> selecting(

Map<String,Map<String,Double>> termIndex){

Map<String,Double> featureMap=new HashMap<String,Double>();

for(Map.Entry<String,Map<String,Double>> me:termIndex.entrySet()){

String f=me.getKey();

double value=computeTC(me.getValue());

featureMap.put(f, value);

}

return featureMap;

}

public static void main(String args[]){

String segmentPath="data\\r8-train-stemmed";

String DFPath="data\\r8trainDF.txt";

int num=1000;

String TFIDFPath="data\\r8trainTFIDF2";

String savePath="E:\\tc.arff";

Map<String,Integer> DF=Reader.toIntMap(DFPath);

Set<String> subSet=MapUtil.getSubMap(DF, 3, 3000).keySet();

Map<String,Map<String,Double>> TFIDF=

GetData.getTFIDF(TFIDFPath, subSet);

Map<String,Map<String,Double>> termIndex=

GetData.getTermIndex(TFIDF, subSet);

Map<String,Double> featureMap=TC.selecting(termIndex);

Set<String> featureSet=MapUtil.getSubMap(featureMap, num).keySet();

Map<String,Map<String,Double>> tfidf=

GetData.getTFIDF(TFIDF, featureSet);

List<String> labelList=GetData.getLabelList(TFIDFPath);

String[] labels=new String[labelList.size()];

labelList.toArray(labels);

Writer.saveAsArff(tfidf, featureSet, labels, savePath);

}

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

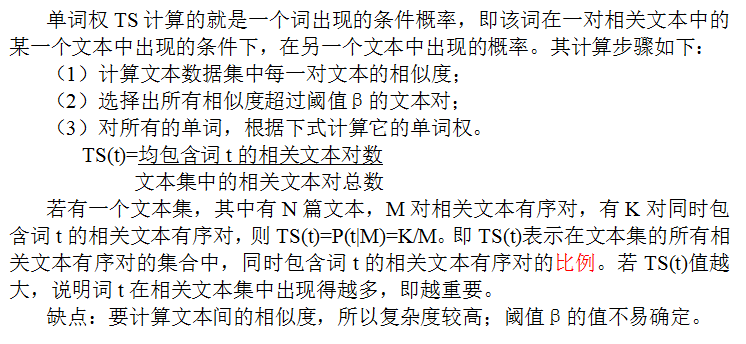

TS

import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Set;

import utility.GetData;

import utility.MapUtil;

import utility.MathUtil;

import utility.Reader;

import utility.Writer;

/**

*

* @author Angela

*/

public class TS {

public static Map<String,Double> selecting(

Map<String,Map<String,Double>> TFIDF,double threshold){

int textNum=TFIDF.size();

List<Map<String,Double>> textVSM=new

ArrayList<Map<String,Double>>(TFIDF.values());

Map<String,Integer> termSimTextNum=new HashMap<String,Integer>();

int simTextCount=0;

for(int i=0;i<textNum;i++){

List<String> simText=new ArrayList<String>();

Map<String,Double> text1=textVSM.get(i);

Set<String> featureSet=text1.keySet();

for(int j=0;j<textNum;j++){

if(i!=j){

Map<String,Double> text2=textVSM.get(j);

double cos=MathUtil.calSim(text1,text2);

if(cos>threshold){

simTextCount++;

for(String feature: featureSet){

if(text2.containsKey(feature)){

if(termSimTextNum.containsKey(feature)){

termSimTextNum.put(feature,

termSimTextNum.get(feature)+1);

}else{

termSimTextNum.put(feature, 1);

}

}

}

}

}

}

}

System.out.println("相关文本对总数:"+simTextCount);

Map<String,Double> featureMap=new HashMap<String,Double>();

for(Map.Entry<String,Integer> me: termSimTextNum.entrySet()){

String f=me.getKey();

double value=me.getValue()*1.0/simTextCount;

featureMap.put(f, value);

}

return featureMap;

}

public static void main(String args[]){

String segmentPath="data\\r8-train-stemmed";

String DFPath="data\\r8trainDF.txt";

int num=1000;

String TFIDFPath="data\\r8trainTFIDF2";

String savePath="E:\\ts.arff";

Map<String,Integer> DF=Reader.toIntMap(DFPath);

Map<String,Integer> subDF=MapUtil.getSubMap(DF, 3, 3000);

Set<String> subSet=subDF.keySet();

Map<String,Map<String,Double>> TFIDF=

GetData.getTFIDF(TFIDFPath, subSet);

Map<String,Double> featureMap=TS.selecting(TFIDF,0.5);

Set<String> featureSet=MapUtil.getSubMap(featureMap, num).keySet();

Map<String,Map<String,Double>> tfidf=

GetData.getTFIDF(TFIDF, featureSet);

List<String> labelList=GetData.getLabelList(TFIDFPath);

String[] labels=new String[labelList.size()];

labelList.toArray(labels);

Writer.saveAsArff(tfidf, featureSet, labels, savePath);

}

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

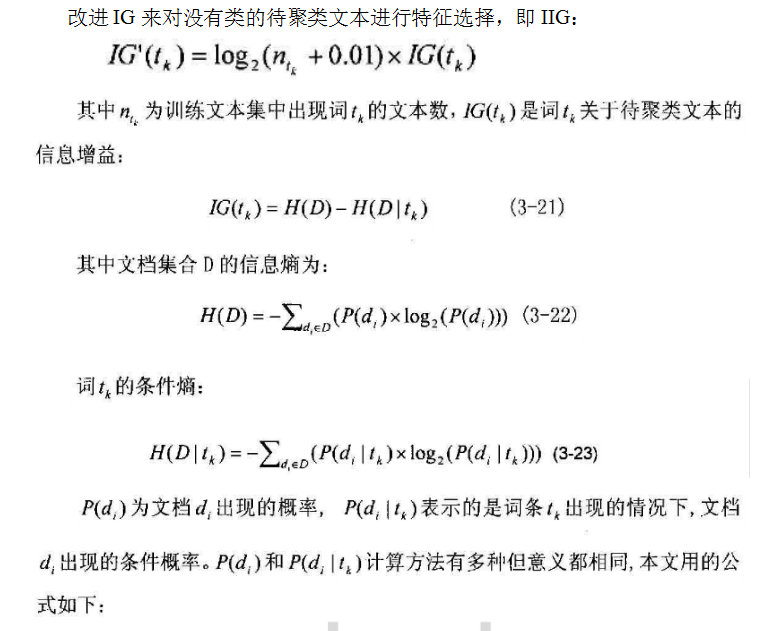

IIG

import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Set;

import utility.GetData;

import utility.MapUtil;

import utility.Reader;

import utility.Writer;

public class IIG {

public static Map<String,Double> selecting(

Map<String,Set<String>> dataSet,Map<String,Integer> DF){

Set<String> featureSet=DF.keySet();

Map<String,Set<String>> featureIndex=GetData.

getFeatureIndex(dataSet,featureSet);

Map<String,List<Integer>> featureNum=new HashMap<String,List<Integer>>();

Map<String,Integer> featureSum=new HashMap<String,Integer>();

for(Map.Entry<String,Set<String>> me: featureIndex.entrySet()){

String f=me.getKey();

Set<String> texts=me.getValue();

List<Integer> list=new ArrayList<Integer>();

int sum=0;

for(String text: texts){

int len=dataSet.get(text).size();

list.add(len);

sum+=len;

}

featureNum.put(f, list);

featureSum.put(f, sum);

}

Map<String,Double> featureMap=new HashMap<String,Double>();

for(String f: featureSet){

int dfValue=DF.get(f);

List<Integer> list=featureNum.get(f);

int sum=featureSum.get(f);

double ce=0;

for(int num: list){

double pro=num*1.0/sum;

ce+=(pro*Math.log(pro));

}

double value=-Math.log(dfValue+0.01)*ce;

featureMap.put(f, value);

}

return featureMap;

}

public static void main(String args[]){

String segmentPath="data\\r8-train-stemmed";

String DFPath="data\\r8trainDF.txt";

int num=1000;

String TFIDFPath="data\\r8trainTFIDF2";

String savePath="E:\\iig.arff";

Map<String,Integer> DF=Reader.toIntMap(DFPath);

Map<String,Integer> subDF=MapUtil.getSubMap(DF, 3, 3000);

Set<String> subSet=subDF.keySet();

Map<String,Set<String>> dataSet=Reader.readDataSet(segmentPath);

Map<String,Map<String,Double>> TFIDF=

GetData.getTFIDF(TFIDFPath, subSet);

Map<String,Double> featureMap=IIG.selecting(dataSet,subDF);

//MapUtil.print(featureMap, 100);

Set<String> featureSet=MapUtil.getSubMap(featureMap, num).keySet();

Map<String,Map<String,Double>> tfidf=

GetData.getTFIDF(TFIDF, featureSet);

List<String> labelList=GetData.getLabelList(TFIDFPath);

String[] labels=new String[labelList.size()];

labelList.toArray(labels);

Writer.saveAsArff(tfidf, featureSet, labels, savePath);

}

}

2276

2276

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?