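I have been learning TensorFlow recently, and today I am writing up a summary of its loss functions. Summarizing is always worthwhile, and it also makes things easier for others to look up. The material comes from the book 《TensorFlow机器学习实战指南》 (TensorFlow Machine Learning Cookbook).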

import tensorflow as tf  # the code below uses the TensorFlow 1.x session API

import matplotlib.pyplot as plt

Loss functions for regression

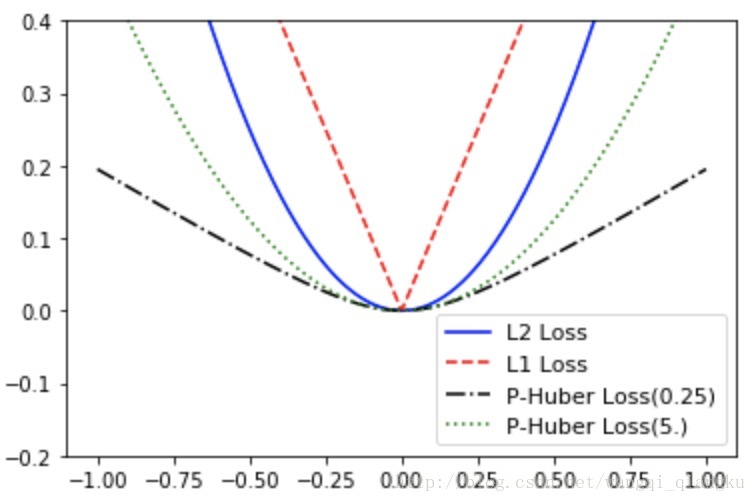

x_vals = tf.linspace(-1.,1.,500)

target = tf.constant(0.)

L2 norm loss (also called the Euclidean loss)

sess = tf.InteractiveSession()

l2_y_vals = tf.square(target-x_vals)

l2_y_out = sess.run(l2_y_vals)

L1 norm loss (also called the absolute loss)
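
To see how the L1 loss differs from the L2 loss above, the two formulas can be checked by hand without TensorFlow. This is only a minimal sketch; the helper names `l1_loss` and `l2_loss` are made up for illustration and are not part of TensorFlow.

```python
def l2_loss(prediction, target=0.0):
    """Squared error, the same formula as tf.square(target - x_vals)."""
    return (target - prediction) ** 2


def l1_loss(prediction, target=0.0):
    """Absolute error, the same formula as tf.abs(target - x_vals)."""
    return abs(target - prediction)


# Compare both losses at a small error and at a large error.
for error in (0.5, 2.0):
    print(error, l1_loss(error), l2_loss(error))
```

For errors smaller than 1 the squared error is the smaller of the two, and for errors larger than 1 it is the larger one, which is why the L2 loss reacts more strongly to outliers.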

l1_y_vals = tf.abs(target-x_vals)

l1_y_out = sess.run(l1_y_vals)

Pseudo-Huber loss
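
The Pseudo-Huber formula used in this section, delta^2 * (sqrt(1 + (error / delta)^2) - 1), behaves like half the squared error near zero and grows roughly linearly, with slope delta, for large errors. The plain-Python sketch below checks both regimes; `pseudo_huber` is a hypothetical helper name, not a TensorFlow function.

```python
import math


def pseudo_huber(error, delta):
    """delta^2 * (sqrt(1 + (error / delta)^2) - 1)."""
    return delta ** 2 * (math.sqrt(1.0 + (error / delta) ** 2) - 1.0)


# Small error relative to delta: close to 0.5 * error^2.
small = pseudo_huber(0.01, 5.0)
print(small, 0.5 * 0.01 ** 2)

# Large error relative to delta: close to delta * (|error| - delta).
large = pseudo_huber(100.0, 0.25)
print(large, 0.25 * (100.0 - 0.25))
```

This explains the two curves in the plot: on the interval [-1, 1], delta = 5 stays in the quadratic regime, while delta = 0.25 flattens into a nearly straight line once the error exceeds the threshold.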

delta1 = tf.constant(0.25)

phuber1_y_vals = tf.multiply(tf.square(delta1),tf.sqrt(1.+tf.square((target-x_vals)/delta1))-1.)

phuber1_y_out = sess.run(phuber1_y_vals)

delta2 = tf.constant(5.)

phuber2_y_vals = tf.multiply(tf.square(delta2),tf.sqrt(1.+tf.square((target-x_vals)/delta2))-1.)

phuber2_y_out = sess.run(phuber2_y_vals)

Plotting the regression loss functions

x_array = sess.run(x_vals)

plt.plot(x_array,l2_y_out,'b-',label='L2 Loss')

plt.plot(x_array,l1_y_out,'r--',label='L1 Loss')

plt.plot(x_array,phuber1_y_out,'k-.',label = 'P-Huber Loss(0.25)')

plt.plot(x_array,phuber2_y_out,'g:',label= 'P-Huber Loss(5.)')

plt.ylim(-0.2,0.4)

plt.legend(loc='lower right',prop={'size':11})

plt.show()

Loss functions for classification

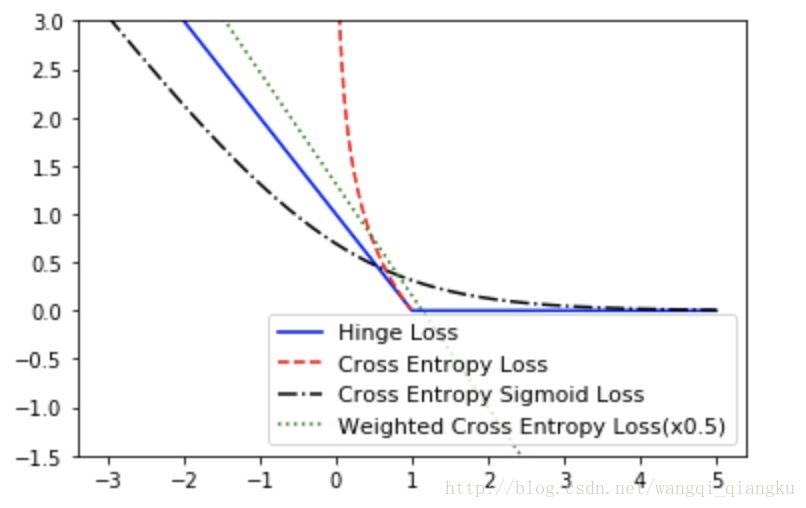

x_vals = tf.linspace(-3.,5.,500)

target = tf.constant(1.)

targets = tf.fill([500,],1.)

Hinge loss
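
The hinge loss is max(0, 1 - target * prediction), so with a target of 1 it is zero once the prediction reaches 1 and grows linearly below that. A small plain-Python sketch (the name `hinge_loss` is hypothetical) makes this concrete:

```python
def hinge_loss(prediction, target=1.0):
    """max(0, 1 - target * prediction), for targets of +1 or -1."""
    return max(0.0, 1.0 - target * prediction)


# Predictions on the correct side of the margin cost nothing;
# the loss increases linearly as the prediction moves the wrong way.
for prediction in (2.0, 1.0, 0.0, -3.0):
    print(prediction, hinge_loss(prediction))
```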

hinge_y_vals = tf.maximum(0.,1.-tf.multiply(target,x_vals))

hinge_y_out = sess.run(hinge_y_vals)

Binary cross-entropy loss
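
The binary cross-entropy is -target * log(p) - (1 - target) * log(1 - p), which only makes sense when the prediction p is a probability strictly between 0 and 1. The sketch below is plain Python with a hypothetical helper name, and it returns NaN outside that interval on purpose to mirror what a plot of the curve would show.

```python
import math


def binary_cross_entropy(p, target=1.0):
    """-target * log(p) - (1 - target) * log(1 - p) for 0 < p < 1."""
    if not 0.0 < p < 1.0:
        # The logarithms are undefined here, so report NaN.
        return float("nan")
    return -target * math.log(p) - (1.0 - target) * math.log(1.0 - p)


# A confident correct prediction is cheap, a confident wrong one is expensive.
for p in (0.9, 0.5, 0.1, -1.0):
    print(p, binary_cross_entropy(p))
```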

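# x_vals spans [-3, 5], but the logarithms are only defined for 0 < x < 1;
# outside that interval the expression evaluates to NaN and is not drawn.
xentropy_y_vals = -tf.multiply(target,tf.log(x_vals))-tf.multiply((1.-target),tf.log(1.-x_vals))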

xentropy_y_out = sess.run(xentropy_y_vals)

Sigmoid cross-entropy loss
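
Unlike the plain cross-entropy above, this loss takes raw logits and applies the sigmoid internally. As far as I understand the TensorFlow documentation, it evaluates the numerically stable form max(x, 0) - x * z + log(1 + exp(-|x|)) for logit x and label z. The plain-Python sketch below (hypothetical helper names) compares that form with the direct definition:

```python
import math


def sigmoid_xent_naive(logit, label):
    """Cross-entropy applied directly to sigmoid(logit)."""
    p = 1.0 / (1.0 + math.exp(-logit))
    return -label * math.log(p) - (1.0 - label) * math.log(1.0 - p)


def sigmoid_xent_stable(logit, label):
    """Equivalent form that avoids overflow for large |logit|."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


# Both forms agree for moderate logits.
print(sigmoid_xent_naive(2.0, 1.0), sigmoid_xent_stable(2.0, 1.0))

# The stable form still works where the naive one would fail.
print(sigmoid_xent_stable(1000.0, 0.0))
```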

xentropy_sigmoid_y_vals = tf.nn.sigmoid_cross_entropy_with_logits(logits=x_vals,labels=targets)

xentropy_sigmoid_y_out = sess.run(xentropy_sigmoid_y_vals)

Weighted cross-entropy loss
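
The weighted variant is the sigmoid cross-entropy with the positive-class term multiplied by a weight. Assuming the formula pos_weight * z * -log(sigmoid(x)) + (1 - z) * -log(1 - sigmoid(x)) for logit x and label z, a plain-Python sketch with a hypothetical helper name looks like this:

```python
import math


def weighted_sigmoid_xent(logit, label, pos_weight):
    """Sigmoid cross-entropy with the positive term scaled by pos_weight."""
    p = 1.0 / (1.0 + math.exp(-logit))
    positive_term = -pos_weight * label * math.log(p)
    negative_term = -(1.0 - label) * math.log(1.0 - p)
    return positive_term + negative_term


# With label 1 and weight 0.5, the loss is half the unweighted loss.
print(weighted_sigmoid_xent(2.0, 1.0, 0.5), weighted_sigmoid_xent(2.0, 1.0, 1.0))

# With label 0, the weight has no effect.
print(weighted_sigmoid_xent(2.0, 0.0, 0.5), weighted_sigmoid_xent(2.0, 0.0, 1.0))
```

Since every label in this post is 1, a weight of 0.5 should simply halve the sigmoid cross-entropy curve.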

weight = tf.constant(0.5)

# Arguments in positional order: labels (targets), logits, pos_weight.
xentropy_weighted_y_vals = tf.nn.weighted_cross_entropy_with_logits(targets,x_vals,weight)

xentropy_weighted_y_out = sess.run(xentropy_weighted_y_vals)

Softmax cross-entropy loss
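
The softmax cross-entropy first turns the unscaled logits into a probability distribution with softmax and then takes the cross-entropy against the target distribution. The following plain-Python sketch (the function name is hypothetical) reproduces the computation by hand for the same logits and targets that this section uses:

```python
import math


def softmax_cross_entropy(logits, target_dist):
    """-sum(target * log_softmax(logits)), using the log-sum-exp trick."""
    largest = max(logits)
    log_sum_exp = largest + math.log(sum(math.exp(v - largest) for v in logits))
    log_probs = [v - log_sum_exp for v in logits]
    return -sum(t * lp for t, lp in zip(target_dist, log_probs))


loss = softmax_cross_entropy([1.0, -3.0, 10.0], [0.1, 0.02, 0.88])
print(round(loss, 4))  # about 1.1601
```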

unscaled_logits = tf.constant([[1.,-3.,10.]])

target_dist = tf.constant([[0.1,0.02,0.88]])

softmax_entropy = tf.nn.softmax_cross_entropy_with_logits_v2(logits=unscaled_logits,labels = target_dist)

sess.run(softmax_entropy)

Sparse softmax cross-entropy loss
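
The sparse version takes the index of the true class instead of a full target distribution, which amounts to using a one-hot target. A minimal plain-Python sketch, with a hypothetical function name, for the logits and class index used in this section:

```python
import math


def sparse_softmax_cross_entropy(logits, class_index):
    """-log_softmax(logits)[class_index], using the log-sum-exp trick."""
    largest = max(logits)
    log_sum_exp = largest + math.log(sum(math.exp(v - largest) for v in logits))
    return log_sum_exp - logits[class_index]


# Class 2 already has by far the largest logit, so the loss is tiny.
sparse_loss = sparse_softmax_cross_entropy([1.0, -3.0, 10.0], 2)
print(sparse_loss)

# Picking a class with a small logit is penalized heavily.
print(sparse_softmax_cross_entropy([1.0, -3.0, 10.0], 1))
```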

unscaled_logits = tf.constant([[1.,-3.,10.]])

sparse_target_dist = tf.constant([2])

sparse_xentropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=unscaled_logits,labels=sparse_target_dist)

sess.run(sparse_xentropy)

Plotting the classification loss functions

x_array = sess.run(x_vals)

plt.plot(x_array,hinge_y_out,'b-',label='Hinge Loss')

plt.plot(x_array,xentropy_y_out,'r--',label='Cross Entropy Loss')

plt.plot(x_array,xentropy_sigmoid_y_out,'k-.',label='Cross Entropy Sigmoid Loss')

plt.plot(x_array,xentropy_weighted_y_out,'g:',label='Weighted Cross Entropy Loss(x0.5)')

plt.ylim(-1.5,3)

plt.legend(loc='lower right',prop={'size':11})

plt.show()
