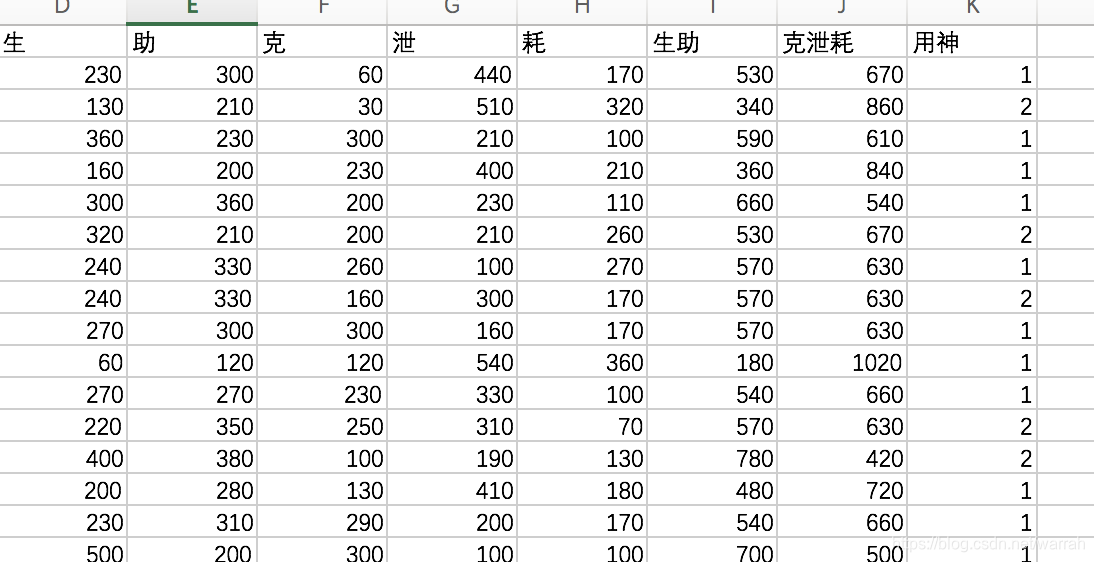

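I annotated the 108 example charts in Wei Qianli's (韦千里) 《呱呱集》 and tried using the kNN algorithm to predict each chart's 用神 (useful god). As a first pass, the label takes only the two simplest values: “克泄耗” (control, drain, exhaust) and “生助” (generate, assist).

The calculation rules follow Li Hongcheng's (李洪城) 《具体断四柱导读》.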

1 kNN algorithm

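```python
import numpy as np
import pandas as pd
from sklearn import metrics
from sklearn.neighbors import KNeighborsClassifier
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler


def get_datas():
    """Load the annotated charts: five score columns plus the 用神 label."""
    file_name = r'/Users/dzm/Documents/bazi/201903291933.csv'
    df = pd.read_csv(file_name, usecols=['生', '助', '克', '泄', '耗', '用神'], encoding='GBK')
    # Select columns by name so the result does not depend on their order in the file.
    x_data = df[['生', '助', '克', '泄', '耗']].to_numpy()
    y_data = df['用神'].to_numpy()
    return x_data, y_data


def predict_1(x_data, y_data):
    # Hold out 20% of the charts for testing.
    x_train, x_test, y_train, y_test = train_test_split(x_data, y_data, test_size=0.2)

    # Scale every feature to [0, 1]; fit on the training split only so the
    # test split does not leak into the scaling.
    scaler = MinMaxScaler()
    x_train = scaler.fit_transform(x_train)
    x_test = scaler.transform(x_test)

    knn = KNeighborsClassifier(n_neighbors=3)
    knn.fit(x_train, y_train)
    print(knn)

    expected = y_test
    predicted = knn.predict(x_test)
    print(metrics.classification_report(expected, predicted))

    # Sort the labels so the confusion-matrix rows and columns have a fixed order.
    label = sorted(set(y_data))
    print(metrics.confusion_matrix(expected, predicted, labels=label))


if __name__ == '__main__':
    x_data, y_data = get_datas()
    predict_1(x_data, y_data)
```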
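A side note on the `MinMaxScaler` step: it rescales each feature column to the range [0, 1] with (x - min) / (max - min), so that no single score dominates the distances kNN computes. Below is a minimal sketch of that transform. The numbers are made up for illustration and are not taken from the 《呱呱集》 data.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

# Hypothetical scores for three charts; the columns stand for 生, 助, 克, 泄, 耗.
scores = np.array([
    [10.0, 0.0, 5.0, 2.0, 8.0],
    [20.0, 4.0, 5.5, 6.0, 0.0],
    [30.0, 8.0, 6.0, 4.0, 4.0],
])

scaled = MinMaxScaler().fit_transform(scores)

# The same transform written out by hand, column by column.
col_min = scores.min(axis=0)
col_max = scores.max(axis=0)
manual = (scores - col_min) / (col_max - col_min)

print(scaled)
print(np.allclose(scaled, manual))
```

After scaling, the smallest value in every column is 0 and the largest is 1, whatever the original range was.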

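The kNN script (`predict_1`) prints three blocks of output. How should they be read?

The first block lists the classifier's parameters; for an explanation of each one, see "sklearn 翻译笔记:KNeighborsClassifier".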

```
KNeighborsClassifier(algorithm='auto', leaf_size=30, metric='minkowski',
                     metric_params=None, n_jobs=None, n_neighbors=3, p=2,
                     weights='uniform')
```
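With the parameters printed above (`n_neighbors=3`, `metric='minkowski'` with `p=2`, `weights='uniform'`), the prediction rule amounts to this: find the three training points closest in Euclidean distance and take a majority vote. The following is a rough NumPy sketch of that rule, not scikit-learn's actual implementation (which uses tree or brute-force search and its own tie-breaking). The function name `knn_predict` and the toy data are assumptions made for illustration.

```python
import numpy as np
from collections import Counter


def knn_predict(x_train, y_train, x_query, k=3):
    """Predict one label by majority vote among the k nearest training points."""
    # Euclidean distance (Minkowski with p=2) from the query to every training point.
    distances = np.sqrt(((x_train - x_query) ** 2).sum(axis=1))
    # Indices of the k smallest distances.
    nearest = np.argsort(distances)[:k]
    # Uniform weights: each neighbour casts exactly one vote.
    votes = Counter(y_train[nearest].tolist())
    return votes.most_common(1)[0][0]


# Toy, made-up data: two features already scaled to [0, 1], labels 1 and 2.
x_train = np.array([[0.1, 0.2], [0.2, 0.1], [0.15, 0.25],
                    [0.8, 0.9], [0.9, 0.8], [0.85, 0.7]])
y_train = np.array([1, 1, 1, 2, 2, 2])

print(knn_predict(x_train, y_train, np.array([0.2, 0.2])))
print(knn_predict(x_train, y_train, np.array([0.7, 0.8])))
```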

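To read the second block you need three concepts: recall, precision, and their harmonic mean (the F1 score); see "准确率与召回率" and "如何解释召回率与准确率?". Recall, also called 查全率 (a name that makes it easier to grasp), is the fraction of all samples truly belonging to a class that the classifier assigns to that class. Precision (查准率) is measured over the samples the classifier assigned to a class: the fraction of them that truly belong to it. The report below gives precision and recall for the two classes, 1 and 2.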

```
              precision    recall  f1-score   support

           1       0.83      0.45      0.59        11
           2       0.62      0.91      0.74        11

   micro avg       0.68      0.68      0.68        22
   macro avg       0.73      0.68      0.66        22
weighted avg       0.73      0.68      0.66        22
```
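For reference, the first three columns of the report follow the standard per-class definitions, written in terms of true positives (TP), false positives (FP), and false negatives (FN). The `support` column is simply the number of test samples that truly belong to each class.

```latex
\[
\text{precision} = \frac{TP}{TP + FP}, \qquad
\text{recall} = \frac{TP}{TP + FN}, \qquad
F_1 = \frac{2 \cdot \text{precision} \cdot \text{recall}}{\text{precision} + \text{recall}}
\]
```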

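The third block is the confusion matrix, and reading it requires the evaluation metrics built on it; "ML01 机器学习后利用混淆矩阵Confusion matrix 进行结果分析" makes this easy to follow. The matrix shows that the result is not ideal: of the 11 test samples in class 1, only 5 are classified correctly and 6 are assigned to class 2.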

```
[[ 5  6]
 [ 1 10]]
```
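The classification report and the confusion matrix describe the same predictions, so the report's numbers can be recomputed from the matrix. The sketch below does that, relying on scikit-learn's convention that rows are true classes and columns are predicted classes.

```python
import numpy as np

# Confusion matrix from the kNN run above: rows are true classes (1, 2),
# columns are predicted classes (1, 2).
cm = np.array([[5, 6],
               [1, 10]])

true_positives = np.diag(cm)
precision = true_positives / cm.sum(axis=0)  # correct / all predicted as that class
recall = true_positives / cm.sum(axis=1)     # correct / all truly in that class
f1 = 2 * precision * recall / (precision + recall)
accuracy = true_positives.sum() / cm.sum()

for label, p, r, f in zip([1, 2], precision, recall, f1):
    print(f"class {label}: precision={p:.2f} recall={r:.2f} f1={f:.2f}")
print(f"accuracy={accuracy:.2f}")
```

The overall accuracy, 15 correct out of 22, is the 0.68 that the report lists in its `micro avg` row.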

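2 Decision tree

Decision trees build on the concept of information entropy; see "白话信息熵". Note that the run below uses scikit-learn's default split criterion, `criterion='gini'`, as the printed parameters show.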
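To make the idea concrete, here is a minimal sketch of two impurity measures a decision tree can use to judge a split: Shannon entropy and Gini impurity. The helper names `entropy` and `gini` and the toy label arrays are assumptions for illustration. In scikit-learn the measure is chosen with `DecisionTreeClassifier(criterion='entropy')` or `criterion='gini'`.

```python
import numpy as np


def entropy(labels):
    """Shannon entropy, in bits, of the class distribution in `labels`."""
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())


def gini(labels):
    """Gini impurity of the class distribution in `labels`."""
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return float(1.0 - (p ** 2).sum())


# Toy label sets: an evenly mixed node and a mostly pure node.
balanced = np.array([1, 1, 2, 2])
skewed = np.array([1, 1, 1, 2])

print(entropy(balanced), gini(balanced))
print(entropy(skewed), gini(skewed))
```

Both measures are highest for the evenly mixed node and fall as a node becomes purer, which is why the tree prefers splits that lower them.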

```python
from sklearn import metrics
from sklearn import tree
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler


def predict_2(x_data, y_data):
    # x_data and y_data come from get_datas() in the kNN section.
    # Hold out 10% of the charts for testing.
    x_train, x_test, y_train, y_test = train_test_split(x_data, y_data, test_size=0.1)

    # Scale every feature to [0, 1]; fit on the training split only.
    scaler = MinMaxScaler()
    x_train = scaler.fit_transform(x_train)
    x_test = scaler.transform(x_test)

    clf = tree.DecisionTreeClassifier()
    clf.fit(x_train, y_train)
    print(clf)

    expected = y_test
    predicted = clf.predict(x_test)
    print(metrics.classification_report(expected, predicted))

    # Sort the labels so the confusion-matrix rows and columns have a fixed order.
    label = sorted(set(y_data))
    print(metrics.confusion_matrix(expected, predicted, labels=label))
```

The output is:

```
DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=None,
                       max_features=None, max_leaf_nodes=None,
                       min_impurity_decrease=0.0, min_impurity_split=None,
                       min_samples_leaf=1, min_samples_split=2,
                       min_weight_fraction_leaf=0.0, presort=False, random_state=None,
                       splitter='best')
```

```
              precision    recall  f1-score   support

           1       0.38      1.00      0.55         3
           2       1.00      0.38      0.55         8

   micro avg       0.55      0.55      0.55        11
   macro avg       0.69      0.69      0.55        11
weighted avg       0.83      0.55      0.55        11
```

```
[[3 0]
 [5 3]]
```
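In this run the two classes have different support (3 and 8), so the `macro avg` and `weighted avg` rows of the report diverge. Here is a short sketch of how the two precision averages arise from the matrix above, again assuming rows are true classes and columns are predicted classes.

```python
import numpy as np

# Confusion matrix from the decision-tree run above
# (rows: true classes 1, 2; columns: predicted classes 1, 2).
cm = np.array([[3, 0],
               [5, 3]])

support = cm.sum(axis=1)                  # true samples per class: [3, 8]
precision = np.diag(cm) / cm.sum(axis=0)  # per-class precision: [3/8, 3/3]

macro_precision = precision.mean()                           # every class counts equally
weighted_precision = np.average(precision, weights=support)  # classes weighted by support

print(f"macro avg precision    = {macro_precision:.2f}")
print(f"weighted avg precision = {weighted_precision:.2f}")
```

The weighted figure looks better only because the larger class happens to have perfect precision. With a test set of 11 samples, neither average should be read as a stable estimate.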
