Creating a database

CREATE DATABASE [IF NOT EXISTS] database_name

[COMMENT database_comment]

[LOCATION hdfs_path];

Dropping a database

DROP DATABASE [IF EXISTS] database_name [RESTRICT|CASCADE];

-- RESTRICT: the default; only an empty database can be dropped

-- CASCADE: drops the database together with the tables it contains

Creating a table

CREATE [TEMPORARY] [EXTERNAL] TABLE [IF NOT EXISTS] [db_name.]table_name

[(col_name data_type [COMMENT col_comment], ...)]

[COMMENT table_comment]

[PARTITIONED BY (col_name data_type [COMMENT col_comment], ...)]

[CLUSTERED BY (col_name, col_name, ...) [SORTED BY (col_name [ASC|DESC], ...)] INTO num_buckets BUCKETS]

[

[ROW FORMAT row_format]

[STORED AS file_format]

]

[LOCATION hdfs_path]

[AS select_statement];

CREATE [TEMPORARY] [EXTERNAL] TABLE [IF NOT EXISTS] [db_name.]table_name

LIKE existing_table_or_view_name

[LOCATION hdfs_path];

primitive_data_type

: TINYINT

| SMALLINT

| INT

| BIGINT

| BOOLEAN

| FLOAT

| DOUBLE

| DOUBLE PRECISION

| STRING

| BINARY

| TIMESTAMP

| DECIMAL

| DECIMAL(precision, scale)

| DATE

| VARCHAR

| CHAR

row_format

: DELIMITED [FIELDS TERMINATED BY char [ESCAPED BY char]] [COLLECTION ITEMS TERMINATED BY char]

[MAP KEYS TERMINATED BY char] [LINES TERMINATED BY char]

[NULL DEFINED AS char]

| SERDE serde_name [WITH SERDEPROPERTIES (property_name=property_value, property_name=property_value, ...)]

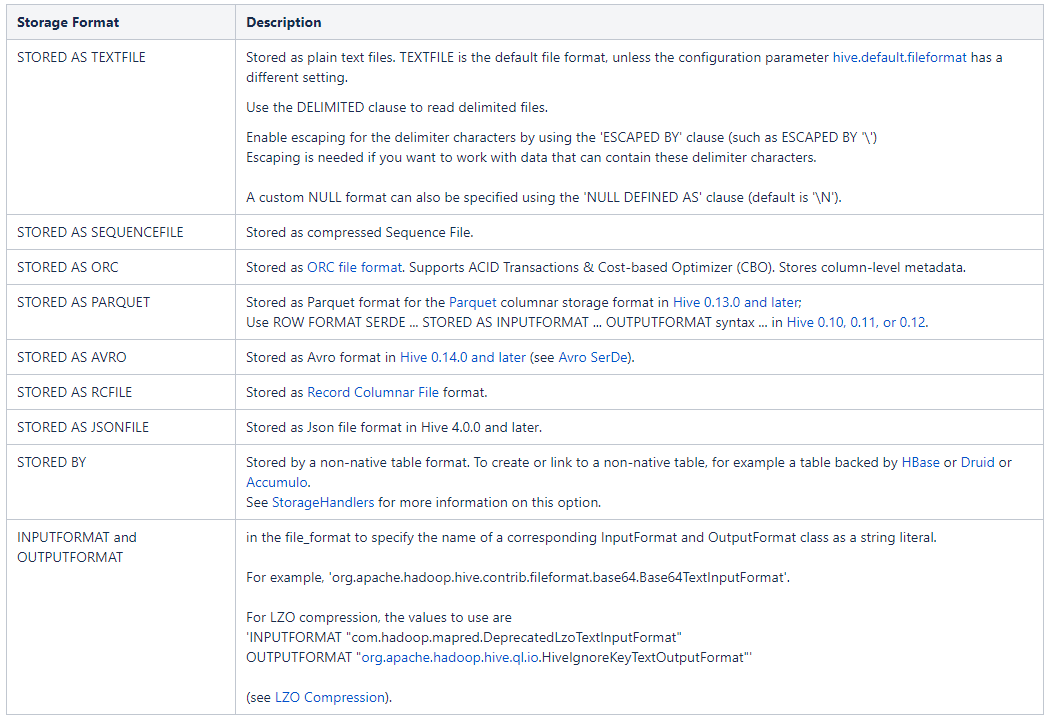

file_format

: SEQUENCEFILE

| TEXTFILE -- (Default, depending on hive.default.fileformat configuration)

| RCFILE

| ORC

| PARQUET

| AVRO

| JSONFILE

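| INPUTFORMAT input_format_classname OUTPUTFORMAT output_format_classname

Managed tables and external tables

A table created with the EXTERNAL keyword is an external table; otherwise it is a managed (internal) table. You can also run desc formatted table_name; to print the table details: the Location: row shows the storage directory and the Table Type: row shows the table type, MANAGED_TABLE for a managed table or EXTERNAL_TABLE for an external table.

Differences:

The data of a managed table is managed by Hive itself, whereas the data of an external table simply lives on HDFS and is not owned by Hive;

Managed table data is stored under hive.metastore.warehouse.dir (default: /user/hive/warehouse), while the location of external table data is specified by the user;

Dropping a managed table deletes both the metadata and the stored data; dropping an external table deletes only the metadata, and the files on HDFS are left in place;

Changes made to a managed table are synchronized to the metadata directly, whereas after changing the structure or partitions of an external table the metadata needs to be repaired (MSCK REPAIR TABLE table_name;)

External tables: use them for shared data on HDFS that several departments work with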

-- Create a managed table

create table tb_managed(

id int,

name string,

hobby array<string>,

add map<String,string>

)

row format delimited

fields terminated by ','

collection items terminated by '-'

map keys terminated by ':'

lines terminated by '\n'

;

-- Data file and its contents

complex_data_type.txt

1,xiaoming,book-TV-code,beijing:chaoyang-shagnhai:pudong

2,lilei,book-code,nanjing:jiangning-taiwan:taibei

3,lihua,music-book,heilongjiang:haerbin

-- Load the data

load data local inpath '/usr/local/hive-2.1.1/data_dir/complex_data_type.txt' overwrite into table tb_managed;
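To make the three delimiters in the table definition concrete, here is a minimal Python sketch of how one line of complex_data_type.txt maps onto the four columns. The function name `parse_row` is made up for illustration, and this is only an approximation of what Hive's text SerDe does, not its actual implementation.

```python
def parse_row(line, field=",", item="-", key=":"):
    """Split one text line into (id, name, hobby list, add dict).

    field: separates columns        (fields terminated by ',')
    item:  separates array elements and map entries
           (collection items terminated by '-')
    key:   separates a map key from its value (map keys terminated by ':')
    """
    id_, name, hobby, add = line.rstrip("\n").split(field)
    hobby_list = hobby.split(item)
    add_map = dict(pair.split(key, 1) for pair in add.split(item))
    return int(id_), name, hobby_list, add_map


row = parse_row("3,lihua,music-book,heilongjiang:haerbin")
print(row)
```

Note that the same character ('-') separates both the array elements of hobby and the key-value pairs of add; only the inner split on ':' distinguishes a map from an array.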

-- Inspect the table schema

0: jdbc:hive2://node225:10000/db01> desc formatted tb_managed;

OK

+-------------------------------+-------------------------------------------------------------+-----------------------+--+

| col_name | data_type | comment |

+-------------------------------+-------------------------------------------------------------+-----------------------+--+

| # col_name | data_type | comment |

| | NULL | NULL |

| id | int | |

| name | string | |

| hobby | array<string> | |

| add | map<string,string> | |

| | NULL | NULL |

| # Detailed Table Information | NULL | NULL |

| Database: | db01 | NULL |

| Owner: | root | NULL |

| CreateTime: | Wed Oct 10 15:21:31 CST 2018 | NULL |

| LastAccessTime: | UNKNOWN | NULL |

| Retention: | 0 | NULL |

| Location: | hdfs://ns1/user/hive/warehouse/db01.db/tb_managed | NULL |

| Table Type: | MANAGED_TABLE | NULL |

| Table Parameters: | NULL | NULL |

| | numFiles | 1 |

| | numRows | 0 |

| | rawDataSize | 0 |

| | totalSize | 147 |

| | transient_lastDdlTime | 1539156255 |

| | NULL | NULL |

| # Storage Information | NULL | NULL |

| SerDe Library: | org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe | NULL |

| InputFormat: | org.apache.hadoop.mapred.TextInputFormat | NULL |

| OutputFormat: | org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat | NULL |

| Compressed: | No | NULL |

| Num Buckets: | -1 | NULL |

| Bucket Columns: | [] | NULL |

| Sort Columns: | [] | NULL |

| Storage Desc Params: | NULL | NULL |

| | colelction.delim | - |

| | field.delim | , |

| | mapkey.delim | : |

| | serialization.format | , |

+-------------------------------+-------------------------------------------------------------+-----------------------+--+

35 rows selected (0.346 seconds)

-- Create an external table

create external table tb_external(

id int,

name string,

hobby array<string>,

add map<String,string>

)

row format delimited

fields terminated by ','

collection items terminated by '-'

map keys terminated by ':'

lines terminated by '\n'

location '/tmp/hive/tb_external'

;

-- Load the data

load data local inpath '/usr/local/hive-2.1.1/data_dir/complex_data_type.txt' overwrite into table tb_external;

0: jdbc:hive2://node225:10000/db01> desc formatted tb_external;

OK

+-------------------------------+-------------------------------------------------------------+-----------------------+--+

| col_name | data_type | comment |

+-------------------------------+-------------------------------------------------------------+-----------------------+--+

| # col_name | data_type | comment |

| | NULL | NULL |

| id | int | |

| name | string | |

| hobby | array<string> | |

| add | map<string,string> | |

| | NULL | NULL |

| # Detailed Table Information | NULL | NULL |

| Database: | db01 | NULL |

| Owner: | root | NULL |

| CreateTime: | Wed Oct 10 15:39:29 CST 2018 | NULL |

| LastAccessTime: | UNKNOWN | NULL |

| Retention: | 0 | NULL |

| Location: | hdfs://ns1/tmp/hive | NULL |

| Table Type: | MANAGED_TABLE | NULL |

| Table Parameters: | NULL | NULL |

| | numFiles | 1 |

| | totalSize | 147 |

| | transient_lastDdlTime | 1539157215 |

| | NULL | NULL |

| # Storage Information | NULL | NULL |

| SerDe Library: | org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe | NULL |

| InputFormat: | org.apache.hadoop.mapred.TextInputFormat | NULL |

| OutputFormat: | org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat | NULL |

| Compressed: | No | NULL |

| Num Buckets: | -1 | NULL |

| Bucket Columns: | [] | NULL |

| Sort Columns: | [] | NULL |

| Storage Desc Params: | NULL | NULL |

| | colelction.delim | - |

| | field.delim | , |

| | line.delim | \n |

| | mapkey.delim | : |

| | serialization.format | , |

+-------------------------------+-------------------------------------------------------------+-----------------------+--+

34 rows selected (0.22 seconds)

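Partitions

Partitioning splits the data into multiple partitions according to the value of one or more columns. Physically, a partition is a subdirectory of the table directory. When a query specifies the value of the partition column, Hive reads only the matching partition and avoids a full table scan.

When creating a partitioned table, the keyword partitioned by (partition_col data_type) declares that the table is partitioned by partition_col; all records with the same partition_col value are stored in the same partition. A table can be partitioned by several columns, in which case the data within one partition is partitioned further by the next column.

When loading data into a partitioned table, the keyword partition(partition_col=value) must explicitly state which partition the data goes into.

A partition bundles the records that satisfy some condition and labels them, which speeds up queries. The partition column is shown to the client in query results, but it is not actually stored in the table's data files; it is a so-called pseudo-column.

The partitions of a table are reflected in the storage path in the file system: Hive creates a directory named partition_col=value under the table directory and places the data loaded into that partition in it.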
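The directory naming described above can be sketched in a few lines of Python. The helper `partition_dir` and the example table path are illustrative assumptions; the sketch only shows how partition_col=value directories nest, one level per partition column.

```python
import posixpath


def partition_dir(table_dir, spec):
    """Build the directory of a partition.

    spec is an ordered list of (partition_col, value) pairs; each pair
    becomes one nested directory named partition_col=value.
    """
    parts = [f"{col}={val}" for col, val in spec]
    return posixpath.join(table_dir, *parts)


# Hypothetical table directory, used only for illustration.
table_dir = "/user/hive/warehouse/db01.db/tb_partitions"
print(partition_dir(table_dir, [("part_tag", "first")]))
print(partition_dir(table_dir, [("categ", "big"), ("tag", "first")]))
```

Because the partition value is encoded in the path, a query that filters on the partition column only needs to list and read the matching directory.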

show partitions table_name;

Static partitions

create table tb_partitions(

id int,

name string,

hobby array<string>,

add map<String,string>

)

partitioned by (part_tag string)

row format delimited

fields terminated by ','

collection items terminated by '-'

map keys terminated by ':'

lines terminated by '\n'

;

load data local inpath '/usr/local/hive-2.1.1/data_dir/complex_data_type.txt' into table tb_partitions partition(part_tag = 'first');

load data local inpath '/usr/local/hive-2.1.1/data_dir/complex_data_type.txt' into table tb_partitions partition(part_tag = 'second');

select * from tb_partitions where part_tag='first';

insert overwrite table table_name partition(partition_col=value) select ...

0: jdbc:hive2://node225:10000/db01> show partitions tb_partitions;

OK

+-----------------+--+

| partition |

+-----------------+--+

| part_tag=first |

+-----------------+--+

1 row selected (0.278 seconds)

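Dynamic partitions

Hive is a batch processing system. To make inserting data into many partitions more efficient, Hive provides dynamic partitioning, which infers the partition names from the position of the columns in the query and creates the partitions accordingly.

set hive.exec.dynamic.partition=true; enables dynamic partitioning (the default is false before Hive 0.9.0 and true from 0.9.0 on)

set hive.exec.dynamic.partition.mode=nonstrict; (default strict) allows all partition columns to be dynamic; in strict mode at least one static partition column is required

The columns used for partitioning must come last in the select list, in the same order as in the partition clause; the order must not be mixed up. Hive infers the partition values from the position of the selected columns, not from their names.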
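The position-based rule can be illustrated with a small Python sketch: the trailing values of each selected row are taken as partition values, whatever the select columns happen to be called. The function `assign_partitions` and the sample rows are assumptions made for this example, not Hive code.

```python
def assign_partitions(rows, partition_cols):
    """Group rows by their trailing values, which act as partition values.

    The last len(partition_cols) values of every row are matched to the
    partition columns by position only; the remaining leading values are
    the data columns.
    """
    n = len(partition_cols)
    partitions = {}
    for row in rows:
        data, values = row[:-n], row[-n:]
        name = "/".join(f"{c}={v}" for c, v in zip(partition_cols, values))
        partitions.setdefault(name, []).append(data)
    return partitions


rows = [(1, "xiaoming", "first"), (2, "lilei", "second"), (3, "lihua", "first")]
for name, data in assign_partitions(rows, ["tag"]).items():
    print(name, data)
```

If the select list put the partition value anywhere but last, this scheme would silently use the wrong column, which is why the column order matters.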

Single dynamic partition column

create table tb_part_dynamic_1(

id int,

name string,

hobby array<string>,

add map<String,string>

)

partitioned by (tag string)

row format delimited

fields terminated by ','

collection items terminated by '-'

map keys terminated by ':'

lines terminated by '\n'

;

insert overwrite table tb_part_dynamic_1 partition(tag) select id,name,hobby,add,part_tag from tb_partitions;

Multiple partition columns, mixing static and dynamic

create table tb_part_dynamic_2(

id int,

name string,

hobby array<string>,

add map<String,string>

)

partitioned by (categ string,tag string)

row format delimited

fields terminated by ','

collection items terminated by '-'

map keys terminated by ':'

lines terminated by '\n'

;

insert overwrite table tb_part_dynamic_2 partition(categ = 'big',tag) select id,name,hobby,add,part_tag from tb_partitions;

Multiple partition columns, all dynamic

create table tb_part_dynamic_3(

id int,

name string,

hobby array<string>,

add map<String,string>

)

partitioned by (tag1 string,tag2 string)

row format delimited

fields terminated by ','

collection items terminated by '-'

map keys terminated by ':'

lines terminated by '\n'

;

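insert overwrite table tb_part_dynamic_3 partition(tag1,tag2) select id,name,hobby,add,categ,tag from tb_part_dynamic_2;

Buckets

Bucketing divides data at a finer granularity than partitioning. It splits the data by the hash of a column value: to split on clustered_col into num_buckets buckets, Hive takes the hash of the clustered_col value modulo num_buckets and assigns each record to a bucket according to the result. Records whose result is 0, 1, 2, ..., num_buckets-1 are each stored in a separate file under the table directory.

Before inserting into a bucketed table, run set hive.enforce.bucketing=true; (needed in Hive 0.x and 1.x; from Hive 2.0 on the property is removed and bucketing is always enforced).

Use the keyword clustered by to name the column the buckets are based on, and specify the number of buckets num_buckets.

The bucketing column is an existing column of the table, so its data type does not need to be specified.

To look at the data of a bucket, use tablesample.

select * from table_name tablesample(bucket 1 out of 3 on id)

A table can be both bucketed and partitioned, but the directory listing shows only the partition directories, not the bucket files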
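The hash-and-modulo assignment can be sketched as follows. This assumes an INT bucketing column whose hash is the value itself, with the sign bit masked off before the modulo, which is how Hive's default bucketing is usually described; treat it as an illustration rather than the exact implementation. The function name `bucket_for_int` is made up.

```python
def bucket_for_int(value, num_buckets):
    """Return the bucket index for an INT column value.

    Assumption: the hash of an INT is the value itself, and the bucket is
    (hash & Integer.MAX_VALUE) % num_buckets so that it is never negative.
    """
    return (value & 0x7FFFFFFF) % num_buckets


# Distribute the ids 1, 2, 3 over 2 buckets, as in "into 2 buckets".
buckets = {}
for id_ in (1, 2, 3):
    buckets.setdefault(bucket_for_int(id_, 2), []).append(id_)
print(buckets)
```

Each key of `buckets` corresponds to one file under the table directory.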

0: jdbc:hive2://node225:10000/db01> set hive.enforce.bucketing=true;

No rows affected (0.008 seconds)

0: jdbc:hive2://node225:10000/db01> set hive.enforce.bucketing;

+------------------------------+--+

| set |

+------------------------------+--+

| hive.enforce.bucketing=true |

+------------------------------+--+

1 row selected (0.016 seconds)

create table tb_clusters(

id int,

name string,

hobby array<string>,

add map<String,string>

)

clustered by (id) sorted by (name asc) into 2 buckets

row format delimited

fields terminated by ','

collection items terminated by '-'

map keys terminated by ':'

lines terminated by '\n'

;

insert overwrite table tb_clusters select id,name,hobby,add from tb_partitions;

The CLUSTERED BY and SORTED BY creation commands do not affect how data is inserted into a table, only how it is read.

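row_format

Default record and field delimiters

- \n one record per line

- ^A separates columns (octal \001)

- ^B separates the elements of an ARRAY or STRUCT, or the key-value pairs of a MAP (octal \002)

- ^C separates the key from the value within a MAP key-value pair (octal \003)

Only a single character can be used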
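It may help to spell out that ^A, ^B and ^C are caret notation for single control characters, not two-character strings. The Python sketch below shows the difference; the last lines illustrate what happens if only the first character of the literal string '^A' is used as the delimiter, which is an assumption that would be consistent with the ^-separated file in the test recorded below.

```python
CTRL_A = "\x01"   # field delimiter, octal \001
CTRL_B = "\x02"   # collection item delimiter, octal \002
CTRL_C = "\x03"   # map key delimiter, octal \003

# The control character is one character; the literal "^A" is two.
print(len(CTRL_A), len("^A"))
print(CTRL_A == chr(0o001))

# A row written with the real control characters splits cleanly.
line = CTRL_A.join(["1", "xiaoming", CTRL_B.join(["book", "TV"])])
fields = line.split(CTRL_A)
print(fields[2].split(CTRL_B))

# Assumption: when a multi-character literal is given, only its first
# character is used, so '^A', '^B' and '^C' all collapse to '^'.
first_chars = {"^A"[0], "^B"[0], "^C"[0]}
print(first_chars)
```

With all three delimiters collapsed to the same character, fields, collection items and map keys can no longer be told apart when the file is read back.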

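Testing this turned up some problems; the test is recorded here first

# Write normal data to the local file system using the so-called default delimiters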

insert overwrite local directory '/usr/local/hive-2.1.1/data_dir/tb_insert_multi_02'

row format delimited

fields terminated by '^A'

collection items terminated by '^B'

map keys terminated by '^C'

lines terminated by '\n'

stored as textfile

select * from tb_insert_multi_02

;

# Contents of the local file

[root@node225 ~]# cat /usr/local/hive-2.1.1/data_dir/tb_insert_multi_02/000000_0

1^xiaoming^book^TV^code^beijing^chaoyang^shagnhai^pudong^first^100

2^lilei^book^code^nanjing^jiangning^taiwan^taibei^first^100

3^lihua^music^book^heilongjiang^haerbin^first^100

1^xiaoming^book^TV^code^beijing^chaoyang^shagnhai^pudong^first^100

2^lilei^book^code^nanjing^jiangning^taiwan^taibei^first^100

3^lihua^music^book^heilongjiang^haerbin^first^100

# Create a table with the so-called default delimiters

create table tb_data_format(

id int,

name string,

hobby array<string>,

add map<String,string>,

tag1 string,

tag2 int

)

row format delimited

fields terminated by '^A'

collection items terminated by '^B'

map keys terminated by '^C'

lines terminated by '\n'

;

0: jdbc:hive2://node225:10000/db01> desc formatted tb_data_format;

OK

+-------------------------------+-------------------------------------------------------------+-----------------------------+--+

| col_name | data_type | comment |

+-------------------------------+-------------------------------------------------------------+-----------------------------+--+

| # col_name | data_type | comment |

| | NULL | NULL |

| id | int | |

| name | string | |

| hobby | array<string> | |

| add | map<string,string> | |

| tag1 | string | |

| tag2 | int | |

| | NULL | NULL |

| # Detailed Table Information | NULL | NULL |

| Database: | db01 | NULL |

| Owner: | root | NULL |

| CreateTime: | Thu Oct 11 16:42:25 CST 2018 | NULL |

| LastAccessTime: | UNKNOWN | NULL |

| Retention: | 0 | NULL |

| Location: | hdfs://ns1/user/hive/warehouse/db01.db/tb_data_format | NULL |

| Table Type: | MANAGED_TABLE | NULL |

| Table Parameters: | NULL | NULL |

| | COLUMN_STATS_ACCURATE | {\"BASIC_STATS\":\"true\"} |

| | numFiles | 0 |

| | numRows | 0 |

| | rawDataSize | 0 |

| | totalSize | 0 |

| | transient_lastDdlTime | 1539247345 |

| | NULL | NULL |

| # Storage Information | NULL | NULL |

| SerDe Library: | org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe | NULL |

| InputFormat: | org.apache.hadoop.mapred.TextInputFormat | NULL |

| OutputFormat: | org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat | NULL |

| Compressed: | No | NULL |

| Num Buckets: | -1 | NULL |

| Bucket Columns: | [] | NULL |

| Sort Columns: | [] | NULL |

| Storage Desc Params: | NULL | NULL |

| | colelction.delim | ^B |

| | field.delim | ^A |

| | line.delim | \n |

| | mapkey.delim | ^C |

| | serialization.format | ^A |

+-------------------------------+-------------------------------------------------------------+-----------------------------+--+

# Load the data

load data local inpath '/usr/local/hive-2.1.1/data_dir/tb_insert_multi_02/000000_0' into table tb_data_format;

# Query the table to check its data: the data was not loaded in the expected form

0: jdbc:hive2://node225:10000/db01> select * from tb_data_format;

OK

+--------------------+----------------------+-----------------------+---------------------+----------------------+----------------------+--+

| tb_data_format.id | tb_data_format.name | tb_data_format.hobby | tb_data_format.add | tb_data_format.tag1 | tb_data_format.tag2 |

+--------------------+----------------------+-----------------------+---------------------+----------------------+----------------------+--+

| 1 | xiaoming | ["book"] | {"TV":null} | code | NULL |

| 2 | lilei | ["book"] | {"code":null} | nanjing | NULL |

| 3 | lihua | ["music"] | {"book":null} | heilongjiang | NULL |

| 1 | xiaoming | ["book"] | {"TV":null} | code | NULL |

| 2 | lilei | ["book"] | {"code":null} | nanjing | NULL |

| 3 | lihua | ["music"] | {"book":null} | heilongjiang | NULL |

+--------------------+----------------------+-----------------------+---------------------+----------------------+----------------------+--+

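# Create a table with the default delimiters and inspect its structure: the default delimiter is shown as serialization.format 1 (the control character with code 1), not as the literal string "^A"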

0: jdbc:hive2://node225:10000/db01> create table tb_data_delimit(

. . . . . . . . . . . . . . . . . > id int,

. . . . . . . . . . . . . . . . . > name string,

. . . . . . . . . . . . . . . . . > hobby array<string>,

. . . . . . . . . . . . . . . . . > add map<String,string>,

. . . . . . . . . . . . . . . . . > tag1 string,

. . . . . . . . . . . . . . . . . > tag2 int

. . . . . . . . . . . . . . . . . > )

. . . . . . . . . . . . . . . . . > ;

OK

No rows affected (0.207 seconds)

0: jdbc:hive2://node225:10000/db01> desc formatted tb_data_delimit;

OK

+-------------------------------+-------------------------------------------------------------+-----------------------------+--+

| col_name | data_type | comment |

+-------------------------------+-------------------------------------------------------------+-----------------------------+--+

| # col_name | data_type | comment |

| | NULL | NULL |

| id | int | |

| name | string | |

| hobby | array<string> | |

| add | map<string,string> | |

| tag1 | string | |

| tag2 | int | |

| | NULL | NULL |

| # Detailed Table Information | NULL | NULL |

| Database: | db01 | NULL |

| Owner: | root | NULL |

| CreateTime: | Thu Oct 11 16:43:28 CST 2018 | NULL |

| LastAccessTime: | UNKNOWN | NULL |

| Retention: | 0 | NULL |

| Location: | hdfs://ns1/user/hive/warehouse/db01.db/tb_data_delimit | NULL |

| Table Type: | MANAGED_TABLE | NULL |

| Table Parameters: | NULL | NULL |

| | COLUMN_STATS_ACCURATE | {\"BASIC_STATS\":\"true\"} |

| | numFiles | 0 |

| | numRows | 0 |

| | rawDataSize | 0 |

| | totalSize | 0 |

| | transient_lastDdlTime | 1539247408 |

| | NULL | NULL |

| # Storage Information | NULL | NULL |

| SerDe Library: | org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe | NULL |

| InputFormat: | org.apache.hadoop.mapred.TextInputFormat | NULL |

| OutputFormat: | org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat | NULL |

| Compressed: | No | NULL |

| Num Buckets: | -1 | NULL |

| Bucket Columns: | [] | NULL |

| Sort Columns: | [] | NULL |

| Storage Desc Params: | NULL | NULL |

| | serialization.format | 1 |

+-------------------------------+-------------------------------------------------------------+-----------------------------+--+

# Replacing the delimiters with "\001" etc. also causes problems

0: jdbc:hive2://node225:10000/db01> create table tb_data_format(

. . . . . . . . . . . . . . . . . > id int,

. . . . . . . . . . . . . . . . . > name string,

. . . . . . . . . . . . . . . . . > hobby array<string>,

. . . . . . . . . . . . . . . . . > add map<String,string>,

. . . . . . . . . . . . . . . . . > tag1 string,

. . . . . . . . . . . . . . . . . > tag2 int

. . . . . . . . . . . . . . . . . > )

. . . . . . . . . . . . . . . . . > row format delimited

. . . . . . . . . . . . . . . . . > fields terminated by '\001'

. . . . . . . . . . . . . . . . . > collection items terminated by '\002'

. . . . . . . . . . . . . . . . . > map keys terminated by '\003'

. . . . . . . . . . . . . . . . . > lines terminated by '\n'

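. . . . . . . . . . . . . . . . . > ;

DELIMITED

Between fields

FIELDS TERMINATED BY ','

Between the elements of a collection, and between key-value pairs

COLLECTION ITEMS TERMINATED BY '-'

Between the key and the value inside a key-value pair

MAP KEYS TERMINATED BY ':'

Records are separated by a newline

LINES TERMINATED BY '\n'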

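SERDE

SerDe is short for Serializer/Deserializer; it is used for serialization and deserialization.

Serialization is the process of converting an object into a byte sequence.

Deserialization is the process of restoring an object from a byte sequence.

The serializer converts the Java objects used by Hive into a byte sequence that can be written to HDFS, or into a stream that other systems can read. The deserializer converts strings or binary streams into Java objects that Hive can work with. For example, a select statement uses the deserializer to parse the data read from HDFS; an insert statement uses the serializer, because the data has to be serialized before it is written to HDFS.

When creating a table, Hive lets you specify how data is serialized and deserialized, either with a custom SerDe or with one of Hive's built-in SerDe types.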
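The two directions can be sketched with a toy class in Python. `DelimitedSerDe` is a made-up name and a deliberately simplified stand-in for a real SerDe: serialize is the write path (insert), deserialize is the read path (select).

```python
class DelimitedSerDe:
    """Toy SerDe for flat rows stored as delimited UTF-8 text."""

    def __init__(self, field=","):
        self.field = field

    def serialize(self, row):
        """Row object -> bytes, as needed when writing (insert)."""
        return self.field.join(str(v) for v in row).encode("utf-8")

    def deserialize(self, data, types):
        """Bytes -> row object, as needed when reading (select)."""
        values = data.decode("utf-8").split(self.field)
        return tuple(t(v) for t, v in zip(types, values))


serde = DelimitedSerDe()
stored = serde.serialize((1, "xiaoming"))
print(stored)
print(serde.deserialize(stored, (int, str)))
```

The column types are passed to deserialize explicitly because, as in Hive, the stored text itself carries no type information; the schema comes from the table definition.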

The row format clause specifies the SerDe type

Built-in SerDe types include

Avro

ORC

RegEx

Thrift

Parquet

CSV

JsonSerDe

RegexSerDe is commonly used to parse multi-character delimiters

It takes two parameters:

input.regex = "(.*)::(.*)::(.*)"

output.format.string = "%1$s %2$s %3$s"
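How the two parameters work can be approximated in Python with the same three-group pattern. The sample line is invented, and joining with spaces merely emulates the Java format string "%1$s %2$s %3$s"; returning None for a non-matching line is a choice made for this sketch.

```python
import re

INPUT_REGEX = r"(.*)::(.*)::(.*)"   # one capture group per column


def regex_deserialize(line):
    """Return the captured columns, or None if the line does not match."""
    match = re.fullmatch(INPUT_REGEX, line)
    return None if match is None else match.groups()


def regex_serialize(columns):
    """Emulate output.format.string = "%1$s %2$s %3$s"."""
    return " ".join(columns)


cols = regex_deserialize("1::F::18")
print(cols)
print(regex_serialize(cols))
print(regex_deserialize("1,F,18"))
```

Each capture group maps to one table column in order, so the number of groups has to equal the number of columns in the table definition.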

create table t_user(

userid bigint comment 'user id',

gender string comment 'gender',

age int comment 'age',

occupation string comment 'occupation',

zipcode string comment 'zip code'

)

comment 'user information table'

row format serde 'org.apache.hadoop.hive.serde2.RegexSerDe'

with serdeproperties('input.regex'='(.*)::(.*)::(.*)::(.*)::(.*)','output.format.string'='%1$s %2$s %3$s %4$s %5$s')

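stored as textfile;

File formats

TEXTFILE: the default format. Loading data simply copies the data file to HDFS without processing it; disk usage is high and parsing the data is expensive.

Tables in SEQUENCEFILE, RCFILE or ORC format cannot load data directly from a local file. The data must first be loaded into a TEXTFILE table and then written from that table into the SequenceFile, RCFile or ORC table with insert.

Compared with TEXTFILE and SEQUENCEFILE, RCFILE costs more when loading data because of its columnar storage, but it offers a better compression ratio and query response. A data warehouse is typically written once and read many times, so overall RCFILE has a clear advantage over the other two formats.

SEQUENCEFILE

A binary file format provided by the Hadoop API; it is easy to use, splittable and compressible. It supports three compression options: NONE, RECORD and BLOCK. RECORD has a low compression ratio, so BLOCK compression is generally recommended.

RCFILE

A storage format that combines row and column storage. First, it splits the data into blocks by row, which guarantees that a record lies within a single block and avoids reading several blocks to read one record. Second, within a block the data is stored column by column, which helps compression and fast column access.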
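The row-group-then-column layout can be sketched in Python. The functions `to_row_groups` and `read_column` and the group size are illustrative assumptions; real RCFile adds headers, sync markers and per-column compression that are left out here.

```python
def to_row_groups(rows, rows_per_group):
    """Split rows into groups, then store each group column by column."""
    groups = []
    for start in range(0, len(rows), rows_per_group):
        chunk = rows[start:start + rows_per_group]
        # zip(*chunk) transposes the rows of one group into columns.
        groups.append([list(col) for col in zip(*chunk)])
    return groups


def read_column(groups, index):
    """Read one column across all groups without touching the others."""
    return [value for group in groups for value in group[index]]


rows = [(1, "xiaoming"), (2, "lilei"), (3, "lihua")]
groups = to_row_groups(rows, rows_per_group=2)
print(groups)
print(read_column(groups, 1))
```

All values of one record stay inside a single group, while values of one column sit next to each other within the group, which is what makes both record reads and column scans cheap.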
