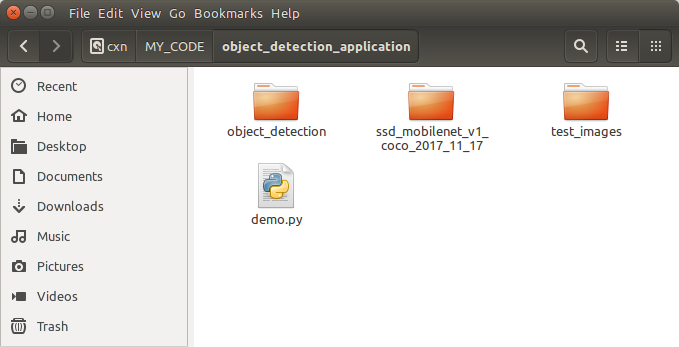

1 Download a pre-trained model, e.g. ssd_mobilenet_v1_coco_2017_11_17.
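A minimal sketch of how the download could be scripted. The base URL below is the one the TensorFlow object detection tutorial used for its model tarballs; treat it as an assumption and check it before relying on it. The helper names (`model_url`, `extract_frozen_graph`, `download_model`) are made up for this example.

```python
import os
import tarfile

try:
    from urllib.request import urlretrieve  # Python 3
except ImportError:
    from urllib import urlretrieve  # Python 2

# Assumption: base URL taken from the object detection tutorial; it may have moved.
DOWNLOAD_BASE = 'http://download.tensorflow.org/models/object_detection/'
MODEL_NAME = 'ssd_mobilenet_v1_coco_2017_11_17'


def model_url(model_name=MODEL_NAME, base=DOWNLOAD_BASE):
    """Build the tarball URL for a given model name."""
    return base + model_name + '.tar.gz'


def extract_frozen_graph(tar_path, dest_dir):
    """Extract only frozen_inference_graph.pb from the tarball.

    Returns the path of the extracted file, or None if the tarball has no such member.
    """
    with tarfile.open(tar_path) as tar:
        for member in tar.getmembers():
            if os.path.basename(member.name) == 'frozen_inference_graph.pb':
                tar.extract(member, dest_dir)
                return os.path.join(dest_dir, member.name)
    return None


def download_model(dest_dir, model_name=MODEL_NAME):
    """Download the model tarball into dest_dir and unpack the frozen graph."""
    tar_path = os.path.join(dest_dir, model_name + '.tar.gz')
    urlretrieve(model_url(model_name), tar_path)
    return extract_frozen_graph(tar_path, dest_dir)
```

Calling `download_model('/some/existing/dir')` would return the path to use as `PATH_TO_CKPT` in demo.py.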

2 Download tensorflow/models from GitHub:

https://github.com/tensorflow/models

Follow the object detection tutorial in that repository, adapt the code, and save it as demo.py:

    import os
    import sys
    from distutils.version import StrictVersion

    import numpy as np
    import tensorflow as tf
    from matplotlib import pyplot as plt
    from PIL import Image

    # This is needed since the notebook is stored in the object_detection folder.
    sys.path.append("..")
    from object_detection.utils import ops as utils_ops

    # Compare versions numerically; a plain string comparison misorders e.g. '1.10.0'.
    if StrictVersion(tf.__version__) < StrictVersion('1.4.0'):
        raise ImportError('Please upgrade your tensorflow installation to v1.4.* or later!')

    from object_detection.utils import label_map_util
    from object_detection.utils import visualization_utils as vis_util

    # /media/xiaonanchong/cxn/ssd_mobilenet_v1_coco_2017_11_17
    PATH_TO_CKPT = '/media/xiaonanchong/cxn/MY_CODE/object_detection_application/ssd_mobilenet_v1_coco_2017_11_17/frozen_inference_graph.pb'
    PATH_TO_LABELS = os.path.join('/media/xiaonanchong/cxn/GIT_HUB_CODE/models/research/object_detection/data', 'mscoco_label_map.pbtxt')
    NUM_CLASSES = 90

    # Load the frozen inference graph into memory.
    detection_graph = tf.Graph()
    with detection_graph.as_default():
        od_graph_def = tf.GraphDef()
        with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:
            serialized_graph = fid.read()
            od_graph_def.ParseFromString(serialized_graph)
            tf.import_graph_def(od_graph_def, name='')

    # Map class ids to display names.
    label_map = label_map_util.load_labelmap(PATH_TO_LABELS)
    categories = label_map_util.convert_label_map_to_categories(
        label_map, max_num_classes=NUM_CLASSES, use_display_name=True)
    category_index = label_map_util.create_category_index(categories)


    def load_image_into_numpy_array(image):
        (im_width, im_height) = image.size
        return np.array(image.getdata()).reshape(
            (im_height, im_width, 3)).astype(np.uint8)


    PATH_TO_TEST_IMAGES_DIR = '/media/xiaonanchong/cxn/MY_CODE/object_detection_application/test_images'
    # image1.jpg and image2.jpg
    TEST_IMAGE_PATHS = [os.path.join(PATH_TO_TEST_IMAGES_DIR, 'image{}.jpg'.format(i)) for i in range(1, 3)]

    # Size, in inches, of the output images.
    IMAGE_SIZE = (12, 8)


    def run_inference_for_single_image(image, graph):
        with graph.as_default():
            with tf.Session() as sess:
                # Get handles to input and output tensors
                ops = tf.get_default_graph().get_operations()
                all_tensor_names = {output.name for op in ops for output in op.outputs}
                tensor_dict = {}
                for key in [
                    'num_detections', 'detection_boxes', 'detection_scores',
                    'detection_classes', 'detection_masks'
                ]:
                    tensor_name = key + ':0'
                    if tensor_name in all_tensor_names:
                        tensor_dict[key] = tf.get_default_graph().get_tensor_by_name(
                            tensor_name)
                if 'detection_masks' in tensor_dict:
                    # The following processing is only for single image
                    detection_boxes = tf.squeeze(tensor_dict['detection_boxes'], [0])
                    detection_masks = tf.squeeze(tensor_dict['detection_masks'], [0])
                    # Reframe is required to translate mask from box coordinates to image
                    # coordinates and fit the image size.
                    real_num_detection = tf.cast(tensor_dict['num_detections'][0], tf.int32)
                    detection_boxes = tf.slice(detection_boxes, [0, 0], [real_num_detection, -1])
                    detection_masks = tf.slice(detection_masks, [0, 0, 0], [real_num_detection, -1, -1])
                    detection_masks_reframed = utils_ops.reframe_box_masks_to_image_masks(
                        detection_masks, detection_boxes, image.shape[0], image.shape[1])
                    detection_masks_reframed = tf.cast(
                        tf.greater(detection_masks_reframed, 0.5), tf.uint8)
                    # Follow the convention by adding back the batch dimension
                    tensor_dict['detection_masks'] = tf.expand_dims(
                        detection_masks_reframed, 0)
                image_tensor = tf.get_default_graph().get_tensor_by_name('image_tensor:0')

                # Run inference
                output_dict = sess.run(tensor_dict,
                                       feed_dict={image_tensor: np.expand_dims(image, 0)})

                # all outputs are float32 numpy arrays, so convert types as appropriate
                output_dict['num_detections'] = int(output_dict['num_detections'][0])
                output_dict['detection_classes'] = output_dict[
                    'detection_classes'][0].astype(np.uint8)
                output_dict['detection_boxes'] = output_dict['detection_boxes'][0]
                output_dict['detection_scores'] = output_dict['detection_scores'][0]
                if 'detection_masks' in output_dict:
                    output_dict['detection_masks'] = output_dict['detection_masks'][0]
                # print(output_dict.__len__())  # 4
                # print(output_dict.__class__)  # <type 'dict'>
        return output_dict


    for image_path in TEST_IMAGE_PATHS:
        image = Image.open(image_path)
        # the array based representation of the image will be used later in order to prepare the
        # result image with boxes and labels on it.
        image_np = load_image_into_numpy_array(image)
        # Expand dimensions since the model expects images to have shape: [1, None, None, 3]
        image_np_expanded = np.expand_dims(image_np, axis=0)
        # Actual detection.
        output_dict = run_inference_for_single_image(image_np, detection_graph)
        print("object detection done!")
        # Visualization of the results of a detection.
        vis_util.visualize_boxes_and_labels_on_image_array(
            image_np,
            output_dict['detection_boxes'],
            output_dict['detection_classes'],
            output_dict['detection_scores'],
            category_index,
            instance_masks=output_dict.get('detection_masks'),
            use_normalized_coordinates=True,
            line_thickness=8)
        plt.figure(figsize=IMAGE_SIZE)
        plt.imshow(image_np)

    # Needed when running as a script rather than in a notebook.
    plt.show()
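To use the detections for something other than drawing, the `output_dict` returned by `run_inference_for_single_image` can be turned into plain pixel boxes. The sketch below assumes the Object Detection API convention that each box is `[ymin, xmin, ymax, xmax]` in normalized coordinates; `filter_detections` and its `min_score` default of 0.5 are hypothetical names and values chosen for this example.

```python
def filter_detections(output_dict, image_width, image_height, min_score=0.5):
    """Return a list of (class_id, score, (left, top, right, bottom)) tuples.

    Keeps only detections whose score is at least min_score and converts
    normalized [ymin, xmin, ymax, xmax] boxes to integer pixel coordinates.
    """
    results = []
    for i in range(int(output_dict['num_detections'])):
        score = float(output_dict['detection_scores'][i])
        if score < min_score:
            continue
        ymin, xmin, ymax, xmax = [float(v) for v in output_dict['detection_boxes'][i]]
        box = (int(round(xmin * image_width)),
               int(round(ymin * image_height)),
               int(round(xmax * image_width)),
               int(round(ymax * image_height)))
        results.append((int(output_dict['detection_classes'][i]), score, box))
    return results
```

Inside the loop over `TEST_IMAGE_PATHS`, this could be called as `filter_detections(output_dict, image.size[0], image.size[1])`, and each class id looked up in `category_index` to get its display name.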

Run this script with Python 2.7.13 (~/anaconda2/bin/python) and TensorFlow 1.4.1.
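When checking that the installed TensorFlow is 1.4 or later, keep in mind that version strings do not compare numerically as plain strings: `'1.10.0' < '1.4.0'` is `True`. A small sketch of a numeric comparison follows; `parse_version` and `is_at_least` are hypothetical helpers written for this example, not part of TensorFlow.

```python
def parse_version(version):
    """Turn '1.4.1' into (1, 4, 1), ignoring suffixes such as '-rc1'."""
    parts = []
    for piece in version.split('.')[:3]:
        digits = ''
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits or 0))
    return tuple(parts)


def is_at_least(version, minimum):
    """True if version is numerically greater than or equal to minimum."""
    return parse_version(version) >= parse_version(minimum)
```

In demo.py this could be used as `is_at_least(tf.__version__, '1.4.0')`.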

Other useful links:

Google: https://cloud.google.com/solutions/creating-object-detection-application-tensorflow

Creating an Object Detection Application Using TensorFlow

https://towardsdatascience.com/how-to-deploy-machine-learning-models-with-tensorflow-part-1-make-your-model-ready-for-serving-776a14ec3198

How to deploy Machine Learning models with TensorFlow. Part 1 — make your model ready for serving.

https://medium.freecodecamp.org/how-to-deploy-an-object-detection-model-with-tensorflow-serving-d6436e65d1d9

How to deploy an Object Detection Model with TensorFlow serving

Microsoft: https://docs.microsoft.com/en-us/cognitive-toolkit/object-detection-using-fast-r-cnn-brainscript

Object detection using Fast R-CNN

TensorFlow: https://www.tensorflow.org/programmers_guide/saved_model#top_of_page
