Data

(Data source)

https://www.kaggle.com/c/titanic/overview

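(Data details)

fare: passenger fare (ticket price)

sibsp: number of siblings / spouses aboard the Titanic

parch: number of parents / children aboard the Titanic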

Training the model

```python
import pandas as pd
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

train_path = '/home/aistudio/data/data39090/train.csv'
train_data = pd.read_csv(train_path)

feature = ['Pclass', 'Sex', 'Age', 'SibSp', 'Parch', 'Fare']

# Drop only rows missing the target or one of the features; a bare dropna()
# would also drop every row whose (unused) Cabin value is missing
train_data = train_data.dropna(axis=0, subset=feature + ['Survived'])

y = train_data.Survived
X = train_data[feature].copy()
# Encode Sex as integers so every feature column is numeric
X['Sex'] = X['Sex'].map({'male': 1, 'female': 0})

# Hold out part of the training data as a validation set
train_X, val_X, train_Y, val_Y = train_test_split(X, y, random_state=1)

candidate_max_leaf_nodes = [10, 50, 100, 500]

def get_mae(train_X, train_Y, val_X, val_Y, max_leaf_nodes):
    """Fit a tree with the given max_leaf_nodes and return its validation MAE."""
    model = DecisionTreeRegressor(max_leaf_nodes=max_leaf_nodes, random_state=1)
    model.fit(train_X, train_Y)
    val_predictions = model.predict(val_X)
    return mean_absolute_error(val_Y, val_predictions)

# Validation MAE for each candidate leaf count; keep the one with the lowest MAE
scores = {n: get_mae(train_X, train_Y, val_X, val_Y, n) for n in candidate_max_leaf_nodes}
best_leaf_nodes = min(scores, key=scores.get)

# Refit with the best leaf count and report its validation MAE
Dtree_model = DecisionTreeRegressor(max_leaf_nodes=best_leaf_nodes, random_state=1)
Dtree_model.fit(train_X, train_Y)
val_predictions = Dtree_model.predict(val_X)
mae = mean_absolute_error(val_Y, val_predictions)
print(mae)
```
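Calling `dropna(axis=0)` with no arguments drops a row when any column is missing. That includes columns outside `feature`: in the Kaggle training file, `Cabin` is empty for most passengers. The minimal sketch below compares a plain `dropna` with `dropna(subset=...)` on a tiny made-up frame (the values and columns are illustrative only):

```python
import numpy as np
import pandas as pd

# Toy frame with made-up values; Cabin stands in for a mostly-empty column
# that is NOT used as a model feature
df = pd.DataFrame({
    "Age": [22.0, np.nan, 35.0, 54.0],
    "Fare": [7.25, 71.3, 8.05, 51.9],
    "Cabin": [np.nan, "C85", np.nan, "E46"],
})
features = ["Age", "Fare"]

# Drops any row with a NaN in any column, Cabin included
all_columns = df.dropna(axis=0)
# Drops only rows with a NaN in one of the feature columns
feature_only = df.dropna(axis=0, subset=features)

print(len(all_columns), len(feature_only))
```

On this toy frame, the plain call keeps a single row. The `subset` version keeps every row that has both `Age` and `Fare`.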

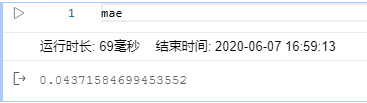

MAE

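MAE is the mean absolute error of the model's predictions on the validation split (`val_X`, `val_Y`), that is, on rows held out from the training data.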
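As a worked example of the formula MAE = (1/n) · Σ|y_i − ŷ_i|, this minimal sketch computes it by hand and with scikit-learn's `mean_absolute_error`. The labels and predictions are made up. With 0/1 survival labels, MAE is the average distance between the predicted score and the true outcome.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error

# Made-up validation labels (0 = died, 1 = survived) and regressor outputs
y_true = np.array([0, 1, 1, 0])
y_pred = np.array([0.2, 0.9, 0.5, 0.0])

# MAE = (1/n) * sum(|y_i - y_hat_i|)
mae_manual = np.mean(np.abs(y_true - y_pred))
mae_sklearn = mean_absolute_error(y_true, y_pred)

print(mae_manual, mae_sklearn)
```

Both values agree: the absolute errors are 0.2, 0.1, 0.5 and 0.0, so their mean is 0.2 up to floating-point rounding.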

Prediction

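```python
test_path = '/home/aistudio/data/data39090/test.csv'
test_data = pd.read_csv(test_path)

# Same cleaning as for training: drop rows missing any feature.
# Dropped passengers get no prediction, so this output cannot be submitted
# to Kaggle as-is (a submission needs one row per test passenger).
test_data = test_data.dropna(axis=0, subset=feature)

X_test = test_data[feature].copy()
X_test['Sex'] = X_test['Sex'].map({'male': 1, 'female': 0})

test_predictions = Dtree_model.predict(X_test)  # predicted survival scores in [0, 1]
```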
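`DecisionTreeRegressor` predicts fractional scores rather than 0/1 labels, so the scores need converting before submission. The Kaggle competition expects a CSV with `PassengerId` and `Survived` columns. The helper `to_submission` below is hypothetical, and the 0.5 threshold is an assumption that the original code does not set:

```python
import numpy as np
import pandas as pd


def to_submission(passenger_ids, scores, threshold=0.5):
    """Hypothetical helper: turn regressor scores into 0/1 labels in the
    PassengerId/Survived layout. Scores >= threshold count as survived."""
    labels = (np.asarray(scores) >= threshold).astype(int)
    return pd.DataFrame({"PassengerId": passenger_ids, "Survived": labels})


# Usage with example IDs and made-up scores
submission = to_submission([892, 893, 894], [0.1, 0.75, 0.5])
print(submission)
# submission.to_csv("submission.csv", index=False)
```

With the real data, the IDs would come from `test_data.PassengerId` and the scores from the prediction step. Any test rows removed by `dropna` will be absent from the result, so they would need to be handled (for example, by imputing missing values) before submitting.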

Closing remarks

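😐 No random forest was used here; instead, to get a better fit, the code searches for the best `max_leaf_nodes` value for the decision tree.

Ranking and accuracy of this method

Using a random forest and tuning both the number of leaf nodes and the tree depth might do better. 😃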
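Here is a minimal sketch of that idea. It assumes a `RandomForestClassifier` (rather than a regressor), tuned over both `max_leaf_nodes` and `max_depth` and scored by validation accuracy. The synthetic data from `make_classification` is only a stand-in so the block runs on its own, and the depth candidates are arbitrary; swap in the real `X` and `y` to use it:

```python
from itertools import product

from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the six Titanic features (assumption: replace with real X, y)
X, y = make_classification(n_samples=500, n_features=6, random_state=1)
train_X, val_X, train_y, val_y = train_test_split(X, y, random_state=1)

leaf_options = [10, 50, 100, 500]
depth_options = [3, 5, None]  # None: no explicit depth limit

# Validation accuracy for every (max_leaf_nodes, max_depth) combination
results = {}
for max_leaf_nodes, max_depth in product(leaf_options, depth_options):
    model = RandomForestClassifier(
        n_estimators=100,
        max_leaf_nodes=max_leaf_nodes,
        max_depth=max_depth,
        random_state=1,
    )
    model.fit(train_X, train_y)
    results[(max_leaf_nodes, max_depth)] = accuracy_score(val_y, model.predict(val_X))

best_params = max(results, key=results.get)
print(best_params, results[best_params])
```

A classifier predicts 0/1 labels directly, and accuracy matches how the competition scores submissions, so no thresholding step is needed.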
