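Hey there!

Long time no see!

This bonus installment is a hands-on face detection demo, which we'll call:

You Only Look At Face, YOLAF.

Since my goal is to design a lightweight face detection model, the model itself is named:

TinyYOLAF

Compared with a more general task like object detection, face detection is considerably easier. There is only one class, and the datasets are small: the most commonly used one, widerface, has only about 12,000 training images, far fewer than COCO!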

这个demo的代码在这里,请大家自行下载:

https://github.com/yjh0410/YOLAFgithub.com下面是widerface的官网,大家可以自行下载数据集:

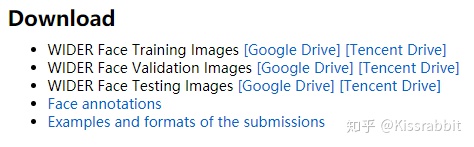

http://shuoyang1213.me/WIDERFACE/shuoyang1213.me

为了方便呢,我已经把数据集都下好存在了腾讯微云(官网提供了谷歌云和腾讯位于两个链接)

链接:

文件分享share.weiyun.com简单说一下数据集。

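The dataset consists of five parts:

train, val, and test are the three image splits. These need no explanation; they are all images.

The labels for train and val are in wider_face_split, while eval_tools is used later to compute AP and plot PR curves. It contains a bunch of MATLAB files, so ideally you should have MATLAB installed. If you are just playing around out of interest, you can do without it.

The widerface dataset structure is simple, so there is not much more to say about it.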
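Before moving on, it can help to confirm that the download unpacked as expected. The following is a minimal sketch, not part of the demo repository: the helper name `missing_parts` is hypothetical, the image folder names are taken from the paths the loading code in this post uses, and placing `eval_tools` under the same root folder is an assumption about how you unpacked the archives.

```python
from pathlib import Path
from typing import List

# The five parts described above. The image folder names follow the paths
# used by the loading code later in this post; keeping eval_tools under the
# same root is an assumption about your local layout.
EXPECTED_DIRS = [
    "WIDER_train/images",
    "WIDER_val/images",
    "WIDER_test/images",
    "wider_face_split",
    "eval_tools",
]


def missing_parts(root: str) -> List[str]:
    """Return the expected sub-directories that do not exist under root."""
    root_path = Path(root)
    return [d for d in EXPECTED_DIRS if not (root_path / d).is_dir()]


if __name__ == "__main__":
    # Replace "." with the folder you unpacked the dataset into.
    missing = missing_parts(".")
    if missing:
        print("Missing dataset parts:", ", ".join(missing))
    else:
        print("All five parts found.")
```

If you only want to train and never run the official evaluation, a missing `eval_tools` folder is harmless.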

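OK! I'll assume you have already downloaded the dataset, so let's look at how this demo is built. As before, I'll walk through it in five parts: data loading, the model, label creation, the loss function, and the training code.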

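Contents:

I. TinyYOLAF: WiderFace Data Loading

II. TinyYOLAF: The Model

III. TinyYOLAF: Label Creation

IV. TinyYOLAF: The Loss Function

V. TinyYOLAF: Training

VII. The Official widerface Evaluation Tool: eval_tools

VIII. Visualizing Images Outside the Dataset (Bonus)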

I. TinyYOLAF: WiderFace Data Loading

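I copied this code from widerface.py in the following project:

Tencent/FaceDetection-DSFD (github.com)

It comes from Tencent YouTu, so the quality should be reliable. The one drawback of this project is that it does not support training: no training parameters are provided, and even after all this time the train code has not been released. As a result, we cannot know which tricks were used or how much each one contributed to the final results. Perhaps it was inconvenient to open-source? Sometimes a project's code involves company-internal material that really cannot be released.

Now for the data-loading code: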

from __future__ import division, print_function

"""WIDER Face Dataset Classes

author: swordli
"""

#from .config import HOME
import os.path as osp
import sys
import torch
import torch.utils.data as data
import cv2
import numpy as np
#from utils.augmentations import SSDAugmentation
import scipy.io
import pdb
from collections import defaultdict
import matplotlib.pyplot as plt

plt.switch_backend('agg')

WIDERFace_CLASSES = ['face']  # always index 0

# note: if you used our download scripts, this should be right
WIDERFace_ROOT = "/home/k303/face_detection/dataset/wider_face/"

class WIDERFaceAnnotationTransform(object):
    """Transforms a WIDERFace annotation into normalized bbox coords and label index

    Initialized with a dictionary lookup of classnames to indexes

    Arguments:
        class_to_ind (dict, optional): dictionary lookup of classnames -> indexes
            (default: index 0 for the single class 'face')
    """

    def __init__(self, class_to_ind=None):
        self.class_to_ind = class_to_ind or dict(
            zip(WIDERFace_CLASSES, range(len(WIDERFace_CLASSES))))

    def __call__(self, target, width, height):
        """
        Arguments:
            target (list): annotations as [xmin, ymin, xmax, ymax, label_ind]
                in pixels; modified in place
            width (int): image width in pixels
            height (int): image height in pixels
        Returns:
            the same list, with box coordinates divided by the image size
        """
        for i in range(len(target)):
            # x coordinates are scaled by width, y coordinates by height
            target[i][0] = float(target[i][0]) / width
            target[i][1] = float(target[i][1]) / height
            target[i][2] = float(target[i][2]) / width
            target[i][3] = float(target[i][3]) / height
            #res.append( [ target[i][0], target[i][1], target[i][2], target[i][3], target[i][4] ] )
        return target  # [[xmin, ymin, xmax, ymax, label_ind], ... ]

class WIDERFaceDetection(data.Dataset):
    """WIDERFace Detection Dataset Object

    http://mmlab.ie.cuhk.edu.hk/projects/WIDERFace/

    input is image, target is annotation

    Arguments:
        root (string): filepath to WIDERFace folder.
        image_sets (string): imageset to use (eg. 'train', 'val', 'test')
        transform (callable, optional): transformation to perform on the
            input image
        target_transform (callable, optional): transformation to perform on the
            target `annotation`
            (eg: take in caption string, return tensor of word indices)
        dataset_name (string, optional): which dataset to load
            (default: 'WIDER Face')
    """

    def __init__(self, root,
                 image_sets='train',
                 transform=None, target_transform=WIDERFaceAnnotationTransform(),
                 dataset_name='WIDER Face'):
        self.root = root
        self.image_set = image_sets
        self.transform = transform
        self.target_transform = target_transform
        self.name = dataset_name
        '''
        self._annopath = osp.join('%s', 'Annotations', '%s.xml')
        self._imgpath = osp.join('%s', 'JPEGImages', '%s.jpg')
        '''
        self.img_ids = list()
        self.label_ids = list()
        self.event_ids = list()
        '''
        for (year, name) in image_sets:
            rootpath = osp.join(self.root, 'VOC' + year)
            for line in open(osp.join(rootpath, 'ImageSets', 'Main', name + '.txt')):
                self.ids.append((rootpath, line.strip()))
        '''
        # Pick the image folder and the .mat annotation file for the split.
        if self.image_set == 'train':
            path_to_label = osp.join(self.root, 'wider_face_split')
            path_to_image = osp.join(self.root, 'WIDER_train/images')
            fname = "wider_face_train.mat"
        elif self.image_set == 'val':
            path_to_label = osp.join(self.root, 'wider_face_split')
            path_to_image = osp.join(self.root, 'WIDER_val/images')
            fname = "wider_face_val.mat"
        elif self.image_set == 'test':
            path_to_label = osp.join(self.root, 'wider_face_split')
            path_to_image = osp.join(self.root, 'WIDER_test/images')
            fname = "wider_face_test.mat"
        else:
            # Fail early instead of hitting an unbound variable below.
            raise ValueError("unknown image set: %s" % self.image_set)

        self.path_to_label = path_to_label
        self.path_to_image = path_to_image
        self.fname = fname
        # Load the MATLAB annotation file and keep the fields read later.
        self.f = scipy.io.loadmat(osp.join(self.path_to_label, self.fname))
        self.event_list = self.f.get('event_list')
        self.file_list = self.f.get('file_list')
        self.face_bbx_list = self.f.get('face_bbx_list')
        self._load_widerface()

    def _load_widerface(self):
        error_bbox = 0
        train_bbox = 0
        for event_idx, event in enumerate(self.event_list):
            directory = event[0][0]
            for im_idx, im in enumerate(self.file_list[event_idx][0]):
                im_name = im[0][0]
                # val and test images are registered with empty labels
                if self.image_set in ['test', 'val']:
                    self.img_ids.append(osp.join(self.path_to_image, directory, im_name + '.jpg'))
                    self.event_ids.append(directory)
                    self.label_ids.append([])
                    continue
                face_bbx = self.face_bbx_list[event_idx][0][im_idx][0]
                bboxes = []
                for i in range(face_bbx.shape[0]):
                    # filter bbox: skip boxes narrower or shorter than 2 px,
                    # or with a negative top-left corner
                    if face_bbx[i][2] < 2 or face_bbx[i][3] < 2 or face_bbx[i][0] < 0 or face_bbx[i][1] < 0:
                        error_bbox += 1
                        #print (face_bbx[i])
                        continue
                    train_bbox += 1
                    # annotations are stored as [x, y, w, h]; convert to corners
                    xmin = float(face_bbx[i][0])
                    ymin = float(face_bbx[i][1])
                    xmax = float(face_bbx[i][2]) + xmin - 1
                    ymax = float(face_bbx[i][3]) + ymin - 1
                    bboxes.append(
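The box handling in the listing above boils down to two steps: the loader turns each `[x, y, w, h]` annotation into corner form and drops degenerate boxes, and `WIDERFaceAnnotationTransform` divides the corners by the image size. Here is a minimal standalone sketch of those two steps in plain Python. The function names `xywh_to_corners`, `normalize`, and `convert_annotations` are hypothetical and do not appear in the original file; only the filter thresholds and the `- 1` offsets are taken from the listing.

```python
from typing import List, Optional, Sequence


def xywh_to_corners(box: Sequence[float]) -> Optional[List[float]]:
    """Convert [x, y, w, h] to [xmin, ymin, xmax, ymax].

    Returns None for boxes the loader above skips: width or height
    below 2 pixels, or a negative top-left corner.
    """
    x, y, w, h = (float(v) for v in box[:4])
    if w < 2 or h < 2 or x < 0 or y < 0:
        return None
    # Same "- 1" convention as the listing: xmax is the last pixel inside the box.
    return [x, y, x + w - 1, y + h - 1]


def normalize(box: Sequence[float], width: float, height: float) -> List[float]:
    """Divide x coordinates by the image width and y coordinates by the height."""
    xmin, ymin, xmax, ymax = box
    return [xmin / width, ymin / height, xmax / width, ymax / height]


def convert_annotations(raw_boxes, width, height):
    """Filter, convert, and normalize every annotation of one image."""
    converted = []
    for raw in raw_boxes:
        corners = xywh_to_corners(raw)
        if corners is None:
            continue  # degenerate or out-of-image box
        converted.append(normalize(corners, width, height))
    return converted


if __name__ == "__main__":
    # One valid box, one too small, one with a negative corner.
    raw = [[10, 20, 30, 40], [5, 5, 1, 1], [-1, 0, 10, 10]]
    print(convert_annotations(raw, width=100, height=200))
```

Only the first of the three example boxes survives the filter; the other two are dropped before normalization.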
