Improving CPU Time Accounting on Linux

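When we want to observe or monitor the load on a Linux system, we usually reach for tools such as top, vmstat, and mpstat. The numbers these tools report are not always accurate, however. In this project we studied the kernel's existing load accounting, scheduler, and time subsystems in detail and patched the accounting gaps we found.

Below I first describe how these subsystems cooperate to produce the statistics, then explain the problems in the current design, and finally present our improvements.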

How the subsystems produce the statistics

Before diving into the source code, here is a brief overview of the subsystems involved.

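- Load accounting subsystem

  - Purpose: records the load of every CPU on the system and exposes it through the virtual file system. Time is accounted at two granularities: per CPU and per process.

  - How it works: the implementation depends on the kernel build options and comes in two flavors, tick-based sampling and recording at scheduling events. Both rely on the scheduler and the time subsystem.

    - Accounting the time

      - Sampling: on every periodic timer interrupt, the kernel inspects the current task and accounts the tick. The same periodic interrupt also updates the time bookkeeping of the tasks to be scheduled, so the period should not be too short; if it is too long, the sampling precision drops. This is a trade-off between scheduling efficiency and sampling precision.

      - Recording: at every context switch, the kernel records the time of the switch and can therefore account time precisely.

    - Writing the result to the virtual file system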

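- Scheduler subsystem

  - Purpose: schedules Linux tasks.

  - Relation to load accounting:

    - It provides the points in time at which load is accounted.

    - Tick-based: accounting runs in the periodic timer interrupt and attributes the entire tick to the task that is running when the interrupt fires.

    - Context-switch-based: accounting runs at each context switch and records the time between the task being switched in and being switched out, which measures the load precisely.

  - Note that the scheduler keeps its own per-task time statistics in order to make scheduling decisions, and those statistics are accurate. The time statistics of the load accounting subsystem and those of the scheduler are, however, two separate things.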

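- Time subsystem

  - Purpose: provides timers and timekeeping.

  - Relation to load accounting:

    - It provides the clock source for accounting, that is, the unit in which time is measured.

    - With tick-based accounting, the tick mode selected at build time is HZ_PERIODIC.

    - With context-switch-based accounting, the tick mode selected at build time is NO_HZ_IDLE or NO_HZ_FULL.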

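Because the load accounting subsystem uses quite different strategies depending on the build options, the two cases are described separately below, each showing how the three subsystems cooperate to produce the statistics. For reasons of space the subsystems themselves are not covered in detail; see my earlier blog posts or the relevant books.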
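The precision gap between the two strategies can be illustrated with a toy model. The sketch below is not kernel code: `simulate_accounting` and its parameters are hypothetical names, and it assumes a task that runs for `busy_ns` at the start of every `period_ns` window and is sampled at the instant just before each tick.

```c
#include <assert.h>
#include <stdint.h>

/* Result of accounting the same workload in two ways (hypothetical model). */
struct accounting_result {
    uint64_t sampled_busy_ns; /* what tick-based sampling would report */
    uint64_t exact_busy_ns;   /* what per-switch timestamps would report */
};

struct accounting_result simulate_accounting(uint64_t tick_ns, uint64_t period_ns,
                                             uint64_t busy_ns, uint64_t total_ns)
{
    struct accounting_result r = {0, 0};
    uint64_t t, start;

    assert(tick_ns > 0 && period_ns > 0 && busy_ns <= period_ns);

    /* Sampling: each tick is charged in full to whatever runs at the tick. */
    for (t = tick_ns; t <= total_ns; t += tick_ns) {
        uint64_t phase = (t - 1) % period_ns; /* instant just before the tick */

        if (phase < busy_ns)
            r.sampled_busy_ns += tick_ns;
    }

    /* Recording: sum the real run intervals, as timestamps at switches would. */
    for (start = 0; start < total_ns; start += period_ns) {
        uint64_t end = start + busy_ns;

        if (end > total_ns)
            end = total_ns;
        r.exact_busy_ns += end - start;
    }
    return r;
}
```

With a 10 ms tick and a task that runs for the first 4 ms of every 10 ms window, sampling in this model never sees the task, while the recorded intervals add up to 40% of the elapsed time.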

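Tick-based accounting (periodic timer interrupt)

- Relevant build options and kernel boot parameters

  - Load accounting subsystem

    - TICK_CPU_ACCOUNTING

  - Time subsystem

    - HZ_PERIODIC

  - Kernel boot parameters

    - none

- Accounting flow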

Data structures

At kernel initialization, a structure is created for every CPU to record how that CPU's time is spent. Its fields are indexed by the following enum:

    enum cpu_usage_stat {
        CPUTIME_USER,
        CPUTIME_NICE,
        CPUTIME_SYSTEM,
        CPUTIME_SOFTIRQ,
        CPUTIME_IRQ,
        CPUTIME_IDLE,
        CPUTIME_IOWAIT,
        CPUTIME_STEAL,
        CPUTIME_GUEST,
        CPUTIME_GUEST_NICE,
        NR_STATS,
    };

Every process records its own usage in the utime and stime fields of task_struct:

    struct task_struct {
        ...
        u64 utime;
        u64 stime;
        ...
    };

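Accounting the time

- The scheduler currently used for normal tasks in Linux is CFS. CFS relies on a periodic timer interrupt to decide whether the running task should keep running, and schedules accordingly. The interval between two such interrupts on a CPU is called a tick. At every periodic timer interrupt, the task that is running when the interrupt fires is assumed to have occupied the CPU for the whole tick, and CPU usage is accounted on that basis. In short, this is a sampling algorithm.

In the interrupt handler of every tick, the kernel accounts the current CPU usage:

    void account_process_tick(struct task_struct *p, int user_tick)
    {
        u64 cputime, steal;

        if (vtime_accounting_enabled_this_cpu())
            return;

        if (sched_clock_irqtime) {
            irqtime_account_process_tick(p, user_tick, 1);
            return;
        }

        cputime = TICK_NSEC;
        /* Account the steal field. */
        steal = steal_account_process_time(ULONG_MAX);

        if (steal >= cputime)
            return;

        cputime -= steal;

        /* The key step: charge the tick according to what was running. */
        /* User-mode code was running. */
        if (user_tick)
            account_user_time(p, cputime);
        /* Kernel-mode code was running. */
        else if ((p != this_rq()->idle) || (irq_count() != HARDIRQ_OFFSET))
            account_system_time(p, HARDIRQ_OFFSET, cputime);
        /* The CPU was idle. */
        else
            account_idle_time(cputime);
    }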

User mode:

    void account_user_time(struct task_struct *p, u64 cputime)
    {
        int index;

        /* Per-process accounting. */
        /* Add user time to process. */
        p->utime += cputime;
        account_group_user_time(p, cputime);

        /* Decide between the user and the nice field. */
        index = (task_nice(p) > 0) ? CPUTIME_NICE : CPUTIME_USER;

        /* Per-CPU accounting. */
        /* Add user time to cpustat. */
        task_group_account_field(p, index, cputime);

        /* Account for user time used */
        acct_account_cputime(p);
    }

Kernel mode:

    void account_system_time(struct task_struct *p, int hardirq_offset, u64 cputime)
    {
        int index;

        /* Account the guest and guest_nice fields. */
        if ((p->flags & PF_VCPU) && (irq_count() - hardirq_offset == 0)) {
            account_guest_time(p, cputime);
            return;
        }

        /* In hard interrupt context: charge the hi field. */
        if (hardirq_count() - hardirq_offset)
            index = CPUTIME_IRQ;
        /* In soft interrupt context: charge the si field. */
        else if (in_serving_softirq())
            index = CPUTIME_SOFTIRQ;
        /* Ordinary kernel code: charge the system field. */
        else
            index = CPUTIME_SYSTEM;

        account_system_index_time(p, cputime, index);
    }

Idle:

    void account_idle_time(u64 cputime)
    {
        u64 *cpustat = kcpustat_this_cpu->cpustat;
        struct rq *rq = this_rq();

        /* Tasks are waiting for I/O: charge the iowait field. */
        if (atomic_read(&rq->nr_iowait) > 0)
            cpustat[CPUTIME_IOWAIT] += cputime;
        /* Truly idle: charge the idle field. */
        else
            cpustat[CPUTIME_IDLE] += cputime;
    }
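The decision tree spread over these functions can be condensed into one user-space sketch. This is a simplified model, not the kernel's code: `struct tick_ctx`, `enum bucket`, and `classify_tick` are hypothetical names, guest accounting is left out, and the kernel's preempt-count checks are reduced to two booleans.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Buckets correspond to the cpu_usage_stat fields updated at tick time. */
enum bucket { B_USER, B_NICE, B_SYSTEM, B_SOFTIRQ, B_IRQ, B_IDLE, B_IOWAIT };

/* Hypothetical snapshot of what the tick handler can observe. */
struct tick_ctx {
    bool user_tick;      /* the tick interrupted user-mode code */
    bool idle_task;      /* the interrupted task is the idle task */
    bool nested_hardirq; /* the tick interrupted another hard IRQ handler */
    bool in_softirq;     /* the tick interrupted softirq processing */
    int nice;            /* nice value of the interrupted task */
    int nr_iowait;       /* tasks on this CPU that wait for I/O */
};

/* Return the bucket that receives the whole tick. */
enum bucket classify_tick(const struct tick_ctx *c)
{
    assert(c != NULL);

    if (c->user_tick)
        return c->nice > 0 ? B_NICE : B_USER;

    /* Not idle, or idle but interrupted while serving another interrupt. */
    if (!c->idle_task || c->nested_hardirq || c->in_softirq) {
        if (c->nested_hardirq)
            return B_IRQ;
        if (c->in_softirq)
            return B_SOFTIRQ;
        return B_SYSTEM;
    }

    return c->nr_iowait > 0 ? B_IOWAIT : B_IDLE;
}
```

Whatever bucket is chosen receives the full tick, which is exactly why a task that runs only between two ticks goes unnoticed.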


Writing the result to the virtual file system

- Per-CPU statistics

The per-CPU data is kept in memory all the time and is rendered into /proc/stat only when the file is accessed.

Creating /proc/stat:

    static int __init proc_stat_init(void)
    {
        proc_create("stat", 0, NULL, &stat_proc_ops);
        return 0;
    }

The operations on /proc/stat:

    static const struct proc_ops stat_proc_ops = {
        .proc_flags     = PROC_ENTRY_PERMANENT,
        .proc_open      = stat_open,
        .proc_read_iter = seq_read_iter,
        .proc_lseek     = seq_lseek,
        .proc_release   = single_release,
    };

stat_open opens /proc/stat and registers show_stat as the function that writes the contents:

    static int stat_open(struct inode *inode, struct file *file)
    {
        unsigned int size = 1024 + 128 * num_online_cpus();

        /* minimum size to display an interrupt count : 2 bytes */
        size += 2 * nr_irqs;
        return single_open_size(file, show_stat, NULL, size);
    }

The show_stat function:

    static int show_stat(struct seq_file *p, void *v)
    {
        int i, j;
        u64 user, nice, system, idle, iowait, irq, softirq, steal;
        u64 guest, guest_nice;
        u64 sum = 0;
        u64 sum_softirq = 0;
        unsigned int per_softirq_sums[NR_SOFTIRQS] = {0};
        struct timespec64 boottime;

        user = nice = system = idle = iowait =
            irq = softirq = steal = 0;
        guest = guest_nice = 0;
        getboottime64(&boottime);

        /* Sum over all CPUs: this becomes the first line of /proc/stat. */
        for_each_possible_cpu(i) {
            struct kernel_cpustat kcpustat;
            u64 *cpustat = kcpustat.cpustat;

            kcpustat_cpu_fetch(&kcpustat, i);

            user += cpustat[CPUTIME_USER];
            nice += cpustat[CPUTIME_NICE];
            system += cpustat[CPUTIME_SYSTEM];
            idle += get_idle_time(&kcpustat, i);
            iowait += get_iowait_time(&kcpustat, i);
            irq += cpustat[CPUTIME_IRQ];
            softirq += cpustat[CPUTIME_SOFTIRQ];
            steal += cpustat[CPUTIME_STEAL];
            guest += cpustat[CPUTIME_GUEST];
            guest_nice += cpustat[CPUTIME_GUEST_NICE];
            sum += kstat_cpu_irqs_sum(i);
            sum += arch_irq_stat_cpu(i);

            for (j = 0; j < NR_SOFTIRQS; j++) {
                unsigned int softirq_stat = kstat_softirqs_cpu(j, i);

                per_softirq_sums[j] += softirq_stat;
                sum_softirq += softirq_stat;
            }
        }
        sum += arch_irq_stat();

        seq_put_decimal_ull(p, "cpu  ", nsec_to_clock_t(user));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(nice));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(system));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(idle));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(iowait));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(irq));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(softirq));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(steal));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(guest));
        seq_put_decimal_ull(p, " ", nsec_to_clock_t(guest_nice));
        seq_putc(p, '\n');

        /* One line per online CPU with that CPU's own numbers. */
        for_each_online_cpu(i) {
            struct kernel_cpustat kcpustat;
            u64 *cpustat = kcpustat.cpustat;

            kcpustat_cpu_fetch(&kcpustat, i);

            /* Copy values here to work around gcc-2.95.3, gcc-2.96 */
            user = cpustat[CPUTIME_USER];
            nice = cpustat[CPUTIME_NICE];
            system = cpustat[CPUTIME_SYSTEM];
            idle = get_idle_time(&kcpustat, i);
            iowait = get_iowait_time(&kcpustat, i);
            irq = cpustat[CPUTIME_IRQ];
            softirq = cpustat[CPUTIME_SOFTIRQ];
            steal = cpustat[CPUTIME_STEAL];
            guest = cpustat[CPUTIME_GUEST];
            guest_nice = cpustat[CPUTIME_GUEST_NICE];
            seq_printf(p, "cpu%d", i);
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(user));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(nice));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(system));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(idle));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(iowait));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(irq));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(softirq));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(steal));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(guest));
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(guest_nice));
            seq_putc(p, '\n');
        }
        seq_put_decimal_ull(p, "intr ", (unsigned long long)sum);

        show_all_irqs(p);

        seq_printf(p,
            "\nctxt %llu\n"
            "btime %llu\n"
            "processes %lu\n"
            "procs_running %lu\n"
            "procs_blocked %lu\n",
            nr_context_switches(),
            (unsigned long long)boottime.tv_sec,
            total_forks,
            nr_running(),
            nr_iowait());

        seq_put_decimal_ull(p, "softirq ", (unsigned long long)sum_softirq);

        for (i = 0; i < NR_SOFTIRQS; i++)
            seq_put_decimal_ull(p, " ", per_softirq_sums[i]);
        seq_putc(p, '\n');

        return 0;
    }
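On the consuming side, each "cpu" line that show_stat emits is a name followed by ten counters in clock ticks. A minimal user-space sketch of parsing one such line could look as follows; `struct cpu_times` and `parse_cpu_line` are hypothetical names chosen for illustration, and the field order is taken from the seq_put_decimal_ull calls above.

```c
#include <assert.h>
#include <stdio.h>
#include <stddef.h>

/* The ten per-CPU counters, in the order show_stat prints them. */
struct cpu_times {
    unsigned long long user, nice, system, idle, iowait;
    unsigned long long irq, softirq, steal, guest, guest_nice;
};

/* Parse one "cpu ..." or "cpuN ..." line; return 0 on success, -1 otherwise. */
int parse_cpu_line(const char *line, struct cpu_times *t)
{
    char name[16];
    int n;

    assert(line != NULL && t != NULL);

    n = sscanf(line, "%15s %llu %llu %llu %llu %llu %llu %llu %llu %llu %llu",
               name, &t->user, &t->nice, &t->system, &t->idle, &t->iowait,
               &t->irq, &t->softirq, &t->steal, &t->guest, &t->guest_nice);

    /* Expect the name plus all ten counters, and a name starting with "cpu". */
    if (n != 11 || name[0] != 'c' || name[1] != 'p' || name[2] != 'u')
        return -1;
    return 0;
}
```

A monitoring tool would call this on two reads of /proc/stat taken some time apart and work with the differences of the counters.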

- Per-process statistics

These values are also kept in memory and are rendered into /proc/<pid>/stat only when the file is accessed.

The proc file plumbing is essentially the same as for /proc/stat and is not repeated here.

do_task_stat writes the statistics:

    static int do_task_stat(struct seq_file *m, struct pid_namespace *ns,
                            struct pid *pid, struct task_struct *task, int whole)
    {
        ...
        if (!whole) {
            min_flt = task->min_flt;
            maj_flt = task->maj_flt;
            task_cputime_adjusted(task, &utime, &stime);
            gtime = task_gtime(task);
        }
        ...
    }

task_cputime_adjusted and the functions it calls:

    void task_cputime_adjusted(struct task_struct *p, u64 *ut, u64 *st)
    {
        struct task_cputime cputime = {
            .sum_exec_runtime = p->se.sum_exec_runtime,
        };

        task_cputime(p, &cputime.utime, &cputime.stime);
        cputime_adjust(&cputime, &p->prev_cputime, ut, st);
    }

    static inline void task_cputime(struct task_struct *t, u64 *utime, u64 *stime)
    {
        *utime = t->utime;
        *stime = t->stime;
    }

    void cputime_adjust(struct task_cputime *curr, struct prev_cputime *prev,
                        u64 *ut, u64 *st)
    {
        u64 rtime, stime, utime;
        unsigned long flags;

        /* Serialize concurrent callers such that we can honour our guarantees */
        raw_spin_lock_irqsave(&prev->lock, flags);
        rtime = curr->sum_exec_runtime;

        /*
         * This is possible under two circumstances:
         *  - rtime isn't monotonic after all (a bug);
         *  - we got reordered by the lock.
         *
         * In both cases this acts as a filter such that the rest of the code
         * can assume it is monotonic regardless of anything else.
         */
        if (prev->stime + prev->utime >= rtime)
            goto out;

        stime = curr->stime;
        utime = curr->utime;

        /*
         * If either stime or utime are 0, assume all runtime is userspace.
         * Once a task gets some ticks, the monotonicy code at 'update:'
         * will ensure things converge to the observed ratio.
         */
        if (stime == 0) {
            utime = rtime;
            goto update;
        }

        if (utime == 0) {
            stime = rtime;
            goto update;
        }

        stime = mul_u64_u64_div_u64(stime, rtime, stime + utime);

    update:
        /*
         * Make sure stime doesn't go backwards; this preserves monotonicity
         * for utime because rtime is monotonic.
         *
         *  utime_i+1 = rtime_i+1 - stime_i
         *            = rtime_i+1 - (rtime_i - utime_i)
         *            = (rtime_i+1 - rtime_i) + utime_i
         *            >= utime_i
         */
        if (stime < prev->stime)
            stime = prev->stime;
        utime = rtime - stime;

        /*
         * Make sure utime doesn't go backwards; this still preserves
         * monotonicity for stime, analogous argument to above.
         */
        if (utime < prev->utime) {
            utime = prev->utime;
            stime = rtime - utime;
        }

        prev->stime = stime;
        prev->utime = utime;
    out:
        *ut = prev->utime;
        *st = prev->stime;
        raw_spin_unlock_irqrestore(&prev->lock, flags);
    }

For reference, here is the documentation comment of cputime_adjust. It shows that sum_exec_runtime from the scheduler subsystem is used to scale the utime and stime collected by the load accounting subsystem, so that the reported values stay reasonably accurate.

    /*
     * Adjust tick based cputime random precision against scheduler runtime
     * accounting.
     *
     * Tick based cputime accounting depend on random scheduling timeslices of a
     * task to be interrupted or not by the timer. Depending on these
     * circumstances, the number of these interrupts may be over or
     * under-optimistic, matching the real user and system cputime with a variable
     * precision.
     *
     * Fix this by scaling these tick based values against the total runtime
     * accounted by the CFS scheduler.
     *
     * This code provides the following guarantees:
     *
     *   stime + utime == rtime
     *   stime_i+1 >= stime_i, utime_i+1 >= utime_i
     *
     * Assuming that rtime_i+1 >= rtime_i.
     */
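To see the scaling with concrete numbers, the following user-space sketch mirrors the arithmetic of cputime_adjust without the locking. `struct prev_times` and `adjust_cputime` are hypothetical names, and the plain 64-bit multiplication assumes small inputs, whereas the kernel uses mul_u64_u64_div_u64 to avoid overflow.

```c
#include <assert.h>
#include <stdint.h>
#include <stddef.h>

/* Last values reported to user space, kept to guarantee monotonicity. */
struct prev_times {
    uint64_t utime;
    uint64_t stime;
};

/* Scale tick-based utime/stime so that their sum equals the scheduler's rtime. */
void adjust_cputime(uint64_t tick_utime, uint64_t tick_stime, uint64_t rtime,
                    struct prev_times *prev, uint64_t *ut, uint64_t *st)
{
    uint64_t utime = tick_utime;
    uint64_t stime = tick_stime;

    assert(prev != NULL && ut != NULL && st != NULL);

    /* Nothing new to distribute: report the previous values. */
    if (prev->stime + prev->utime >= rtime)
        goto out;

    if (stime == 0) {
        utime = rtime;
        goto update;
    }
    if (utime == 0) {
        stime = rtime;
        goto update;
    }

    /* Keep the sampled stime:utime ratio, but apply it to rtime. */
    stime = stime * rtime / (stime + utime);

update:
    /* Neither value may go backwards between two reads. */
    if (stime < prev->stime)
        stime = prev->stime;
    utime = rtime - stime;
    if (utime < prev->utime) {
        utime = prev->utime;
        stime = rtime - utime;
    }
    prev->stime = stime;
    prev->utime = utime;
out:
    *ut = prev->utime;
    *st = prev->stime;
}
```

For example, 30 sampled user units and 10 sampled system units against a scheduler runtime of 100 are reported as 75 and 25. The per-process numbers therefore always add up to the scheduler's runtime, while the per-CPU counters in /proc/stat keep the raw tick-based values.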


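Finally, let us recap how the functions above fit together:

- At boot, the kernel sets up the structures that record the state of each CPU; every process, when it is created, gets fields in its task structure that track its own CPU time.

- In the timer interrupt at the end of every tick, the kernel accounts both CPU usage and process usage.

- When the virtual files are read, the data is rendered into /proc/stat or /proc/<pid>/stat.

- Finally, top uses these numbers to compute CPU utilization (there are many ways to do this; only one is shown here):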

$$
total_{time} = user_{time} + system_{time} + nice_{time} + idle_{time} + iowait_{time} + hi_{time} + si_{time} + steal_{time} + guest_{time} + gnice_{time}
$$

$$
Rate_{CPU} = \frac{\Delta user_{time}}{\Delta total_{time}}
$$

- Computing the utilization of a process, with $total_{time}$ defined as above:

$$
Rate_{Process} = \frac{\Delta (user_{Process\_time} + system_{Process\_time})}{\Delta total_{time}}
$$
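The two formulas translate directly into code. The sketch below is only an illustration of the arithmetic: `struct cpu_sample`, `total_time`, `rate_cpu`, and `rate_process` are hypothetical names, the total is summed exactly as in the formula above, and real tools such as top may compute their figures differently.

```c
#include <assert.h>
#include <stddef.h>

/* One reading of the per-CPU counters, all in the same time unit. */
struct cpu_sample {
    unsigned long long user, system, nice, idle, iowait;
    unsigned long long hi, si, steal, guest, gnice;
};

/* total_time as defined in the formula: the sum of all ten fields. */
unsigned long long total_time(const struct cpu_sample *s)
{
    assert(s != NULL);
    return s->user + s->system + s->nice + s->idle + s->iowait +
           s->hi + s->si + s->steal + s->guest + s->gnice;
}

/* Rate_CPU: growth of user time divided by growth of total time. */
double rate_cpu(const struct cpu_sample *before, const struct cpu_sample *after)
{
    unsigned long long dt = total_time(after) - total_time(before);

    if (dt == 0)
        return 0.0;
    return (double)(after->user - before->user) / (double)dt;
}

/* Rate_Process: growth of the process's utime + stime over growth of total time. */
double rate_process(unsigned long long proc_before, unsigned long long proc_after,
                    const struct cpu_sample *before, const struct cpu_sample *after)
{
    unsigned long long dt = total_time(after) - total_time(before);

    if (dt == 0)
        return 0.0;
    return (double)(proc_after - proc_before) / (double)dt;
}
```

Both functions take two samples because the counters are cumulative since boot; only their differences over an interval are meaningful.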

Context-switch-based accounting

- Relevant build options and kernel boot parameters

  - Load accounting subsystem

    - VIRT_CPU_ACCOUNTING_GEN

  - Time subsystem

    - NO_HZ_FULL

    - CONFIG_HIGH_RES_TIMERS

  - Kernel boot parameters

    - nohz_full

- Accounting flow

Data structures

At kernel initialization, a structure is created for every CPU to record how that CPU's time is spent. Its fields are indexed by the same enum as before:

    enum cpu_usage_stat {
        CPUTIME_USER,
        CPUTIME_NICE,
        CPUTIME_SYSTEM,
        CPUTIME_SOFTIRQ,
        CPUTIME_IRQ,
        CPUTIME_IDLE,
        CPUTIME_IOWAIT,
        CPUTIME_STEAL,
        CPUTIME_GUEST,
        CPUTIME_GUEST_NICE,
        NR_STATS,
    };

Every process records its usage in the utime and stime fields of the vtime structure embedded in task_struct:

    struct task_struct {
        ...
    #ifdef CONFIG_VIRT_CPU_ACCOUNTING_GEN
        struct vtime vtime;
    #endif
        ...
    };

    struct vtime {
        seqcount_t          seqcount;
        unsigned long long  starttime;
        enum vtime_state    state;
        unsigned int        cpu;
        u64                 utime;
        u64                 stime;
        u64                 gtime;
    };

统计过程

-

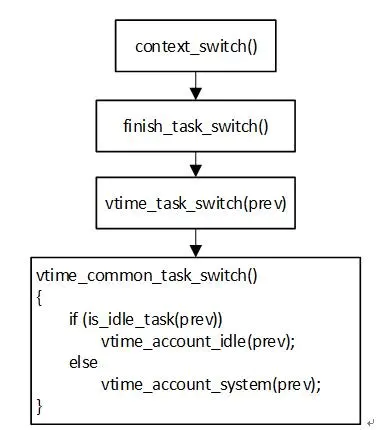

我们从上下文切换切入,从这个content_switch函数来看这件事情

-

首先是在程序调度过程中,调用了context_switch函数

/* * context_switch - switch to the new MM and the new thread's register state. */ static __always_inline struct rq * context_switch(struct rq *rq, struct task_struct *prev, struct task_struct *next, struct rq_flags *rf) { prepare_task_switch(rq, prev, next); /* * For paravirt, this is coupled with an exit in switch_to to * combine the page table reload and the switch backend into * one hypercall. */ arch_start_context_switch(prev); /* * kernel -> kernel lazy + transfer active * user -> kernel lazy + mmgrab() active * * kernel -> user switch + mmdrop() active * user -> user switch */ if (!next->mm) { // to kernel enter_lazy_tlb(prev->active_mm, next); next->active_mm = prev->active_mm; if (prev->mm) // from user mmgrab(prev->active_mm); else prev->active_mm = NULL; } else { // to user membarrier_switch_mm(rq, prev->active_mm, next->mm); /* * sys_membarrier() requires an smp_mb() between setting * rq->curr / membarrier_switch_mm() and returning to userspace. * * The below provides this either through switch_mm(), or in * case 'prev->active_mm == next->mm' through * finish_task_switch()'s mmdrop(). */ switch_mm_irqs_off(prev->active_mm, next->mm, next); if (!prev->mm) { // from kernel /* will mmdrop() in finish_task_switch(). */ rq->prev_mm = prev->active_mm; prev->active_mm = NULL; } } rq->clock_update_flags &= ~(RQCF_ACT_SKIP|RQCF_REQ_SKIP); prepare_lock_switch(rq, next, rf); /* Here we just switch the register state and the stack. */ switch_to(prev, next, prev); barrier(); return finish_task_switch(prev); } -

At the end of context_switch, finish_task_switch cleans up after the switch:

    /**
     * finish_task_switch - clean up after a task-switch
     * @prev: the thread we just switched away from.
     *
     * finish_task_switch must be called after the context switch, paired
     * with a prepare_task_switch call before the context switch.
     * finish_task_switch will reconcile locking set up by prepare_task_switch,
     * and do any other architecture-specific cleanup actions.
     *
     * Note that we may have delayed dropping an mm in context_switch(). If
     * so, we finish that here outside of the runqueue lock. (Doing it
     * with the lock held can cause deadlocks; see schedule() for
     * details.)
     *
     * The context switch have flipped the stack from under us and restored the
     * local variables which were saved when this task called schedule() in the
     * past. prev == current is still correct but we need to recalculate this_rq
     * because prev may have moved to another CPU.
     */
    static struct rq *finish_task_switch(struct task_struct *prev)
        __releases(rq->lock)
    {
        ...
        /*
         * A task struct has one reference for the use as "current".
         * If a task dies, then it sets TASK_DEAD in tsk->state and calls
         * schedule one last time. The schedule call will never return, and
         * the scheduled task must drop that reference.
         *
         * We must observe prev->state before clearing prev->on_cpu (in
         * finish_task), otherwise a concurrent wakeup can get prev
         * running on another CPU and we could race with its RUNNING -> DEAD
         * transition, resulting in a double drop.
         */
        prev_state = prev->state;
        vtime_task_switch(prev);
        ...
    }

This is where it gets interesting: finish_task_switch calls vtime_task_switch, and the definition of that function depends on the build options. Below I explain how accounting is done under each option. Because the relevant code is very long, only the declarations from the header file are listed here; the function definitions are examined as the call chain requires.

    #if defined(CONFIG_VIRT_CPU_ACCOUNTING_NATIVE)

    static inline bool vtime_accounting_enabled_this_cpu(void) { return true; }
    extern void vtime_task_switch(struct task_struct *prev);

    #elif defined(CONFIG_VIRT_CPU_ACCOUNTING_GEN)

    /*
     * Checks if vtime is enabled on some CPU. Cputime readers want to be careful
     * in that case and compute the tickless cputime.
     * For now vtime state is tied to context tracking. We might want to decouple
     * those later if necessary.
     */
    static inline bool vtime_accounting_enabled(void)
    {
        return context_tracking_enabled();
    }

    static inline bool vtime_accounting_enabled_cpu(int cpu)
    {
        return context_tracking_enabled_cpu(cpu);
    }

    static inline bool vtime_accounting_enabled_this_cpu(void)
    {
        return context_tracking_enabled_this_cpu();
    }

    extern void vtime_task_switch_generic(struct task_struct *prev);

    static inline void vtime_task_switch(struct task_struct *prev)
    {
        if (vtime_accounting_enabled_this_cpu())
            vtime_task_switch_generic(prev);
    }

    #else /* !CONFIG_VIRT_CPU_ACCOUNTING */

    static inline bool vtime_accounting_enabled_cpu(int cpu) { return false; }
    static inline bool vtime_accounting_enabled_this_cpu(void) { return false; }
    static inline void vtime_task_switch(struct task_struct *prev) { }

    #endif

    /*
     * Common vtime APIs
     */
    #ifdef CONFIG_VIRT_CPU_ACCOUNTING
    extern void vtime_account_kernel(struct task_struct *tsk);
    extern void vtime_account_idle(struct task_struct *tsk);
    #else /* !CONFIG_VIRT_CPU_ACCOUNTING */
    static inline void vtime_account_kernel(struct task_struct *tsk) { }
    #endif /* !CONFIG_VIRT_CPU_ACCOUNTING */

    #ifdef CONFIG_VIRT_CPU_ACCOUNTING_GEN
    extern void arch_vtime_task_switch(struct task_struct *tsk);
    extern void vtime_user_enter(struct task_struct *tsk);
    extern void vtime_user_exit(struct task_struct *tsk);
    extern void vtime_guest_enter(struct task_struct *tsk);
    extern void vtime_guest_exit(struct task_struct *tsk);
    extern void vtime_init_idle(struct task_struct *tsk, int cpu);
    #else /* !CONFIG_VIRT_CPU_ACCOUNTING_GEN */
    static inline void vtime_user_enter(struct task_struct *tsk) { }
    static inline void vtime_user_exit(struct task_struct *tsk) { }
    static inline void vtime_guest_enter(struct task_struct *tsk) { }
    static inline void vtime_guest_exit(struct task_struct *tsk) { }
    static inline void vtime_init_idle(struct task_struct *tsk, int cpu) { }
    #endif

    #ifdef CONFIG_VIRT_CPU_ACCOUNTING_NATIVE
    extern void vtime_account_irq_enter(struct task_struct *tsk);
    static inline void vtime_account_irq_exit(struct task_struct *tsk)
    {
        /* On hard|softirq exit we always account to hard|softirq cputime */
        vtime_account_kernel(tsk);
    }
    extern void vtime_flush(struct task_struct *tsk);
    #else /* !CONFIG_VIRT_CPU_ACCOUNTING_NATIVE */
    static inline void vtime_account_irq_enter(struct task_struct *tsk) { }
    static inline void vtime_account_irq_exit(struct task_struct *tsk) { }
    static inline void vtime_flush(struct task_struct *tsk) { }
    #endif

When CONFIG_VIRT_CPU_ACCOUNTING is not enabled (the `#else /* !CONFIG_VIRT_CPU_ACCOUNTING */` branch in the header), vtime_task_switch is an empty stub. Accounting is still done by the tick handler in that case, and the call in finish_task_switch is only a placeholder.

With CONFIG_VIRT_CPU_ACCOUNTING_NATIVE enabled, vtime_task_switch does real work:

    void vtime_task_switch(struct task_struct *prev)
    {
        if (is_idle_task(prev))
            vtime_account_idle(prev);
        else
            vtime_account_kernel(prev);

        vtime_flush(prev);
        arch_vtime_task_switch(prev);
    }

A closer look at vtime_account_idle:

    /* Defined in https://elixir.bootlin.com/linux/v5.10/source/arch/ia64/kernel/time.c#L152 */
    void vtime_account_idle(struct task_struct *tsk)
    {
        struct thread_info *ti = task_thread_info(tsk);

        ti->idle_time += vtime_delta(tsk);
    }

A closer look at vtime_account_kernel:

    /* Defined in https://elixir.bootlin.com/linux/v5.10/source/arch/ia64/kernel/time.c#L136 */
    void vtime_account_kernel(struct task_struct *tsk)
    {
        struct thread_info *ti = task_thread_info(tsk);
        __u64 stime = vtime_delta(tsk);

        if ((tsk->flags & PF_VCPU) && !irq_count())
            ti->gtime += stime;
        else if (hardirq_count())
            ti->hardirq_time += stime;
        else if (in_serving_softirq())
            ti->softirq_time += stime;
        else
            ti->stime += stime;
    }

With CONFIG_VIRT_CPU_ACCOUNTING_GEN enabled, vtime_task_switch forwards to vtime_task_switch_generic:

    static inline void vtime_task_switch(struct task_struct *prev)
    {
        if (vtime_accounting_enabled_this_cpu())
            vtime_task_switch_generic(prev);
    }

    void vtime_task_switch_generic(struct task_struct *prev)
    {
        struct vtime *vtime = &prev->vtime;

        write_seqcount_begin(&vtime->seqcount);
        if (vtime->state == VTIME_IDLE)
            vtime_account_idle(prev);
        else
            __vtime_account_kernel(prev, vtime);
        vtime->state = VTIME_INACTIVE;
        vtime->cpu = -1;
        write_seqcount_end(&vtime->seqcount);

        vtime = &current->vtime;

        write_seqcount_begin(&vtime->seqcount);
        if (is_idle_task(current))
            vtime->state = VTIME_IDLE;
        else if (current->flags & PF_VCPU)
            vtime->state = VTIME_GUEST;
        else
            vtime->state = VTIME_SYS;
        vtime->starttime = sched_clock();
        vtime->cpu = smp_processor_id();
        write_seqcount_end(&vtime->seqcount);
    }

First, the idle time:

    void vtime_account_idle(struct task_struct *tsk)
    {
        account_idle_time(get_vtime_delta(&tsk->vtime));
    }

    /*
     * Account for idle time.
     * @cputime: the CPU time spent in idle wait
     */
    void account_idle_time(u64 cputime)
    {
        u64 *cpustat = kcpustat_this_cpu->cpustat;
        struct rq *rq = this_rq();

        if (atomic_read(&rq->nr_iowait) > 0)
            cpustat[CPUTIME_IOWAIT] += cputime;
        else
            cpustat[CPUTIME_IDLE] += cputime;
    }

    static u64 get_vtime_delta(struct vtime *vtime)
    {
        u64 delta = vtime_delta(vtime);
        u64 other;

        /*
         * Unlike tick based timing, vtime based timing never has lost
         * ticks, and no need for steal time accounting to make up for
         * lost ticks. Vtime accounts a rounded version of actual
         * elapsed time. Limit account_other_time to prevent rounding
         * errors from causing elapsed vtime to go negative.
         */
        other = account_other_time(delta);
        WARN_ON_ONCE(vtime->state == VTIME_INACTIVE);
        vtime->starttime += delta;

        return delta - other;
    }

account_idle_time is the same function that tick-based accounting uses for idle time; only the argument passed to it differs.

Next, the kernel time:

    static void __vtime_account_kernel(struct task_struct *tsk,
                                       struct vtime *vtime)
    {
        /* We might have scheduled out from guest path */
        if (vtime->state == VTIME_GUEST)
            vtime_account_guest(tsk, vtime);
        else
            vtime_account_system(tsk, vtime);
    }

Here the elapsed time is charged either to guest time (vtime_account_guest) or to system time (vtime_account_system).
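The bookkeeping done at a switch can be modeled in a few lines of user-space C. This is a simplified sketch, not the kernel implementation: `struct vtime_model`, `struct cpu_totals`, and `model_task_switch` are hypothetical names, time is passed in as a plain integer instead of sched_clock(), and steal time, seqcounts, and the user-mode state transitions are left out.

```c
#include <assert.h>
#include <stdint.h>
#include <stddef.h>

enum vstate { V_INACTIVE, V_IDLE, V_SYS, V_USER, V_GUEST };

/* Per-task accounting state, reduced to what the switch path needs. */
struct vtime_model {
    enum vstate state;
    uint64_t starttime; /* timestamp of the last accounting point */
};

/* Per-CPU totals touched by the switch path in this model. */
struct cpu_totals {
    uint64_t idle;
    uint64_t system;
    uint64_t guest;
};

/* Time since the last accounting point; advances starttime like get_vtime_delta. */
static uint64_t take_delta(struct vtime_model *v, uint64_t now)
{
    uint64_t delta = now > v->starttime ? now - v->starttime : 0;

    v->starttime += delta;
    return delta;
}

/* Charge the outgoing task's pending time, then arm the incoming task. */
void model_task_switch(struct vtime_model *prev, struct vtime_model *next,
                       int next_is_idle, uint64_t now, struct cpu_totals *cpu)
{
    uint64_t delta;

    assert(prev != NULL && next != NULL && cpu != NULL);

    delta = take_delta(prev, now);
    if (prev->state == V_IDLE)
        cpu->idle += delta;
    else if (prev->state == V_GUEST)
        cpu->guest += delta;
    else
        cpu->system += delta;
    prev->state = V_INACTIVE;

    next->state = next_is_idle ? V_IDLE : V_SYS;
    next->starttime = now;
}
```

Because every interval is closed with a timestamp at the switch, no time is lost between ticks; the price is extra work on every switch.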

Writing the result to the virtual file system

- Per-CPU statistics

The difference from the tick-based case lies in kcpustat_cpu_fetch:

    #ifdef CONFIG_VIRT_CPU_ACCOUNTING_GEN
    extern u64 kcpustat_field(struct kernel_cpustat *kcpustat,
                              enum cpu_usage_stat usage, int cpu);
    extern void kcpustat_cpu_fetch(struct kernel_cpustat *dst, int cpu);
    #else
    static inline u64 kcpustat_field(struct kernel_cpustat *kcpustat,
                                     enum cpu_usage_stat usage, int cpu)
    {
        return kcpustat->cpustat[usage];
    }

    static inline void kcpustat_cpu_fetch(struct kernel_cpustat *dst, int cpu)
    {
        *dst = kcpustat_cpu(cpu);
    }
    #endif

The key is kcpustat_cpu_fetch. Following it leads to this code:

    void kcpustat_cpu_fetch(struct kernel_cpustat *dst, int cpu)
    {
        const struct kernel_cpustat *src = &kcpustat_cpu(cpu);
        struct rq *rq;
        int err;

        if (!vtime_accounting_enabled_cpu(cpu)) {
            *dst = *src;
            return;
        }

        rq = cpu_rq(cpu);

        for (;;) {
            struct task_struct *curr;

            rcu_read_lock();
            curr = rcu_dereference(rq->curr);
            if (WARN_ON_ONCE(!curr)) {
                rcu_read_unlock();
                *dst = *src;
                return;
            }

            err = kcpustat_cpu_fetch_vtime(dst, src, curr, cpu);
            rcu_read_unlock();

            if (!err)
                return;

            cpu_relax();
        }
    }

That in turn leads to kcpustat_cpu_fetch_vtime. Its purpose is to include the time of the task that is running right now, so that a task which runs for a long time without any context switch is still reflected in the statistics.

    static int kcpustat_cpu_fetch_vtime(struct kernel_cpustat *dst,
                                        const struct kernel_cpustat *src,
                                        struct task_struct *tsk, int cpu)
    {
        struct vtime *vtime = &tsk->vtime;
        unsigned int seq;

        do {
            u64 *cpustat;
            u64 delta;
            int state;

            seq = read_seqcount_begin(&vtime->seqcount);

            state = vtime_state_fetch(vtime, cpu);
            if (state < 0)
                return state;

            *dst = *src;
            cpustat = dst->cpustat;

            /* Task is sleeping, dead or idle, nothing to add */
            if (state < VTIME_SYS)
                continue;

            delta = vtime_delta(vtime);

            /*
             * Task runs either in user (including guest) or kernel space,
             * add pending nohz time to the right place.
             */
            if (state == VTIME_SYS) {
                cpustat[CPUTIME_SYSTEM] += vtime->stime + delta;
            } else if (state == VTIME_USER) {
                if (task_nice(tsk) > 0)
                    cpustat[CPUTIME_NICE] += vtime->utime + delta;
                else
                    cpustat[CPUTIME_USER] += vtime->utime + delta;
            } else {
                WARN_ON_ONCE(state != VTIME_GUEST);
                if (task_nice(tsk) > 0) {
                    cpustat[CPUTIME_GUEST_NICE] += vtime->gtime + delta;
                    cpustat[CPUTIME_NICE] += vtime->gtime + delta;
                } else {
                    cpustat[CPUTIME_GUEST] += vtime->gtime + delta;
                    cpustat[CPUTIME_USER] += vtime->gtime + delta;
                }
            }
        } while (read_seqcount_retry(&vtime->seqcount, seq));

        return 0;
    }

- Per-process statistics

This is largely the same as in the tick-based case, so only the differences are highlighted here.

do_task_stat writes the statistics:

    static int do_task_stat(struct seq_file *m, struct pid_namespace *ns,
                            struct pid *pid, struct task_struct *task, int whole)
    {
        ...
        if (!whole) {
            min_flt = task->min_flt;
            maj_flt = task->maj_flt;
            task_cputime_adjusted(task, &utime, &stime);
            gtime = task_gtime(task);
        }
        ...
    }

task_cputime_adjusted is the crucial part: with this build option, task_cputime additionally reads the pending time from the vtime member of the task. cputime_adjust is identical to the version listed in the tick-based section and is not repeated here.

    void task_cputime_adjusted(struct task_struct *p, u64 *ut, u64 *st)
    {
        struct task_cputime cputime = {
            .sum_exec_runtime = p->se.sum_exec_runtime,
        };

        task_cputime(p, &cputime.utime, &cputime.stime);
        cputime_adjust(&cputime, &p->prev_cputime, ut, st);
    }

    void task_cputime(struct task_struct *t, u64 *utime, u64 *stime)
    {
        struct vtime *vtime = &t->vtime;
        unsigned int seq;
        u64 delta;

        if (!vtime_accounting_enabled()) {
            *utime = t->utime;
            *stime = t->stime;
            return;
        }

        do {
            seq = read_seqcount_begin(&vtime->seqcount);

            *utime = t->utime;
            *stime = t->stime;

            /* Task is sleeping or idle, nothing to add */
            if (vtime->state < VTIME_SYS)
                continue;

            delta = vtime_delta(vtime);

            /*
             * Task runs either in user (including guest) or kernel space,
             * add pending nohz time to the right place.
             */
            if (vtime->state == VTIME_SYS)
                *stime += vtime->stime + delta;
            else
                *utime += vtime->utime + delta;
        } while (read_seqcount_retry(&vtime->seqcount, seq));
    }

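Existing problems

The walkthrough above gives a detailed picture of the two ways in which the kernel currently accounts CPU load. Both have problems:

- Tick-based accounting

  - The most obvious problem is insufficient precision.

  - When per-process statistics are written to the virtual file system, data from the scheduler subsystem is used to correct the data from the load accounting subsystem. As a result, per-CPU usage and per-process usage can disagree.

- Context-switch-based accounting

  - The overhead is relatively high.

  - Per-CPU usage and per-process usage can disagree here as well.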

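Our approach

- Instead of changing the load accounting subsystem, we extend the scheduler subsystem directly: at context switches we record the runtime of the idle task, and CPU utilization can then be derived from it in reverse. This ensures precise accounting.

- In addition, both figures now come from the same time source, which greatly reduces the mismatch between per-CPU usage and per-process usage.

- The details of the approach follow.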
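Deriving utilization "in reverse" from idle time amounts to one subtraction and one division. The sketch below states that arithmetic explicitly; `utilization_from_idle` is a hypothetical helper, and it assumes that the idle counter and the wall clock are both given in nanoseconds and read at the same two instants.

```c
#include <assert.h>
#include <stdint.h>

/*
 * Utilization of one CPU over an interval:
 *   1 - (idle time elapsed) / (wall-clock time elapsed)
 * The result is clamped to the range [0, 1].
 */
double utilization_from_idle(uint64_t idle_before_ns, uint64_t idle_after_ns,
                             uint64_t wall_before_ns, uint64_t wall_after_ns)
{
    uint64_t d_idle, d_wall;

    assert(idle_after_ns >= idle_before_ns);

    if (wall_after_ns <= wall_before_ns)
        return 0.0;

    d_idle = idle_after_ns - idle_before_ns;
    d_wall = wall_after_ns - wall_before_ns;

    /* Reads are not perfectly simultaneous, so guard against idle > wall. */
    if (d_idle >= d_wall)
        return 0.0;

    return 1.0 - (double)d_idle / (double)d_wall;
}
```

A CPU that was idle for 250 ns out of 1000 ns is reported as 75% utilized.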

Details of the improvement

Collecting the statistics

- Accounting the time of the idle task on each CPU

Every CPU gets an idle task at initialization. It keeps the CPU spinning idle whenever there is no other task to run.

The idle task is scheduled by the idle scheduling class, but its execution time is currently not accounted. We can reuse the existing scheduler framework to record this time. The idle scheduling class itself is not covered here; I mainly followed an existing blog post on the subject.

Before the idle task starts running, we record the timestamp at which it is switched in:

    struct task_struct *pick_next_task_idle(struct rq *rq)
    {
        struct task_struct *next = rq->idle;

    #ifdef CONFIG_PROC_IDLE
        if (static_branch_likely(&proc_idle)) {
            struct sched_entity *idle_se = &rq->idle->se;
            u64 now = sched_clock();

            idle_se->exec_start = now;
        }
    #endif

        set_next_task_idle(rq, next, true);

        return next;
    }

When the idle task is switched out, we add the elapsed time to its runtime:

    static void put_prev_task_idle(struct rq *rq, struct task_struct *prev)
    {
    #ifdef CONFIG_PROC_IDLE
        /* Declarations come first to satisfy the kernel's C style. */
        struct sched_entity *idle_se = &rq->idle->se;
        u64 now;
        u64 delta_exec;

        if (!static_branch_likely(&proc_idle))
            return;

        now = sched_clock();
        delta_exec = now - idle_se->exec_start;
        if (unlikely((s64)delta_exec <= 0))
            return;

        schedstat_set(idle_se->statistics.exec_max,
                      max(delta_exec, idle_se->statistics.exec_max));

        idle_se->sum_exec_runtime += delta_exec;
    #endif
    }

CONFIG_PROC_IDLE is a kernel build option that we defined so that the new feature can be compiled in or out. In addition, a kernel boot parameter decides whether the feature is active at run time.

    config PROC_IDLE
        bool "include /proc/stat2 file"
        depends on PROC_FS
        default y
        help
          Provide the CPU idle time in the /proc/stat2 file.

    DEFINE_STATIC_KEY_TRUE(proc_idle);

    #ifdef CONFIG_PROC_IDLE
    static int __init init_proc_idle(char *str)
    {
        if (!strcmp(str, "false"))
            static_branch_disable(&proc_idle);

        return 1;
    }
    __setup("proc_idle=", init_proc_idle);
    #endif

We can use ftrace to verify that the logic above is correct:

- The traces show that our modified functions are called every time the idle task is entered and left.

![ftrace output 1](https://raw.githubusercontent.com/Richardhongyu/pic/main/4818167818e8e161c3e8a246409ada0.png)

![ftrace output 2](https://raw.githubusercontent.com/Richardhongyu/pic/main/67530386c8949e09e6019106344a1fa.png)

- Exposing the information through the virtual file system

We need to create a new file, /proc/stat2, in the virtual file system:

    // SPDX-License-Identifier: GPL-2.0-only
    /*
     * linux/fs/proc/stat2.c
     *
     * Copyright (C) 2007
     *
     * cpu idle time accounting
     */

    #include <linux/cpumask.h>
    #include <linux/device.h>
    #include <linux/fs.h>
    #include <linux/init.h>
    #include <linux/interrupt.h>
    #include <linux/kernel.h>
    #include <linux/kernel_stat.h>
    #include <linux/module.h>
    #include <linux/proc_fs.h>
    #include <linux/sched.h>
    #include <linux/sched/stat.h>
    #include <linux/seq_file.h>
    #include <linux/slab.h>
    #include <linux/time.h>
    #include <linux/irqnr.h>
    #include <linux/sched/cputime.h>
    #include <linux/tick.h>

    #ifdef CONFIG_PROC_IDLE

    #define PROC_NAME "stat2"

    extern u64 cal_idle_sum_exec_runtime(int cpu);

    static u64 get_idle_sum_exec_runtime(int cpu)
    {
        u64 idle = cal_idle_sum_exec_runtime(cpu);

        return idle;
    }

    static int show_idle(struct seq_file *p, void *v)
    {
        int i;
        u64 idle;

        idle = 0;

        /* First line: idle time summed over all CPUs. */
        for_each_possible_cpu(i) {
            idle += get_idle_sum_exec_runtime(i);
        }

        seq_put_decimal_ull(p, "cpu  ", nsec_to_clock_t(idle));
        seq_putc(p, '\n');

        /* One line per online CPU. */
        for_each_online_cpu(i) {
            idle = get_idle_sum_exec_runtime(i);

            seq_printf(p, "cpu%d", i);
            seq_put_decimal_ull(p, " ", nsec_to_clock_t(idle));
            seq_putc(p, '\n');
        }

        return 0;
    }

    static int idle_open(struct inode *inode, struct file *file)
    {
        unsigned int size = 32 + 32 * num_online_cpus();

        return single_open_size(file, show_idle, NULL, size);
    }

    static struct proc_ops idle_procs_ops = {
        .proc_open      = idle_open,
        .proc_read_iter = seq_read_iter,
        .proc_lseek     = seq_lseek,
        .proc_release   = single_release,
    };

    static int __init kernel_module_init(void)
    {
        proc_create(PROC_NAME, 0, NULL, &idle_procs_ops);
        return 0;
    }

    fs_initcall(kernel_module_init);

    #endif /* CONFIG_PROC_IDLE */

The time of an idle task that is running at the moment of the read also has to be included, which improves the accuracy of the result:

    u64 cal_idle_sum_exec_runtime(int cpu)
    {
        struct rq *rq = cpu_rq(cpu);
        struct sched_entity *idle_se = &rq->idle->se;
        u64 idle = idle_se->sum_exec_runtime;

        if (!static_branch_likely(&proc_idle))
            return 0ULL;

        /* The idle task is on the CPU right now: add its pending time. */
        if (rq->curr == rq->idle) {
            u64 now = sched_clock();
            u64 delta_exec;

            delta_exec = now - idle_se->exec_start;
            if (unlikely((s64)delta_exec <= 0))
                return idle;

            schedstat_set(idle_se->statistics.exec_max,
                          max(delta_exec, idle_se->statistics.exec_max));

            idle += delta_exec;
        }

        return idle;
    }
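The interplay of the three hooks (enter idle, leave idle, read) can be checked with a small user-space model. This is an illustrative sketch only: `struct idle_model` and the `idle_*` functions are hypothetical stand-ins for the scheduler entity fields and the patched functions, and timestamps are passed in explicitly instead of coming from sched_clock().

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>
#include <stddef.h>

/* Stand-in for the idle task's sched_entity fields used by the patch. */
struct idle_model {
    uint64_t exec_start;       /* when the idle task was last switched in */
    uint64_t sum_exec_runtime; /* idle time of all completed idle periods */
    bool running;              /* is the idle task on the CPU right now? */
};

/* Corresponds to the hook added to pick_next_task_idle. */
void idle_enter(struct idle_model *m, uint64_t now)
{
    assert(m != NULL);
    m->exec_start = now;
    m->running = true;
}

/* Corresponds to the hook added to put_prev_task_idle. */
void idle_exit(struct idle_model *m, uint64_t now)
{
    assert(m != NULL);
    if (m->running && now > m->exec_start)
        m->sum_exec_runtime += now - m->exec_start;
    m->running = false;
}

/* Corresponds to cal_idle_sum_exec_runtime: completed plus in-flight idle time. */
uint64_t idle_read(const struct idle_model *m, uint64_t now)
{
    uint64_t idle;

    assert(m != NULL);
    idle = m->sum_exec_runtime;
    if (m->running && now > m->exec_start)
        idle += now - m->exec_start;
    return idle;
}
```

Without the in-flight term in `idle_read`, a CPU that has been idle for a long stretch would appear busy until its next context switch.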

An example of using the data

I also wrote a small example program that shows how to use the /proc/stat2 file we defined:

    // SPDX-License-Identifier: GPL-2.0-only
    /*
     * idle_cal.c
     *
     * Copyright (C) 2021
     *
     * cpu idle time accounting
     */

    #include <stdlib.h>
    #include <stdio.h>
    #include <fcntl.h>
    #include <string.h>
    #include <unistd.h>
    #include <time.h>
    #include <limits.h>
    #include <sys/time.h>

    #define BUFFSIZE 4096
    #define HZ 100
    #define FILE_NAME "/proc/stat2"

    struct cpu_info {
        char name[BUFFSIZE];
        long long value[1];
    };

    /* Read the aggregate line plus one line per CPU; returns 0 on success. */
    static int read_stat2(struct cpu_info *cpus, int lines)
    {
        FILE *fp = fopen(FILE_NAME, "r");
        int i;

        if (!fp)
            return -1;

        for (i = 0; i < lines; i++) {
            /* %4095s keeps the name within BUFFSIZE bytes. */
            if (fscanf(fp, "%4095s %lld\n", cpus[i].name,
                       &cpus[i].value[0]) != 2) {
                fclose(fp);
                return -1;
            }
        }
        fclose(fp);
        return 0;
    }

    int main(void)
    {
        int cpu_number = sysconf(_SC_NPROCESSORS_ONLN);
        /* One extra entry for the aggregate "cpu" line. */
        struct cpu_info *cpus = malloc(sizeof(*cpus) * (cpu_number + 1));
        struct cpu_info *cpus_2 = malloc(sizeof(*cpus_2) * (cpu_number + 1));
        long long sub;
        double value;

        if (!cpus || !cpus_2)
            return -1;

        while (1) {
            struct timeval start, end;

            if (read_stat2(cpus, cpu_number + 1) < 0) {
                printf("wrong");
                return -1;
            }
            gettimeofday(&start, NULL);

            sleep(1);

            if (read_stat2(cpus_2, cpu_number + 1) < 0) {
                printf("wrong");
                return -1;
            }
            gettimeofday(&end, NULL);

            /* Elapsed wall-clock time in microseconds. */
            sub = end.tv_sec - start.tv_sec;
            value = sub * 1000000.0 + end.tv_usec - start.tv_usec;

            system("reset");
            /* Aggregate line: idle time summed over all CPUs. */
            printf("CPU idle rate %f\n",
                   1000000 / HZ * (cpus_2[0].value[0] - cpus[0].value[0]) / value);

            /* Per CPU: 1 - idle fraction, i.e. the utilization of that CPU. */
            for (int i = 1; i < cpu_number + 1; i++) {
                printf("CPU%d usage rate %f\n", i - 1,
                       1 - 1000000 / HZ *
                       (cpus_2[i].value[0] - cpus[i].value[0]) / value);
            }
        }
        return 0;
    }

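Future work

- The scope of the accounting still needs to be extended. At present only idle and non-idle time can be told apart; the plan is to extend the scheme so that user time, system time, guest time, and the other categories can be distinguished as well.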

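Closing remarks

This project lasted three months. I learned a great deal about operating systems along the way and became familiar with how the open-source community works. Many thanks to my mentors Xie Xiuqi (谢秀奇), Cheng Jian (成坚), and Wang Shaobo (汪少博) for their guidance; without their help I could not have completed this project.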

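This article examined the two main methods of CPU time accounting in Linux, one based on the periodic timer interrupt and one based on context switches. To address the problems of the existing methods, it proposed a new solution that records the runtime of the idle task at context switches and thereby improves the accuracy of CPU utilization statistics.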
