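If your development work needs the existing Hive metadata but you do not want to program in Hive itself, you can integrate Hive with Spark and write Hive SQL in Spark.

There are two ways to do this:

1) Integrate Hive and run SQL in the spark-sql interactive console

2) Integrate Hive into IDEA and write SQL or code there (I prefer this one), then package the job and submit it to the cluster

If you are not writing code or SQL and only want to take a quick look at something, the command line is still more convenient.

1) Integrate Hive and run SQL in the spark-sql interactive console

1. Install MySQL, create a regular user, and grant it privileges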

CREATE USER 'hive'@'%' IDENTIFIED BY '123456';

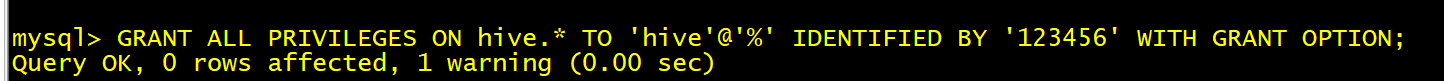

GRANT ALL PRIVILEGES ON hive.* TO 'hive'@'%' IDENTIFIED BY '123456' WITH GRANT OPTION;

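FLUSH PRIVILEGES;

The detailed steps are as follows. First log in to MySQL (on Linux):

mysql -uroot -p

Enter the password 123456.

If MySQL complains that the password is too simple and does not satisfy the password validation policy, first lower the policy level to LOW:

set global validate_password_policy=LOW;

Then run set global validate_password_length=6; to set the minimum password length to 6, because the password I use is 123456.

The original requirement was a minimum of 8 characters (see the screenshot above).

To check the configuration after the change: SHOW VARIABLES LIKE 'validate_password%';

Now the user can be created.

Then grant the privileges and flush them.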

2. Add a hive-site.xml

<?xml version="1.0" encoding="UTF-8" standalone="no"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed to the Apache Software Foundation (ASF) under one or more
  contributor license agreements. See the NOTICE file distributed with
  this work for additional information regarding copyright ownership.
  The ASF licenses this file to You under the Apache License, Version 2.0
  (the "License"); you may not use this file except in compliance with
  the License. You may obtain a copy of the License at

      http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License.
-->
<configuration>
  <property>
    <name>javax.jdo.option.ConnectionURL</name>
    <value>jdbc:mysql://linux01:3306/hive?createDatabaseIfNotExist=true</value>
    <description>JDBC connect string for a JDBC metastore</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionDriverName</name>
    <value>com.mysql.jdbc.Driver</value>
    <description>Driver class name for a JDBC metastore</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionUserName</name>
    <value>hive</value>
    <description>username to use against metastore database</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionPassword</name>
    <value>123456</value>
    <description>password to use against metastore database</description>
  </property>
  <property>
    <name>hive.metastore.schema.verification</name>
    <value>false</value>
  </property>
  <property>
    <name>datanucleus.schema.autoCreateAll</name>
    <value>true</value>
  </property>
  <property>
    <name>hive.metastore.warehouse.dir</name>
    <value>hdfs://linux01:8020/user/default</value>
    <description>location of default database for the warehouse</description>
  </property>
</configuration>

Put this file in the conf directory of the Spark installation.

3. Upload a MySQL JDBC driver jar

bin/spark-sql --master spark://linux01:7077 --driver-class-path /root/mysql-connector-java-5.1.47.jar

4. Restart the SparkSQL command line

---------------------------

spark-sql on yarn

---------------------------

bin/spark-sql \
--master yarn \
--deploy-mode client \
--driver-memory 1g \
--executor-memory 512m \
--num-executors 3 \
--executor-cores 1 \
--driver-class-path /root/mysql-connector-java-5.1.49.jar

The results of Hive queries run in the spark-sql console are not very readable or convenient to work with.

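We can use beeline instead. It connects over JDBC to the Spark Thrift Server, which must already be running on linux01 (it is started with sbin/start-thriftserver.sh, and 10000 is its default port):

bin/beeline -u jdbc:hive2://linux01:10000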

2) Integrate Hive into IDEA and write SQL or code there (I prefer this one), then package the job and submit it to the cluster

If you do not want to write SQL in the Spark command line, you can write it in IDEA instead. You need to add a configuration file and some dependencies first, and then you can happily write SQL in IDEA.

1. Add the configuration file: put hive-site.xml in the project's resources folder

The content is identical to the hive-site.xml used in approach 1 (step 2, "Add a hive-site.xml").

2. Add the Spark and Hive integration dependencies
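The post does not show the dependency list itself. As a rough sketch, assuming the project is built with sbt (a Maven project would declare the same artifacts in pom.xml) and assuming Spark 3.0.0 on Scala 2.12, the build definition might look like the following. All version numbers here are assumptions except the MySQL connector, which matches the jar used on the command line earlier; align them with your cluster.

```scala
// build.sbt (sketch; versions are assumptions, match them to your cluster)
ThisBuild / scalaVersion := "2.12.10"

// Assumption: the post only says "spark3.0", so 3.0.0 is a placeholder.
val sparkVersion = "3.0.0"

libraryDependencies ++= Seq(
  "org.apache.spark" %% "spark-sql"  % sparkVersion,
  // spark-hive provides the classes that enableHiveSupport() needs
  "org.apache.spark" %% "spark-hive" % sparkVersion,
  // JDBC driver for the MySQL-backed Hive metastore
  "mysql" % "mysql-connector-java" % "5.1.47"
)
```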

3. Enable Hive support when creating the SparkSession
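The code for this step is missing from the post, so here is a minimal sketch of what it could look like. The object name, the application name, and the columns of tb_user are hypothetical (the post never shows the table schema); enableHiveSupport() is the standard SparkSession builder call that makes Spark pick up hive-site.xml from the classpath.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical object name, for illustration only.
object HiveOnSparkDemo {
  def main(args: Array[String]): Unit = {
    // enableHiveSupport() makes Spark read hive-site.xml from the classpath
    // (the resources folder) and use the metastore it points to.
    val spark = SparkSession.builder()
      .appName("HiveOnSparkDemo")   // hypothetical application name
      .master("local[*]")           // for running inside IDEA; remove when submitting to the cluster
      .enableHiveSupport()
      .getOrCreate()

    // Assumed schema: the post does not show the columns of tb_user.
    spark.sql(
      """CREATE TABLE IF NOT EXISTS tb_user (id INT, name STRING)
        |ROW FORMAT DELIMITED FIELDS TERMINATED BY ','""".stripMargin)

    // List the tables registered in the metastore to confirm the connection works.
    spark.sql("SHOW TABLES").show()

    spark.stop()
  }
}
```

Without spark-hive on the classpath, enableHiveSupport() fails at startup, which is a quick way to notice a missing dependency.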

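Once the table tb_user has been created, a tb_user directory appears under /user/default in HDFS.

Next, load data into it.

Either upload a file from Linux into HDFS with hdfs dfs -put <file on Linux> /user/default/tb_user

or use

load data local inpath 'C:/Users/Maste_king/Desktop/user.txt' into table tb_user;

If you run this statement in the interactive console on Linux, the file must be local to that Linux machine. I write and run it in IDEA, so "local" means my Windows machine.

Honestly, for simple statements like this, having to click Run every time is tedious. I recommend running them in the interactive console instead.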
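For completeness, this is roughly how the load statement above could be issued from IDEA. It is a sketch that assumes tb_user already exists and that the file path from the post is valid on the machine running the driver; the object name is hypothetical.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical object name, for illustration only.
object LoadUserData {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("LoadUserData")
      .master("local[*]")           // the driver runs inside IDEA, i.e. on Windows here
      .enableHiveSupport()
      .getOrCreate()

    // LOCAL is resolved on the machine where the driver runs,
    // which is why a Windows path works when launched from IDEA.
    spark.sql(
      "LOAD DATA LOCAL INPATH 'C:/Users/Maste_king/Desktop/user.txt' INTO TABLE tb_user")

    // Read the table back to check that the rows arrived.
    spark.sql("SELECT * FROM tb_user").show()

    spark.stop()
  }
}
```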

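The main benefit of integrating Hive into IDEA is that in one place you can write Hive SQL, Spark SQL, Dataset DSL-style code, and even RDD code.

Pretty flexible, right? Haha.

This way you can choose according to the task: use SQL where SQL solves the problem, use the Dataset API where it does not, or, if you still want to stay in SQL, write a user-defined function and call it from SQL.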
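The styles mentioned above can sit side by side in one program. The sketch below is illustrative only: it assumes tb_user has the hypothetical columns id (INT) and name (STRING), and mask_name is a made-up UDF, not something from the post.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.col

// Hypothetical object name, for illustration only.
object MixedStyles {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("MixedStyles")
      .master("local[*]")
      .enableHiveSupport()
      .getOrCreate()

    // 1) Plain SQL against the Hive table (column names are assumptions).
    val bySql = spark.sql("SELECT id, name FROM tb_user WHERE id > 1")

    // 2) The same query in Dataset/DataFrame DSL style.
    val byDsl = spark.table("tb_user").where(col("id") > 1).select("id", "name")

    // 3) A user-defined function registered for use inside SQL.
    spark.udf.register("mask_name", (name: String) =>
      if (name == null || name.isEmpty) name else name.take(1) + "***")
    val byUdf = spark.sql("SELECT id, mask_name(name) AS masked FROM tb_user")

    // 4) Dropping down to the RDD API when needed.
    val nameLengths = spark.table("tb_user").rdd
      .map(row => row.getAs[String]("name"))
      .filter(_ != null)
      .map(_.length)

    bySql.show()
    byDsl.show()
    byUdf.show()
    println(nameLengths.collect().mkString(","))

    spark.stop()
  }
}
```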

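You also need to worry much less about manual optimization and operator ordering, because Spark 3.0 supports adaptive query execution (AQE) and dynamic partition pruning. Note that in Spark 3.0 AQE is off by default and is turned on with spark.sql.adaptive.enabled=true.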
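A small sketch of how these two settings could be set and inspected from code. The configuration keys are Spark's own; the object and application names are hypothetical.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical object name, for illustration only.
object AdaptiveSettings {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("AdaptiveSettings")
      .master("local[*]")
      // Turn adaptive query execution on explicitly.
      .config("spark.sql.adaptive.enabled", "true")
      .getOrCreate()

    // Print the effective values of the two optimizer switches.
    println(spark.conf.get("spark.sql.adaptive.enabled"))
    println(spark.conf.get("spark.sql.optimizer.dynamicPartitionPruning.enabled"))

    spark.stop()
  }
}
```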
