I have recently been running the code for the EMNLP 2020 paper "POINTER: Constrained Progressive Text Generation via Insertion-based Generative Pre-training":

https://github.com/dreasysnail/POINTER

The problems I ran into are recorded below (continuously updated):

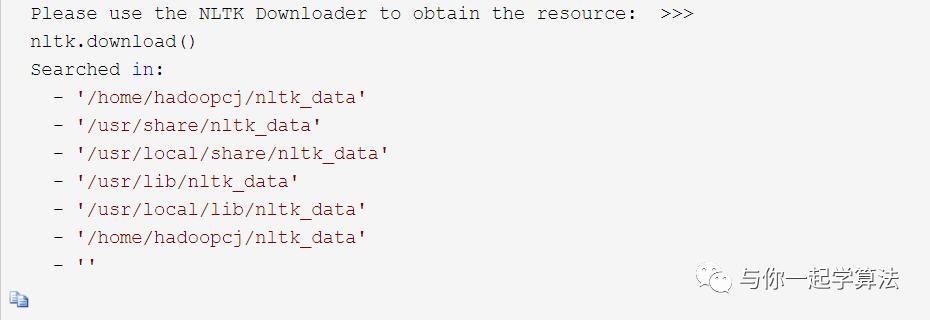

- nltk.download('stopwords') fails:

Download stopwords.zip from GitHub, unzip it, and place it in the NLTK data directory.

The GitHub address is https://github.com/nltk/nltk_data/tree/gh-pages/packages/corpora

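As for which directory to use, the end of the nltk.download('stopwords') output gives a hint like the one in the screenshot (I wrote this up afterwards, so the screenshot is borrowed from someone else).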

I ended up placing it at /opt/conda/envs/SpareNet/nltk_data/corpora/stopwords.zip. Note that any folders that do not exist yet must be created by hand.
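The manual installation above can be sketched with the standard library alone. The helper name `install_corpus_zip` is made up for illustration, and the target directory is whatever the NLTK hint lists on your machine; this is a minimal sketch, not part of NLTK or POINTER.

```python
import shutil
import zipfile
from pathlib import Path


def install_corpus_zip(zip_path, nltk_data_dir):
    """Copy a corpus zip into <nltk_data_dir>/corpora and unpack it there.

    Hypothetical helper: it mirrors the manual steps (create missing
    folders, drop in stopwords.zip, unzip) and nothing more.
    """
    corpora_dir = Path(nltk_data_dir) / "corpora"
    corpora_dir.mkdir(parents=True, exist_ok=True)  # create missing folders
    target_zip = corpora_dir / Path(zip_path).name
    if Path(zip_path).resolve() != target_zip.resolve():
        shutil.copy(zip_path, target_zip)
    with zipfile.ZipFile(target_zip) as archive:
        archive.extractall(corpora_dir)  # yields corpora/stopwords/...
    return corpora_dir / target_zip.stem


# Example call, using the directory from the note above:
# install_corpus_zip("stopwords.zip", "/opt/conda/envs/SpareNet/nltk_data")
```

If the chosen directory is not one NLTK already searches, appending it to the `nltk.data.path` list before loading the corpus should make it visible.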

- Switching from Traditional to Simplified Chinese characters on Ubuntu:

ctrl+shift+c+f

- BertTokenizer usage in detail:

- Adding your own vocabulary to BertTokenizer:

Add the following code at line 247 of training.py:

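    # requires `import json` at the top of training.py
    # iu_vocab.json holds the list of new tokens under the "vocab" key
    with open('iu_vocab.json', 'r') as f:
        tokens_list = json.load(f)['vocab']
    tokenizer = BertTokenizer.from_pretrained(args.bert_model, do_lower_case=args.do_lower_case)
    tokenizer.add_tokens(tokens_list)

Then, where the model is loaded, add the following code at line 282 of training.py:

    else:
        model = BertForMaskedLM.from_pretrained(args.bert_model)
        # args.len_tokens should equal len(tokenizer) after add_tokens (set elsewhere, not shown here)
        model.resize_token_embeddings(args.len_tokens)

- Problem encountered: the decoder's vocab_size is not resized: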

Add the following code at line 299 of modeling_utils.py:

    def _tie_or_clone_weights(self, first_module, second_module):
        """ Tie or clone module weights depending on whether we are using TorchScript or not
        """
        # Update the bias size if the model has an output bias (self.cls.predictions.bias)
        if hasattr(self, "cls"):
            self.cls.predictions.bias.data = torch.nn.functional.pad(
                self.cls.predictions.bias.data,
                (0, self.config.vocab_size - self.cls.predictions.bias.shape[0]),
                "constant",
                0,
            )
        if self.config.torchscript:
            first_module.weight = nn.Parameter(second_module.weight.clone())
        else:
            first_module.weight = second_module.weight

This fix follows an issue posted on GitHub: https://github.com/huggingface/transformers/issues/2480
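To see why the bias needs padding, here is a toy sketch in plain PyTorch, independent of transformers. The sizes and the helper name `pad_bias_to_vocab` are made up for illustration. It assumes the same situation as above: the embedding has already grown to the new vocabulary size, while the output layer that shares its weight still carries a bias of the old length.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def pad_bias_to_vocab(bias, vocab_size):
    """Right-pad a 1-D output bias with zeros up to vocab_size entries."""
    extra = vocab_size - bias.shape[0]
    if extra < 0:
        raise ValueError("vocab_size is smaller than the current bias length")
    return F.pad(bias, (0, extra), "constant", 0)


# Hypothetical sizes: 8 original tokens, 3 added tokens, hidden size 4.
old_vocab, new_vocab, hidden = 8, 11, 4

embeddings = nn.Embedding(new_vocab, hidden)  # already resized
decoder = nn.Linear(hidden, old_vocab)        # bias still has old_vocab entries

# Tying the weights gives the decoder a (new_vocab, hidden) weight matrix,
# so the bias must grow to new_vocab as well or the forward pass fails.
decoder.weight = embeddings.weight
decoder.bias = nn.Parameter(pad_bias_to_vocab(decoder.bias.data, new_vocab))

logits = decoder(torch.randn(2, hidden))      # shape: (2, new_vocab)
```

The added bias entries start at zero, so the new tokens begin with no offset in their logits until training updates them.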
