Book Classification with Naive Bayes

Naive Bayes is a generative method: it directly models the joint distribution P(X, Y) of the features X and the output Y, and then obtains the posterior from P(Y|X) = P(X, Y) / P(X).
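Written out in full, the posterior and the decision rule look roughly as follows. The per-feature symbols x_1, ..., x_n are notation introduced here for illustration, and the factorization relies on the usual naive Bayes assumption that the features are conditionally independent given the class:

```latex
P(Y \mid X) = \frac{P(X, Y)}{P(X)}
            = \frac{P(Y)\,\prod_{i=1}^{n} P(x_i \mid Y)}{P(X)},
\qquad
\hat{y} = \arg\max_{y}\,\Big[\log P(y) + \sum_{i=1}^{n} \log P(x_i \mid y)\Big]
```

P(X) is the same for every class, so it can be dropped when classes are compared. Working with logs turns the product into a sum, which avoids numerical underflow when many small probabilities are multiplied.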

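I. Dataset

Dataset link: https://wss1.cn/f/73l1yh2yjny

Data format:

X.Y

X is the book.

Y is the chapter within that book.

Goal: determine which book a piece of text comes from.

Split the data into training and test sets yourself.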
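As a minimal sketch of how the X.Y headers can be turned into labels: the helper names `parse_header` and `read_samples` are hypothetical, the demo lines are made up, and the code assumes that the file alternates one header line with one text line and that both X and Y are integers.

```python
from typing import List, Tuple


def parse_header(header: str) -> Tuple[int, int]:
    """Split an 'X.Y' header line into (book, chapter) integers."""
    book, chapter = header.strip().split(".")[:2]
    return int(book), int(chapter)


def read_samples(lines: List[str]) -> List[Tuple[int, str]]:
    """Pair each header line with the text line that follows it.

    Only the book number is kept, because the book is the class to predict.
    A dangling last line without a partner is ignored.
    """
    samples = []
    for i in range(0, len(lines) - 1, 2):
        book, _chapter = parse_header(lines[i])
        samples.append((book, lines[i + 1].strip()))
    return samples


# Made-up lines in the assumed header/text layout.
demo = ["0.1\n", "the way that can be told\n", "3.12\n", "all things arise and pass away\n"]
print(read_samples(demo))
```

The chapter number Y is parsed but not used: the task only asks for the book.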

II. Implementation approach

1. Data preprocessing

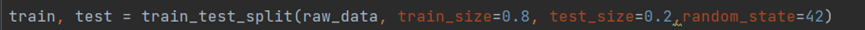

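(1) Use 80% of the original data as the training set and 20% as the test set.

(2) Remove all digits, punctuation, and redundant spaces from the training and test data, then store each paragraph as a dictionary record that holds its book number (the label) and its cleaned text.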
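The cleaning step can be sketched like this. The function name `clean_text` and the sample sentence are made up for illustration; the character class mirrors the punctuation handled by the script in this post, with all ten digits included.

```python
import re


def clean_text(text: str) -> str:
    """Replace digits and common punctuation with spaces, then collapse runs of spaces."""
    no_symbols = re.sub(r'[0-9!?,.:"();&\t]', " ", text.strip())
    return re.sub(r" +", " ", no_symbols).strip()


sentence = '1. The Way, that can be told (of)  is not the "eternal" Way!'
record = {"class": 0, "abstract": clean_text(sentence)}  # label 0 is made up
print(record["abstract"])
```

Replacing symbols with a space instead of deleting them keeps neighbouring words apart, for example "told(of)" becomes "told of" and not "toldof".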

2. Naive Bayes implementation

(1) Build the vocabulary

(2) Compute the prior probabilities

(3) Compute the conditional probabilities
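The three steps can be tried on a toy corpus first. Everything in this sketch is illustrative: the tokens and labels are made up, and the smoothing (counts start at 1, denominators start at 2) simply mirrors what the script in this post does.

```python
import numpy as np

docs = [["peace", "mind"], ["mind", "path"], ["lord", "king"]]  # made-up tokens
labels = np.array([0, 0, 1])                                     # made-up book ids

# (1) Vocabulary: every distinct word, kept in a fixed (sorted) order.
vocab = sorted({word for doc in docs for word in doc})

# Set-of-words vectors: 1 if the word occurs in the document, else 0.
X = np.array([[1 if w in doc else 0 for w in vocab] for doc in docs])

# (2) Prior P(y): fraction of training documents that belong to each class.
classes = np.unique(labels)
priors = np.array([(labels == c).mean() for c in classes])

# (3) Conditional log P(word | y): smoothed word counts of the class divided
#     by the smoothed total number of 1s in that class.
log_cond = np.array([
    np.log((X[labels == c].sum(axis=0) + 1.0) / (X[labels == c].sum() + 2.0))
    for c in classes
])

print(vocab)
print(priors)
print(np.exp(log_cond).round(3))
```

In class 0 the word "mind" appears in both documents, so its smoothed estimate is (2 + 1) / (4 + 2) = 0.5.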

III. Code

naive_bayes_text_classifier.py

import re

import numpy as np


def loadDataSet(filepath):
    """Read header/text line pairs and return (token lists, book labels)."""
    with open(filepath, "r") as fh:
        f = fh.readlines()
    raw_data = []
    print("Removing all symbols and digits...")
    for i in range(0, len(f), 2):
        temp = dict()
        # The header line has the form X.Y; X is the book number (the label).
        temp["class"] = int(f[i].strip().split(".")[0])
        # Replace all digits and punctuation with spaces.
        mid = re.sub("[0-9!?,.:\"();&\t]", " ", f[i + 1].strip())
        # Collapse repeated spaces.
        temp["abstract"] = re.sub(" +", " ", mid).strip()
        if temp["abstract"] != "":
            raw_data.append(temp)
    postingList = [i["abstract"].split() for i in raw_data]
    classVec = [i["class"] for i in raw_data]  # book label of each sample
    return postingList, classVec

# A set keeps each element only once, so it is used to build a vocabulary of all distinct words.
def createVocabList(dataSet):
    vocabSet = set()  # start with an empty set
    for document in dataSet:
        vocabSet = vocabSet | set(document)  # union of the two sets
    return list(vocabSet)

def setOfWords2Vec(vocabList, inputSet):
    """Return a 0/1 vector marking which vocabulary words occur in inputSet."""
    returnVec = [0] * len(vocabList)
    for word in inputSet:
        if word in vocabList:
            returnVec[vocabList.index(word)] = 1
        # Words that are not in the vocabulary are skipped silently.
    return returnVec

# Training: compute the conditional probabilities and the prior probabilities.
def trainNB0(trainMatrix, trainCategory):
    numClasses = 7  # book labels 0..6
    numTrainDocs = len(trainMatrix)  # total number of training samples
    # Length of a vectorized sample, which equals the vocabulary size.
    numWords = len(trainMatrix[0])
    # Prior probability of each class.
    priors = [np.sum(trainCategory == c) / float(numTrainDocs) for c in range(numClasses)]
    # Per-class accumulators for the vectorized samples. They start at 1
    # instead of 0 so that no conditional probability ends up being 0.
    pNum = [np.ones(numWords) for _ in range(numClasses)]
    # The denominators start at 2 so that the division is always defined.
    pDenom = [2.0] * numClasses
    # Go through all vectorized samples; each has the same length, the vocabulary size.
    for i in range(numTrainDocs):
        c = trainCategory[i]
        if 0 <= c < numClasses:
            # Add the sample's vector to the word counts of its class and
            # the number of 1s in the sample to the denominator of its class.
            pNum[c] += trainMatrix[i]
            pDenom[c] += sum(trainMatrix[i])
    # Conditional probabilities, stored as logs so that multiplying many
    # small values later does not underflow to 0.
    pVect = [np.log(pNum[c] / pDenom[c]) for c in range(numClasses)]
    # Return the seven conditional log-probability vectors followed by the seven priors.
    return (*pVect, *priors)

def classifyNB(vec2Classify, p0Vec, p1Vec, p2Vec, p3Vec, p4Vec, p5Vec, p6Vec, p0, p1, p2, p3, p4, p5, p6):
    # For each class, sum the conditional log-probabilities of the words that
    # occur in the sample and add the log prior; the highest score wins.
    condVecs = [p0Vec, p1Vec, p2Vec, p3Vec, p4Vec, p5Vec, p6Vec]
    priors = [p0, p1, p2, p3, p4, p5, p6]
    res = [sum(vec2Classify * vec) + np.log(prior)  # element-wise multiplication
           for vec, prior in zip(condVecs, priors)]
    return res.index(max(res))

if __name__ == '__main__':

# 生成训练样本 和 标签

print("获取训练数据...")

listOPosts, listClasses = loadDataSet("bys_data_train.txt")

print("训练数据集大小:",len(listOPosts))

# 创建词典

print("建立词典...")

myVocabList = createVocabList(listOPosts)

# 用于保存样本转向量之后的

trainMat=[]

# 遍历每一个样本, 转向量后, 保存到列表中。

for postinDoc in listOPosts:

trainMat.append(setOfWords2Vec(myVocabList, postinDoc))

# 计算 条件概率 和 先验概率

print("训练...")

p0V,p1V,p2V,p3V,p4V,p5V,p6V,p0,p1,p2,p3,p4,p5,p6 = trainNB0(np.array(trainMat), np.array(listClasses))

# 给定测试样本 进行测试

print("获取测试数据...")

listOPosts, listClasses = loadDataSet("bys_data_test.txt")

print("测试数据集大小:", len(listOPosts))

f=open("output.txt","w")

total=0

true=0

for i,j in zip(listOPosts,listClasses):

testEntry = i

thisDoc = np.array(setOfWords2Vec(myVocabList, testEntry))

result=classifyNB(thisDoc, p0V,p1V,p2V,p3V,p4V,p5V,p6V,p0,p1,p2,p3,p4,p5,p6)

print(" ".join(testEntry))

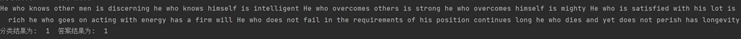

print('分类结果为: ', result,' 答案结果为: ',j)

f.write(" ".join(testEntry)+"\n")

f.write('分类结果为: '+ str(result)+' 答案结果为: '+str(j)+ "\n")

total+=1

if result==j:

true+=1

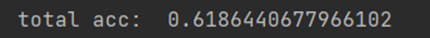

print("total acc: ",true/total)

f.write("total acc: "+str(true/total))

f.close()
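The script above is written for exactly seven classes (labels 0 to 6). A possible generalization to any set of labels is sketched below. The names `train_nb` and `classify_nb` are hypothetical and not part of the original script; the toy matrix is made up, and the smoothing is kept the same (counts start at 1, denominators at 2).

```python
import numpy as np


def train_nb(train_matrix, train_labels):
    """Return (classes, log_priors, log_cond) for whatever labels occur in the data."""
    train_matrix = np.asarray(train_matrix, dtype=float)
    train_labels = np.asarray(train_labels)
    classes = np.unique(train_labels)
    # Log prior of each class: log of its share of the training documents.
    log_priors = np.log(np.array([(train_labels == c).mean() for c in classes]))
    # One row of smoothed conditional log-probabilities per class.
    log_cond = np.vstack([
        np.log((train_matrix[train_labels == c].sum(axis=0) + 1.0)
               / (train_matrix[train_labels == c].sum() + 2.0))
        for c in classes
    ])
    return classes, log_priors, log_cond


def classify_nb(vec, classes, log_priors, log_cond):
    """Return the label whose log prior plus summed log-likelihood is largest."""
    scores = log_cond @ np.asarray(vec, dtype=float) + log_priors
    return classes[int(np.argmax(scores))]


# Made-up set-of-words vectors for two classes labelled 0 and 2.
X = [[1, 1, 0, 0], [1, 0, 1, 0], [0, 0, 1, 1], [0, 0, 0, 1]]
y = [0, 0, 2, 2]
model = train_nb(X, y)
print(classify_nb([1, 1, 0, 0], *model), classify_nb([0, 0, 0, 1], *model))
```

Because the classes are read from the labels themselves, a label that never occurs in the training data is simply absent from the model, so no log(0) prior is ever computed for it.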

preprocess.py

from sklearn.model_selection import train_test_split

with open("AsianReligionsData .txt", "r", encoding='gb18030', errors="ignore") as fh:
    f = fh.readlines()
# Each sample is a header line followed by its text line.
raw_data = []
for i in range(0, len(f), 2):
    raw_data.append(f[i] + f[i + 1])
# 80% training, 20% test; random_state makes the split reproducible.
train, test = train_test_split(raw_data, train_size=0.8, test_size=0.2, random_state=42)
print(len(train))
print(len(test))
with open("bys_data_train.txt", "w") as f:
    for i in train:
        f.write(i)
with open("bys_data_test.txt", "w") as f:
    for i in test:
        f.write(i)
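If scikit-learn is not available, the same kind of 80/20 split can be approximated with the standard library. This is only a sketch: `pair_lines` and `split_records` are hypothetical helpers, the records are made up, and the shuffle will not reproduce the exact split that `train_test_split` produces with `random_state=42`.

```python
import random


def pair_lines(lines):
    """Join each header line with the text line after it; ignore a dangling last line."""
    return [lines[i] + lines[i + 1] for i in range(0, len(lines) - 1, 2)]


def split_records(records, train_fraction=0.8, seed=42):
    """Shuffle a copy of the records with a fixed seed and cut it into train/test."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Ten made-up header/text pairs.
lines = []
for i in range(10):
    lines += [f"{i}.1\n", f"text {i}\n"]
records = pair_lines(lines)
train, test = split_records(records)
print(len(train), len(test))
```

Stopping the pairing loop at `len(lines) - 1` means a file with an odd number of lines does not raise an IndexError.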

Experimental results

(1) Comparison of predicted and true labels

(2) Accuracy
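Overall accuracy hides which books get confused with each other, so a per-class breakdown can help. The sketch below uses made-up predictions, not the results of this experiment, and the helper names `accuracy` and `confusion_matrix` are hypothetical.

```python
import numpy as np


def accuracy(predicted, actual):
    """Fraction of samples whose predicted label equals the true label."""
    return float((np.asarray(predicted) == np.asarray(actual)).mean())


def confusion_matrix(predicted, actual, num_classes):
    """Rows are true labels, columns are predicted labels."""
    matrix = np.zeros((num_classes, num_classes), dtype=int)
    for p, a in zip(predicted, actual):
        matrix[a, p] += 1
    return matrix


predicted = [0, 1, 1, 2]  # made-up classifier output
actual = [0, 1, 2, 2]     # made-up true labels
print(accuracy(predicted, actual))
print(confusion_matrix(predicted, actual, num_classes=3))
```

Off-diagonal entries show the confusions: here one sample of book 2 was classified as book 1.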

