1. Prerequisites

To follow every step in this video, you need the following:

CentOS 7, JDK 1.8, Scala 2.11.12, Spark 2.4.5

2. Environment Setup

2.1 Download and Extract

Download the Spark package; here I use spark-2.4.5-bin-hadoop2.7.tgz. Download page: http://spark.apache.org/downloads.html

# Extract

[xiaokang@hadoop ~]$ tar -zxvf spark-2.4.5-bin-hadoop2.7.tgz -C /opt/software/

# Rename (optional)

[xiaokang@hadoop ~]$ mv /opt/software/spark-2.4.5-bin-hadoop2.7/ /opt/software/spark-2.4.5
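If you are unsure which directory the archive will create (and therefore what to rename), tar can list the contents without extracting anything. The following is a minimal sketch; top_level_dirs is a hypothetical helper name, not part of Spark.

```shell
# Print the distinct top-level directory names inside a gzip-compressed tar archive.
# Hypothetical helper for illustration; it only reads the archive listing.
top_level_dirs() {
  tar -tzf "$1" | cut -d/ -f1 | sort -u
}
```

For example, top_level_dirs spark-2.4.5-bin-hadoop2.7.tgz prints the name of the directory that the extract step creates under /opt/software/.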

2.2 Configure Environment Variables

[xiaokang@hadoop ~]$ sudo vim /etc/profile.d/env.sh

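Update the existing environment variable configuration by adding SPARK_HOME and appending its bin directory to PATH: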

export SPARK_HOME=/opt/software/spark-2.4.5

export PATH=${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:${ZOOKEEPER_HOME}/bin:${HIVE_HOME}/bin:${ZEPPELIN_HOME}/bin:${HBASE_HOME}/bin:${SQOOP_HOME}/bin:${FLUME_HOME}/bin:${PYTHON_HOME}/bin:${SCALA_HOME}/bin:${MAVEN_HOME}/bin:${GRADLE_HOME}/bin:${KAFKA_HOME}/bin:${SPARK_HOME}/bin:$PATH

Run the source command so that the new environment variables take effect immediately:

[xiaokang@hadoop ~]$ source /etc/profile.d/env.sh
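After sourcing the file, it can be worth confirming that the Spark bin directory really ended up on PATH. A small sketch, assuming a POSIX shell; path_contains is a hypothetical helper introduced only for this check.

```shell
# Return 0 if directory $2 is an entry of the colon-separated list $1, else 1.
# Hypothetical helper; wrapping both sides in ':' makes the match exact per entry.
path_contains() {
  case ":$1:" in
    *":$2:"*) return 0 ;;
    *)        return 1 ;;
  esac
}
```

Usage: path_contains "$PATH" "${SPARK_HOME}/bin" && echo "Spark is on PATH".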

2.3 Edit the Configuration

Go to the ${SPARK_HOME}/conf/ directory and make a copy of spark-env.sh.template to edit:

[xiaokang@hadoop conf]$ cp spark-env.sh.template spark-env.sh

Then set the following in spark-env.sh:

export JAVA_HOME=/opt/moudle/jdk1.8.0_191

export SCALA_HOME=/opt/moudle/scala-2.11.12

# Options read when launching programs locally with

# ./bin/run-example or ./bin/spark-submit

# - HADOOP_CONF_DIR, to point Spark towards Hadoop configuration files

SPARK_LOCAL_IP=hadoop

# - SPARK_PUBLIC_DNS, to set the public dns name of the driver program
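Because the template is mostly comments, it is easy to leave a setting commented out by accident. The sketch below prints the value of an active (uncommented) assignment in a shell-style file; env_value is a hypothetical helper, and it assumes simple KEY=value lines with an optional leading export.

```shell
# Print the value assigned to key $2 in file $1 (the last assignment wins).
# Hypothetical helper; commented-out lines do not match because of the ^ anchor.
env_value() {
  sed -n "s/^\(export \)\{0,1\}$2=//p" "$1" | tail -n 1
}
```

Usage: env_value "${SPARK_HOME}/conf/spark-env.sh" SPARK_LOCAL_IP should print hadoop with the settings shown above. An empty result means the key is unset or still commented out.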

2.4 启动测试

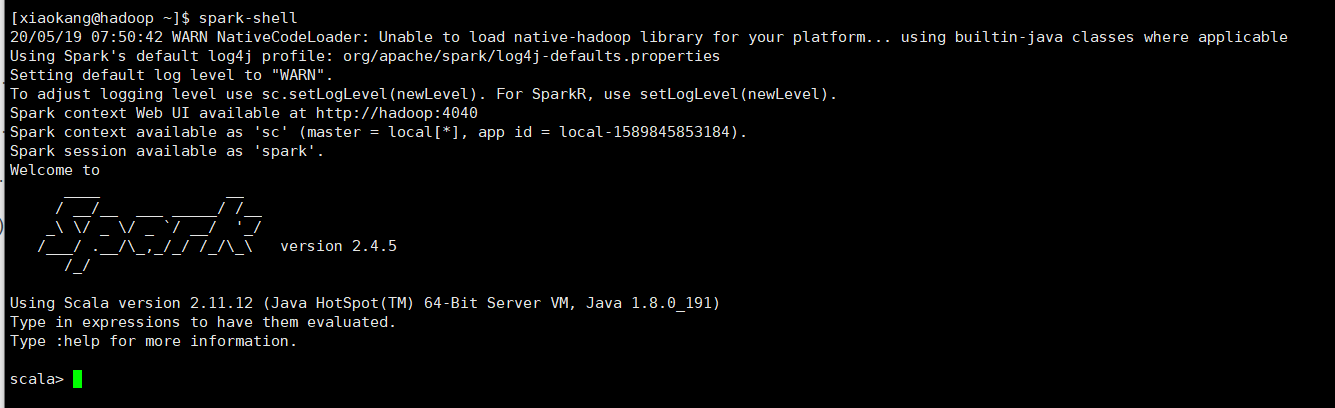

[xiaokang@hadoop ~]$ spark-shell

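2.5 WordCount Example

Prepare a small file whose word frequencies you want to count. The words on each line are separated by tabs, matching the split("\t") call in the Spark command. Part of the data: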

xiaokang Hive Hadoop Flink 微信公众号:小康新鲜事儿 MapReduce Spark

HBase Spark 微信公众号:小康新鲜事儿

HBase Spark 微信公众号:小康新鲜事儿 Hadoop MapReduce Hive

xiaokang MapReduce HBase Flink Spark Hadoop

Flink Hadoop Spark xiaokang

xiaokang MapReduce Hive HBase Spark

微信公众号:小康新鲜事儿 HBase MapReduce Hive Spark xiaokang

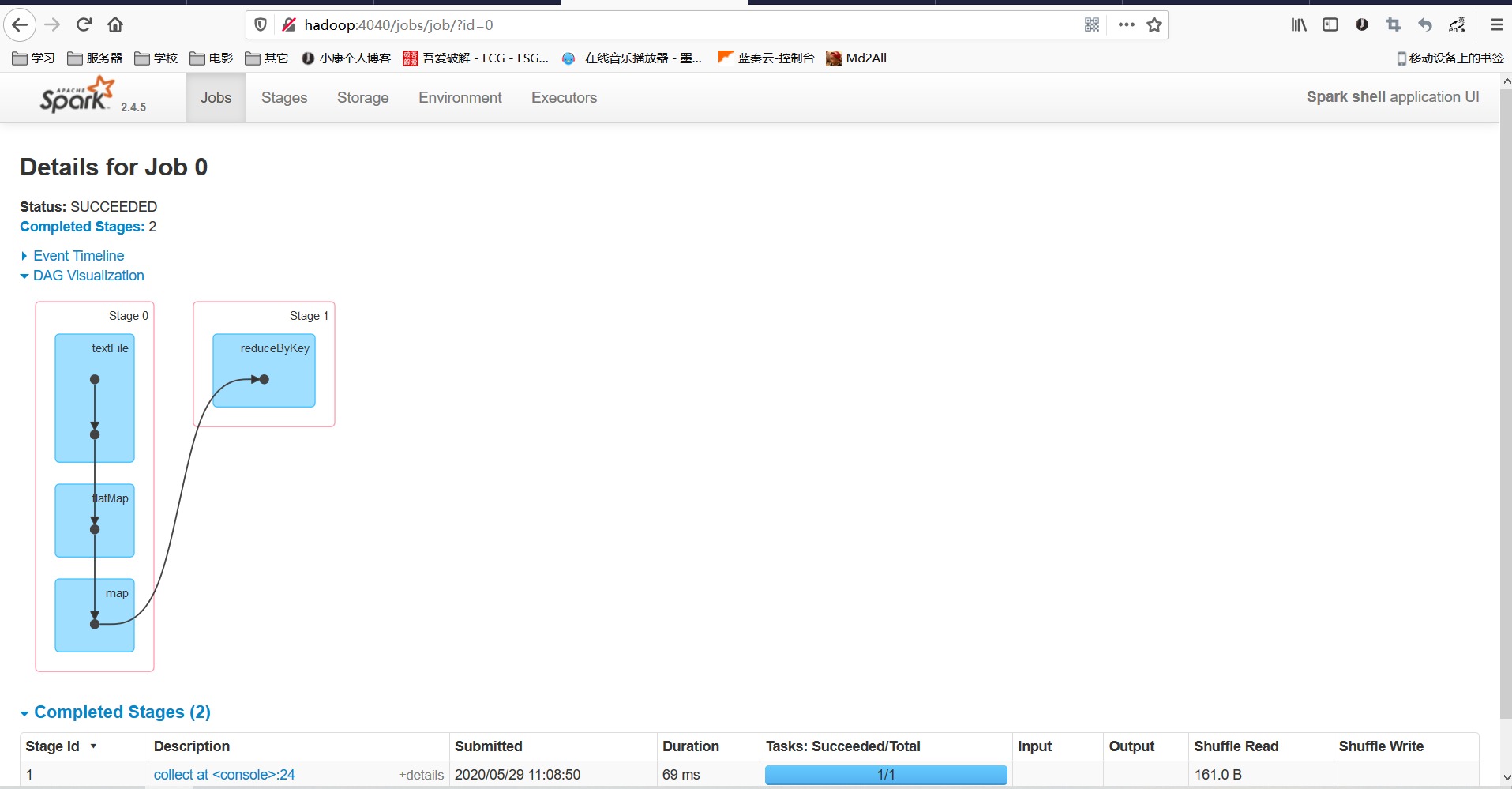
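If you want to build a small input file of your own, note that the separators must be real tab characters, which are hard to tell apart from spaces on screen. A minimal sketch using printf follows; make_sample is a hypothetical helper and its three lines are illustrative data, not the author's full file.

```shell
# Write a tiny tab-separated sample file to the path given as $1.
# Hypothetical helper; printf expands \t into a literal tab character.
make_sample() {
  printf 'xiaokang\tHive\tHadoop\tFlink\tMapReduce\tSpark\n' >  "$1"
  printf 'HBase\tSpark\n'                                    >> "$1"
  printf 'Flink\tHadoop\tSpark\txiaokang\n'                  >> "$1"
}
```

Usage: make_sample /home/xiaokang/wordcount-xiaokang.txt creates a file at the path used by the Spark command in this section (this overwrites any existing file at that path).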

Running WordCount in Spark:

scala> val result=sc.textFile("file:///home/xiaokang/wordcount-xiaokang.txt").flatMap(_.split("\t")).map((_,1)).reduceByKey(_ + _).collect

result: Array[(String, Int)] = Array((Flink,617), (Spark,614), (MapReduce,631), (Hive,636), (xiaokang,647), (HBase,642), (微信公众号:小康新鲜事儿,647), (Hadoop,644))
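As a sanity check that does not depend on Spark, roughly the same tab-split count can be reproduced with awk. This is a sketch under stated assumptions: wordcount is a hypothetical helper, and it skips empty fields, so its output can differ slightly from split("\t") if the file contains consecutive tabs.

```shell
# Count tab-separated words in file $1 and print "word<TAB>count" lines, sorted.
# Hypothetical helper; empty fields are ignored.
wordcount() {
  awk -F'\t' '
    { for (i = 1; i <= NF; i++) if ($i != "") count[$i]++ }
    END { for (w in count) printf "%s\t%d\n", w, count[w] }
  ' "$1" | sort
}
```

Usage: wordcount /home/xiaokang/wordcount-xiaokang.txt, then compare the per-word totals with the Array that spark-shell returned.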

While spark-shell is running, the Spark Web UI can be viewed on port 4040.
