1. What it is

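A plugin (extension) is an add-on that you download and integrate into SD. During image generation it is invoked through the API, with its parameters configured on the page, so that the output image gets a second rendering pass.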

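SD launcher, latest 2024 version download

Link: https://pan.quark.cn/s/eea6375642fd

The most complete set of ESRGAN upscaling models, usable in both WebUI and ComfyUI

Link: https://pan.quark.cn/s/db047c924f9c

Shared via Quark Netdisk: 2024 Stable Diffusion plugin collection

Link: https://pan.quark.cn/s/b738a99f6f72

Shared via Quark Netdisk: ComfyUI workflows, April update

Link: https://pan.quark.cn/s/043adee22d23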

2. How to use it

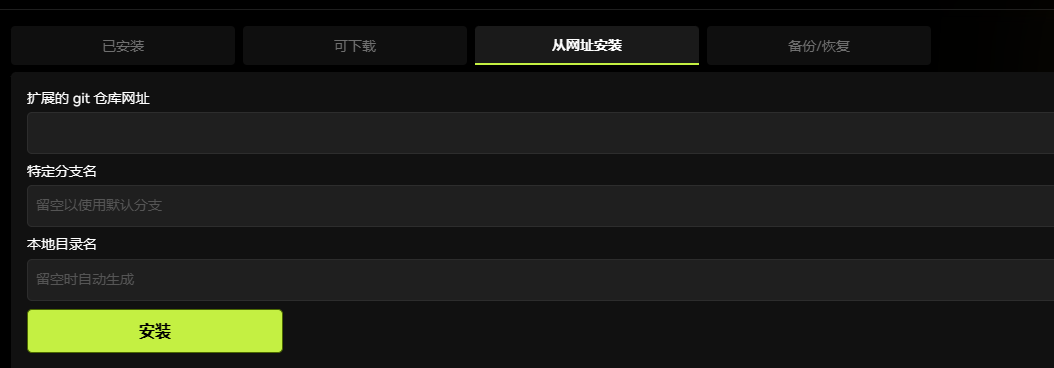

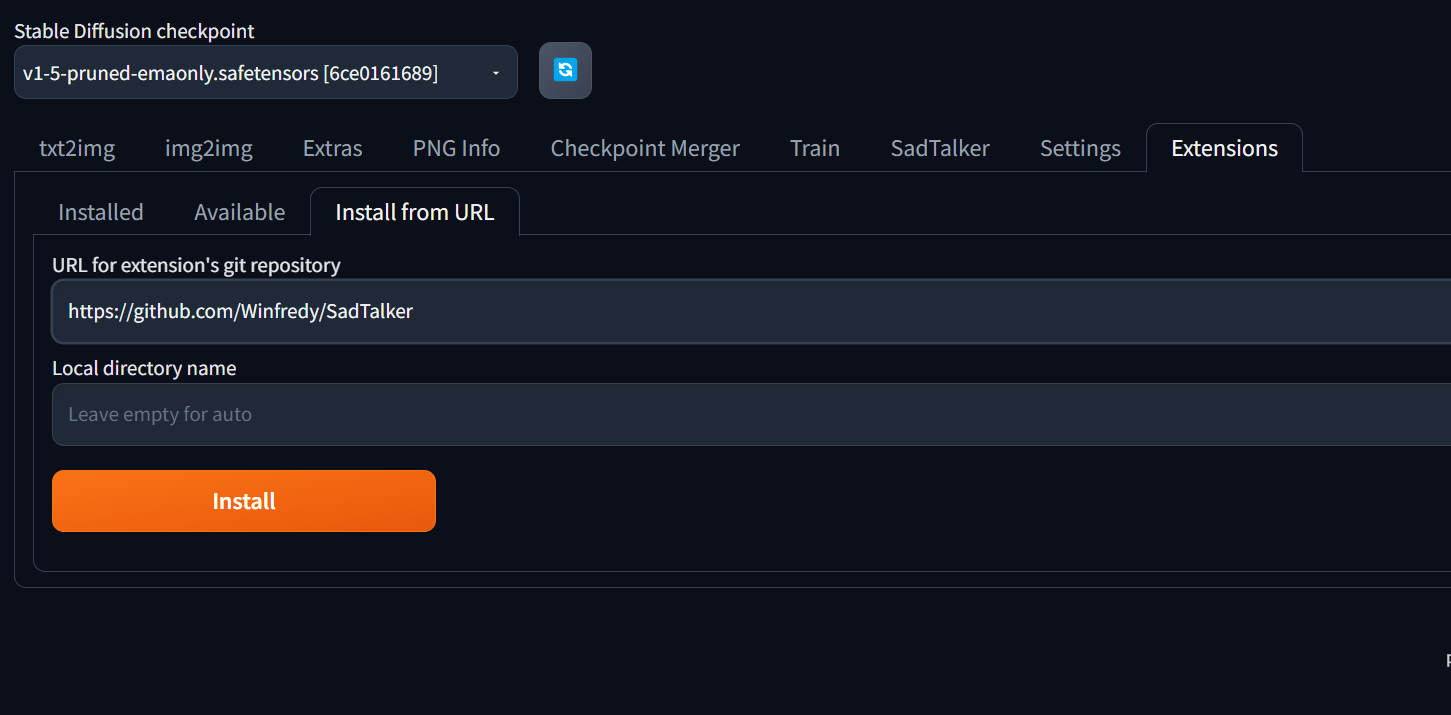

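Copy the plugin URL into "Install from URL"

Click Install

Restart after installing for the plugin to take effect

Upgrade the version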

3. Where to download

https://gitcode.net/rubble7343/sd-webui-extensions/raw/master/index.json
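An index file like the one above can be read with a few lines of Python. The sketch below assumes the index is a JSON object with an `extensions` list whose entries carry `name`, `url`, and `tags` fields; that layout is an assumption, and the sample data is hypothetical, so check the real file before relying on it.

```python
import json

# Hypothetical sample shaped like an extension index; the real file may differ.
SAMPLE_INDEX = """
{
  "extensions": [
    {"name": "example-extension-a", "url": "https://example.com/a.git", "tags": ["script"]},
    {"name": "example-extension-b", "url": "https://example.com/b.git", "tags": ["tab", "script"]}
  ]
}
"""


def list_extensions(index_text, tag=None):
    """Return (name, url) pairs from the index, optionally filtered by tag."""
    data = json.loads(index_text)
    result = []
    for entry in data.get("extensions", []):
        if tag is not None and tag not in entry.get("tags", []):
            continue
        result.append((entry.get("name", ""), entry.get("url", "")))
    return result


if __name__ == "__main__":
    for name, url in list_extensions(SAMPLE_INDEX, tag="tab"):
        print(name, url)
```

To use it on the real index, download the file first and pass its text to `list_extensions`.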

N ways to download plugins

1. Download the zip package directly

2. git clone

3. Install from URL

4. Install from the plugin list
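The git clone route can be scripted. The following is a minimal sketch, assuming plugins live in an `extensions` folder under the WebUI root (as the paths in the console log in section 4 suggest) and that `git` is on the PATH. The helper names are made up for illustration.

```python
import subprocess
from pathlib import Path


def extension_dir_name(repo_url):
    """Derive the folder name from a repository URL (drops a trailing '.git')."""
    name = repo_url.rstrip("/").rsplit("/", 1)[-1]
    return name[:-4] if name.endswith(".git") else name


def build_clone_command(repo_url, webui_root):
    """Build the git command that clones a plugin into <webui_root>/extensions."""
    target = Path(webui_root) / "extensions" / extension_dir_name(repo_url)
    return ["git", "clone", repo_url, str(target)]


def install_extension(repo_url, webui_root):
    """Run the clone; raises CalledProcessError if git exits with an error."""
    subprocess.run(build_clone_command(repo_url, webui_root), check=True)
```

Calling `install_extension(url, webui_root)` runs the clone. As with installing from the page, restart the WebUI afterwards so the plugin is loaded.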

Back up the plugin list

https://github.com/Gerschel/sd_web_ui_preset_utils.git
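Independently of the extension linked above, a plugin list can also be backed up with a small script. The sketch below assumes each plugin is a subfolder of the `extensions` directory and, when it was installed with git, that its `.git/config` holds an `origin` remote. The function names and output format are illustrative only.

```python
import json
from pathlib import Path


def read_origin_url(ext_dir):
    """Return the 'origin' remote URL from .git/config, or None if absent."""
    config = Path(ext_dir) / ".git" / "config"
    if not config.is_file():
        return None
    in_origin = False
    for raw in config.read_text(encoding="utf-8", errors="replace").splitlines():
        line = raw.strip()
        if line.startswith("["):
            # Track whether we are inside the [remote "origin"] section.
            in_origin = line == '[remote "origin"]'
        elif in_origin and line.startswith("url") and "=" in line:
            return line.split("=", 1)[1].strip()
    return None


def backup_extension_list(extensions_dir, output_file):
    """Write the name and origin URL of every plugin folder to a JSON file."""
    entries = []
    for child in sorted(Path(extensions_dir).iterdir()):
        if child.is_dir():
            entries.append({"name": child.name, "url": read_origin_url(child)})
    Path(output_file).write_text(json.dumps(entries, indent=2), encoding="utf-8")
    return entries
```

Plugins installed from a zip file have no git metadata, so their `url` is recorded as `null` and has to be noted by hand.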

4. What to do about errors

RuntimeError: Expected all tensors to be on the same device, but found at least two devices, cpu and cuda:0! (when checking argument for argument weight in method wrapper_CUDA___slow_conv2d_forward)
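In a long console log, the line that names the exception is the one to read first. The sketch below pulls those lines out of a pasted log. It assumes the exception line starts with a class name ending in `Error` or `Exception` followed by a colon, which is the usual Python traceback format. The function name is made up for illustration.

```python
import re

# Matches lines such as "RuntimeError: message" or "AssertionError: message".
EXC_LINE = re.compile(r"^([A-Za-z_][\w.]*(?:Error|Exception)): (.*)$")


def find_exceptions(log_text):
    """Return (exception_type, message) pairs in the order they appear."""
    found = []
    for line in log_text.splitlines():
        match = EXC_LINE.match(line.strip())
        if match:
            found.append((match.group(1), match.group(2)))
    return found


if __name__ == "__main__":
    sample = (
        "Traceback (most recent call last):\n"
        "AssertionError: No input images found for ControlNet module\n"
    )
    for exc_type, message in find_exceptions(sample):
        print(exc_type, "->", message)
```

Searching for the extracted message together with the plugin name from the last `File ...` line of the traceback is usually a quicker way to find a matching issue report than searching for the whole log.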

Console logs


Startup time: 46.8s (prepare environment: 25.9s, import torch: 4.7s, import gradio: 1.0s, setup paths: 0.5s, initialize shared: 0.2s, other imports: 0.5s, setup codeformer: 0.3s, load scripts: 8.4s, create ui: 4.1s, gradio launch: 0.6s, app_started_callback: 0.5s).

Loading VAE weights specified in settings: E:\sd-webui-aki\sd-webui-aki-v4\models\VAE\vae-ft-mse-840000-ema-pruned.safetensors

Applying attention optimization: xformers... done.

Model loaded in 6.5s (load weights from disk: 0.6s, create model: 0.9s, apply weights to model: 4.3s, load VAE: 0.4s, calculate empty prompt: 0.1s).

refresh_ui

Restoring base VAE

Applying attention optimization: xformers... done.

VAE weights loaded.

2023-11-25 18:37:19,315 - ControlNet - INFO - Loading model: control_v11f1p_sd15_depth [cfd03158]

2023-11-25 18:37:19,995 - ControlNet - INFO - Loaded state_dict from [E:\sd-webui-aki\sd-webui-aki-v4\models\ControlNet\control_v11f1p_sd15_depth.pth]

2023-11-25 18:37:19,996 - ControlNet - INFO - controlnet_default_config

2023-11-25 18:37:22,842 - ControlNet - INFO - ControlNet model control_v11f1p_sd15_depth [cfd03158] loaded.

2023-11-25 18:37:23,008 - ControlNet - INFO - Loading preprocessor: depth

2023-11-25 18:37:23,010 - ControlNet - INFO - preprocessor resolution = 896

2023-11-25 18:37:27,343 - ControlNet - INFO - ControlNet Hooked - Time = 8.458001852035522

0: 640x384 1 face, 78.0ms

Speed: 4.0ms preprocess, 78.0ms inference, 29.0ms postprocess per image at shape (1, 3, 640, 384)

2023-11-25 18:37:50,189 - ControlNet - INFO - Loading model from cache: control_v11f1p_sd15_depth [cfd03158]

2023-11-25 18:37:50,192 - ControlNet - INFO - Loading preprocessor: depth

2023-11-25 18:37:50,192 - ControlNet - INFO - preprocessor resolution = 896

2023-11-25 18:37:50,279 - ControlNet - INFO - ControlNet Hooked - Time = 0.22900152206420898

2023-11-25 18:38:30,791 - AnimateDiff - INFO - AnimateDiff process start.

2023-11-25 18:38:30,791 - AnimateDiff - INFO - Loading motion module mm_sd_v15_v2.ckpt from E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-animatediff\model\mm_sd_v15_v2.ckpt

2023-11-25 18:38:31,574 - AnimateDiff - INFO - Guessed mm_sd_v15_v2.ckpt architecture: MotionModuleType.AnimateDiffV2

2023-11-25 18:38:33,296 - AnimateDiff - WARNING - Missing keys <All keys matched successfully>

2023-11-25 18:38:34,243 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet middle block.

2023-11-25 18:38:34,245 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet input blocks.

2023-11-25 18:38:34,245 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet output blocks.

2023-11-25 18:38:34,246 - AnimateDiff - INFO - Setting DDIM alpha.

2023-11-25 18:38:34,254 - AnimateDiff - INFO - Injection finished.

2023-11-25 18:38:34,254 - AnimateDiff - INFO - Hacking loral to support motion lora

2023-11-25 18:38:34,254 - AnimateDiff - INFO - Hacking CFGDenoiser forward function.

2023-11-25 18:38:34,254 - AnimateDiff - INFO - Hacking ControlNet.

*** Error completing request

*** Arguments: ('task(8jna2axn6nwg2d4)', '1 sex girl, big breasts, solo, high heels, skirt, thigh strap, squatting, black footwear, long hair, closed eyes, multicolored hair, red hair, black shirt, sleeveless, black skirt, full body, shirt, lips, brown hair, black hair, sleeveless shirt, bare shoulders, crop top, midriff, grey background, simple background, ', 'bad hands, normal quality, ((monochrome)), ((grayscale)), ((strabismus)), ng_deepnegative_v1_75t, (bad-hands-5:1.3), (worst quality:2), (low quality:2), (normal quality:2), lowres, bad anatomy, bad_prompt, badhandv4, EasyNegative, ', [], 20, 'Euler a', 1, 1, 7, 1600, 896, False, 0.7, 2, 'Latent', 0, 0, 0, 'Use same checkpoint', 'Use same sampler', '', '', [], <gradio.routes.Request object at 0x0000024A8CCB4670>, 0, False, '', 0.8, -1, False, -1, 0, 0, 0, True, False, {'ad_model': 'face_yolov8n.pt', 'ad_prompt': '', 'ad_negative_prompt': '', 'ad_confidence': 0.3, 'ad_mask_k_largest': 0, 'ad_mask_min_ratio': 0, 'ad_mask_max_ratio': 1, 'ad_x_offset': 0, 'ad_y_offset': 0, 'ad_dilate_erode': 4, 'ad_mask_merge_invert': 'None', 'ad_mask_blur': 4, 'ad_denoising_strength': 0.4, 'ad_inpaint_only_masked': True, 'ad_inpaint_only_masked_padding': 32, 'ad_use_inpaint_width_height': False, 'ad_inpaint_width': 512, 'ad_inpaint_height': 512, 'ad_use_steps': False, 'ad_steps': 28, 'ad_use_cfg_scale': False, 'ad_cfg_scale': 7, 'ad_use_checkpoint': False, 'ad_checkpoint': 'Use same checkpoint', 'ad_use_vae': False, 'ad_vae': 'Use same VAE', 'ad_use_sampler': False, 'ad_sampler': 'Euler a', 'ad_use_noise_multiplier': False, 'ad_noise_multiplier': 1, 'ad_use_clip_skip': False, 'ad_clip_skip': 1, 'ad_restore_face': False, 'ad_controlnet_model': 'None', 'ad_controlnet_module': 'None', 'ad_controlnet_weight': 1, 'ad_controlnet_guidance_start': 0, 'ad_controlnet_guidance_end': 1, 'is_api': ()}, {'ad_model': 'None', 'ad_prompt': '', 'ad_negative_prompt': '', 'ad_confidence': 0.3, 'ad_mask_k_largest': 0, 'ad_mask_min_ratio': 0, 
'ad_mask_max_ratio': 1, 'ad_x_offset': 0, 'ad_y_offset': 0, 'ad_dilate_erode': 4, 'ad_mask_merge_invert': 'None', 'ad_mask_blur': 4, 'ad_denoising_strength': 0.4, 'ad_inpaint_only_masked': True, 'ad_inpaint_only_masked_padding': 32, 'ad_use_inpaint_width_height': False, 'ad_inpaint_width': 512, 'ad_inpaint_height': 512, 'ad_use_steps': False, 'ad_steps': 28, 'ad_use_cfg_scale': False, 'ad_cfg_scale': 7, 'ad_use_checkpoint': False, 'ad_checkpoint': 'Use same checkpoint', 'ad_use_vae': False, 'ad_vae': 'Use same VAE', 'ad_use_sampler': False, 'ad_sampler': 'Euler a', 'ad_use_noise_multiplier': False, 'ad_noise_multiplier': 1, 'ad_use_clip_skip': False, 'ad_clip_skip': 1, 'ad_restore_face': False, 'ad_controlnet_model': 'None', 'ad_controlnet_module': 'None', 'ad_controlnet_weight': 1, 'ad_controlnet_guidance_start': 0, 'ad_controlnet_guidance_end': 1, 'is_api': ()}, False, 'keyword prompt', 'keyword1, keyword2', 'None', 'textual inversion first', 'None', '0.7', 'None', False, 1.6, 0.97, 0.4, 0, 20, 0, 12, '', True, False, False, False, 512, False, True, ['Face'], False, '{\n "face_detector": "RetinaFace",\n "rules": {\n "then": {\n "face_processor": "img2img",\n "mask_generator": {\n "name": "BiSeNet",\n "params": {\n "fallback_ratio": 0.1\n }\n }\n }\n }\n}', 'None', 40, <animatediff_utils.py.AnimateDiffProcess object at 0x0000024A8CC58940>, False, False, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, None, 'Refresh models', <scripts.animatediff_ui.AnimateDiffProcess object at 0x0000024A3BD73F10>, UiControlNetUnit(enabled=True, module='depth_midas', model='control_v11f1p_sd15_depth [cfd03158]', weight=1, image={'image': array([[[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** [[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** [[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** ...,

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]],

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]],

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]]], dtype=uint8), 'mask': array([[[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** ...,

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]]], dtype=uint8)}, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=-1, threshold_b=-1, guidance_start=0, guidance_end=1, pixel_perfect=True, control_mode='Balanced', save_detected_map=True), UiControlNetUnit(enabled=False, module='none', model='None', weight=1, image=None, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=64, threshold_b=64, guidance_start=0, guidance_end=1, pixel_perfect=False, control_mode='Balanced', save_detected_map=True), UiControlNetUnit(enabled=False, module='none', model='None', weight=1, image=None, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=64, threshold_b=64, guidance_start=0, guidance_end=1, pixel_perfect=False, control_mode='Balanced', save_detected_map=True), False, '', 0.5, True, False, '', 'Lerp', False, False, 1, 0.15, False, 'OUT', ['OUT'], 5, 0, 'Bilinear', False, 'Bilinear', False, 'Lerp', '', '', False, False, None, True, '🔄', False, False, 'Matrix', 'Columns', 'Mask', 'Prompt', '1,1', '0.2', False, False, False, 'Attention', [False], '0', '0', '0.4', None, '0', '0', False, False, False, 0, None, [], 0, False, [], [], False, 0, 1, False, False, 0, None, [], -2, False, [], False, 0, None, None, False, False, False, False, False, False, False, False, '1:1,1:2,1:2', '0:0,0:0,0:1', '0.2,0.8,0.8', 20, False, False, 'positive', 'comma', 0, False, False, '', 1, '', [], 0, '', [], 0, '', [], True, False, False, False, 0, False, 5, 'all', 'all', 'all', '', '', '', '1', 'none', False, '', '', 'comma', '', True, '', '20', 'all', 'all', 'all', 'all', 0, '', 1.6, 0.97, 0.4, 0, 20, 0, 12, '', True, False, False, False, 512, False, True, ['Face'], False, '{\n "face_detector": "RetinaFace",\n "rules": {\n "then": {\n "face_processor": "img2img",\n "mask_generator": {\n "name": "BiSeNet",\n "params": {\n "fallback_ratio": 0.1\n }\n }\n }\n }\n}', 'None', 40, None, None, False, None, None, False, None, None, False, 50, [], 30, '', 4, [], 1, 
'', '', '', '') {}

Traceback (most recent call last):

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\call_queue.py", line 57, in f

res = list(func(*args, **kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\call_queue.py", line 36, in f

res = func(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\txt2img.py", line 55, in txt2img

processed = processing.process_images(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-prompt-history\lib_history\image_process_hijacker.py", line 21, in process_images

res = original_function(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\processing.py", line 732, in process_images

res = process_images_inner(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-animatediff\scripts\animatediff_cn.py", line 77, in hacked_processing_process_images_hijack

assert global_input_frames, 'No input images found for ControlNet module'

AssertionError: No input images found for ControlNet module

Hint: the Python runtime raised an exception. Please check the troubleshooting page.

---

2023-11-25 18:39:52,611 - AnimateDiff - INFO - AnimateDiff process start.

2023-11-25 18:39:52,611 - AnimateDiff - INFO - Motion module already injected. Trying to restore.

2023-11-25 18:39:52,612 - AnimateDiff - INFO - Restoring DDIM alpha.

2023-11-25 18:39:52,612 - AnimateDiff - INFO - Removing motion module from SD1.5 UNet input blocks.

2023-11-25 18:39:52,612 - AnimateDiff - INFO - Removing motion module from SD1.5 UNet output blocks.

2023-11-25 18:39:52,612 - AnimateDiff - INFO - Removing motion module from SD1.5 UNet middle block.

2023-11-25 18:39:52,612 - AnimateDiff - INFO - Removal finished.

2023-11-25 18:39:52,650 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet middle block.

2023-11-25 18:39:52,650 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet input blocks.

2023-11-25 18:39:52,650 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet output blocks.

2023-11-25 18:39:52,650 - AnimateDiff - INFO - Setting DDIM alpha.

2023-11-25 18:39:52,656 - AnimateDiff - INFO - Injection finished.

2023-11-25 18:39:52,656 - AnimateDiff - INFO - AnimateDiff LoRA already hacked

2023-11-25 18:39:52,656 - AnimateDiff - INFO - CFGDenoiser already hacked

2023-11-25 18:39:52,657 - AnimateDiff - INFO - Hacking ControlNet.

2023-11-25 18:39:52,657 - AnimateDiff - INFO - BatchHijack already hacked.

2023-11-25 18:39:52,657 - AnimateDiff - INFO - ControlNet Main Entry already hacked.

2023-11-25 18:39:52,697 - ControlNet - INFO - Loading model from cache: control_v11f1p_sd15_depth [cfd03158]

2023-11-25 18:39:55,575 - ControlNet - INFO - Loading preprocessor: depth

2023-11-25 18:39:55,576 - ControlNet - INFO - preprocessor resolution = 448

2023-11-25 18:40:03,478 - ControlNet - INFO - ControlNet Hooked - Time = 10.787747859954834

*** Error completing request

*** Arguments: ('task(pud1kvh3lzj0ocs)', '1 sex girl, big breasts, solo, high heels, skirt, thigh strap, squatting, black footwear, long hair, closed eyes, multicolored hair, red hair, black shirt, sleeveless, black skirt, full body, shirt, lips, brown hair, black hair, sleeveless shirt, bare shoulders, crop top, midriff, grey background, simple background, ', 'bad hands, normal quality, ((monochrome)), ((grayscale)), ((strabismus)), ng_deepnegative_v1_75t, (bad-hands-5:1.3), (worst quality:2), (low quality:2), (normal quality:2), lowres, bad anatomy, bad_prompt, badhandv4, EasyNegative, ', [], 20, 'Euler a', 1, 1, 7, 800, 448, False, 0.7, 2, 'Latent', 0, 0, 0, 'Use same checkpoint', 'Use same sampler', '', '', [], <gradio.routes.Request object at 0x0000024A8CCB7DC0>, 0, False, '', 0.8, -1, False, -1, 0, 0, 0, True, False, {'ad_model': 'face_yolov8n.pt', 'ad_prompt': '', 'ad_negative_prompt': '', 'ad_confidence': 0.3, 'ad_mask_k_largest': 0, 'ad_mask_min_ratio': 0, 'ad_mask_max_ratio': 1, 'ad_x_offset': 0, 'ad_y_offset': 0, 'ad_dilate_erode': 4, 'ad_mask_merge_invert': 'None', 'ad_mask_blur': 4, 'ad_denoising_strength': 0.4, 'ad_inpaint_only_masked': True, 'ad_inpaint_only_masked_padding': 32, 'ad_use_inpaint_width_height': False, 'ad_inpaint_width': 512, 'ad_inpaint_height': 512, 'ad_use_steps': False, 'ad_steps': 28, 'ad_use_cfg_scale': False, 'ad_cfg_scale': 7, 'ad_use_checkpoint': False, 'ad_checkpoint': 'Use same checkpoint', 'ad_use_vae': False, 'ad_vae': 'Use same VAE', 'ad_use_sampler': False, 'ad_sampler': 'Euler a', 'ad_use_noise_multiplier': False, 'ad_noise_multiplier': 1, 'ad_use_clip_skip': False, 'ad_clip_skip': 1, 'ad_restore_face': False, 'ad_controlnet_model': 'None', 'ad_controlnet_module': 'None', 'ad_controlnet_weight': 1, 'ad_controlnet_guidance_start': 0, 'ad_controlnet_guidance_end': 1, 'is_api': ()}, {'ad_model': 'None', 'ad_prompt': '', 'ad_negative_prompt': '', 'ad_confidence': 0.3, 'ad_mask_k_largest': 0, 'ad_mask_min_ratio': 0, 
'ad_mask_max_ratio': 1, 'ad_x_offset': 0, 'ad_y_offset': 0, 'ad_dilate_erode': 4, 'ad_mask_merge_invert': 'None', 'ad_mask_blur': 4, 'ad_denoising_strength': 0.4, 'ad_inpaint_only_masked': True, 'ad_inpaint_only_masked_padding': 32, 'ad_use_inpaint_width_height': False, 'ad_inpaint_width': 512, 'ad_inpaint_height': 512, 'ad_use_steps': False, 'ad_steps': 28, 'ad_use_cfg_scale': False, 'ad_cfg_scale': 7, 'ad_use_checkpoint': False, 'ad_checkpoint': 'Use same checkpoint', 'ad_use_vae': False, 'ad_vae': 'Use same VAE', 'ad_use_sampler': False, 'ad_sampler': 'Euler a', 'ad_use_noise_multiplier': False, 'ad_noise_multiplier': 1, 'ad_use_clip_skip': False, 'ad_clip_skip': 1, 'ad_restore_face': False, 'ad_controlnet_model': 'None', 'ad_controlnet_module': 'None', 'ad_controlnet_weight': 1, 'ad_controlnet_guidance_start': 0, 'ad_controlnet_guidance_end': 1, 'is_api': ()}, False, 'keyword prompt', 'keyword1, keyword2', 'None', 'textual inversion first', 'None', '0.7', 'None', False, 1.6, 0.97, 0.4, 0, 20, 0, 12, '', True, False, False, False, 512, False, True, ['Face'], False, '{\n "face_detector": "RetinaFace",\n "rules": {\n "then": {\n "face_processor": "img2img",\n "mask_generator": {\n "name": "BiSeNet",\n "params": {\n "fallback_ratio": 0.1\n }\n }\n }\n }\n}', 'None', 40, <animatediff_utils.py.AnimateDiffProcess object at 0x0000024A8CC58940>, False, False, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, None, 'Refresh models', <scripts.animatediff_ui.AnimateDiffProcess object at 0x0000024AB1539DB0>, UiControlNetUnit(enabled=True, module='depth_midas', model='control_v11f1p_sd15_depth [cfd03158]', weight=1, image={'image': array([[[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** [[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** [[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** ...,

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]],

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]],

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]]], dtype=uint8), 'mask': array([[[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** ...,

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]]], dtype=uint8)}, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=-1, threshold_b=-1, guidance_start=0, guidance_end=1, pixel_perfect=True, control_mode='Balanced', save_detected_map=True), UiControlNetUnit(enabled=False, module='none', model='None', weight=1, image=None, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=64, threshold_b=64, guidance_start=0, guidance_end=1, pixel_perfect=False, control_mode='Balanced', save_detected_map=True), UiControlNetUnit(enabled=False, module='none', model='None', weight=1, image=None, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=64, threshold_b=64, guidance_start=0, guidance_end=1, pixel_perfect=False, control_mode='Balanced', save_detected_map=True), False, '', 0.5, True, False, '', 'Lerp', False, False, 1, 0.15, False, 'OUT', ['OUT'], 5, 0, 'Bilinear', False, 'Bilinear', False, 'Lerp', '', '', False, False, None, True, '🔄', False, False, 'Matrix', 'Columns', 'Mask', 'Prompt', '1,1', '0.2', False, False, False, 'Attention', [False], '0', '0', '0.4', None, '0', '0', False, False, False, 0, None, [], 0, False, [], [], False, 0, 1, False, False, 0, None, [], -2, False, [], False, 0, None, None, False, False, False, False, False, False, False, False, '1:1,1:2,1:2', '0:0,0:0,0:1', '0.2,0.8,0.8', 20, False, False, 'positive', 'comma', 0, False, False, '', 1, '', [], 0, '', [], 0, '', [], True, False, False, False, 0, False, 5, 'all', 'all', 'all', '', '', '', '1', 'none', False, '', '', 'comma', '', True, '', '20', 'all', 'all', 'all', 'all', 0, '', 1.6, 0.97, 0.4, 0, 20, 0, 12, '', True, False, False, False, 512, False, True, ['Face'], False, '{\n "face_detector": "RetinaFace",\n "rules": {\n "then": {\n "face_processor": "img2img",\n "mask_generator": {\n "name": "BiSeNet",\n "params": {\n "fallback_ratio": 0.1\n }\n }\n }\n }\n}', 'None', 40, None, None, False, None, None, False, None, None, False, 50, [], 30, '', 4, [], 1, 
'', '', '', '') {}

Traceback (most recent call last):

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\call_queue.py", line 57, in f

res = list(func(*args, **kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\call_queue.py", line 36, in f

res = func(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\txt2img.py", line 55, in txt2img

processed = processing.process_images(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-prompt-history\lib_history\image_process_hijacker.py", line 21, in process_images

res = original_function(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\processing.py", line 732, in process_images

res = process_images_inner(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-animatediff\scripts\animatediff_cn.py", line 118, in hacked_processing_process_images_hijack

return getattr(processing, '__controlnet_original_process_images_inner')(p, *args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\processing.py", line 867, in process_images_inner

samples_ddim = p.sample(conditioning=p.c, unconditional_conditioning=p.uc, seeds=p.seeds, subseeds=p.subseeds, subseed_strength=p.subseed_strength, prompts=p.prompts)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 420, in process_sample

return process.sample_before_CN_hack(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\processing.py", line 1140, in sample

samples = self.sampler.sample(self, x, conditioning, unconditional_conditioning, image_conditioning=self.txt2img_image_conditioning(x))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_samplers_kdiffusion.py", line 235, in sample

samples = self.launch_sampling(steps, lambda: self.func(self.model_wrap_cfg, x, extra_args=self.sampler_extra_args, disable=False, callback=self.callback_state, **extra_params_kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_samplers_common.py", line 261, in launch_sampling

return func()

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_samplers_kdiffusion.py", line 235, in <lambda>

samples = self.launch_sampling(steps, lambda: self.func(self.model_wrap_cfg, x, extra_args=self.sampler_extra_args, disable=False, callback=self.callback_state, **extra_params_kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\utils\_contextlib.py", line 115, in decorate_context

return func(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\k-diffusion\k_diffusion\sampling.py", line 145, in sample_euler_ancestral

denoised = model(x, sigmas[i] * s_in, **extra_args)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-animatediff\scripts\animatediff_infv2v.py", line 269, in mm_cfg_forward

x_out[a:b] = self.inner_model(x_in[a:b], sigma_in[a:b], cond=make_condition_dict(c_crossattn, image_cond_in[a:b]))

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\k-diffusion\k_diffusion\external.py", line 112, in forward

eps = self.get_eps(input * c_in, self.sigma_to_t(sigma), **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\k-diffusion\k_diffusion\external.py", line 138, in get_eps

return self.inner_model.apply_model(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_hijack_utils.py", line 17, in <lambda>

setattr(resolved_obj, func_path[-1], lambda *args, **kwargs: self(*args, **kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_hijack_utils.py", line 26, in __call__

return self.__sub_func(self.__orig_func, *args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_hijack_unet.py", line 48, in apply_model

return orig_func(self, x_noisy.to(devices.dtype_unet), t.to(devices.dtype_unet), cond, **kwargs).float()

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\stable-diffusion-stability-ai\ldm\models\diffusion\ddpm.py", line 858, in apply_model

x_recon = self.model(x_noisy, t, **cond)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\stable-diffusion-stability-ai\ldm\models\diffusion\ddpm.py", line 1335, in forward

out = self.diffusion_model(x, t, context=cc)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 827, in forward_webui

raise e

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 824, in forward_webui

return forward(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 561, in forward

control = param.control_model(x=x_in, hint=hint, timesteps=timesteps, context=context, y=y)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\cldm.py", line 31, in forward

return self.control_model(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\cldm.py", line 300, in forward

guided_hint = self.input_hint_block(hint, emb, context)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\generative-models\sgm\modules\diffusionmodules\openaimodel.py", line 102, in forward

x = layer(x)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions-builtin\Lora\networks.py", line 444, in network_Conv2d_forward

return originals.Conv2d_forward(self, input)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\conv.py", line 463, in forward

return self._conv_forward(input, self.weight, self.bias)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\conv.py", line 459, in _conv_forward

return F.conv2d(input, weight, bias, self.stride,

RuntimeError: Expected all tensors to be on the same device, but found at least two devices, cpu and cuda:0! (when checking argument for argument weight in method wrapper_CUDA___slow_conv2d_forward)

Hint: the Python runtime raised an exception. Please check the troubleshooting page.

---

2023-11-25 18:43:26,500 - AnimateDiff - INFO - AnimateDiff process start.

2023-11-25 18:43:26,501 - AnimateDiff - INFO - Motion module already injected. Trying to restore.

2023-11-25 18:43:26,501 - AnimateDiff - INFO - Restoring DDIM alpha.

2023-11-25 18:43:26,502 - AnimateDiff - INFO - Removing motion module from SD1.5 UNet input blocks.

2023-11-25 18:43:26,503 - AnimateDiff - INFO - Removing motion module from SD1.5 UNet output blocks.

2023-11-25 18:43:26,503 - AnimateDiff - INFO - Removing motion module from SD1.5 UNet middle block.

2023-11-25 18:43:26,503 - AnimateDiff - INFO - Removal finished.

2023-11-25 18:43:26,519 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet middle block.

2023-11-25 18:43:26,519 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet input blocks.

2023-11-25 18:43:26,519 - AnimateDiff - INFO - Injecting motion module mm_sd_v15_v2.ckpt into SD1.5 UNet output blocks.

2023-11-25 18:43:26,520 - AnimateDiff - INFO - Setting DDIM alpha.

2023-11-25 18:43:26,522 - AnimateDiff - INFO - Injection finished.

2023-11-25 18:43:26,522 - AnimateDiff - INFO - AnimateDiff LoRA already hacked

2023-11-25 18:43:26,522 - AnimateDiff - INFO - CFGDenoiser already hacked

2023-11-25 18:43:26,522 - AnimateDiff - INFO - Hacking ControlNet.

2023-11-25 18:43:26,522 - AnimateDiff - INFO - BatchHijack already hacked.

2023-11-25 18:43:26,522 - AnimateDiff - INFO - ControlNet Main Entry already hacked.

2023-11-25 18:43:26,736 - ControlNet - INFO - Loading model from cache: control_v11f1p_sd15_depth [cfd03158]

2023-11-25 18:43:28,938 - ControlNet - INFO - Loading preprocessor: depth

2023-11-25 18:43:28,939 - ControlNet - INFO - preprocessor resolution = 448

2023-11-25 18:43:38,425 - ControlNet - INFO - ControlNet Hooked - Time = 11.88900351524353

*** Error completing request

*** Arguments: ('task(ugw097u8h16i04e)', '1 sex girl, big breasts, solo, high heels, skirt, thigh strap, squatting, black footwear, long hair, closed eyes, multicolored hair, red hair, black shirt, sleeveless, black skirt, full body, shirt, lips, brown hair, black hair, sleeveless shirt, bare shoulders, crop top, midriff, grey background, simple background, ', 'bad hands, normal quality, ((monochrome)), ((grayscale)), ((strabismus)), ng_deepnegative_v1_75t, (bad-hands-5:1.3), (worst quality:2), (low quality:2), (normal quality:2), lowres, bad anatomy, bad_prompt, badhandv4, EasyNegative, ', [], 20, 'Euler a', 1, 1, 7, 800, 448, False, 0.7, 2, 'Latent', 0, 0, 0, 'Use same checkpoint', 'Use same sampler', '', '', [], <gradio.routes.Request object at 0x0000024A8CC5E950>, 0, False, '', 0.8, -1, False, -1, 0, 0, 0, True, False, {'ad_model': 'face_yolov8n.pt', 'ad_prompt': '', 'ad_negative_prompt': '', 'ad_confidence': 0.3, 'ad_mask_k_largest': 0, 'ad_mask_min_ratio': 0, 'ad_mask_max_ratio': 1, 'ad_x_offset': 0, 'ad_y_offset': 0, 'ad_dilate_erode': 4, 'ad_mask_merge_invert': 'None', 'ad_mask_blur': 4, 'ad_denoising_strength': 0.4, 'ad_inpaint_only_masked': True, 'ad_inpaint_only_masked_padding': 32, 'ad_use_inpaint_width_height': False, 'ad_inpaint_width': 512, 'ad_inpaint_height': 512, 'ad_use_steps': False, 'ad_steps': 28, 'ad_use_cfg_scale': False, 'ad_cfg_scale': 7, 'ad_use_checkpoint': False, 'ad_checkpoint': 'Use same checkpoint', 'ad_use_vae': False, 'ad_vae': 'Use same VAE', 'ad_use_sampler': False, 'ad_sampler': 'Euler a', 'ad_use_noise_multiplier': False, 'ad_noise_multiplier': 1, 'ad_use_clip_skip': False, 'ad_clip_skip': 1, 'ad_restore_face': False, 'ad_controlnet_model': 'None', 'ad_controlnet_module': 'None', 'ad_controlnet_weight': 1, 'ad_controlnet_guidance_start': 0, 'ad_controlnet_guidance_end': 1, 'is_api': ()}, {'ad_model': 'None', 'ad_prompt': '', 'ad_negative_prompt': '', 'ad_confidence': 0.3, 'ad_mask_k_largest': 0, 'ad_mask_min_ratio': 0, 
'ad_mask_max_ratio': 1, 'ad_x_offset': 0, 'ad_y_offset': 0, 'ad_dilate_erode': 4, 'ad_mask_merge_invert': 'None', 'ad_mask_blur': 4, 'ad_denoising_strength': 0.4, 'ad_inpaint_only_masked': True, 'ad_inpaint_only_masked_padding': 32, 'ad_use_inpaint_width_height': False, 'ad_inpaint_width': 512, 'ad_inpaint_height': 512, 'ad_use_steps': False, 'ad_steps': 28, 'ad_use_cfg_scale': False, 'ad_cfg_scale': 7, 'ad_use_checkpoint': False, 'ad_checkpoint': 'Use same checkpoint', 'ad_use_vae': False, 'ad_vae': 'Use same VAE', 'ad_use_sampler': False, 'ad_sampler': 'Euler a', 'ad_use_noise_multiplier': False, 'ad_noise_multiplier': 1, 'ad_use_clip_skip': False, 'ad_clip_skip': 1, 'ad_restore_face': False, 'ad_controlnet_model': 'None', 'ad_controlnet_module': 'None', 'ad_controlnet_weight': 1, 'ad_controlnet_guidance_start': 0, 'ad_controlnet_guidance_end': 1, 'is_api': ()}, False, 'keyword prompt', 'keyword1, keyword2', 'None', 'textual inversion first', 'None', '0.7', 'None', False, 1.6, 0.97, 0.4, 0, 20, 0, 12, '', True, False, False, False, 512, False, True, ['Face'], False, '{\n "face_detector": "RetinaFace",\n "rules": {\n "then": {\n "face_processor": "img2img",\n "mask_generator": {\n "name": "BiSeNet",\n "params": {\n "fallback_ratio": 0.1\n }\n }\n }\n }\n}', 'None', 40, <animatediff_utils.py.AnimateDiffProcess object at 0x0000024A8CC58940>, False, False, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, 'LoRA', 'None', 1, 1, None, 'Refresh models', <scripts.animatediff_ui.AnimateDiffProcess object at 0x0000024A8CC59F60>, UiControlNetUnit(enabled=True, module='depth_midas', model='control_v11f1p_sd15_depth [cfd03158]', weight=1, image={'image': array([[[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** [[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** [[183, 187, 189],

*** [183, 187, 189],

*** [183, 187, 189],

*** ...,

*** [185, 189, 191],

*** [185, 189, 191],

*** [185, 189, 191]],

***

*** ...,

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]],

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]],

***

*** [[223, 224, 227],

*** [223, 224, 227],

*** [223, 224, 227],

*** ...,

*** [227, 227, 227],

*** [227, 227, 227],

*** [227, 227, 227]]], dtype=uint8), 'mask': array([[[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** ...,

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]],

***

*** [[0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0],

*** ...,

*** [0, 0, 0],

*** [0, 0, 0],

*** [0, 0, 0]]], dtype=uint8)}, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=-1, threshold_b=-1, guidance_start=0, guidance_end=1, pixel_perfect=True, control_mode='Balanced', save_detected_map=True), UiControlNetUnit(enabled=False, module='none', model='None', weight=1, image=None, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=64, threshold_b=64, guidance_start=0, guidance_end=1, pixel_perfect=False, control_mode='Balanced', save_detected_map=True), UiControlNetUnit(enabled=False, module='none', model='None', weight=1, image=None, resize_mode='Crop and Resize', low_vram=False, processor_res=512, threshold_a=64, threshold_b=64, guidance_start=0, guidance_end=1, pixel_perfect=False, control_mode='Balanced', save_detected_map=True), False, '', 0.5, True, False, '', 'Lerp', False, False, 1, 0.15, False, 'OUT', ['OUT'], 5, 0, 'Bilinear', False, 'Bilinear', False, 'Lerp', '', '', False, False, None, True, '🔄', False, False, 'Matrix', 'Columns', 'Mask', 'Prompt', '1,1', '0.2', False, False, False, 'Attention', [False], '0', '0', '0.4', None, '0', '0', False, False, False, 0, None, [], 0, False, [], [], False, 0, 1, False, False, 0, None, [], -2, False, [], False, 0, None, None, False, False, False, False, False, False, False, False, '1:1,1:2,1:2', '0:0,0:0,0:1', '0.2,0.8,0.8', 20, False, False, 'positive', 'comma', 0, False, False, '', 1, '', [], 0, '', [], 0, '', [], True, False, False, False, 0, False, 5, 'all', 'all', 'all', '', '', '', '1', 'none', False, '', '', 'comma', '', True, '', '20', 'all', 'all', 'all', 'all', 0, '', 1.6, 0.97, 0.4, 0, 20, 0, 12, '', True, False, False, False, 512, False, True, ['Face'], False, '{\n "face_detector": "RetinaFace",\n "rules": {\n "then": {\n "face_processor": "img2img",\n "mask_generator": {\n "name": "BiSeNet",\n "params": {\n "fallback_ratio": 0.1\n }\n }\n }\n }\n}', 'None', 40, None, None, False, None, None, False, None, None, False, 50, [], 30, '', 4, [], 1, 
'', '', '', '') {}

Traceback (most recent call last):

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\call_queue.py", line 57, in f

res = list(func(*args, **kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\call_queue.py", line 36, in f

res = func(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\txt2img.py", line 55, in txt2img

processed = processing.process_images(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-prompt-history\lib_history\image_process_hijacker.py", line 21, in process_images

res = original_function(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\processing.py", line 732, in process_images

res = process_images_inner(p)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-animatediff\scripts\animatediff_cn.py", line 118, in hacked_processing_process_images_hijack

return getattr(processing, '__controlnet_original_process_images_inner')(p, *args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\processing.py", line 867, in process_images_inner

samples_ddim = p.sample(conditioning=p.c, unconditional_conditioning=p.uc, seeds=p.seeds, subseeds=p.subseeds, subseed_strength=p.subseed_strength, prompts=p.prompts)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 420, in process_sample

return process.sample_before_CN_hack(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\processing.py", line 1140, in sample

samples = self.sampler.sample(self, x, conditioning, unconditional_conditioning, image_conditioning=self.txt2img_image_conditioning(x))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_samplers_kdiffusion.py", line 235, in sample

samples = self.launch_sampling(steps, lambda: self.func(self.model_wrap_cfg, x, extra_args=self.sampler_extra_args, disable=False, callback=self.callback_state, **extra_params_kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_samplers_common.py", line 261, in launch_sampling

return func()

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_samplers_kdiffusion.py", line 235, in <lambda>

samples = self.launch_sampling(steps, lambda: self.func(self.model_wrap_cfg, x, extra_args=self.sampler_extra_args, disable=False, callback=self.callback_state, **extra_params_kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\utils\_contextlib.py", line 115, in decorate_context

return func(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\k-diffusion\k_diffusion\sampling.py", line 145, in sample_euler_ancestral

denoised = model(x, sigmas[i] * s_in, **extra_args)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-animatediff\scripts\animatediff_infv2v.py", line 269, in mm_cfg_forward

x_out[a:b] = self.inner_model(x_in[a:b], sigma_in[a:b], cond=make_condition_dict(c_crossattn, image_cond_in[a:b]))

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\k-diffusion\k_diffusion\external.py", line 112, in forward

eps = self.get_eps(input * c_in, self.sigma_to_t(sigma), **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\k-diffusion\k_diffusion\external.py", line 138, in get_eps

return self.inner_model.apply_model(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_hijack_utils.py", line 17, in <lambda>

setattr(resolved_obj, func_path[-1], lambda *args, **kwargs: self(*args, **kwargs))

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_hijack_utils.py", line 26, in __call__

return self.__sub_func(self.__orig_func, *args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\modules\sd_hijack_unet.py", line 48, in apply_model

return orig_func(self, x_noisy.to(devices.dtype_unet), t.to(devices.dtype_unet), cond, **kwargs).float()

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\stable-diffusion-stability-ai\ldm\models\diffusion\ddpm.py", line 858, in apply_model

x_recon = self.model(x_noisy, t, **cond)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\stable-diffusion-stability-ai\ldm\models\diffusion\ddpm.py", line 1335, in forward

out = self.diffusion_model(x, t, context=cc)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 827, in forward_webui

raise e

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 824, in forward_webui

return forward(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\hook.py", line 561, in forward

control = param.control_model(x=x_in, hint=hint, timesteps=timesteps, context=context, y=y)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\cldm.py", line 31, in forward

return self.control_model(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions\sd-webui-controlnet\scripts\cldm.py", line 300, in forward

guided_hint = self.input_hint_block(hint, emb, context)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\repositories\generative-models\sgm\modules\diffusionmodules\openaimodel.py", line 102, in forward

x = layer(x)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "E:\sd-webui-aki\sd-webui-aki-v4\extensions-builtin\Lora\networks.py", line 444, in network_Conv2d_forward

return originals.Conv2d_forward(self, input)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\conv.py", line 463, in forward

return self._conv_forward(input, self.weight, self.bias)

File "E:\sd-webui-aki\sd-webui-aki-v4\py310\lib\site-packages\torch\nn\modules\conv.py", line 459, in _conv_forward

return F.conv2d(input, weight, bias, self.stride,

RuntimeError: Expected all tensors to be on the same device, but found at least two devices, cpu and cuda:0! (when checking argument for argument weight in method wrapper_CUDA___slow_conv2d_forward)

Hint: the Python runtime threw an exception. Please check the troubleshooting page.

---Fixing the error


I got the same error using "--use-cpu all". If you modify this segment of modules/devices.py

    def get_device_for(task):
        if task in shared.cmd_opts.use_cpu:
            return cpu

        return get_optimal_device()

to just return cpu, it properly forces ControlNet (CN) onto the CPU as well. This points to the issue being that the function looks for a task listed in use_cpu and does not find it. Digging into CN's code, it calls get_device_for("controlnet"). So I reverted the code and tried something different.

I'm guessing you're using the same flag, just "--use-cpu all"?

I got it working with the original code by replacing that with "--use-cpu all controlnet". This effectively makes use_cpu a list holding "all" and "controlnet", allowing CN to find itself and get the CPU.
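To make the membership check concrete, here is a minimal sketch of the lookup described above. It is not the webui's actual module: `use_cpu` is passed in as a plain list instead of being read from `shared.cmd_opts`, the devices are plain strings rather than `torch.device` objects, and `get_optimal_device` is a stand-in that assumes a CUDA GPU is present, as in the log.

```python
# Stand-in for the webui's cpu device; the real code uses torch.device("cpu").
cpu = "cpu"


def get_optimal_device():
    # Assumption: a CUDA GPU is available, as in the log above.
    return "cuda:0"


def get_device_for(task, use_cpu):
    # Same membership test as the original snippet, with use_cpu passed in
    # explicitly (hypothetical signature, for illustration only).
    if task in use_cpu:
        return cpu
    return get_optimal_device()


# Compare the two flag variants discussed in the text.
for flags in (["all"], ["all", "controlnet"]):
    print(flags, "->", get_device_for("controlnet", flags))
```

With only "all" in the list, the lookup for "controlnet" falls through to the GPU while the rest of the pipeline sits on the CPU, which is consistent with the "cpu and cuda:0" mismatch in the traceback.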

Another fix for this might be

    def get_device_for(task):
        if task in shared.cmd_opts.use_cpu or "all" in shared.cmd_opts.use_cpu:
            return cpu

        return get_optimal_device()

which makes "all" behave as the term implies, forcing CPU on everything. This also applies to something called swinir (which, from what I can tell, was also missed by "all" previously). This would be a code-side fix, whereas adding controlnet to the argument list is user-side and easier to do.

Install the plugin

Download the corresponding models


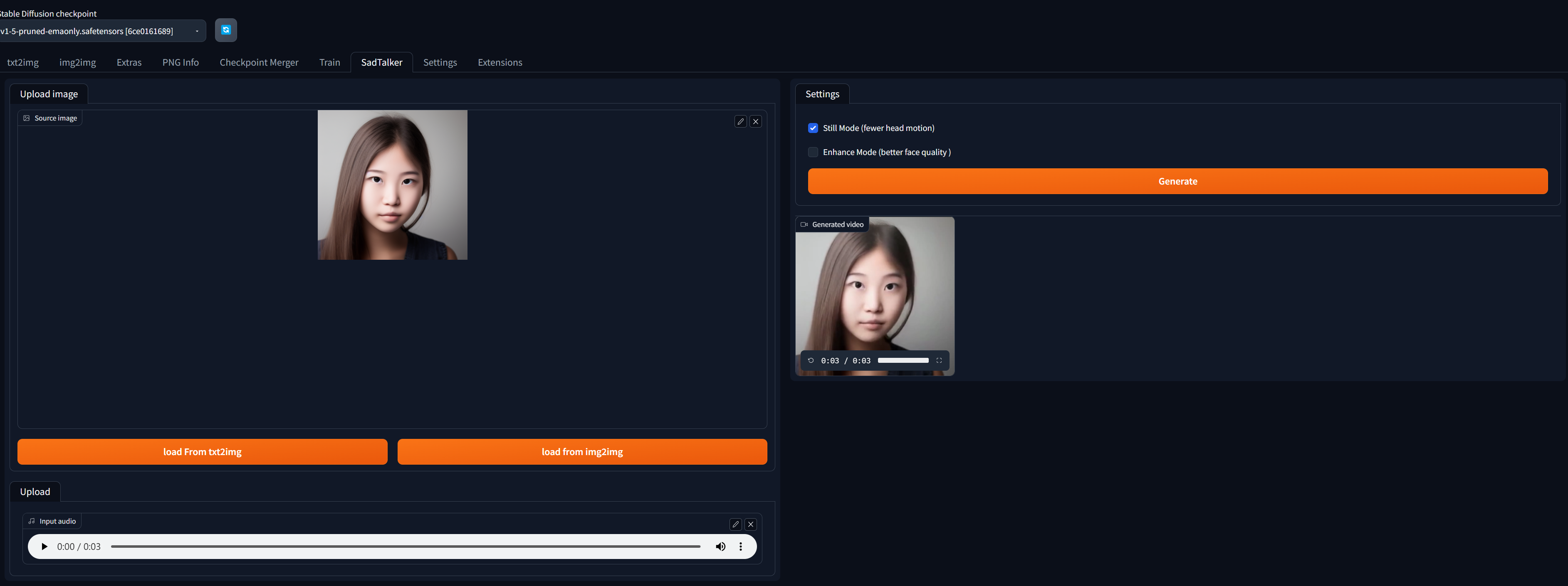

https://github.com/OpenTalker/SadTalker/tree/main#2-download-models

Where to put the models

Place them in stable-diffusion-webui/models/SadTalker or stable-diffusion-webui/extensions/SadTalker/checkpoints/; the checkpoints will be detected automatically.
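If you are unsure whether the files ended up in one of these two folders, a small check like the following can help. This is only a sketch: `find_checkpoint_dirs` is a hypothetical helper, not part of SadTalker or the webui, and the root path "stable-diffusion-webui" is a placeholder for your own install location.

```python
from pathlib import Path

# Relative locations named in the text above.
CANDIDATE_DIRS = (
    Path("models") / "SadTalker",
    Path("extensions") / "SadTalker" / "checkpoints",
)


def find_checkpoint_dirs(webui_root):
    """Return the candidate directories that exist and hold at least one file."""
    root = Path(webui_root)
    found = []
    for rel in CANDIDATE_DIRS:
        candidate = root / rel
        if candidate.is_dir() and any(p.is_file() for p in candidate.iterdir()):
            found.append(candidate)
    return found


if __name__ == "__main__":
    # Placeholder path: replace with the root of your own webui install.
    for directory in find_checkpoint_dirs("stable-diffusion-webui"):
        print("checkpoints found in", directory)
```

An empty result means neither folder contains any files yet, so the models still need to be downloaded or moved.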

Then simply enable it. Note that after installing any plugin you need to restart the webui.
