hadoop默认不支持lzo的压缩格式;

lzo压缩工具,支持对超过block大小的数据进行切分;

关于lzo压缩的弊端:

1.需要手工或者shell对lzo文件创建index;

2.lzo格式的文件,通过hive执行同样的查询操作时要比其它格式慢几倍之多;

总结:暂时不建议使用lzo压缩

环境:

系统:Centos 7.5

hadoop:hadoop-2.6.0-cdh5.7.0.tar.gz(通过hadoop-2.6.0-cdh5.7.0-src.tar.gz编译生成的)

lzo所需组件:

lzo

lzop

hadoop-gpl-packaging:gpl-packaging的作用主要是对压缩的lzo文件创建索引,否则的话,无论压缩文件是否大于hdfs的block大小,都只会1个map操作。

编译安装lzo:#安装相关依赖

yum -y install lzo-devel zlib-devel gcc autoconf automake libtool

#编译lzo

cd /usr/local/src

wget http://www.oberhumer.com/opensource/lzo/download/lzo-2.06.tar.gz

tar -zxvf lzo-2.06.tar.gz

cd lzo-2.06

./configure -enable-shared -prefix=/usr/local/lzo

make

make install

注:编译完lzo包之后,会在/usr/local/lzo/目录下生成一些文件。

[root@hadoop004 lzo]# ll

total 0

drwxr-xr-x 3 root root 17 Apr 19 10:30 include

drwxr-xr-x 2 root root 103 Apr 19 10:30 lib

drwxr-xr-x 3 root root 17 Apr 19 10:30 share

#查看lzop命令:

[root@hadoop004 lzo]# which lzop

/usr/bin/lzop

#lzo命令使用方法及压缩测试

lzo压缩:lzop -v filename

lzo解压:lzop -dv filename

[root@hadoop004 hadoop]# du -sh *

69Maccess.log

16Maccess.log.lzo

注:.lzo为压缩过后的文件,可以看到压缩比例很高。

安装hadoop-lzo:#编译安装hadoop-lzo的准备工作

cd /usr/local/src

wget https://github.com/twitter/hadoop-lzo/archive/master.zip

unzip master.zip

cd hadoop-lzo-master/

注:因为hadoop使用的是2.6.0;所以版本修改为2.6.0;修改的文件为pom.xml

UTF-8

2.6.0

1.0.4

#开始编译

mvn clean package -Dmaven.test.skip=true

注:编译成功

[INFO] Building jar: /usr/local/src/hadoop-lzo-master/target/hadoop-lzo-0.4.21-SNAPSHOT-javadoc.jar

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 01:08 min

[INFO] Finished at: 2019-04-19T10:39:55+08:00

[INFO] Final Memory: 28M/136M

[INFO] ------------------------------------------------------------------------

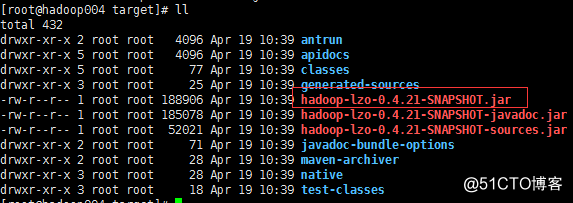

#编译生成的文件hadoop-lzo-0.4.21-SNAPSHOT.jar,很重要(在target目录下)

[root@hadoop004 target]# pwd

/usr/local/src/hadoop-lzo-master/target

[root@hadoop004 target]# ll

total 432

drwxr-xr-x 2 root root 4096 Apr 19 10:39 antrun

drwxr-xr-x 5 root root 4096 Apr 19 10:39 apidocs

drwxr-xr-x 5 root root 77 Apr 19 10:39 classes

drwxr-xr-x 3 root root 25 Apr 19 10:39 generated-sources

-rw-r--r-- 1 root root 188906 Apr 19 10:39 hadoop-lzo-0.4.21-SNAPSHOT.jar

-rw-r--r-- 1 root root 185078 Apr 19 10:39 hadoop-lzo-0.4.21-SNAPSHOT-javadoc.jar

-rw-r--r-- 1 root root 52021 Apr 19 10:39 hadoop-lzo-0.4.21-SNAPSHOT-sources.jar

drwxr-xr-x 2 root root 71 Apr 19 10:39 javadoc-bundle-options

drwxr-xr-x 2 root root 28 Apr 19 10:39 maven-archiver

drwxr-xr-x 3 root root 28 Apr 19 10:39 native

drwxr-xr-x 3 root root 18 Apr 19 10:39 test-classes

注:我们最终需要的就是hadoop-lzo-0.4.21-SNAPSHOT.jar文件

注:将hadoop-lzo-0.4.21-SNAPSHOT.jar包复制到各hadoop节点的${HADOOP_HOME}/share/hadoop/common/目录下才能被hadoop使用

hadoop各节点配置以支持lzo:

注:首先在master节点执行./stop-all.sh,以关闭所有节点的进程#core-site.xml配置支持的压缩的类型

io.compression.codecs

org.apache.hadoop.io.compress.GzipCodec,

org.apache.hadoop.io.compress.DefaultCodec,

com.hadoop.compression.lzo.LzoCodec,

com.hadoop.compression.lzo.LzopCodec,

org.apache.hadoop.io.compress.BZip2Codec

io.compression.codec.lzo.class

com.hadoop.compression.lzo.LzoCodec

#mapred-site.xml配置mapreduce各阶段的压缩类型

输入阶段的压缩

mapred.compress.map.output

true

mapred.map.output.compression.codec

com.hadoop.compression.lzo.LzoCodec

最终阶段的压缩

mapreduce.output.fileoutputformat.compress

true

mapreduce.output.fileoutputformat.compress.codec

org.apache.hadoop.io.compress.BZip2Codec

注:配置完成之后在master节点执行./start-all.sh,启动各节点的相关的进程

执行job测试lzo压缩切分是否生效:

修改hadoop的block大小为10M;#hdfs-site.xml

dfs.blocksize

10485760

准备测试文件,大小为78.7M;[hadoop@hadoop002 hadoop]$ hadoop fs -ls /input

Found 1 items

-rw-r--r-- 3 hadoop hadoop 82552172 2019-04-19 11:46 /input/hadoop_data_000.txt

[hadoop@hadoop002 hadoop]$ hadoop fs -du -s -h /input

78.7 M 236.2 M /input

执行MapReduce Job查看:[hadoop@hadoop001 train]$ hadoop jar hadoop_train-1.0.jar com.g609.hadoop.etl.driver.LogETLDriver /input /output

19/04/19 11:46:43 WARN mapreduce.JobResourceUploader: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.

19/04/19 11:46:44 INFO input.FileInputFormat: Total input paths to process : 1

19/04/19 11:46:44 INFO lzo.GPLNativeCodeLoader: Loaded native gpl library from the embedded binaries

19/04/19 11:46:44 INFO lzo.LzoCodec: Successfully loaded & initialized native-lzo library [hadoop-lzo rev f1deea9a313f4017dd5323cb8bbb3732c1aaccc5]

19/04/19 11:46:44 INFO mapreduce.JobSubmitter: number of splits:8

19/04/19 11:46:44 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1555643762064_0001

19/04/19 11:46:45 INFO impl.YarnClientImpl: Submitted application application_1555643762064_0001

19/04/19 11:46:45 INFO mapreduce.Job: The url to track the job: http://hadoop001:8088/proxy/application_1555643762064_0001/

19/04/19 11:46:45 INFO mapreduce.Job: Running job: job_1555643762064_0001

19/04/19 11:46:53 INFO mapreduce.Job: Job job_1555643762064_0001 running in uber mode : false

19/04/19 11:46:53 INFO mapreduce.Job: map 0% reduce 0%

19/04/19 11:47:05 INFO mapreduce.Job: map 38% reduce 0%

19/04/19 11:47:06 INFO mapreduce.Job: map 50% reduce 0%

19/04/19 11:47:14 INFO mapreduce.Job: map 75% reduce 0%

19/04/19 11:47:15 INFO mapreduce.Job: map 88% reduce 0%

19/04/19 11:47:18 INFO mapreduce.Job: map 88% reduce 29%

19/04/19 11:47:20 INFO mapreduce.Job: map 100% reduce 29%

19/04/19 11:47:24 INFO mapreduce.Job: map 100% reduce 72%

19/04/19 11:47:27 INFO mapreduce.Job: map 100% reduce 79%

19/04/19 11:47:30 INFO mapreduce.Job: map 100% reduce 86%

19/04/19 11:47:33 INFO mapreduce.Job: map 100% reduce 92%

19/04/19 11:47:36 INFO mapreduce.Job: map 100% reduce 100%

19/04/19 11:47:37 INFO mapreduce.Job: Job job_1555643762064_0001 completed successfully

注:可以看到执行job时启用了lzo压缩工具的切分功能,数据大小为78.7M,因为每个block大小为10M,启动了8个Map; 达到预期的效果

查看输出结果:可以看到输出结果的格式为.bz2,与我们在mapred-site.xml中配置的输出结果一致;[hadoop@hadoop002 hadoop]$ hadoop fs -ls /output

Found 2 items

-rw-r--r-- 3 hadoop hadoop 0 2019-04-19 11:47 /output/_SUCCESS

-rw-r--r-- 3 hadoop hadoop 14507599 2019-04-19 11:47 /output/part-r-00000.bz2

通过Hive查询测试切分是否成功:#准备测试数据part-r-00000.lzo,大小为28.6M;块大小之前已经设置10M

[hadoop@hadoop002 hadoop]$ hadoop fs -du -s -h /compress-lzo/day=20180717/*

28.6 M 85.8 M /compress-lzo/day=20180717/part-r-00000.lzo

#创建Hive分区表并加载hdfs上的测试数据

CREATE EXTERNAL TABLE g6_access_lzo (

cdn string,

region string,

level string,

time string,

ip string,

domain string,

url string,

traffic bigint)

PARTITIONED BY (

day string)

ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t'

STORED AS INPUTFORMAT "com.hadoop.mapred.DeprecatedLzoTextInputFormat"

OUTPUTFORMAT "org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat"

LOCATION '/compress-lzo';

#刷新元数据

alter table g6_access_lzo add if not exists partition(day='20180717');

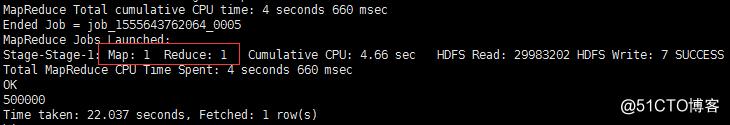

#第一次执行sql语句,查看Map的个数;可以看到Map个数为1;

MapReduce Total cumulative CPU time: 4 seconds 660 msec

Ended Job = job_1555643762064_0005

MapReduce Jobs Launched:

Stage-Stage-1: Map: 1 Reduce: 1 Cumulative CPU: 4.66 sec HDFS Read: 29983202 HDFS Write: 7 SUCCESS

Total MapReduce CPU Time Spent: 4 seconds 660 msec

OK

500000

Time taken: 22.037 seconds, Fetched: 1 row(s)

#对part-r-00000.lzo文件生成index操作

hadoop jar hadoop-lzo-0.4.21-SNAPSHOT.jar com.hadoop.compression.lzo.LzoIndexer /compress-lzo/day=20180717/part-r-00000.lzo

[hadoop@hadoop001 ~]$ hadoop jar hadoop-lzo-0.4.21-SNAPSHOT.jar com.hadoop.compression.lzo.LzoIndexer /compress-lzo/day=20180717/part-r-00000.lzo

19/04/19 15:26:56 INFO lzo.GPLNativeCodeLoader: Loaded native gpl library from the embedded binaries

19/04/19 15:26:56 INFO lzo.LzoCodec: Successfully loaded & initialized native-lzo library [hadoop-lzo rev f1deea9a313f4017dd5323cb8bbb3732c1aaccc5]

19/04/19 15:26:57 INFO lzo.LzoIndexer: [INDEX] LZO Indexing file /compress-lzo/day=20180717/part-r-00000.lzo, size 0.03 GB...

19/04/19 15:26:58 INFO Configuration.deprecation: hadoop.native.lib is deprecated. Instead, use io.native.lib.available

19/04/19 15:26:58 INFO lzo.LzoIndexer: Completed LZO Indexing in 0.36 seconds (78.54 MB/s). Index size is 2.03 KB.

#查看生成的index文件

[hadoop@hadoop001 ~]$ hadoop fs -ls /compress-lzo/day=20180717/*

-rw-r--r-- 3 hadoop hadoop 29975501 2019-04-19 15:19 /compress-lzo/day=20180717/part-r-00000.lzo

-rw-r--r-- 3 hadoop hadoop 2080 2019-04-19 15:26 /compress-lzo/day=20180717/part-r-00000.lzo.index

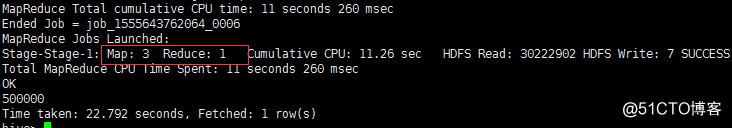

#第二次执行sql语句,查看Map的个数;可以看到Map个数为3;

MapReduce Total cumulative CPU time: 11 seconds 260 msec

Ended Job = job_1555643762064_0006

MapReduce Jobs Launched:

Stage-Stage-1: Map: 3 Reduce: 1 Cumulative CPU: 11.26 sec HDFS Read: 30222902 HDFS Write: 7 SUCCESS

Total MapReduce CPU Time Spent: 11 seconds 260 msec

OK

500000

Time taken: 22.792 seconds, Fetched: 1 row(s)

创建Index之前;Map为1个

创建Index之后;Map为3个

2万+

2万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?