Experiment log (cleared the strong baseline on both leaderboards)

Increase d_model to 512 and use Conformer

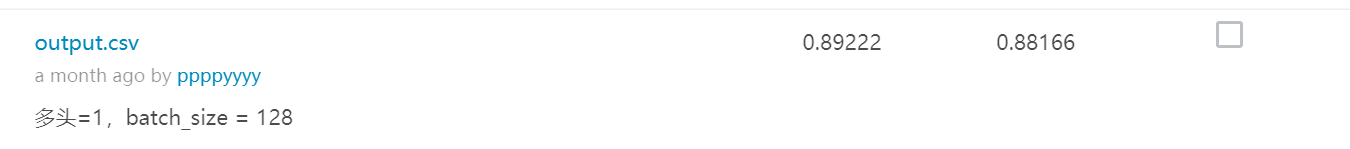

Changing only batch_size=128 and head=1 gets close to the medium baseline

Understanding the code

Sample code

The main thing to understand is dim_feedforward=256; print the model to inspect it.
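
To make the role of dim_feedforward concrete, here is a minimal sketch. It assumes the sample code builds its encoder from PyTorch's `nn.TransformerEncoderLayer`, where `dim_feedforward` is the hidden width of the two linear layers that follow self-attention. The helper `feedforward_param_count` is hypothetical, and `d_model=80` is only an illustrative value, not something stated in these notes.

```python
def feedforward_param_count(d_model, dim_feedforward):
    """Count the parameters of the position-wise feed-forward sublayer.

    The sublayer is Linear(d_model -> dim_feedforward) followed by
    Linear(dim_feedforward -> d_model), each with a bias term.
    """
    linear1 = d_model * dim_feedforward + dim_feedforward  # weights + bias
    linear2 = dim_feedforward * d_model + d_model          # weights + bias
    return linear1 + linear2


# d_model=80 is an assumed illustrative value; 512 is the value used in the experiment log.
for d_model in (80, 512):
    print(d_model, feedforward_param_count(d_model, dim_feedforward=256))
```

When the model is printed, these two layers should show up as the `linear1` and `linear2` entries of each encoder layer, with `out_features` and `in_features` equal to dim_feedforward respectively.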

ConformerBlock parameter settings (copied as-is)

    self.conformer_block = ConformerBlock(
        dim=d_model,
        dim_head=64,
        heads=8,
        ff_mult=4,
        conv_expansion_factor=2,
        conv_kernel_size=31,
        attn_dropout=dropout,
        ff_dropout=dropout,
        conv_dropout=dropout
    )
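
As a rough aid for reading these settings, the sketch below computes the internal layer widths they imply. It is based on my reading of the lucidrains implementation linked in the references and should be checked against that source. `conformer_block_sizes` is a hypothetical helper, not part of the library, and it does not need the library installed.

```python
def conformer_block_sizes(dim, dim_head=64, heads=8, ff_mult=4,
                          conv_expansion_factor=2, conv_kernel_size=31):
    """Derive the internal widths implied by the ConformerBlock arguments.

    Assumption: these formulas mirror the referenced implementation.
    """
    # "Same" padding for the depthwise convolution along the time axis.
    pad_left = conv_kernel_size // 2
    pad_right = pad_left - (conv_kernel_size + 1) % 2
    return {
        # Total width of the query/key/value projections across all heads.
        "attn_inner_dim": dim_head * heads,
        # Hidden width of each feed-forward module.
        "ff_hidden_dim": dim * ff_mult,
        # Channel count inside the convolution module.
        "conv_inner_dim": dim * conv_expansion_factor,
        "conv_padding": (pad_left, pad_right),
    }


# dim=512 matches the d_model used in the experiment log.
for name, value in conformer_block_sizes(dim=512).items():
    print(name, value)
```

With d_model=512, the attention width (64 × 8) equals the model width, which is the usual arrangement for multi-head attention.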

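Bug fixes during the experiment

After installing the conformer library, importing it failed with a No module named error (Jupyter Lab was running in the Python 3.7 environment, but the package had been installed into envs/python38)

Find where pip install placed the conformer package

In Jupyter Lab, inspect sys.path and manually add the directory that contains the package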
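
The steps above can be sketched as follows. Running `pip show conformer` in the environment that did the install prints a `Location:` line with the install directory. The `site_packages` path below is a hypothetical placeholder for that location, and `ensure_on_sys_path` is an illustrative helper.

```python
import importlib.util
import sys


def ensure_on_sys_path(package_dir):
    """Append package_dir to sys.path unless it is already listed.

    Returns True if the path was added, False if it was already present.
    """
    if package_dir not in sys.path:
        sys.path.append(package_dir)
        return True
    return False


# Which interpreter is the notebook kernel actually running?
print(sys.executable)
print(sys.path)

# Hypothetical placeholder: replace with the Location reported by `pip show conformer`.
site_packages = "/path/to/envs/python38/lib/python3.8/site-packages"
ensure_on_sys_path(site_packages)

# Prints a module spec if the package is now importable, otherwise None.
print(importlib.util.find_spec("conformer"))
```

Borrowing packages from a different Python version can break for packages with compiled extensions, so a cleaner alternative is to install with the kernel's own interpreter, that is, the one printed by `sys.executable`, via `python -m pip install conformer`.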

Room for improvement (to do)

- AM-Softmax

- SAP

- I don't yet understand the individual conformer_block parameter settings; I'll come back to this after studying the later material…
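
For the AM-Softmax item, here is a minimal pure-Python sketch of the loss for a single example, following the formulation in the AM-Softmax paper listed in the references: the embedding and class weights are L2-normalized, a margin m is subtracted from the target-class cosine, and the result is scaled by s before the usual cross-entropy. The function names are illustrative, and s=30, m=0.35 are the paper's defaults rather than values tuned for this homework.

```python
import math


def l2_normalize(vector):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def am_softmax_loss(embedding, class_weights, target, s=30.0, m=0.35):
    """Additive-margin softmax loss for one example.

    embedding: speaker embedding (list of floats, non-zero).
    class_weights: one weight vector per class (each non-zero).
    target: index of the correct class.
    """
    e = l2_normalize(embedding)
    cosines = [sum(a * b for a, b in zip(e, l2_normalize(w)))
               for w in class_weights]
    # Subtract the margin only from the target-class cosine, then scale.
    logits = [s * (c - m) if j == target else s * c
              for j, c in enumerate(cosines)]
    # Numerically stable log-sum-exp.
    max_logit = max(logits)
    log_sum = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum - logits[target]


weights = [[1.0, 0.0], [0.0, 1.0]]
print(am_softmax_loss([1.0, 1.0], weights, target=0, m=0.0))  # plain scaled softmax
print(am_softmax_loss([1.0, 1.0], weights, target=0))         # with the margin
```

The margin makes the loss larger for the same embedding, so the model must push the target cosine further above the others before the loss becomes small.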

References

Course homepage: https://speech.ee.ntu.edu.tw/~hylee/ml/2021-spring.php

Hung-yi Lee Deep Learning Spring 2021, HW4, Strong Baseline, using Conformer

Conformer code implementation: https://github.com/lucidrains/conformer/blob/master/conformer/conformer.py

Dataset and hints. The following comes from a bilibili comment: https://www.bilibili.com/video/BV1nL4y1H7Wo?spm_id_from=333.999.0.0

Official course materials (including slides and sample code): https://speech.ee.ntu.edu.tw/~hylee/ml/2021-spring.html

HW4 dataset:

https://pan.baidu.com/s/1qAar83zKKENNfFu3CYQ_tw extraction code: 4o8t (for the Google Drive link, see the sample code)

Conformer:

https://arxiv.org/pdf/2005.08100.pdf https://arxiv.org/pdf/1706.03762.pdf

https://github.com/lucidrains/conformer

SAP:

https://danielpovey.com/files/2018_interspeech_xvector_attention.pdf

(I forgot where the code came from; searching GitHub for Self Attentive Pooling turns up usable implementations)

AMSoftmax & Focal:

https://arxiv.org/pdf/1801.05599.pdf

https://github.com/miquelindia90/DoubleAttentionSpeakerVerification/blob/master/scripts/loss.py

SpecAug:

https://github.com/zcaceres/spec_augment
