import tensorflow as tf

tf.test.is_gpu_available()

import tensorflow as tf

tf.test.is_gpu_available(
    cuda_only=False,
    min_cuda_compute_capability=None
)

- Latest version

import tensorflow as tf

tf.config.list_physical_devices('GPU')
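Because the name of this call has moved between releases, a small wrapper can make the check tolerant of the installed version. The sketch below is only an illustration: `list_gpus` is a hypothetical helper name, and it assumes that releases lacking `tf.config.list_physical_devices` expose the same function under `tf.config.experimental`.

```python
def list_gpus():
    """Return the names of the GPUs TensorFlow can see.

    Returns an empty list when TensorFlow is not installed or no GPU is visible.
    (Hypothetical helper, not part of TensorFlow.)
    """
    try:
        import tensorflow as tf
    except ImportError:
        return []

    # Recent 2.x releases expose the stable name; assumption: older releases
    # provide the same function under tf.config.experimental.
    lister = getattr(tf.config, "list_physical_devices", None)
    if lister is None:
        lister = tf.config.experimental.list_physical_devices

    return [device.name for device in lister("GPU")]


gpus = list_gpus()
print("GPUs visible to TensorFlow:", gpus if gpus else "none")
```

An empty list means TensorFlow sees no GPU at all, which usually points to a CPU-only build or a driver/CUDA installation problem.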

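However, even when the code above runs without errors, the GPU may still be unusable (for example, because the CUDA version or the GPU's compute capability is incompatible). It is therefore worth running a small workload on the GPU to confirm: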

- TensorFlow 2.x (the code below is adapted from the official TensorFlow 2.0 tutorial)

import tensorflow as tf

mnist = tf.keras.datasets.mnist

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train, x_test = x_train / 255.0, x_test / 255.0

model = tf.keras.models.Sequential([

tf.keras.layers.Flatten(input_shape=(28, 28)),

tf.keras.layers.Dense(128, activation='relu'),

tf.keras.layers.Dropout(0.2),

tf.keras.layers.Dense(10, activation='softmax')

])

model.compile(optimizer='adam',loss='sparse_categorical_crossentropy',metrics=['accuracy'])

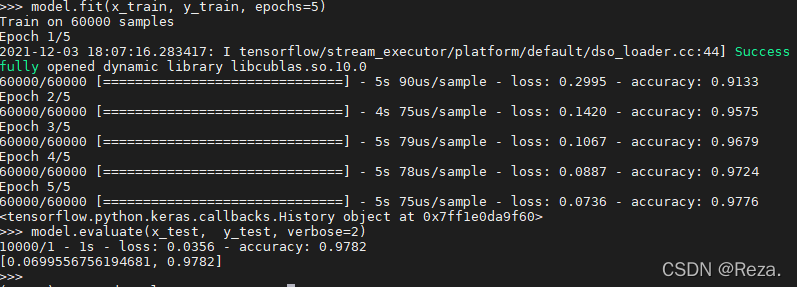

model.fit(x_train, y_train, epochs=5)

model.evaluate(x_test, y_test, verbose=2)

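With a working GPU, this demo normally finishes training in ten-odd seconds. If it does, the GPU can be used for both the forward pass (inference) and backpropagation.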
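When downloading MNIST is inconvenient, a single operation pinned to the GPU gives a quicker signal. The following is a minimal sketch, assuming TensorFlow 2.1 or later in eager mode; `gpu_smoke_test` is a hypothetical helper name, and the measured time includes one-time GPU startup cost, so it should not be read as a benchmark.

```python
import time


def gpu_smoke_test(size=1024):
    """Run one matrix multiplication pinned to the first GPU.

    Returns the elapsed seconds on success, or None if TensorFlow is missing,
    no GPU is visible, or the operation fails on the device.
    (Hypothetical helper; assumes TensorFlow 2.1+ with eager execution.)
    """
    try:
        import tensorflow as tf
    except ImportError:
        return None

    if not tf.config.list_physical_devices("GPU"):
        return None

    try:
        with tf.device("/GPU:0"):
            a = tf.random.uniform((size, size))
            start = time.perf_counter()
            product = tf.matmul(a, a)
            product.numpy()  # copying the result to the host forces the op to finish
            elapsed = time.perf_counter() - start
    except (RuntimeError, tf.errors.OpError) as err:
        # Incompatible CUDA versions or compute capability typically surface here.
        print("GPU op failed:", err)
        return None

    print("matmul ran on", product.device)
    return elapsed


print("GPU smoke test result:", gpu_smoke_test())
```

A `None` result with a non-empty device list is the case described above: the GPU is detected but cannot actually execute kernels.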

- TensorFlow 1.x (the code below is adapted from the blog post "Neural network code using forward and backward propagation")

import tensorflow as tf
from numpy.random import RandomState

batch_size = 8

# Weights of a two-layer network: 2 inputs -> 3 hidden units -> 1 output.
w1 = tf.Variable(tf.random_normal([2, 3], stddev=1, seed=1))
w2 = tf.Variable(tf.random_normal([3, 1], stddev=1, seed=1))

# Placeholders for a batch of inputs and labels.
x = tf.placeholder(tf.float32, shape=(None, 2), name='x-input')
y_ = tf.placeholder(tf.float32, shape=(None, 1), name='y-input')

# Forward pass (no activation function).
a = tf.matmul(x, w1)
y = tf.matmul(a, w2)

# Loss and optimizer; minimize() adds the backpropagation ops.
cross_entropy = -tf.reduce_mean(y_ * tf.log(tf.clip_by_value(y, 1e-10, 1.0)))
train_step = tf.train.AdamOptimizer(0.001).minimize(cross_entropy)

# Synthetic dataset: the label is 1 when x1 + x2 < 1, otherwise 0.
rdm = RandomState(1)
dataset_size = 128
X = rdm.rand(dataset_size, 2)
Y = [[int(x1 + x2 < 1)] for (x1, x2) in X]

with tf.Session() as sess:
    init_op = tf.global_variables_initializer()
    sess.run(init_op)

    # Weights before training.
    print(sess.run(w1))
    print(sess.run(w2))

    STEPS = 5000
    for i in range(STEPS):
        # Select the next mini-batch, wrapping around the dataset.
        start = (i * batch_size) % dataset_size
        end = min(start + batch_size, dataset_size)
        sess.run(train_step, feed_dict={x: X[start:end], y_: Y[start:end]})

        if i % 1000 == 0:
            total_cross_entropy = sess.run(cross_entropy, feed_dict={x: X, y_: Y})
            print("After %d training step(s), cross entropy on all data is %g" % (i, total_cross_entropy))

    # Weights after training.
    print(sess.run(w1))
    print(sess.run(w2))
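In session-based code, one way to see where each op actually runs is to enable device placement logging on the session. The sketch below is an illustration rather than part of the referenced blog code: `run_with_placement_log` is a hypothetical helper, and it goes through `tf.compat.v1` so it can also run under TensorFlow 2.x; in plain 1.x code the same options are available as `tf.ConfigProto` and `tf.Session`.

```python
def run_with_placement_log():
    """Run a tiny graph with device placement logging enabled.

    Returns the matmul result as a nested list, or None if TensorFlow is
    not installed. (Hypothetical helper for illustration.)
    """
    try:
        import tensorflow as tf
    except ImportError:
        return None

    tf1 = tf.compat.v1  # TF 1.x-style API, also available in TF 2.x

    # Build the ops in an explicit graph so eager mode does not have to be
    # disabled globally.
    graph = tf.Graph()
    with graph.as_default():
        a = tf1.placeholder(tf.float32, shape=(None, 2), name="a")
        w = tf.constant([[1.0], [2.0]], name="w")
        y = tf.matmul(a, w, name="y")

    # log_device_placement writes the device chosen for each op to the log;
    # allow_soft_placement falls back to the CPU when no GPU kernel is usable.
    config = tf1.ConfigProto(log_device_placement=True, allow_soft_placement=True)
    with tf1.Session(graph=graph, config=config) as sess:
        return sess.run(y, feed_dict={a: [[1.0, 1.0]]}).tolist()


print(run_with_placement_log())
```

If the logged device for the `y` op names a GPU, the session is really executing on it; a CPU device there means the computation silently fell back.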
