目录

须知

\qquad 本文不介绍Scrapy安装配置,不介绍Scrapy的架构,默认具备Scrapy初级基础,默认具备一定的爬虫知识.

\qquad 本文以爬取古风漫画网中漫画咒术回战为例作分析,实际上代码可爬取网站内任意漫画.

分析

A . \mathcal{A}. A.目标

https://www.gufengmh8.com/manhua/zhoushuhuizhan/

B . \mathcal{B}. B.子目标

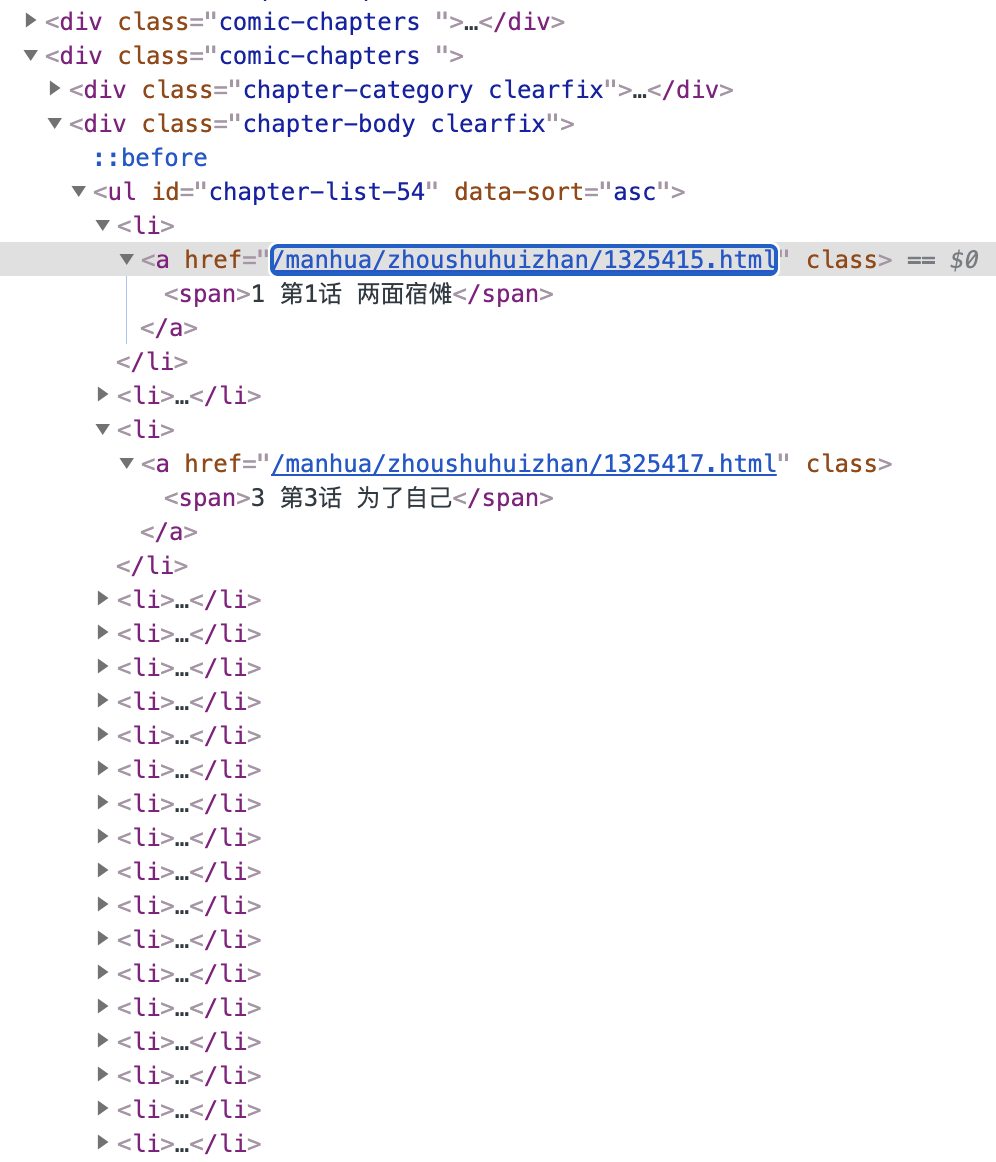

C . \mathcal{C}. C.子目标分析

https://www.gufengmh8.com/manhua/zhoushuhuizhan/1325415.html

\qquad

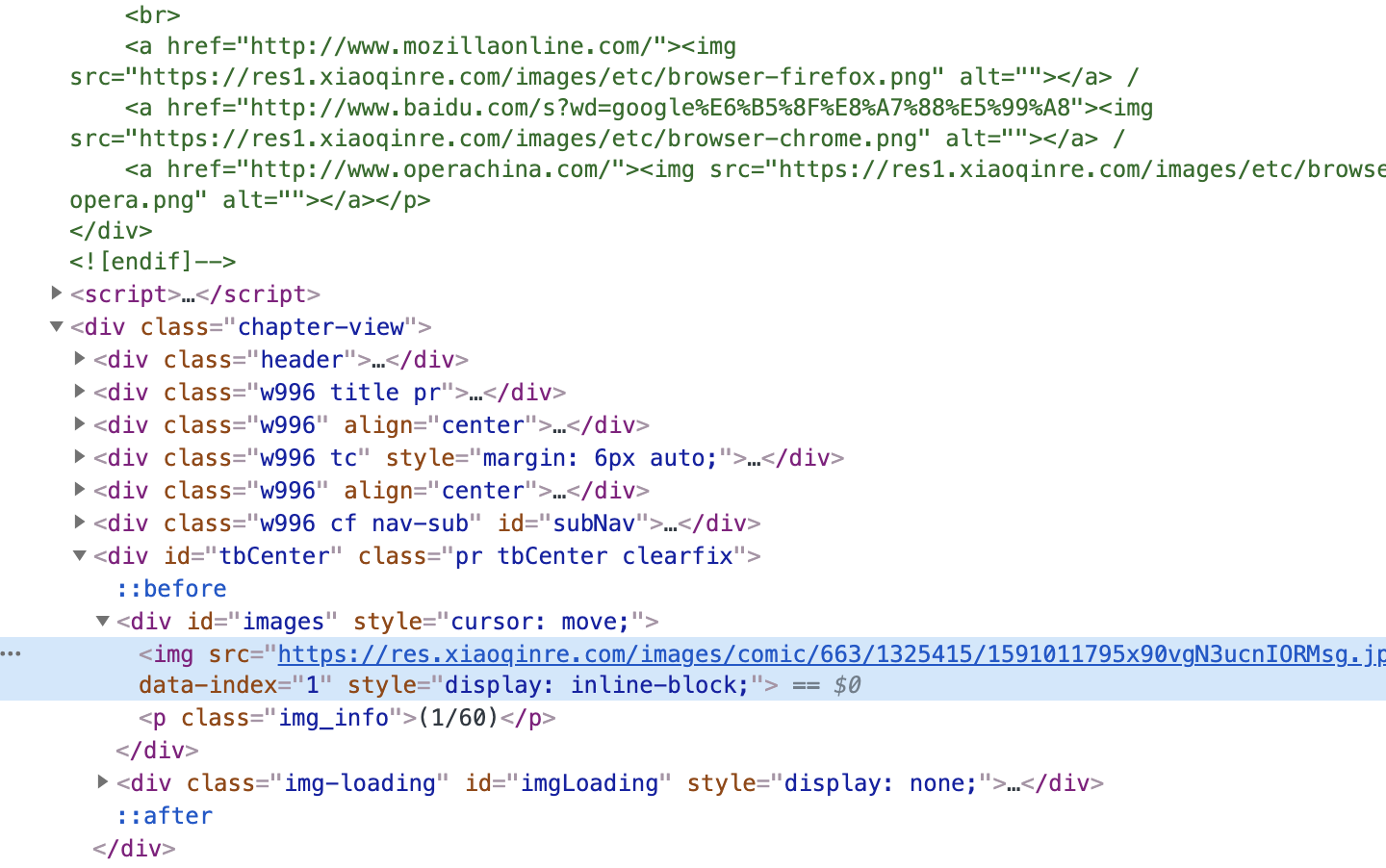

某一张图片如下,到此可以使用普通的爬虫写法完成爬取,然而我们有更为方便的方法.

\qquad

可以看到script里包含了当前子目标所有的图片,正则提取后与母网址join即可,至此分析结束.

manhua.py

\qquad 主逻辑部分.

# -*- coding: utf-8 -*-

import re

import base64

import scrapy

from urllib import parse

from scrapy import Request

from PicSpider.items import PicItem

class ManhuaSpider(scrapy.Spider):

name = 'manhua'

start_urls = ['https://www.gufengmh8.com/manhua/zhoushuhuizhan/']

def parse(self, response):

li_list = response.xpath('//*[@id="chapter-list-54"]//li')

for idx, li in enumerate(li_list):

child_url = li.xpath('./a/@href').extract_first("")

dir_name = str(li.xpath('./a/span/text()').extract_first(""))

yield Request(url=parse.urljoin(response.url, child_url), callback=self.parse_detail, meta={'dir_name': dir_name})

def parse_detail(self, response):

raw_data = response.xpath('/html/body/script[1]').extract_first("")

urls = re.match(r'.*var chapterImages = \[(.*?)]', raw_data).group(1)

urls = re.sub(r'"', r'', urls)

url_list = urls.split(",")

chapter_path = re.match(r'.*var chapterPath = "(.*?)"', raw_data).group(1)

chapter_path = "https://res.xiaoqinre.com/" + chapter_path

url_list = [chapter_path + url for url in url_list]

pic_item = PicItem()

pic_item["pic_url"] = url_list

pic_item["dir_name"] = response.meta["dir_name"]

yield pic_item

items.py

\qquad

定义简单的PicItem类.

# -*- coding: utf-8 -*-

# Define here the models for your scraped items

#

# See documentation in:

# https://docs.scrapy.org/en/latest/topics/items.html

import scrapy

class PicspiderItem(scrapy.Item):

# define the fields for your item here like:

# name = scrapy.Field()

pass

class PicItem(scrapy.Item):

pic_url = scrapy.Field()

dir_name = scrapy.Field()

pipelines.py

\qquad 规范输出,图片重命名不可或缺.

# -*- coding: utf-8 -*-

# Define your item pipelines here

#

# Don't forget to add your pipeline to the ITEM_PIPELINES setting

# See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html

from scrapy import Request

import re

from scrapy.pipelines.images import ImagesPipeline

class PicspiderPipeline(object):

def process_item(self, item, spider):

return item

class ImagesRenamePipeline(ImagesPipeline):

# 重写get_media_requests方法

def get_media_requests(self, item, info):

for idx, image_url in enumerate(item['pic_url']):

# meta里面的数据是从spider获取,然后通过meta传递给下面的file_path方法

yield Request(image_url, meta={'dir_name': item['dir_name'], 'idx': str(idx+1)})

# 重写file_path函数为图片划分目录并重命名

def file_path(self, request, response=None, info=None):

# 图片以索引命名

image_name = request.meta['idx']

# 接收上面meta传递过来的目录名称

dir_name = request.meta['dir_name']

# 过滤Windows命名非法字符串

dir_name = re.sub(r'[?\\*|“<>:/]', '', dir_name)

# 分文件夹存储的关键:{0}对应着dir_name, {1}对应着image_name

filename = u'{0}/{1}.png'.format(dir_name, image_name)

return filename

settings.py

\qquad

几处需要注意的设置,如代理USER_AGENT,ROBOTSTXT_OBEY,DOWNLOAD_DELAY,ITEM_PIPELINES以及末尾的图片下载设置,同时需要在当前项目目录创建images文件夹以供图片存储,当然你可以自定义其他位置 (需要更改代码相应处配置).

# -*- coding: utf-8 -*-

import sys

import os

from fake_useragent import UserAgent

ua = UserAgent()

# Scrapy settings for PicSpider project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://docs.scrapy.org/en/latest/topics/settings.html

# https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

# https://docs.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'PicSpider'

SPIDER_MODULES = ['PicSpider.spiders']

NEWSPIDER_MODULE = 'PicSpider.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent

USER_AGENT = ua.random

# Obey robots.txt rules

ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

DOWNLOAD_DELAY = 0.01

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

#}

# Enable or disable spider middlewares

# See https://docs.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# 'PicSpider.middlewares.PicspiderSpiderMiddleware': 543,

#}

# Enable or disable downloader middlewares

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

DOWNLOADER_MIDDLEWARES = {

'PicSpider.middlewares.PicspiderDownloaderMiddleware': 543,

}

# Enable or disable extensions

# See https://docs.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

#}

# Configure item pipelines

# See https://docs.scrapy.org/en/latest/topics/item-pipeline.html

ITEM_PIPELINES = {

'PicSpider.pipelines.ImagesRenamePipeline': 1,

'PicSpider.pipelines.PicspiderPipeline': 300,

}

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

# 图片下载设置

IMAGES_URLS_FIELD = "pic_url"

project_dir = os.path.dirname(os.path.abspath(__file__))

IMAGES_STORE = os.path.join(project_dir, 'images')

middlewares.py

\qquad

如果不使用IP代理,middlewares.py采取默认设置即可.

main.py

\qquad

用于Pycharm调试.

from scrapy.cmdline import execute

import sys

import os

# 添加路径

sys.path.append(os.path.dirname(os.path.abspath(__file__)))

# 执行爬虫语句

execute(["scrapy", "crawl", "manhua"])

2805

2805

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?