Getting Started with PyTorch (Part 3)

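Using and Modifying Existing Network Models

- Take the VGG16 model as an example: its final layer classifies into 1000 classes, but the CIFAR10 dataset needs 10 classes, so the model has to be modified.

- Add a linear layer directly

- Modify the parameters of the last linear layer

- Code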

# torchvision.models.vgg16(pretrained: bool = False, progress: bool = True, **kwargs)
# pretrained (bool): If True, returns a model pre-trained on ImageNet
# progress (bool): If True, displays a progress bar of the download to stderr
# pretrained=True: the downloaded parameters have already been trained on ImageNet (pre-trained)
# pretrained=False: the parameters are freshly initialized and have not been trained
# progress=True: show a download progress bar
# Note: newer torchvision releases deprecate `pretrained` in favor of the `weights` argument.
import torchvision
from torch import nn

# "../" refers to the parent directory; "./" is usually enough
# train_data = torchvision.datasets.ImageNet("./ImageNet", split="train", transform=torchvision.transforms.ToTensor(), download=True)
# RuntimeError: The archive ILSVRC2012_devkit_t12.tar.gz is not present in the root directory or is corrupted.
# You need to download it externally and place it in ./ImageNet.

vgg16_false = torchvision.models.vgg16(pretrained=False)
vgg16_true = torchvision.models.vgg16(pretrained=True)
# print(vgg16_true)

train_data = torchvision.datasets.CIFAR10("./dataset", train=True, download=True,
                                          transform=torchvision.transforms.ToTensor())

# How to adapt an existing network to your own task:
# the pre-trained VGG16 outputs 1000 classes, while CIFAR10 has 10 classes.

# Approach 1: add a Linear layer at the top level of the model to map 1000 classes to 10.
# Caveat: VGG's forward() only calls features, avgpool and classifier, so a layer registered
# here appears in print(vgg16_true) but is not applied in the forward pass.
# vgg16_true.add_module("add_linear", nn.Linear(1000, 10))
# print(vgg16_true)

# Approach 2: append the Linear layer inside classifier (a Sequential, so it is applied)
# vgg16_true.classifier.add_module("add_linear", nn.Linear(1000, 10))
# print(vgg16_true)

# Approach 3: replace the last Linear layer of VGG16 directly
print(vgg16_false)
vgg16_false.classifier[6] = nn.Linear(4096, 10)
print(vgg16_false)
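To make the difference between the three approaches concrete without downloading VGG16, here is a minimal sketch on a small stand-in network. `TinyNet` is a hypothetical class invented for this illustration; it only mimics the `features` / `classifier` layout, and its layer sizes are arbitrary assumptions.

```python
import torch
from torch import nn


class TinyNet(nn.Module):
    """Hypothetical stand-in that mimics VGG16's features/classifier layout."""

    def __init__(self) -> None:
        super().__init__()
        self.features = nn.Sequential(nn.Conv2d(3, 4, kernel_size=3, padding=1), nn.ReLU())
        self.classifier = nn.Sequential(nn.Flatten(), nn.Linear(4 * 8 * 8, 1000))

    def forward(self, x):
        # forward only calls the submodules it names explicitly
        return self.classifier(self.features(x))


x = torch.ones(2, 3, 8, 8)

# Approach 1: a layer registered on the top-level module is never called by forward
net_a = TinyNet()
net_a.add_module("add_linear", nn.Linear(1000, 10))
shape_a = net_a(x).shape

# Approach 2: a layer appended to the Sequential classifier is applied
net_b = TinyNet()
net_b.classifier.add_module("add_linear", nn.Linear(1000, 10))
shape_b = net_b(x).shape

# Approach 3: replace the last Linear layer in place
net_c = TinyNet()
net_c.classifier[1] = nn.Linear(4 * 8 * 8, 10)
shape_c = net_c(x).shape

print(shape_a, shape_b, shape_c)
```

In this sketch only the second and third variants produce 10 outputs per sample; the first still produces 1000, because the extra layer is registered but never used.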

Saving and Loading Network Models

- Method 1: network structure + network parameters

- Method 2: network parameters only, as a dictionary

Saving

# Saving a model
import torch
import torchvision
from torch import nn

vgg16_false = torchvision.models.vgg16(pretrained=False)

# Save method 1: model structure + parameters
# torch.save(vgg16_false, "vgg16_method1.pth")

# Save method 2: parameters only, as a dictionary (officially recommended)
# torch.save(vgg16_false.state_dict(), "vgg16_method2.pth")


# Pitfall: saving a custom-defined model with method 1
class MyModule(nn.Module):
    def __init__(self) -> None:
        super().__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=3)

    def forward(self, x):
        x = self.conv1(x)
        return x


myModule = MyModule()

# Save with method 1
torch.save(myModule, "myModule_method1.pth")

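Loading

# Loading a model
import torch
import torchvision
from torch import nn
from model_save import *

# Load method 1, matching save method 1
# model = torch.load("vgg16_method1.pth")
# print(model)

# Load method 2, matching save method 2: load the parameter dictionary,
# create a fresh network structure, then load the parameters into it
# model_dict = torch.load("vgg16_method2.pth")
# print(model_dict)
# vgg16 = torchvision.models.vgg16(pretrained=False)
# vgg16.load_state_dict(model_dict)
# print(vgg16)

# When loading a custom-defined model with method 1, the class definition must also be
# available in this script (the instantiation is not needed); otherwise loading fails.
# If you do not want to copy the class definition, `from model_save import *` works as well.
# class MyModule(nn.Module):
#     def __init__(self) -> None:
#         super().__init__()
#         self.conv1 = nn.Conv2d(3, 64, kernel_size=3)
#
#     def forward(self, x):
#         x = self.conv1(x)
#         return x

myModule = torch.load("myModule_method1.pth")
# Without the model definition, this raises:
# AttributeError: Can't get attribute 'MyModule' on <module '__main__' from xxx
# Note: recent PyTorch releases default torch.load to weights_only=True, in which case
# loading a whole pickled model like this may also need weights_only=False.
print(myModule)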
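For a custom model, method 2 avoids the pickling pitfall because only tensors are stored. A minimal round-trip sketch follows; the temporary directory and the file name `myModule_method2.pth` are assumptions made for this example, not part of the original scripts.

```python
import os
import tempfile

import torch
from torch import nn


class MyModule(nn.Module):
    def __init__(self) -> None:
        super().__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=3)

    def forward(self, x):
        return self.conv1(x)


source = MyModule()

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "myModule_method2.pth")  # hypothetical file name
    # Save only the parameter dictionary
    torch.save(source.state_dict(), path)
    # Rebuild the structure from the class, then load the parameters into it
    restored = MyModule()
    restored.load_state_dict(torch.load(path))

weights_match = torch.equal(source.conv1.weight, restored.conv1.weight)
print(weights_match)
```

The class definition is still needed to rebuild the structure, but here it is used explicitly rather than looked up implicitly while unpickling.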

The Complete Model Training Routine

Routine (1)

- Code

- model.py

import torch
from torch import nn


class MyModule(nn.Module):
    def __init__(self) -> None:
        super().__init__()
        self.model = nn.Sequential(
            nn.Conv2d(in_channels=3, out_channels=32, kernel_size=5, stride=1, padding=2),
            nn.MaxPool2d(kernel_size=2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64*4*4, 64),
            nn.Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


# Quick test of the network
if __name__ == '__main__':
    myModule = MyModule()
    # Convolution layers expect input of shape (N, C, H, W)
    input = torch.ones((64, 3, 32, 32))
    output = myModule(input)
    print(output.shape)
    # torch.Size([64, 10])
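The `64*4*4` input size of the first Linear layer follows from the layer shapes: each 5x5 convolution with padding 2 keeps the spatial size, and each pooling step halves it (32 to 16 to 8 to 4). A small sketch that traces the shapes layer by layer, assuming the same 32x32 RGB input as CIFAR10:

```python
import torch
from torch import nn

# The convolution/pooling part of the model, up to and including Flatten
layers = nn.Sequential(
    nn.Conv2d(3, 32, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 32, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Flatten(),
)

x = torch.ones(1, 3, 32, 32)
shapes = []
with torch.no_grad():
    for layer in layers:
        x = layer(x)
        shapes.append(tuple(x.shape))
        print(type(layer).__name__, tuple(x.shape))
```

The last printed shape has 1024 features per sample, which is 64 channels times a 4x4 feature map.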

- train.py

import torch.optim
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

from model import *

# Prepare the datasets
train_data = torchvision.datasets.CIFAR10(root="./dataset", train=True, transform=torchvision.transforms.ToTensor(),
                                          download=True)
test_data = torchvision.datasets.CIFAR10(root="./dataset", train=False, transform=torchvision.transforms.ToTensor(),
                                         download=True)

# Get the dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
print("Training dataset size: {}".format(train_data_size))
print("Test dataset size: {}".format(test_data_size))

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# Build the neural network
# By convention the model is defined in a separate model.py, together with a quick test,
# and imported here with: from model import *

# Create the model
myModule = MyModule()

# Loss function
loss_fn = nn.CrossEntropyLoss()

# Optimizer
learning_rate = 1e-2  # 1 x 10 ^ (-2) = 0.01
optim = torch.optim.SGD(myModule.parameters(), lr=learning_rate)

# Training bookkeeping
# Number of training steps so far
total_train_step = 0
# Number of test rounds so far
total_test_step = 0
# Number of epochs
epoch = 2

# Add TensorBoard logging
writer = SummaryWriter("logs")

for i in range(epoch):
    print("__________Epoch {} training starts__________".format(i + 1))

    # Training phase
    for data in train_dataloader:
        imgs, targets = data
        outputs = myModule(imgs)
        loss = loss_fn(outputs, targets)

        # Optimize the model
        # Clear the gradients from the previous step
        optim.zero_grad()
        # Backpropagate to compute the gradients
        loss.backward()
        # Update the parameters from the gradients
        optim.step()

        total_train_step += 1
        if total_train_step % 100 == 0:
            # item() converts a single-element tensor into a plain Python number
            print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # After each epoch, run the network over the test dataset to evaluate it
    # Test phase: no parameter updates, so gradients are not needed
    total_test_loss = 0
    with torch.no_grad():
        for data in test_dataloader:
            imgs, targets = data
            outputs = myModule(imgs)
            loss = loss_fn(outputs, targets)
            total_test_loss += loss.item()
    print("Loss on the whole test set: {}".format(total_test_loss))
    writer.add_scalar("total_test_loss", total_test_loss, total_test_step)
    total_test_step += 1

    # Save the model after each epoch
    torch.save(myModule, "myModule_{}.pth".format(i + 1))
    print("Model saved after epoch {}".format(i + 1))

writer.close()

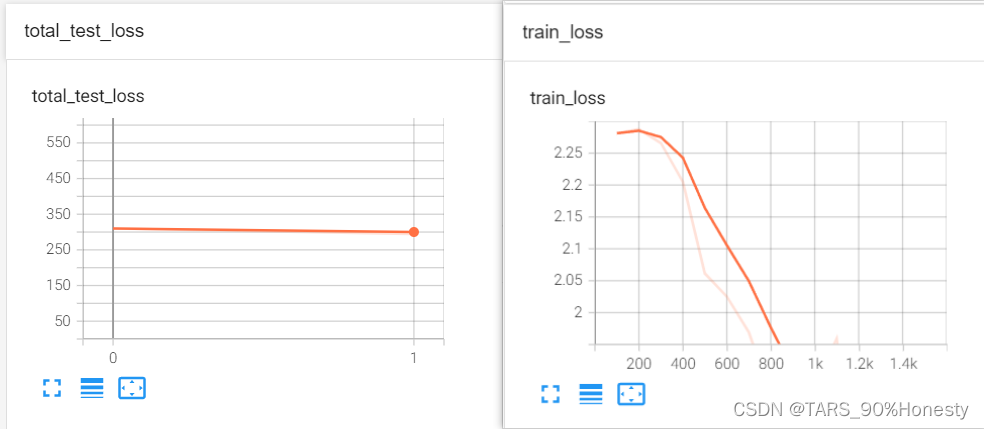

- TensorBoard display
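The core of the training loop is the three-call sequence `zero_grad()`, `backward()`, `step()`. The sketch below isolates it on synthetic data so it runs in seconds without CIFAR10; the single Linear layer, the random batch, and the learning rate of 0.1 are all assumptions chosen for the illustration, not the model used in this tutorial.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical tiny classifier and one fixed random batch
model = nn.Linear(16, 10)
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

inputs = torch.randn(32, 16)
targets = torch.randint(0, 10, (32,))

losses = []
for step in range(20):
    outputs = model(inputs)
    loss = loss_fn(outputs, targets)
    optimizer.zero_grad()  # clear gradients left over from the previous step
    loss.backward()        # compute gradients of the loss w.r.t. the parameters
    optimizer.step()       # update the parameters using those gradients
    losses.append(loss.item())

print(losses[0], losses[-1])
```

Repeating the step on the same batch should drive the loss down, which is a quick sanity check that the three calls are in the right order.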

Routine (2)

- Improvement: add the accuracy on the test dataset

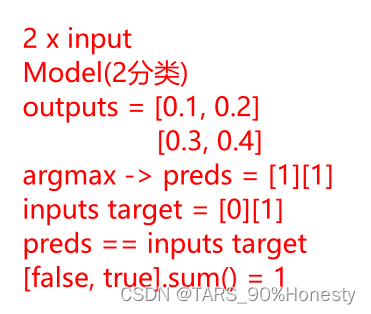

- How the accuracy is computed

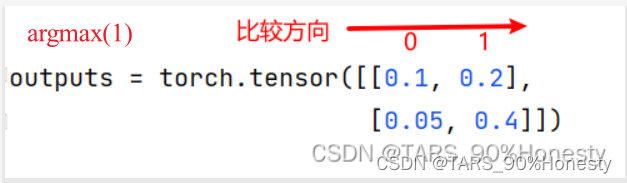

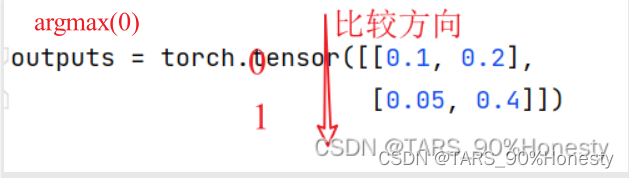

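The argmax function converts outputs from score (probability) form into preds, the index of the highest score along the given dimension.

import torch

outputs = torch.tensor([[0.1, 0.2],
                        [0.05, 0.4]])
print(outputs.argmax(1))  # tensor([1, 1]): index of the largest value in each row
print(outputs.argmax(0))  # tensor([0, 1]): index of the largest value in each column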

- Code for the accuracy idea above

import torch

outputs = torch.tensor([[0.1, 0.2],

[0.3, 0.4]])

print(outputs.argmax(1)) # tensor([1, 1])

preds = outputs.argmax(1)

targets = torch.tensor([0, 1])

print(preds == targets) # tensor([False, True])

print((preds == targets).sum()) # tensor(1)
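Over a whole test set, the per-batch counts are summed and divided by the number of samples. A minimal sketch with two hand-written batches; `count_correct` is a hypothetical helper name and the second batch is made-up data for illustration.

```python
import torch


def count_correct(outputs, targets):
    """Return how many predictions in the batch match their targets."""
    return (outputs.argmax(1) == targets).sum().item()


# Two small batches of (outputs, targets); values are illustrative only
batches = [
    (torch.tensor([[0.1, 0.2], [0.3, 0.4]]), torch.tensor([0, 1])),
    (torch.tensor([[0.9, 0.1], [0.2, 0.8]]), torch.tensor([0, 1])),
]

total_correct = 0
total_samples = 0
for outputs, targets in batches:
    total_correct += count_correct(outputs, targets)
    total_samples += targets.size(0)

accuracy = total_correct / total_samples
print(accuracy)  # 0.75
```

Calling `.item()` on each count keeps the running total a plain Python integer, so the final ratio prints as a float rather than a tensor.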

- Improving on Routine (1) above

# Add the accuracy on the whole test set, total_test_accuracy
import torch.optim
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

from model import *

# Prepare the datasets
train_data = torchvision.datasets.CIFAR10(root="./dataset", train=True, transform=torchvision.transforms.ToTensor(),
                                          download=True)
test_data = torchvision.datasets.CIFAR10(root="./dataset", train=False, transform=torchvision.transforms.ToTensor(),
                                         download=True)

# Get the dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
print("Training dataset size: {}".format(train_data_size))
print("Test dataset size: {}".format(test_data_size))

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# Build the neural network
# By convention the model is defined in a separate model.py, together with a quick test,
# and imported here with: from model import *

# Create the model
myModule = MyModule()

# Loss function
loss_fn = nn.CrossEntropyLoss()

# Optimizer
learning_rate = 1e-2  # 1 x 10 ^ (-2) = 0.01
optim = torch.optim.SGD(myModule.parameters(), lr=learning_rate)

# Training bookkeeping
# Number of training steps so far
total_train_step = 0
# Number of test rounds so far
total_test_step = 0
# Number of epochs
epoch = 2

# Add TensorBoard logging
writer = SummaryWriter("logs")

for i in range(epoch):
    print("__________Epoch {} training starts__________".format(i + 1))

    # Training phase
    for data in train_dataloader:
        imgs, targets = data
        outputs = myModule(imgs)
        loss = loss_fn(outputs, targets)

        # Optimize the model
        # Clear the gradients from the previous step
        optim.zero_grad()
        # Backpropagate to compute the gradients
        loss.backward()
        # Update the parameters from the gradients
        optim.step()

        total_train_step += 1
        if total_train_step % 100 == 0:
            # item() converts a single-element tensor into a plain Python number
            print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # After each epoch, run the network over the test dataset and evaluate it by its accuracy there
    # Test phase: no parameter updates, so gradients are not needed
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():
        for data in test_dataloader:
            imgs, targets = data
            outputs = myModule(imgs)
            loss = loss_fn(outputs, targets)
            total_test_loss += loss.item()
            # Number of correct predictions in this batch, as a plain Python int
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy += accuracy
    print("Loss on the whole test set: {}".format(total_test_loss))
    print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("total_test_loss", total_test_loss, total_test_step)
    writer.add_scalar("total_test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step += 1

    # Save the model after each epoch
    torch.save(myModule, "myModule_{}.pth".format(i + 1))
    print("Model saved after epoch {}".format(i + 1))

writer.close()

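Routine (3)

- Check whether your network contains special layers. If it does, the two calls below are required; if it does not, calling them is harmless, and adding them anyway is recommended for completeness.

- Assume the instantiated network is myModule

- myModule.train() puts the network in training mode

- myModule.eval() puts the network in evaluation mode

- According to the official documentation, these calls only affect certain layers, such as Dropout and BatchNorm: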

Sets the module in training mode.

This has any effect only on certain modules. See documentations of particular modules for details of their behaviors in training/evaluation mode, if they are affected, e.g. Dropout, BatchNorm, etc.
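A quick way to see the effect is to pass the same input through a Dropout layer in both modes. This is a minimal sketch; the drop probability of 0.5 and the input of ones are arbitrary choices for the illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)

layer = nn.Dropout(p=0.5)
x = torch.ones(1000)

layer.train()         # training mode: elements are randomly zeroed, survivors are scaled by 1 / (1 - p)
train_out = layer(x)

layer.eval()          # evaluation mode: Dropout passes the input through unchanged
eval_out = layer(x)

print((train_out == 0).sum().item())  # number of zeroed elements in training mode
print(torch.equal(eval_out, x))       # True
```

A network that contains such a layer would therefore give noisy, rescaled outputs at test time if `eval()` were forgotten.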

- Improving on Routine (2) above

# Add myModule.train() and myModule.eval()
# They affect special layers in the network, such as Dropout and BatchNorm
# Without such layers the calls do no harm; adding them is recommended for completeness.
import torch.optim
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

from model import *

# Prepare the datasets
train_data = torchvision.datasets.CIFAR10(root="./dataset", train=True, transform=torchvision.transforms.ToTensor(),
                                          download=True)
test_data = torchvision.datasets.CIFAR10(root="./dataset", train=False, transform=torchvision.transforms.ToTensor(),
                                         download=True)

# Get the dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
print("Training dataset size: {}".format(train_data_size))
print("Test dataset size: {}".format(test_data_size))

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# Build the neural network
# By convention the model is defined in a separate model.py, together with a quick test,
# and imported here with: from model import *

# Create the model
myModule = MyModule()

# Loss function
loss_fn = nn.CrossEntropyLoss()

# Optimizer
learning_rate = 1e-2  # 1 x 10 ^ (-2) = 0.01
optim = torch.optim.SGD(myModule.parameters(), lr=learning_rate)

# Training bookkeeping
# Number of training steps so far
total_train_step = 0
# Number of test rounds so far
total_test_step = 0
# Number of epochs
epoch = 2

# Add TensorBoard logging
writer = SummaryWriter("logs")

for i in range(epoch):
    print("__________Epoch {} training starts__________".format(i + 1))

    # Training phase
    myModule.train()
    for data in train_dataloader:
        imgs, targets = data
        outputs = myModule(imgs)
        loss = loss_fn(outputs, targets)

        # Optimize the model
        # Clear the gradients from the previous step
        optim.zero_grad()
        # Backpropagate to compute the gradients
        loss.backward()
        # Update the parameters from the gradients
        optim.step()

        total_train_step += 1
        if total_train_step % 100 == 0:
            # item() converts a single-element tensor into a plain Python number
            print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # After each epoch, run the network over the test dataset and evaluate it by its accuracy there
    # Test phase: no parameter updates, so gradients are not needed
    myModule.eval()
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():
        for data in test_dataloader:
            imgs, targets = data
            outputs = myModule(imgs)
            loss = loss_fn(outputs, targets)
            total_test_loss += loss.item()
            # Number of correct predictions in this batch, as a plain Python int
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy += accuracy
    print("Loss on the whole test set: {}".format(total_test_loss))
    print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("total_test_loss", total_test_loss, total_test_step)
    writer.add_scalar("total_test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step += 1

    # Save the model after each epoch
    torch.save(myModule, "myModule_{}.pth".format(i + 1))
    print("Model saved after epoch {}".format(i + 1))

writer.close()

GPU Training

Method 1

- Which parts are moved to the GPU?

- The network model

- The data (inputs and labels)

- The loss function

- Call .cuda() on each of them

- Code

if torch.cuda.is_available():
    myModule = myModule.cuda()

if torch.cuda.is_available():
    loss_fn = loss_fn.cuda()

if torch.cuda.is_available():
    imgs = imgs.cuda()
    targets = targets.cuda()

- Routine (3) with GPU support added, plus timing of the run

# Network model
# Data (inputs, labels)
# Loss function
# .cuda()
import time

import torch.optim
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

# Prepare the datasets
train_data = torchvision.datasets.CIFAR10(root="./dataset", train=True, transform=torchvision.transforms.ToTensor(),
                                          download=True)
test_data = torchvision.datasets.CIFAR10(root="./dataset", train=False, transform=torchvision.transforms.ToTensor(),
                                         download=True)

# Get the dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
print("Training dataset size: {}".format(train_data_size))
print("Test dataset size: {}".format(test_data_size))

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)


# Build the neural network
class MyModule(nn.Module):
    def __init__(self) -> None:
        super().__init__()
        self.model = nn.Sequential(
            nn.Conv2d(in_channels=3, out_channels=32, kernel_size=5, stride=1, padding=2),
            nn.MaxPool2d(kernel_size=2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64*4*4, 64),
            nn.Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


# Create the model
myModule = MyModule()
if torch.cuda.is_available():
    myModule = myModule.cuda()

# Loss function
loss_fn = nn.CrossEntropyLoss()
if torch.cuda.is_available():
    loss_fn = loss_fn.cuda()

# Optimizer
learning_rate = 1e-2  # 1 x 10 ^ (-2) = 0.01
optim = torch.optim.SGD(myModule.parameters(), lr=learning_rate)

# Training bookkeeping
# Number of training steps so far
total_train_step = 0
# Number of test rounds so far
total_test_step = 0
# Number of epochs
epoch = 2

# Add TensorBoard logging
writer = SummaryWriter("logs")

start_time = time.time()

for i in range(epoch):
    print("__________Epoch {} training starts__________".format(i + 1))

    # Training phase
    myModule.train()
    for data in train_dataloader:
        imgs, targets = data
        if torch.cuda.is_available():
            imgs = imgs.cuda()
            targets = targets.cuda()
        outputs = myModule(imgs)
        loss = loss_fn(outputs, targets)

        # Optimize the model
        # Clear the gradients from the previous step
        optim.zero_grad()
        # Backpropagate to compute the gradients
        loss.backward()
        # Update the parameters from the gradients
        optim.step()

        total_train_step += 1
        if total_train_step % 100 == 0:
            end_time = time.time()
            # Seconds elapsed since training started
            print(end_time - start_time)
            # item() converts a single-element tensor into a plain Python number
            print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # After each epoch, run the network over the test dataset and evaluate it by its accuracy there
    # Test phase: no parameter updates, so gradients are not needed
    myModule.eval()
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():
        for data in test_dataloader:
            imgs, targets = data
            if torch.cuda.is_available():
                imgs = imgs.cuda()
                targets = targets.cuda()
            outputs = myModule(imgs)
            loss = loss_fn(outputs, targets)
            total_test_loss += loss.item()
            # Number of correct predictions in this batch, as a plain Python int
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy += accuracy
    print("Loss on the whole test set: {}".format(total_test_loss))
    print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("total_test_loss", total_test_loss, total_test_step)
    writer.add_scalar("total_test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step += 1

    # Save the model after each epoch
    torch.save(myModule, "myModule_{}.pth".format(i + 1))
    print("Model saved after epoch {}".format(i + 1))

writer.close()

- If your computer has no GPU, you can train the code on Google Colab.

Method 2 (more common)

- .to(device)

- device = torch.device("cpu")

- device = torch.device("cuda")

- When the machine has several graphics cards, select a specific one:

- device = torch.device("cuda:0")

- device = torch.device("cuda:1")

- Code

# Network model
# Data (inputs, labels)
# Loss function
# .to(device)
# device = torch.device("cpu")
# device = torch.device("cuda")
# When the machine has several graphics cards, select a specific one:
# device = torch.device("cuda:0")
# device = torch.device("cuda:1")
import time

import torch.optim
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

# Define the training device
# device = torch.device("cuda")
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Prepare the datasets
train_data = torchvision.datasets.CIFAR10(root="./dataset", train=True, transform=torchvision.transforms.ToTensor(),
                                          download=True)
test_data = torchvision.datasets.CIFAR10(root="./dataset", train=False, transform=torchvision.transforms.ToTensor(),
                                         download=True)

# Get the dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
print("Training dataset size: {}".format(train_data_size))
print("Test dataset size: {}".format(test_data_size))

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)


# Build the neural network
class MyModule(nn.Module):
    def __init__(self) -> None:
        super().__init__()
        self.model = nn.Sequential(
            nn.Conv2d(in_channels=3, out_channels=32, kernel_size=5, stride=1, padding=2),
            nn.MaxPool2d(kernel_size=2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64*4*4, 64),
            nn.Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


# Create the model
myModule = MyModule()
myModule = myModule.to(device)

# Loss function
loss_fn = nn.CrossEntropyLoss()
loss_fn = loss_fn.to(device)

# Optimizer
learning_rate = 1e-2  # 1 x 10 ^ (-2) = 0.01
optim = torch.optim.SGD(myModule.parameters(), lr=learning_rate)

# Training bookkeeping
# Number of training steps so far
total_train_step = 0
# Number of test rounds so far
total_test_step = 0
# Number of epochs
epoch = 2

# Add TensorBoard logging
writer = SummaryWriter("logs")

start_time = time.time()

for i in range(epoch):
    print("__________Epoch {} training starts__________".format(i + 1))

    # Training phase
    myModule.train()
    for data in train_dataloader:
        imgs, targets = data
        imgs = imgs.to(device)
        targets = targets.to(device)
        outputs = myModule(imgs)
        loss = loss_fn(outputs, targets)

        # Optimize the model
        # Clear the gradients from the previous step
        optim.zero_grad()
        # Backpropagate to compute the gradients
        loss.backward()
        # Update the parameters from the gradients
        optim.step()

        total_train_step += 1
        if total_train_step % 100 == 0:
            end_time = time.time()
            # Seconds elapsed since training started
            print(end_time - start_time)
            # item() converts a single-element tensor into a plain Python number
            print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # After each epoch, run the network over the test dataset and evaluate it by its accuracy there
    # Test phase: no parameter updates, so gradients are not needed
    myModule.eval()
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():
        for data in test_dataloader:
            imgs, targets = data
            imgs = imgs.to(device)
            targets = targets.to(device)
            outputs = myModule(imgs)
            loss = loss_fn(outputs, targets)
            total_test_loss += loss.item()
            # Number of correct predictions in this batch, as a plain Python int
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy += accuracy
    print("Loss on the whole test set: {}".format(total_test_loss))
    print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("total_test_loss", total_test_loss, total_test_step)
    writer.add_scalar("total_test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step += 1

    # Save the model after each epoch
    torch.save(myModule, "myModule_{}.pth".format(i + 1))
    print("Model saved after epoch {}".format(i + 1))

writer.close()
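One detail of the `.to(device)` pattern is easy to miss: a module is moved in place, while a tensor is not, so the tensor result has to be assigned back. A minimal device-agnostic sketch; the small Linear layer and the batch of ones are placeholders for illustration, and the code falls back to the CPU when no GPU is present.

```python
import torch
from torch import nn

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Modules are moved in place; assigning the result back is optional but harmless
model = nn.Linear(4, 2)
model.to(device)

# Tensors are NOT moved in place: .to() returns a tensor on the target device
batch = torch.ones(3, 4)
batch = batch.to(device)

output = model(batch)
print(output.device, next(model.parameters()).device)
```

If the reassignment of `batch` were dropped on a GPU machine, the forward pass would fail because the input and the parameters would live on different devices.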

The Complete Model Validation (Testing, Demo) Routine

- That is, take an already trained model and feed it inputs

- The code is as follows

import torch
import torchvision
from PIL import Image
from torch import nn

image_path = "image/img.png"
image = Image.open(image_path)
print(image)  # PIL image

# PNG images can have four channels: the three RGB channels plus an alpha (transparency) channel,
# so convert('RGB') is called to keep only the color channels.
# If the image already has three color channels, this leaves it unchanged.
# With this step the script handles PNG, JPG and other formats alike.
image = image.convert('RGB')

transform = torchvision.transforms.Compose([torchvision.transforms.Resize((32, 32)),
                                            torchvision.transforms.ToTensor()])
image = transform(image)
print(image.shape)  # torch.Size([3, 32, 32])


class MyModule(nn.Module):
    def __init__(self) -> None:
        super().__init__()
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64*4*4, 64),
            nn.Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


# Load the CIFAR10 model file myModule_gpu_30.pth that was trained on Google Colab
# A model saved on the GPU must be mapped to the CPU with map_location to run on a CPU-only machine
model = torch.load("myModule_gpu_30.pth", map_location=torch.device('cpu'))
# print(model)

# The network expects a batch dimension: (N, C, H, W)
image = torch.reshape(image, (1, 3, 32, 32))

model.eval()
with torch.no_grad():
    output = model(image)
print(output)
# tensor([[ 0.0420, -1.9627, 3.6137, 2.4646, 2.2315, 4.4008, -0.0148, -3.1938,
# -1.0747, -7.2118]], grad_fn=<AddmmBackward0>)
# (a grad_fn like the one above is printed only when the forward pass runs outside torch.no_grad())
print(output.argmax(1))  # tensor([5]); index 5 is "dog" in the CIFAR10 labels, so the prediction is correct
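To turn the predicted index into a readable label, the output can be looked up in the list of class names. The sketch below reuses the scores printed above; the `CIFAR10_CLASSES` list is written out by hand here and is assumed to match the standard CIFAR10 label order (torchvision's dataset object exposes the same names through its `classes` attribute).

```python
import torch

# Standard CIFAR10 label order, written out by hand for this example
CIFAR10_CLASSES = ["airplane", "automobile", "bird", "cat", "deer",
                   "dog", "frog", "horse", "ship", "truck"]

# Scores copied from the printed output above
output = torch.tensor([[0.0420, -1.9627, 3.6137, 2.4646, 2.2315,
                        4.4008, -0.0148, -3.1938, -1.0747, -7.2118]])

# softmax turns the raw scores into probabilities that sum to 1
probs = torch.softmax(output, dim=1)
index = output.argmax(1).item()
label = CIFAR10_CLASSES[index]

print(index, label, round(probs[0, index].item(), 3))
```

Because softmax preserves the ordering of the scores, taking argmax of the raw output or of the probabilities gives the same class.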
