深度强化学习——DDPG算法复现

DDPG(Deep Deterministic Policy Gradient)是一种基于策略的深度强化学习算法,于2016年由Timothy P. Lillicrap等人提出,论文:“Timothy P. Lillicrap,Jonathan J. Hunt,and Alexander Pritzel et al, CONTINUOUS CONTROL WITH DEEP REINFORCEMENT LEARNING,2016”,能够解决Actor-critic收敛速度慢的问题,现在已成为经典的深度强化学习算法。具体原理参见论文。也可参考博客:

https://blog.csdn.net/qq_30615903/article/details/80776715?ops_request_misc=&request_id=&biz_id=102&utm_term=DDPG&utm_medium=distribute.pc_search_result.none-task-blog-2allsobaiduweb~default-0-80776715

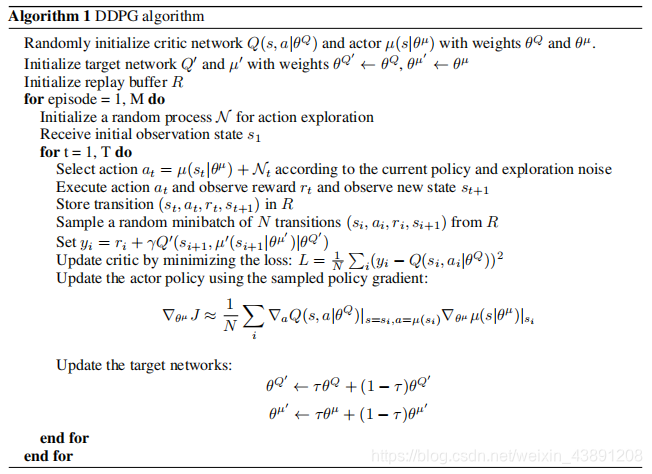

算法框架如下:

实现场景

这里以gym中的游戏“Pendulum-v0”为例,复现论文中的算法。“Pendulum-v0”是简单的杠杆平衡游戏,目标是使杠杆最后能够停留在顶端保持平衡。

算法平台

算法已经修改成了能够在windows上运行,编译软件是Vscode,python3.6.2,tensorflow1.4.0.

算法实现

导入相应的包

import os

import tensorflow as tf

import numpy as np

噪声

class OUActionNoise(object):

def __init__(self,mu,sigma=0.15,theta=0.2,dt=1e-2,x0=None):

self.theta = theta

self.mu = mu

self.dt = dt

self.sigma = sigma

self.x0 = x0

self.reset()

def __call__(self):

# noise = OUActionNoise

x = self.x_prev + self.theta*(self.mu - self.x_prev)*self.dt +\

self.sigma*np.sqrt(self.dt)*np.random.normal(size=self.mu.shape)

self.x_prev = x

return x

def reset(self):

self.x_prev = self.x0 if self.x0 is not None else np.zeros_like(self.mu)

经验池

class ReplayBuffer(object):

def __init__(self,max_size,input_shape,n_actions):

self.mem_size = max_size

self.mem_cntr = 0

self.state_memory = np.zeros((self.mem_size,*input_shape))

self.new_state_memory = np.zeros((self.mem_size,*input_shape))

self.action_memory = np.zeros((self.mem_size,n_actions))

self.reward_memory = np.zeros(self.mem_size)

self.terminal_memory = np.zeros(self.mem_size,dtype=np.float32)

def store_transition(self,state,action,reward,state_,done):

index = self.mem_cntr%self.mem_size

self.state_memory[index] = state

self.new_state_memory[index] = state_

self.reward_memory[index] = reward

self.action_memory[index] = action

self.terminal_memory[index] = 1-int(done)

self.mem_cntr += 1

def sample_buffer(self,batch_size):

max_mem = min(self.mem_cntr,self.mem_size)

batch = np.random.choice(max_mem,batch_size)

states = self.state_memory[batch]

actions = self.action_memory[batch]

new_states = self.new_state_memory[batch]

rewards = self.reward_memory[batch]

terminal = self.terminal_memory[batch]

return states,actions,new_states,rewards,terminal

Actor

class Actor(object):

def __init__(self,lr,n_actions,name,input_dims,sess,fc1_dims,

fc2_dims,action_bound,batch_size=64,chkpt_dir = "DDPG_paper_reproduction"):

self.lr = lr

self.n_actions = n_actions

self.input_dims = input_dims

self.name = name

self.fc1_dims = fc1_dims

self.fc2_dims = fc2_dims

self.sess = sess

self.batch_size = batch_size

self.action_bound = action_bound

self.ckpt_dir = chkpt_dir

self.build_network()

self.params = tf.trainable_variables(scope=self.name)

self.saver = tf.train.Saver()

self.checkpoint_file = os.path.join(chkpt_dir,name+'_ddpg.ckpt')

self.unnormalized_actor_gradients = tf.gradients(self.mu,self.params,

-self.action_gradient)

self.actor_gradients = list(map(lambda x: tf.div(x,self.batch_size),

self.unnormalized_actor_gradients))

self.optimize = tf.train.AdamOptimizer(self.lr).\

apply_gradients(zip(self.actor_gradients,self.params))

def build_network(self):

with tf.variable_scope(self.name):

self.input = tf.placeholder(tf.float32,

shape=[None,*self.input_dims],

name = 'inputs')

self.action_gradient = tf.placeholder(tf.float32,

shape = [None,self.n_actions])

f1 = 1/np.sqrt(self.fc1_dims)

dense1 = tf.layers.dense(self.input,units=self.fc1_dims,

kernel_initializer=tf.random_uniform_initializer(-f1,f1),

bias_initializer=tf.random_uniform_initializer(-f1,f1))

batch1 = tf.layers.batch_normalization(dense1)

layer1_activation = tf.nn.relu(batch1)

f2 = 1/np.sqrt(self.fc2_dims)

dense2 = tf.layers.dense(layer1_activation,units=self.fc2_dims,

kernel_initializer=tf.random_uniform_initializer(minval=-f2,maxval=f2),

bias_initializer=tf.random_uniform_initializer(minval=-f2,maxval=f2))

batch2 = tf.layers.batch_normalization(dense2)

layer2_activation = tf.nn.relu(batch2)

f3 = 0.003

mu = tf.layers.dense(layer2_activation,units=self.n_actions,

# activation = 'tanh',

kernel_initializer=tf.random_uniform_initializer(minval=-f3,maxval=f3),

bias_initializer=tf.random_uniform_initializer(-f3,f3))

mu = tf.nn.tanh(mu)

self.mu = tf.multiply(mu,self.action_bound)

def predict(self,inputs):

return self.sess.run(self.mu,feed_dict={self.input:inputs})

def train(self,inputs,gradients):

self.sess.run(self.optimize,

feed_dict = {self.input:inputs,

self.action_gradient:gradients})

def save_checkpoint(self):

print('...saving checkpoint...')

self.saver.save(self.sess,self.checkpoint_file)

def load_checkpoint(self):

print('...loading checkpoint...')

self.saver.restore(self.sess,self.checkpoint_file)

Critic

class Critic(object):

def __init__(self,lr,n_actions,name,input_dims,sess,fc1_dims,

fc2_dims,batch_size=64,chkpt_dir = "DDPG_paper_reproduction"):

self.lr = lr

self.n_actions = n_actions

self.input_dims = input_dims

self.name = name

self.fc1_dims = fc1_dims

self.fc2_dims = fc2_dims

self.sess = sess

self.batch_size = batch_size

self.ckpt_dir = chkpt_dir

self.build_network()

self.params = tf.trainable_variables(scope=name)

self.saver = tf.train.Saver()

self.checkpoint_file = os.path.join(chkpt_dir,name+'_ddpg.ckpt')

self.optimize = tf.train.AdamOptimizer(self.lr).minimize(self.loss)

self.action_gradients = tf.gradients(self.q,self.actions)

def build_network(self):

with tf.variable_scope(self.name):

self.input = tf.placeholder(tf.float32,

shape=[None,*self.input_dims],

name = 'inputs')

self.actions = tf.placeholder(tf.float32,

shape = [None,self.n_actions],

name='actions')

self.q_target = tf.placeholder(tf.float32,

shape = [None,1],

name='targets')

f1 = 1/np.sqrt(self.fc1_dims)

dense1 = tf.layers.dense(self.input,units=self.fc1_dims,

kernel_initializer=tf.random_uniform_initializer(-f1,f1),

bias_initializer=tf.random_uniform_initializer(-f1,f1))

batch1 = tf.layers.batch_normalization(dense1)

layer1_activation = tf.nn.relu(batch1)

f2 = 1/np.sqrt(self.fc2_dims)

dense2 = tf.layers.dense(layer1_activation,units=self.fc2_dims,

kernel_initializer=tf.random_uniform_initializer(-f2,f2),

bias_initializer=tf.random_uniform_initializer(-f2,f2))

batch2 = tf.layers.batch_normalization(dense2)

action_in = tf.layers.dense(self.actions,units=self.fc2_dims

)# activation='relu'

action_in = tf.nn.relu(action_in)

state_actions = tf.add(batch2,action_in)

state_actions = tf.nn.relu(state_actions)

f3 = 0.003

self.q = tf.layers.dense(state_actions,units=1,

kernel_initializer=tf.random_uniform_initializer(-f3,f3),

bias_initializer=tf.random_uniform_initializer(-f3,f3),

kernel_regularizer=tf.keras.regularizers.l2(0.01))

self.loss = tf.losses.mean_squared_error(self.q_target,self.q)

def predict(self,inputs,actions):

return self.sess.run(self.q,

feed_dict={self.input:inputs,

self.actions:actions})

def train(self,inputs,actions,q_target):

self.sess.run(self.optimize,

feed_dict = {self.input:inputs,

self.actions:actions,

self.q_target:q_target})

def get_action_gradients(self,inputs,actions):

return self.sess.run(self.action_gradients,

feed_dict={self.input:inputs,

self.actions:actions})

def save_checkpoint(self):

print('...saving checkpoint...')

self.saver.save(self.sess,self.checkpoint_file)

def load_checkpoint(self):

print('...loading checkpoint...')

self.saver.restore(self.sess,self.checkpoint_file)

Agent

class Agent(object):

def __init__(self,alpha,beta,input_dims,tau,env,gamma=0.99,

n_actions=2,max_size=100000,layer1_size=400,layer2_size=300,

batch_size=64):

self.gamma=gamma

self.tau=tau

self.memory = ReplayBuffer(max_size,input_dims,n_actions)

self.batch_size = batch_size

self.sess = tf.Session()

self.actor = Actor(alpha,n_actions,'Actor',input_dims,self.sess,

layer1_size,layer2_size,env.action_space.high)

self.critic = Critic(beta,n_actions,'Critic',input_dims,self.sess,

layer1_size,layer2_size)

self.target_actor = Actor(alpha,n_actions,'TargetActor',input_dims,

self.sess,layer1_size,layer2_size,

env.action_space.high)

self.target_critic = Critic(beta,n_actions,'TargetCritic',input_dims,

self.sess,layer1_size,layer2_size)

self.noise = OUActionNoise(mu=np.zeros(n_actions))

self.update_critic = \

[self.target_critic.params[i].assign(

tf.multiply(self.critic.params[i],self.tau)\

+tf.multiply(self.target_critic.params[i],1-self.tau))

for i in range(len(self.target_critic.params))]

self.update_actor = \

[self.target_actor.params[i].assign(

tf.multiply(self.actor.params[i],self.tau)\

+tf.multiply(self.target_actor.params[i],1-self.tau))

for i in range(len(self.target_actor.params))]

self.sess.run(tf.global_variables_initializer())

self.update_network_parameters(first=True)

def update_network_parameters(self,first=False):

if first:

old_tau = self.tau

self.tau = 1.0

self.target_critic.sess.run(self.update_critic)

self.target_actor.sess.run(self.update_actor)

self.tau = old_tau

else:

self.target_critic.sess.run(self.update_critic)

self.target_actor.sess.run(self.update_actor)

def remember(self,state,action,reward,new_state,done):

self.memory.store_transition(state,action,reward,new_state,done)

def choose_action(self,state):

state = state[np.newaxis,:]

mu = self.actor.predict(state)

noise = self.noise()

mu_prime = mu + noise

return mu_prime[0]

def learn(self):

if self.memory.mem_cntr < self.batch_size:

return

state,action,new_state,reward,done = \

self.memory.sample_buffer(self.batch_size)

critic_value = self.target_critic.predict(new_state,

self.target_actor.predict(new_state))

target = []

for j in range(self.batch_size):

target.append(reward[j]+self.gamma*critic_value[j]*done[j])

target = np.reshape(target,(self.batch_size,1))

_ = self.critic.train(state,action,target)

a_outs = self.actor.predict(state)

grads = self.critic.get_action_gradients(state,a_outs)

self.actor.train(state,grads[0])

self.update_network_parameters()

def save_models(self):

self.actor.save_checkpoint()

self.target_actor.save_checkpoint()

self.critic.save_checkpoint()

self.target_critic.save_checkpoint()

def load_models(self):

self.actor.load_checkpoint()

self.target_actor.load_checkpoint()

self.critic.load_checkpoint()

self.target_critic.load_checkpoint()

#测试,调用gym模块和前面定义的类

from ddpg_tf_orig import Agent #前面定义的类封装在ddpg_tf_orig.py中

import numpy as np

import gym

if __name__ == '__main__':

env = gym.make('Pendulum-v0')

obs = env.reset()

agent = Agent(alpha=0.0001,beta=0.001,input_dims=[3],tau=0.001,env=env,

batch_size=64,layer1_size=400,layer2_size=300,n_actions=1)

score_history = []

np.random.seed(0)

for i in range(1000):

obs = env.reset()

env.render()

done = False

score = 0

while not done:

act = agent.choose_action(obs)

new_state,reward,done,info = env.step(act)

agent.remember(obs,act,reward,new_state,int(done))

agent.learn()

score += reward

obs = new_state

score_history.append(score)

print('episode',i,'score % .2f' % score,

'100 game average % .2f' % np.mean(score_history[-100:]))

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?