HDFS Development Examples in Java


1: HDFS

1.1: Connecting to the Hadoop file system

1: Introduction to Configuration

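Configuration is the class that holds Hadoop configuration information: it loads configuration resources and lets you get and set configuration values. When a Java class is initialized, its static initializers (static blocks and static field initializers) run first, in the order they appear in the source; the constructor runs later, each time an instance is created. So when analyzing the class, I look at its static block first, then its constructors, and finally at its main functionality.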
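The order in which Java runs a class's static initializers and its constructor can be checked with a small standalone program. InitOrderDemo and Holder are hypothetical names used only for this illustration; the program records each step as it happens and shows that static field initializers and static blocks run once, in source order, when the class is first used, while the constructor runs for every new instance.

```java
import java.util.ArrayList;
import java.util.List;

public class InitOrderDemo {
    // Records each initialization step in the order it happens
    static final List<String> LOG = new ArrayList<>();

    static class Holder {
        static String first = record("static field 1");

        static {
            record("static block");
        }

        static String second = record("static field 2");

        Holder() {
            record("constructor");
        }

        static String record(String step) {
            LOG.add(step);
            return step;
        }
    }

    // Creates two instances and returns a snapshot of the recorded steps
    public static List<String> run() {
        new Holder();
        new Holder();
        return new ArrayList<>(LOG);
    }

    public static void main(String[] args) {
        // Prints [static field 1, static block, static field 2, constructor, constructor]
        System.out.println(run());
    }
}
```

The static part of Holder runs only once, so a second `new Holder()` adds nothing but another "constructor" entry.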

Configuration is a common Hadoop class, so it is packaged in hadoop-common-2.7.4.jar as org.apache.hadoop.conf.Configuration.

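It is also the carrier of a Job's configuration: configuration values must be passed through a Configuration, because that is how information is shared among multiple mapper and reducer tasks.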

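Configuration implements two interfaces, Iterable and Writable. Iterable lets you iterate over all the name-value pairs that a Configuration object holds in memory. Writable provides the serialization the Hadoop framework requires, so the in-memory name-value pairs can be serialized, for example to disk.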
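To make the two interfaces concrete, here is a simplified stand-in for a configuration object. MiniConf and SimpleWritable are hypothetical names, not Hadoop classes. SimpleWritable declares the same two methods as Hadoop's org.apache.hadoop.io.Writable (write(DataOutput) and readFields(DataInput)), but the byte layout used here (an int count followed by writeUTF key/value pairs) is an assumption for illustration, not the format Configuration actually writes.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.util.Iterator;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical interface with the same method signatures as Hadoop's Writable
interface SimpleWritable {
    void write(DataOutput out) throws IOException;
    void readFields(DataInput in) throws IOException;
}

public class MiniConf implements Iterable<Map.Entry<String, String>>, SimpleWritable {
    private final Map<String, String> props = new TreeMap<>();

    public void set(String name, String value) { props.put(name, value); }

    public String get(String name) { return props.get(name); }

    // Iterable: walk every name-value pair held in memory
    @Override
    public Iterator<Map.Entry<String, String>> iterator() {
        return props.entrySet().iterator();
    }

    // Serialize: number of pairs, then each key and value
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeInt(props.size());
        for (Map.Entry<String, String> e : props.entrySet()) {
            out.writeUTF(e.getKey());
            out.writeUTF(e.getValue());
        }
    }

    // Deserialize: replace the current contents with what was written
    @Override
    public void readFields(DataInput in) throws IOException {
        props.clear();
        int n = in.readInt();
        for (int i = 0; i < n; i++) {
            String key = in.readUTF();
            String value = in.readUTF();
            props.put(key, value);
        }
    }

    public static void main(String[] args) throws IOException {
        MiniConf conf = new MiniConf();
        conf.set("fs.defaultFS", "hdfs://192.168.2.101:9000");
        conf.set("dfs.replication", "3");

        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        conf.write(new DataOutputStream(bytes));

        MiniConf copy = new MiniConf();
        copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));

        for (Map.Entry<String, String> e : copy) {
            System.out.println(e.getKey() + " = " + e.getValue());
        }
    }
}
```

Because MiniConf is Iterable, the for-each loop walks every pair; the write/readFields pair is what lets the object travel as bytes and be rebuilt on the other side.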

2: Creating and initializing a Configuration

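Any of the following three approaches works:

1: Put core-site.xml and hdfs-site.xml in the resources directory; the Configuration object loads them automatically from the classpath.

2: Load the configuration files core-site.xml and hdfs-site.xml explicitly with conf.addResource().

3: Set individual key/value configuration parameters with conf.set().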

demo

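    import java.net.URI;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class HdfsDemo { // hypothetical class name
        // Paths to the cluster's config files; these values are examples, adjust them to your setup
        private static final String PATH_TO_HDFS_SITE_XML = "src/main/resources/hdfs-site.xml";
        private static final String PATH_TO_CORE_SITE_XML = "src/main/resources/core-site.xml";

        private static FileSystem fS;
        private static Configuration conf;

        static {
            conf = new Configuration();
            // load the config files explicitly (approach 2 above)
            conf.addResource(new Path(PATH_TO_HDFS_SITE_XML));
            conf.addResource(new Path(PATH_TO_CORE_SITE_XML));
            try {
                // connect to the NameNode at 192.168.2.101:9000 as user "hadoop"
                fS = FileSystem.get(new URI("hdfs://192.168.2.101:9000"), conf, "hadoop");
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }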
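When these approaches are combined, the order of precedence matters. The following is a simplified model, assuming the behavior of Hadoop's Configuration as we understand it: resources are applied in the order they were added, so a later file overrides an earlier one, and values passed to set() are kept in a separate overlay that is applied on top of every resource, so they win even over a resource added afterwards. LayeredConfSketch is a hypothetical class; the real Configuration also handles final properties, variable expansion, and deprecated keys, none of which are modeled here.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Simplified, hypothetical model of how a Configuration resolves a property
public class LayeredConfSketch {
    private final List<Map<String, String>> resources = new ArrayList<>();
    private final Map<String, String> overlay = new HashMap<>();

    // Like conf.addResource(...): resources are applied in the order they were added
    public void addResource(Map<String, String> resource) {
        resources.add(resource);
    }

    // Like conf.set(...): stored separately and re-applied on top of all resources
    public void set(String name, String value) {
        overlay.put(name, value);
    }

    public String get(String name) {
        Map<String, String> merged = new HashMap<>();
        for (Map<String, String> r : resources) {
            merged.putAll(r);   // a later resource overrides an earlier one
        }
        merged.putAll(overlay); // set() values win over every resource
        return merged.get(name);
    }

    public static void main(String[] args) {
        LayeredConfSketch conf = new LayeredConfSketch();
        conf.addResource(Map.of("dfs.replication", "3")); // e.g. defaults
        conf.addResource(Map.of("dfs.replication", "2")); // e.g. hdfs-site.xml
        System.out.println(conf.get("dfs.replication"));  // 2
        conf.set("dfs.replication", "1");
        conf.addResource(Map.of("dfs.replication", "5")); // added after set()
        System.out.println(conf.get("dfs.replication"));  // 1
    }
}
```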

2: Common FileSystem APIs

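The Java abstract class org.apache.hadoop.fs.FileSystem defines Hadoop's generic file system interface. Nearly all of Hadoop's file-operation classes live in the "org.apache.hadoop.fs" package, and these APIs support operations such as opening, reading, writing, and deleting files.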

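1: Using the FileSystem interface

The class that the Hadoop library ultimately exposes to users is FileSystem. It is abstract, so a concrete instance is obtained through the class's static get methods, and all file system operations are then performed through that instance.

2: FileStatus

Holds the metadata of a file or directory, for example its path, length, owner, block size, modification time, replication factor, and permissions.

3: FSDataInputStream

An input stream for reading a file stored in HDFS; you can use it, for example, to copy an HDFS file to the local file system.

4: FSDataOutputStream

An output stream for writing a file into HDFS; you can use it, for example, to upload the contents of a local file to HDFS.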
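The four classes above fit together roughly as follows. This is a sketch, assuming hadoop-common (for example via the hadoop-client Maven artifact) is on the classpath; FsApiTour and the file name are hypothetical. To avoid needing a cluster it runs against the local file system returned by FileSystem.getLocal, and the same calls should work unchanged on an HDFS instance obtained with FileSystem.get(URI, conf, user).

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileStatus;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;

public class FsApiTour {
    // Writes text to a file, prints the file's FileStatus, then reads the text back
    public static String roundTrip(FileSystem fs, Path file, String text) throws IOException {
        // FSDataOutputStream: create (or overwrite) the file and write the bytes
        try (FSDataOutputStream out = fs.create(file, true)) {
            out.write(text.getBytes(StandardCharsets.UTF_8));
        }

        // FileStatus: metadata kept by the file system for this path
        FileStatus st = fs.getFileStatus(file);
        System.out.println("path=" + st.getPath()
                + " len=" + st.getLen()
                + " owner=" + st.getOwner()
                + " blockSize=" + st.getBlockSize()
                + " replication=" + st.getReplication()
                + " permission=" + st.getPermission()
                + " mtime=" + st.getModificationTime());

        // FSDataInputStream: open the file and read exactly getLen() bytes
        byte[] buf = new byte[(int) st.getLen()];
        try (FSDataInputStream in = fs.open(file)) {
            in.readFully(buf);
        }
        return new String(buf, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) throws IOException {
        // Local file system, so no cluster is required for this sketch
        FileSystem fs = FileSystem.getLocal(new Configuration());
        Path file = new Path(System.getProperty("java.io.tmpdir"), "fs-api-tour.txt");
        System.out.println(roundTrip(fs, file, "write test"));
        fs.delete(file, false);
    }
}
```

Using try-with-resources here closes each stream even when a write or read throws, which is the same guarantee the IOUtils.closeStream calls in the demos provide.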

1.2: Development setup

The project is built with Maven.

Create a resources directory (src/main/resources) and copy Hadoop's core-site.xml, hdfs-site.xml, and log4j.properties into it.

1.3: Demos

1: Create a file

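    try {
        // full HDFS URI; a bare "/home/hadoop/testTxt" would be resolved against fs.defaultFS
        Path path = new Path("hdfs://192.168.2.101:9000/home/hadoop/testTxt");
        if (fS.exists(path)) {
            System.out.println("path already exists");
        } else {
            // create() returns an open FSDataOutputStream; close it so the empty file is finalized
            fS.create(path).close();
            System.out.println("created successfully");
        }
    } catch (Exception e) {
        e.printStackTrace();
    }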

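2: Create a directory

    // example directory path
    Path filePath = new Path("/home/hadoop/testDir");
    if (!fS.exists(filePath)) {
        // mkdirs also creates any missing parent directories, like `mkdir -p`
        fS.mkdirs(filePath);
    }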

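3: Write data

    // uses org.apache.hadoop.fs.FSDataOutputStream, org.apache.hadoop.io.IOUtils,
    // java.nio.charset.StandardCharsets
    String content = "write test";
    FSDataOutputStream out = null;
    try {
        // Path(parent, child) always joins with "/", unlike File.separator on Windows
        out = fS.create(new Path(DEST_PATH, FILE_NAME));
        out.write(content.getBytes(StandardCharsets.UTF_8));
        // hsync() asks the DataNodes to persist the data to disk before returning
        out.hsync();
        LOG.info("write succeeded.");
    } finally {
        // make sure the stream is closed finally
        IOUtils.closeStream(out);
    }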

4: Read data

    // uses java.io.BufferedReader, java.io.InputStreamReader, java.nio.charset.StandardCharsets,
    // org.apache.hadoop.fs.FSDataInputStream, org.apache.hadoop.io.IOUtils
    private void read() throws IOException {
        Path path = new Path(PATH, FILE_NAME);
        FSDataInputStream in = null;
        BufferedReader reader = null;
        StringBuilder content = new StringBuilder();
        try {
            in = fS.open(path);
            reader = new BufferedReader(new InputStreamReader(in, StandardCharsets.UTF_8));
            String line;
            // read the file line by line, keeping the line breaks
            while ((line = reader.readLine()) != null) {
                content.append(line).append('\n');
            }
            LOG.info("result is : " + content);
            LOG.info("read succeeded.");
        } finally {
            // make sure the streams are closed finally
            IOUtils.closeStream(reader);
            IOUtils.closeStream(in);
        }
    }

5: Delete a file

    private void delete() throws IOException {
        Path beDeletedPath = new Path(PATH, FILE_NAME);
        // recursive = false is enough for a single file
        if (fS.delete(beDeletedPath, false)) {
            LOG.info("deleted the file " + beDeletedPath);
        } else {
            LOG.warn("failed to delete the file " + beDeletedPath);
        }
    }

6: Delete a directory

    private void rmdir() throws IOException {
        Path destPath = new Path(PATH);
        // recursive = true deletes the directory together with everything under it
        if (!fS.delete(destPath, true)) {
            LOG.error("failed to delete path " + PATH);
            return;
        }
        LOG.info("deleted path " + PATH);
    }

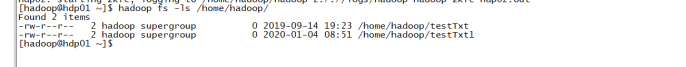

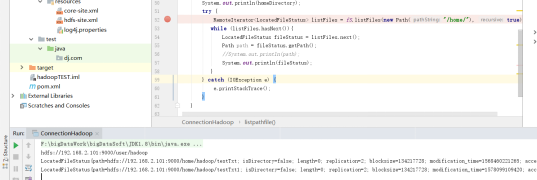

7: listFiles: get information about all files under a directory

demo

    try {
        // true: also traverse subdirectories recursively; false: only files directly under /home/
        RemoteIterator<LocatedFileStatus> listFiles = fS.listFiles(new Path("/home/"), true);
        while (listFiles.hasNext()) {
            LocatedFileStatus fileStatus = listFiles.next();
            Path path = fileStatus.getPath();
            //System.out.println(path);
            System.out.println(fileStatus);
        }
    } catch (IOException e) {
        e.printStackTrace();
    }
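listFiles returns only files (never directories), and its boolean argument decides whether subdirectories are descended into. To list the immediate children of a directory, including subdirectories, FileSystem.listStatus is the usual call. The sketch below contrasts the two; it assumes hadoop-common on the classpath, ListingDemo and the temporary directory are hypothetical, and it uses the raw local file system (FileSystem.getLocal(conf).getRawFileSystem()) so no cluster is needed and no .crc checksum files are created.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileStatus;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.LocatedFileStatus;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.fs.RemoteIterator;

public class ListingDemo {
    // listFiles: files only; descends into subdirectories when recursive is true
    public static List<String> filesUnder(FileSystem fs, Path dir, boolean recursive) throws IOException {
        List<String> names = new ArrayList<>();
        RemoteIterator<LocatedFileStatus> it = fs.listFiles(dir, recursive);
        while (it.hasNext()) {
            names.add(it.next().getPath().getName());
        }
        return names;
    }

    // listStatus: immediate children only, directories included (marked with "/")
    public static List<String> children(FileSystem fs, Path dir) throws IOException {
        List<String> names = new ArrayList<>();
        for (FileStatus st : fs.listStatus(dir)) {
            names.add(st.getPath().getName() + (st.isDirectory() ? "/" : ""));
        }
        return names;
    }

    public static void main(String[] args) throws IOException {
        FileSystem fs = FileSystem.getLocal(new Configuration()).getRawFileSystem();
        Path root = new Path(System.getProperty("java.io.tmpdir"), "listing-demo");
        fs.mkdirs(new Path(root, "sub"));
        fs.create(new Path(root, "a.txt"), true).close();
        fs.create(new Path(root, "sub/b.txt"), true).close();

        System.out.println(filesUnder(fs, root, false)); // files directly under root
        System.out.println(filesUnder(fs, root, true));  // files in root and all subdirectories
        System.out.println(children(fs, root));          // direct children, directories included
        fs.delete(root, true);
    }
}
```

Neither call guarantees an ordering of the results, so code that compares listings should treat them as sets.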

8: getFileStatus: get information about a single file or directory

    FileStatus fileStatus1 = fS.getFileStatus(new Path("/home/"));
    System.out.println(fileStatus1);

Result:

FileStatus{path=hdfs://192.168.2.101:9000/home; isDirectory=true; modification_time=1568460221122; access_time=0; owner=hadoop; group=supergroup; permission=rwxr-xr-x; isSymlink=false}
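Two fields in this output are easier to read once decoded: modification_time is milliseconds since the Unix epoch (UTC), and permission uses the usual rwx notation for owner, group, and others. A minimal stdlib-only sketch (FileStatusFields is a hypothetical helper, and it handles only the characters r, w, x and -):

```java
import java.time.Instant;

public class FileStatusFields {
    // Converts a 9-character permission string such as "rwxr-xr-x" into octal, e.g. "755"
    public static String toOctal(String symbolic) {
        if (symbolic.length() != 9) {
            throw new IllegalArgumentException("expected 9 characters: " + symbolic);
        }
        StringBuilder octal = new StringBuilder();
        for (int i = 0; i < 9; i += 3) {
            int digit = 0;
            if (symbolic.charAt(i) == 'r') digit += 4;
            if (symbolic.charAt(i + 1) == 'w') digit += 2;
            if (symbolic.charAt(i + 2) == 'x') digit += 1;
            octal.append(digit);
        }
        return octal.toString();
    }

    public static void main(String[] args) {
        // modification_time is milliseconds since the Unix epoch (UTC)
        System.out.println(Instant.ofEpochMilli(1568460221122L)); // 2019-09-14T11:23:41.122Z
        System.out.println(toOctal("rwxr-xr-x"));                 // 755
    }
}
```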
