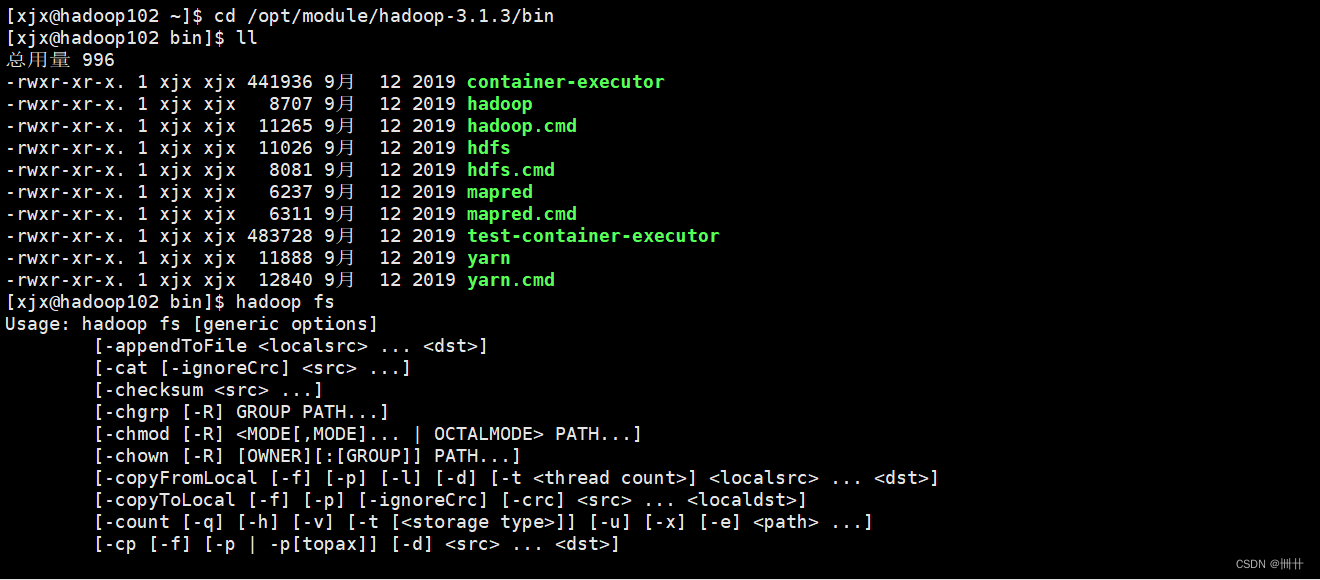

1. Basic syntax

2. Full command list

bin/hadoop fs

Usage: hadoop fs [generic options]

[-appendToFile <localsrc> ... <dst>]

[-cat [-ignoreCrc] <src> ...]

[-checksum <src> ...]

[-chgrp [-R] GROUP PATH...]

[-chmod [-R] <MODE[,MODE]... | OCTALMODE> PATH...]

[-chown [-R] [OWNER][:[GROUP]] PATH...]

[-copyFromLocal [-f] [-p] [-l] [-d] [-t <thread count>] <localsrc> ... <dst>]

[-copyToLocal [-f] [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]

[-count [-q] [-h] [-v] [-t [<storage type>]] [-u] [-x] [-e] <path> ...]

[-cp [-f] [-p | -p[topax]] [-d] <src> ... <dst>]

[-createSnapshot <snapshotDir> [<snapshotName>]]

[-deleteSnapshot <snapshotDir> <snapshotName>]

[-df [-h] [<path> ...]]

[-du [-s] [-h] [-v] [-x] <path> ...]

[-expunge]

[-find <path> ... <expression> ...]

[-get [-f] [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]

[-getfacl [-R] <path>]

[-getfattr [-R] {-n name | -d} [-e en] <path>]

[-getmerge [-nl] [-skip-empty-file] <src> <localdst>]

[-head <file>]

[-help [cmd ...]]

[-ls [-C] [-d] [-h] [-q] [-R] [-t] [-S] [-r] [-u] [-e] [<path> ...]]

[-mkdir [-p] <path> ...]

[-moveFromLocal <localsrc> ... <dst>]

[-moveToLocal <src> <localdst>]

[-mv <src> ... <dst>]

[-put [-f] [-p] [-l] [-d] <localsrc> ... <dst>]

[-renameSnapshot <snapshotDir> <oldName> <newName>]

[-rm [-f] [-r|-R] [-skipTrash] [-safely] <src> ...]

[-rmdir [--ignore-fail-on-non-empty] <dir> ...]

[-setfacl [-R] [{-b|-k} {-m|-x <acl_spec>} <path>]|[--set <acl_spec> <path>]]

[-setfattr {-n name [-v value] | -x name} <path>]

[-setrep [-R] [-w] <rep> <path> ...]

[-stat [format] <path> ...]

[-tail [-f] [-s <sleep interval>] <file>]

[-test -[defsz] <path>]

[-text [-ignoreCrc] <src> ...]

[-touch [-a] [-m] [-t TIMESTAMP ] [-c] <path> ...]

[-touchz <path> ...]

[-truncate [-w] <length> <path> ...]

[-usage [cmd ...]]

3. Common commands in practice

3.1 Preparation

sbin/start-dfs.sh

sbin/start-yarn.sh

hadoop fs -help rm

hadoop fs -mkdir /sanguo

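3.2 Upload

1) -moveFromLocal: cut and paste from the local filesystem to HDFS (the local source file is deleted)

Syntax:

-moveFromLocal <localsrc> ... <dst>

Tip: both files and directories can be uploaded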

vim shuguo.txt

# Enter:

shuguo

hadoop fs -moveFromLocal ./shuguo.txt /sanguo
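The "cut and paste" behaviour described above can be checked from a script. The following is a minimal sketch, assuming `hadoop` is on the PATH; `move_to_hdfs` is a hypothetical helper name, not part of Hadoop.

```shell
#!/usr/bin/env bash
# Sketch: move a local file into HDFS, then confirm both sides of the move.
# move_to_hdfs is a hypothetical helper, not a Hadoop command.
move_to_hdfs() {
  local src="$1" dst_dir="$2" name
  name="$(basename "$src")"

  # -moveFromLocal uploads the file and then deletes the local source.
  hadoop fs -moveFromLocal "$src" "$dst_dir" || return 1

  # -test -e exits with status 0 when the path exists in HDFS.
  if ! hadoop fs -test -e "$dst_dir/$name"; then
    echo "missing in HDFS: $dst_dir/$name" >&2
    return 1
  fi

  # After a successful move the local source should be gone.
  if [ -e "$src" ]; then
    echo "local source still present: $src" >&2
    return 1
  fi

  echo "moved $src -> $dst_dir/$name"
}
```

With the files from this section, the call would be `move_to_hdfs ./shuguo.txt /sanguo`.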

2) -copyFromLocal: copy a file from the local filesystem to an HDFS path

vim weiguo.txt

# Enter:

weiguo

hadoop fs -copyFromLocal weiguo.txt /sanguo

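3) -put: equivalent to copyFromLocal; put is the usual choice in production

Syntax:

-put [-f] [-p] [-l] [-d] [-t <thread count>] [-q <thread pool queue size>] <localsrc> ... <dst>

-p : preserve the source file's access and modification times, ownership, and permissions

-f : overwrite the destination if it already exists

-t : number of threads to use (default 1)

-q : thread pool queue size (default 1024)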

vim wuguo.txt

# Enter:

wuguo

hadoop fs -put ./wuguo.txt /sanguo

4) -appendToFile: append a local file to the end of an existing HDFS file

Syntax:

-appendToFile <localsrc> ... <dst>

vim liubei.txt

# Enter:

liubei

hadoop fs -appendToFile liubei.txt /sanguo/shuguo.txt
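When the text to append is short, creating a temporary file with vim is not strictly necessary: `-appendToFile` reads from stdin when the local source is given as `-`. A small sketch, assuming `hadoop` is on the PATH; `append_line` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: append one line of text to an existing HDFS file without a temp file.
# append_line is a hypothetical helper, not a Hadoop command.
append_line() {
  local line="$1" dst="$2"
  # "-" as the local source tells -appendToFile to read from stdin.
  printf '%s\n' "$line" | hadoop fs -appendToFile - "$dst"
}
```

For example, `append_line liubei /sanguo/shuguo.txt` should have the same effect as the vim-based steps above.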

3.3 Download

hadoop fs -copyToLocal /sanguo/shuguo.txt ./

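2) -get: equivalent to copyToLocal; get is the usual choice in production

Syntax:

-get [-f] [-p] [-crc] [-ignoreCrc] [-t <thread count>] [-q <thread pool queue size>] <src> ... <localdst>

-p : preserve the source file's access and modification times, ownership, and permissions

-f : overwrite the destination if it already exists

-t : number of threads to use (default 1)

-q : thread pool queue size (default 1024)

-ignoreCrc : skip the CRC check on downloaded files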

hadoop fs -get /sanguo/shuguo.txt ./shuguo2.txt
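After a download it can be useful to compare the local file size with the size HDFS reports. The sketch below assumes `hadoop` is on the PATH and uses `-stat %b`, which prints the file size in bytes; `get_and_check_size` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: download a file from HDFS and compare byte counts on both sides.
# get_and_check_size is a hypothetical helper, not a Hadoop command.
get_and_check_size() {
  local src="$1" dst="$2" remote_size local_size

  # -f overwrites the local destination if it already exists.
  hadoop fs -get -f "$src" "$dst" || return 1

  # %b in the -stat format string is the file size in bytes.
  remote_size="$(hadoop fs -stat %b "$src")" || return 1
  local_size="$(wc -c < "$dst" | tr -d ' ')"

  if [ "$remote_size" = "$local_size" ]; then
    echo "size ok: $local_size bytes"
  else
    echo "size mismatch: hdfs=$remote_size local=$local_size" >&2
    return 1
  fi
}
```

For example: `get_and_check_size /sanguo/shuguo.txt ./shuguo2.txt`. A size match is only a quick sanity check; it does not replace the CRC verification that `-get` performs by default.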

3.4 Direct HDFS operations

hadoop fs -ls /sanguo

hadoop fs -cat /sanguo/shuguo.txt

hadoop fs -chmod 666 /sanguo/shuguo.txt

hadoop fs -chown atguigu:atguigu /sanguo/shuguo.txt
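The octal mode 666 in the chmod example follows the usual Unix convention: one digit each for owner, group, and others, where 4 = read, 2 = write, and 1 = execute. The sketch below is plain shell and needs no Hadoop; `mode_to_rwx` is a hypothetical helper that expands an octal mode into the rwx string that `-ls` shows after the file-type character.

```shell
#!/usr/bin/env bash
# Sketch: expand an octal mode such as 666 into its rwx form.
# mode_to_rwx is a hypothetical helper for illustration only.
mode_to_rwx() {
  local mode="$1" out="" rest digit
  while [ -n "$mode" ]; do
    rest="${mode#?}"            # everything after the first character
    digit="${mode%"$rest"}"     # the first character
    case "$digit" in
      0) out="${out}---" ;;
      1) out="${out}--x" ;;
      2) out="${out}-w-" ;;
      3) out="${out}-wx" ;;
      4) out="${out}r--" ;;
      5) out="${out}r-x" ;;
      6) out="${out}rw-" ;;
      7) out="${out}rwx" ;;
      *) echo "not an octal digit: $digit" >&2; return 1 ;;
    esac
    mode="$rest"
  done
  printf '%s\n' "$out"
}

mode_to_rwx 666   # prints rw-rw-rw-
mode_to_rwx 755   # prints rwxr-xr-x
```

So `-chmod 666` grants read and write to owner, group, and others, with execute for no one.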

hadoop fs -mkdir /jinguo

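5) -cp: copy from one HDFS path to another HDFS path

Syntax:

-cp [-f] [-p | -p[topax]] [-d] [-t <thread count>] [-q <thread pool queue size>] <src> ... <dst>

-f : overwrite the destination file if it already exists

-d : skip creation of the temporary file (the one with the ._COPYING_ suffix)

-t <thread count> : number of threads to use (default 1)

-q <thread pool queue size> : thread pool queue size to use (default 1024)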

hadoop fs -cp /sanguo/shuguo.txt /jinguo

hadoop fs -mv /sanguo/wuguo.txt /jinguo

hadoop fs -mv /sanguo/weiguo.txt /jinguo

hadoop fs -tail /jinguo/shuguo.txt

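-rm [-f] [-r|-R] [-skipTrash] [-safely] <src> ...

-f : do not report an error if the file does not exist

-[rR] : delete directories recursively (without it, only files can be deleted)

-safely : ask for confirmation before deleting a directory that contains more files than hadoop.shell.delete.limit.num.files (set in core-site.xml, default 100)

Tip: use -rm -r -f with great care in production 😱😱😱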

hadoop fs -rm /sanguo/shuguo.txt

hadoop fs -rm -r /sanguo
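Given how destructive a recursive delete is, a guard in front of it can help. The following is a minimal sketch, assuming `hadoop` is on the PATH; `safe_rm` is a hypothetical helper name, and the list of refused paths is only an illustration.

```shell
#!/usr/bin/env bash
# Sketch: a cautious wrapper around "hadoop fs -rm -r".
# safe_rm is a hypothetical helper, not a Hadoop command.
safe_rm() {
  local path="$1"

  # Refuse obviously dangerous targets before touching the cluster.
  case "$path" in
    ""|"/"|"/*")
      echo "refusing to delete: '$path'" >&2
      return 2
      ;;
  esac

  # -test -e exits with status 0 when the path exists.
  if ! hadoop fs -test -e "$path"; then
    echo "nothing to delete: $path"
    return 0
  fi

  # No -skipTrash: if trash is enabled (fs.trash.interval > 0) the data
  # is moved to the trash directory and can still be restored.
  # -safely prompts for confirmation on large directories, so this call
  # may block when run non-interactively.
  hadoop fs -rm -r -safely "$path"
}
```

For example, `safe_rm /sanguo` removes the directory used in this section, while `safe_rm /` is rejected with exit status 2.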

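10) -du: report directory size information

Syntax:

-du [-s] [-h] [-v] [-x] path1 path2...

Options:

-s : show one aggregate total for each specified path; without it, every entry under the path is listed

-h : print sizes in human-readable form with automatic unit conversion (the default is bytes)

-v : show a header row (hidden by default)

-x : exclude snapshots from the calculation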

hadoop fs -du -s -h /jinguo

hadoop fs -du -h /jinguo
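Without `-h`, the first column of the `-du` output is the size in bytes, which makes it easy to sort. The sketch below lists the largest entries directly under a directory; it assumes `hadoop` is on the PATH, and `largest_entries` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: show the N largest entries directly under an HDFS directory.
# largest_entries is a hypothetical helper, not a Hadoop command.
largest_entries() {
  local dir="$1" count="${2:-5}"
  # Plain -du prints the size in bytes first, so a numeric sort on
  # column 1 (descending) puts the biggest entries at the top.
  hadoop fs -du "$dir" | sort -k1,1nr | head -n "$count"
}
```

For example, `largest_entries /jinguo 3` prints the three largest files or subdirectories under /jinguo.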

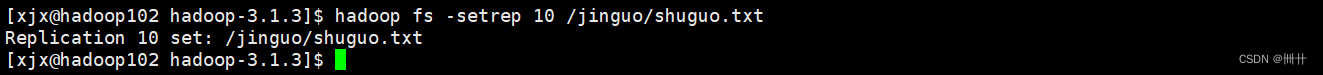

Syntax:

-setrep [-R] [-w] <rep> <path> ...

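Tip: the replication factor set here is only the number recorded in the NameNode's metadata.

Whether the cluster really holds that many replicas depends on the number of DataNodes.

With only 3 DataNodes there can be at most 3 replicas; the replica count reaches 10 only once the cluster has grown to 10 nodes.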

hadoop fs -setrep 10 /jinguo/shuguo.txt
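The rule in the tip above amounts to taking the smaller of the requested replication factor and the number of DataNodes. A plain-shell sketch of that arithmetic follows (no Hadoop needed; `effective_replicas` is a hypothetical helper name).

```shell
#!/usr/bin/env bash
# Sketch: how many replicas can actually exist for a given request.
# effective_replicas is a hypothetical helper for illustration only.
effective_replicas() {
  local requested="$1" datanodes="$2"
  # Each DataNode stores at most one replica of a block, so the real
  # count is capped by the number of DataNodes.
  if [ "$requested" -le "$datanodes" ]; then
    echo "$requested"
  else
    echo "$datanodes"
  fi
}

effective_replicas 10 3    # prints 3
effective_replicas 10 10   # prints 10
```

The NameNode still records 10 as the target in the first case, so the missing replicas are created as more DataNodes join.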
