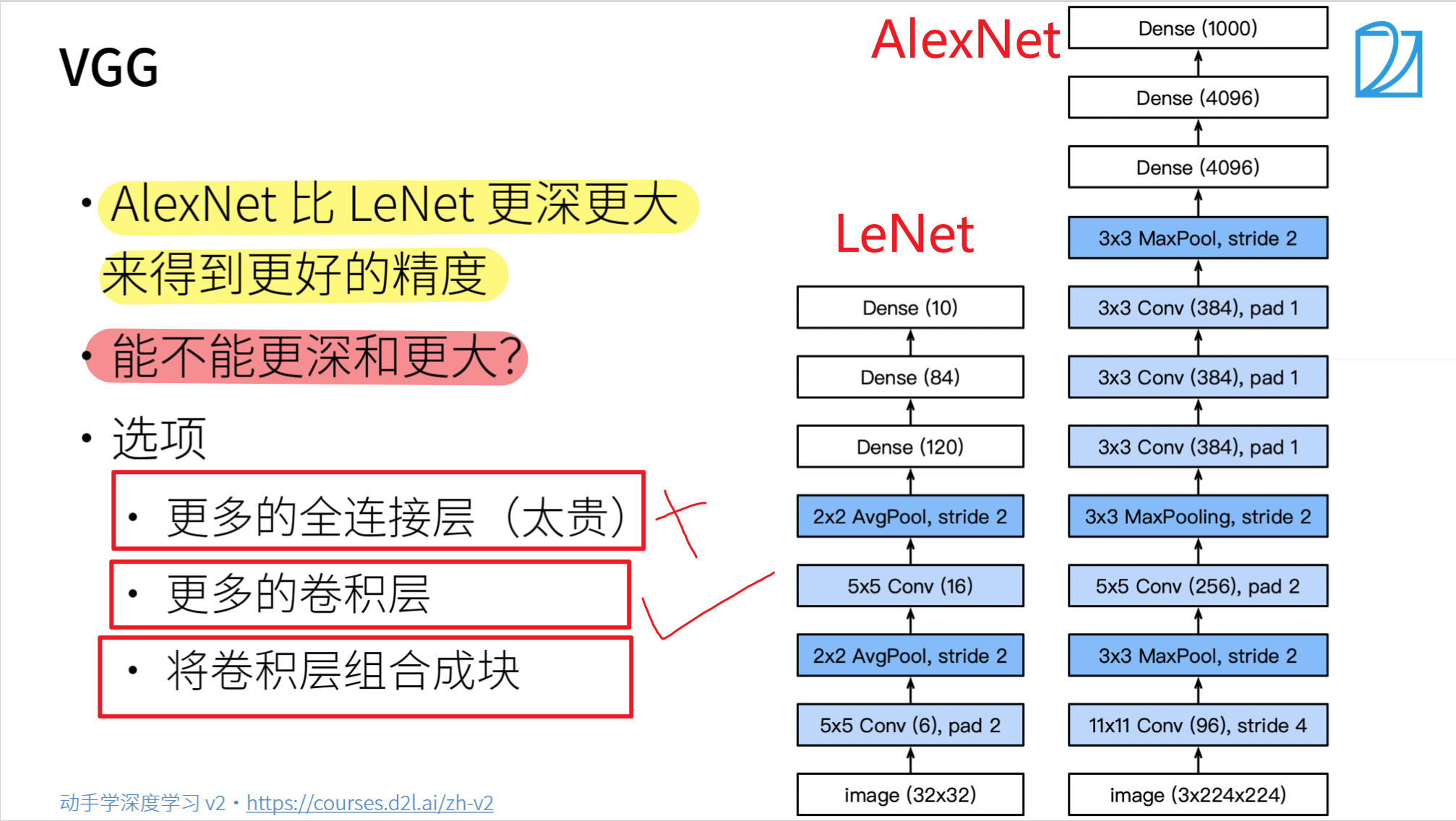

VGG

Summary

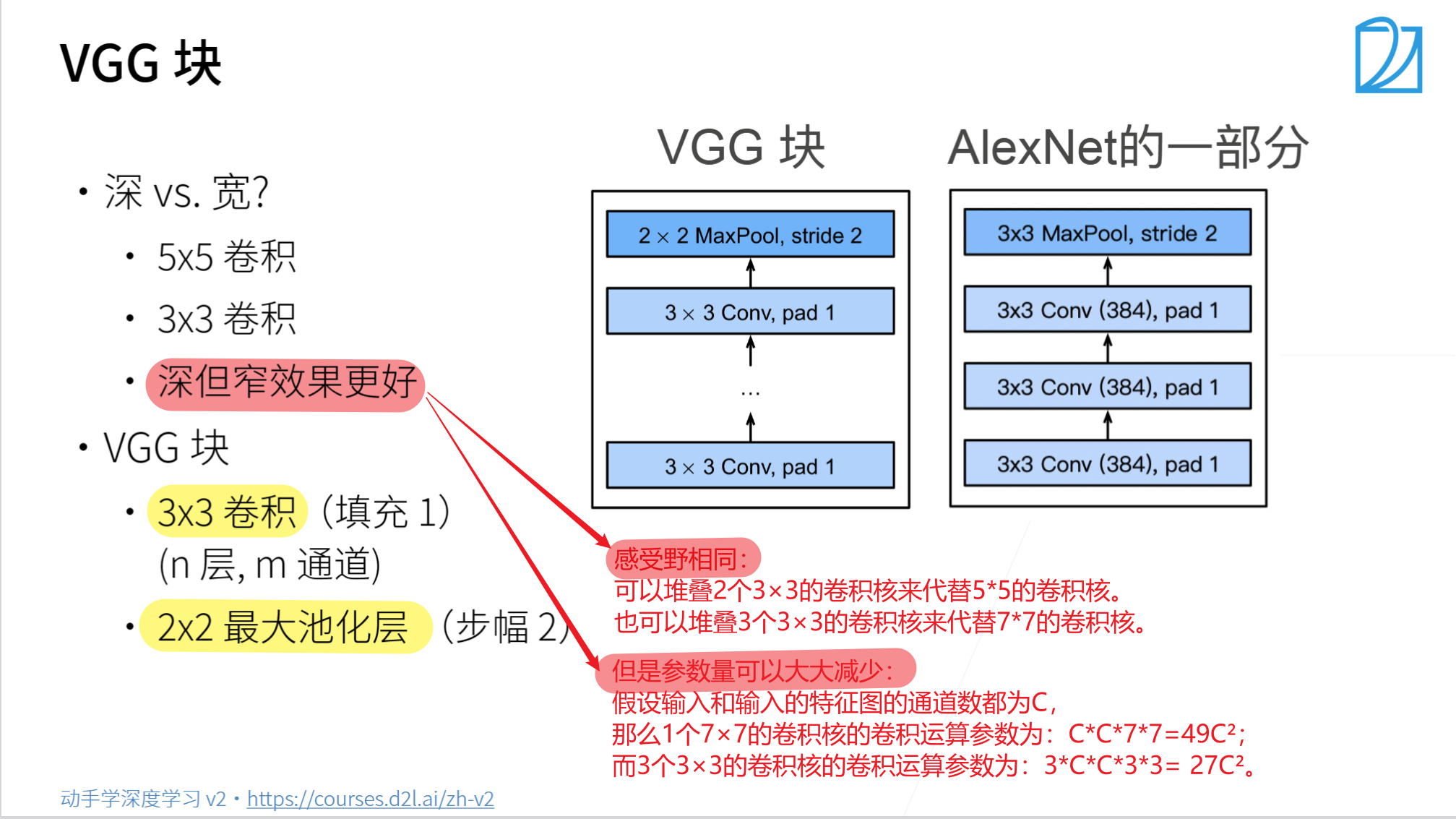

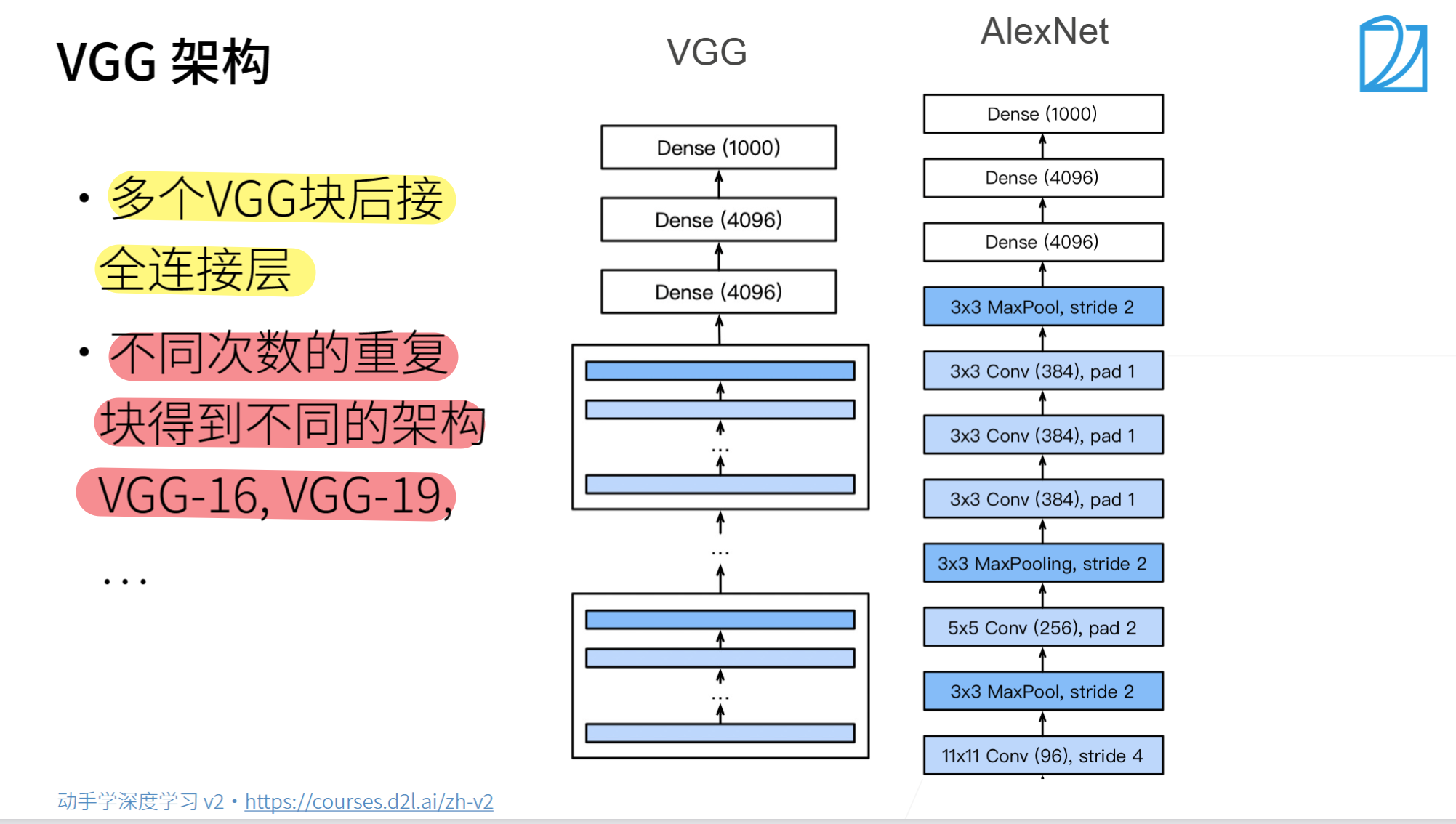

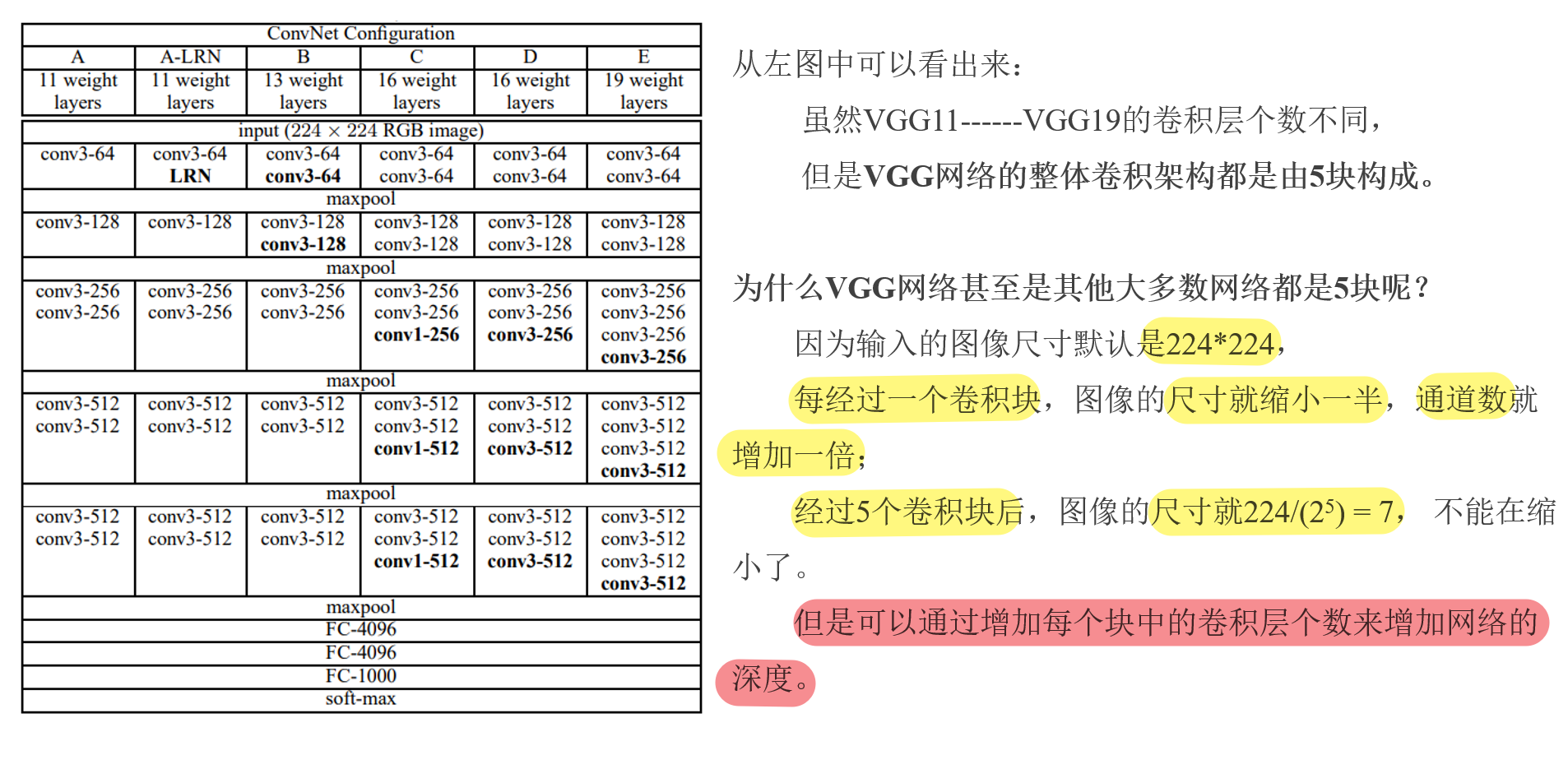

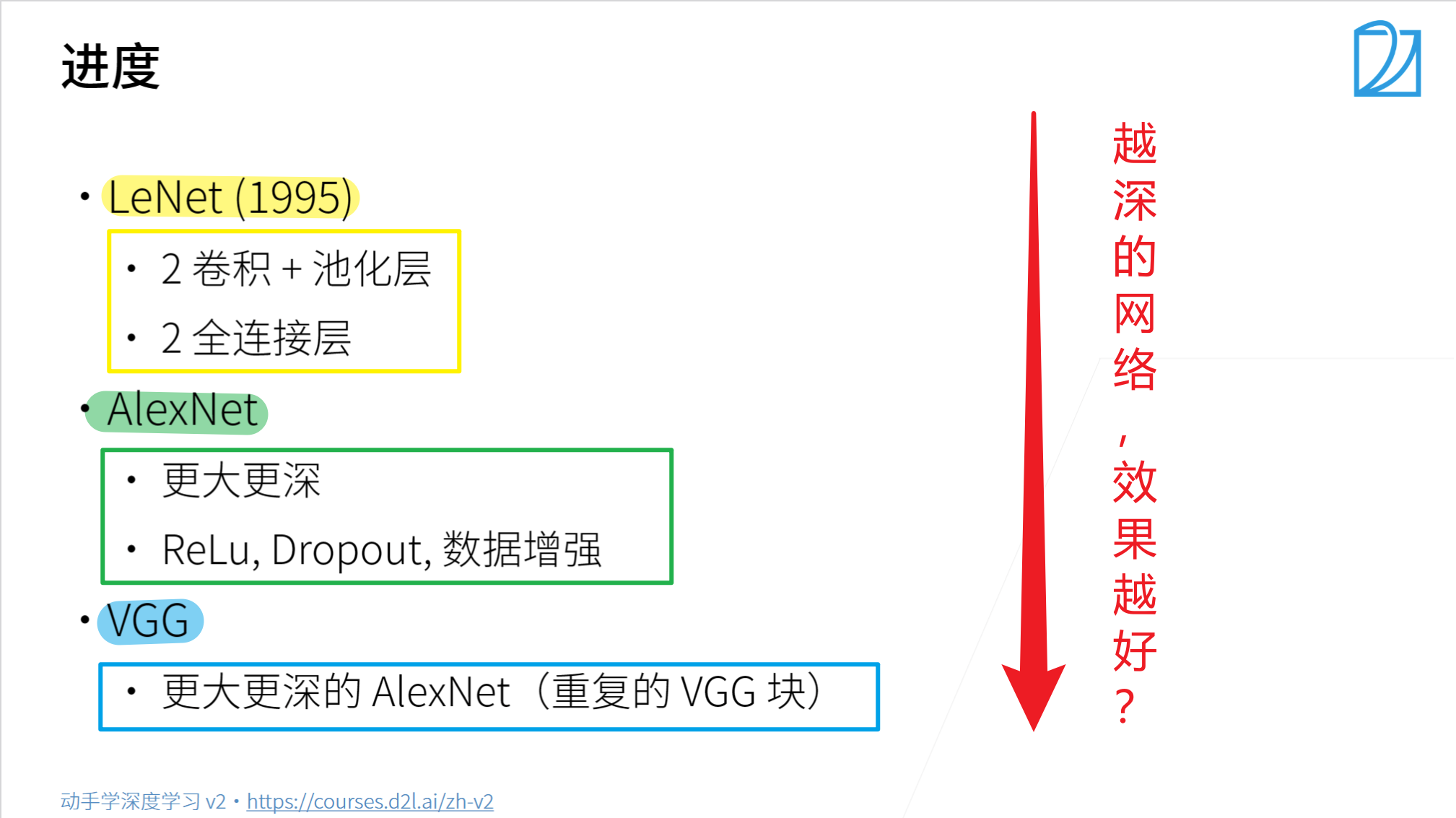

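- VGG builds deep convolutional neural networks out of reusable convolutional blocks.

- Varying the number of convolutional blocks and their hyperparameters yields variants of different complexity.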

VGG code implementation

- Import the required libraries

import torch

from torch import nn

from d2l import torch as d2l

- Define the network model

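# Define a VGG block
def vgg_block(num_convs, in_channels, out_channels):
    """
    :param num_convs: number of convolutional layers in the block
    :param in_channels: number of input channels
    :param out_channels: number of output channels
    :return: an nn.Sequential holding all layers of one VGG block
    """
    layers = []
    for _ in range(num_convs):
        # Each VGG block stacks num_convs pairs of 3x3 convolution + ReLU
        layers.append(
            nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1)
        )
        layers.append(nn.ReLU())
        in_channels = out_channels
    # Each VGG block ends with a max-pooling layer that halves the height and width
    layers.append(
        nn.MaxPool2d(kernel_size=2, stride=2)
    )
    # In a parameter list, * collects any number of positional arguments into a tuple;
    # at a call site, * unpacks an iterable into separate positional arguments.
    return nn.Sequential(*layers)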
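As a quick sanity check of the block described above, the following sketch pushes a random tensor through a single block and inspects the output shape. It repeats the `vgg_block` definition so that it runs on its own; the 32x32 input and the 8 output channels are arbitrary values chosen for illustration, not settings from this article.

```python
import torch
from torch import nn


def vgg_block(num_convs, in_channels, out_channels):
    """Same structure as the block defined above, repeated to keep this sketch standalone."""
    layers = []
    for _ in range(num_convs):
        layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))
        layers.append(nn.ReLU())
        in_channels = out_channels
    layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
    return nn.Sequential(*layers)


# Illustrative sizes only: 1 input channel, 8 output channels, 32x32 image
block = vgg_block(num_convs=2, in_channels=1, out_channels=8)
X = torch.randn(1, 1, 32, 32)
Y = block(X)
print(Y.shape)
```

The 3x3 convolutions with `padding=1` leave the height and width unchanged, so only the final pooling layer changes the spatial size, while the channel count becomes `out_channels`.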

# Define the VGG network
def vgg(conv_arch):
    conv_blks = []
    in_channels = 1
    # Convolutional part
    for num_convs, out_channels in conv_arch:
        conv_blks.append(vgg_block(num_convs, in_channels, out_channels))
        in_channels = out_channels
    return nn.Sequential(
        *conv_blks,
        nn.Flatten(),  # [1, channels, h, w] -> [1, channels*h*w]
        # 7 * 7 assumes a 224x224 input halved by five pooling layers
        nn.Linear(out_channels * 7 * 7, 4096), nn.ReLU(),  # [1, channels*h*w] -> [1, 4096]
        nn.Dropout(0.5),
        nn.Linear(4096, 4096), nn.ReLU(),  # [1, 4096] -> [1, 4096]
        nn.Dropout(0.5),
        nn.Linear(4096, 10)  # [1, 4096] -> [1, 10], a 10-class task
    )

# With VGG-16, training accuracy actually dropped
# conv_arch = ((2, 64), (2, 128), (3, 256), (3, 512), (3, 512))  # (number of conv layers, output channels)
# Use VGG-11
conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
net = vgg(conv_arch)
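The names VGG-11 and VGG-16 refer to the number of layers with weights. A small sketch of that count, assuming the three fully connected layers used in `vgg` above; `weight_layers` is a hypothetical helper introduced here for illustration:

```python
def weight_layers(conv_arch, num_fc=3):
    """Count conv layers across all blocks plus the fully connected layers."""
    return sum(num_convs for num_convs, _ in conv_arch) + num_fc


vgg11_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
vgg16_arch = ((2, 64), (2, 128), (3, 256), (3, 512), (3, 512))

vgg11_layers = weight_layers(vgg11_arch)
vgg16_layers = weight_layers(vgg16_arch)
print(vgg11_layers, vgg16_layers)
```

Pooling, ReLU, dropout and flatten layers carry no weights, so they are not counted.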

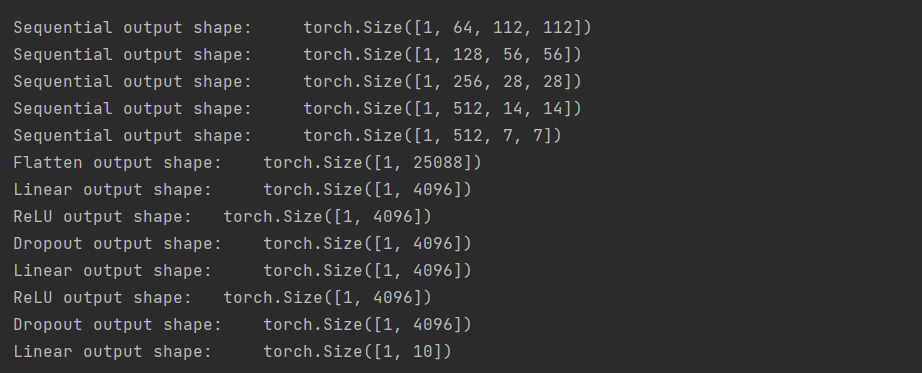

- Inspect the network

X = torch.randn(1, 1, 224, 224)
for blk in net:
    X = blk(X)
    print(blk.__class__.__name__, 'output shape:\t', X.shape)

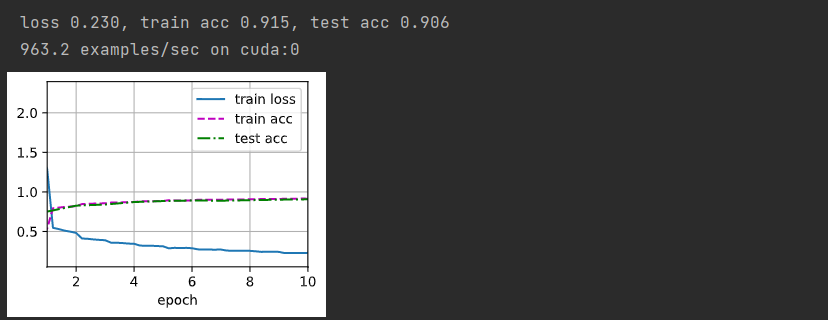
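The `7 * 7` in the first fully connected layer follows from the input size and the five pooling layers. The sketch below traces the spatial size with the standard output-size formula for convolution and pooling, floor((n + 2p - k) / s) + 1; `conv_out` is a hypothetical helper, and the 224x224 input is the size used in the shape check above.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output height/width of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
size = 224
for num_convs, out_channels in conv_arch:
    for _ in range(num_convs):
        size = conv_out(size, kernel=3, padding=1)  # 3x3 conv, padding 1: size unchanged
    size = conv_out(size, kernel=2, stride=2)       # 2x2 max pooling, stride 2: size halved
    print(out_channels, size)

# Number of features entering the first fully connected layer
flat_features = out_channels * size * size
print(flat_features)
```

With a different input resolution the final size would change, and the `out_channels * 7 * 7` in `vgg` would need to be adjusted accordingly.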

- Modify the model

- Because VGG-11 is computationally heavier than AlexNet, build a network with fewer channels

ratio = 4  # divide every channel count by 4
small_conv_arch = [(pair[0], pair[1] // ratio) for pair in conv_arch]
small_net = vgg(small_conv_arch)
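To get a feel for how much the channel reduction saves, the parameter count can be estimated by hand instead of instantiating both networks. This is a rough sketch under the same assumptions as `vgg` above (1 input channel, 224x224 input, 3x3 convolutions with bias, three fully connected layers, 10 classes); `count_params` is a hypothetical helper, not part of d2l or PyTorch.

```python
def count_params(conv_arch, in_channels=1, input_size=224, hidden=4096, num_classes=10):
    """Analytic parameter count for the VGG layout used in this article."""
    total = 0
    size = input_size
    for num_convs, out_channels in conv_arch:
        for _ in range(num_convs):
            # 3x3 kernel weights plus one bias per output channel
            total += 3 * 3 * in_channels * out_channels + out_channels
            in_channels = out_channels
        size //= 2  # each block ends with a 2x2, stride-2 max pooling
    flat = in_channels * size * size
    for fan_in, fan_out in ((flat, hidden), (hidden, hidden), (hidden, num_classes)):
        total += fan_in * fan_out + fan_out  # weights plus biases
    return total


conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
ratio = 4
small_conv_arch = [(n, c // ratio) for n, c in conv_arch]

full = count_params(conv_arch)
small = count_params(small_conv_arch)
print(f"full: {full:,}  small: {small:,}  ratio: {full / small:.1f}x")
```

Note that the two 4096-unit fully connected layers are not scaled by `ratio`, so the total shrinks by less than the convolutional part alone does.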

- Load the dataset

batch_size = 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)

- Train the model

lr, num_epochs = 0.05, 10
d2l.train_ch6(small_net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
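For readers without d2l installed, the sketch below shows roughly what a single training step looks like in plain PyTorch. It assumes, without having confirmed it against the d2l source, that `d2l.train_ch6` uses Xavier initialization, SGD and cross-entropy loss. The tiny model and the random tensors are stand-ins for illustration only, not the article's `small_net` or Fashion-MNIST.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Tiny stand-in model (hypothetical): one VGG-style block plus a linear classifier
model = nn.Sequential(
    nn.Conv2d(1, 4, kernel_size=3, padding=1), nn.ReLU(),
    nn.MaxPool2d(kernel_size=2, stride=2),   # 8x8 -> 4x4
    nn.Flatten(),
    nn.Linear(4 * 4 * 4, 10),
)


def init_weights(m):
    # Xavier initialization for layers with weights (assumed to match d2l's choice)
    if isinstance(m, (nn.Linear, nn.Conv2d)):
        nn.init.xavier_uniform_(m.weight)


model.apply(init_weights)

# Random stand-in batch: 16 single-channel 8x8 images with labels in [0, 10)
X = torch.randn(16, 1, 8, 8)
y = torch.randint(0, 10, (16,))

loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.05)

before = model[4].weight.detach().clone()
optimizer.zero_grad()
loss = loss_fn(model(X), y)   # forward pass and loss
loss.backward()               # backpropagation
optimizer.step()              # one SGD update
changed = not torch.equal(before, model[4].weight)
print(f"loss {loss.item():.3f}, weights updated: {changed}")
```

A full training run repeats this step over every batch of `train_iter` for `num_epochs` epochs and evaluates on `test_iter` after each epoch.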
