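Preface

This week I studied a simple model for human pose estimation. I learned how the model is built, and how to draw the model diagram from the paper and its code so that I could reproduce it.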

Paper

Title: Simple Baselines for Human Pose Estimation and Tracking

Authors: Bin Xiao, Haiping Wu, and Yichen Wei

Key ideas

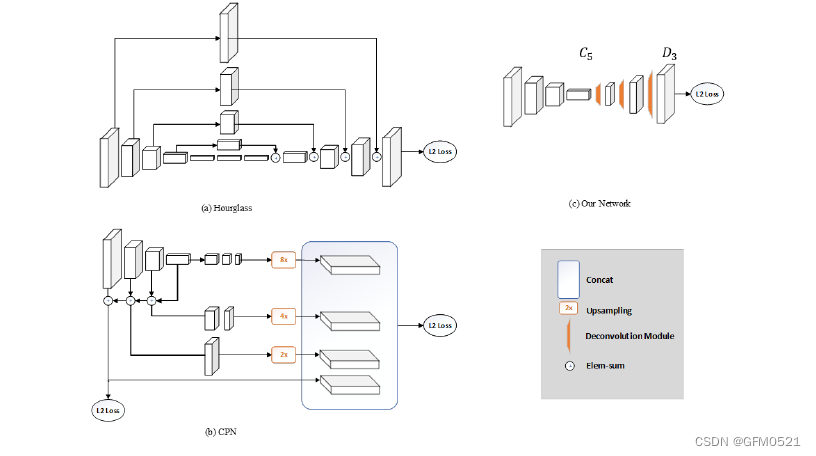

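The pose estimation method proposed in the paper is a simple baseline. Unlike Hourglass and CPN, the model appends a few deconvolution (transposed convolution) layers to ResNet and upsamples the low-resolution feature map to the heatmap resolution (64x48 for a 256x192 input, i.e. 1/4 of the input size), producing the heatmaps needed for keypoint prediction. There is no feature fusion at all, so the network structure is very simple. Compared with Hourglass and CPN, the only relatively novel point is that deconvolution replaces the combination of upsampling and convolution. As the paper puts it, the method simply adds a few deconvolutional layers over the last convolution stage of ResNet, and the authors adopt this structure because it is arguably the simplest way to generate heatmaps from deep, low-resolution features.

By default, three deconvolutional layers with batch normalization and ReLU activation are used. Each layer has 256 filters with a 4×4 kernel and a stride of 2. A 1×1 convolutional layer is added at the end to generate the predicted heatmaps {H1 ... Hk} for all k keypoints. Comparing the three structures in Figure 1, the paper's method clearly differs from the other two in how it generates high-resolution feature maps. Both of those works use upsampling to increase the feature-map resolution and put the convolutional parameters in other blocks. In contrast, this method combines the upsampling and the convolutional parameters into the deconvolutional layers in a much simpler way, without using skip-layer connections.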
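To make the contrast concrete, here is a minimal PyTorch sketch, not the authors' code, that puts one "upsample then convolve" step next to a single transposed convolution with the 4×4 kernel and stride 2 described above. The 512-channel 8x6 input, the nearest-neighbour mode and the 3×3 kernel in the first variant are assumptions chosen only for illustration.

```python
import torch
from torch import nn

# Assumed input: a 512-channel, 8x6 feature map (illustrative size only).
x = torch.randn(1, 512, 8, 6)

# Variant 1: fixed upsampling followed by a separate convolution.
# The upsampling mode and the 3x3 kernel are assumptions for this sketch.
upsample_then_conv = nn.Sequential(
    nn.Upsample(scale_factor=2, mode="nearest"),
    nn.Conv2d(512, 256, kernel_size=3, padding=1, bias=False),
)

# Variant 2: one learnable transposed convolution (k=4, s=2, p=1),
# which upsamples and mixes channels in a single layer.
deconv = nn.ConvTranspose2d(512, 256, kernel_size=4, stride=2, padding=1, bias=False)

y_up = upsample_then_conv(x)
y_deconv = deconv(x)
print(y_up.shape, y_deconv.shape)  # both double the spatial size to 16x12
```

Both variants double the resolution; the difference is that the transposed convolution folds the upsampling and the learned weights into one layer.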

Model

Model diagram

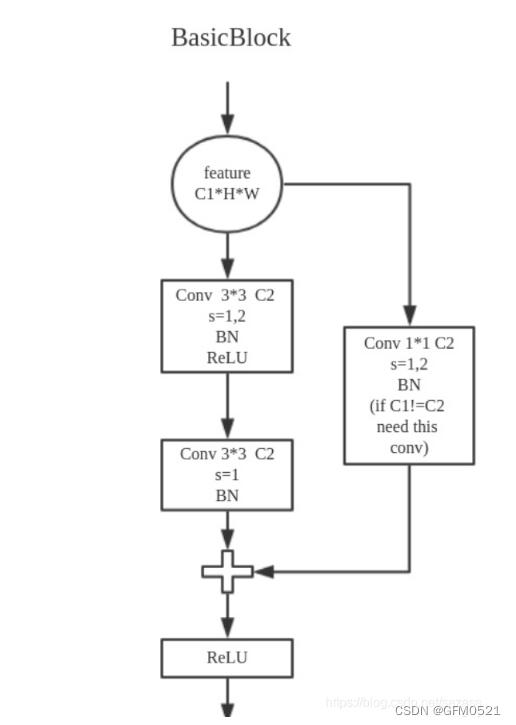

BasicBlock

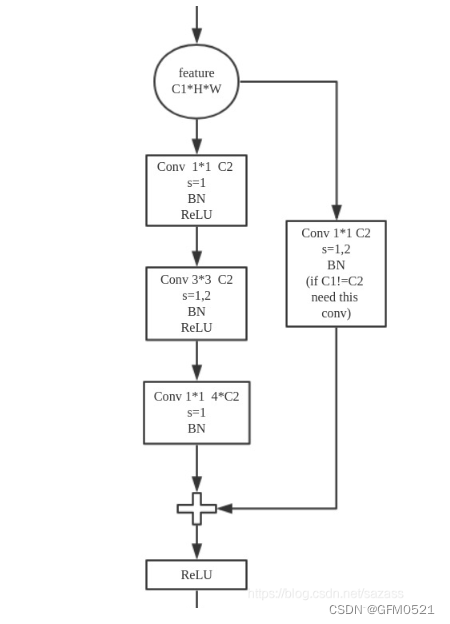

Bottleneck

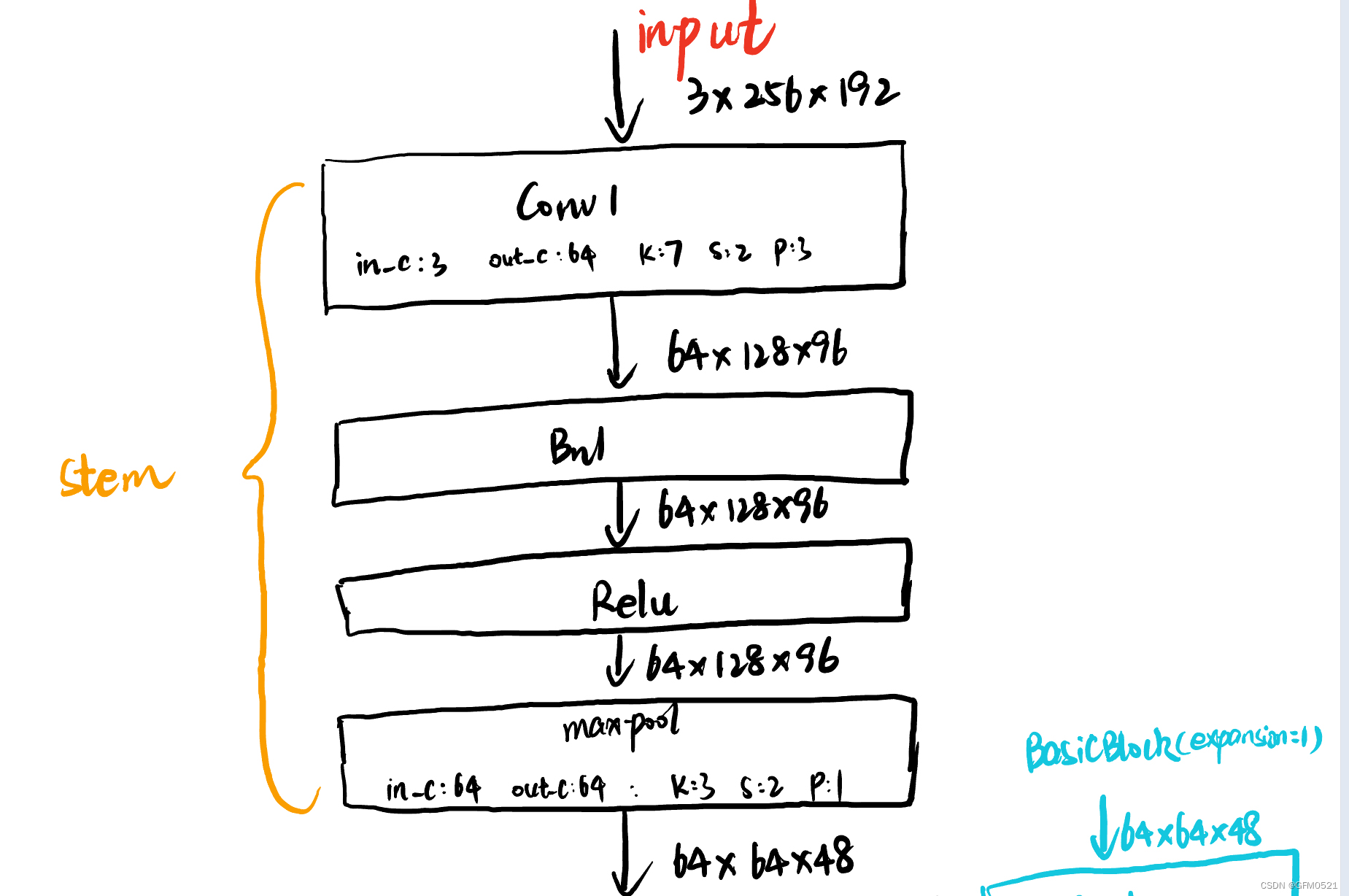

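Stem

The stem performs the initial feature extraction on the input image. A 3x256x192 input becomes a 64x128x96 feature map after conv1 (k=7, s=2, p=3), and then a 64x64x48 feature map after bn1, ReLU and maxpool (k=3, s=2, p=1).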

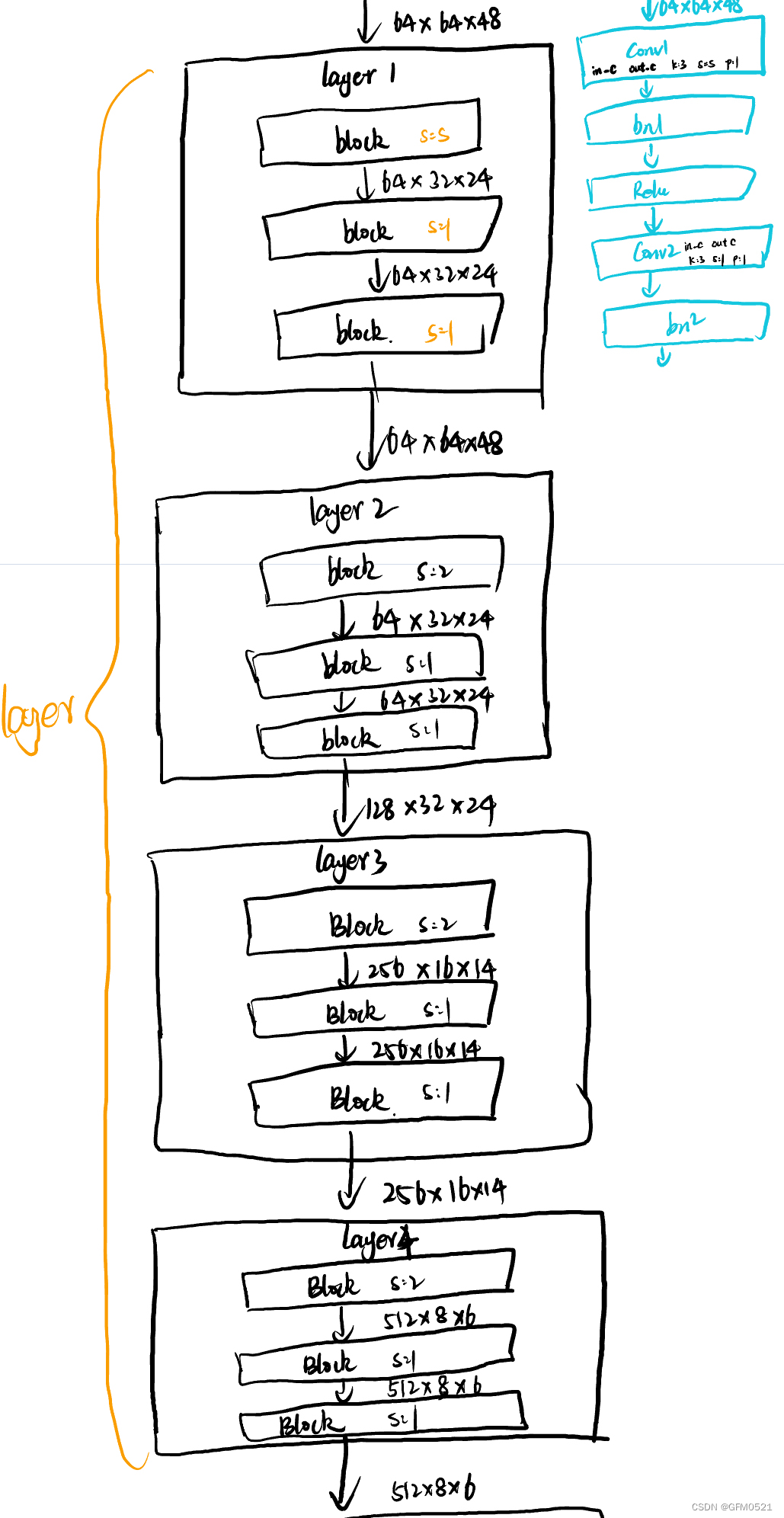
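The stem sizes can be checked by hand with the standard convolution output-size formula, floor((size + 2p - k) / s) + 1. The sketch below applies it to the conv1 and maxpool settings used in the model code; the helper name `conv_out` is made up for this example.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a convolution or pooling layer along one axis."""
    return (size + 2 * padding - kernel) // stride + 1

h, w = 256, 192

# conv1: kernel 7, stride 2, padding 3, 64 output channels
h, w = conv_out(h, 7, 2, 3), conv_out(w, 7, 2, 3)
after_conv1 = (64, h, w)

# maxpool: kernel 3, stride 2, padding 1 (channel count unchanged)
h, w = conv_out(h, 3, 2, 1), conv_out(w, 3, 2, 1)
after_pool = (64, h, w)

print(after_conv1, after_pool)  # (64, 128, 96) (64, 64, 48)
```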

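Layers

The feature map produced by the stem is fed into Layers, which performs the deep feature extraction. Layers consists of four stages built from either BasicBlock or Bottleneck modules; BasicBlock is used as the example here. In BasicBlock, conv1 is a convolution with k=3, s=s, p=1 (the stride is determined by the argument passed in); after bn1 and ReLU, conv2 is a convolution with k=3, s=1, p=1. layer1 receives no stride argument, so all of its 3x3 convolutions use s=1, p=1 and the output is 64x64x48. layer2 is given stride 2 and 128 output channels, so after its BasicBlocks the feature map is 128x32x24. layer3 is given stride 2 and 256 output channels, and outputs 256x16x12. layer4 is given stride 2 and 512 output channels, and outputs 512x8x6. Only the first block of each stage applies the stride, and the number of blocks per stage depends on the network depth (for example [2, 2, 2, 2] for ResNet-18).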

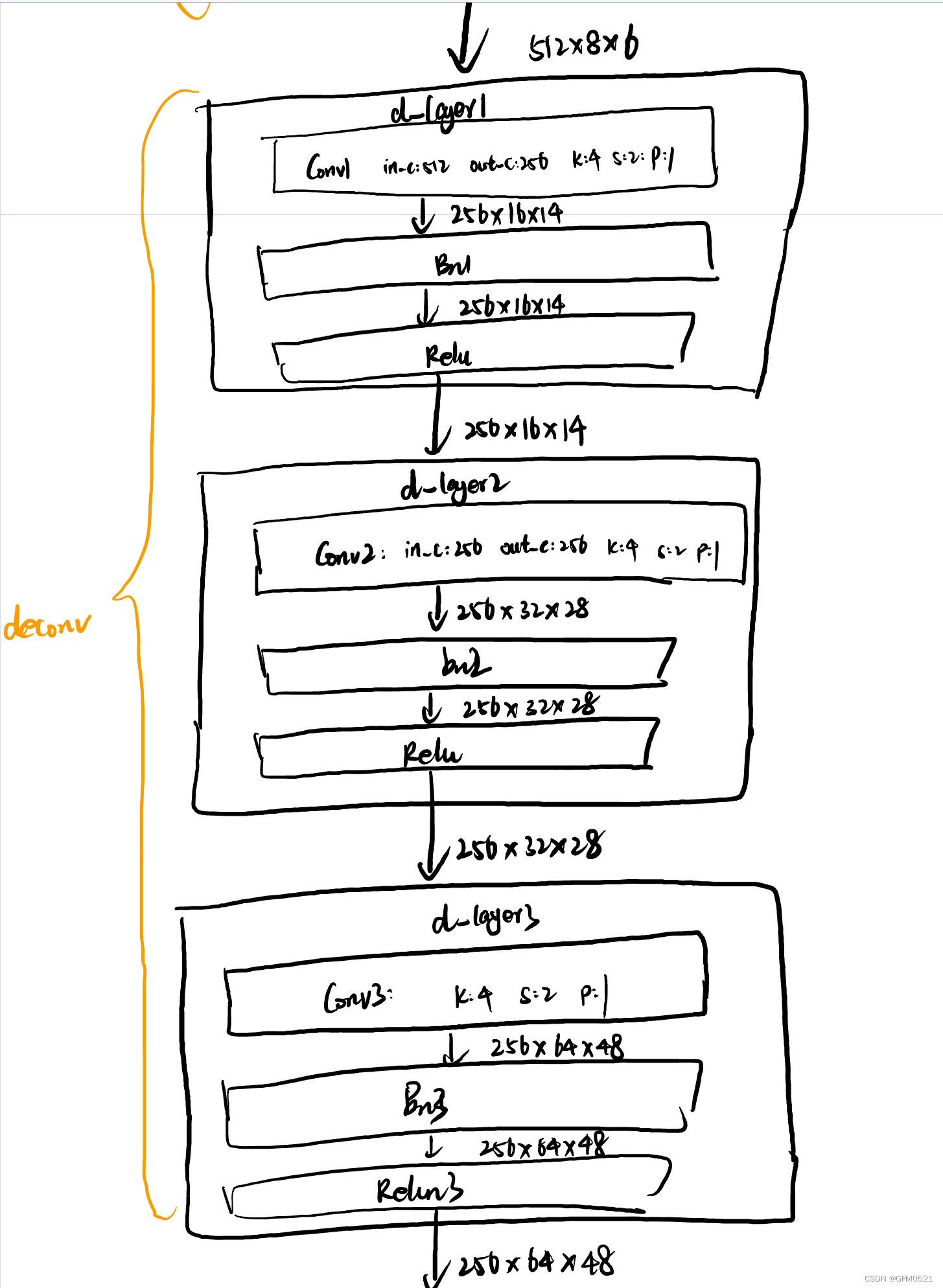
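The channel bookkeeping across the four stages can be sketched without building any layers. The snippet below mirrors the condition used in `_make_layer` in the model code (`stride != 1 or inplanes != outplane * expansion`) to show which stages need a 1x1 projection on the shortcut. The function name `stage_plan` and the tuple layout are assumptions made for this illustration.

```python
def stage_plan(expansion):
    """List (in_channels, out_channels, stride, needs_projection) for the
    first block of each of the four stages."""
    inplanes = 64  # channels leaving the stem
    plan = []
    for planes, stride in [(64, 1), (128, 2), (256, 2), (512, 2)]:
        out_channels = planes * expansion
        # Same test as in _make_layer: project the shortcut whenever the
        # spatial size or the channel count changes.
        needs_projection = stride != 1 or inplanes != out_channels
        plan.append((inplanes, out_channels, stride, needs_projection))
        inplanes = out_channels
    return plan

basic = stage_plan(expansion=1)       # BasicBlock
bottleneck = stage_plan(expansion=4)  # Bottleneck

for row in basic:
    print("BasicBlock ", row)
for row in bottleneck:
    print("Bottleneck ", row)
```

With BasicBlock, layer1 keeps 64 channels at stride 1 and so needs no projection. With Bottleneck, layer1 already expands 64 channels to 256, so even the stride-1 stage needs one.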

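deconv

A few deconvolution layers are appended to upsample the low-resolution feature map to the heatmap resolution, producing the heatmaps needed for keypoint prediction.

deconv contains three deconvolution stages, each made up of a ConvTranspose2d, a BatchNorm and a ReLU. The first transposed convolution has in_plane = 512, out_plane = 256, k=4, s=2, p=1 and outputs 256x16x12. After all three stages, the final output is 256x64x48.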

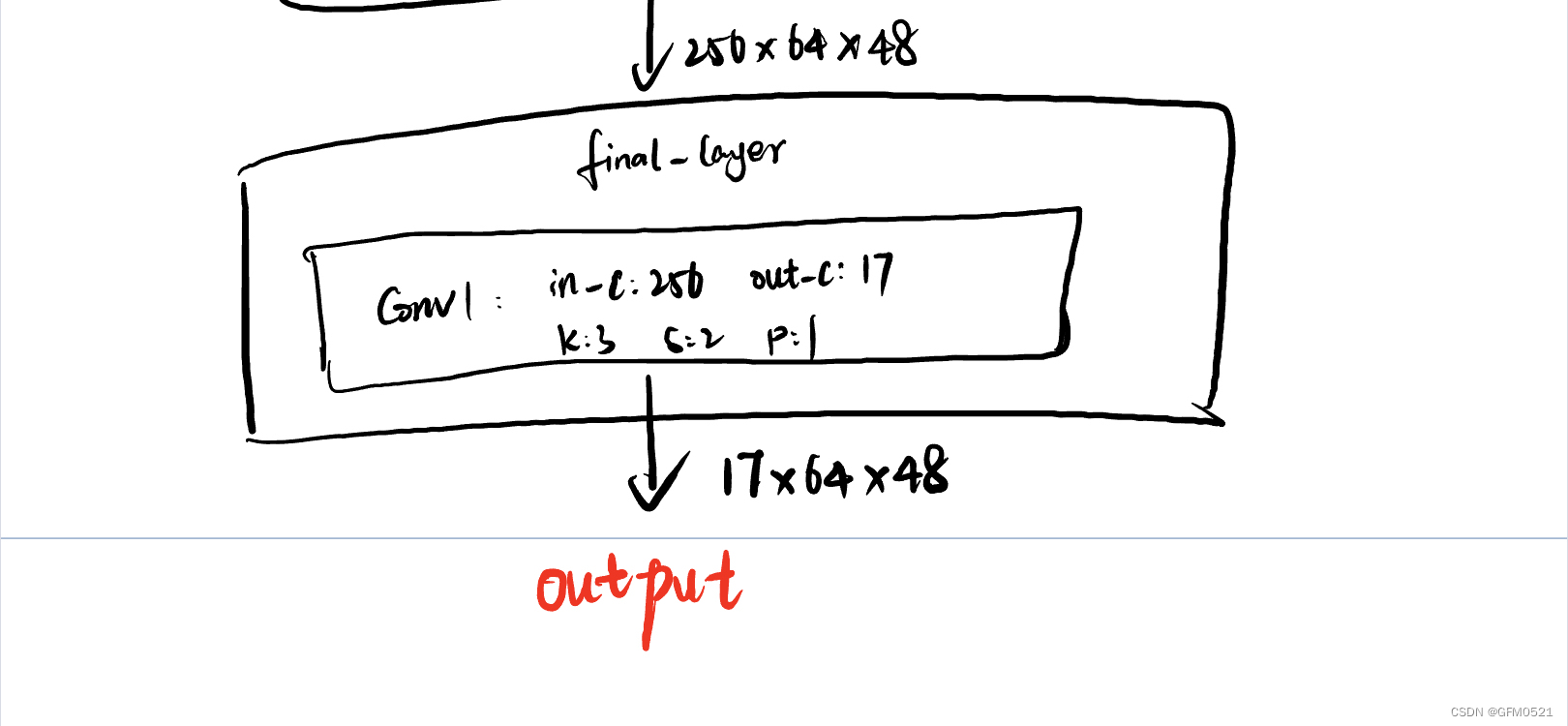
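Why k=4, s=2, p=1 exactly doubles the resolution follows from the transposed-convolution size formula, out = (in - 1) * s - 2p + k (with dilation 1 and no output padding). A small sketch, with the helper name `deconv_out` made up for this example:

```python
def deconv_out(size, kernel=4, stride=2, padding=1, output_padding=0):
    """Output length of a transposed convolution along one axis (dilation 1)."""
    return (size - 1) * stride - 2 * padding + kernel + output_padding

h, w = 8, 6  # spatial size after layer4 for a 256x192 input
sizes = [(h, w)]
for _ in range(3):  # three deconv stages
    h, w = deconv_out(h), deconv_out(w)
    sizes.append((h, w))

print(sizes)  # [(8, 6), (16, 12), (32, 24), (64, 48)]
```

With these settings the formula reduces to out = 2 * in, so three stages give an 8x upsampling in total.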

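final_layer

final_layer turns the deconvolved feature map into the output required by the dataset. COCO has 17 keypoints, so the channel count of the final feature map must be 17. The final_layer convolution has in_plane = 256, out_plane = 17, k=1, s=1, p=0, so the final output feature map is 17x64x48.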
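As a quick sanity check on this layer, the sketch below compares a 1x1, stride-1 convolution (the setting used by `_final_layer_` in the model code) with a hypothetical strided 3x3 convolution, and counts the parameters of the 1x1 layer. The helper names `conv_out` and `conv_params` are made up for this illustration.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a convolution along one axis."""
    return (size + 2 * padding - kernel) // stride + 1

def conv_params(in_ch, out_ch, kernel, bias=True):
    """Number of learnable parameters in a square-kernel convolution."""
    return in_ch * out_ch * kernel * kernel + (out_ch if bias else 0)

# 1x1, stride 1, padding 0: spatial size is preserved.
one_by_one = (conv_out(64, 1, 1, 0), conv_out(48, 1, 1, 0))

# Hypothetical 3x3, stride 2, padding 1: spatial size would be halved.
strided = (conv_out(64, 3, 2, 1), conv_out(48, 3, 2, 1))

params = conv_params(256, 17, 1)

print(one_by_one, strided, params)  # (64, 48) (32, 24) 4369
```

Only the 1x1, stride-1 setting keeps the 64x48 heatmap size, and it does so with a few thousand parameters, since it only remaps 256 channels to 17.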

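Model code

This is my own compact implementation of the model. Writing it helped me understand the whole model-construction process.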

    import torch
    from torch import nn


    def Conv3x3(inplane, outplane, stride=1):
        """3x3 convolution with padding 1 and no bias (a BatchNorm follows it)."""
        return nn.Conv2d(inplane, outplane, kernel_size=3, stride=stride, padding=1, bias=False)


    class BasicBlock(nn.Module):
        expansion = 1

        def __init__(self, inplane, outplane, stride=1, downsample=None):
            super(BasicBlock, self).__init__()
            # conv1 uses the stride passed in; conv2 always uses stride 1.
            self.conv1 = Conv3x3(inplane, outplane, stride)
            self.bn1 = nn.BatchNorm2d(outplane)
            self.relu = nn.ReLU()
            # Note: conv2 maps outplane -> outplane, not inplane -> outplane.
            self.conv2 = Conv3x3(outplane, outplane)
            self.bn2 = nn.BatchNorm2d(outplane)
            self.downsample = downsample
            self.stride = stride

        def forward(self, x):
            residual = x
            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)
            out = self.conv2(out)
            out = self.bn2(out)
            # Project the shortcut when the shape changes.
            if self.downsample is not None:
                residual = self.downsample(x)
            out += residual
            out = self.relu(out)
            return out


    class Bottleneck(nn.Module):
        expansion = 4

        def __init__(self, inplane, outplane, stride=1, downsample=None):
            super(Bottleneck, self).__init__()
            self.conv1 = nn.Conv2d(inplane, outplane, kernel_size=1, stride=stride, bias=False)
            self.bn1 = nn.BatchNorm2d(outplane)
            self.conv2 = nn.Conv2d(outplane, outplane, kernel_size=3, stride=1, padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(outplane)
            self.conv3 = nn.Conv2d(outplane, outplane * self.expansion, kernel_size=1, bias=False)
            self.bn3 = nn.BatchNorm2d(outplane * self.expansion)
            self.relu = nn.ReLU()
            self.downsample = downsample

        def forward(self, x):
            residual = x
            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)  # ReLU after the first conv/BN pair
            out = self.conv2(out)
            out = self.bn2(out)
            out = self.relu(out)  # ReLU after the second conv/BN pair
            out = self.conv3(out)
            out = self.bn3(out)
            if self.downsample is not None:
                residual = self.downsample(x)
            out += residual
            out = self.relu(out)
            return out


    class SampplePose(nn.Module):
        def __init__(self, block, layers, **kwargs):
            super(SampplePose, self).__init__()
            self.inplanes = 64
            # Stem
            self.conv1 = nn.Conv2d(in_channels=3, out_channels=64, kernel_size=7, stride=2, padding=3, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.relu = nn.ReLU()
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            # Four ResNet stages
            self.layer1 = self._make_layer(block, 64, layers[0])
            self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
            self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
            self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
            # Three deconv stages, 256 filters each, 4x4 kernels
            self.deconv = self._deconv_make_layer_(3, 256, 4)
            self.final_layer = self._final_layer_()

        def forward(self, x):
            out = self.conv1(x)
            print(f'Feature map after conv1: {out.shape}')
            out = self.bn1(out)
            out = self.relu(out)
            out = self.maxpool(out)
            print(f'Feature map after maxpool: {out.shape}')
            out = self.layer1(out)
            print(f'After layer1: {out.shape}')
            out = self.layer2(out)
            print(f'After layer2: {out.shape}')
            out = self.layer3(out)
            print(f'After layer3: {out.shape}')
            out = self.layer4(out)
            print(f'After layer4: {out.shape}')
            out = self.deconv(out)
            print(f'After deconv: {out.shape}')
            out = self.final_layer(out)
            return out

        def _make_layer(self, block, outplane, blocks, stride=1):
            downsample = None
            # A 1x1 projection is needed on the shortcut whenever the spatial
            # size or the channel count changes.
            if stride != 1 or self.inplanes != outplane * block.expansion:
                downsample = nn.Sequential(
                    nn.Conv2d(self.inplanes, outplane * block.expansion,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(outplane * block.expansion),
                )
            layers = []
            # Only the first block of a stage applies the stride and the projection.
            layers.append(block(self.inplanes, outplane, stride, downsample))
            self.inplanes = outplane * block.expansion
            for i in range(1, blocks):
                layers.append(block(self.inplanes, outplane))
            return nn.Sequential(*layers)

        # Deconvolution head
        def _deconv_make_layer_(self, num_layers, num_filters, num_kernels):
            layers = []
            for i in range(num_layers):
                outplane = num_filters
                # padding=1 matches a 4x4 kernel with stride 2 (exact 2x upsampling).
                layers.append(nn.ConvTranspose2d(in_channels=self.inplanes, out_channels=outplane,
                                                 kernel_size=num_kernels, stride=2, padding=1))
                layers.append(nn.BatchNorm2d(outplane))
                layers.append(nn.ReLU())
                self.inplanes = outplane
            return nn.Sequential(*layers)

        def _final_layer_(self):
            # 1x1 convolution: 256 channels -> 17 COCO keypoint heatmaps
            return nn.Conv2d(in_channels=256, out_channels=17, kernel_size=1, stride=1, padding=0)


    if __name__ == '__main__':
        x = torch.randn(1, 3, 256, 192)

        SampplePose18 = SampplePose(BasicBlock, [2, 2, 2, 2])
        out18 = SampplePose18(x)
        print(f'ResNet18 final output: {out18.shape}')

        SampplePose34 = SampplePose(BasicBlock, [3, 4, 6, 3])
        out34 = SampplePose34(x)
        print(f'ResNet34 final output: {out34.shape}')

        SampplePose50 = SampplePose(Bottleneck, [3, 4, 6, 3])
        out50 = SampplePose50(x)
        print(f'ResNet50 final output: {out50.shape}')

        # ResNet-101 uses 23 blocks in the third stage.
        SampplePose101 = SampplePose(Bottleneck, [3, 4, 23, 3])
        out101 = SampplePose101(x)
        print(f'ResNet101 final output: {out101.shape}')

        # ResNet-152 uses 8 and 36 blocks in the second and third stages.
        SampplePose152 = SampplePose(Bottleneck, [3, 8, 36, 3])
        out152 = SampplePose152(x)
        print(f'ResNet152 final output: {out152.shape}')

Output

    Feature map after conv1: torch.Size([1, 64, 128, 96])
    Feature map after maxpool: torch.Size([1, 64, 64, 48])
    After layer1: torch.Size([1, 64, 64, 48])
    After layer2: torch.Size([1, 128, 32, 24])
    After layer3: torch.Size([1, 256, 16, 12])
    After layer4: torch.Size([1, 512, 8, 6])
    After deconv: torch.Size([1, 256, 64, 48])
    ResNet18 final output: torch.Size([1, 17, 64, 48])
    Feature map after conv1: torch.Size([1, 64, 128, 96])
    Feature map after maxpool: torch.Size([1, 64, 64, 48])
    After layer1: torch.Size([1, 64, 64, 48])
    After layer2: torch.Size([1, 128, 32, 24])
    After layer3: torch.Size([1, 256, 16, 12])
    After layer4: torch.Size([1, 512, 8, 6])
    After deconv: torch.Size([1, 256, 64, 48])
    ResNet34 final output: torch.Size([1, 17, 64, 48])
    Feature map after conv1: torch.Size([1, 64, 128, 96])
    Feature map after maxpool: torch.Size([1, 64, 64, 48])
    After layer1: torch.Size([1, 256, 64, 48])
    After layer2: torch.Size([1, 512, 32, 24])
    After layer3: torch.Size([1, 1024, 16, 12])
    After layer4: torch.Size([1, 2048, 8, 6])
    After deconv: torch.Size([1, 256, 64, 48])
    ResNet50 final output: torch.Size([1, 17, 64, 48])
    Feature map after conv1: torch.Size([1, 64, 128, 96])
    Feature map after maxpool: torch.Size([1, 64, 64, 48])
    After layer1: torch.Size([1, 256, 64, 48])
    After layer2: torch.Size([1, 512, 32, 24])
    After layer3: torch.Size([1, 1024, 16, 12])
    After layer4: torch.Size([1, 2048, 8, 6])
    After deconv: torch.Size([1, 256, 64, 48])
    ResNet101 final output: torch.Size([1, 17, 64, 48])
    Feature map after conv1: torch.Size([1, 64, 128, 96])
    Feature map after maxpool: torch.Size([1, 64, 64, 48])
    After layer1: torch.Size([1, 256, 64, 48])
    After layer2: torch.Size([1, 512, 32, 24])
    After layer3: torch.Size([1, 1024, 16, 12])
    After layer4: torch.Size([1, 2048, 8, 6])
    After deconv: torch.Size([1, 256, 64, 48])
    ResNet152 final output: torch.Size([1, 17, 64, 48])

    Process finished with exit code 0

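Summary

This was my first time reading the concrete implementation of a model from a paper, and at first I had little idea where to start. A senior labmate explained a lot to me and suggested combining the paper with the code to draw the model structure myself, so that I could implement the code while looking at my own diagram. Learning this way, I felt I made quick progress. I now know where to start reading the code, what convolution and deconvolution do, and what the model finally outputs. While writing the code I also gained a clearer understanding of what each parameter takes in and produces. When writing the BasicBlock module, I initially passed in_plane, out_plane to the second convolution as well, so the channel counts came out wrong when the model ran. It took me a long time to track the error down; the main reason was that I had not yet fully understood how the parameters are passed. I hope to build on this and implement the training process next.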
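The conv2 pitfall mentioned above can be reproduced in isolation. This is a minimal sketch of one way the mistake can surface, not a record of the exact error I hit; the channel sizes and variable names are chosen only for illustration.

```python
import torch
from torch import nn

inplane, outplane = 64, 128
x = torch.randn(1, inplane, 8, 6)

conv1 = nn.Conv2d(inplane, outplane, kernel_size=3, padding=1, bias=False)
# Mistake: conv2 built with (inplane, outplane) again.
wrong_conv2 = nn.Conv2d(inplane, outplane, kernel_size=3, padding=1, bias=False)
# Fix: conv2 receives conv1's output, so it must take `outplane` channels.
right_conv2 = nn.Conv2d(outplane, outplane, kernel_size=3, padding=1, bias=False)

out = conv1(x)  # now has `outplane` channels

try:
    wrong_conv2(out)
    mismatch_detected = False
except RuntimeError:
    # PyTorch rejects a 128-channel input to a conv expecting 64 channels.
    mismatch_detected = True

ok_shape = tuple(right_conv2(out).shape)
print(mismatch_detected, ok_shape)
```

The mistake stays hidden whenever inplane equals outplane, which is why it may only show up in the first block of a stage that changes the channel count.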
