nn.L1Loss

https://pytorch.org/docs/stable/generated/torch.nn.L1Loss.html#torch.nn.L1Loss

Example:

input=[1,3,4]

target=[2,3,7]

Then loss=(|2-1|+|3-3|+|7-4|)/3=4/3≈1.333

import torch

from torch import nn

input = torch.tensor([1, 3, 4],dtype=torch.float)

target = torch.tensor([2, 3, 7],dtype=torch.float)

loss_fn1 = nn.L1Loss()

loss1 = loss_fn1(input, target)

print("loss1:{}".format(loss1))

loss1:1.3333333730697632

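The reduction parameter controls how the per-element losses are combined. The default is 'mean' (average); pass reduction='sum' to add them up instead.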

loss_fn2=nn.L1Loss(reduction='sum')

loss2=loss_fn2(input,target)

print("loss2:{}".format(loss2))

loss2:4.0
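To make the arithmetic behind the two reduction modes explicit, here is a minimal plain-Python sketch. The helper name `l1_loss_by_hand` is hypothetical and is not part of PyTorch. It only mirrors the calculation shown above, plus the 'none' mode, which returns the unreduced per-element errors.

```python
def l1_loss_by_hand(inputs, targets, reduction="mean"):
    """Hypothetical helper: mirrors the arithmetic of nn.L1Loss on plain lists."""
    # Absolute error for each (input, target) pair
    abs_errors = [abs(t - x) for x, t in zip(inputs, targets)]
    if reduction == "mean":
        return sum(abs_errors) / len(abs_errors)
    if reduction == "sum":
        return sum(abs_errors)
    if reduction == "none":
        return abs_errors
    raise ValueError(f"unknown reduction: {reduction}")


inputs = [1.0, 3.0, 4.0]
targets = [2.0, 3.0, 7.0]

print(l1_loss_by_hand(inputs, targets))          # 1.3333333333333333
print(l1_loss_by_hand(inputs, targets, "sum"))   # 4.0
print(l1_loss_by_hand(inputs, targets, "none"))  # [1.0, 0.0, 3.0]
```

The small difference from the 1.3333333730697632 printed earlier comes from PyTorch computing in 32-bit floats, while plain Python uses 64-bit floats.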

nn.MSELoss

Mean squared error (MSE) loss function

https://pytorch.org/docs/stable/generated/torch.nn.MSELoss.html#torch.nn.MSELoss

input=[1,3,4]

target=[2,3,7]

Sum of squared errors: (2-1)**2+(3-3)**2+(7-4)**2=1+0+9=10

loss_fn3=nn.MSELoss()

loss3=loss_fn3(input,target)

print("loss3:{}".format(loss3))

loss3:3.3333332538604736

The default is to average, i.e. 10/3=3.3333. Pass reduction='sum' to get the sum instead.

loss_fn4=nn.MSELoss(reduction='sum')

loss4=loss_fn4(input,target)

print("loss4:{}".format(loss4))

loss4:10.0
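As a cross-check, the same numbers can be reproduced directly from the definition. The sketch below uses the names `pred`, `per_element`, `manual_sum` and `manual_mean`, which are chosen here for illustration only. It also uses reduction='none' to show the individual squared errors before any reduction is applied.

```python
import torch
from torch import nn

pred = torch.tensor([1.0, 3.0, 4.0])
target = torch.tensor([2.0, 3.0, 7.0])

# reduction='none' returns the unreduced, per-element squared errors
per_element = nn.MSELoss(reduction='none')(pred, target)
print(per_element)  # tensor([1., 0., 9.])

# The same quantities computed directly from the definition
manual_sum = ((pred - target) ** 2).sum()
manual_mean = ((pred - target) ** 2).mean()

print(manual_sum)   # tensor(10.)
print(manual_mean)  # tensor(3.3333)
```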

Cross-entropy loss function: CrossEntropyLoss

https://pytorch.org/docs/stable/generated/torch.nn.CrossEntropyLoss.html#torch.nn.CrossEntropyLoss

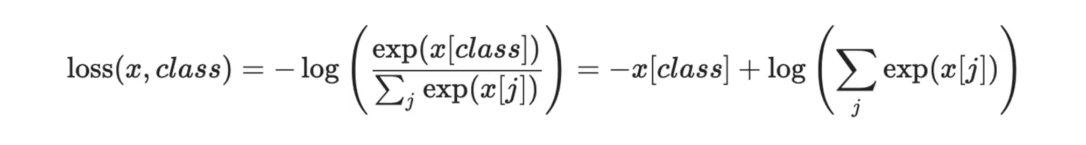

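The formula is: loss(x, class) = -x[class] + ln(sum_j exp(x[j]))

For example, for a 3-class image classification task, suppose the model's final output input is [0.2, 0.6, 0.3] and the correct label is 1, i.e. the second class. Note that this is a single image, so input has size 1x3.

The loss is then: -x[1]+ln(exp(x[0])+exp(x[1])+exp(x[2]))=-0.6+ln(exp(0.2)+exp(0.6)+exp(0.3))≈0.8801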

input=torch.tensor([0.2,0.6,0.3])

target=torch.tensor([1])

loss_fn=nn.CrossEntropyLoss()

input=torch.reshape(input,[1,3]) # the 1 is the batch size (one sample)

loss=loss_fn(input,target)

print(loss)

tensor(0.8801)
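The hand calculation above can be checked without PyTorch. This is a minimal sketch using only the standard `math` module. The function name `cross_entropy_by_hand` is hypothetical, and it assumes the input values are raw, unnormalized scores, as in the example.

```python
import math


def cross_entropy_by_hand(logits, target_index):
    """Hypothetical helper: -x[class] + ln(sum_j exp(x[j])) for one sample."""
    log_sum_exp = math.log(sum(math.exp(x) for x in logits))
    return -logits[target_index] + log_sum_exp


loss = cross_entropy_by_hand([0.2, 0.6, 0.3], 1)
print(round(loss, 4))  # 0.8801
```

For very large scores, `math.exp` can overflow. A more robust variant would subtract the maximum score from every entry before exponentiating, which leaves the result unchanged.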

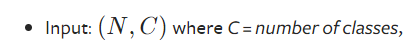

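Pay attention to the input format.

If the batch size is N, input has shape NxC, where C is the number of classes, and target is a 1-D tensor of length N holding one class index per sample. By default the result is averaged, i.e. divided by the batch size.

For example:

input=[[0.2, 0.6, 0.3],

[0.5, 0.1, 0.7]]

target=[1, 2]

The loss for the second row is 0.8619,

and averaging the two rows gives (0.8801+0.8619)/2=0.8710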

input=torch.tensor([[0.2,0.6,0.3],

[0.5,0.1,0.7]])

target=torch.tensor([1,2])

loss_fn=nn.CrossEntropyLoss()

loss=loss_fn(input,target)

print(loss)

tensor(0.8710)
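To see the per-row losses that go into this average, one option is reduction='none'. The sketch below also recomputes the batch loss as log-softmax followed by negative log-likelihood, which is how the PyTorch documentation describes CrossEntropyLoss. The variable names `logits`, `per_sample` and `manual` are chosen here for illustration.

```python
import torch
from torch import nn
import torch.nn.functional as F

logits = torch.tensor([[0.2, 0.6, 0.3],
                       [0.5, 0.1, 0.7]])  # shape (N=2, C=3)
target = torch.tensor([1, 2])             # shape (N,), one class index per row

# Unreduced loss: one value per sample in the batch
per_sample = nn.CrossEntropyLoss(reduction='none')(logits, target)
print(per_sample)         # tensor([0.8801, 0.8619])
print(per_sample.mean())  # tensor(0.8710)

# Same batch loss via log-softmax over the class dimension, then NLL
manual = F.nll_loss(F.log_softmax(logits, dim=1), target)
print(manual)             # tensor(0.8710)
```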

https://www.bilibili.com/video/BV1hE411t7RN?p=23
