目录

GPU加速

把所有的设备cpu、cuda0、cuda1等,挣到一个设备上。

.cuda()方法会搬到GPU上

.to()方法返回一个新的refoungs,是否一致取决于哪种类型数据。对一个模块使用,则模块整体更新。如果对一个tensor使用,结果不一样。data2=data.cuda(),此时data2和data不一样,会产生两个tensor,一个在cpu上一个在gpu上。

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torchvision import datasets, transforms

batch_size=200

learning_rate=0.01

epochs=10

train_loader = torch.utils.data.DataLoader(

datasets.MNIST('mnist_data/', train=True, download=True,

transform=transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])),

batch_size=batch_size, shuffle=True)

test_loader = torch.utils.data.DataLoader(

datasets.MNIST('mnist_data/', train=False, transform=transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])),

batch_size=batch_size, shuffle=True)

class MLP(nn.Module):

def __init__(self):

super(MLP, self).__init__()

self.model = nn.Sequential(

nn.Linear(784, 200),

nn.LeakyReLU(inplace=True),

nn.Linear(200, 200),

nn.LeakyReLU(inplace=True),

nn.Linear(200, 10),

nn.LeakyReLU(inplace=True),

)

def forward(self, x):

x = self.model(x)

return x

device = torch.device('cuda:0')

net = MLP().to(device)

optimizer = optim.SGD(net.parameters(), lr=learning_rate)

criteon = nn.CrossEntropyLoss().to(device)

for epoch in range(epochs):

for batch_idx, (data, target) in enumerate(train_loader):

data = data.view(-1, 28*28)

data, target = data.to(device), target.cuda()

logits = net(data)

loss = criteon(logits, target)

optimizer.zero_grad()

loss.backward()

# print(w1.grad.norm(), w2.grad.norm())

optimizer.step()

if batch_idx % 100 == 0:

print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(

epoch, batch_idx * len(data), len(train_loader.dataset),

100. * batch_idx / len(train_loader), loss.item()))

test_loss = 0

correct = 0

for data, target in test_loader:

data = data.view(-1, 28 * 28)

data, target = data.to(device), target.cuda()

logits = net(data)

test_loss += criteon(logits, target).item()

pred = logits.data.max(1)[1]

correct += pred.eq(target.data).sum()

test_loss /= len(test_loader.dataset)

print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(

test_loss, correct, len(test_loader.dataset),

100. * correct / len(test_loader.dataset)))

net=MLP().to(device),CrossEntropyLoss(),data,target=data.to(device),target.cuda(),使用.to()方法把网路搬到GPU上。

minist测试实战

不断train时,它可能会记住样本,此时效果会很好,但是一换其他样本就效果不佳。

对于每一张图片,对其可能是几的概率做softmax,并且做argmax(返回最大值所在索引),返回值即表明他是几。

logits=torch.rand(4,10)

pred=F.softmax(logits,dim=1)

print('pred shape:',pred.shape)

pred_lable=pred.argmax(dim=1)

print('pred_lable:',pred_lable)

print('logits.argmax:',logits.argmax(dim=1))

label=torch.tensor([9,3,2,4])

correct=torch.eq(pred_lable,label)

print('correct',correct)

accuracy=correct.sum().float().item()/4

print(accuracy)

我们得出[5,3,2,1],假设真实是[9,3,2,4],我们需要计算accuracy=2/4=50%。

计算正确的数量,使用eq()函数,如果元素相等返回1。

correct类型是unit8,我们需要使它变成float类型。

when to test

几个batch一个test

每个epoch一个test

vidsom可视化

visdom效率比tensorboard更高一点,因为后者会把数据写到文件中,导致监听文件占据大量空间。

visdom可以直接使用tensor。

使用visdom

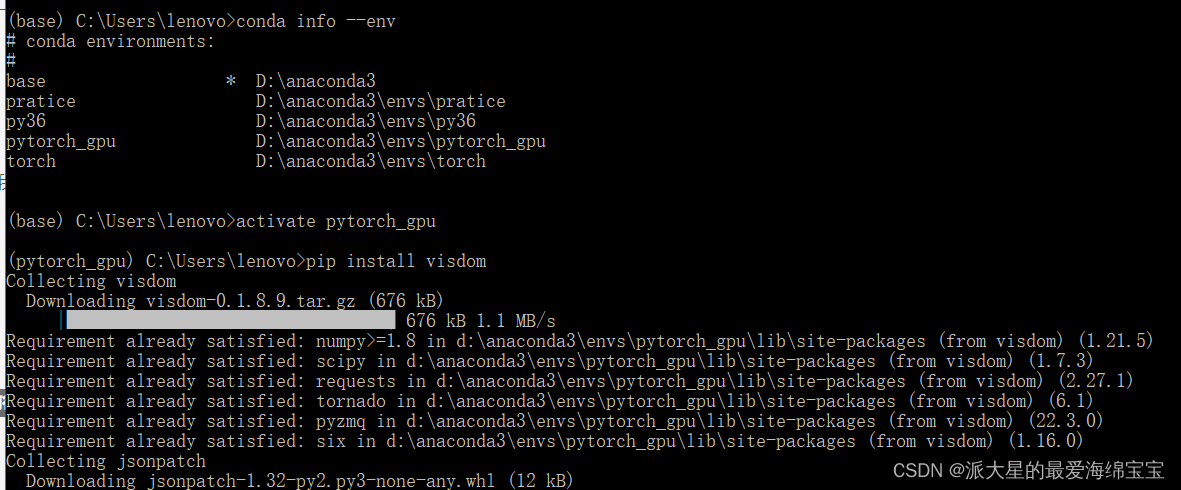

1.install

首先进入anaconda prompt,在我们特定环境中输入pip install visdom

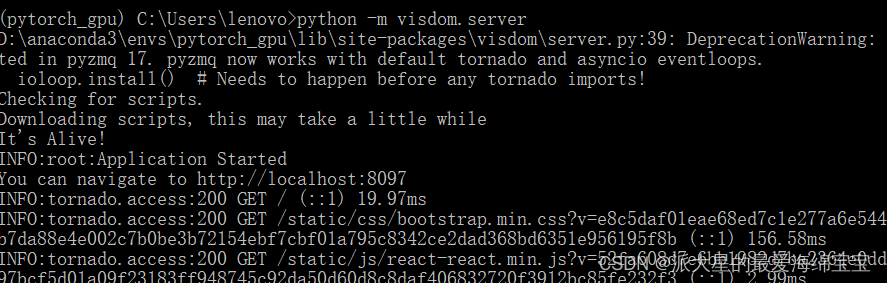

2.run server damon

开启监听进程,输入python -m visdom.server

visdom实际上是一个web服务器,开启后,程序向该服务器发送数据,服务器就会把数据渲染到网页上。运行前需要先开启该web服务器。

遇到问题

- pip unstall visdom

- 从官方网页上下载最新的代码,进入下载好的文件内,pip install -e .

- 退回去,开启监听进程

- 复制地址,打开浏览器

功能

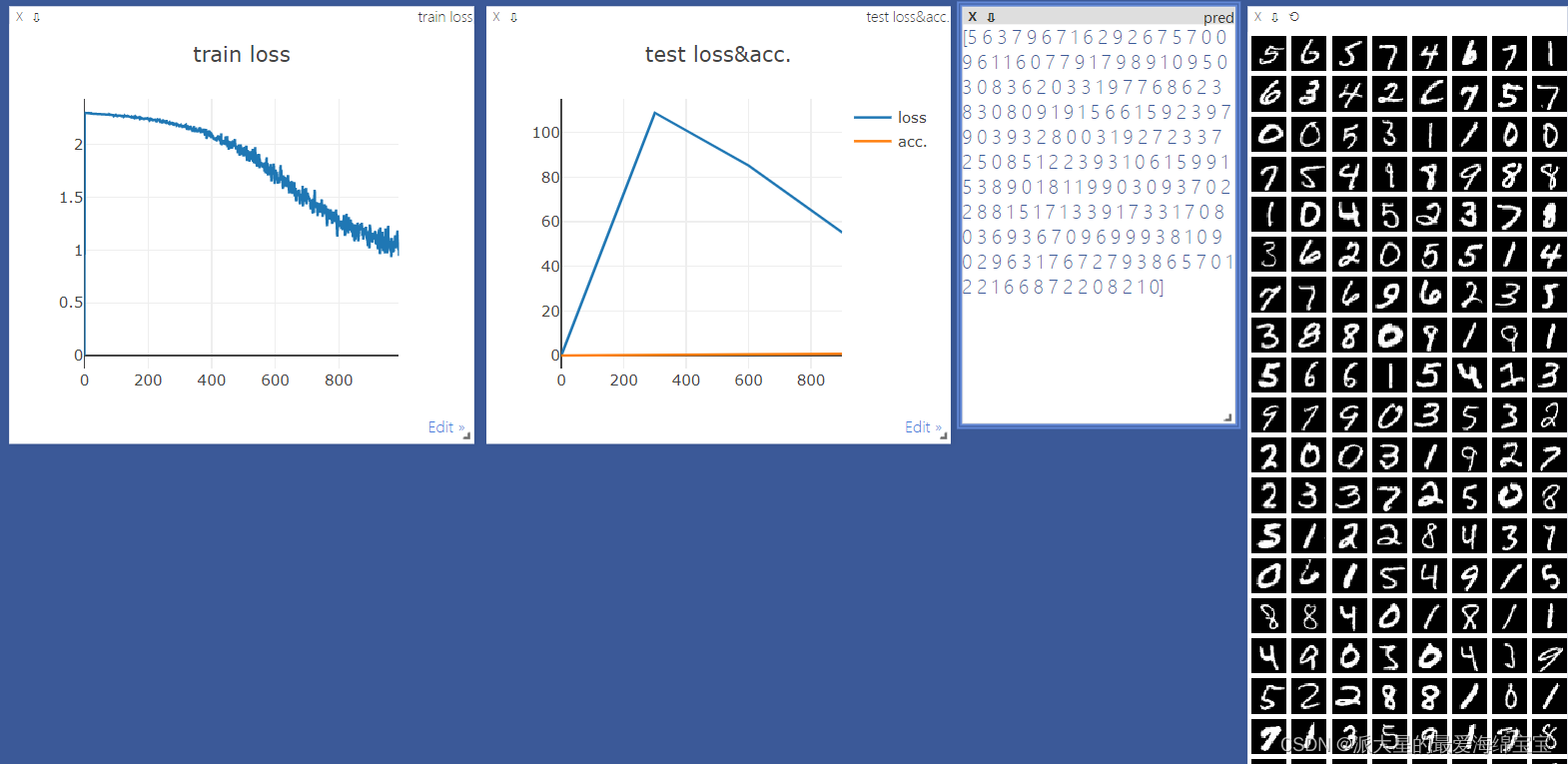

lines:single trace

画一个曲线

首先创建一条直线,有x,y,且赋初始值,win可以理解为id,大窗口是一个environment。把窗口名字命名为train_loss,且只有一个点(0,0)

from visdom import Visdom

viz=Visdom()

viz.line([0.],[0.],win='train_loss',opts=dict(title='train loss'))

viz.line([loss.item()],[global_step],win='train_loss',update='append')

global_step是x作表,image可以直接接受tensor,对于直线类型,接受的是numpy数据,指定为append,添加到当前直线后面,如果不指定,会全部覆盖掉。

lines:single trace

多条曲线

两条直线,我们需要两个y。

from visdom import Visdom

viz=Visdom()

viz.line([[0.0,0.0]],[0.],win='test',opts=dict(title='test loss & acc',legend=['loss','acc']))

viz.line([[test_loss,correct/len(test_loader.dataset)]],

[global_step],win='test',update='append')

完整代码

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torchvision import datasets, transforms

from visdom import Visdom

batch_size=200

learning_rate=0.01

epochs=10

train_loader = torch.utils.data.DataLoader(

datasets.MNIST('mnist_data/', train=True, download=True,

transform=transforms.Compose([

transforms.ToTensor(),

# transforms.Normalize((0.1307,), (0.3081,))

])),

batch_size=batch_size, shuffle=True)

test_loader = torch.utils.data.DataLoader(

datasets.MNIST('mnist_data/', train=False, transform=transforms.Compose([

transforms.ToTensor(),

# transforms.Normalize((0.1307,), (0.3081,))

])),

batch_size=batch_size, shuffle=True)

class MLP(nn.Module):

def __init__(self):

super(MLP, self).__init__()

self.model = nn.Sequential(

nn.Linear(784, 200),

nn.LeakyReLU(inplace=True),

nn.Linear(200, 200),

nn.LeakyReLU(inplace=True),

nn.Linear(200, 10),

nn.LeakyReLU(inplace=True),

)

def forward(self, x):

x = self.model(x)

return x

device = torch.device('cuda:0')

net = MLP().to(device)

optimizer = optim.SGD(net.parameters(), lr=learning_rate)

criteon = nn.CrossEntropyLoss().to(device)

viz=Visdom()

viz.line([0.],[0.],win='train_loss',opts=dict(title='train loss'))

viz.line([[0.0,0.0]],[0.],win='test',opts=dict(title='test loss & acc',legend=['loss','acc']))

global_step=0

for epoch in range(epochs):

for batch_idx, (data, target) in enumerate(train_loader):

data = data.view(-1, 28*28)

data, target = data.to(device), target.cuda()

logits = net(data)

loss = criteon(logits, target)

optimizer.zero_grad()

loss.backward()

# print(w1.grad.norm(), w2.grad.norm())

optimizer.step()

global_step+=1

viz.line([loss.item()],[global_step],win='train_loss',update='append')

if batch_idx % 100 == 0:

print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(

epoch, batch_idx * len(data), len(train_loader.dataset),

100. * batch_idx / len(train_loader), loss.item()))

test_loss = 0

correct = 0

for data, target in test_loader:

data = data.view(-1, 28 * 28)

data, target = data.to(device), target.cuda()

logits = net(data)

test_loss += criteon(logits, target).item()

pred = logits.argmax(dim=1)

correct += pred.eq(target).sum().float().item()

viz.line([[test_loss,correct/len(test_loader.dataset)]],

[global_step],win='test',update='append')

viz.images(data.view(-1, 1, 28, 28), win='x')

viz.text(str(pred.detach().cpu().numpy()),win='pred',

opts=dict(title='pred'))

test_loss /= len(test_loader.dataset)

print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(

test_loss, correct, len(test_loader.dataset),

100. * correct / len(test_loader.dataset)))

801

801

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?