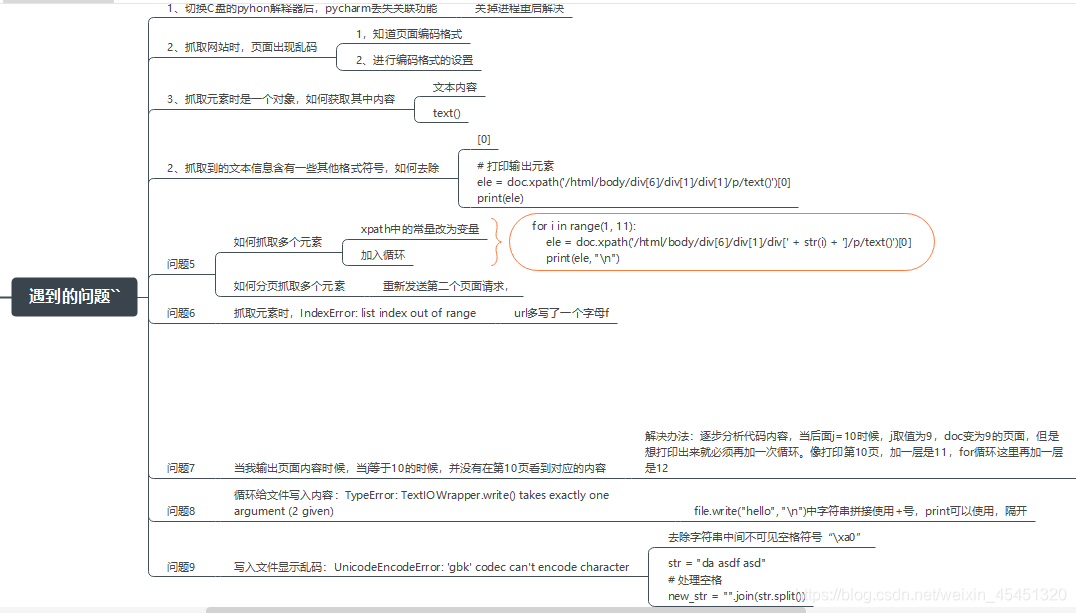

爬虫过程问题汇总:

代码:

import requests

from lxml import etree

# 爬取51网页代码

class GisMap():

# 定义属性

def __init__(self):

self.url = "http://www.51testing.com/html/90/category-catid-90.html"

# 爬虫抓取页面

def spider_page(self):

response = requests.get(self.url)

response.encoding = 'gbk'

self.doc = etree.HTML(response.text)

# 抓取元素并保存文件

def splider_save_element(self):

# 创建文件

file = open("test.txt", "w")

# 定位元素并存入文件

for j in range(2, 12):

for i in range(1, 11):

ele = self.doc.xpath('/html/body/div[6]/div[1]/div[' + str(i) + ']/p/text()')[0] # 每一页第一个元素的位置都是一样的

els = ''.join(ele.split())

file.write(els + "\n")

response = requests.get(f"http://www.51testing.com/html/90/category-catid-90-page-{j}.html")

response.encoding = "gbk"

self.doc = etree.HTML(response.text)

# 关闭文件

file.close()

if __name__ == '__main__':

# 实例化对象

pachong = GisMap()

# 调用类方法

pachong.spider_page()

pachong.splider_save_element()

1913

1913

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?