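First, buy a Linux (CentOS) server on Alibaba Cloud and connect to it with Xshell, using the server's IP address, account, and password.

Use Xftp to upload the downloaded JDK and Hadoop archives to the /software directory on the remote server.

Run the following commands to extract the JDK and Hadoop archives:

JDK: tar -zxvf jdk-8u162-linux-x64.tar.gz -C /usr/local/java/

Hadoop: tar -zxvf hadoop-2.7.1.tar.gz -C /usr/local/hadoop/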
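Note that tar -C does not create the target directory, so the two commands above fail if /usr/local/java/ or /usr/local/hadoop/ does not exist yet. A minimal sketch of a wrapper that creates the directory first; the function name extract_to is hypothetical and not part of the original steps:

```shell
# Hypothetical helper (not from the original post):
# extract a .tar.gz archive ($1) into a target directory ($2),
# creating the directory first because tar -C will not create it.
extract_to() {
    archive="$1"
    dest="$2"
    mkdir -p "$dest" && tar -zxf "$archive" -C "$dest"
}
```

It could be called as extract_to /software/jdk-8u162-linux-x64.tar.gz /usr/local/java/ and likewise for the Hadoop archive.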

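Check the JDK and Hadoop versions:

JDK: java -version (if java is not on PATH yet, run /usr/local/java/jdk1.8.0_162/bin/java -version instead)

Hadoop: from the Hadoop directory, run ./bin/hadoop version

Configure the environment variables by editing /etc/profile with vim and adding: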

export JAVA_HOME=/usr/local/java/jdk1.8.0_162

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export HADOOP_HOME=/usr/local/hadoop/hadoop-2.7.1

export PATH=.:${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

After saving, run source /etc/profile so the variables take effect in the current shell.
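Once the updated /etc/profile has been loaded, one way to confirm that the JDK and Hadoop directories actually ended up on PATH is a small check like the one below. This is only a sketch; path_contains is a hypothetical helper name, not something provided by Hadoop or the JDK:

```shell
# Hypothetical helper (not from the original post):
# succeed if directory $1 is an entry of the colon-separated list $2.
# When $2 is omitted, the current PATH is checked.
path_contains() {
    case ":${2:-$PATH}:" in
        *":$1:"*) return 0 ;;
        *) return 1 ;;
    esac
}
```

For example, path_contains "$HADOOP_HOME/bin" && echo "hadoop bin is on PATH" prints the message only when the entry is present.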

Test Hadoop

cd /usr/local/hadoop/hadoop-2.7.1/

mkdir ./input

cp ./etc/hadoop/*.xml ./input

bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.1.jar grep input output 'dfs[a-z.]+'

cat ./output/*
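The example job searches the copied XML files for strings matching dfs[a-z.]+ and counts how often each distinct match occurs. As a rough local cross-check of what to expect in ./output, the same counting can be approximated with plain grep. This is a sketch of the idea, not how Hadoop runs the job; count_matches is a hypothetical name, and it assumes a grep that supports -o and -h (as GNU grep on CentOS does):

```shell
# Hypothetical helper (not from the original post):
# count each distinct match of an extended regex ($1) in the given files,
# most frequent first.
count_matches() {
    pattern="$1"
    shift
    # -o prints only the matched text, -h omits file names, -E enables ERE
    grep -ohE "$pattern" "$@" | sort | uniq -c | sort -rn
}
```

For example: count_matches 'dfs[a-z.]+' ./input/*.xml. Note also that Hadoop refuses to write into an existing output directory, so ./output has to be removed before the job is run again.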

Pseudo-distributed configuration

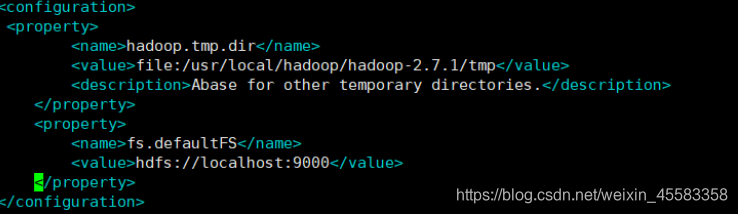

core-site.xml:

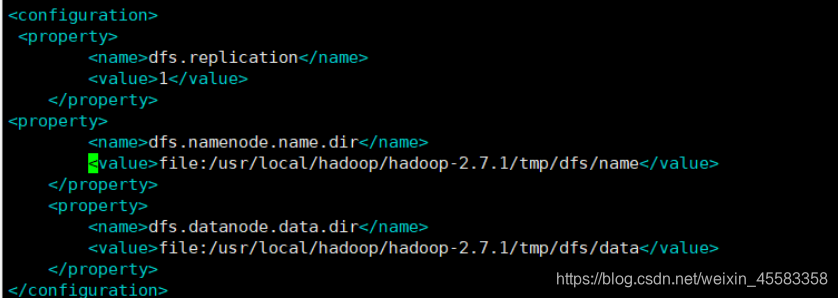

hdfs-site.xml:
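The settings themselves appear only in the screenshots, so their exact contents cannot be quoted here. As a stand-in, the sketch below writes the two properties that the official Hadoop single-node guide uses for pseudo-distributed mode (fs.defaultFS set to hdfs://localhost:9000 and dfs.replication set to 1). These values are an assumption and may differ from the screenshots; write_pseudo_conf is a hypothetical helper name:

```shell
# Hypothetical helper (not from the original post):
# write minimal pseudo-distributed configs into the directory given as $1.
# The property values are ASSUMED from the official single-node guide.
# Warning: this overwrites any existing core-site.xml and hdfs-site.xml there.
write_pseudo_conf() {
    conf_dir="$1"
    cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://localhost:9000</value>
    </property>
</configuration>
EOF
    cat > "$conf_dir/hdfs-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
</configuration>
EOF
}
```

With the layout used in this post, the target directory would be /usr/local/hadoop/hadoop-2.7.1/etc/hadoop.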

Format the NameNode: ./bin/hdfs namenode -format

Then start the NameNode and DataNode daemons:

./sbin/start-dfs.sh

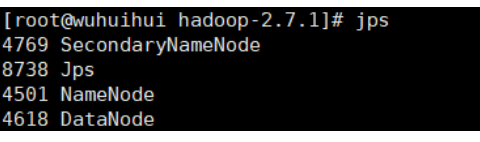

Run jps to check whether the daemons started successfully.
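After start-dfs.sh, the jps listing should include NameNode, DataNode, and SecondaryNameNode. A small sketch that checks for these names automatically; check_daemons is a hypothetical helper that reads jps output from standard input:

```shell
# Hypothetical helper (not from the original post):
# read jps output on stdin and verify that the HDFS daemons are listed.
check_daemons() {
    out=$(cat)
    for name in NameNode DataNode SecondaryNameNode; do
        # -w matches whole words, so "SecondaryNameNode" does not
        # count as a match for "NameNode"
        if ! printf '%s\n' "$out" | grep -qw "$name"; then
            echo "missing: $name"
            return 1
        fi
    done
    echo "all daemons running"
}
```

It would be used as jps | check_daemons. If a daemon is missing, the logs directory under the Hadoop installation is the usual place to look for the reason.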
