Overview

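Apache Sqoop™ is a tool for efficiently transferring bulk data between Apache Hadoop and structured data stores such as relational databases. It uses embedded MapReduce jobs to import and export data between relational databases and HDFS, HBase, Hive, and similar stores.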

Installation

1. Visit the Sqoop website at http://sqoop.apache.org/ and download the version you need. This example uses 1.4.7, available at https://mirrors.tuna.tsinghua.edu.cn/apache/sqoop/1.4.7/sqoop-1.4.7.bin__hadoop-2.6.0.tar.gz. After downloading the package, unpack Sqoop:

[root@CentOS ~]# tar -zxf sqoop-1.4.7.bin__hadoop-2.6.0.tar.gz -C /usr/

[root@CentOS ~]# cd /usr/

[root@CentOS usr]# mv sqoop-1.4.7.bin__hadoop-2.6.0 sqoop-1.4.7

[root@CentOS usr]# cd /usr/sqoop-1.4.7/

2. Configure the SQOOP_HOME environment variable

[root@CentOS sqoop-1.4.7]# vi ~/.bashrc

JAVA_HOME=/usr/java/latest

HADOOP_HOME=/usr/hadoop-2.9.2

HBASE_HOME=/usr/hbase-1.2.4

HIVE_HOME=/usr/apache-hive-1.2.2-bin

SQOOP_HOME=/usr/sqoop-1.4.7

ZOOKEEPER_HOME=/usr/zookeeper-3.4.6

PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HBASE_HOME/bin:$HIVE_HOME/bin:$SQOOP_HOME/bin:$ZOOKEEPER_HOME/bin

CLASSPATH=.

export JAVA_HOME

export HADOOP_HOME

export HBASE_HOME

export HIVE_HOME

export PATH

export CLASSPATH

export SQOOP_HOME

export ZOOKEEPER_HOME

[root@CentOS sqoop-1.4.7]# source ~/.bashrc
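
After sourcing ~/.bashrc it can be useful to confirm that each *_HOME variable really points at an installed directory. The following is a minimal sketch, not part of Sqoop; the helper name check_home is an assumption made for illustration.

```shell
# check_home NAME: succeed only if the variable called NAME is set
# and names an existing directory. (check_home is a hypothetical helper.)
check_home() {
  name="$1"
  # Indirect lookup of the variable whose name is stored in $name.
  eval "value=\${$name:-}"
  if [ -z "$value" ]; then
    echo "$name is not set" >&2
    return 1
  fi
  if [ ! -d "$value" ]; then
    echo "$name points to a missing directory: $value" >&2
    return 1
  fi
  echo "$name=$value"
}
```

For example, running check_home SQOOP_HOME and check_home HADOOP_HOME after the source command reports any variable that is unset or points at a directory that does not exist.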

3. Rename the sqoop-env-template.sh file under conf to sqoop-env.sh and edit it

[root@CentOS sqoop-1.4.7]# mv conf/sqoop-env-template.sh conf/sqoop-env.sh

[root@CentOS sqoop-1.4.7]# vi conf/sqoop-env.sh

#Set path to where bin/hadoop is available

export HADOOP_COMMON_HOME=/usr/hadoop-2.9.2

#Set path to where hadoop-*-core.jar is available

export HADOOP_MAPRED_HOME=/usr/hadoop-2.9.2

#set the path to where bin/hbase is available

export HBASE_HOME=/usr/hbase-1.2.4

#Set the path to where bin/hive is available

export HIVE_HOME=/usr/apache-hive-1.2.2-bin

#Set the path for where zookeper config dir is

export ZOOCFGDIR=/usr/zookeeper-3.4.6/conf

4. Copy the MySQL driver jar into Sqoop's lib directory

[root@CentOS ~]# cp /usr/apache-hive-1.2.2-bin/lib/mysql-connector-java-5.1.48.jar /usr/sqoop-1.4.7/lib/
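
If the driver jar is missing, Sqoop cannot load com.mysql.jdbc.Driver, so a quick check of the lib directory can save a failed job. This is a small sketch under the assumption that the jar follows the mysql-connector-java-*.jar naming used above; find_mysql_driver is a hypothetical helper name.

```shell
# find_mysql_driver LIB_DIR: print the first MySQL connector jar in LIB_DIR,
# or fail with a message if none is present. (Hypothetical helper.)
find_mysql_driver() {
  for jar in "$1"/mysql-connector-java-*.jar; do
    # When the glob matches nothing it stays literal, so test for a real file.
    if [ -f "$jar" ]; then
      echo "$jar"
      return 0
    fi
  done
  echo "no mysql-connector-java jar in $1" >&2
  return 1
}
```

With the layout used in this article the call would be find_mysql_driver /usr/sqoop-1.4.7/lib.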

5. Verify that Sqoop is installed correctly

[root@CentOS sqoop-1.4.7]# sqoop version

Warning: /usr/sqoop-1.4.7/../hbase does not exist! HBase imports will fail.

Please set $HBASE_HOME to the root of your HBase installation.

Warning: /usr/sqoop-1.4.7/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /usr/sqoop-1.4.7/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /usr/sqoop-1.4.7/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

19/12/22 08:40:12 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7

Sqoop 1.4.7

git commit id 2328971411f57f0cb683dfb79d19d4d19d185dd8

Compiled by maugli on Thu Dec 21 15:59:58 STD 2017

[root@CentOS sqoop-1.4.7]# sqoop list-tables --connect jdbc:mysql://192.168.52.1:3306/mysql --username root --password root
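
Passing --password on the command line leaves the password in the shell history and process list. Sqoop also accepts a --password-file option; the sketch below prepares such a file. The helper name write_password_file and the file location are assumptions, and the sqoop invocation is shown only as a comment.

```shell
# write_password_file PATH PASSWORD: store the password without a trailing
# newline and make the file readable by its owner only. (Hypothetical helper.)
write_password_file() {
  # printf '%s' avoids the newline that echo would append; a stray newline
  # may otherwise be read as part of the password.
  ( umask 077 && printf '%s' "$2" > "$1" ) && chmod 400 "$1"
}

# Possible usage (the path is a placeholder):
#   write_password_file "$HOME/.sqoop.pwd" 'root'
#   sqoop list-tables --connect jdbc:mysql://192.168.52.1:3306/mysql \
#     --username root --password-file "file://$HOME/.sqoop.pwd"
```

The file:// prefix is used here on the assumption that the password file lives on the local file system rather than on HDFS.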

Import & Export

Reference:

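The import tool imports a single table from an RDBMS into HDFS. Each row of the table is represented as a separate record in HDFS. Records can be stored as text files (one record per line) or in binary form as Avro or SequenceFiles.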
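
Each of the storage formats mentioned above has a corresponding sqoop import option. The sketch below maps a short format name to that option; the helper name storage_flag and the short names text, avro, and sequence are assumptions made for illustration.

```shell
# storage_flag FORMAT: print the sqoop import option for a storage format.
# (storage_flag and its FORMAT names are hypothetical; the options are Sqoop's.)
storage_flag() {
  case "$1" in
    text)     echo "--as-textfile" ;;       # one record per line (the default)
    avro)     echo "--as-avrodatafile" ;;   # binary Avro data files
    sequence) echo "--as-sequencefile" ;;   # binary SequenceFiles
    *)        echo "unknown format: $1" >&2; return 1 ;;
  esac
}
```

An import command could then append $(storage_flag avro) to its argument list to request Avro output instead of text.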

RDBMS–>HDFS

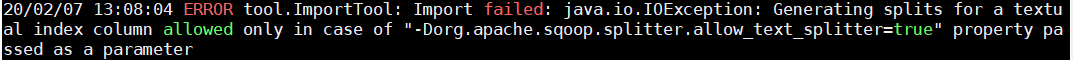

If the import fails because the split column is a text type, add the -Dorg.apache.sqoop.splitter.allow_text_splitter=true parameter.

Full-table import

sqoop import \

-Dorg.apache.sqoop.splitter.allow_text_splitter=true \

--driver com.mysql.jdbc.Driver \

--connect jdbc:mysql://train:3306/file?characterEncoding=UTF-8 \

--username root \

--password root \

--table file \

--num-mappers 4 \

--fields-terminated-by '\t' \

--target-dir /mysql/test/t_file \

--delete-target-dir

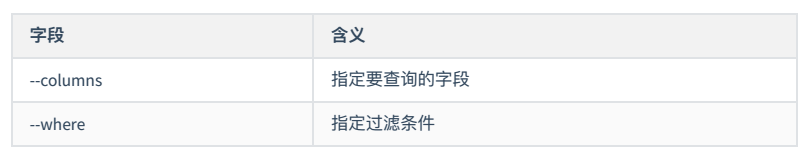

Importing selected columns

sqoop import \

-Dorg.apache.sqoop.splitter.allow_text_splitter=true \

--driver com.mysql.jdbc.Driver \

--connect jdbc:mysql://train:3306/file?characterEncoding=UTF-8 \

--username root \

--password root \

--table file \

--columns id,oldname,newname \

--target-dir /mysql/test/t_file02 \

--delete-target-dir \

--num-mappers 4 \

--fields-terminated-by '\t'

Importing a query result

sqoop import \

-Dorg.apache.sqoop.splitter.allow_text_splitter=true \

--driver com.mysql.jdbc.Driver \

--connect jdbc:mysql://train:3306/file \

--username root \

--password root \

--num-mappers 3 \

--fields-terminated-by '\t' \

--query 'select id, oldname, newname from file where $CONDITIONS LIMIT 100 ' \

--split-by id \

--target-dir /mysql/test/t_file03 \

--delete-target-dir

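To import the results of a query in parallel, each map task must run its own copy of the query, with the results partitioned by bounding conditions that Sqoop infers. The query must contain the token $CONDITIONS, which each Sqoop process replaces with a unique condition expression, and you must also choose a splitting column with --split-by. Because every map task runs its own copy of the query, a clause such as LIMIT 100 applies to each task separately rather than to the combined result.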
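
To make the $CONDITIONS substitution concrete, the sketch below prints the query that each map task might run. It is a simplified illustration and not Sqoop's actual splitter: the even integer ranges, the inclusive bounds, and the helper name print_split_queries are all assumptions.

```shell
# print_split_queries QUERY COLUMN MIN MAX TASKS
# Print one query per map task, replacing the first $CONDITIONS token with a
# range over COLUMN. (Hypothetical helper; the range layout is an assumption.)
print_split_queries() {
  query="$1"; col="$2"; min="$3"; max="$4"; tasks="$5"
  case "$query" in
    *\$CONDITIONS*) ;;
    *) echo "query must contain \$CONDITIONS" >&2; return 1 ;;
  esac
  prefix=${query%%\$CONDITIONS*}   # text before the token
  suffix=${query#*\$CONDITIONS}    # text after the token
  # Rows per task: ceiling of (max - min + 1) / tasks.
  size=$(( (max - min + tasks) / tasks ))
  lo=$min
  while [ "$lo" -le "$max" ]; do
    hi=$(( lo + size - 1 ))
    if [ "$hi" -gt "$max" ]; then hi=$max; fi
    printf '%s( %s >= %s ) AND ( %s <= %s )%s\n' \
      "$prefix" "$col" "$lo" "$col" "$hi" "$suffix"
    lo=$(( hi + 1 ))
  done
}
```

Calling print_split_queries 'select id from file where $CONDITIONS' id 1 9 3 prints three queries covering ids 1 to 3, 4 to 6, and 7 to 9, which is the kind of partitioning the paragraph above describes.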

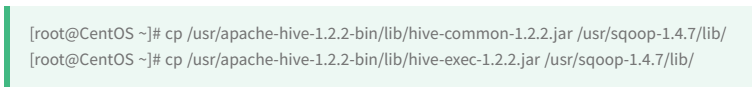

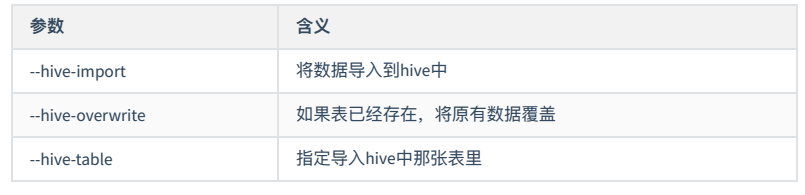

RDBMS–>Hive

Full import

sqoop import \

-Dorg.apache.sqoop.splitter.allow_text_splitter=true \

--connect jdbc:mysql://train:3306/file \

--username root \

--password root \

--table user \

--num-mappers 3 \

--hive-import \

--fields-terminated-by "\t" \

--hive-overwrite \

--hive-table baizhi.t_user

Importing into a partition

sqoop import \

-Dorg.apache.sqoop.splitter.allow_text_splitter=true \

--connect jdbc:mysql://train:3306/test \

--username root \

--password root \

--table t_user \

--num-mappers 3 \

--hive-import \

--fields-terminated-by "\t" \

--hive-overwrite \

--hive-table baizhi.t_user \

--hive-partition-key city \

--hive-partition-value 'bj'

RDBMS–>HBase

sqoop import \

-Dorg.apache.sqoop.splitter.allow_text_splitter=true \

--connect jdbc:mysql://train:3306/test \

--username root \

--password root \

--table t_user \

--num-mappers 3 \

--hbase-table baizhi:t_user1 \

--column-family cf1 \

--hbase-create-table \

--hbase-row-key id \

--hbase-bulkload

sqoop-export

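The export tool exports a set of files from HDFS back to an RDBMS. The target table must already exist in the database. The input files are read and parsed into a set of records according to the user-specified delimiters.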

HDFS–>MySQL

Test data (fields are tab-separated, matching --input-fields-terminated-by '\t')

0 zhangsan true 20 2020-01-11

1 lisi false 25 2020-01-10

3 wangwu true 30 2020-01-17

4 zhaoliu false 50 1990-02-08

5 win7 true 20 1991-02-08
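
Because the export below splits fields on tab characters, the input file needs real tabs rather than spaces. The sketch writes a few of the sample rows with printf so the separators are unambiguous; the helper name write_user_rows and the local file path are assumptions.

```shell
# write_user_rows FILE: write sample rows with real tab separators.
# (Hypothetical helper; only a few of the sample rows are included.)
write_user_rows() {
  {
    printf '%s\t%s\t%s\t%s\t%s\n' 0 zhangsan true 20 2020-01-11
    printf '%s\t%s\t%s\t%s\t%s\n' 1 lisi false 25 2020-01-10
    printf '%s\t%s\t%s\t%s\t%s\n' 3 wangwu true 30 2020-01-17
  } > "$1"
}

# Possible follow-up on a machine with an HDFS client (paths are placeholders
# apart from /demo/src, the export directory used in this article):
#   write_user_rows /tmp/t_user.txt
#   hdfs dfs -mkdir -p /demo/src
#   hdfs dfs -put -f /tmp/t_user.txt /demo/src/
```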

The target table must already exist in MySQL:

create table t_user(

id int primary key auto_increment,

name VARCHAR(32),

sex boolean,

age int,

birthDay date

) CHARACTER SET=utf8;

sqoop-export \

--connect jdbc:mysql://train:3306/test \

--username root \

--password root \

--table t_user \

--update-key id \

--update-mode allowinsert \

--export-dir /demo/src \

--input-fields-terminated-by '\t'

HBASE–>RDBMS

HBASE–>HIVE

HIVE–>RDBMS is equivalent to HDFS–>RDBMS

1. Prepare the test data t_employee

7369,SMITH,CLERK,7902,1980-12-17 00:00:00,800,\N,20

7499,ALLEN,SALESMAN,7698,1981-02-20 00:00:00,1600,300,30

7521,WARD,SALESMAN,7698,1981-02-22 00:00:00,1250,500,30

7566,JONES,MANAGER,7839,1981-04-02 00:00:00,2975,\N,20

7654,MARTIN,SALESMAN,7698,1981-09-28 00:00:00,1250,1400,30

7698,BLAKE,MANAGER,7839,1981-05-01 00:00:00,2850,\N,30

7782,CLARK,MANAGER,7839,1981-06-09 00:00:00,2450,\N,10

7788,SCOTT,ANALYST,7566,1987-04-19 00:00:00,1500,\N,20

7839,KING,PRESIDENT,\N,1981-11-17 00:00:00,5000,\N,10

7844,TURNER,SALESMAN,7698,1981-09-08 00:00:00,1500,0,30

7876,ADAMS,CLERK,7788,1987-05-23 00:00:00,1100,\N,20

7900,JAMES,CLERK,7698,1981-12-03 00:00:00,950,\N,30

7902,FORD,ANALYST,7566,1981-12-03 00:00:00,3000,\N,20

7934,MILLER,CLERK,7782,1982-01-23 00:00:00,1300,\N,10

create database if not exists baizhi;

use baizhi;

drop table if exists t_employee;

CREATE TABLE t_employee(

empno INT,

ename STRING,

job STRING,

mgr INT,

hiredate TIMESTAMP,

sal DECIMAL(7,2),

comm DECIMAL(7,2),

deptno INT)

row format delimited

fields terminated by ','

collection items terminated by '|'

map keys terminated by '>'

lines terminated by '\n'

stored as textfile;

load data local inpath '/root/test/t_employee' overwrite into table t_employee;

drop table if exists t_employee_hbase;

create external table t_employee_hbase(empno INT,

ename STRING,

job STRING,

mgr INT,

hiredate TIMESTAMP,

sal DECIMAL(7,2),

comm DECIMAL(7,2),

deptno INT)

STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

WITH SERDEPROPERTIES("hbase.columns.mapping" = ":key,cf1:name,cf1:job,cf1:mgr,cf1:hiredate,cf1:sal,cf1:comm,cf1:deptno")

TBLPROPERTIES("hbase.table.name" = "baizhi:t_employee");

insert overwrite table t_employee_hbase select empno,ename,job,mgr,hiredate,sal,comm,deptno from t_employee;

2. Export the HBase data to HDFS

use baizhi;

INSERT OVERWRITE DIRECTORY '/demo/src/employee' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' STORED AS TEXTFILE select * from t_employee_hbase;

3. Export the data from HDFS to the RDBMS

sqoop-export \

--connect jdbc:mysql://train:3306/test \

--username root \

--password root \

--table t_employee \

--update-key id \

--update-mode allowinsert \

--export-dir /demo/src/employee \

--input-fields-terminated-by ',' \

--input-null-string '\\N' \

--input-null-non-string '\\N';
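
The two --input-null options in the command above tell Sqoop which text in the input should be treated as NULL, here the \N marker that appears in the employee data. The sketch below lists which comma-separated fields of a line carry that marker; it only illustrates the data and is not how Sqoop parses it. The helper name null_fields is an assumption.

```shell
# null_fields LINE: print the 1-based positions of comma-separated fields
# that contain exactly the \N marker. (Hypothetical helper.)
null_fields() {
  printf '%s\n' "$1" | awk -F',' '{
    out = ""
    for (i = 1; i <= NF; i++)
      if ($i == "\\N")                       # "\\N" is a backslash followed by N
        out = out (out == "" ? "" : " ") i
    print out
  }'
}
```

For the KING row of the test data, null_fields reports positions 4 and 7, the mgr and comm columns, which would be written to the database as NULL.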
