Activation functions

import torch

a = torch.linspace(-100,100,10)

a

torch.sigmoid(a)  # sigmoid activation: squashes every input into the range 0 to 1
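A quick way to see why such a wide input range is interesting: sigmoid saturates for large |a|, so its local derivative s * (1 - s) becomes tiny there. The sketch below reuses the same `linspace` call; the names `s` and `local_grad` are only illustrative.

```python
import torch

# Same input range as above: 10 evenly spaced points from -100 to 100.
a = torch.linspace(-100, 100, 10)
s = torch.sigmoid(a)

# Every output stays between 0 and 1; float32 may round the extremes to exactly 0 or 1.
print(s.min().item(), s.max().item())

# The derivative of sigmoid is s * (1 - s), which vanishes where the output saturates.
local_grad = s * (1 - s)
print(local_grad.max().item())
```

None of these ten points lies near 0, so every local derivative here is very small. This is the saturation behaviour behind vanishing gradients.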

a=torch.linspace(-1,1,10)

torch.tanh(a)

torch.relu(a)

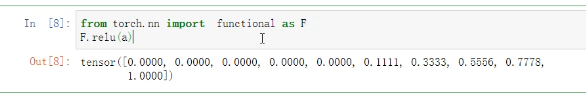

from torch.nn import functional as F

F.relu(a)
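To make the comparison concrete, here is a small sketch checking that `F.relu` and `torch.relu` give the same result on this input, and that tanh stays strictly inside (-1, 1). The variable names `t`, `r` and `same` are just for illustration.

```python
import torch
from torch.nn import functional as F

a = torch.linspace(-1, 1, 10)

t = torch.tanh(a)   # outputs lie strictly between -1 and 1
r = torch.relu(a)   # negative inputs become 0, positive inputs pass through unchanged

# The functional form computes the same values as the torch-level function.
same = torch.equal(F.relu(a), r)
print(t)
print(r)
print(same)
```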

Loss

import torch

x=torch.ones(1)

x

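w=torch.full([1],2.)  # float fill value: recent PyTorch infers an integer dtype from 2, and integer tensors cannot require grad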

w

from torch.nn import functional as F

mse =F.mse_loss(torch.ones(1),x*w)

mse

torch.autograd.grad(mse,[w])  # raises RuntimeError: mse does not require grad and has no grad_fn

How do we fix this? Mark w as requiring gradients, then recompute the loss so the graph is rebuilt:

w.requires_grad_()

Result:

mse= F.mse_loss(torch.ones(1),x*w)

torch.autograd.grad(mse,[w])
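As a sanity check, the gradient can be compared with the hand-derived value. With x = 1 and w = 2, the loss is (1 - x*w)^2 = 1 and its derivative with respect to w is 2 * (x*w - 1) * x = 2. The sketch below assumes a float `w` created with `requires_grad=True`; `pred`, `grad_w` and `expected` are illustrative names.

```python
import torch
from torch.nn import functional as F

x = torch.ones(1)
w = torch.full([1], 2.0, requires_grad=True)  # float dtype, tracked by autograd

pred = x * w
mse = F.mse_loss(torch.ones(1), pred)  # mean((1 - x*w)^2)

# autograd.grad returns a tuple with one gradient per requested input.
(grad_w,) = torch.autograd.grad(mse, [w])

# Analytic derivative: d/dw (1 - x*w)^2 = 2 * (x*w - 1) * x
expected = 2 * (x * w.detach() - 1) * x
print(mse.item(), grad_w, expected)
```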

mse=F.mse_loss(torch.ones(1),x*w)

mse

x=torch.ones(1)

w=torch.full([1],2.)  # re-created as a float tensor; requires_grad is False again

mse=F.mse_loss(torch.ones(1),x*w)

mse

torch.autograd.grad(mse,[w])  # still raises an error: the new w does not require grad

w.requires_grad_()

mse=F.mse_loss(torch.ones(1),x*w)

mse.backward()

w.grad  # backward() runs automatic differentiation; each parameter's gradient is stored in its .grad attribute
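One detail worth knowing about `.grad`: repeated `backward()` calls add to it rather than overwrite it. A minimal sketch, assuming the same x = 1, w = 2 setup (`first` and `second` are illustrative names):

```python
import torch
from torch.nn import functional as F

x = torch.ones(1)
w = torch.full([1], 2.0, requires_grad=True)

F.mse_loss(torch.ones(1), x * w).backward()
first = w.grad.clone()    # gradient after one backward pass

F.mse_loss(torch.ones(1), x * w).backward()
second = w.grad.clone()   # the second pass was added to the first, not substituted

w.grad.zero_()            # reset the accumulated gradient before the next step
print(first, second, w.grad)
```

This is why training loops usually zero the gradients once per iteration.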
