模型简介

SSD,全称Single Shot MultiBox Detector,是Wei Liu在ECCV 2016上提出的一种目标检测算法。使用Nvidia Titan X在VOC 2007测试集上,SSD对于输入尺寸300x300的网络,达到74.3%mAP(mean Average Precision)以及59FPS;对于512x512的网络,达到了76.9%mAP ,超越当时最强的Faster RCNN(73.2%mAP)。具体可参考论文[1]。

SSD目标检测主流算法分成可以两个类型:

-

two-stage方法:RCNN系列

通过算法产生候选框,然后再对这些候选框进行分类和回归。

-

one-stage方法:YOLO和SSD

直接通过主干网络给出类别位置信息,不需要区域生成。

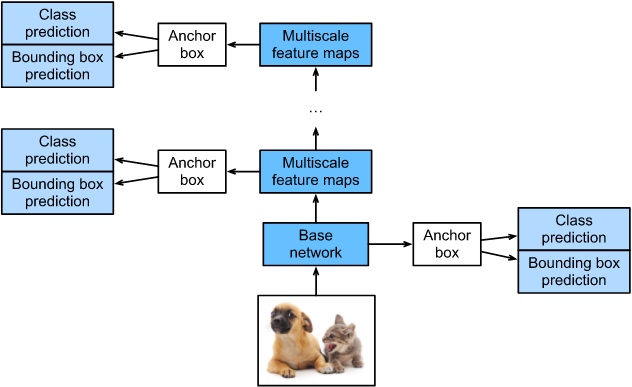

SSD是单阶段的目标检测算法,通过卷积神经网络进行特征提取,取不同的特征层进行检测输出,所以SSD是一种多尺度的检测方法。在需要检测的特征层,直接使用一个3 × \times × 3卷积,进行通道的变换。SSD采用了anchor的策略,预设不同长宽比例的anchor,每一个输出特征层基于anchor预测多个检测框(4或者6)。采用了多尺度检测方法,浅层用于检测小目标,深层用于检测大目标。SSD的框架如下图:

模型结构

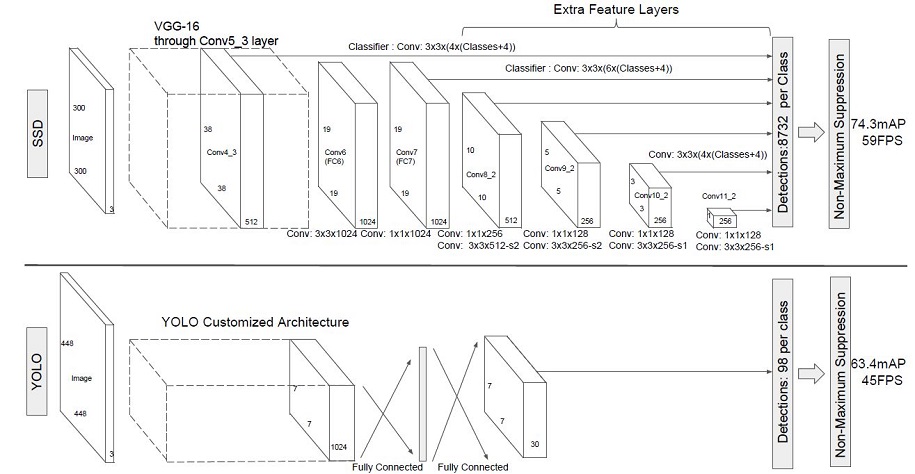

SSD采用VGG16作为基础模型,然后在VGG16的基础上新增了卷积层来获得更多的特征图以用于检测。SSD的网络结构如图所示。上面是SSD模型,下面是YOLO模型,可以明显看到SSD利用了多尺度的特征图做检测。

两种单阶段目标检测算法的比较:

SSD先通过卷积不断进行特征提取,在需要检测物体的网络,直接通过一个3

×

\times

× 3卷积得到输出,卷积的通道数由anchor数量和类别数量决定,具体为(anchor数量*(类别数量+4))。

SSD对比了YOLO系列目标检测方法,不同的是SSD通过卷积得到最后的边界框,而YOLO对最后的输出采用全连接的形式得到一维向量,对向量进行拆解得到最终的检测框。

模型特点

-

多尺度检测

在SSD的网络结构图中我们可以看到,SSD使用了多个特征层,特征层的尺寸分别是38 × \times × 38,19 × \times × 19,10 × \times × 10,5 × \times × 5,3 × \times × 3,1 × \times × 1,一共6种不同的特征图尺寸。大尺度特征图(较靠前的特征图)可以用来检测小物体,而小尺度特征图(较靠后的特征图)用来检测大物体。多尺度检测的方式,可以使得检测更加充分(SSD属于密集检测),更能检测出小目标。

-

采用卷积进行检测

与YOLO最后采用全连接层不同,SSD直接采用卷积对不同的特征图来进行提取检测结果。对于形状为m × \times × n × \times × p的特征图,只需要采用3 × \times × 3 × \times × p这样比较小的卷积核得到检测值。

-

预设anchor

在YOLOv1中,直接由网络预测目标的尺寸,这种方式使得预测框的长宽比和尺寸没有限制,难以训练。在SSD中,采用预设边界框,我们习惯称它为anchor(在SSD论文中叫default bounding boxes),预测框的尺寸在anchor的指导下进行微调。

数据采样

为了使模型对于各种输入对象大小和形状更加鲁棒,SSD算法每个训练图像通过以下选项之一随机采样:

-

使用整个原始输入图像

-

采样一个区域,使采样区域和原始图片最小的交并比重叠为0.1,0.3,0.5,0.7或0.9

-

随机采样一个区域

每个采样区域的大小为原始图像大小的[0.3,1],长宽比在1/2和2之间。如果真实标签框中心在采样区域内,则保留两者重叠部分作为新图片的真实标注框。在上述采样步骤之后,将每个采样区域大小调整为固定大小,并以0.5的概率水平翻转。

import cv2

import numpy as np

def _rand(a=0., b=1.):

return np.random.rand() * (b - a) + a

def intersect(box_a, box_b):

"""Compute the intersect of two sets of boxes."""

max_yx = np.minimum(box_a[:, 2:4], box_b[2:4])

min_yx = np.maximum(box_a[:, :2], box_b[:2])

inter = np.clip((max_yx - min_yx), a_min=0, a_max=np.inf)

return inter[:, 0] * inter[:, 1]

def jaccard_numpy(box_a, box_b):

"""Compute the jaccard overlap of two sets of boxes."""

inter = intersect(box_a, box_b)

area_a = ((box_a[:, 2] - box_a[:, 0]) *

(box_a[:, 3] - box_a[:, 1]))

area_b = ((box_b[2] - box_b[0]) *

(box_b[3] - box_b[1]))

union = area_a + area_b - inter

return inter / union

def random_sample_crop(image, boxes):

"""Crop images and boxes randomly."""

height, width, _ = image.shape

min_iou = np.random.choice([None, 0.1, 0.3, 0.5, 0.7, 0.9])

if min_iou is None:

return image, boxes

for _ in range(50):

image_t = image

w = _rand(0.3, 1.0) * width

h = _rand(0.3, 1.0) * height

# aspect ratio constraint b/t .5 & 2

if h / w < 0.5 or h / w > 2:

continue

left = _rand() * (width - w)

top = _rand() * (height - h)

rect = np.array([int(top), int(left), int(top + h), int(left + w)])

overlap = jaccard_numpy(boxes, rect)

# dropout some boxes

drop_mask = overlap > 0

if not drop_mask.any():

continue

if overlap[drop_mask].min() < min_iou and overlap[drop_mask].max() > (min_iou + 0.2):

continue

image_t = image_t[rect[0]:rect[2], rect[1]:rect[3], :]

centers = (boxes[:, :2] + boxes[:, 2:4]) / 2.0

m1 = (rect[0] < centers[:, 0]) * (rect[1] < centers[:, 1])

m2 = (rect[2] > centers[:, 0]) * (rect[3] > centers[:, 1])

# mask in that both m1 and m2 are true

mask = m1 * m2 * drop_mask

# have any valid boxes? try again if not

if not mask.any():

continue

# take only matching gt boxes

boxes_t = boxes[mask, :].copy()

boxes_t[:, :2] = np.maximum(boxes_t[:, :2], rect[:2])

boxes_t[:, :2] -= rect[:2]

boxes_t[:, 2:4] = np.minimum(boxes_t[:, 2:4], rect[2:4])

boxes_t[:, 2:4] -= rect[:2]

return image_t, boxes_t

return image, boxes

def ssd_bboxes_encode(boxes):

"""Labels anchors with ground truth inputs."""

def jaccard_with_anchors(bbox):

"""Compute jaccard score a box and the anchors."""

# Intersection bbox and volume.

ymin = np.maximum(y1, bbox[0])

xmin = np.maximum(x1, bbox[1])

ymax = np.minimum(y2, bbox[2])

xmax = np.minimum(x2, bbox[3])

w = np.maximum(xmax - xmin, 0.)

h = np.maximum(ymax - ymin, 0.)

# Volumes.

inter_vol = h * w

union_vol = vol_anchors + (bbox[2] - bbox[0]) * (bbox[3] - bbox[1]) - inter_vol

jaccard = inter_vol / union_vol

return np.squeeze(jaccard)

pre_scores = np.zeros((8732), dtype=np.float32)

t_boxes = np.zeros((8732, 4), dtype=np.float32)

t_label = np.zeros((8732), dtype=np.int64)

for bbox in boxes:

label = int(bbox[4])

scores = jaccard_with_anchors(bbox)

idx = np.argmax(scores)

scores[idx] = 2.0

mask = (scores > matching_threshold)

mask = mask & (scores > pre_scores)

pre_scores = np.maximum(pre_scores, scores * mask)

t_label = mask * label + (1 - mask) * t_label

for i in range(4):

t_boxes[:, i] = mask * bbox[i] + (1 - mask) * t_boxes[:, i]

index = np.nonzero(t_label)

# Transform to tlbr.

bboxes = np.zeros((8732, 4), dtype=np.float32)

bboxes[:, [0, 1]] = (t_boxes[:, [0, 1]] + t_boxes[:, [2, 3]]) / 2

bboxes[:, [2, 3]] = t_boxes[:, [2, 3]] - t_boxes[:, [0, 1]]

# Encode features.

bboxes_t = bboxes[index]

default_boxes_t = default_boxes[index]

bboxes_t[:, :2] = (bboxes_t[:, :2] - default_boxes_t[:, :2]) / (default_boxes_t[:, 2:] * 0.1)

tmp = np.maximum(bboxes_t[:, 2:4] / default_boxes_t[:, 2:4], 0.000001)

bboxes_t[:, 2:4] = np.log(tmp) / 0.2

bboxes[index] = bboxes_t

num_match = np.array([len(np.nonzero(t_label)[0])], dtype=np.int32)

return bboxes, t_label.astype(np.int32), num_match

def preprocess_fn(img_id, image, box, is_training):

"""Preprocess function for dataset."""

cv2.setNumThreads(2)

def _infer_data(image, input_shape):

img_h, img_w, _ = image.shape

input_h, input_w = input_shape

image = cv2.resize(image, (input_w, input_h))

# When the channels of image is 1

if len(image.shape) == 2:

image = np.expand_dims(image, axis=-1)

image = np.concatenate([image, image, image], axis=-1)

return img_id, image, np.array((img_h, img_w), np.float32)

def _data_aug(image, box, is_training, image_size=(300, 300)):

ih, iw, _ = image.shape

h, w = image_size

if not is_training:

return _infer_data(image, image_size)

# Random crop

box = box.astype(np.float32)

image, box = random_sample_crop(image, box)

ih, iw, _ = image.shape

# Resize image

image = cv2.resize(image, (w, h))

# Flip image or not

flip = _rand() < .5

if flip:

image = cv2.flip(image, 1, dst=None)

# When the channels of image is 1

if len(image.shape) == 2:

image = np.expand_dims(image, axis=-1)

image = np.concatenate([image, image, image], axis=-1)

box[:, [0, 2]] = box[:, [0, 2]] / ih

box[:, [1, 3]] = box[:, [1, 3]] / iw

if flip:

box[:, [1, 3]] = 1 - box[:, [3, 1]]

box, label, num_match = ssd_bboxes_encode(box)

return image, box, label, num_match

return _data_aug(image, box, is_training, image_size=[300, 300])

from mindspore.common.initializer import initializer, TruncatedNormal

def init_net_param(network, initialize_mode='TruncatedNormal'):

"""Init the parameters in net."""

params = network.trainable_params()

for p in params:

if 'beta' not in p.name and 'gamma' not in p.name and 'bias' not in p.name:

if initialize_mode == 'TruncatedNormal':

p.set_data(initializer(TruncatedNormal(0.02), p.data.shape, p.data.dtype))

else:

p.set_data(initialize_mode, p.data.shape, p.data.dtype)

def get_lr(global_step, lr_init, lr_end, lr_max, warmup_epochs, total_epochs, steps_per_epoch):

""" generate learning rate array"""

lr_each_step = []

total_steps = steps_per_epoch * total_epochs

warmup_steps = steps_per_epoch * warmup_epochs

for i in range(total_steps):

if i < warmup_steps:

lr = lr_init + (lr_max - lr_init) * i / warmup_steps

else:

lr = lr_end + (lr_max - lr_end) * (1. + math.cos(math.pi * (i - warmup_steps) / (total_steps - warmup_steps))) / 2.

if lr < 0.0:

lr = 0.0

lr_each_step.append(lr)

current_step = global_step

lr_each_step = np.array(lr_each_step).astype(np.float32)

learning_rate = lr_each_step[current_step:]

return learning_rate

188

188

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?