Environment: one CentOS 7 virtual machine

Stop the firewall: # systemctl stop firewalld

After changing environment variables, always reload them: # source /etc/profile
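
The reason for the reload can be seen in a small sketch. It uses a temporary file as a stand-in for /etc/profile (the file and the DEMO_HOME variable are hypothetical, chosen so the demo does not touch system files) and shows that an export only reaches the current shell once the file is sourced.

```shell
# Hypothetical stand-in for /etc/profile.
unset DEMO_HOME
profile_file="$(mktemp)"
echo 'export DEMO_HOME=/opt/demo' > "$profile_file"

# Writing the file alone changes nothing in the running shell.
before="${DEMO_HOME:-unset}"

# Sourcing executes the file in the current shell, so the export takes effect.
. "$profile_file"
after="${DEMO_HOME:-unset}"

echo "before: $before, after: $after"
rm -f "$profile_file"
```

Opening a new login shell has the same effect as sourcing, since /etc/profile is read at login.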

Configure a static IP

[root@localhost ~]# vi /etc/sysconfig/network-scripts/ifcfg-eno16777736
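
The post does not show the file contents. As a rough sketch, a static configuration in this file usually looks like the fragment below. Every address is a hypothetical placeholder that has to match your own virtual network; only the device name is taken from the file name above.

```shell
# /etc/sysconfig/network-scripts/ifcfg-eno16777736 (example values, adjust to your network)
TYPE=Ethernet
BOOTPROTO=static          # use a fixed address instead of DHCP
NAME=eno16777736
DEVICE=eno16777736
ONBOOT=yes                # bring the interface up at boot
IPADDR=192.168.1.100      # hypothetical address of this VM
NETMASK=255.255.255.0
GATEWAY=192.168.1.1       # hypothetical gateway
DNS1=192.168.1.1          # hypothetical DNS server
```

After saving, the network service typically has to be restarted (on CentOS 7: systemctl restart network) before the new address is active.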

Check connectivity: ping baidu.com

Configure passwordless SSH login

# Generate a key pair

ssh-keygen

# Add the public key to the authorized_keys file

ssh-copy-id localhost
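
Before moving on it is worth confirming that the login really works without a password, because the Hadoop start scripts connect over SSH. The helper below is a minimal sketch (the function name check_passwordless_ssh is made up for illustration). It relies on the OpenSSH option BatchMode=yes, which makes ssh fail instead of prompting.

```shell
# Report whether key-based login to a host works without any prompt.
check_passwordless_ssh() {
    host="${1:-localhost}"
    # BatchMode=yes disables password prompts, so a missing key is an error.
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true 2>/dev/null; then
        echo "passwordless login to $host works"
    else
        echo "passwordless login to $host failed"
        return 1
    fi
}
```

Call it as check_passwordless_ssh localhost. It assumes the host key was already accepted, which is the case once ssh-copy-id localhost has run.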

Install the JDK

Upload the JDK archive to the virtual machine

Extract jdk-8u251-linux-x64.tar.gz
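
The exact extraction command is not shown in the post. One way to do it, sketched here with a small hypothetical helper named extract_archive, is to unpack the gzip-compressed tarball into a target directory with tar.

```shell
# Unpack a .tar.gz archive into a destination directory.
extract_archive() {
    archive="$1"
    dest="$2"
    if [ ! -f "$archive" ]; then
        echo "archive not found: $archive" >&2
        return 1
    fi
    # -z: gunzip, -x: extract, -f: archive file, -C: change to dest first
    mkdir -p "$dest" && tar -zxf "$archive" -C "$dest"
}
```

For this guide the call would be extract_archive jdk-8u251-linux-x64.tar.gz /root, assuming the archive was uploaded to the current directory; /root matches the JAVA_HOME path used in the following step.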

Configure the JDK environment variables

[root@localhost jdk1.8.0_251]# vi /etc/profile

[root@localhost jdk1.8.0_251]# tail -5 /etc/profile

unset i

unset -f pathmunge

export JAVA_HOME=/root/jdk1.8.0_251

export PATH=$PATH:$JAVA_HOME/bin

[root@localhost jdk1.8.0_251]# source /etc/profile

[root@localhost jdk1.8.0_251]# java -version

java version "1.8.0_251"

Java(TM) SE Runtime Environment (build 1.8.0_251-b08)

Java HotSpot(TM) 64-Bit Server VM (build 25.251-b08, mixed mode)

[root@localhost jdk1.8.0_251]#

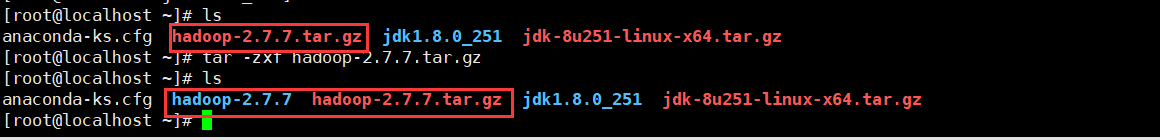

Install Hadoop

Upload the Hadoop archive

Extract hadoop-2.7.7

Configure the Hadoop environment variables

[root@localhost ~]# tail -5 /etc/profile

export JAVA_HOME=/root/jdk1.8.0_251

export PATH=$PATH:$JAVA_HOME/bin

export HADOOP_HOME=/root/hadoop-2.7.7

export PATH=$HADOOP_HOME/sbin:$PATH:$HADOOP_HOME/bin
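
Once the profile has been reloaded, a quick check that the new PATH entries resolve can save debugging later. The sketch below uses a hypothetical helper named check_on_path built on the shell builtin command -v.

```shell
# Print, for each tool name given, whether it can be found on PATH.
check_on_path() {
    for tool in "$@"; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "$tool: found"
        else
            echo "$tool: missing"
        fi
    done
}
```

A typical call for this setup would be check_on_path java hadoop hdfs start-all.sh. Any line ending in "missing" suggests that /etc/profile was not sourced or that a path is mistyped.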

Edit the Hadoop configuration files

hadoop-env.sh

core-site.xml

hdfs-site.xml

yarn-env.sh

mapred-site.xml

yarn-site.xml

slaves

# vi hadoop-env.sh
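
The post does not show what is changed in hadoop-env.sh. A common edit, given here as an assumption rather than the author's exact change, is to set JAVA_HOME explicitly, because daemons started over SSH may not inherit the value from /etc/profile.

```shell
# In hadoop-env.sh: point Hadoop at the JDK installed earlier in this guide.
export JAVA_HOME=/root/jdk1.8.0_251
```

If the daemons later fail with a message that JAVA_HOME is not set, this line is the first thing to check.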

# vi core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/zran/hadoopdata</value>

</property>

</configuration>

# vi hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>
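
To confirm that Hadoop actually reads the values set in core-site.xml and hdfs-site.xml, the effective configuration can be queried with hdfs getconf -confKey. The wrapper below is a small sketch; the function name show_conf is made up for illustration.

```shell
# Print the effective value of each configuration key as Hadoop resolves it.
show_conf() {
    for key in "$@"; do
        printf '%s = %s\n' "$key" "$(hdfs getconf -confKey "$key")"
    done
}
```

For example, show_conf fs.defaultFS dfs.replication should echo back the values from the two files above. A different value usually means the file being edited is not in the configuration directory Hadoop is using.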

Edit mapred-site.xml (first rename mapred-site.xml.template to mapred-site.xml)

Command: mv mapred-site.xml.template mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

# vi yarn-site.xml

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>localhost</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>
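
Hadoop does not validate property names, so a misspelled key is silently ignored and the default value is used instead. Listing the names in a file makes such typos easier to spot. The helper below is a minimal sketch (list_property_names is a hypothetical name) that assumes each name element sits on its own line, as in the snippets in this post.

```shell
# Print the contents of every <name>...</name> element, one per line.
list_property_names() {
    sed -n 's:.*<name>\([^<]*\)</name>.*:\1:p' "$1"
}
```

For example, list_property_names $HADOOP_HOME/etc/hadoop/yarn-site.xml prints the property names so they can be compared against the official yarn-default.xml list.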

# vi yarn-env.sh

Format the NameNode

# hdfs namenode -format
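
Formatting is meant to be done once. Running it again on an existing installation creates a new cluster ID, after which the DataNode typically refuses to start until its data directory is cleared. The guard below is a sketch under two assumptions: the name directory is left at its default location below hadoop.tmp.dir (that is, /home/zran/hadoopdata/dfs/name for the value used above), and format_if_needed is a made-up helper name.

```shell
# Format the NameNode only if its metadata directory has no VERSION file yet.
format_if_needed() {
    name_dir="${1:-/home/zran/hadoopdata/dfs/name}"   # assumed default location
    if [ -f "$name_dir/current/VERSION" ]; then
        echo "NameNode already formatted, skipping"
    else
        hdfs namenode -format
    fi
}
```

Calling format_if_needed with no argument uses the assumed default path; pass a different directory if dfs.namenode.name.dir is set explicitly.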

Verify

Start the pseudo-distributed Hadoop cluster

# Start the pseudo-distributed cluster

start-all.sh

# Check the running processes with jps

[root@localhost hadoop]# jps

10673 NodeManager

10979 Jps

10436 SecondaryNameNode

10581 ResourceManager

10167 NameNode

10252 DataNode
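
All five daemons should appear in the listing: NameNode, DataNode, SecondaryNameNode, ResourceManager and NodeManager. The check can be scripted as in the sketch below, where missing_daemons is a hypothetical helper that takes the jps output as text and prints the name of every daemon that is absent.

```shell
# Print each expected Hadoop daemon that does not appear in the given jps output.
missing_daemons() {
    jps_output="$1"
    for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # Compare the second column exactly, so SecondaryNameNode does not count as NameNode.
        if ! printf '%s\n' "$jps_output" | awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon"
        fi
    done
}
```

Run it as missing_daemons "$(jps)". Empty output means everything is up; otherwise the log files under $HADOOP_HOME/logs for the named daemon are the place to look.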
