Introduction

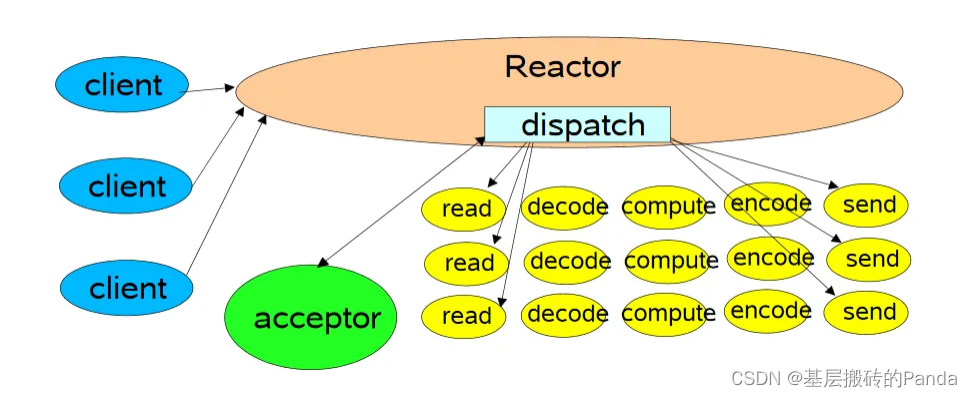

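In the Reactor model (one event loop per thread), a single iteration of the event loop can handle several events, but it handles them serially. If one task runs for too long, it delays every other task, and the client sees slower responses. A thread pool is one way to deal with such time-consuming work.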

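1. What a thread pool is and what it does

To avoid creating and destroying threads over and over, a thread pool creates a fixed number of threads in advance and manages them. When a task arrives, it is handed to one of those threads. When the task finishes, the thread is not destroyed; it returns to the pool and waits for the next task.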

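Benefits:

- Threads are reused

- The cost of creating and destroying threads is reduced

- The total time to execute many tasks is reduced (the time for a single task is not)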

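2. When to use a thread pool

- A class of tasks is time-consuming and would keep a thread from doing its other work;

- A class of tasks needs to run asynchronously;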

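3. Thread pool interface design

A thread pool is a typical producer-consumer model. It has producers, a queue, and consumers (the pool's threads); the queue decouples the two sides.

- Creating the pool (number of threads and queue size)

- Destroying the pool (set an exit flag and notify all threads)

- Adding a task (wrap the task, enqueue it, notify a thread to run it)

- Task scheduling (mutex and condition variable)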
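The queue in this design can be a fixed-size ring buffer tracked by `head`, `tail`, and `count`. The following is a minimal sketch of that index arithmetic only. The names `ring_t`, `ring_push`, and `ring_pop` are hypothetical, the items are plain integers instead of tasks, and locking is deliberately left out, so it is not thread-safe as written.

```c
#include <stddef.h>

#define RING_CAPACITY 4

/* Hypothetical fixed-size ring buffer; a real pool would store tasks
 * and protect every access with a mutex. */
typedef struct ring_t {
    int items[RING_CAPACITY];
    size_t head;   /* index of the oldest item (next to pop) */
    size_t tail;   /* index of the next free slot (next to push) */
    size_t count;  /* number of items currently stored */
} ring_t;

static void ring_init(ring_t *r) {
    r->head = r->tail = r->count = 0;
}

/* Returns 0 on success, -1 if the ring is full. */
static int ring_push(ring_t *r, int value) {
    if (r->count == RING_CAPACITY) return -1;
    r->items[r->tail] = value;
    r->tail = (r->tail + 1) % RING_CAPACITY;  /* wrap around at the end */
    r->count++;
    return 0;
}

/* Returns 0 on success, -1 if the ring is empty. */
static int ring_pop(ring_t *r, int *out) {
    if (r->count == 0) return -1;
    *out = r->items[r->head];
    r->head = (r->head + 1) % RING_CAPACITY;  /* wrap around at the end */
    r->count--;
    return 0;
}
```

Keeping a separate `count` makes the full and empty cases easy to tell apart, even though `head == tail` in both.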

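3.1 Choosing the number of threads (rule of thumb)

Tasks can be classified by the kind of work that takes the time:

- I/O-bound: 2*n + 2 threads (n is the number of CPU cores)

- CPU-bound: as many threads as CPU cores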
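The rule of thumb above could be applied as in the sketch below. The names `task_kind_t`, `threads_for_cores`, and `suggested_thread_count` are hypothetical. The sketch assumes `sysconf(_SC_NPROCESSORS_ONLN)` is available, which is a common extension on Linux, the BSDs, and macOS but is not required by POSIX.

```c
#include <unistd.h>

/* Hypothetical classification of the work a pool will run. */
typedef enum { TASK_IO_BOUND, TASK_CPU_BOUND } task_kind_t;

/* Apply the rule of thumb to a given core count.
 * A non-positive count is treated as a single core. */
static long threads_for_cores(long cores, task_kind_t kind) {
    if (cores < 1) cores = 1;
    return kind == TASK_IO_BOUND ? 2 * cores + 2 : cores;
}

/* Query the number of online cores and apply the rule.
 * sysconf returns -1 on failure, which falls back to one core. */
static long suggested_thread_count(task_kind_t kind) {
    long cores = sysconf(_SC_NPROCESSORS_ONLN);
    return threads_for_cores(cores, kind);
}
```

The result is only a starting point; the best value for a real workload is usually found by measuring.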

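4. Thread pools in Redis and Nginx

4.1 The thread pool in Redis

Redis is an in-memory database, so reading and writing data is very fast. It uses its thread pool mainly for read/write I/O handling, packet parsing, and compression.

4.2 The thread pool in Nginx

In the Nginx thread pool model, the main thread adds a task to the task queue and a pool thread takes it and runs it. When the task is done, the result goes into a completion queue and the main thread is notified to collect it.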
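The completion-queue step can be sketched as follows. This is not Nginx's code: the names `done_queue_t`, `done_queue_publish`, `done_queue_collect`, and `square_worker` are hypothetical, results are plain integers, and a pipe stands in for whatever notification mechanism the main thread's event loop really uses.

```c
#include <pthread.h>
#include <unistd.h>

#define MAX_DONE 16

/* Hypothetical completion queue shared by workers and the main thread. */
typedef struct done_queue_t {
    pthread_mutex_t mutex;
    int results[MAX_DONE];
    int count;
    int notify_fd[2];  /* pipe: [0] is read by main, [1] is written by workers */
} done_queue_t;

/* Returns 0 on success, -1 on failure. */
static int done_queue_init(done_queue_t *q) {
    q->count = 0;
    if (pthread_mutex_init(&q->mutex, NULL) != 0) return -1;
    if (pipe(q->notify_fd) != 0) {
        pthread_mutex_destroy(&q->mutex);
        return -1;
    }
    return 0;
}

/* Worker side: store the result, then wake the main thread with one byte. */
static int done_queue_publish(done_queue_t *q, int result) {
    char byte = 1;
    int stored = 0;
    pthread_mutex_lock(&q->mutex);
    if (q->count < MAX_DONE) {
        q->results[q->count++] = result;
        stored = 1;
    }
    pthread_mutex_unlock(&q->mutex);
    if (!stored) return -1;
    return write(q->notify_fd[1], &byte, 1) == 1 ? 0 : -1;
}

/* Main-thread side: block until notified, then copy out up to max results
 * in arrival order. Returns the number copied, or -1 on a read error. */
static int done_queue_collect(done_queue_t *q, int *out, int max) {
    char byte;
    int i, n;
    if (read(q->notify_fd[0], &byte, 1) != 1) return -1;
    pthread_mutex_lock(&q->mutex);
    n = q->count < max ? q->count : max;
    for (i = 0; i < n; i++) out[i] = q->results[i];
    for (i = n; i < q->count; i++) q->results[i - n] = q->results[i];
    q->count -= n;
    pthread_mutex_unlock(&q->mutex);
    return n;
}

/* Example task run on a worker thread: compute 7 * 7 and publish it. */
static void *square_worker(void *arg) {
    done_queue_t *q = arg;
    done_queue_publish(q, 7 * 7);
    return NULL;
}
```

In an event-driven server the read end of the pipe would be registered with the event loop, so the main thread never blocks on it; the blocking `read` here only keeps the sketch short.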

When Nginx acts as a static proxy, the thread pool is used at the following point:

5. Complete code example

5.1 thrd_pool.h

#ifndef THREAD_POOL_H

#define THREAD_POOL_H

typedef struct thread_pool_t thread_pool_t;

typedef void (*handler_pt) (void *);

thread_pool_t *thread_pool_create(int thrd_count, int queue_size);

int thread_pool_post(thread_pool_t *pool, handler_pt func, void *arg);

int thread_pool_destroy(thread_pool_t *pool);

int wait_all_done(thread_pool_t *pool);

#endif

5.2 thrd_pool.c

#include <pthread.h>

#include <stdint.h>

#include <stddef.h>

#include <stdlib.h>

#include "thrd_pool.h"

typedef struct task_t {

handler_pt func;

void * arg;

} task_t;

typedef struct task_queue_t {

uint32_t head;

uint32_t tail;

uint32_t count;

task_t *queue;

} task_queue_t;

struct thread_pool_t {

pthread_mutex_t mutex;

pthread_cond_t condition;

pthread_t *threads;

task_queue_t task_queue;

int closed;

int started; // number of worker threads currently running

int thrd_count;

int queue_size;

};

static void * thread_worker(void *thrd_pool);

static void thread_pool_free(thread_pool_t *pool);

thread_pool_t *

thread_pool_create(int thrd_count, int queue_size) {

thread_pool_t *pool;

if (thrd_count <= 0 || queue_size <= 0) {

return NULL;

}

pool = (thread_pool_t*) malloc(sizeof(*pool));

if (pool == NULL) {

return NULL;

}

pool->thrd_count = 0;

pool->queue_size = queue_size;

pool->task_queue.head = 0;

pool->task_queue.tail = 0;

pool->task_queue.count = 0;

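pool->started = pool->closed = 0;

pool->threads = NULL;

// Initialize the mutex and condition variable before any thread uses them.

if (pthread_mutex_init(&(pool->mutex), NULL) != 0) {

free(pool);

return NULL;

}

if (pthread_cond_init(&(pool->condition), NULL) != 0) {

pthread_mutex_destroy(&(pool->mutex));

free(pool);

return NULL;

}

pool->task_queue.queue = (task_t*)malloc(sizeof(task_t)*queue_size);

pool->threads = (pthread_t*) malloc(sizeof(pthread_t) * thrd_count);

if (pool->task_queue.queue == NULL || pool->threads == NULL) {

// No worker thread exists yet, so everything can be released directly.

free(pool->threads);

free(pool->task_queue.queue);

pthread_cond_destroy(&(pool->condition));

pthread_mutex_destroy(&(pool->mutex));

free(pool);

return NULL;

}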

int i = 0;

for (; i < thrd_count; i++) {

if (pthread_create(&(pool->threads[i]), NULL, thread_worker, (void*)pool) != 0) {

// Stop the threads created so far and release the pool.

thread_pool_destroy(pool);

return NULL;

}

pool->thrd_count++;

pool->started++;

}

return pool;

}

int

thread_pool_post(thread_pool_t *pool, handler_pt func, void *arg) {

if (pool == NULL || func == NULL) {

return -1;

}

task_queue_t *task_queue = &(pool->task_queue);

if (pthread_mutex_lock(&(pool->mutex)) != 0) {

return -2;

}

if (pool->closed) {

pthread_mutex_unlock(&(pool->mutex));

return -3;

}

if (task_queue->count == (uint32_t)pool->queue_size) {

pthread_mutex_unlock(&(pool->mutex));

return -4;

}

task_queue->queue[task_queue->tail].func = func;

task_queue->queue[task_queue->tail].arg = arg;

task_queue->tail = (task_queue->tail + 1) % pool->queue_size;

task_queue->count++;

if (pthread_cond_signal(&(pool->condition)) != 0) {

pthread_mutex_unlock(&(pool->mutex));

return -5;

}

pthread_mutex_unlock(&(pool->mutex));

return 0;

}

static void

thread_pool_free(thread_pool_t *pool) {

if (pool == NULL || pool->started > 0) {

return;

}

if (pool->threads) {

free(pool->threads);

pool->threads = NULL;

// Do not lock here: destroying a locked mutex is undefined behavior.

pthread_mutex_destroy(&pool->mutex);

pthread_cond_destroy(&pool->condition);

}

if (pool->task_queue.queue) {

free(pool->task_queue.queue);

pool->task_queue.queue = NULL;

}

free(pool);

}

int

wait_all_done(thread_pool_t *pool) {

int i, ret=0;

for (i=0; i < pool->thrd_count; i++) {

if (pthread_join(pool->threads[i], NULL) != 0) {

ret=1;

}

}

return ret;

}

int

thread_pool_destroy(thread_pool_t *pool) {

if (pool == NULL) {

return -1;

}

if (pthread_mutex_lock(&(pool->mutex)) != 0) {

return -2;

}

if (pool->closed) {

// Already being destroyed: release the lock and do not free a second time.

pthread_mutex_unlock(&(pool->mutex));

return -3;

}

pool->closed = 1;

if (pthread_cond_broadcast(&(pool->condition)) != 0 ||

pthread_mutex_unlock(&(pool->mutex)) != 0) {

thread_pool_free(pool);

return -4;

}

wait_all_done(pool);

thread_pool_free(pool);

return 0;

}

static void *

thread_worker(void *thrd_pool) {

thread_pool_t *pool = (thread_pool_t*)thrd_pool;

task_queue_t *que;

task_t task;

for (;;) {

pthread_mutex_lock(&(pool->mutex));

que = &pool->task_queue;

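// Wait in a loop to guard against spurious wakeups:

// on Linux, pthread_cond_signal may wake more than one thread,

// the wait may be woken by a signal,

// or another thread may already have taken the task.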

while (que->count == 0 && pool->closed == 0) {

// pthread_mutex_unlock(&(pool->mutex))

// block on the condition

// ===================================

// unblocked

// pthread_mutex_lock(&(pool->mutex));

pthread_cond_wait(&(pool->condition), &(pool->mutex));

}

// Exit only once the pool is closed and the queued tasks have been drained.

if (pool->closed && que->count == 0) break;

task = que->queue[que->head];

que->head = (que->head + 1) % pool->queue_size;

que->count--;

pthread_mutex_unlock(&(pool->mutex));

(*(task.func))(task.arg);

}

pool->started--;

pthread_mutex_unlock(&(pool->mutex));

pthread_exit(NULL);

return NULL;

}

5.3 main.c

#include <stdio.h>

#include <stdlib.h>

#include <pthread.h>

#include <unistd.h>

#include "thrd_pool.h"

int nums = 0;

int done = 0;

pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER;

void do_task(void *arg) {

usleep(10000);

pthread_mutex_lock(&lock);

done++;

printf("doing %d task\n", done);

pthread_mutex_unlock(&lock);

}

int main(int argc, char **argv) {

int threads = 8;

int queue_size = 256;

if (argc == 2) {

threads = atoi(argv[1]);

if (threads <= 0) {

printf("threads number error: %d\n", threads);

return 1;

}

} else if (argc > 2) {

threads = atoi(argv[1]);

queue_size = atoi(argv[2]);

if (threads <= 0 || queue_size <= 0) {

printf("threads number or queue size error: %d,%d\n", threads, queue_size);

return 1;

}

}

thread_pool_t *pool = thread_pool_create(threads, queue_size);

if (pool == NULL) {

printf("thread pool create error!\n");

return 1;

}

while (thread_pool_post(pool, &do_task, NULL) == 0) {

pthread_mutex_lock(&lock);

nums++;

pthread_mutex_unlock(&lock);

}

printf("add %d tasks\n", nums);

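// thread_pool_destroy() closes the pool and joins every worker.

// Calling wait_all_done() first would block forever, because workers exit only after the pool is closed.

thread_pool_destroy(pool);

printf("did %d tasks\n", done);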

return 0;

}
