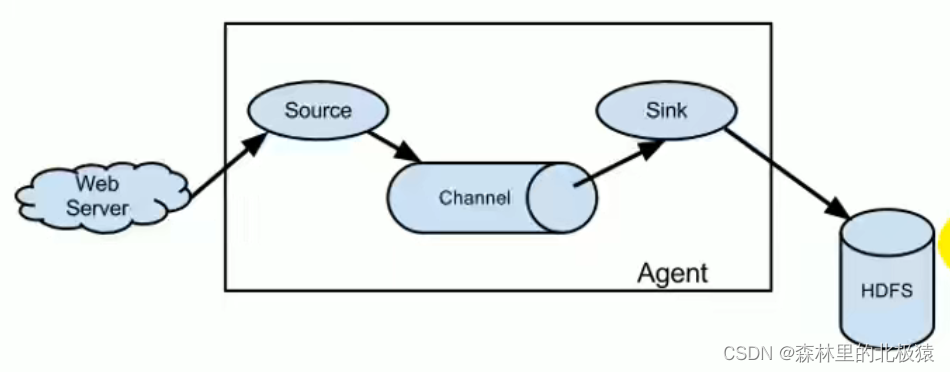

Why Flume

Flume's main job is to read data from a server's local disk in real time and write it to HDFS. This is its most common use.

Flume architecture

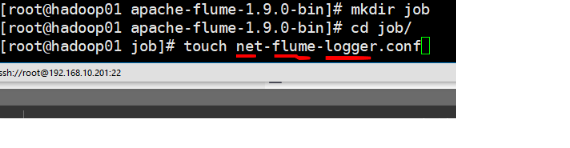

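Case 1

Create a config file under the Flume directory. By convention the file name follows the pattern source-flume-sink, so the name itself describes the pipeline.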

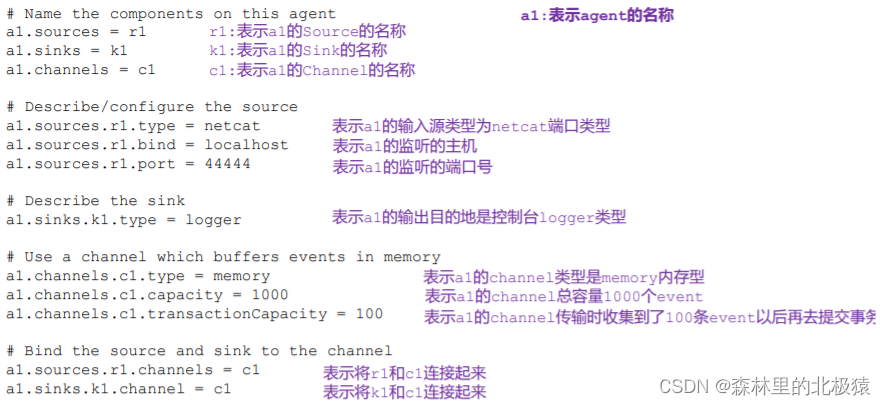

# example.conf: A single-node Flume configuration

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

# Describe the sink

a1.sinks.k1.type = logger

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

# The channel holds at most 1000 events

a1.channels.c1.capacity = 1000

# At most 100 events per transaction; this must not exceed capacity

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

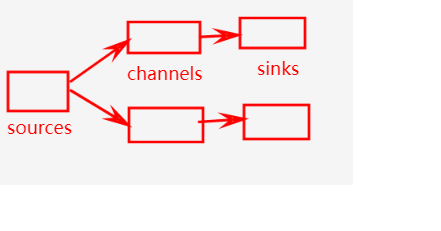

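Note these two lines:

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

The source property is plural (channels) and the sink property is singular (channel). A source can write to several channels, but each sink reads from exactly one channel. Several sinks may still share one channel, as the sink group configurations later in this post do.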
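To make the wiring rules concrete, here is a minimal Python sketch that parses the example agent's properties and checks them. It is not part of Flume: the helper names `parse_properties` and `check_wiring` are made up for illustration, and Flume's own validation is more thorough.

```python
def parse_properties(text):
    """Parse simple 'key = value' lines, skipping blanks and '#' comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def check_wiring(props, agent):
    """Return a list of problems found in the source/sink/channel wiring."""
    problems = []
    channels = set(props.get(f"{agent}.channels", "").split())
    for source in props.get(f"{agent}.sources", "").split():
        # A source may list several channels (plural key "channels").
        used = props.get(f"{agent}.sources.{source}.channels", "").split()
        if not used:
            problems.append(f"source {source} has no channel")
        problems += [f"source {source}: unknown channel {c}"
                     for c in used if c not in channels]
    for sink in props.get(f"{agent}.sinks", "").split():
        # A sink reads from exactly one channel (singular key "channel").
        used = props.get(f"{agent}.sinks.{sink}.channel", "").split()
        if len(used) != 1:
            problems.append(f"sink {sink} must have exactly one channel")
        elif used[0] not in channels:
            problems.append(f"sink {sink}: unknown channel {used[0]}")
    for ch in sorted(channels):
        cap = props.get(f"{agent}.channels.{ch}.capacity")
        tx = props.get(f"{agent}.channels.{ch}.transactionCapacity")
        if cap is not None and tx is not None and int(tx) > int(cap):
            problems.append(f"channel {ch}: transactionCapacity exceeds capacity")
    return problems


EXAMPLE = """
a1.sources = r1
a1.sinks = k1
a1.channels = c1
a1.sources.r1.type = netcat
a1.sinks.k1.type = logger
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
"""

if __name__ == "__main__":
    print(check_wiring(parse_properties(EXAMPLE), "a1"))
```

An empty list means the sketch found nothing wrong; giving a sink two channels or raising transactionCapacity above capacity produces a message instead.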

Launch command (shell)

Launch command suggested by the official documentation:

$ bin/flume-ng agent -n $agent_name -c conf -f conf/flume-conf.properties.template -Dflume.root.logger=INFO,console

My launch command:

bin/flume-ng agent -n a1 -c conf/ -f job/net-flume-logger.co -Dflume.root.logger=INFO,console

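--conf/-c: the configuration directory, here conf/

--name/-n: the name of the agent to start, here a1; it must match the agent name used in the config file

--conf-file/-f: the configuration file Flume reads for this run, here the file under the job directory

-Dflume.root.logger=INFO,console: -D overrides the flume.root.logger property at run time; here it sets the log level to INFO and sends the log to the console. Log levels include debug, info, warn, and error.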

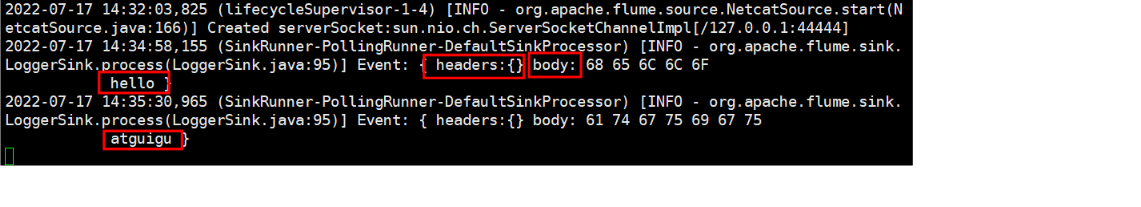

The agent started successfully.

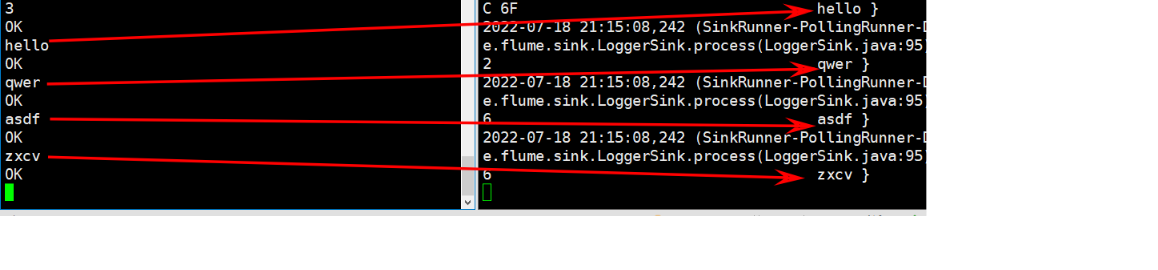

Use the netcat tool to send content to port 44444 on the local machine.

Watch the received data in the console where Flume is listening.
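If netcat is not installed, the same test can be done with a few lines of Python. This is a minimal sketch, assuming the agent from this case is listening on localhost:44444; the function names `encode_lines` and `send_lines` are made up for illustration. The netcat source treats each newline-terminated line as one event.

```python
import socket


def encode_lines(lines):
    """Join strings into one newline-terminated UTF-8 payload, one line per event."""
    return "".join(line.rstrip("\n") + "\n" for line in lines).encode("utf-8")


def send_lines(lines, host="localhost", port=44444, timeout=5.0):
    """Send the lines over a single TCP connection and return the bytes sent."""
    payload = encode_lines(lines)
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(payload)
    return len(payload)


if __name__ == "__main__":
    try:
        sent = send_lines(["hello", "flume"])
        print(f"sent {sent} bytes")
    except OSError as exc:
        # No agent is listening on that port, or the connection was blocked.
        print(f"could not reach the agent: {exc}")
```

With the logger sink configured above, each line sent should show up as one event in the agent's console output.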

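Case 2 (real-time monitoring of a single appended file)

Requirement: monitor the Hive log in real time and upload it to HDFS.

Reading a local file into HDFS in real time

Basic steps

Implementation steps

Create a file in the job directory under the Flume directory: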

vim flume-file-hdfs.conf

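Note:

To read a file on Linux, Flume has to run a Linux command.

Because the Hive log lives on the Linux file system, the source type is exec (short for "execute"), which means the source runs a Linux command to read the file.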

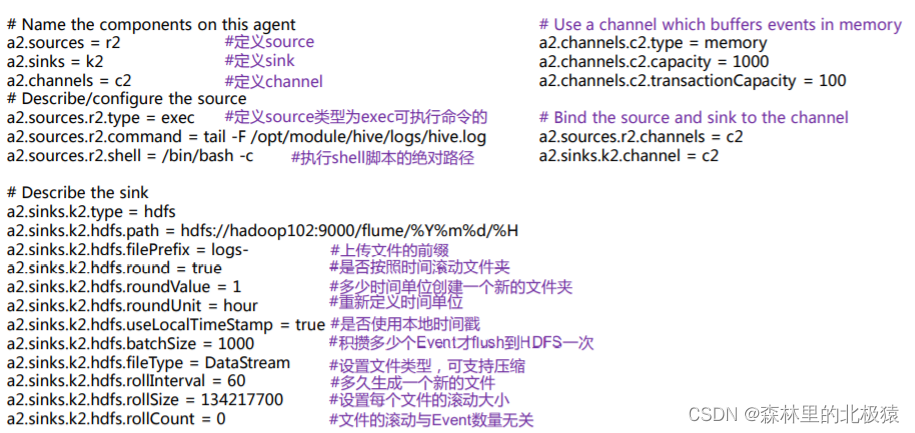

The configuration is as follows.

Open the Flume website.

Choose the User Guide.

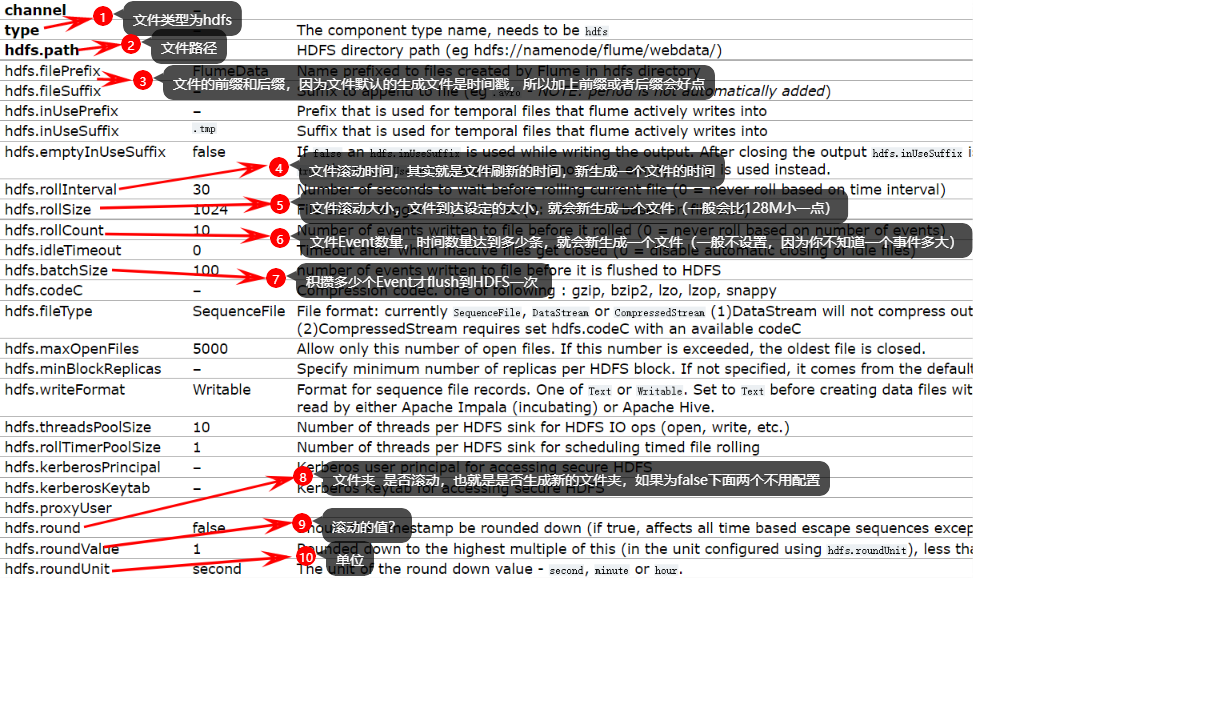

Because we want to upload to HDFS, the sink is HDFS; search the guide for "HDFS Sink" to see which configuration parameters it needs.

# Name the components on this agent

a2.sources = r2

a2.sinks = k2

a2.channels = c2

# Describe/configure the source

# Source type: exec runs a Linux command to read the file

a2.sources.r2.type = exec

# Command to run (unless configured otherwise, hive.log defaults to /tmp/root/hive.log)

a2.sources.r2.command = tail -F /opt/module/hive/logs/hive.log

a2.sources.r2.shell = /bin/bash -c

# Describe the sink

a2.sinks.k2.type = hdfs

# This path is a directory, not a file

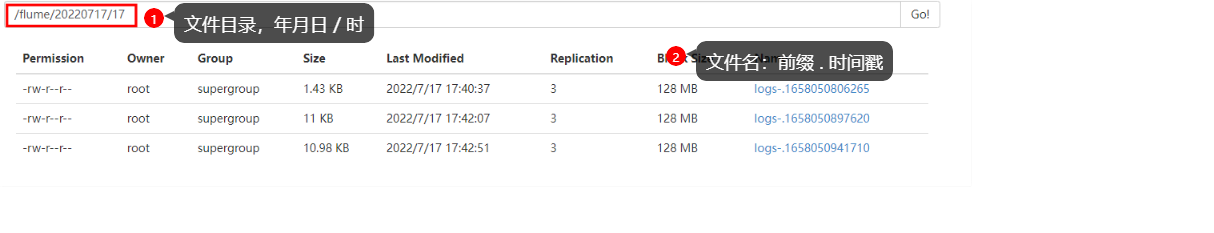

a2.sinks.k2.hdfs.path = hdfs://hadoop102:9000/flume/%Y%m%d/%H

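# Prefix for uploaded files

a2.sinks.k2.hdfs.filePrefix = logs-

# Whether to round the timestamp down, so that directories roll over by time

a2.sinks.k2.hdfs.round = true

# How many time units pass before a new directory is created

a2.sinks.k2.hdfs.roundValue = 1

# The time unit used for rounding

a2.sinks.k2.hdfs.roundUnit = hour

# Whether to use the local timestamp; this must be true here because the path above uses time escapes

a2.sinks.k2.hdfs.useLocalTimeStamp = true

# Number of events to accumulate before flushing to HDFS

a2.sinks.k2.hdfs.batchSize = 100

# File type; DataStream writes plain output, and compressed types are also supported

a2.sinks.k2.hdfs.fileType = DataStream

# How often (in seconds) a new file is created

a2.sinks.k2.hdfs.rollInterval = 30

# File size (in bytes) that triggers a roll, roughly 128 MB

a2.sinks.k2.hdfs.rollSize = 134217700

# 0 means rolling does not depend on the number of events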

a2.sinks.k2.hdfs.rollCount = 0

# Use a channel which buffers events in memory

a2.channels.c2.type = memory

a2.channels.c2.capacity = 1000

a2.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel

a2.sources.r2.channels = c2

a2.sinks.k2.channel = c2
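The hdfs.path in this configuration contains the time escapes %Y, %m, %d, and %H, and the round, roundValue, and roundUnit settings round the timestamp down before it is substituted. The Python sketch below imitates that behaviour to show which directory an event would land in. It is an approximation for illustration only: `round_down` and `resolve_hdfs_path` are made-up names, and it relies on these four escapes meaning the same thing in Python's strftime.

```python
from datetime import datetime


def round_down(when, value, unit):
    """Round a timestamp down to a multiple of `value` in the given unit."""
    if unit == "hour":
        return when.replace(hour=when.hour - when.hour % value,
                            minute=0, second=0, microsecond=0)
    if unit == "minute":
        return when.replace(minute=when.minute - when.minute % value,
                            second=0, microsecond=0)
    if unit == "second":
        return when.replace(second=when.second - when.second % value,
                            microsecond=0)
    raise ValueError(f"unsupported unit: {unit}")


def resolve_hdfs_path(template, when):
    """Expand %Y, %m, %d and %H in a path template for a given timestamp."""
    return when.strftime(template)


if __name__ == "__main__":
    template = "hdfs://hadoop102:9000/flume/%Y%m%d/%H"
    stamp = round_down(datetime.now(), 1, "hour")
    print(resolve_hdfs_path(template, stamp))
```

With roundValue = 1 and roundUnit = hour, every event from the same clock hour resolves to the same directory, so one directory is created per hour.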

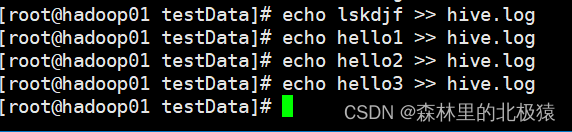

Testing

Start the Flume agent.

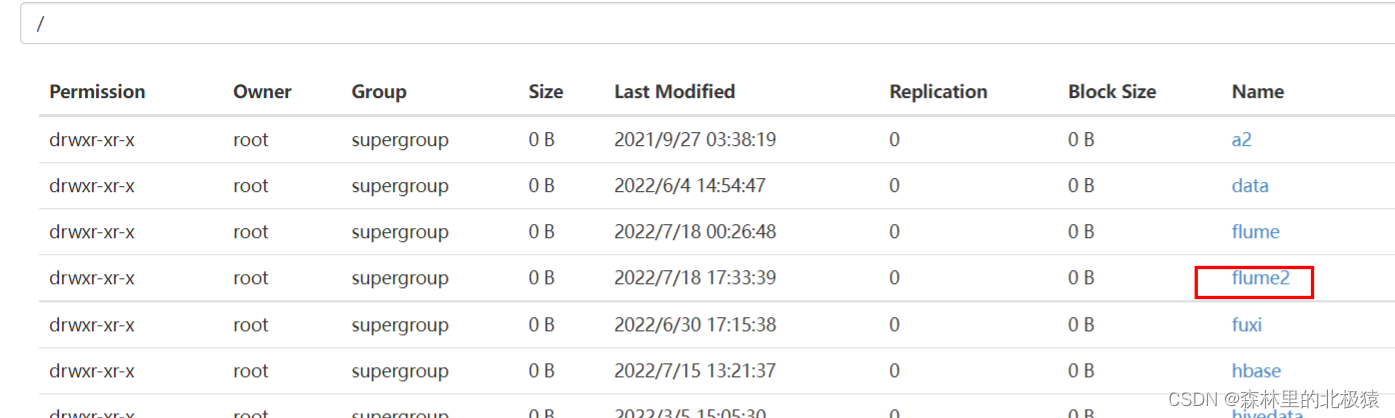

Start Hive, run some operations in it, and then check HDFS.

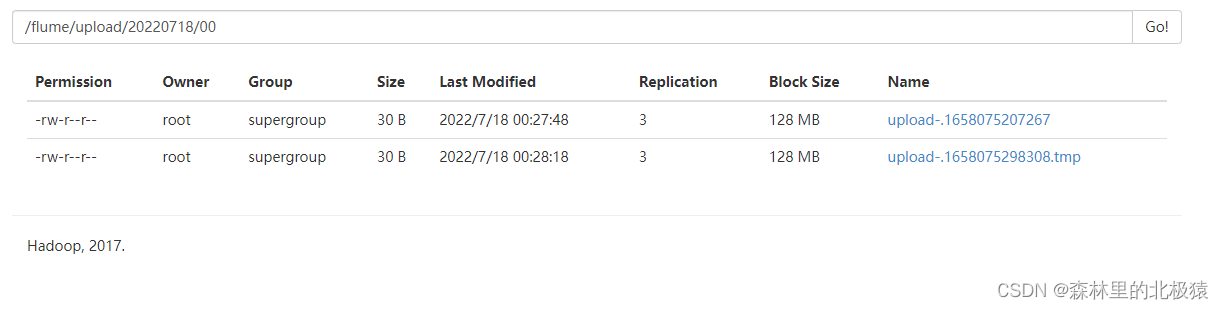

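Until a file is rolled it carries the .tmp suffix. As soon as one of the configured roll conditions is met (for example 30 seconds or about 128 MB), it is renamed to its final name.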
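The three roll settings (rollInterval, rollSize, rollCount) work as alternatives: whichever enabled condition is reached first closes the file, and a value of 0 switches that condition off. The Python sketch below is a simplified model of that rule using the values from this configuration; `should_roll` is a made-up name and the real sink's bookkeeping is more involved.

```python
MIB = 1024 * 1024


def should_roll(age_seconds, size_bytes, event_count,
                roll_interval=30, roll_size=134217700, roll_count=0):
    """Return True once any enabled roll condition is met; 0 disables a condition."""
    by_time = roll_interval > 0 and age_seconds >= roll_interval
    by_size = roll_size > 0 and size_bytes >= roll_size
    by_count = roll_count > 0 and event_count >= roll_count
    return by_time or by_size or by_count


if __name__ == "__main__":
    # The configured rollSize sits just below 128 MiB.
    print(128 * MIB - 134217700)
    print(should_roll(age_seconds=10, size_bytes=4096, event_count=5000))
    print(should_roll(age_seconds=31, size_bytes=4096, event_count=5000))
```

Because rollCount is 0 here, a file that has received thousands of events still stays open until it is 30 seconds old or reaches the size limit.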

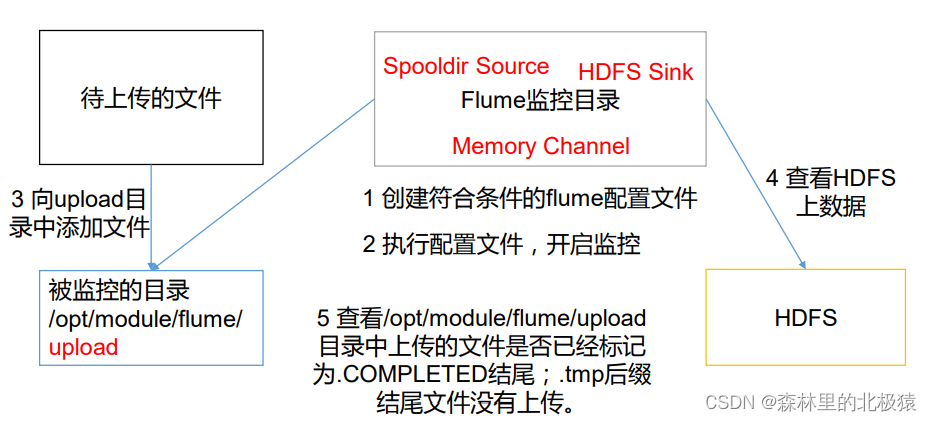

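Real-time monitoring of new files in a directory

Use Flume to watch an entire directory and upload its files to HDFS; along the way, get to know resumable transfer (picking up where reading left off).

Requirements analysis

Implementation steps

- Create the directory

[root@hadoop01 apache-flume-1.9.0-bin]# mkdir upload

- Create the file flume-dir-hdfs.conf in the job directory

Its contents (the configuration) are as follows: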

a3.sources = r3

a3.sinks = k3

a3.channels = c3

# Describe/configure the source

a3.sources.r3.type = spooldir

a3.sources.r3.spoolDir = /opt/module/flume/upload

a3.sources.r3.fileSuffix = .COMPLETED

a3.sources.r3.fileHeader = true

# Ignore all files ending in .tmp; they are not uploaded

a3.sources.r3.ignorePattern = ([^ ]*\.tmp)

# Describe the sink

a3.sinks.k3.type = hdfs

a3.sinks.k3.hdfs.path = hdfs://hadoop102:9000/flume/upload/%Y%m%d/%H

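# Prefix for uploaded files

a3.sinks.k3.hdfs.filePrefix = upload-

# Whether to round the timestamp down, so that directories roll over by time

a3.sinks.k3.hdfs.round = true

# How many time units pass before a new directory is created

a3.sinks.k3.hdfs.roundValue = 1

# The time unit used for rounding

a3.sinks.k3.hdfs.roundUnit = hour

# Whether to use the local timestamp

a3.sinks.k3.hdfs.useLocalTimeStamp = true

# Number of events to accumulate before flushing to HDFS

a3.sinks.k3.hdfs.batchSize = 100

a3.sinks.k3.hdfs.fileType = DataStream

# How often (in seconds) a new file is created

a3.sinks.k3.hdfs.rollInterval = 60

# File size (in bytes) that triggers a roll, roughly 128 MB

a3.sinks.k3.hdfs.rollSize = 134217700

# 0 means rolling does not depend on the number of events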

a3.sinks.k3.hdfs.rollCount = 0

# Use a channel which buffers events in memory

a3.channels.c3.type = memory

a3.channels.c3.capacity = 1000

a3.channels.c3.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r3.channels = c3

a3.sinks.k3.channel = c3

- Run the agent

[root@hadoop01 apache-flume-1.9.0-bin]# bin/flume-ng agent -n a3 -c conf -f job/flume-dir-hdfs.conf

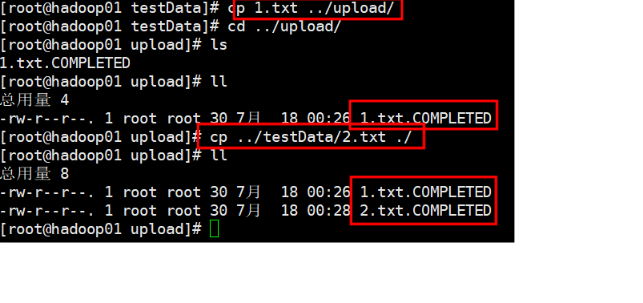

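- Add files to the upload directory

After a successful upload the file is renamed with the .COMPLETED suffix, which means Flume has already uploaded it and will no longer monitor it.

So do not create files in the monitored directory and then keep modifying them; finish writing a file elsewhere and move it in.

The monitored directory is scanned every 500 milliseconds.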
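The effect of ignorePattern and fileSuffix can be pictured with a small Python sketch that decides which file names in the spool directory are still candidates for upload. It is only a model under two assumptions: that the pattern has to match the whole file name, and that a name ending in the completed suffix is skipped. `files_to_ingest` is a made-up helper, not a Flume API.

```python
import re

# Values taken from the configuration above.
IGNORE_PATTERN = re.compile(r"([^ ]*\.tmp)")
COMPLETED_SUFFIX = ".COMPLETED"


def files_to_ingest(names):
    """Return the names that are neither ignored nor already marked completed."""
    return [
        name for name in names
        if not IGNORE_PATTERN.fullmatch(name)
        and not name.endswith(COMPLETED_SUFFIX)
    ]


if __name__ == "__main__":
    listing = ["a.txt", "b.tmp", "c.log.COMPLETED", "d.log"]
    print(files_to_ingest(listing))
```

In this model only a.txt and d.log remain: b.tmp is ignored by the pattern and c.log.COMPLETED has already been uploaded.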

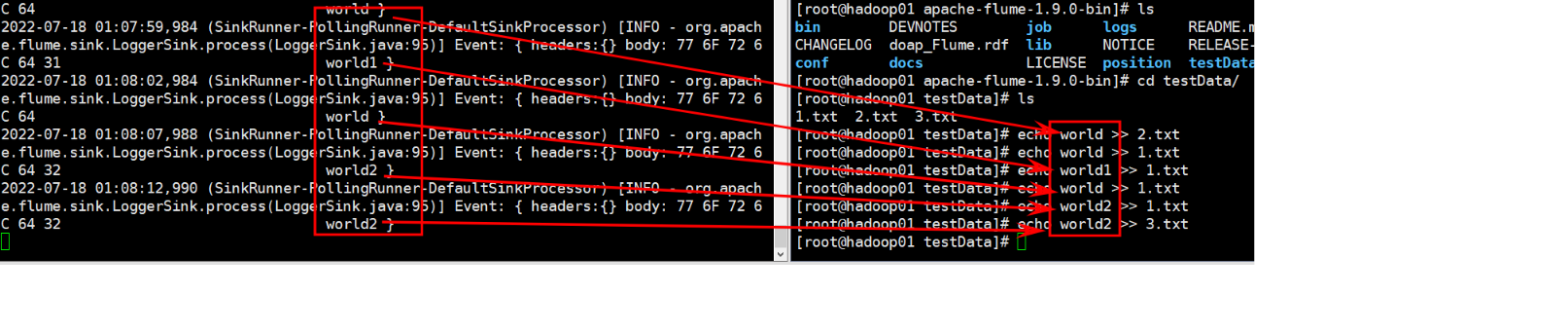

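Real-time monitoring of multiple appended files in a directory

Requirement

Use Flume to watch the files in a directory that are being appended to in real time, and upload them to HDFS.

Implementation steps

- Configuration

Here I print to the console; writing to HDFS works the same way as above.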

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = TAILDIR

a1.sources.r1.filegroups = f1

a1.sources.r1.filegroups.f1 = /export/servers/apache-flume-1.9.0-bin/testData/.*.txt

a1.sources.r1.positionFile = /export/servers/apache-flume-1.9.0-bin/position/position.json

# Describe the sink

a1.sinks.k1.type = logger

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

- Start the monitoring agent

[root@hadoop01 apache-flume-1.9.0-bin]# bin/flume-ng agent -n a1 -c conf/ -f ./job/flume-taildir-hdfs.conf -Dflume.root.logger=INFO,console

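- Append content to files in the directory

Taildir Source maintains a position file in JSON format and periodically records in it the latest position read in each file, which is how it can resume where it left off after a restart. The position file entries look like this:

{"inode":2496272,"pos":12,"file":"/opt/module/flume/files/file1.txt"}

{"inode":2496275,"pos":12,"file":"/opt/module/flume/files/file2.txt"}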
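To see how such entries make resuming possible, the Python sketch below reads them and then continues reading a file from its recorded offset. It assumes the entries are stored together as one JSON array, which is an assumption about the on-disk layout rather than something shown above; `read_positions` and `read_from` are made-up helper names.

```python
import json
import os
import tempfile

SAMPLE = (
    '[{"inode":2496272,"pos":12,"file":"/opt/module/flume/files/file1.txt"},'
    '{"inode":2496275,"pos":12,"file":"/opt/module/flume/files/file2.txt"}]'
)


def read_positions(text):
    """Map each tracked file path to the byte offset read so far."""
    return {entry["file"]: entry["pos"] for entry in json.loads(text)}


def read_from(path, pos):
    """Return the bytes of `path` that come after offset `pos`."""
    with open(path, "rb") as handle:
        handle.seek(pos)
        return handle.read()


if __name__ == "__main__":
    print(read_positions(SAMPLE))
    # Demonstrate resuming on a throwaway file: the first 12 bytes count as read.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(b"hello world\nnext line\n")
    print(read_from(tmp.name, 12))
    os.remove(tmp.name)
```

Storing the inode next to the path is what lets a reader notice when a file at the same path has been replaced by a different one.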

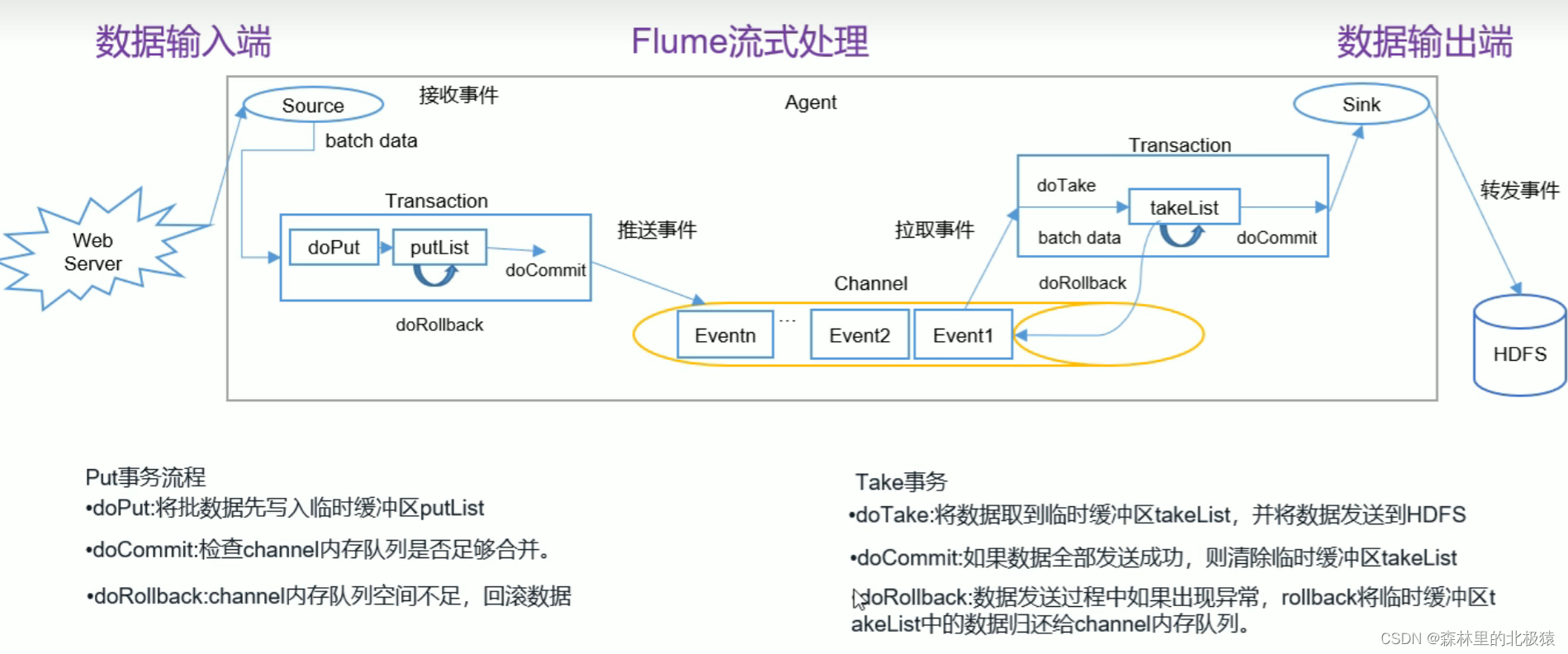

Flume transactions

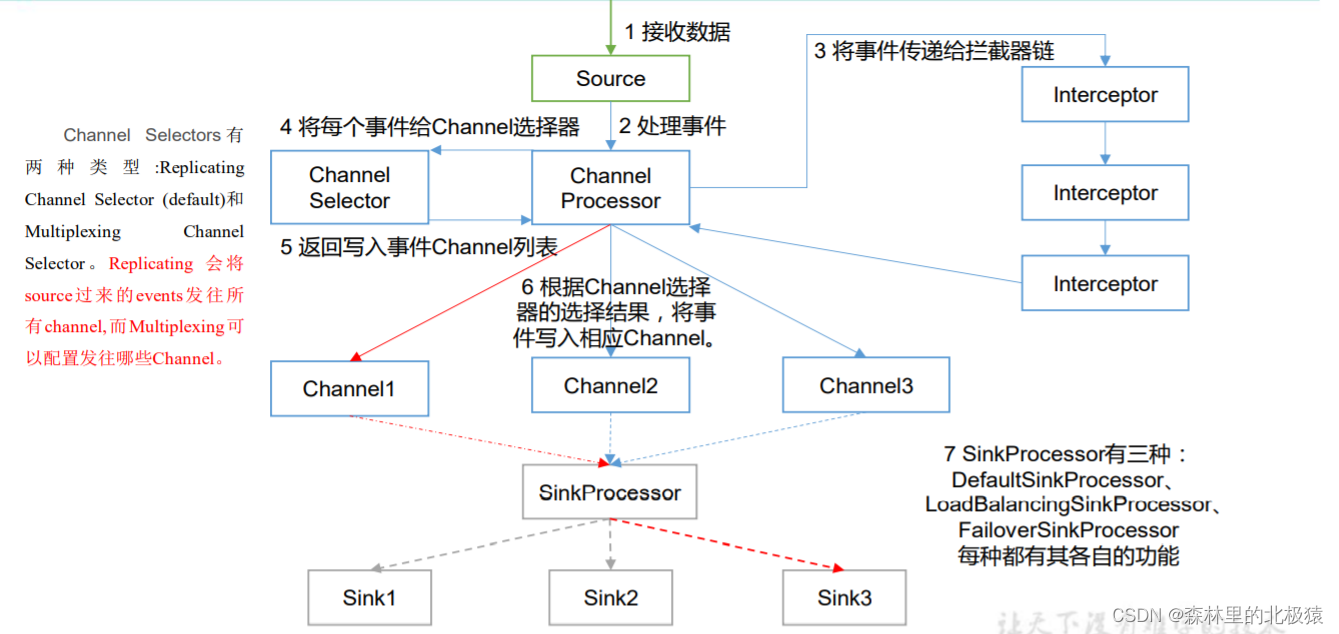

Flume Agent internals

1) ChannelSelector

The ChannelSelector decides which Channel an Event is sent to. There are two types: Replicating and Multiplexing.

The Replicating selector sends the same Event to every Channel, while the Multiplexing selector sends different Events to different Channels according to configured rules.

2) SinkProcessor

There are three types of SinkProcessor: DefaultSinkProcessor, LoadBalancingSinkProcessor, and FailoverSinkProcessor.

DefaultSinkProcessor works with a single Sink, while LoadBalancingSinkProcessor and FailoverSinkProcessor work with a Sink Group. LoadBalancingSinkProcessor provides load balancing and FailoverSinkProcessor provides failover.
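The difference between the two channel selectors is easy to see in a toy model. The Python sketch below represents an event as a dict with headers and a body and returns the channels each selector would choose. It is an illustration only: the function names, the `state` header, and the mapping values are made up, although routing on one header with a per-value mapping and a default mirrors how the multiplexing selector is configured.

```python
def replicating(event, channels):
    """Replicating selector: every channel receives a copy of the event."""
    return list(channels)


def multiplexing(event, header, mapping, default):
    """Multiplexing selector: route by the value of one event header."""
    value = event["headers"].get(header)
    return mapping.get(value, default)


if __name__ == "__main__":
    event = {"headers": {"state": "CZ"}, "body": b"payload"}
    print(replicating(event, ["c1", "c2"]))
    print(multiplexing(event, "state", {"CZ": ["c1"], "US": ["c2"]}, ["c2"]))
```

An event whose header value has no mapping, or that lacks the header entirely, falls through to the default channels.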

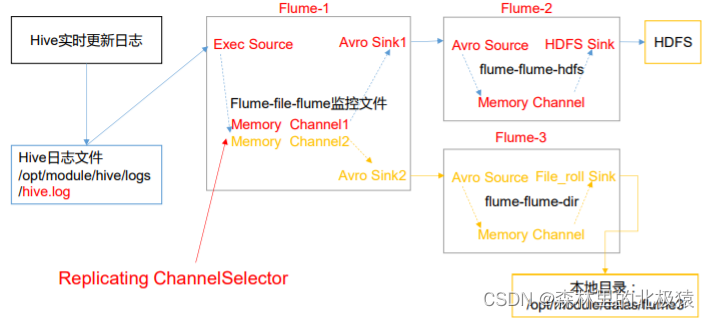

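Replicating and multiplexing

Steps

- Create the directory group1 under flume/job/, the directory flume3 and the file hive.log under flume/testData, and the file position2.json under flume/position.

- Create flume-1: in flume/job/group1, create the file flume-file-flume.conf with the following configuration: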

#name

a1.sources = r1

a1.sinks = k1 k2

a1.channels = c1 c2

#source

a1.sources.r1.type = TAILDIR

a1.sources.r1.filegroups = f1

a1.sources.r1.filegroups.f1 = /export/servers/apache-flume-1.9.0-bin/testData/hive.log

a1.sources.r1.positionFile = /export/servers/apache-flume-1.9.0-bin/position/position1.json

#sink

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = hadoop01

a1.sinks.k1.port = 4141

a1.sinks.k2.type = avro

a1.sinks.k2.hostname = hadoop01

a1.sinks.k2.port = 4142

#channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

a1.channels.c2.type = memory

a1.channels.c2.capacity = 1000

a1.channels.c2.transactionCapacity = 100

#bind

a1.sources.r1.channels = c1 c2

a1.sinks.k1.channel = c1

a1.sinks.k2.channel = c2

- Create flume-2: in flume/job/group1, create the file flume-flume-hdfs.conf with the following configuration:

# Name the components on this agent

a2.sources = r1

a2.sinks = k1

a2.channels = c1

#source

a2.sources.r1.type = avro

a2.sources.r1.bind = hadoop01

a2.sources.r1.port = 4141

#sink

a2.sinks.k1.type = hdfs

a2.sinks.k1.hdfs.path = hdfs://hadoop01:9000/flume2/%Y%m%d/%H

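# Prefix for uploaded files

a2.sinks.k1.hdfs.filePrefix = flume2-

# Whether to round the timestamp down, so that directories roll over by time

a2.sinks.k1.hdfs.round = true

# How many time units pass before a new directory is created

a2.sinks.k1.hdfs.roundValue = 1

# The time unit used for rounding

a2.sinks.k1.hdfs.roundUnit = hour

# Whether to use the local timestamp

a2.sinks.k1.hdfs.useLocalTimeStamp = true

# Number of events to accumulate before flushing to HDFS

a2.sinks.k1.hdfs.batchSize = 100

# File type; DataStream writes plain output, and compressed types are also supported

a2.sinks.k1.hdfs.fileType = DataStream

# How often (in seconds) a new file is created

a2.sinks.k1.hdfs.rollInterval = 30

# File size (in bytes) that triggers a roll, roughly 128 MB

a2.sinks.k1.hdfs.rollSize = 134217700

# 0 means rolling does not depend on the number of events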

a2.sinks.k1.hdfs.rollCount = 0

# Describe the channel

a2.channels.c1.type = memory

a2.channels.c1.capacity = 100

a2.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a2.sources.r1.channels = c1

a2.sinks.k1.channel = c1

- Create flume-3: in flume/job/group1, create the file flume-flume-file.conf with the following configuration:

# Name the components on this agent

a3.sources = r1

a3.sinks = k1

a3.channels = c2

# Describe/configure the source

a3.sources.r1.type = avro

a3.sources.r1.bind = hadoop01

a3.sources.r1.port = 4142

# Describe the sink

a3.sinks.k1.type = file_roll

a3.sinks.k1.sink.directory = /export/servers/apache-flume-1.9.0-bin/testData/flume3/

# Describe the channel

a3.channels.c2.type = memory

a3.channels.c2.capacity = 1000

a3.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r1.channels = c2

a3.sinks.k1.channel = c2

Note: the local output directory must already exist; if it does not, Flume will not create it.

- Run the agents

bin/flume-ng agent -n a1 -c conf/ -f job/group1/flume-file-flume.conf

bin/flume-ng agent -n a2 -c conf/ -f job/group1/flume-flume-hdfs.conf

bin/flume-ng agent -n a3 -c conf/ -f job/group1/flume-flume-file.conf

- 测试

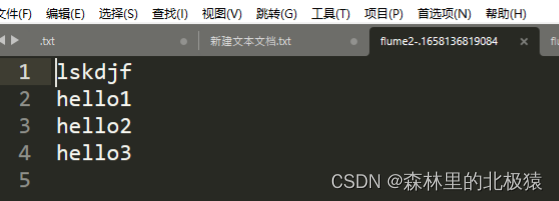

hdfs创建文件了

里面内容为

在磁盘中,文件生成

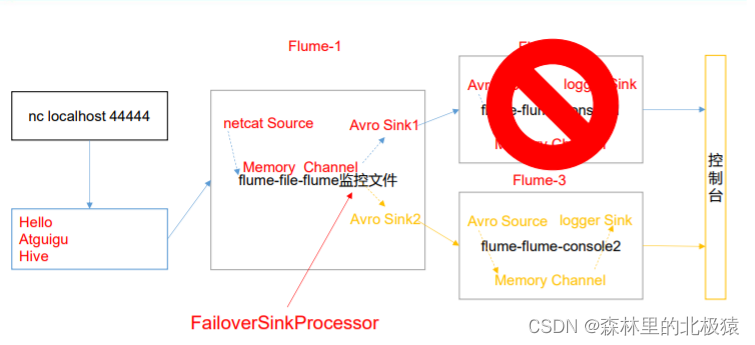

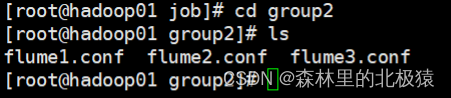

Failover

Configuration files

- Create the files on Linux

- flume1.conf is configured as follows

# Name the components on this agent

a1.sources = r1

a1.channels = c1

a1.sinkgroups = g1

a1.sinks = k1 k2

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

a1.sinkgroups.g1.processor.type = failover

a1.sinkgroups.g1.processor.priority.k1 = 5

a1.sinkgroups.g1.processor.priority.k2 = 10

a1.sinkgroups.g1.processor.maxpenalty = 10000

# Describe the sink

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = hadoop01

a1.sinks.k1.port = 4141

a1.sinks.k2.type = avro

a1.sinks.k2.hostname = hadoop01

a1.sinks.k2.port = 4142

# Describe the channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinkgroups.g1.sinks = k1 k2

a1.sinks.k1.channel = c1

a1.sinks.k2.channel = c1

- flume2.conf is configured as follows

# Name the components on this agent

a2.sources = r1

a2.sinks = k1

a2.channels = c1

# Describe/configure the source

a2.sources.r1.type = avro

a2.sources.r1.bind = hadoop01

a2.sources.r1.port = 4141

# Describe the sink

a2.sinks.k1.type = logger

# Describe the channel

a2.channels.c1.type = memory

a2.channels.c1.capacity = 1000

a2.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a2.sources.r1.channels = c1

a2.sinks.k1.channel = c1

- flume3.conf is configured as follows

# Name the components on this agent

a3.sources = r1

a3.sinks = k1

a3.channels = c2

# Describe/configure the source

a3.sources.r1.type = avro

a3.sources.r1.bind = hadoop01

a3.sources.r1.port = 4142

# Describe the sink

a3.sinks.k1.type = logger

# Describe the channel

a3.channels.c2.type = memory

a3.channels.c2.capacity = 1000

a3.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r1.channels = c2

a3.sinks.k1.channel = c2

- Run each agent

bin/flume-ng agent -n a1 -c conf/ -f job/group2/flume1.conf

bin/flume-ng agent -n a2 -c conf/ -f job/group2/flume2.conf -Dflume.root.logger=INFO,console

bin/flume-ng agent -n a3 -c conf/ -f job/group2/flume3.conf -Dflume.root.logger=INFO,console

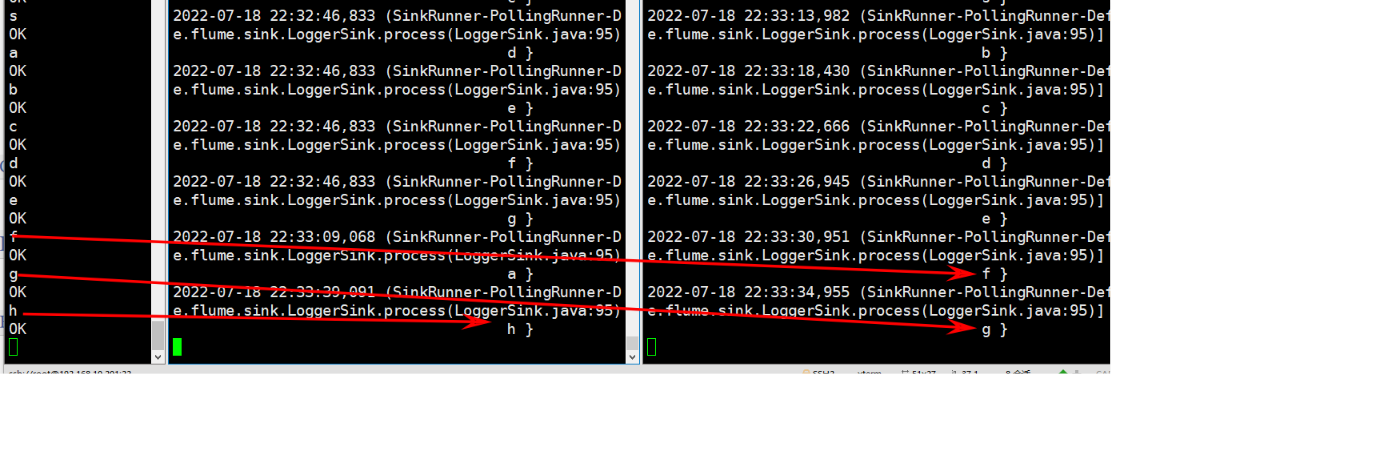

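- Send messages with netcat. flume3 receives them, and it keeps receiving them however many I send, because k2, which points at flume3 on port 4142, has the higher priority (10 versus 5).

- Stop flume3 (simulating a crash)

Send messages with netcat again; they now show up in flume2.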
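The behaviour observed in this test follows from the priorities in flume1.conf. The Python sketch below is a toy model of that rule, not Flume's implementation: among the sinks that are currently reachable, the one with the highest priority gets the events. `pick_failover_sink` is a made-up name.

```python
def pick_failover_sink(priorities, alive):
    """Return the reachable sink with the highest priority, or None if all are down."""
    candidates = [name for name in priorities if name in alive]
    return max(candidates, key=priorities.get, default=None)


if __name__ == "__main__":
    # Priorities from flume1.conf: k1 feeds flume2 (port 4141), k2 feeds flume3 (port 4142).
    priorities = {"k1": 5, "k2": 10}
    print(pick_failover_sink(priorities, alive={"k1", "k2"}))
    print(pick_failover_sink(priorities, alive={"k1"}))
```

With both agents up the model picks k2 (flume3); once flume3 is stopped, only k1 remains and the events go to flume2.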

Load balancing

- The procedure is much the same as above; only the flume1 file needs to change

- The configuration file is as follows

# Name the components on this agent

a1.sources = r1

a1.channels = c1

a1.sinkgroups = g1

a1.sinks = k1 k2

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

a1.sinkgroups.g1.processor.type = load_balance

a1.sinkgroups.g1.processor.backoff = true

a1.sinkgroups.g1.processor.selector = random

# Describe the sink

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = hadoop01

a1.sinks.k1.port = 4141

a1.sinks.k2.type = avro

a1.sinks.k2.hostname = hadoop01

a1.sinks.k2.port = 4142

# Describe the channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinkgroups.g1.sinks = k1 k2

a1.sinks.k1.channel = c1

a1.sinks.k2.channel = c1

Run it: data is delivered to flume2 and flume3 at random.
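As a rough picture of what selector = random with backoff = true means, the Python sketch below picks a sink at random while skipping any sink that is currently backed off after a failure. It is a simplified model for illustration: `pick_random_sink` is a made-up name, and how long a failed sink stays backed off is left out.

```python
import random


def pick_random_sink(sinks, backed_off=(), rng=random):
    """Pick a sink at random, skipping sinks that are temporarily backed off."""
    candidates = [sink for sink in sinks if sink not in backed_off]
    return rng.choice(candidates) if candidates else None


if __name__ == "__main__":
    rng = random.Random(42)  # seeded only so that repeated runs look the same
    picks = [pick_random_sink(["k1", "k2"], rng=rng) for _ in range(10)]
    print(picks)
    print(pick_random_sink(["k1", "k2"], backed_off={"k2"}, rng=rng))
```

Over many events both sinks get a share of the traffic, and while one sink is backed off everything goes to the other.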

Aggregation

See the Atguigu (尚硅谷) documentation; the configuration approach is the same.
