
I. Environment Preparation

Ubuntu image download: Index of /ubuntu-releases/20.04/ | Tsinghua Open Source Mirror

Use VMware to create three nodes, one of which serves as the master node, as follows:

Note: each node needs at least 2 GB of memory, at least 2 CPUs, and at least 30 GB of disk space.

| Name | IP |
| --- | --- |
| k8s-master | 192.168.65.145 |
| k8s-node1 | 192.168.65.142 |
| k8s-node2 | 192.168.65.141 |
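The minimum resources noted above can be checked on each VM before going further. The following is only a sketch: the function names are hypothetical, and the memory threshold of 1700 MB is an assumed margin, chosen because `MemTotal` on a nominal 2 GB machine reads somewhat lower than 2 GB.

```shell
# Hypothetical helper: succeed only if the CPU count ($1) and the memory in
# kB ($2) meet the minimums from this post (2 CPUs, roughly 2 GB of RAM).
meets_minimum() {
    cpus=$1
    mem_kb=$2
    # 1740800 kB = 1700 MB, an assumed margin below the nominal 2 GB
    [ "$cpus" -ge 2 ] && [ "$mem_kb" -ge 1740800 ]
}

# Hypothetical wrapper: read the values of the current host and report.
check_this_host() {
    cpus=$(nproc)
    mem_kb=$(awk '/^MemTotal:/ { print $2 }' /proc/meminfo)
    if meets_minimum "$cpus" "$mem_kb"; then
        echo "OK: $cpus CPUs, $mem_kb kB RAM"
    else
        echo "TOO SMALL: $cpus CPUs, $mem_kb kB RAM"
    fi
}
```

Running `check_this_host` on each of the three nodes prints one line per node; disk size is not checked here.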

Versions installed in this post:

k8s version: 1.19.10

docker version: 19.03

II. Initialization

1. Set the root user password

zy@ubuntu:~/Desktop$ sudo passwd root

[sudo] password for zy:

New password:

Retype new password:

passwd: password updated successfully

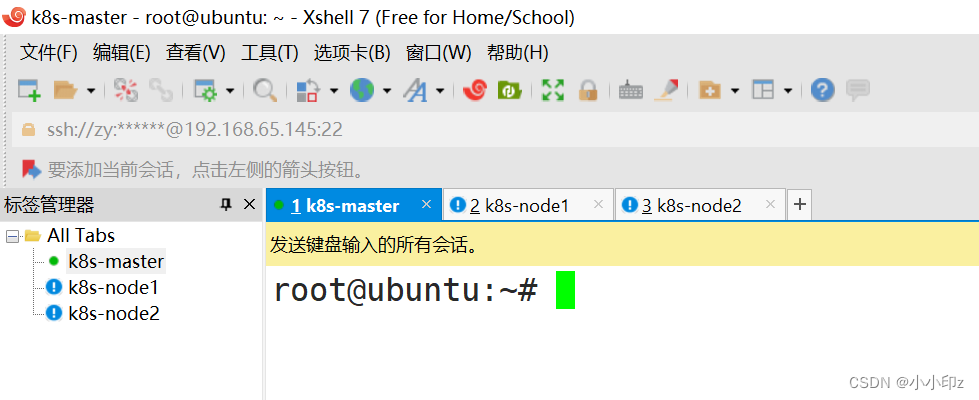

2. Connect to the three hosts with Xshell

3. Set the hostnames and edit the hosts file

hostnamectl set-hostname k8s-master # run on the master node

hostnamectl set-hostname k8s-node1 # run on node1

hostnamectl set-hostname k8s-node2 # run on node2

Append the following to the end of /etc/hosts:

192.168.65.145 k8s-master

192.168.65.142 k8s-node1

192.168.65.141 k8s-node2

4. Install dependencies and disable software that is not needed

Uninstall the snapd package that ships with the system

systemctl stop snapd snapd.socket

apt autoremove --purge -y snapd

apt install -y linux-modules-extra-5.4.0-52-generic linux-headers-5.4.0-52

5. Time synchronization and time zone settings

# Set the system time zone to Asia/Shanghai

cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

timedatectl set-timezone Asia/Shanghai

bash -c "echo 'Asia/Shanghai' > /etc/timezone"

ntpdate ntp1.aliyun.com

# Keep the hardware clock (RTC) in UTC

timedatectl set-local-rtc 0

# Restart the services that depend on the system time

systemctl restart rsyslog.service cron.service

# Show the system time

date -R

6. Disable the swap partition

swapoff -a && sed -i 's/^\/swap.img\(.*\)$/#\/swap.img \1/g' /etc/fstab && free

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

7. Enable and use ipvs in kube-proxy

# (1) Install ipvs and the load-balancing related dependencies

apt -y install ipvsadm ipset sysstat conntrack

# (2) Load the ipvs kernel modules manually (on all nodes)

mkdir -p ~/k8s-init/

tee ~/k8s-init/ipvs.modules <<'EOF'

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_lc

modprobe -- ip_vs_lblc

modprobe -- ip_vs_lblcr

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- ip_vs_dh

modprobe -- ip_vs_fo

modprobe -- ip_vs_nq

modprobe -- ip_vs_sed

modprobe -- ip_vs_ftp


modprobe -- ip_tables

modprobe -- ip_set

modprobe -- ipt_set

modprobe -- ipt_rpfilter

modprobe -- ipt_REJECT

modprobe -- ipip

modprobe -- xt_set

modprobe -- br_netfilter

modprobe -- nf_conntrack

EOF

# (3) Load the kernel modules (now and persistently); run as an administrator

chmod 755 ~/k8s-init/ipvs.modules && bash ~/k8s-init/ipvs.modules

sudo cp ~/k8s-init/ipvs.modules /etc/profile.d/ipvs.modules.sh

lsmod | grep -e ip_vs -e nf_conntrack
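Copying the script into /etc/profile.d means it runs whenever a login shell starts, not at boot. As an alternative sketch, the same module list can be written in the one-module-per-line format that systemd-modules-load reads from /etc/modules-load.d/ at boot. The helper name below is hypothetical, and the file name `ipvs.conf` is an arbitrary choice.

```shell
# Hypothetical helper: turn a script made of "modprobe -- <module>" lines
# into a list of unique module names, one per line, in first-seen order.
modules_from_script() {
    # $1: path to the modprobe script
    awk '$1 == "modprobe" { print $NF }' "$1" | awk '!seen[$0]++'
}

# Usage (as root), assuming the script from step (2) exists:
#   modules_from_script ~/k8s-init/ipvs.modules > /etc/modules-load.d/ipvs.conf
#   systemctl restart systemd-modules-load.service
#   lsmod | grep -e ip_vs -e nf_conntrack
```

Modules that do not exist for the running kernel will be reported as failures by the service, so it may be worth trimming the list to the modules that `lsmod` actually shows after the manual load.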

8. Tune the kernel parameters on every cluster node

# 1. Kernel parameter tuning

mkdir -p ~/k8s-init/

cat > ~/k8s-init/kubernetes-sysctl.conf <<EOF

# Let iptables see bridged traffic

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

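# Disable the IPv6 protocol

net.ipv6.conf.all.disable_ipv6=1

# Enable IPv4 forwarding

net.ipv4.ip_forward=1

# net.ipv4.tcp_tw_recycle=0 # this parameter does not exist on Ubuntu

# Avoid using swap space; allow it only when the system is about to run out of memory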

vm.swappiness=0

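# Do not check whether enough physical memory is available

vm.overcommit_memory=1

# Do not panic on OOM; leave it to the OOM killer

vm.panic_on_oom=0

# inotify limits (size these according to available memory and disk space)

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

# Maximum number of open file handles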

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

# TCP keepalive and related settings

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl = 15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

# net.ipv4.ip_conntrack_max = 65536 # this parameter does not exist on Ubuntu

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sudo cp ~/k8s-init/kubernetes-sysctl.conf /etc/sysctl.d/99-kubernetes.conf

sudo sysctl -p /etc/sysctl.d/99-kubernetes.conf
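To confirm that the values were really applied, the file can be read back and compared with the live kernel settings. This is a minimal sketch with hypothetical function names. Note that the `net.bridge.*` keys only exist once the `br_netfilter` module from step 7 is loaded.

```shell
# Hypothetical helper: print "key value" pairs from a sysctl-style file,
# dropping comments and blank lines and trimming spaces around "=".
sysctl_pairs() {
    sed -e 's/#.*$//' -e '/^[[:space:]]*$/d' "$1" |
        awk -F= '{
            gsub(/[ \t]/, "", $1)          # key: remove all blanks
            gsub(/^[ \t]+|[ \t]+$/, "", $2) # value: trim both ends
            print $1, $2
        }'
}

# Hypothetical helper: report every key whose live value differs.
verify_sysctl() {
    sysctl_pairs "$1" | while read -r key want; do
        have=$(sysctl -n "$key" 2>/dev/null) || have="<missing>"
        [ "$have" = "$want" ] || echo "MISMATCH $key: want=$want have=$have"
    done
}

# Usage:
#   verify_sysctl /etc/sysctl.d/99-kubernetes.conf
```

No output means every listed parameter matches; a `<missing>` entry usually points at a kernel module that is not loaded.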

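# 2. Switch away from nftables mode

# On Linux, nftables can currently replace the kernel iptables subsystem; the iptables tool can act as a compatibility layer that behaves like iptables but actually configures nftables.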

$ apt list | grep "nftables/focal"

# nftables/focal 0.9.3-2 amd64

# python3-nftables/focal 0.9.3-2 amd64

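# Switch iptables to legacy mode (the nftables backend is incompatible with the current kubeadm packages: it causes duplicate firewall rules and breaks kube-proxy, so the iptables tools need to be switched to "legacy" mode to avoid these problems)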

sudo update-alternatives --set iptables /usr/sbin/iptables-legacy

sudo update-alternatives --set ip6tables /usr/sbin/ip6tables-legacy

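III. Install Docker and k8s

1. Install Docker on every node

# 1. Update the apt package index and install the packages that let apt use repositories over HTTPS

sudo apt-get update

sudo apt-get install -y \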

apt-transport-https \

ca-certificates \

curl \

gnupg-agent \

software-properties-common

# 2. Add Docker's official GPG key

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -

# 3. Verify the key by searching for the last 8 characters of its fingerprint

sudo apt-key fingerprint 0EBFCD88

# 4. Set up the stable repository

sudo add-apt-repository \

"deb [arch=amd64] https://download.docker.com/linux/ubuntu \

$(lsb_release -cs) \

stable"

# 5. Make sure the APT package index is up to date

sudo apt update

# 6. Check which Docker versions are available

root@ubuntu:~# apt-cache madison docker-ce

docker-ce | 5:19.03.10~3-0~ubuntu-focal | https://download.docker.com/linux/ubuntu focal/stable amd64 Packages

docker-ce | 5:19.03.9~3-0~ubuntu-focal | https://download.docker.com/linux/ubuntu focal/stable amd64 Packages

# 7. Install version 19.03

apt-get install docker-ce=5:19.03.12~3-0~ubuntu-focal docker-ce-cli=5:19.03.12~3-0~ubuntu-focal containerd.io

# 8. Check the Docker version

root@ubuntu:/etc/apt# docker -v

Docker version 19.03.12, build 48a66213fe

# 9. Configure the registry mirror (accelerator)

mkdir -vp /etc/docker/

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://xlx9erfu.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"live-restore": true,

"dns": ["192.168.12.254"],

"insecure-registries": ["harbor.weiyigeek.com.cn"]

}

EOF

# Note: private registries are configured with insecure-registries

# 10. Enable at boot and start

sudo systemctl enable --now docker

sudo systemctl restart docker
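A syntax error in daemon.json prevents the Docker daemon from starting, so it can help to validate the file before the restart. The sketch below assumes `python3` is available on the node; the function name is hypothetical.

```shell
# Hypothetical helper: return 0 if the given file parses as JSON.
# Assumes python3 is installed; json.tool is part of its standard library.
valid_json() {
    python3 -m json.tool "$1" > /dev/null 2>&1
}

# Usage:
#   valid_json /etc/docker/daemon.json && sudo systemctl restart docker
#   docker info --format '{{.CgroupDriver}}'   # should report systemd
```

The second command checks that the `native.cgroupdriver=systemd` option from daemon.json took effect. The `dns` and `insecure-registries` values in the file above are specific to the author's network and would need to be adapted.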

2. Download the Kubernetes cluster packages

# (1) Download and import the GPG signing key

curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | sudo apt-key add -

# (2) Kubernetes apt source

cat <<EOF | sudo tee /etc/apt/sources.list.d/kubernetes.list

deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main

EOF

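# (3) Update the package index, then download the packages without installing them (downloading a specific version is recommended)

sudo apt-get update

apt-cache madison kubelet # list the available Kubernetes versions

sudo apt -d install kubelet kubeadm kubectl

# (4) Install a specific version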

apt -y install kubelet=1.19.10-00 kubeadm=1.19.10-00 kubectl=1.19.10-00
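Because the three packages must stay on the same version, it is common to hold them so that a later `apt upgrade` does not move them. The following is a dry-run sketch: the function name is hypothetical and it only prints the commands, which can then be reviewed and run as root.

```shell
# Hypothetical helper: print the commands that install one pinned version
# of kubelet, kubeadm and kubectl and then hold them against upgrades.
pin_k8s_cmds() {
    ver=$1   # e.g. 1.19.10-00, as listed by `apt-cache madison kubelet`
    echo "apt-get install -y kubelet=$ver kubeadm=$ver kubectl=$ver"
    echo "apt-mark hold kubelet kubeadm kubectl"
}

# Usage:
#   pin_k8s_cmds 1.19.10-00          # review the output
#   pin_k8s_cmds 1.19.10-00 | sudo sh
```

`apt-mark unhold kubelet kubeadm kubectl` releases the hold when a planned upgrade is due.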

IV. Initialize the Cluster

1. Initialize the cluster on the master node

# --apiserver-advertise-address is the address of the master node

$ kubeadm init \

--apiserver-advertise-address=192.168.65.145 \

--image-repository registry.aliyuncs.com/google_containers \

--service-cidr=10.96.0.0/12 \

--pod-network-cidr=10.244.0.0/16

The output is as follows:

root@ubuntu:~# kubeadm init \

> --apiserver-advertise-address=192.168.65.145 \

> --image-repository registry.aliyuncs.com/google_containers \

> --service-cidr=10.96.0.0/12 \

> --pod-network-cidr=10.244.0.0/16

I0315 18:23:08.757810 35378 version.go:255] remote version is much newer: v1.26.2; falling back to: stable-1.19

W0315 18:23:09.853694 35378 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.19.16

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.65.145]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.65.145 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.65.145 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[kubelet-check] Initial timeout of 40s passed.

[apiclient] All control plane components are healthy after 74.004910 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.19" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: mj7yh1.kcsms343yksi1k8l

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.65.145:6443 --token mj7yh1.kcsms343yksi1k8l \

--discovery-token-ca-cert-hash sha256:06d33735dffa06b7de28e7837297fb982354f1667cc7d250e2a3dfdc468256cb

2. Configure kubectl

Configure kubectl for the user zy:

su zy

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

For convenience, shell auto-completion for kubectl commands can also be enabled.
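The original post does not show the completion command, so the following is a sketch rather than the author's exact step. It assumes a bash login shell with the `bash-completion` package installed; the function name is hypothetical.

```shell
# Hypothetical helper: append the kubectl completion hook to a shell rc
# file, but only if that exact line is not already present.
enable_kubectl_completion() {
    rc=${1:-$HOME/.bashrc}
    line='source <(kubectl completion bash)'
    grep -qxF "$line" "$rc" 2>/dev/null || echo "$line" >> "$rc"
}

# Usage (as the user zy):
#   enable_kubectl_completion
#   exec bash   # start a new shell so the hook is loaded
```

Calling the function more than once is harmless because the line is only added when missing.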

3. Install the Pod network

root@k8s-master:~# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

The connection to the server raw.githubusercontent.com was refused - did you specify the right host or port?

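The connection was refused in my case, so I saved the YAML file locally and installed from that instead.

The file (kube-flannel.yml) contains: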

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
- apiGroups:
  - "networking.k8s.io"
  resources:
  - clustercidrs
  verbs:
  - list
  - watch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: docker.io/flannel/flannel-cni-plugin:v1.1.2
        #image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.2
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: docker.io/flannel/flannel:v0.21.3
        #image: docker.io/rancher/mirrored-flannelcni-flannel:v0.21.3
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: docker.io/flannel/flannel:v0.21.3
        #image: docker.io/rancher/mirrored-flannelcni-flannel:v0.21.3
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

Then run kubectl apply on the local file, as follows:

root@k8s-master:~# kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created
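The `Network` value in the flannel ConfigMap has to match the `--pod-network-cidr` passed to `kubeadm init` (10.244.0.0/16 in this post). As a small sketch, with a hypothetical function name, the value can be extracted from the local manifest and compared before applying it.

```shell
# Hypothetical helper: print the first "Network" value found in a flannel
# manifest (the net-conf.json section of the ConfigMap).
flannel_network() {
    sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Usage:
#   [ "$(flannel_network kube-flannel.yml)" = "10.244.0.0/16" ] \
#       && kubectl apply -f kube-flannel.yml
#   kubectl -n kube-flannel rollout status daemonset/kube-flannel-ds
```

The `rollout status` call waits until the flannel pod is running on every scheduled node, which is a convenient point to continue from.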

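4. Add k8s-node1 and k8s-node2

Run the following command on both k8s-node1 and k8s-node2 to register them with the cluster.

The command is printed to the terminal when the cluster is initialized; just copy and run it.

If you did not save it, running kubeadm token list on the master shows the tokens that still exist.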

kubeadm join 192.168.65.145:6443 --token mj7yh1.kcsms343yksi1k8l \

--discovery-token-ca-cert-hash sha256:06d33735dffa06b7de28e7837297fb982354f1667cc7d250e2a3dfdc468256cb
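If the original join command is lost or the token has expired, it can be regenerated on the master. The sketch below recomputes the `--discovery-token-ca-cert-hash` value with the openssl pipeline given in the kubeadm documentation; the function name is hypothetical, and the pipeline assumes an RSA CA key, which is what kubeadm generates by default.

```shell
# Hypothetical helper: print the sha256 hash of the CA public key in the
# form expected after "sha256:" in the kubeadm join command.
ca_cert_hash() {
    cert=${1:-/etc/kubernetes/pki/ca.crt}
    openssl x509 -pubkey -noout -in "$cert" |
        openssl rsa -pubin -outform der 2>/dev/null |
        openssl dgst -sha256 -hex | sed 's/^.* //'
}

# Usage (as root on the master):
#   ca_cert_hash                                 # prints the hash only
#   kubeadm token create --print-join-command    # prints a full join command
```

In most cases the second command alone is enough, since it creates a fresh token and prints the complete `kubeadm join` line.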

Check the node status on the master:

root@k8s-master:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready master 40m v1.19.10

k8s-node1 Ready <none> 2m48s v1.19.10

k8s-node2 Ready <none> 2m40s v1.19.10
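Nodes usually stay NotReady for a short while after joining, until the flannel pod is running on them. A small sketch for spotting stragglers is shown below; the function name is hypothetical and it only looks at the STATUS column.

```shell
# Hypothetical helper: read `kubectl get nodes --no-headers` output on
# stdin and print the names of nodes whose STATUS is not exactly "Ready".
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Usage:
#   kubectl get nodes --no-headers | not_ready_nodes
#   kubectl wait --for=condition=Ready node --all --timeout=300s
```

A cordoned node shows `Ready,SchedulingDisabled` and is therefore also listed by this helper, which may or may not be what is wanted.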

Check the pods in detail:

root@k8s-master:~# kubectl get pod --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-flannel kube-flannel-ds-d9qtr 1/1 Running 0 3m35s

kube-flannel kube-flannel-ds-jbhdr 1/1 Running 0 3m43s

kube-flannel kube-flannel-ds-rpqg4 1/1 Running 0 7m8s

kube-system coredns-6d56c8448f-fdgm2 1/1 Running 0 40m

kube-system coredns-6d56c8448f-mkgx2 1/1 Running 0 40m

kube-system etcd-k8s-master 1/1 Running 0 41m

kube-system kube-apiserver-k8s-master 1/1 Running 0 41m

kube-system kube-controller-manager-k8s-master 1/1 Running 0 41m

kube-system kube-proxy-9rj9s 1/1 Running 0 3m43s

kube-system kube-proxy-sm5kd 1/1 Running 0 40m

kube-system kube-proxy-vgzgz 1/1 Running 0 3m35s

kube-system kube-scheduler-k8s-master 1/1 Running 0 41m

At this point all nodes are Ready and the Kubernetes cluster has been created successfully!
