
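I. Data source

1. Data source: Kaggle

2. Data format

The first seven columns are numeric features. They are used to predict the wheat variety, which is one of three classes stored in the eighth column.

The model reaches an average accuracy of 96.667% under 5-fold cross-validation (see the code and output below).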

II. Method

Artificial Neural Network (ANN) & Back Propagation

Note: what follows is only a brief outline; consult the relevant literature for a full treatment.

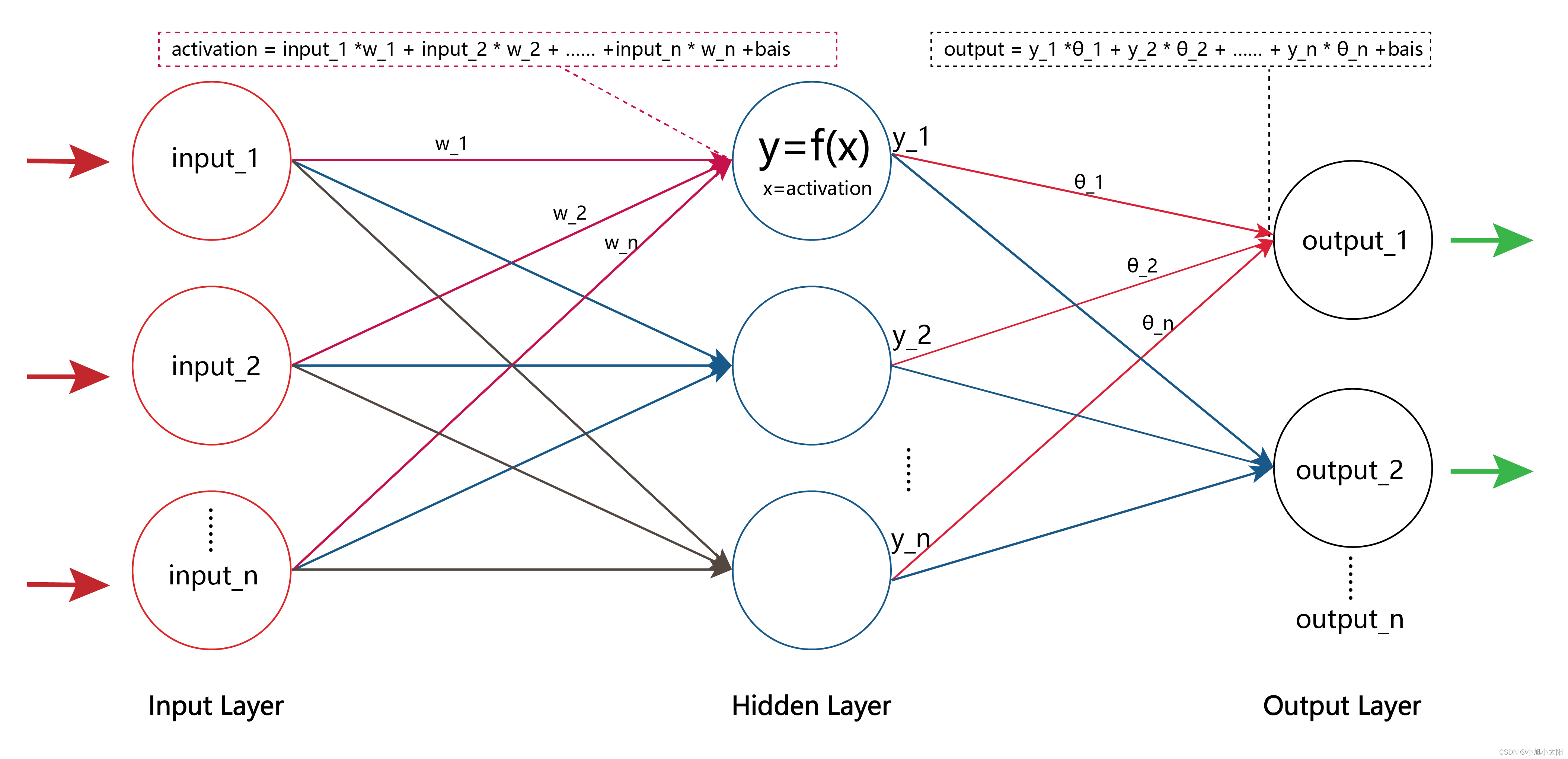

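A simple artificial neural network has three layers: an input layer, a hidden layer, and an output layer. Each layer contains a number of neurons.

1. Forward propagation

① The input layer passes the input values input_i on to the hidden layer. Each hidden neuron computes its activation value as a weighted sum plus a bias: activation = input_1 * w_1 + input_2 * w_2 + ... + input_n * w_n + bias.

② In the hidden layer, each neuron acts like a rectifier: it passes its activation value through an activation function and outputs y_i. That y_i in turn becomes an input to the next layer.

It is the activation function that makes the network non-linear and lets it handle more complex problems.

③ In the output layer, output_i = y_1 * θ_1 + y_2 * θ_2 + ... + y_n * θ_n + bias.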
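The three steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the article's implementation: the function names `weighted_sum`, `softplus`, and `forward`, the convention of storing the bias as the last weight, and the toy numbers are all assumptions made for the example.

```python
from math import exp, log

def weighted_sum(weights, inputs):
    """activation = sum(input_i * w_i) + bias; the bias is assumed to be weights[-1]."""
    return sum(w * x for w, x in zip(weights[:-1], inputs)) + weights[-1]

def softplus(a):
    """Activation function applied by the hidden neurons in this sketch."""
    return log(1.0 + exp(a))

def forward(hidden_weights, output_weights, inputs):
    # Steps 1 and 2: each hidden neuron computes its activation and transfers it.
    y = [softplus(weighted_sum(w, inputs)) for w in hidden_weights]
    # Step 3: each output neuron is a plain weighted sum of the hidden outputs.
    return [weighted_sum(theta, y) for theta in output_weights]

# Toy network (assumed values): 2 inputs, 2 hidden neurons, 1 output neuron.
hidden_weights = [[0.0, 0.0, 0.0], [1.0, -1.0, 0.0]]
output_weights = [[1.0, 1.0, 0.5]]
outputs = forward(hidden_weights, output_weights, [0.3, 0.3])
print(outputs)
```

Both hidden activations are 0 here, so each hidden neuron outputs ln 2 and the single output is 2 ln 2 + 0.5.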

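Activation functions:

Common activation functions include sigmoid, ReLU, and Softplus. The code in this article uses Softplus.

For more on activation functions, consult the relevant literature.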
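For reference, the three functions named above can be written directly with the standard `math` module. This is a sketch: `softplus_stable` is an addition that is not part of the article's code, included on the assumption that very large activations might occur, since `exp(x)` overflows for large positive `x`.

```python
from math import exp, log, log1p

def sigmoid(x):
    return 1.0 / (1.0 + exp(-x))

def relu(x):
    return max(0.0, x)

def softplus(x):
    # Direct form, the one used later in this article: log(1 + e^x).
    return log(1.0 + exp(x))

def softplus_stable(x):
    # Algebraically equivalent form that avoids overflow in exp() for large x.
    return max(x, 0.0) + log1p(exp(-abs(x)))

for x in (-2.0, 0.0, 2.0):
    print(x, sigmoid(x), relu(x), softplus(x))
```

Softplus behaves like a smooth version of ReLU: it approaches 0 for very negative inputs and approaches x for very positive ones.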

2. Back propagation

Gradient descent is applied repeatedly to find the weights w_n and biases bias_n that fit the training data best.
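To make the idea of gradient descent concrete, here is a small sketch that fits a single weight and bias to points on a straight line. The function `fit_line`, the sample points, and the hyperparameters are assumptions chosen for illustration; the loss per sample is taken to be 0.5 * (prediction - y)^2.

```python
def fit_line(points, learning_rate=0.1, n_epochs=2000):
    """Fit y = w * x + b by stochastic gradient descent on 0.5 * (prediction - y)**2."""
    w, b = 0.0, 0.0
    for _ in range(n_epochs):
        for x, y in points:
            error = (w * x + b) - y             # dL/d(prediction)
            w -= learning_rate * error * x      # dL/dw = error * x
            b -= learning_rate * error          # dL/db = error
    return w, b

points = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]   # samples of y = 2x + 1
w, b = fit_line(points)
print(round(w, 3), round(b, 3))
```

Each update moves the parameters a small step against the gradient of the error, which is the same rule a neural network applies to every weight and bias.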

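Loss function: the squared difference between the network's outputs and the expected values, summed over the output neurons (the quantity accumulated as sum_error in the training code).

The model is fitted on training data to obtain the best weights and biases. Training starts from the error, which is the difference between the network's output and the observed value in the training data. The output depends on each weight through a chain of nested functions that runs across the neurons.

For this reason the error has to be propagated backwards, starting from the output layer.

Example: fitting w_1

Taking the Softplus activation function as an example, the expression can be rewritten: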
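One way to write this derivation out, consistent with the code in Section III, is sketched below. The notation is an assumption: t_k is the expected value for output neuron k, a_1 is the activation value of the first hidden neuron, w_1 is the weight from input_1 to that neuron, θ_{k,1} is the weight from that neuron to output neuron k, and η is the learning rate. The factor 1/2 in the loss is also an assumption, chosen so that the derivative with respect to output_k is exactly output_k - t_k, which is the error the code uses.

```latex
\begin{aligned}
L &= \tfrac{1}{2}\sum_{k}\left(\mathrm{output}_k - t_k\right)^2 \\
\frac{\partial L}{\partial w_1}
  &= \sum_{k}\frac{\partial L}{\partial \mathrm{output}_k}\cdot
     \frac{\partial \mathrm{output}_k}{\partial y_1}\cdot
     \frac{\partial y_1}{\partial a_1}\cdot
     \frac{\partial a_1}{\partial w_1} \\
  &= \sum_{k}\left(\mathrm{output}_k - t_k\right)\theta_{k,1}\cdot
     \frac{\partial y_1}{\partial a_1}\cdot \mathrm{input}_1 \\
y_1 &= \ln\left(1 + e^{a_1}\right)
  \;\Longrightarrow\;
  \frac{\partial y_1}{\partial a_1} = \frac{e^{a_1}}{1 + e^{a_1}} = \frac{1}{1 + e^{-a_1}} \\
w_1 &\leftarrow w_1 - \eta\,\frac{\partial L}{\partial w_1}
\end{aligned}
```

The derivative of Softplus is the sigmoid function, which is why the code's transfer_derivative returns 1 / (1 + e^{-input}).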

The partial derivatives with respect to the remaining weights and biases are computed in the same way.

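III. Implementation

Everything from reading the data onwards is written by hand, using only the Python standard library and no third-party packages.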

1. Import the standard-library modules

    # 1. Import the standard-library modules
    from csv import reader
    from math import exp, log
    from random import randrange, seed, random
    import copy

2. Read the CSV file and convert data types

    # 2. Read the CSV file and convert data types
    def csv_loader(file):
        dataset = list()
        with open(file, 'r') as f:
            csv_reader = reader(f)
            for row in csv_reader:
                if not row:
                    continue
                dataset.append(row)
        return dataset

    # Convert the feature columns from strings to floats (row 0 is the header)
    def str_to_float_converter(dataset):
        dataset = dataset[1:]
        for i in range(len(dataset[0]) - 1):
            for row in dataset:
                row[i] = float(row[i].strip())

    # Convert the class labels in the last column to integers
    def str_to_int_converter(dataset):
        dataset = dataset[1:]
        class_values = [row[-1] for row in dataset]
        unique_values = set(class_values)
        converter_dict = dict()
        for i, value in enumerate(unique_values):
            converter_dict[value] = i
        for row in dataset:
            row[-1] = converter_dict[row[-1]]

3. Data normalization
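Min-max scaling maps every feature column onto [0, 1] using (x - min) / (max - min). The sketch below shows the effect on a toy table. The helper `min_max_scale` and the sample rows are assumptions for illustration; unlike the function in this section, it returns a new list instead of modifying its argument and it guards against a constant column.

```python
def min_max_scale(rows):
    """Return a copy of rows with every column except the last scaled to [0, 1]."""
    n_features = len(rows[0]) - 1          # the last column is assumed to be the label
    scaled = [list(row) for row in rows]
    for i in range(n_features):
        column = [row[i] for row in rows]
        lo, hi = min(column), max(column)
        span = hi - lo
        for row in scaled:
            # A constant column has span 0; map it to 0.0 instead of dividing by zero.
            row[i] = (row[i] - lo) / span if span else 0.0
    return scaled

rows = [[10.0, 5.0, 0], [20.0, 5.0, 1], [15.0, 5.0, 2]]
print(min_max_scale(rows))
```

Scaling keeps features with large numeric ranges from dominating the weighted sums computed by the neurons.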

    # 3. Data normalization (min-max scaling of every feature column)
    def normalization(dataset):
        for i in range(len(dataset[0]) - 1):
            col_values = [row[i] for row in dataset]
            max_value = max(col_values)
            min_value = min(col_values)
            for row in dataset:
                row[i] = (row[i] - min_value) / float(max_value - min_value)

4. Split the data for K-fold cross-validation
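As a small illustration of the idea, the sketch below splits ten items into three random folds and shows how each fold serves once as the test set. The helper `split_into_folds`, the use of a private `Random(1)` generator, and the toy data are assumptions for the example.

```python
from random import Random

def split_into_folds(items, n_folds, rng):
    """Randomly partition items into n_folds equal folds (any remainder is dropped)."""
    pool = list(items)
    fold_size = len(pool) // n_folds
    folds = []
    for _ in range(n_folds):
        fold = [pool.pop(rng.randrange(len(pool))) for _ in range(fold_size)]
        folds.append(fold)
    return folds

folds = split_into_folds(range(10), n_folds=3, rng=Random(1))
for k, test_fold in enumerate(folds):
    # Every fold is used once as the test set; the other folds form the training set.
    train = [x for j, fold in enumerate(folds) if j != k for x in fold]
    print(k, test_fold, train)
```

Because the fold size is rounded down, items that do not fit into a full fold are left out, which is also how the function in this section behaves.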

    # 4. Split the data for K-fold cross-validation
    def k_fold_cross_validation(dataset, n_folds):
        dataset_split = list()
        fold_size = int(len(dataset) / n_folds)
        dataset_copy = list(dataset)
        for i in range(n_folds):
            fold_data = list()
            # Draw rows at random, without replacement, until the fold is full
            while len(fold_data) < fold_size:
                index = randrange(len(dataset_copy))
                fold_data.append(dataset_copy.pop(index))
            dataset_split.append(fold_data)
        return dataset_split

5. Compute accuracy

    # 5. Compute accuracy (percentage of correct predictions)
    def calculate_accuracy(actual, predicted):
        correct = 0
        for i in range(len(actual)):
            if actual[i] == predicted[i]:
                correct += 1
        accuracy = correct / float(len(actual)) * 100.0
        return accuracy

6. Score the model

    # 6. Score the model: each fold serves once as the test set
    def mode_scores(dataset, algo, n_folds, *args):
        dataset_split = k_fold_cross_validation(dataset, n_folds)
        scores = list()
        for fold in dataset_split:
            train = copy.deepcopy(dataset_split)
            train.remove(fold)
            train = sum(train, [])
            test = copy.deepcopy(fold)
            predicted = algo(train, test, *args)
            actual = [row[-1] for row in fold]
            accuracy = calculate_accuracy(actual, predicted)
            scores.append(accuracy)
        return scores

The code below implements the artificial neural network itself.

7. Initialize the network

    # 7. Initialize the network (the last weight of each neuron is its bias)
    def initialize_network(n_inputs, n_hiddens, n_outputs):
        network = list()
        hidden_layer = [{'weight': [random() for i in range(n_inputs + 1)]} for i in range(n_hiddens)]
        network.append(hidden_layer)
        output_layer = [{'weight': [random() for i in range(n_hiddens + 1)]} for i in range(n_outputs)]
        network.append(output_layer)
        return network

8. Compute the activation value

    # 8. Compute the activation value (weighted sum plus bias)
    def activate(weights, inputs):
        activation = weights[-1]
        for i in range(len(weights) - 1):
            activation += weights[i] * inputs[i]
        return activation

9. Transfer the neuron's activation value

    # 9. Transfer the neuron's activation value (Softplus)
    def neuron_transfer(activation):
        output = log(1 + exp(activation))
        return output

10. Forward propagation to obtain the outputs

    # 10. Forward propagation to obtain the outputs
    def forward_propagation(network, row):
        inputs = row
        for layer in network:
            inputs_new = list()
            for neuron in layer:
                activation = activate(neuron['weight'], inputs)
                if layer != network[-1]:
                    # Hidden layer: apply the activation function
                    neuron['output'] = neuron_transfer(activation)
                    neuron['input'] = activation
                    inputs_new.append(neuron['output'])
                else:
                    # Output layer: linear output
                    neuron['output'] = activation
                    neuron['input'] = activation
                    inputs_new.append(neuron['output'])
            inputs = inputs_new
        return inputs

11. Derivative of a neuron's output with respect to its input (derivative of the transfer function)
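The derivative of Softplus, log(1 + e^x), is the sigmoid function 1 / (1 + e^(-x)). A quick numerical check with a central finite difference makes this easy to verify; the helper `numerical_derivative` and the step size are assumptions for this sketch.

```python
from math import exp, log

def softplus(x):
    return log(1.0 + exp(x))

def sigmoid(x):
    return 1.0 / (1.0 + exp(-x))

def numerical_derivative(f, x, h=1e-6):
    """Central finite-difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

for x in (-3.0, 0.0, 1.5):
    print(x, numerical_derivative(softplus, x), sigmoid(x))
```

The two printed columns should agree to several decimal places at every test point.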

    # 11. Derivative of a neuron's output with respect to its input
    #     (the derivative of Softplus is the sigmoid function)
    def transfer_derivative(input_):
        derivative = 1.0 / (1.0 + exp(-input_))
        return derivative

12. Compute the back-propagated error

    # 12. Compute the back-propagated error
    def back_propagation_error(network, expected):
        for i in reversed(range(len(network))):
            layer = network[i]
            if i - 1 >= 0:
                layer_pre = network[i - 1]
            else:
                layer_pre = None
            errors = list()
            if i != len(network) - 1:
                # Hidden layer: collect the error passed back from the next layer
                for j in range(len(layer)):
                    neuron = layer[j]
                    error = 0.0
                    for neuron_latter in network[i + 1]:
                        error += (neuron_latter['weight'][j] * neuron_latter['delta'][j])
                    neuron['error'] = error
                    errors.append(error)
            else:
                # Output layer: error is output minus expected value
                for j in range(len(layer)):
                    neuron = layer[j]
                    error = neuron['output'] - expected[j]
                    neuron['error'] = error
                    errors.append(error)
            for j in range(len(layer)):
                if not layer_pre:
                    continue
                else:
                    # Error passed to each neuron of the previous layer
                    neuron = layer[j]
                    neuron['delta'] = list()
                    for neuron_pre in layer_pre:
                        error_class = errors[j] * transfer_derivative(neuron_pre['input'])
                        neuron['delta'].append(error_class)

13. Update the weights

    # 13. Update the weights
    def update_weights(network, row, learning_rate):
        for i in range(len(network)):
            inputs = row[:-1]
            if i != 0:
                inputs = [neuron['output'] for neuron in network[i - 1]]
            for neuron in network[i]:
                for j in range(len(inputs)):
                    neuron['weight'][j] -= learning_rate * neuron['error'] * inputs[j]
                # The last weight is the bias
                neuron['weight'][-1] -= learning_rate * neuron['error']

14. Train the network
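Training compares the network's outputs with a one-hot target vector built from the integer class label, and prediction picks the index of the largest output. The sketch below isolates those two ideas; the helpers `one_hot`, `squared_error`, and `argmax` and the sample outputs are assumptions for illustration.

```python
def one_hot(label, n_classes):
    """Return a list of n_classes zeros with a 1 at position label."""
    target = [0] * n_classes
    target[label] = 1
    return target

def squared_error(outputs, target):
    return sum((o - t) ** 2 for o, t in zip(outputs, target))

def argmax(values):
    return values.index(max(values))

outputs = [0.1, 0.7, 0.2]          # made-up network outputs for one sample
target = one_hot(1, 3)
print(target, squared_error(outputs, target), argmax(outputs))
```

With three wheat varieties, each label 0, 1, or 2 becomes a target vector of length three, one entry per output neuron.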

    # 14. Train the network
    def train_network(network, train, learning_rate, n_epochs, n_outputs):
        for epoch in range(n_epochs):
            sum_error = 0.0
            for row in train:
                outputs = forward_propagation(network, row)
                # One-hot encode the expected class
                expected = [0 for i in range(n_outputs)]
                expected[row[-1]] = 1
                sum_error += sum([(outputs[i] - expected[i]) ** 2 for i in range(len(expected))])
                back_propagation_error(network, expected)
                update_weights(network, row, learning_rate)
            print('We are at epoch [%d] right now, The learning rate is [%.3f], the error is [%.3f]' % (epoch, learning_rate, sum_error))

15. Make a prediction

    # 15. Make a prediction (index of the largest output)
    def make_prediction(network, row):
        outputs = forward_propagation(network, row)
        prediction = outputs.index(max(outputs))
        return prediction

16. Apply back propagation

    # 16. Apply back propagation: train on `train`, predict on `test`
    def back_propagation(train, test, learning_rate, n_epochs, n_hiddens):
        n_inputs = len(train[0]) - 1
        n_outputs = len(set(row[-1] for row in train))
        network = initialize_network(n_inputs, n_hiddens, n_outputs)
        train_network(network, train, learning_rate, n_epochs, n_outputs)
        predictions = list()
        for row in test:
            prediction = make_prediction(network, row)
            predictions.append(prediction)
        return predictions

17. Run the test

    # 17. Run the test
    file = './download_datas/seeds_dataset.csv'
    dataset = csv_loader(file)
    str_to_float_converter(dataset)
    str_to_int_converter(dataset)
    dataset = dataset[1:]    # drop the header row
    normalization(dataset)
    seed(1)
    n_folds = 5
    learning_rate = 0.005
    n_epochs = 500
    n_hiddens = 10
    algo = back_propagation
    scores = mode_scores(dataset, algo, n_folds, learning_rate, n_epochs, n_hiddens)
    print('The scores of our model are : %s' % scores)
    print('The average score of our model is : %.3f%%' % (sum(scores) / float(len(scores))))

Test results

    # Output
    The scores of our model are : [95.23809523809523, 95.23809523809523, 100.0, 95.23809523809523, 97.61904761904762]
    The average score of our model is : 96.667%

IV. Complete code

    # 1. Import the standard-library modules
    from csv import reader
    from math import exp, log
    from random import randrange, seed, random
    import copy

    # 2. Read the CSV file and convert data types
    def csv_loader(file):
        dataset = list()
        with open(file, 'r') as f:
            csv_reader = reader(f)
            for row in csv_reader:
                if not row:
                    continue
                dataset.append(row)
        return dataset

    # Convert the feature columns from strings to floats (row 0 is the header)
    def str_to_float_converter(dataset):
        dataset = dataset[1:]
        for i in range(len(dataset[0]) - 1):
            for row in dataset:
                row[i] = float(row[i].strip())

    # Convert the class labels in the last column to integers
    def str_to_int_converter(dataset):
        dataset = dataset[1:]
        class_values = [row[-1] for row in dataset]
        unique_values = set(class_values)
        converter_dict = dict()
        for i, value in enumerate(unique_values):
            converter_dict[value] = i
        for row in dataset:
            row[-1] = converter_dict[row[-1]]

    # 3. Data normalization (min-max scaling of every feature column)
    def normalization(dataset):
        for i in range(len(dataset[0]) - 1):
            col_values = [row[i] for row in dataset]
            max_value = max(col_values)
            min_value = min(col_values)
            for row in dataset:
                row[i] = (row[i] - min_value) / float(max_value - min_value)

    # 4. Split the data for K-fold cross-validation
    def k_fold_cross_validation(dataset, n_folds):
        dataset_split = list()
        fold_size = int(len(dataset) / n_folds)
        dataset_copy = list(dataset)
        for i in range(n_folds):
            fold_data = list()
            # Draw rows at random, without replacement, until the fold is full
            while len(fold_data) < fold_size:
                index = randrange(len(dataset_copy))
                fold_data.append(dataset_copy.pop(index))
            dataset_split.append(fold_data)
        return dataset_split

    # 5. Compute accuracy (percentage of correct predictions)
    def calculate_accuracy(actual, predicted):
        correct = 0
        for i in range(len(actual)):
            if actual[i] == predicted[i]:
                correct += 1
        accuracy = correct / float(len(actual)) * 100.0
        return accuracy

    # 6. Score the model: each fold serves once as the test set
    def mode_scores(dataset, algo, n_folds, *args):
        dataset_split = k_fold_cross_validation(dataset, n_folds)
        scores = list()
        for fold in dataset_split:
            train = copy.deepcopy(dataset_split)
            train.remove(fold)
            train = sum(train, [])
            test = copy.deepcopy(fold)
            predicted = algo(train, test, *args)
            actual = [row[-1] for row in fold]
            accuracy = calculate_accuracy(actual, predicted)
            scores.append(accuracy)
        return scores

    # Artificial neural network algorithm

    # 7. Initialize the network (the last weight of each neuron is its bias)
    def initialize_network(n_inputs, n_hiddens, n_outputs):
        network = list()
        hidden_layer = [{'weight': [random() for i in range(n_inputs + 1)]} for i in range(n_hiddens)]
        network.append(hidden_layer)
        output_layer = [{'weight': [random() for i in range(n_hiddens + 1)]} for i in range(n_outputs)]
        network.append(output_layer)
        return network

    # 8. Compute the activation value (weighted sum plus bias)
    def activate(weights, inputs):
        activation = weights[-1]
        for i in range(len(weights) - 1):
            activation += weights[i] * inputs[i]
        return activation

    # 9. Transfer the neuron's activation value (Softplus)
    def neuron_transfer(activation):
        output = log(1 + exp(activation))
        return output

    # 10. Forward propagation to obtain the outputs
    def forward_propagation(network, row):
        inputs = row
        for layer in network:
            inputs_new = list()
            for neuron in layer:
                activation = activate(neuron['weight'], inputs)
                if layer != network[-1]:
                    # Hidden layer: apply the activation function
                    neuron['output'] = neuron_transfer(activation)
                    neuron['input'] = activation
                    inputs_new.append(neuron['output'])
                else:
                    # Output layer: linear output
                    neuron['output'] = activation
                    neuron['input'] = activation
                    inputs_new.append(neuron['output'])
            inputs = inputs_new
        return inputs

    # 11. Derivative of a neuron's output with respect to its input
    #     (the derivative of Softplus is the sigmoid function)
    def transfer_derivative(input_):
        derivative = 1.0 / (1.0 + exp(-input_))
        return derivative

    # 12. Compute the back-propagated error
    def back_propagation_error(network, expected):
        for i in reversed(range(len(network))):
            layer = network[i]
            if i - 1 >= 0:
                layer_pre = network[i - 1]
            else:
                layer_pre = None
            errors = list()
            if i != len(network) - 1:
                # Hidden layer: collect the error passed back from the next layer
                for j in range(len(layer)):
                    neuron = layer[j]
                    error = 0.0
                    for neuron_latter in network[i + 1]:
                        error += (neuron_latter['weight'][j] * neuron_latter['delta'][j])
                    neuron['error'] = error
                    errors.append(error)
            else:
                # Output layer: error is output minus expected value
                for j in range(len(layer)):
                    neuron = layer[j]
                    error = neuron['output'] - expected[j]
                    neuron['error'] = error
                    errors.append(error)
            for j in range(len(layer)):
                if not layer_pre:
                    continue
                else:
                    # Error passed to each neuron of the previous layer
                    neuron = layer[j]
                    neuron['delta'] = list()
                    for neuron_pre in layer_pre:
                        error_class = errors[j] * transfer_derivative(neuron_pre['input'])
                        neuron['delta'].append(error_class)

    # 13. Update the weights
    def update_weights(network, row, learning_rate):
        for i in range(len(network)):
            inputs = row[:-1]
            if i != 0:
                inputs = [neuron['output'] for neuron in network[i - 1]]
            for neuron in network[i]:
                for j in range(len(inputs)):
                    neuron['weight'][j] -= learning_rate * neuron['error'] * inputs[j]
                # The last weight is the bias
                neuron['weight'][-1] -= learning_rate * neuron['error']

    # 14. Train the network
    def train_network(network, train, learning_rate, n_epochs, n_outputs):
        for epoch in range(n_epochs):
            sum_error = 0.0
            for row in train:
                outputs = forward_propagation(network, row)
                # One-hot encode the expected class
                expected = [0 for i in range(n_outputs)]
                expected[row[-1]] = 1
                sum_error += sum([(outputs[i] - expected[i]) ** 2 for i in range(len(expected))])
                back_propagation_error(network, expected)
                update_weights(network, row, learning_rate)
            print('We are at epoch [%d] right now, The learning rate is [%.3f], the error is [%.3f]' % (epoch, learning_rate, sum_error))

    # 15. Make a prediction (index of the largest output)
    def make_prediction(network, row):
        outputs = forward_propagation(network, row)
        prediction = outputs.index(max(outputs))
        return prediction

    # 16. Apply back propagation: train on `train`, predict on `test`
    def back_propagation(train, test, learning_rate, n_epochs, n_hiddens):
        n_inputs = len(train[0]) - 1
        n_outputs = len(set(row[-1] for row in train))
        network = initialize_network(n_inputs, n_hiddens, n_outputs)
        train_network(network, train, learning_rate, n_epochs, n_outputs)
        predictions = list()
        for row in test:
            prediction = make_prediction(network, row)
            predictions.append(prediction)
        return predictions

    # 17. Run the test
    file = './download_datas/seeds_dataset.csv'
    dataset = csv_loader(file)
    str_to_float_converter(dataset)
    str_to_int_converter(dataset)
    dataset = dataset[1:]    # drop the header row
    normalization(dataset)
    seed(1)
    n_folds = 5
    learning_rate = 0.005
    n_epochs = 500
    n_hiddens = 10
    algo = back_propagation
    scores = mode_scores(dataset, algo, n_folds, learning_rate, n_epochs, n_hiddens)
    print('The scores of our model are : %s' % scores)
    print('The average score of our model is : %.3f%%' % (sum(scores) / float(len(scores))))
