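Hi everyone. Today we'll use Python and PyCharm to scrape news headlines from Sohu (news.sohu.com) and save them to a CSV file. Without further ado, here is the code: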

import csv
import os
import urllib.request as request

import bs4
import easygui
import requests

CSV_NAME = "新闻信息.csv"  # output file name ("news info")


def is_connect():
    """Return True if a test request to Baidu succeeds."""
    try:
        requests.get("https://www.baidu.com", timeout=5)
        return True
    except requests.RequestException:
        return False


if is_connect():
    here = os.getcwd()
    while True:
        # Ask for the output folder; the current directory is the default.
        link = easygui.enterbox("Save folder:", "News crawler", here)
        if link is None:  # the dialog was cancelled
            raise SystemExit
        csv_path = os.path.join(link, CSV_NAME)
        try:
            # Check that the file can be created before crawling.
            with open(csv_path, "w"):
                pass
            break
        except OSError:
            easygui.msgbox("Invalid path, or the file is already open")

    url = "http://news.sohu.com/"
    req = request.Request(url, headers={
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.80 Safari/537.36 Edg/86.0.622.43"})
    response = request.urlopen(req).read().decode("utf-8")
    soup = bs4.BeautifulSoup(response, "html.parser")
    news = soup.find_all("a")
    news2 = soup.find_all("b")
    passed_news = []
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        # Write the text of every <b> tag.
        for i in news2:
            print(i.string)
            writer.writerow([i.string])
        # Keep link texts longer than 6 characters once spaces and newlines are removed.
        for new in news:
            raw = str(new.string)
            text = raw.replace(" ", "").replace("\n", "")
            if "None" not in raw and len(text) > 6:
                passed_news.append(text)
        # Drop the first entry and the last four, then write the rest.
        for new in passed_news[1:-4]:
            print(new)
            writer.writerow([new])
    input("Crawl finished!")
else:
    easygui.msgbox("No network connection")
    input("Press Enter to exit")
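The filtering rule applied to the link texts can be tried on its own, without the network or the dialog. The following is a minimal sketch, not the script's exact logic: `clean_titles` and its `min_len` parameter are hypothetical names, and it tests for `None` directly instead of looking for the substring "None" in the converted string, which would also discard a real headline containing that word.

```python
def clean_titles(raw_texts, min_len=6):
    """Strip spaces and newlines, then keep texts longer than min_len.

    raw_texts is an iterable of str or None, which is what
    BeautifulSoup's tag.string yields for each tag.
    """
    kept = []
    for raw in raw_texts:
        if raw is None:  # tag had no single text child
            continue
        text = raw.replace(" ", "").replace("\n", "")
        if len(text) > min_len:
            kept.append(text)
    return kept


sample = [None, "Home", "  Breaking news today\n", "Sports"]
print(clean_titles(sample))  # ['Breakingnewstoday']
```

In the script this would be called as `clean_titles(a.string for a in news)`, with the `[1:-4]` slice applied to the result as before.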

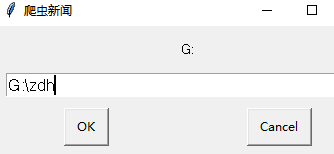

After running the script, choose the folder where the file will be saved:
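The folder entered in the dialog can be checked before any crawling starts. Below is a small sketch of such a check, under the assumption that the folder must already exist; `resolve_csv_path` and the default file name `news.csv` are hypothetical and only used for illustration.

```python
import os
import tempfile


def resolve_csv_path(folder, filename="news.csv"):
    """Return the full CSV path if folder exists and is writable, else None."""
    if not folder or not os.path.isdir(folder):
        return None
    path = os.path.join(folder, filename)
    try:
        # Append mode creates the file if needed without truncating it.
        with open(path, "a", encoding="utf-8"):
            pass
    except OSError:
        return None
    return path


# Demo on a temporary folder so nothing is left behind.
with tempfile.TemporaryDirectory() as demo_dir:
    print(resolve_csv_path(demo_dir))
    print(resolve_csv_path(os.path.join(demo_dir, "missing")))  # None
```

A `None` result covers both a cancelled dialog and a mistyped path, so the caller can simply show the prompt again.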

Click OK to start the crawl, then open the folder you chose to view the data.
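The saved file can also be checked from Python instead of opening it by hand. This is a rough sketch that assumes the file has one headline per row and is encoded as UTF-8, as in the script; `read_titles` is a hypothetical helper, and the demo writes its own small sample file rather than using real crawl output.

```python
import csv
import os
import tempfile


def read_titles(csv_path):
    """Return the first column of every non-empty row in the CSV file."""
    with open(csv_path, newline="", encoding="utf-8") as f:
        return [row[0] for row in csv.reader(f) if row]


# Demo: write two sample rows the same way the script does, then read them back.
with tempfile.TemporaryDirectory() as demo_dir:
    demo_path = os.path.join(demo_dir, "sample.csv")
    with open(demo_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["First sample headline"])
        writer.writerow(["Second, with a comma"])
    for title in read_titles(demo_path):
        print(title)
```

Passing `newline=""` when opening the file, for both writing and reading, is what the `csv` module documentation recommends so that line endings inside quoted fields are handled correctly.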

That completes the process.
