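Decision Tree Pruning

Pruning means removing all the nodes beneath certain internal nodes of a decision tree, so that each of those internal decision nodes becomes a leaf node.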
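As a minimal sketch of this definition, assume the tree is stored as nested Python dictionaries of the form `{attribute: {value: subtree_or_label}}`, which is the representation used by the code later in this article. The helper name `collapse_to_leaf` and the toy tree are illustrative assumptions, not part of the original code.

```python
def collapse_to_leaf(tree, path, leaf_label):
    """Return a copy of `tree` where the subtree reached by `path` becomes `leaf_label`.

    `path` is a list of (attribute, value) pairs leading from the root to the subtree.
    """
    if not path:
        return leaf_label  # the whole (sub)tree is replaced by a single leaf
    attribute, value = path[0]
    branches = dict(tree[attribute])  # shallow copy so the input tree is not modified
    branches[value] = collapse_to_leaf(branches[value], path[1:], leaf_label)
    return {attribute: branches}

# Hypothetical toy tree: the root splits on "脐部", one branch splits again on "色泽".
toy_tree = {"脐部": {"凹陷": {"色泽": {"青绿": "好瓜", "乌黑": "好瓜", "浅白": "坏瓜"}},
                   "稍凹": "好瓜",
                   "平坦": "坏瓜"}}

# Prune the subtree under 脐部 = 凹陷 and label the new leaf "好瓜".
pruned = collapse_to_leaf(toy_tree, [("脐部", "凹陷")], "好瓜")
print(pruned)
```

Returning a copy rather than editing in place makes it easy to compare the tree before and after pruning, which is what both pruning strategies below rely on.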

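Why is pruning needed?

A decision tree is grown by taking every training sample into account. During learning it keeps splitting nodes in order to classify the training samples as correctly as possible, which produces too many branches and a very large tree. An overly large tree is prone to overfitting, and the more complex the tree, the more severe the overfitting tends to be.

So, to reduce the risk of overfitting, we need to prune the decision tree.

There are generally two pruning strategies: pre-pruning and post-pruning.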

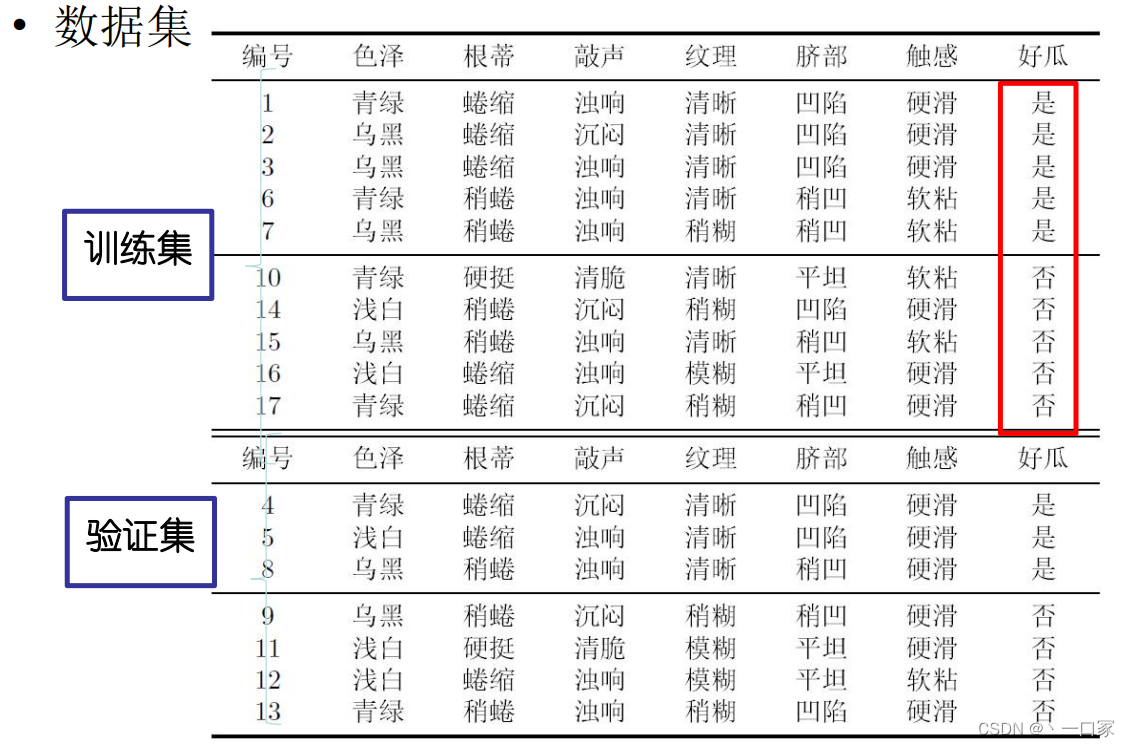

Both strategies are introduced below using the watermelon dataset (the example from the "watermelon book", 西瓜书).

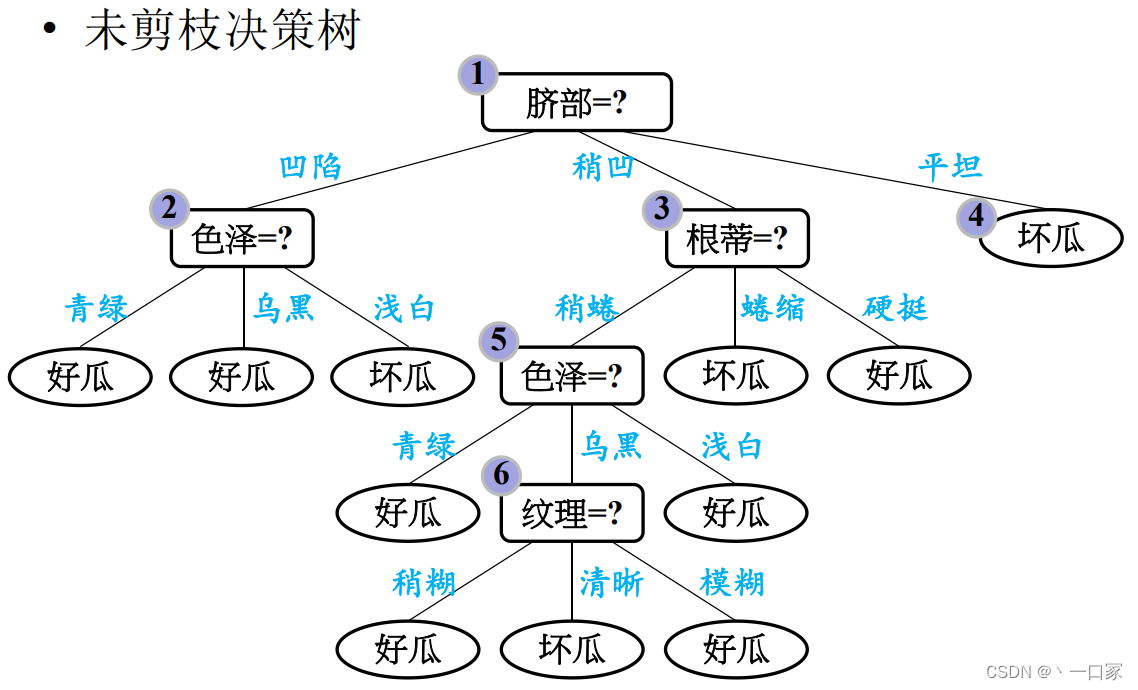

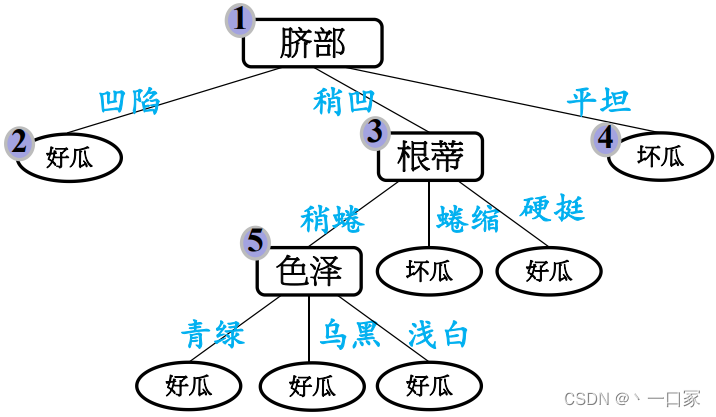

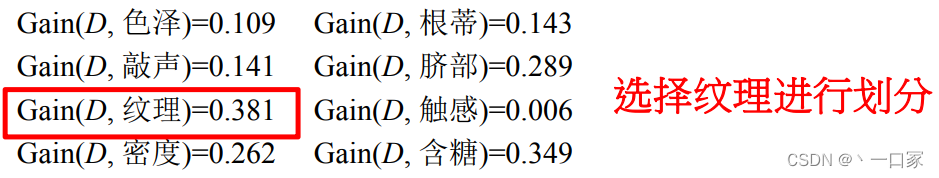

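First, a complete decision tree is grown, using information gain as the splitting criterion (the same criterion used in the steps and in the code below).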

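This tree is used as the example to show the difference between pre-pruning and post-pruning.

Pre-pruning

Pre-pruning evaluates each node before it is split, while the tree is still being built: if splitting the current node does not improve the generalization performance of the tree, the node is not split and is marked as a leaf node.

In short: prune while building.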

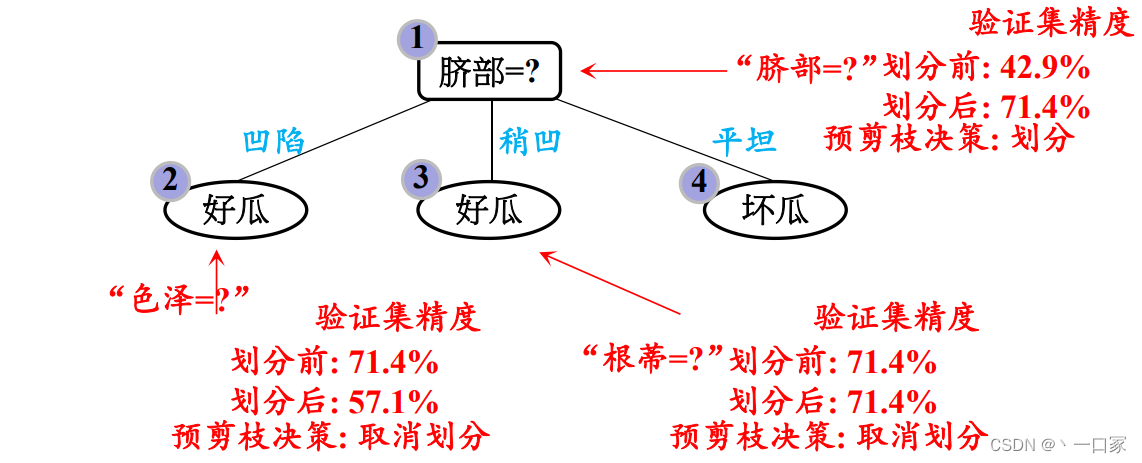

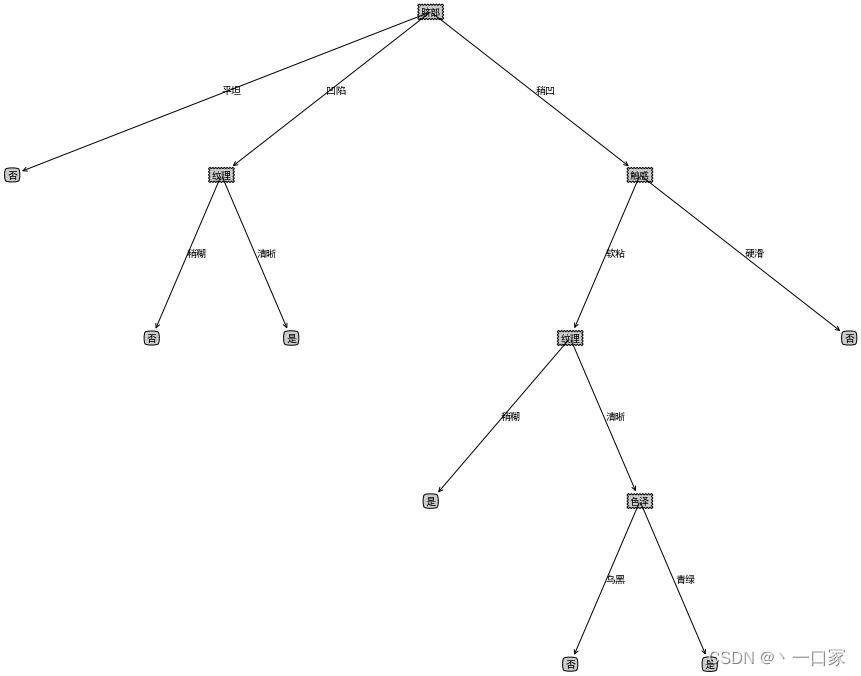

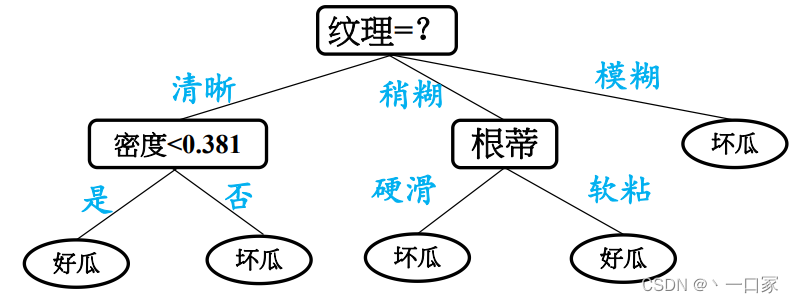

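1. First, decide whether to split on "navel" (脐部). Before the split there is only the root node, which is also a leaf and is labeled "good melon" (好瓜). Evaluated on the validation set, only the 3 samples {4,5,8} are classified correctly, so the accuracy is 3/7 = 42.9%. After splitting on navel, samples {4,5,8,11,12} are classified correctly and the accuracy is 5/7 = 71.4%. The accuracy improves, so the split on navel is kept.

2. After the split on navel, node (2) is considered. Information gain is used again to pick the best attribute, which is "color" (色泽). After splitting on color, however, sample {5} is reclassified from "good melon" to "bad melon" (坏瓜), and only {4,8,11,12} are classified correctly, so the accuracy drops to 4/7 = 57.1%. Pre-pruning therefore forbids splitting this node.

3. For node (3) the best attribute is "root" (根蒂). After this split the validation accuracy is still 71.4%, that is, it does not improve, so this node is not split either.

The decision tree obtained by pre-pruning is shown in the corresponding figure.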
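The first step of the walkthrough can be checked with a small sketch. It assumes the validation set is samples {4,5,8,9,11,12,13}, takes their navel values from the dataset used in the code below, and assumes the three branch leaves are labeled 凹陷 -> 好瓜, 稍凹 -> 好瓜, 平坦 -> 坏瓜 (the labeling consistent with the result stated above). The names `validation`, `accuracy` and `leaf_label` are illustrative only.

```python
# Validation samples: id -> (navel value, true label).
validation = {
    4: ("凹陷", "好瓜"), 5: ("凹陷", "好瓜"), 8: ("稍凹", "好瓜"),
    9: ("稍凹", "坏瓜"), 11: ("平坦", "坏瓜"), 12: ("平坦", "坏瓜"),
    13: ("凹陷", "坏瓜"),
}

def accuracy(predict):
    """Return (accuracy, ids classified correctly) for a navel -> label predictor."""
    hits = [i for i, (navel, label) in validation.items() if predict(navel) == label]
    return len(hits) / len(validation), hits

# Before splitting: the root is a single leaf labeled "好瓜".
acc_before, hits_before = accuracy(lambda navel: "好瓜")

# After splitting on navel: each branch is a leaf (labels assumed as stated in the lead-in).
leaf_label = {"凹陷": "好瓜", "稍凹": "好瓜", "平坦": "坏瓜"}
acc_after, hits_after = accuracy(lambda navel: leaf_label[navel])

# Pre-pruning keeps the split only if validation accuracy strictly improves.
split = acc_after > acc_before
print(hits_before, f"{acc_before:.1%}")
print(hits_after, f"{acc_after:.1%}")
print("split on navel:", split)
```

The same comparison is repeated at every node that is about to be split, each time using only the validation samples that reach that node.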

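Post-pruning

Post-pruning first grows the entire decision tree and then examines the non-leaf nodes from the bottom up: if replacing the subtree rooted at a node with a leaf node improves generalization performance, the subtree is replaced with that leaf.

In short: build first, then prune.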

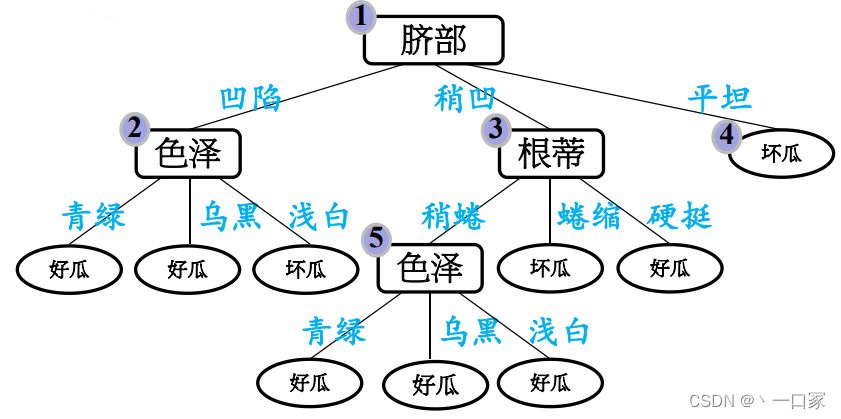

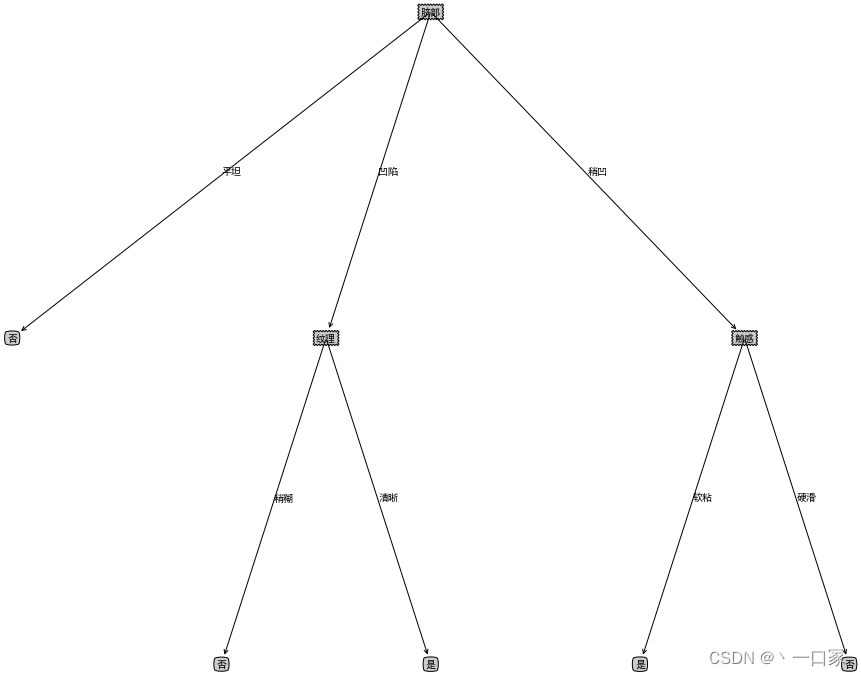

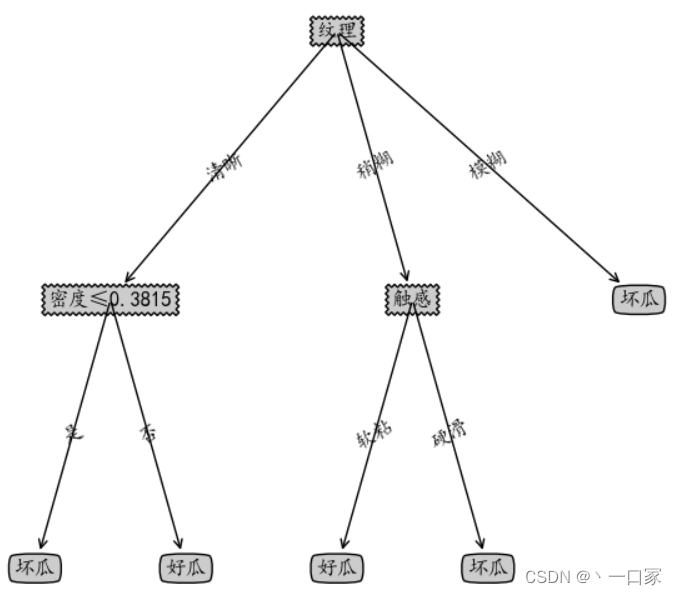

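Post-pruning first examines node (6) in the figure above. Removing the subtree rooted at it is equivalent to replacing node (6) with a leaf node. The new leaf contains training samples {7,15}, so it is labeled "good melon" (the numbers of positive and negative samples are equal here, so either class may be chosen). The accuracy of the tree on the validation set then becomes 57.1% (the unpruned tree scores 42.9%), so post-pruning decides to prune. The tree after this step is shown in the corresponding figure.

Next, node (5) is examined in the same way: the subtree rooted at it is replaced with a leaf node that contains training samples {6,7,15}, which the majority rule labels "good melon". The validation accuracy of the tree is still 57.1%, so no pruning is done.

For node (2), replacing it with a leaf node that contains training samples {1,2,3,14} gives the label "good melon", and the validation accuracy rises to 71.4%, so the node is pruned.

Likewise, nodes (3) and (1) are replaced with leaf nodes in turn. The validation accuracy does not improve in either case, so both branches are kept.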

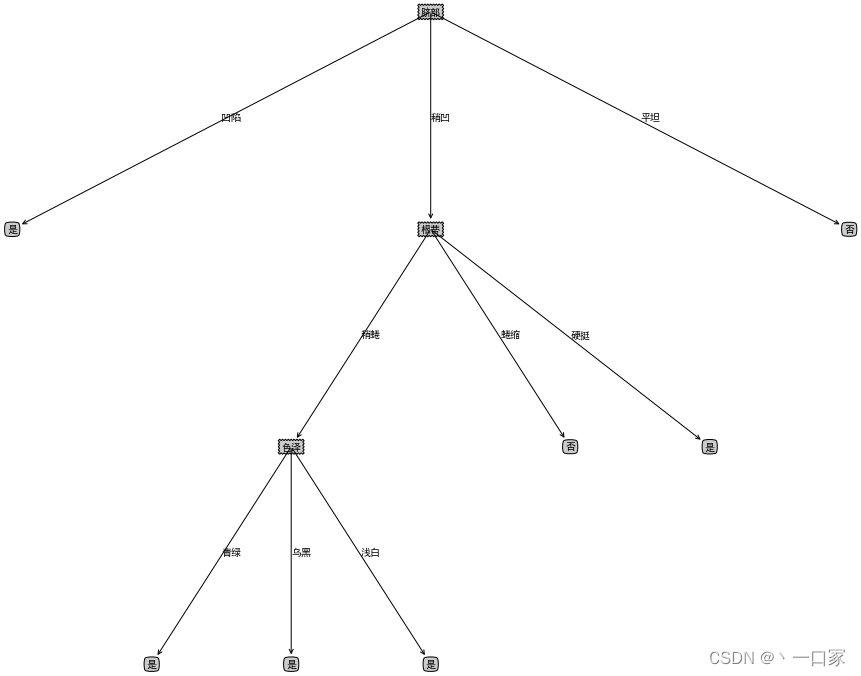

The decision tree obtained by post-pruning is shown in the corresponding figure.
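The pruning decisions for nodes (6), (5) and (2) can be summarized in a short sketch. The exact fractions 3/7, 4/7 and 5/7 are inferred here from the percentages quoted above and the 7 validation samples; `steps` and `should_prune` are illustrative names.

```python
from fractions import Fraction

# (node, validation accuracy before pruning it, accuracy after replacing it with a leaf)
steps = [
    ("node 6", Fraction(3, 7), Fraction(4, 7)),  # 42.9% -> 57.1%
    ("node 5", Fraction(4, 7), Fraction(4, 7)),  # 57.1% -> 57.1%
    ("node 2", Fraction(4, 7), Fraction(5, 7)),  # 57.1% -> 71.4%
]

def should_prune(acc_before, acc_after):
    """Prune only when replacing the subtree with a leaf strictly improves accuracy."""
    return acc_after > acc_before

decisions = {node: should_prune(before, after) for node, before, after in steps}
for node, before, after in steps:
    print(f"{node}: {float(before):.1%} -> {float(after):.1%}, prune={decisions[node]}")
```

Using a strict comparison keeps a subtree when pruning it changes nothing, which is why node (5) survives even though the pruned tree would be simpler.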

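These two examples also show the strengths and weaknesses of the two approaches. Post-pruning usually keeps more branches than pre-pruning. In general, a post-pruned tree has a low risk of underfitting, and its generalization performance is often better than that of a pre-pruned tree. However, post-pruning can only start after the full tree has been grown, and every non-leaf node has to be examined from the bottom up, so its training time is much higher than that of an unpruned or a pre-pruned tree.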

Python implementation

The watermelon dataset above is used for testing.

1. Create the dataset

import math
import numpy as np

# Create watermelon dataset 2.0 (from the watermelon book)
def createDataXG20():
    data = np.array([['青绿', '蜷缩', '浊响', '清晰', '凹陷', '硬滑']
                    , ['乌黑', '蜷缩', '沉闷', '清晰', '凹陷', '硬滑']
                    , ['乌黑', '蜷缩', '浊响', '清晰', '凹陷', '硬滑']
                    , ['青绿', '蜷缩', '沉闷', '清晰', '凹陷', '硬滑']
                    , ['浅白', '蜷缩', '浊响', '清晰', '凹陷', '硬滑']
                    , ['青绿', '稍蜷', '浊响', '清晰', '稍凹', '软粘']
                    , ['乌黑', '稍蜷', '浊响', '稍糊', '稍凹', '软粘']
                    , ['乌黑', '稍蜷', '浊响', '清晰', '稍凹', '硬滑']
                    , ['乌黑', '稍蜷', '沉闷', '稍糊', '稍凹', '硬滑']
                    , ['青绿', '硬挺', '清脆', '清晰', '平坦', '软粘']
                    , ['浅白', '硬挺', '清脆', '模糊', '平坦', '硬滑']
                    , ['浅白', '蜷缩', '浊响', '模糊', '平坦', '软粘']
                    , ['青绿', '稍蜷', '浊响', '稍糊', '凹陷', '硬滑']
                    , ['浅白', '稍蜷', '沉闷', '稍糊', '凹陷', '硬滑']
                    , ['乌黑', '稍蜷', '浊响', '清晰', '稍凹', '软粘']
                    , ['浅白', '蜷缩', '浊响', '模糊', '平坦', '硬滑']
                    , ['青绿', '蜷缩', '沉闷', '稍糊', '稍凹', '硬滑']])
    # '是' = good melon, '否' = bad melon
    label = np.array(['是', '是', '是', '是', '是', '是', '是', '是', '否', '否', '否', '否', '否', '否', '否', '否', '否'])
    # Feature names: color, root, knock sound, texture, navel, touch
    name = np.array(['色泽', '根蒂', '敲声', '纹理', '脐部', '触感'])
    return data, label, name

# Split into training set (samples 1,2,3,6,7,10,14,15,16,17) and test set (samples 4,5,8,9,11,12,13)
def splitXgData20(xgData, xgLabel):
    xgDataTrain = xgData[[0, 1, 2, 5, 6, 9, 13, 14, 15, 16], :]
    xgDataTest = xgData[[3, 4, 7, 8, 10, 11, 12], :]
    xgLabelTrain = xgLabel[[0, 1, 2, 5, 6, 9, 13, 14, 15, 16]]
    xgLabelTest = xgLabel[[3, 4, 7, 8, 10, 11, 12]]
    return xgDataTrain, xgLabelTrain, xgDataTest, xgLabelTest

2. Build the decision tree

# Helper: number of elements in a numpy array equal to a given value (0 if the array is None)
equalNums = lambda x, y: 0 if x is None else x[x == y].size

# Information entropy
def singleEntropy(x):
    """Compute the information entropy of an input sequence."""
    # Convert to a numpy array
    x = np.asarray(x)
    # Distinct values
    xValues = set(x)
    # Accumulate the entropy
    entropy = 0
    for xValue in xValues:
        p = equalNums(x, xValue) / x.size
        entropy -= p * math.log(p, 2)
    return entropy

# Conditional entropy
def conditionnalEntropy(feature, y):
    """Compute the entropy of y conditioned on the feature column."""
    # Convert to numpy arrays
    feature = np.asarray(feature)
    y = np.asarray(y)
    # Distinct values of the feature
    featureValues = set(feature)
    # Accumulate the weighted entropy
    entropy = 0
    for feat in featureValues:
        # feature == feat is a boolean mask of the rows whose feature value equals feat;
        # y[feature == feat] is the subset of y for those rows
        p = equalNums(feature, feat) / feature.size
        entropy += p * singleEntropy(y[feature == feat])
    return entropy

# Information gain
def infoGain(feature, y):
    return singleEntropy(y) - conditionnalEntropy(feature, y)

# Information gain ratio
def infoGainRatio(feature, y):
    return 0 if singleEntropy(feature) == 0 else infoGain(feature, y) / singleEntropy(feature)

# Feature selection
def bestFeature(data, labels, method = 'id3'):
    assert method in ['id3', 'c45'], "method must be 'id3' or 'c45'"
    data = np.asarray(data)
    labels = np.asarray(labels)
    # Pick the scoring function by method: id3 -> information gain; c45 -> information gain ratio
    def calcEnt(feature, labels):
        if method == 'id3':
            return infoGain(feature, labels)
        elif method == 'c45':
            return infoGainRatio(feature, labels)
    # Number of features, i.e. number of columns in data
    featureNum = data.shape[1]
    # Find the best feature (with >=, the last feature wins ties)
    bestEnt = 0
    bestFeat = -1
    for feature in range(featureNum):
        ent = calcEnt(data[:, feature], labels)
        if ent >= bestEnt:
            bestEnt = ent
            bestFeat = feature
        # print("feature " + str(feature + 1) + " ent: " + str(ent) + "\t bestEnt: " + str(bestEnt))
    return bestFeat, bestEnt

# Split the dataset by a feature: drop the feature column from data, then group data and labels by its values
def splitFeatureData(data, labels, feature):
    """feature is the column index of the feature."""
    # Feature column
    features = np.asarray(data)[:, feature]
    # Remove the feature column from the dataset
    data = np.delete(np.asarray(data), feature, axis = 1)
    # Labels
    labels = np.asarray(labels)
    uniqFeatures = set(features)
    dataSet = {}
    labelSet = {}
    for feat in uniqFeatures:
        dataSet[feat] = data[features == feat]
        labelSet[feat] = labels[features == feat]
    return dataSet, labelSet

# Majority vote
def voteLabel(labels):
    uniqLabels = list(set(labels))
    labels = np.asarray(labels)
    labelNum = []
    for label in uniqLabels:
        # Count the occurrences of each label
        labelNum.append(equalNums(labels, label))
    # Return the most frequent label (ties are resolved by the iteration order of the set)
    return uniqLabels[labelNum.index(max(labelNum))]

# Build the decision tree
def createTree(data, labels, names, method = 'id3'):
    data = np.asarray(data)
    labels = np.asarray(labels)
    names = np.asarray(names)
    # All samples share a single label
    if len(set(labels)) == 1:
        return labels[0]
    # No features left to split on
    elif data.size == 0:
        return voteLabel(labels)
    # Otherwise choose the best feature
    bestFeat, bestEnt = bestFeature(data, labels, method = method)
    # Name of the chosen feature
    bestFeatName = names[bestFeat]
    # Remove the chosen feature from the list of names
    names = np.delete(names, [bestFeat])
    # Create the tree node for the chosen feature
    decisionTree = {bestFeatName: {}}
    # Split the data on the best feature
    dataSet, labelSet = splitFeatureData(data, labels, bestFeat)
    # Recurse on the subset for each value of the best feature
    for featValue in dataSet.keys():
        decisionTree[bestFeatName][featValue] = createTree(dataSet.get(featValue), labelSet.get(featValue), names, method)
    return decisionTree

# Tree statistics: number of leaf nodes and tree depth
def getTreeSize(decisionTree):
    nodeName = list(decisionTree.keys())[0]
    nodeValue = decisionTree[nodeName]
    leafNum = 0
    treeDepth = 0
    for val in nodeValue.keys():
        if type(nodeValue[val]) == dict:
            # Subtree: recurse once and reuse both results
            subLeafNum, subDepth = getTreeSize(nodeValue[val])
            leafNum += subLeafNum
            leafDepth = 1 + subDepth
        else:
            leafNum += 1
            leafDepth = 1
        treeDepth = max(treeDepth, leafDepth)
    return leafNum, treeDepth

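# Classify a new sample with the tree
def dtClassify(decisionTree, rowData, names):
    names = list(names)
    # Feature tested at this node
    feature = list(decisionTree.keys())[0]
    # Branches of this node, keyed by feature value
    featDict = decisionTree[feature]
    # Column index of the feature
    feat = names.index(feature)
    # Value of that feature in the sample
    featVal = rowData[feat]
    # None is returned if the tree has no branch for this feature value
    classLabel = None
    # Look up the branch; a dict is a subtree, so recurse into it
    if featVal in featDict.keys():
        if type(featDict[featVal]) == dict:
            classLabel = dtClassify(featDict[featVal], rowData, names)
        else:
            classLabel = featDict[featVal]
    return classLabel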
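As a quick, self-contained check of the entropy and information gain definitions used above, the following sketch recomputes the gain of "脐部" on the 10 training samples. It is an independent plain-Python rewrite for illustration (the names `entropy` and `info_gain` are not from the original code), not a replacement for the functions above.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (base 2) of a list of class labels."""
    total = len(labels)
    return -sum(c / total * math.log2(c / total) for c in Counter(labels).values())

def info_gain(feature_values, labels):
    """Entropy of `labels` minus the weighted entropy after grouping by `feature_values`."""
    total = len(labels)
    groups = {}
    for value, label in zip(feature_values, labels):
        groups.setdefault(value, []).append(label)
    conditional = sum(len(g) / total * entropy(g) for g in groups.values())
    return entropy(labels) - conditional

# "脐部" column and labels of the training samples (ids 1, 2, 3, 6, 7, 10, 14, 15, 16, 17).
navel = ['凹陷', '凹陷', '凹陷', '稍凹', '稍凹', '平坦', '凹陷', '稍凹', '平坦', '稍凹']
label = ['是', '是', '是', '是', '是', '否', '否', '否', '否', '否']

gain = info_gain(navel, label)
print(round(entropy(label), 3), round(gain, 3))
```

With 5 positive and 5 negative training samples the base entropy is 1 bit, so the gain equals 1 minus the weighted entropy of the three navel branches.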

3. Visualize the decision tree

# Visualization, adapted mainly from "Machine Learning in Action"
import matplotlib.pyplot as plt

plt.rcParams['font.sans-serif'] = ['SimHei']  # font needed to render Chinese labels
plt.rcParams['figure.figsize'] = (10.0, 8.0)  # default figure size
plt.rcParams['image.interpolation'] = 'nearest'
plt.rcParams['image.cmap'] = 'gray'

decisionNodeStyle = dict(boxstyle = "sawtooth", fc = "0.8")
leafNodeStyle = {"boxstyle": "round4", "fc": "0.8"}
arrowArgs = {"arrowstyle": "<-"}

# Draw a node
def plotNode(nodeText, centerPt, parentPt, nodeStyle):
    createPlot.ax1.annotate(nodeText, xy = parentPt, xycoords = "axes fraction", xytext = centerPt
                            , textcoords = "axes fraction", va = "center", ha = "center", bbox = nodeStyle, arrowprops = arrowArgs)

# Add the text label on an arrow
def plotMidText(centerPt, parentPt, lineText):
    xMid = (centerPt[0] + parentPt[0]) / 2.0
    yMid = (centerPt[1] + parentPt[1]) / 2.0
    createPlot.ax1.text(xMid, yMid, lineText)

# Draw the tree
def plotTree(decisionTree, parentPt, parentValue):
    # Width (leaf count) and height (depth) of this subtree
    leafNum, treeDepth = getTreeSize(decisionTree)
    # Horizontal offset between leaf nodes (guarded so a tree with a single leaf does not divide by zero)
    plotTree.xOff = plotTree.figSize / max(plotTree.totalLeaf - 1, 1)
    # Vertical offset between levels
    plotTree.yOff = plotTree.figSize / plotTree.totalDepth
    # Node name
    nodeName = list(decisionTree.keys())[0]
    # For the root, start and end points coincide, so no line is drawn. For an inner node, start from
    # the current leaf position and add half the width of this subtree to get the node's x position
    centerPt = (plotTree.x + (leafNum - 1) * plotTree.xOff / 2.0, plotTree.y)
    # Draw this decision node
    plotNode(nodeName, centerPt, parentPt, decisionNodeStyle)
    # Label the edge with the parent's attribute value
    plotMidText(centerPt, parentPt, parentValue)
    # Branches of this node
    treeValue = decisionTree[nodeName]
    # Height of the next level
    plotTree.y = plotTree.y - plotTree.yOff
    # Draw the next level
    for val in treeValue.keys():
        # A dict is a subtree, so recurse; anything else is a leaf node
        if type(treeValue[val]) == dict:
            plotTree(treeValue[val], centerPt, str(val))
        else:
            plotNode(treeValue[val], (plotTree.x, plotTree.y), centerPt, leafNodeStyle)
            plotMidText((plotTree.x, plotTree.y), centerPt, str(val))
            # Move to the next leaf position
            plotTree.x = plotTree.x + plotTree.xOff
    # Go back up one level after the recursion finishes
    plotTree.y = plotTree.y + plotTree.yOff

# Plot the decision tree
def createPlot(decisionTree):
    fig = plt.figure(1, facecolor = "white")
    fig.clf()
    axprops = {"xticks": [], "yticks": []}
    createPlot.ax1 = plt.subplot(111, frameon = False, **axprops)
    # Size of the drawing area
    plotTree.figSize = 1.5
    # Total size of the tree
    plotTree.totalLeaf, plotTree.totalDepth = getTreeSize(decisionTree)
    # Initial x position of the leaves and initial y position of the root
    plotTree.x = 0
    plotTree.y = plotTree.figSize
    plotTree(decisionTree, (plotTree.figSize / 2.0, plotTree.y), "")
    plt.show()

4. Pre-pruning

# Build a pre-pruned decision tree
def createTreePrePruning(dataTrain, labelTrain, dataTest, labelTest, names, method = 'id3'):
    trainData = np.asarray(dataTrain)
    labelTrain = np.asarray(labelTrain)
    labelTest = np.asarray(labelTest)
    names = np.asarray(names)
    # All samples share a single label
    if len(set(labelTrain)) == 1:
        return labelTrain[0]
    # No features left to split on
    elif trainData.size == 0:
        return voteLabel(labelTrain)
    # Otherwise choose the best feature
    bestFeat, bestEnt = bestFeature(dataTrain, labelTrain, method = method)
    # Name of the chosen feature
    bestFeatName = names[bestFeat]
    # Remove the chosen feature from the list of names
    names = np.delete(names, [bestFeat])
    # Split the training data on the best feature
    dataTrainSet, labelTrainSet = splitFeatureData(dataTrain, labelTrain, bestFeat)
    # Pre-pruning evaluation
    # Majority label before the split
    labelTrainLabelPre = voteLabel(labelTrain)
    labelTrainRatioPre = equalNums(labelTrain, labelTrainLabelPre) / labelTrain.size
    # Accuracy after the split
    if dataTest is not None:
        dataTestSet, labelTestSet = splitFeatureData(dataTest, labelTest, bestFeat)
        # Test accuracy before the split
        labelTestRatioPre = equalNums(labelTest, labelTrainLabelPre) / labelTest.size
        # Number of test samples classified correctly after the split, summed over the feature values
        labelTrainEqNumPost = 0
        for val in labelTrainSet.keys():
            labelTrainEqNumPost += equalNums(labelTestSet.get(val), voteLabel(labelTrainSet.get(val))) + 0.0
        # Test accuracy after the split
        labelTestRatioPost = labelTrainEqNumPost / labelTest.size
    # No evaluation data, but the training accuracy before the split is at its minimum of 0.5: keep splitting
    if dataTest is None and labelTrainRatioPre == 0.5:
        decisionTree = {bestFeatName: {}}
        for featValue in dataTrainSet.keys():
            decisionTree[bestFeatName][featValue] = createTreePrePruning(dataTrainSet.get(featValue), labelTrainSet.get(featValue)
                                                                        , None, None, names, method)
    elif dataTest is None:
        return labelTrainLabelPre
    # If the split does not improve the test accuracy, return a leaf node,
    # following the rule in the worked example above (split only when accuracy improves)
    elif labelTestRatioPost <= labelTestRatioPre:
        return labelTrainLabelPre
    else:
        # Create the tree node for the chosen feature
        decisionTree = {bestFeatName: {}}
        # Recurse on the subset for each value of the best feature
        for featValue in dataTrainSet.keys():
            decisionTree[bestFeatName][featValue] = createTreePrePruning(dataTrainSet.get(featValue), labelTrainSet.get(featValue)
                                                                        , dataTestSet.get(featValue), labelTestSet.get(featValue)
                                                                        , names, method)
    return decisionTree

# Load watermelon dataset 2.0 and split it into a training set and a test set
xgData, xgLabel, xgName = createDataXG20()
xgDataTrain, xgLabelTrain, xgDataTest, xgLabelTest = splitXgData20(xgData, xgLabel)

# Build the unpruned tree
xgTreeTrain = createTree(xgDataTrain, xgLabelTrain, xgName, method = 'id3')

# Build the pre-pruned tree
xgTreePrePruning = createTreePrePruning(xgDataTrain, xgLabelTrain, xgDataTest, xgLabelTest, xgName, method = 'id3')

# Plot the tree before pruning
print("Tree before pruning")
createPlot(xgTreeTrain)

# Plot the tree after pruning
print("Tree after pruning")
createPlot(xgTreePrePruning)

(Figures: the tree before pruning and the tree after pre-pruning.)

5. Post-pruning

# Build a decision tree that also stores the pre-split label of every decision node
def createTreeWithLabel(data, labels, names, method = 'id3'):
    data = np.asarray(data)
    labels = np.asarray(labels)
    names = np.asarray(names)
    # Label the node would get if it were not split
    votedLabel = voteLabel(labels)
    # All samples share a single label
    if len(set(labels)) == 1:
        return votedLabel
    # No features left to split on
    elif data.size == 0:
        return votedLabel
    # Otherwise choose the best feature
    bestFeat, bestEnt = bestFeature(data, labels, method = method)
    # Name of the chosen feature
    bestFeatName = names[bestFeat]
    # Remove the chosen feature from the list of names
    names = np.delete(names, [bestFeat])
    # Create the tree node; "_vpdl" (voted pre-division label) stores the label before the split
    decisionTree = {bestFeatName: {"_vpdl": votedLabel}}
    # Split the data on the best feature
    dataSet, labelSet = splitFeatureData(data, labels, bestFeat)
    # Recurse on the subset for each value of the best feature
    for featValue in dataSet.keys():
        decisionTree[bestFeatName][featValue] = createTreeWithLabel(dataSet.get(featValue), labelSet.get(featValue), names, method)
    return decisionTree

# Convert a tree with pre-split labels into a regular tree.
# The dicts are copied because Python dicts are mutable and shared by reference;
# without the copies the input tree would be modified in place.
def convertTree(labeledTree):
    labeledTreeNew = labeledTree.copy()
    nodeName = list(labeledTree.keys())[0]
    labeledTreeNew[nodeName] = labeledTree[nodeName].copy()
    for val in list(labeledTree[nodeName].keys()):
        if val == "_vpdl":
            labeledTreeNew[nodeName].pop(val)
        elif type(labeledTree[nodeName][val]) == dict:
            labeledTreeNew[nodeName][val] = convertTree(labeledTree[nodeName][val])
    return labeledTreeNew

# Post-pruning: after training, evaluate replacing decision nodes with leaves.
# The input must be a tree built by createTreeWithLabel, because every decision node needs its "_vpdl" label.
def treePostPruning(labeledTree, dataTest, labelTest, names):
    newTree = labeledTree.copy()
    dataTest = np.asarray(dataTest)
    labelTest = np.asarray(labelTest)
    names = np.asarray(names)
    # Name of the decision node, i.e. the feature name
    featName = list(labeledTree.keys())[0]
    # Column index of that feature
    featCol = np.argwhere(names == featName)[0][0]
    names = np.delete(names, [featCol])
    # Dict of all branches of this feature
    newTree[featName] = labeledTree[featName].copy()
    featValueDict = newTree[featName]
    featPreLabel = featValueDict.pop("_vpdl")
    # Check whether any test data reaches this node. np.asarray(None) has shape (),
    # so the sum of the shape is 0 when no data was passed in
    dataFlag = 1 if sum(dataTest.shape) > 0 else 0
    if dataFlag == 1:
        dataTestSet, labelTestSet = splitFeatureData(dataTest, labelTest, featCol)
    for featValue in featValueDict.keys():
        if dataFlag == 1 and type(featValueDict[featValue]) == dict:
            # Subtree with test data: prune it recursively
            newTree[featName][featValue] = treePostPruning(featValueDict[featValue], dataTestSet.get(featValue), labelTestSet.get(featValue), names)
        elif dataFlag == 0 and type(featValueDict[featValue]) == dict:
            # Subtree without test data: it cannot be evaluated, so only strip its labels
            newTree[featName][featValue] = convertTree(featValueDict[featValue])
    # 1 if any child is still a subtree after the recursion, else 0.
    # Only nodes whose children are all leaves are evaluated here.
    subTreeFlag = 1 if any(type(child) == dict for child in featValueDict.values()) else 0
    # Evaluation needs the label the node had before the split. Two options were considered:
    # 1. keep the training function unchanged and relabel nodes from the training data at evaluation time;
    # 2. extend the training function to store the pre-split label of every node, so that evaluation
    #    only needs the test data and the training data is not reused.
    # The second option is used here, via the separate function createTreeWithLabel
    # (adding a parameter to createTree would also work).
    if dataFlag == 1 and subTreeFlag == 0:
        ratioPreDivision = equalNums(labelTest, featPreLabel) / labelTest.size
        equalNum = 0
        for val in labelTestSet.keys():
            # Test values that never occurred in training have no branch and count as errors
            if val in featValueDict:
                equalNum += equalNums(labelTestSet[val], featValueDict[val])
        ratioAfterDivision = equalNum / labelTest.size
        # If the test accuracy with the split is lower than without it, the split is useless:
        # prune by replacing the node with its pre-split label.
        # Note the strict "<" here; "<=" can be used instead if desired
        if ratioAfterDivision < ratioPreDivision:
            newTree = featPreLabel
    return newTree

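# Post-pruning needs the "_vpdl" labels, so build the labeled tree first and then prune it
xgTreeBeforePostPruning = createTreeWithLabel(xgDataTrain, xgLabelTrain, xgName, method = 'id3')
xgTreePostPruning = treePostPruning(xgTreeBeforePostPruning, xgDataTest, xgLabelTest, xgName)
createPlot(xgTreePostPruning)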

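Handling continuous values

A continuous attribute has infinitely many possible values, so a node cannot be split on it directly by enumerating its values. How can continuous attributes be used in decision tree learning? By discretizing them: bi-partition splits the values of a continuous attribute into two parts.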

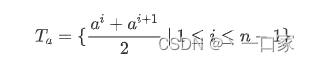

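Suppose the continuous attribute $a$ takes $n$ distinct values on the sample set $D$, sorted in ascending order as $a^1, a^2, \ldots, a^n$. A split point $t$ divides $D$ into the subsets $D_t^-$ and $D_t^+$, where $D_t^-$ contains the samples whose value of $a$ is not greater than $t$ and $D_t^+$ contains the samples whose value of $a$ is greater than $t$. Consider the set of $n-1$ candidate split points $T_a = \{ (a^i + a^{i+1})/2 \mid 1 \le i \le n-1 \}$.

Each time, one candidate split point $t$ is taken from $T_a$, which turns $a$ into a two-valued discrete attribute (1 if $a \le t$, 0 otherwise). The information gain or gain ratio can then be computed for every split point $t$, and the split point with the largest value is chosen as the best split for this attribute, that is: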

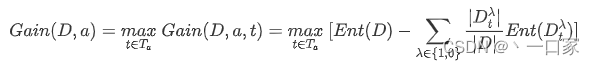

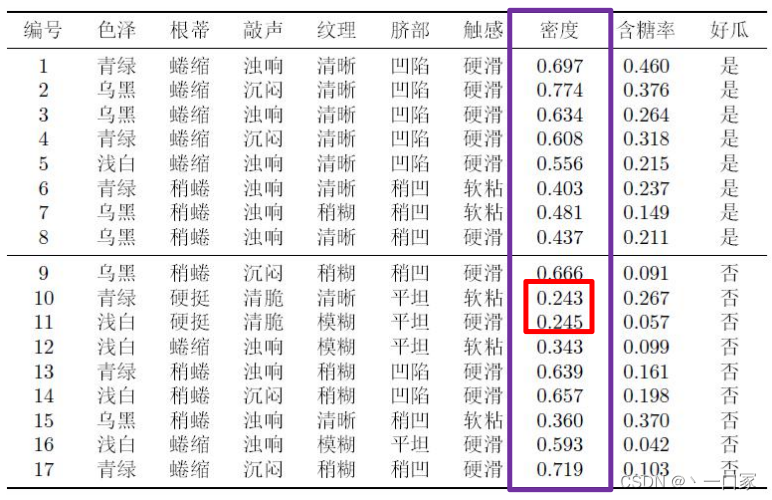

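Example: given the data in the table, which attribute should be chosen for the split according to information gain?

1. For the attribute "density" (密度), the candidate split point set contains 16 values, for example (0.245 + 0.243) / 2 = 0.244.

Take the split point t = 0.381. Then:

D_t^+ (density > 0.381) = {1,2,3,4,5,6,7,8,9,13,14,16,17}: 8 good melons, 5 bad melons

D_t^- (density ≤ 0.381) = {10,11,12,15}: 0 good melons, 4 bad melons

Computing the information gain at every candidate split point gives a maximum gain of 0.262, at the split point 0.381.

2. For "sugar content" (含糖率), computing the information gain at every candidate split point gives a maximum gain of 0.349, at the split point 0.126.

3. Compute the information gain of the discrete attributes such as "color" (色泽) in the same way. In the watermelon book these are: color 0.109, root 0.143, knock sound 0.141, texture 0.381, navel 0.289, touch 0.006. "Texture" (纹理) has the largest gain of all eight attributes, so it is chosen for the split.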
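The search for the best split point can be sketched as follows. The density and sugar values are taken from the dataset used in the code below; `entropy` and `best_split` are illustrative names, and this is a minimal plain-Python version of the bi-partition rule rather than the implementation that follows. Under these assumptions it is meant to reproduce the gains quoted above (about 0.262 for density and about 0.349 for sugar content).

```python
import math

def entropy(labels):
    """Shannon entropy (base 2) of a list of class labels."""
    total = len(labels)
    result = 0.0
    for cls in set(labels):
        p = labels.count(cls) / total
        result -= p * math.log2(p)
    return result

def best_split(values, labels):
    """Return (split point, information gain) with the largest gain over all midpoints."""
    base = entropy(labels)
    distinct = sorted(set(values))
    best_t, best_gain = None, -1.0
    for lo, hi in zip(distinct, distinct[1:]):
        t = (lo + hi) / 2  # candidate split point: midpoint of adjacent distinct values
        left = [y for x, y in zip(values, labels) if x <= t]
        right = [y for x, y in zip(values, labels) if x > t]
        cond = (len(left) * entropy(left) + len(right) * entropy(right)) / len(labels)
        if base - cond > best_gain:
            best_t, best_gain = t, base - cond
    return best_t, best_gain

# Density and sugar content of samples 1..17; the first 8 are good melons (1), the rest bad (0).
density = [0.697, 0.774, 0.634, 0.608, 0.556, 0.403, 0.481, 0.437, 0.666,
           0.243, 0.245, 0.343, 0.639, 0.657, 0.360, 0.593, 0.719]
sugar = [0.460, 0.376, 0.264, 0.318, 0.215, 0.237, 0.149, 0.211, 0.091,
         0.267, 0.057, 0.099, 0.161, 0.198, 0.370, 0.042, 0.103]
labels = [1] * 8 + [0] * 9

for name, column in (("density", density), ("sugar", sugar)):
    t, gain = best_split(column, labels)
    print(name, round(t, 4), round(gain, 3))
```

Note that the midpoint of 0.360 and 0.403 is 0.3815, which the text rounds to 0.381.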

Python implementation

The same watermelon dataset is used again, with two continuous columns added, density and sugar content, so that the dataset contains both discrete and continuous attributes.

1. Build the dataset

def createDataSet():
    dataSet2 = [["青绿", "蜷缩", "浊响", "清晰", "凹陷", "硬滑", 0.697, 0.460, "好瓜"],
                ["乌黑", "蜷缩", "沉闷", "清晰", "凹陷", "硬滑", 0.774, 0.376, "好瓜"],
                ["乌黑", "蜷缩", "浊响", "清晰", "凹陷", "硬滑", 0.634, 0.264, "好瓜"],
                ["青绿", "蜷缩", "沉闷", "清晰", "凹陷", "硬滑", 0.608, 0.318, "好瓜"],
                ["浅白", "蜷缩", "浊响", "清晰", "凹陷", "硬滑", 0.556, 0.215, "好瓜"],
                ["青绿", "稍蜷", "浊响", "清晰", "稍凹", "软粘", 0.403, 0.237, "好瓜"],
                ["乌黑", "稍蜷", "浊响", "稍糊", "稍凹", "软粘", 0.481, 0.149, "好瓜"],
                ["乌黑", "稍蜷", "浊响", "清晰", "稍凹", "硬滑", 0.437, 0.211, "好瓜"],
                ["乌黑", "稍蜷", "沉闷", "稍糊", "稍凹", "硬滑", 0.666, 0.091, "坏瓜"],
                ["青绿", "硬挺", "清脆", "清晰", "平坦", "软粘", 0.243, 0.267, "坏瓜"],
                ["浅白", "硬挺", "清脆", "模糊", "平坦", "硬滑", 0.245, 0.057, "坏瓜"],
                ["浅白", "蜷缩", "浊响", "模糊", "平坦", "软粘", 0.343, 0.099, "坏瓜"],
                ["青绿", "稍蜷", "浊响", "稍糊", "凹陷", "硬滑", 0.639, 0.161, "坏瓜"],
                ["浅白", "稍蜷", "沉闷", "稍糊", "凹陷", "硬滑", 0.657, 0.198, "坏瓜"],
                ["乌黑", "稍蜷", "浊响", "清晰", "稍凹", "软粘", 0.360, 0.370, "坏瓜"],
                ["浅白", "蜷缩", "浊响", "模糊", "平坦", "硬滑", 0.593, 0.042, "坏瓜"],
                ["青绿", "蜷缩", "沉闷", "稍糊", "稍凹", "硬滑", 0.719, 0.103, "坏瓜"],
                ]
    # Feature names: color, root, knock sound, texture, navel, touch, density, sugar content
    featMeans2 = ["色泽", "根蒂", "敲声", "纹理", "脐部", "触感", "密度", "含糖率"]
    isContinuous2 = [0, 0, 0, 0, 0, 0, 1, 1]  # 0 = discrete, 1 = continuous
    return dataSet2, featMeans2, isContinuous2

2. Split the dataset at a given split point

def splitDataSet_Continuous(dataSet, i, val):
    retDataSetLess, retDataSetBig = [], []  # rows with value <= split point / > split point
    for featVec in dataSet:
        # For a continuous attribute the column i is kept in the rows
        if featVec[i] <= val:
            retDataSetLess.append(featVec)
        else:
            retDataSetBig.append(featVec)
    return retDataSetLess, retDataSetBig

3. Choose the best splitting attribute by information gain (discrete and continuous attributes)

from math import log

# Compute the empirical (Shannon) entropy of a dataset
def calcShannonEnt(dataSet):
    numEntires = len(dataSet)  # number of rows in the dataset
    labelCounts = {}  # number of occurrences of each label
    for featVec in dataSet:  # count over every feature vector
        currentLabel = featVec[-1]  # the label is the last element
        if currentLabel not in labelCounts.keys():  # add the label to the dict if it is not there yet
            labelCounts[currentLabel] = 0
        labelCounts[currentLabel] += 1  # count the label
    shannonEnt = 0.0  # empirical (Shannon) entropy
    for key in labelCounts:  # accumulate the entropy
        prob = float(labelCounts[key]) / numEntires  # probability of this label
        shannonEnt -= prob * log(prob, 2)  # entropy formula
    return shannonEnt

# dateSet: dataset to split, axis: index of the feature to split on, value: feature value to select
def splitDataSet(dateSet, axis, value):
    retDataSet = []  # lists are passed by reference, so build a new list instead of modifying the input
    for featVec in dateSet:
        if featVec[axis] == value:  # the feature value matches
            reducedFeatVec = featVec[:axis]
            reducedFeatVec.extend(featVec[axis+1:])  # this line and the previous one drop the feature column
            retDataSet.append(reducedFeatVec)  # add to the returned dataset
    return retDataSet

def chooseBestFeatureTosplit_Continuous(dataSet, isContinuous):
    numFeatures = len(dataSet[0]) - 1
    baseEntropy = calcShannonEnt(dataSet)
    bestInfoGain = 0.0
    bestFeatIdx = -1
    globalBestDivideVal = None  # best split value overall (None if the best attribute is discrete)
    for i in range(numFeatures):
        newEntropy = 0.0
        localBestDivideVal = None  # best split value for the current attribute (None if it is discrete)
        uniqueVals = set([ex[i] for ex in dataSet])
        if isContinuous[i] == 0:  # discrete attribute
            for val in uniqueVals:
                subDataSet = splitDataSet(dataSet, i, val)
                prob = len(subDataSet) * 1.0 / (len(dataSet))
                newEntropy += prob * calcShannonEnt(subDataSet)
        else:  # continuous attribute
            sortedUniqueVals = sorted(list(uniqueVals))
            minEntropy = float('inf')
            # Evaluate the conditional entropy at every split point; the smallest one has the largest gain
            for j in range(1, len(sortedUniqueVals)):
                dividePointVal = (sortedUniqueVals[j - 1] + sortedUniqueVals[j]) / 2
                subDataSetLess, subDataSetBig = splitDataSet_Continuous(dataSet, i, dividePointVal)
                prob = len(subDataSetLess) * 1.0 / (len(dataSet))
                entropy = prob * calcShannonEnt(subDataSetLess) + (1 - prob) * calcShannonEnt(subDataSetBig)  # conditional entropy
                if entropy < minEntropy:
                    minEntropy = entropy
                    localBestDivideVal = dividePointVal
            newEntropy = minEntropy
        infoGain = baseEntropy - newEntropy
        if infoGain > bestInfoGain:
            bestInfoGain = infoGain
            bestFeatIdx = i
            globalBestDivideVal = localBestDivideVal  # reset to None when a discrete attribute becomes the best
    return bestFeatIdx, bestInfoGain, globalBestDivideVal

4. Build the decision tree

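# Majority vote helper required by treeGenerate (a minimal version, assumed here because the call below needs it)
def majorityCnt(classList):
    classCount = {}
    for vote in classList:
        classCount[vote] = classCount.get(vote, 0) + 1
    return max(classCount, key = classCount.get)

def treeGenerate(dataSet, featMeans, isContinuous):
    classList = [ex[-1] for ex in dataSet]
    # All samples have the same label
    if classList.count(classList[0]) == len(classList):
        return classList[0]
    # All features have been used but the samples still belong to more than one class
    if len(dataSet[0]) == 1:
        return majorityCnt(classList)
    bestFeatIdx, _, bestDivideVal = chooseBestFeatureTosplit_Continuous(dataSet, isContinuous)
    # No attribute gives a positive information gain: stop and return the majority class
    if bestFeatIdx == -1:
        return majorityCnt(classList)
    if isContinuous[bestFeatIdx] == 0:  # discrete attribute
        bestFeatMean = featMeans[bestFeatIdx]
        myTree = {bestFeatMean: {}}
        copyFeatureMeans = featMeans[:]
        copyIsContinuous = isContinuous[:]
        # Note: a discrete attribute cannot be reused once chosen, while a continuous attribute can
        del (copyFeatureMeans[bestFeatIdx])
        del (copyIsContinuous[bestFeatIdx])
        uniqueVals = set([ex[bestFeatIdx] for ex in dataSet])
        for val in uniqueVals:
            subDataSet = splitDataSet(dataSet, bestFeatIdx, val)
            myTree[bestFeatMean][val] = treeGenerate(subDataSet, copyFeatureMeans[:], copyIsContinuous[:])
    else:  # continuous attribute
        bestFeatMean = featMeans[bestFeatIdx] + '≤' + str(round(bestDivideVal, 4))
        myTree = {bestFeatMean: {}}
        subDataSetLess, subDataSetBig = splitDataSet_Continuous(dataSet, bestFeatIdx, bestDivideVal)
        # Recurse on each of the two "discrete" values: '是' (<= split point) and '否' (> split point)
        myTree[bestFeatMean]["是"] = treeGenerate(subDataSetLess, featMeans[:], isContinuous[:])
        myTree[bestFeatMean]["否"] = treeGenerate(subDataSetBig, featMeans[:], isContinuous[:])
    return myTree

5. Classify with the decision tree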

def classify_Continuous(inputTree, featMeans, testVec):
    curNodeStr = list(inputTree.keys())[0]
    isCon = curNodeStr.find('≤') > -1  # the node name tells whether the attribute is continuous
    splitCurNodeStr = curNodeStr.split('≤')[0]
    curNodeDict = inputTree[curNodeStr]
    featIdx = featMeans.index(splitCurNodeStr)  # locate the attribute by name
    classLabel = None
    for featVal in curNodeDict.keys():  # every branch value featVal of the current internal node
        if not isCon:  # discrete attribute
            if testVec[featIdx] == featVal:
                if type(curNodeDict[featVal]).__name__ == 'dict':  # internal node
                    classLabel = classify_Continuous(curNodeDict[featVal], featMeans, testVec)
                else:  # leaf node
                    classLabel = curNodeDict[featVal]
        else:  # continuous attribute
            divideVal = float(curNodeStr.split('≤')[1])
            # Compare against the float split value itself (truncating it with int() would turn 0.381 into 0)
            featVal = '是' if testVec[featIdx] <= divideVal else '否'
            if type(curNodeDict[featVal]).__name__ == 'dict':  # internal node
                classLabel = classify_Continuous(curNodeDict[featVal], featMeans, testVec)
            else:  # leaf node
                classLabel = curNodeDict[featVal]
    return classLabel

6. Build the tree and classify a sample

myDat, featMeans, isContinuous = createDataSet()
myTree = treeGenerate(myDat, featMeans, isContinuous)
print(myTree)

classLabel = classify_Continuous(myTree, ["色泽", "根蒂", "敲声", "纹理", "脐部", "触感", "密度", "含糖率"],
                                 ['浅白', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', 0.585, 0.112])
print(classLabel)  # 好瓜
