Date: 2023/3/8

Why 2D batch normalisation is used in features and 1D in classifiers?

There is no mathematical difference between them, except the dimension of input data.

nn.BatchNorm2d only accepts 4D inputs, while nn.BatchNorm1d accepts 2D or 3D inputs. The features part is built from nn.Conv2d layers, whose inputs are [batch, ch, h, w] (4D), so it needs BatchNorm2d. The classifier uses Linear layers, which accept [batch, length] or [batch, channel, length] (2D/3D), so it needs BatchNorm1d.

Two linked docs completely explain this idea.
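A minimal sketch of the shape rule described in this answer. The tensor sizes are hypothetical and chosen only for illustration; the point is that each layer normalises per channel (or per feature) and differs only in the input rank it accepts.

```python
import torch
import torch.nn as nn

# Hypothetical sizes, chosen only for illustration.
batch, channels, height, width = 8, 16, 32, 32

# Convolutional feature maps are 4D: [batch, ch, h, w] -> BatchNorm2d.
conv_features = torch.randn(batch, channels, height, width)
out_2d = nn.BatchNorm2d(num_features=channels)(conv_features)

# Flattened classifier activations are 2D: [batch, length] -> BatchNorm1d.
flat_features = torch.randn(batch, 64)
out_1d = nn.BatchNorm1d(num_features=64)(flat_features)

print(out_2d.shape)  # torch.Size([8, 16, 32, 32])
print(out_1d.shape)  # torch.Size([8, 64])

# In training mode, BatchNorm2d takes its statistics over batch, h and w,
# so each channel of the output has (approximately) zero mean.
per_channel_mean = out_2d.mean(dim=(0, 2, 3))

# Feeding the 4D tensor to BatchNorm1d fails the input-rank check.
try:
    nn.BatchNorm1d(num_features=channels)(conv_features)
except ValueError as err:
    print("BatchNorm1d rejected the 4D tensor:", err)
```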

From: Why 2D batch normalisation is used in features and 1D in classifiers? - vision - PyTorch Forums

Date: 2023/4/4

Transforming and augmenting images

Geometry:

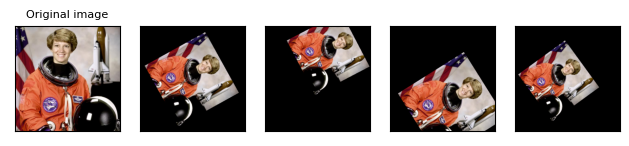

RandomAffine (random affine transformation)

import torchvision.transforms as T

affine_transformer = T.RandomAffine(degrees=(30, 70), translate=(0.1, 0.3), scale=(0.5, 0.75))

affine_imgs = [affine_transformer(orig_img) for _ in range(4)]

plot(affine_imgs)

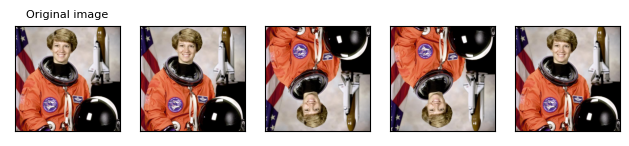

RandomHorizontalFlip (random horizontal flip)

RandomVerticalFlip (random vertical flip)

vflipper = T.RandomVerticalFlip(p=0.5)

transformed_imgs = [vflipper(orig_img) for _ in range(4)]

plot(transformed_imgs)

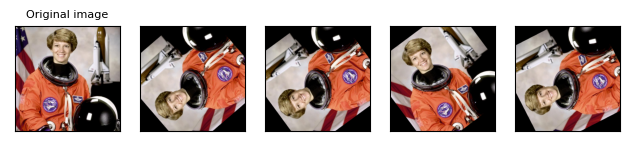

RandomResizedCrop (random crop, then resize)

resize_cropper = T.RandomResizedCrop(size=(32, 32))

resized_crops = [resize_cropper(orig_img) for _ in range(4)]

plot(resized_crops)

RandomRotation (random rotation)

rotater = T.RandomRotation(degrees=(0, 180))

rotated_imgs = [rotater(orig_img) for _ in range(4)]

plot(rotated_imgs)

Color:

RandomGrayscale (random grayscale), Grayscale (grayscale)

gray_img = T.Grayscale()(orig_img)

plot([gray_img], cmap='gray')

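Replacing the CNN in the HW3 code with AlexNet or ResNet did not effectively lower the loss or raise the accuracy. So today's task is to study other people's implementations, then modify them or try other methods.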

Date: 2023/4/5

Medium baseline: 0.73207. (Training Augmentation + Train Longer)

Yesterday I tried many training augmentation (TA) methods. With the following transforms, the scores were 0.55911 and 0.57569.

transforms.RandomGrayscale(0.5), # random grayscale

transforms.RandomRotation(degrees=(0, 180)), # random rotation

transforms.RandomResizedCrop(size=(128, 128)), # random crop, then resize

transforms.RandomAffine(degrees=0, translate=(0.5, 0.5), scale=(0.25, 1)), # random affine transformation

After removing transforms.RandomResizedCrop(size=(128, 128)), the scores were 0.4857 and 0.51095.

After also removing transforms.RandomAffine(degrees=0, translate=(0.5, 0.5), scale=(0.25, 1)), the scores were 0.65044 and 0.66832.

After adding transforms.ColorJitter(brightness=0.5, contrast=0.5, saturation=0.5, hue=0.5) and transforms.RandomInvert(), the scores were 0.55142 and 0.57868.

The rise and fall in accuracy may indicate how effective each augmentation method is for the current task.

After removing transforms.ColorJitter(brightness=0.5, contrast=0.5, saturation=0.5, hue=0.5) and transforms.RandomInvert(), adding transforms.RandomResizedCrop(size=(128, 128), scale=(0.8, 1.0), ratio=(0.8, 1.25)), adding nn.Dropout(p=0.25), and changing the RandomGrayscale probability to 0.05, the scores were 0.7358 and 0.76892.

After adding transforms.RandomHorizontalFlip(p=0.5) and transforms.RandomVerticalFlip(p=0.5), the scores were 0.78745 and 0.80378.

Date: 2023/4/11

After adding transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2, hue=0.2), the scores were 0.70166 and 0.74501.

After changing transforms.RandomResizedCrop(size=(128, 128), scale=(0.8, 1.0), ratio=(0.8, 1.25)) to transforms.RandomResizedCrop(size=(128, 128), scale=(0.8, 1.0)) and changing patience = 10 to patience = 20, the scores were 0.77635 and 0.81474. Since the gains from TA seem to have reached their limit, today I am changing the CNN to ResNet.
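For reference, `patience` controls early stopping: training stops once validation accuracy has failed to improve for more than `patience` consecutive epochs. The sketch below replays a list of per-epoch accuracies to show the mechanism. The function name, the sample accuracies, and the exact stopping condition (`stale > patience`) are assumptions, not taken from the HW3 code.

```python
def early_stopping_summary(val_accuracies, patience=20):
    """Replay per-epoch validation accuracies and report where training would stop.

    Returns (best_acc, best_epoch, last_epoch_run), with epochs counted from 0.
    """
    best_acc = float("-inf")
    best_epoch = -1
    stale = 0  # consecutive epochs without improvement
    for epoch, acc in enumerate(val_accuracies):
        if acc > best_acc:
            best_acc, best_epoch, stale = acc, epoch, 0
        else:
            stale += 1
            if stale > patience:
                return best_acc, best_epoch, epoch
    return best_acc, best_epoch, len(val_accuracies) - 1


# Hypothetical accuracies, for illustration only.
accs = [0.50, 0.60, 0.55, 0.58, 0.59, 0.57]
print(early_stopping_summary(accs, patience=2))   # (0.6, 1, 4)
print(early_stopping_summary(accs, patience=20))  # (0.6, 1, 5)
```

A larger patience lets training ride out longer plateaus, at the cost of more epochs before giving up.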

I will keep working on raising the HW3 score while also starting HW4. The HW4 simple code scores 0.606 and 0.61525.

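For HW4, removing one fully connected layer gave 0.64775 and 0.64525. Changing nhead=2 to nhead=1 gave 0.615 and 0.61175. Changing d_model=80 to d_model=120 gave 0.648 and 0.6485; changing d_model=120 to d_model=40 gave 0.54575 and 0.54375; changing d_model=40 to d_model=160 gave 0.66 and 0.663; changing nhead=1 to nhead=2 gave 0.66075 and 0.67225. I therefore chose the Classifier with one fully connected layer removed, nhead=1, and d_model=160, and will keep modifying the Classifier from there.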
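A rough sketch of what a Classifier with the chosen settings (a single fully connected output layer, nhead=1, d_model=160) could look like. Everything else is an assumption made for illustration and is not taken from the HW4 code: the input feature size (`input_dim=40`), the number of classes (`n_classes=600`), `dim_feedforward=256`, and mean pooling over the sequence.

```python
import torch
import torch.nn as nn


class Classifier(nn.Module):
    def __init__(self, input_dim=40, d_model=160, nhead=1, n_classes=600, dropout=0.1):
        super().__init__()
        # Project input features to the transformer width (input_dim is assumed).
        self.prenet = nn.Linear(input_dim, d_model)
        self.encoder_layer = nn.TransformerEncoderLayer(
            d_model=d_model,
            nhead=nhead,
            dim_feedforward=256,  # assumed value
            dropout=dropout,
            batch_first=True,     # inputs are [batch, length, features]
        )
        # A single fully connected layer as the prediction head.
        self.pred_layer = nn.Linear(d_model, n_classes)

    def forward(self, feats):
        out = self.prenet(feats)       # [batch, length, d_model]
        out = self.encoder_layer(out)  # [batch, length, d_model]
        pooled = out.mean(dim=1)       # mean pooling over the sequence (assumed)
        return self.pred_layer(pooled) # [batch, n_classes]


model = Classifier()
logits = model(torch.randn(4, 128, 40))  # hypothetical batch of 4 sequences of length 128
print(logits.shape)  # torch.Size([4, 600])
```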
