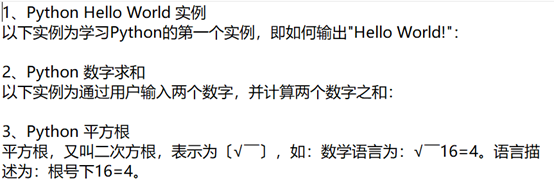

1.访问菜鸟教程(https://www.runoob.com),爬取其Python3实例模块的题目内容,要求输出格式如下图所示。

【答案1】

import requests

from lxml import etree

import time

# 获取题目链接

url = 'https://www.runoob.com/python3/python3-examples.html'

web_data = requests.get(url)

dom = etree.HTML(web_data.text, etree.HTMLParser(encoding='utf-8')) # 网页解析

exerciseList= dom.xpath('//div[@id="content"]/ul/li/a/text()') # 练习题名称

urlList= dom.xpath('//div[@id="content"]/ul/li/a/@href') # 练习题超链接

urlList = ['/python3/'+i if '/python3/' not in i else i for i in urlList ]

urlList = ['https://www.runoob.com' + i if 'www.runoob.com/' not in i else 'https:'+i for i in urlList]

exerciseString = '\n'.join(exerciseList) # 将练习题名称拼接成一个字符串

with open('exercisePython.txt', 'w') as f:

f.write(exerciseString)

#爬取题目数据及整理写出

resultList = []

for url in urlList:

web_data = requests.get(url)

dom = etree.HTML(web_data.text, etree.HTMLParser(encoding='utf-8')) # 网页源码解析

# 获取题目及答案

title = dom.xpath('string(//div[@id="content"]/h1)') # 练习题名称

content = dom.xpath('string(//div[@id="content"]/p[2])') # 练习题描述内容

code = dom.xpath('string(//div[@id="content"]//div[@class="example"]//div[@class="hl-main"])') # 练习题答案

result = dom.xpath('string(//div[@id="content"]/p[3])') # 结果描述

output = dom.xpath('string(//div[@id="content"]/pre)') # 目标输出

res = title + '\n' + content+ '\n' # 将内容进行拼接

resultList.append(res)

time.sleep(1)

print(url, '\n', res)

mid = resultList.copy()

for i in range(len(mid)):

mid[i] = str(i+1)+'、'+ mid[i] # 加入题目序号

with open('Python编程基础上机题库1.txt', 'w', encoding='utf-8') as f:

f.write('\n'.join(mid)) # 将数据写出

【答案2】

import requests

from lxml import etree

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/92.0.4515.159 Safari/537.36'

}

li_list = []

url='https://www.runoob.com/python3/python3-examples.html'

resp=requests.get(url=url).text

tree=etree.HTML(resp)

url_list=tree.xpath('//*[@id="content"]/ul/li/a/@href') #链接

for li in url_list:

if 'www' not in li:

li_list.append('https://www.runoob.com/python3/'+li)

else:li_list.append('https:'+li)

count=1

for urls in li_list:

count+=1

nextPage= requests.get(url=urls,headers=headers).text

tree2=etree.HTML(nextPage)

title=tree2.xpath('normalize-space(//*[@id="content"]/h1/text())')

contents = tree2.xpath('normalize-space(//*[@id="content"]/p[2]/text())')

result=str(count)+'、'+str(title)+'\n'+str(contents).lstrip()

print(result)

1029

1029

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?