I. Setting up the environment

1. Open Colab, create a blank notebook, and under [Change runtime type] select the T4 GPU, which has 15 GB of GPU memory.

2. Install the required Python packages with pip

!pip install --upgrade accelerate

!pip install bitsandbytes transformers_stream_generator

!pip install transformers

!pip install sentencepiece

!pip install torch

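Note: after accelerate has been installed, the notebook runtime must be restarted; otherwise loading the model fails with the following error: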

ImportError: Using low_cpu_mem_usage=True or a device_map requires Accelerate: pip install accelerate
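After restarting the runtime, it can help to confirm that the packages are actually visible and that a GPU is attached before loading anything large. The following is a minimal sketch, not part of the original article: the helper names `installed_versions` and `gpu_summary` are made up for illustration, and the GPU check assumes the `nvidia-smi` tool is on the PATH, as it normally is on a Colab GPU runtime.

```python
import shutil
import subprocess
from importlib import metadata

# Packages installed by the pip commands in this section.
REQUIRED = [
    "accelerate",
    "bitsandbytes",
    "transformers",
    "transformers_stream_generator",
    "sentencepiece",
    "torch",
]


def installed_versions(packages):
    """Map each package name to its installed version, or None if it is missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


def gpu_summary():
    """Return the GPU name and total memory reported by nvidia-smi, or None."""
    if shutil.which("nvidia-smi") is None:
        return None
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"],
        capture_output=True,
        text=True,
    )
    return result.stdout.strip() if result.returncode == 0 else None


for name, version in installed_versions(REQUIRED).items():
    print(f"{name}: {version or 'NOT INSTALLED'}")
print("GPU:", gpu_summary() or "none detected")
```

If accelerate shows up as NOT INSTALLED here, or the import error above still appears, the runtime was most likely not restarted after the install.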

Note: the code in the reference article [1] does not run as-is.

II. Model inference

Run the following code to load the model:

import accelerate

import torch

from transformers import AutoTokenizer, AutoModelForCausalLM, TextStreamer

# Pretrained model to load

model_path = "LinkSoul/Chinese-Llama-2-7b-4bit"

# Tokenizer

tokenizer = AutoTokenizer.from_pretrained(model_path, use_fast=False)

model = AutoModelForCausalLM.from_pretrained(
    model_path,
    load_in_4bit=True,
    torch_dtype=torch.float16,
    device_map='auto'
)

streamer = TextStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True)

instruction = """[INST] <<SYS>>\nYou are a helpful, respectful and honest assistant. Always answer as helpfully as possible, while being safe. Your answers should not include any harmful, unethical, racist, sexist, toxic, dangerous, or illegal content. Please ensure that your responses are socially unbiased and positive in nature.

If a question does not make any sense, or is not factually coherent, explain why instead of answering something not correct. If you don't know the answer to a question, please don't share false information.\n<</SYS>>\n\n{} [/INST]"""

Downloading the model takes a while; the two weight shards in the log below total about 13.5 GB.

You are using the default legacy behaviour of the <class 'transformers.models.llama.tokenization_llama.LlamaTokenizer'>. This is expected, and simply means that the `legacy` (previous) behavior will be used so nothing changes for you. If you want to use the new behaviour, set `legacy=False`. This should only be set if you understand what it means, and thouroughly read the reason why this was added as explained in https://github.com/huggingface/transformers/pull/24565

Downloading (…)model.bin.index.json: 100%

26.8k/26.8k [00:00<00:00, 1.13MB/s]

Downloading shards: 0%

0/2 [00:00<?, ?it/s]

Downloading (…)l-00001-of-00002.bin: 100%

9.97G/9.98G [04:58<00:00, 38.5MB/s]

Downloading (…)l-00002-of-00002.bin: 0%| | 0.00/3.50G [00:00<?, ?B/s]

Loading checkpoint shards: 0%| | 0/2 [00:00<?, ?it/s]

Downloading (…)neration_config.json: 100%

132/132 [00:00<00:00, 4.37kB/s]
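The shard sizes in the log above (9.98 GB and 3.50 GB) are what one would expect for a model of roughly 7 billion parameters stored with 16-bit weights, which suggests the quantization to 4 bits happens at load time through `load_in_4bit=True`. The back-of-the-envelope calculation below is a sketch under that assumption; the parameter count of 6.74 billion is an approximate figure for Llama-2-7B that does not come from the article, and the function name `weight_size_gb` is illustrative.

```python
def weight_size_gb(num_params, bits_per_weight):
    """Approximate storage for the weights alone, in GB (10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Assumption: approximate parameter count of a Llama-2-7B model.
LLAMA2_7B_PARAMS = 6.74e9

for bits in (16, 8, 4):
    size = weight_size_gb(LLAMA2_7B_PARAMS, bits)
    print(f"{bits:>2}-bit weights: ~{size:.2f} GB")
```

These numbers cover the weights only. Actual GPU usage is higher because some layers are typically kept in 16-bit precision and because activations and the key/value cache also need memory, but the estimate shows why the 4-bit model fits comfortably within the T4's 15 GB while the 16-bit weights would leave almost no headroom.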

demo1

prompt = instruction.format("What is the meaning of life")

generate_ids = model.generate(tokenizer(prompt, return_tensors='pt').input_ids.cuda(), max_new_tokens=4096, streamer=streamer)

Output:

/usr/local/lib/python3.10/dist-packages/transformers/generation/utils.py:1421: UserWarning: You have modified the pretrained model configuration to control generation. This is a deprecated strategy to control generation and will be removed soon, in a future version. Please use and modify the model generation configuration (see https://huggingface.co/docs/transformers/generation_strategies#default-text-generation-configuration )

warnings.warn(

/usr/local/lib/python3.10/dist-packages/bitsandbytes/nn/modules.py:224: UserWarning: Input type into Linear4bit is torch.float16, but bnb_4bit_compute_type=torch.float32 (default). This will lead to slow inference or training speed.

warnings.warn(f'Input type into Linear4bit is torch.float16, but bnb_4bit_compute_type=torch.float32 (default). This will lead to slow inference or training speed.')

The meaning of life is a philosophical question that has been debated for centuries. There is no one definitive answer, as different people and cultures may have different beliefs and values. Some people believe that the meaning of life is to seek happiness, while others believe that it is to fulfill a higher purpose or to serve a greater good. Ultimately, the meaning of life is a personal and subjective question that each individual must answer for themselves.
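The second warning in the output above says that the 4-bit layers receive float16 inputs while the bitsandbytes compute dtype is still the float32 default, which slows inference. One possible way to address it, sketched here under the assumption that the installed transformers version provides `BitsAndBytesConfig`, is to pass an explicit quantization config instead of the bare `load_in_4bit=True` flag. The wrapper function `load_model_fp16_compute` is a hypothetical name used for illustration only.

```python
def load_model_fp16_compute(model_path="LinkSoul/Chinese-Llama-2-7b-4bit"):
    """Load the model in 4-bit with float16 as the bitsandbytes compute dtype."""
    # Imported inside the function so that defining it does not itself
    # require torch and transformers to be importable yet.
    import torch
    from transformers import AutoModelForCausalLM, BitsAndBytesConfig

    quant_config = BitsAndBytesConfig(
        load_in_4bit=True,
        # Match the compute dtype to the float16 inputs named in the warning.
        bnb_4bit_compute_dtype=torch.float16,
    )
    # Only quantization_config is passed here; load_in_4bit is set inside it.
    return AutoModelForCausalLM.from_pretrained(
        model_path,
        quantization_config=quant_config,
        torch_dtype=torch.float16,
        device_map="auto",
    )
```

Calling `model = load_model_fp16_compute()` in place of the original `from_pretrained` call should leave the rest of the notebook unchanged; whether the speed-up is noticeable on a T4 has not been measured here.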

demo2

prompt = instruction.format("如何做个不拖延的人")  # "How can I stop being a procrastinator?"

generate_ids = model.generate(tokenizer(prompt, return_tensors='pt').input_ids.cuda(), max_new_tokens=4096, streamer=streamer)

Output (the model answers in Chinese):

答案:不拖延的人是一个很好的目标,但是要成为一个不拖延的人并不容易。以下是一些建议,可以帮助你成为一个不拖延的人:

1. 制定计划:制定一个详细的计划,包括每天要完成的任务和时间表。这样可以帮助你更好地组织时间,并避免拖延。

2. 设定目标:设定个明确的目标,并制定一个实现这个目标的计划。这样可以帮助你更好地了解自己的目标,并更有动力地去完成任务。

3. 克服拖延的心理:拖延的心理是一个常见的问题,但是可以通过一些方法克服。例如,你可以尝试使用一些技巧来克服拖延,如分解任务、使用时间管理工具等。

4. 坚持自己的计划:坚持自己的计划是非常重要的。如果你经常拖延,那么你需要坚持自己的计划,并尽可能地按照计划去完成任务

5. 寻求帮助
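Both demos build their prompt by filling the same `[INST] <<SYS>> ... <</SYS>> ... [/INST]` template through `instruction.format(...)`. A small helper can make that step explicit and allow the system prompt to be swapped. This is a minimal sketch rather than the author's code: `build_prompt` and `DEFAULT_SYSTEM_PROMPT` are illustrative names, and the default system prompt is shortened here to the first sentence of the one used in the article.

```python
# Shortened for readability; the article uses a longer system prompt.
DEFAULT_SYSTEM_PROMPT = "You are a helpful, respectful and honest assistant."


def build_prompt(user_message, system_prompt=DEFAULT_SYSTEM_PROMPT):
    """Wrap a user message in the chat template used by the demos above."""
    return (
        f"[INST] <<SYS>>\n{system_prompt}\n<</SYS>>\n\n"
        f"{user_message.strip()} [/INST]"
    )


print(build_prompt("What is the meaning of life"))
```

Building the string with an f-string instead of `str.format` on a stored template means a system prompt that happens to contain `{` or `}` does not need escaping. The result can be passed to `tokenizer(...)` and `model.generate(...)` exactly as `prompt` is in the two demos.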

最后的最后

感谢你们的阅读和喜欢,我收藏了很多技术干货,可以共享给喜欢我文章的朋友们,如果你肯花时间沉下心去学习,它们一定能帮到你。

因为这个行业不同于其他行业,知识体系实在是过于庞大,知识更新也非常快。作为一个普通人,无法全部学完,所以我们在提升技术的时候,首先需要明确一个目标,然后制定好完整的计划,同时找到好的学习方法,这样才能更快的提升自己。

这份完整版的大模型 AI 学习资料已经上传CSDN,朋友们如果需要可以微信扫描下方CSDN官方认证二维码免费领取【保证100%免费】

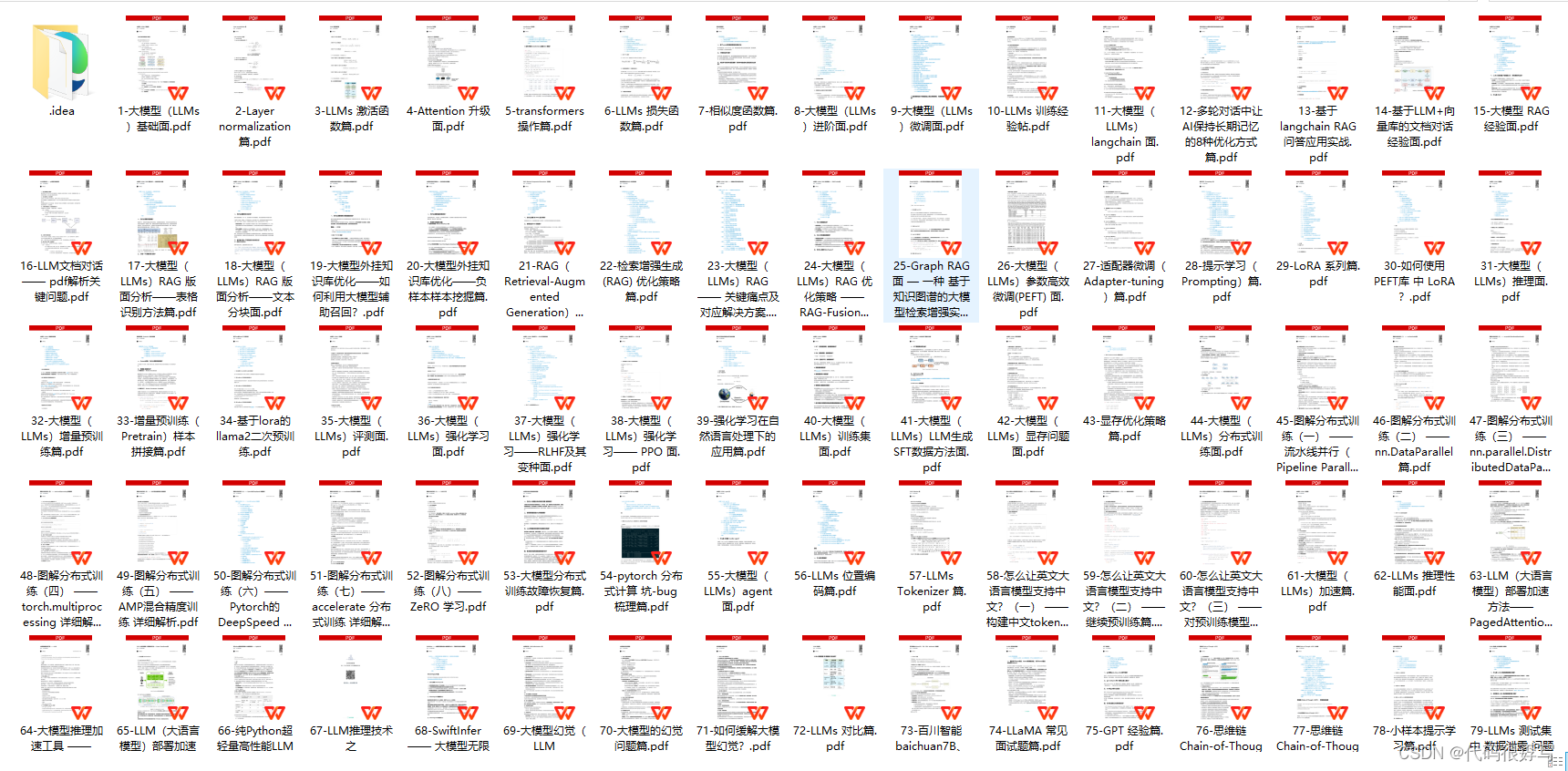

一、全套AGI大模型学习路线

AI大模型时代的学习之旅:从基础到前沿,掌握人工智能的核心技能!

二、640套AI大模型报告合集

这套包含640份报告的合集,涵盖了AI大模型的理论研究、技术实现、行业应用等多个方面。无论您是科研人员、工程师,还是对AI大模型感兴趣的爱好者,这套报告合集都将为您提供宝贵的信息和启示。

三、AI大模型经典PDF籍

随着人工智能技术的飞速发展,AI大模型已经成为了当今科技领域的一大热点。这些大型预训练模型,如GPT-3、BERT、XLNet等,以其强大的语言理解和生成能力,正在改变我们对人工智能的认识。 那以下这些PDF籍就是非常不错的学习资源。

四、AI大模型商业化落地方案

五、面试资料

我们学习AI大模型必然是想找到高薪的工作,下面这些面试题都是总结当前最新、最热、最高频的面试题,并且每道题都有详细的答案,面试前刷完这套面试题资料,小小offer,不在话下。

这份完整版的大模型 AI 学习资料已经上传CSDN,朋友们如果需要可以微信扫描下方CSDN官方认证二维码免费领取【保证100%免费】

1304

1304

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?