
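To connect Spark to Hive we need six key jars, and we need to copy Hive's configuration file hive-site.xml into Spark's conf directory. If your Hive installation is configured correctly, all of these jars are already in Hive's lib directory.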

First, go to the directory where spark312 keeps its jars:

[root@gree2 /]# cd /opt/soft/spark312/jars/

Copy the jars into this directory:

[root@gree2 jars]# cp /opt/soft/hive312/lib/hive-beeline-3.1.2.jar ./

[root@gree2 jars]# cp /opt/soft/hive312/lib/hive-cli-3.1.2.jar ./

[root@gree2 jars]# cp /opt/soft/hive312/lib/hive-exec-3.1.2.jar ./

[root@gree2 jars]# cp /opt/soft/hive312/lib/hive-jdbc-3.1.2.jar ./

[root@gree2 jars]# cp /opt/soft/hive312/lib/hive-metastore-3.1.2.jar ./

[root@gree2 jars]# cp /opt/soft/hive312/lib/mysql-connector-java-8.0.29.jar ./
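
The six copies above can also be done in one loop that reports any jar it cannot find. This is only a minimal sketch, assuming the same directories and jar versions as above; the function name copy_hive_jars is hypothetical and not part of Hive or Spark.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy the six jars listed above from Hive's lib
# directory into Spark's jars directory, reporting any that are missing.
copy_hive_jars() {
    local hive_lib="$1"    # e.g. /opt/soft/hive312/lib
    local spark_jars="$2"  # e.g. /opt/soft/spark312/jars
    local missing=0
    local jar
    for jar in \
        hive-beeline-3.1.2.jar \
        hive-cli-3.1.2.jar \
        hive-exec-3.1.2.jar \
        hive-jdbc-3.1.2.jar \
        hive-metastore-3.1.2.jar \
        mysql-connector-java-8.0.29.jar
    do
        if [ -f "$hive_lib/$jar" ]; then
            cp "$hive_lib/$jar" "$spark_jars/"
        else
            # Keep going so every missing jar is reported in one run.
            echo "missing: $hive_lib/$jar" >&2
            missing=1
        fi
    done
    # Non-zero exit status if at least one jar was not found.
    return "$missing"
}
```

With the paths used in this post it would be called as copy_hive_jars /opt/soft/hive312/lib /opt/soft/spark312/jars.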

Go to the conf directory:

[root@gree2 /]# cd /opt/soft/spark312/conf/

Copy hive-site.xml from hive312/conf into the spark312/conf directory:

[root@gree2 conf]# cp /opt/soft/hive312/conf/hive-site.xml ./

Edit hive-site.xml. The complete configuration is shown below:

<configuration>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/opt/soft/hive312/warehouse</value>

</property>

<property>

<name>hive.metastore.db.type</name>

<value>mysql</value>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://192.168.61.141:3306/hive143?createDatabaseIfNotExist=true</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.cj.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>root</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

<description>Disable schema verification</description>

</property>

<property>

<name>hive.cli.print.current.db</name>

<value>true</value>

<description>Show the current database name in the prompt</description>

</property>

<property>

<name>hive.cli.print.header</name>

<value>true</value>

<description>Print column names together with query output</description>

</property>

<property>

<name>hive.zookeeper.quorum</name>

<value>192.168.61.146</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>192.168.61.146</value>

</property>

<property>

<name>hive.aux.jars.path</name>

<value>file:///opt/soft/hive312/lib/hive-hbase-handler-3.1.2.jar,file:///opt/soft/hive312/lib/zookeeper-3.4.6.jar,file:///opt/soft/hive312/lib/hbase-client-2.3.5.jar,file:///opt/soft/hive312/lib/hbase-common-2.3.5-tests.jar,file:///opt/soft/hive312/lib/hbase-server-2.3.5.jar,file:///opt/soft/hive312/lib/hbase-common-2.3.5.jar,file:///opt/soft/hive312/lib/hbase-protocol-2.3.5.jar,file:///opt/soft/hive312/lib/htrace-core-3.2.0-incubating.jar</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hdfs.groups</name>

<value>*</value>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://192.168.61.146:9083</value>

</property>

</configuration>

The last property, hive.metastore.uris, is the one we need to add.
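
Before moving on, it can help to confirm that the copy of hive-site.xml in Spark's conf directory really contains the metastore URI. The following is a rough sketch, not a general XML parser: it assumes each `<name>` and `<value>` sits on its own line, as in the listing above, and the function name get_hive_property is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the <value> of a named property in a
# Hadoop-style XML file. Assumes <name> and <value> are on separate lines,
# with the value following the name, as in the listing above.
get_hive_property() {
    local file="$1"
    local name="$2"
    awk -v want="<name>$name</name>" '
        # Remember that the wanted <name> line has been seen.
        index($0, want) { found = 1; next }
        # The first <value> line after it holds the setting.
        found && /<value>/ {
            sub(/.*<value>/, "")
            sub(/<\/value>.*/, "")
            print
            exit
        }
    ' "$file"
}
```

With the paths used in this post, get_hive_property /opt/soft/spark312/conf/hive-site.xml hive.metastore.uris should print the thrift address configured above; empty output means the property is missing.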

The configuration is done; now test it.

Start Hadoop first:

[root@gree2 ~]# start-all.sh

Start the Hive services (the metastore and HiveServer2, both of which show up in jps as RunJar):

nohup hive --service metastore &

nohup hive --service hiveserver2 &

Use jps to check which services are running:
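
Because the metastore and HiveServer2 each run as a RunJar process, two RunJar entries are expected once both have started. A small sketch for counting them from jps output follows; the function name count_runjar is hypothetical, and it assumes no other RunJar process (such as an open hive CLI) is running at the same time.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count RunJar entries in jps-style output read from
# stdin, where each line has the form "<pid> <main class>".
count_runjar() {
    awk '$2 == "RunJar" { n++ } END { print n + 0 }'
}
```

It is meant to be used as jps | count_runjar, and a result below 2 suggests that one of the two services has not come up yet.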

Log in to Hive:

[root@gree2 ~]# beeline -u jdbc:hive2://192.168.61.146:10000

Check the default database and the names of its tables:

Start spark-shell:

scala> spark.table("aa")

Here Spark reads from Hive's default database. To read a table in another Hive database, qualify the table name with the database name (database.table).

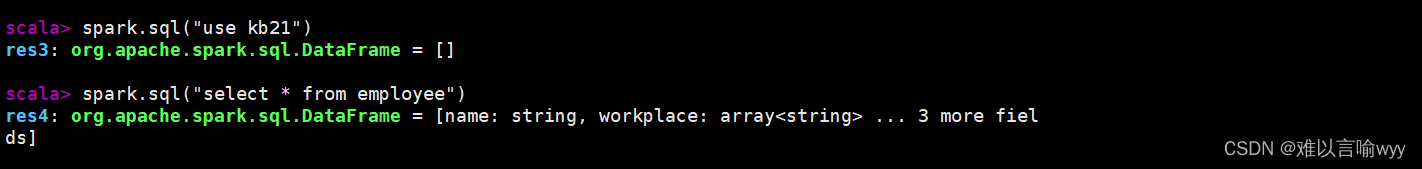

You can also run queries with spark.sql.
