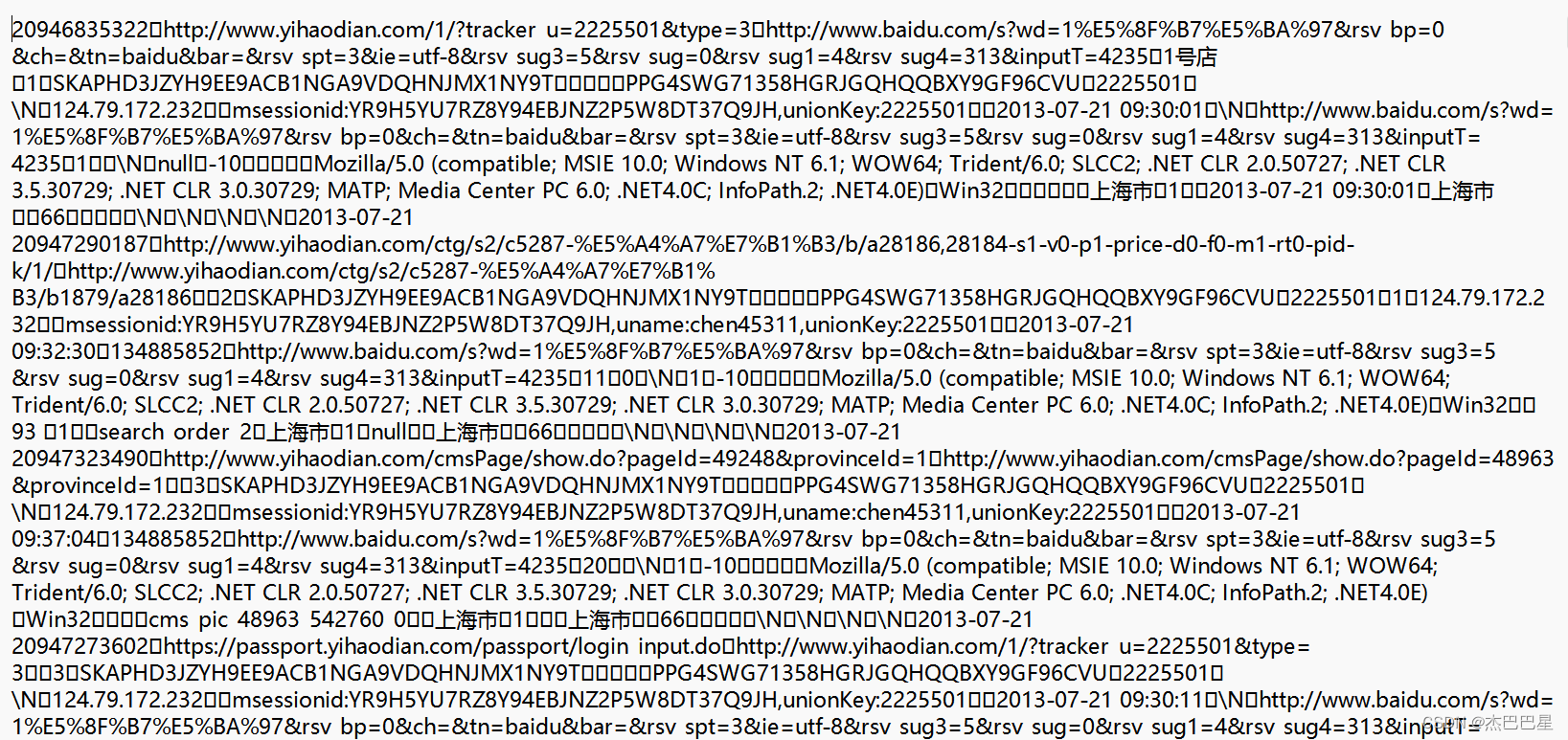

E-commerce log data analysis (partial code)

ProvinceStatApp.java

package mapreduce;

import org.apache.commons.lang3.StringUtils;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import utils.IPParser;

import utils.LogParser;

import java.io.IOException;

import java.util.Map;

/**

* Page-view counts per province

*/

public class ProvinceStatApp {

public static void main(String[] args) throws Exception {

Configuration configuration = new Configuration();

FileSystem fileSystem = FileSystem.get(configuration);

Path outputPath = new Path("E:\\IdeaProject\\hadoop\\project\\src\\main\\java\\com\\data\\trackInfo_out");

if (fileSystem.exists(outputPath)) {

fileSystem.delete(outputPath, true);

}

Job job = Job.getInstance(configuration);

job.setJarByClass(ProvinceStatApp.class);

job.setMapperClass(MyMapper.class);

job.setReducerClass(MyReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(LongWritable.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(LongWritable.class);

FileInputFormat.setInputPaths(job, new Path("E:\\IdeaProject\\hadoop\\project\\src\\main\\java\\com\\data\\trackinfo_20130721.txt"));

FileOutputFormat.setOutputPath(job, new Path("E:\\IdeaProject\\hadoop\\project\\src\\main\\java\\com\\data\\trackInfo_out"));

job.waitForCompletion(true);

}

static class MyMapper extends Mapper<LongWritable, Text, Text, LongWritable> {

private LongWritable ONE = new LongWritable(1);

private LogParser logParser;

@Override

protected void setup(Context context) throws IOException, InterruptedException {

logParser = new LogParser();

}

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String log = value.toString();

Map<String, String> info = logParser.parse(log);

String ip = info.get("ip");

if (StringUtils.isNotBlank(ip)) {

IPParser.RegionInfo regionInfo = IPParser.getInstance().analyseIp(ip);

if (regionInfo != null) {

String province = regionInfo.getProvince();

if (StringUtils.isNotBlank(province)) {

context.write(new Text(province), ONE);

} else {

context.write(new Text("-"), ONE);

}

} else {

context.write(new Text("-"), ONE);

}

} else {

context.write(new Text("-"), ONE);

}

}

}

static class MyReducer extends Reducer<Text, LongWritable, Text, LongWritable> {

@Override

protected void reduce(Text key, Iterable<LongWritable> values, Context context) throws IOException, InterruptedException {

long count = 0;

for (LongWritable value : values) {

// sum the emitted values instead of counting them, so the result stays correct if a combiner is added

count += value.get();

}

context.write(key, new LongWritable(count));

}

}

}
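The job above emits one `(province, 1)` pair per log record and adds the pairs up per key. A minimal plain-Java sketch of the same group-and-sum step can help check the expected output on a small sample without a Hadoop runtime. The class name `ProvinceCountSketch` and the sample values are illustrative assumptions, not part of the original project.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical helper, not part of the original project.
class ProvinceCountSketch {

    // Roughly mirrors the mapper's fallback: blank or missing provinces are counted under "-".
    static String keyFor(String province) {
        return (province == null || province.trim().isEmpty()) ? "-" : province;
    }

    // Mirrors map + reduce in memory: emit 1 per record, then sum per key.
    // A TreeMap keeps the keys sorted, similar to the sorted keys a reducer receives.
    static Map<String, Long> count(List<String> provinces) {
        Map<String, Long> counts = new TreeMap<>();
        for (String province : provinces) {
            counts.merge(keyFor(province), 1L, Long::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Sample input is made up for illustration.
        List<String> sample = Arrays.asList("广东省", "上海市", "", "广东省", null);
        System.out.println(count(sample));
    }
}
```

Because the totals are built with `Long::sum`, the same logic would still hold if partial sums (as a combiner would produce) were merged instead of single ones.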

etl.java

package mapreduce;

import utils.GetPageId;

import utils.IPParser;

import utils.LogParser;

import org.apache.commons.lang3.StringUtils;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

import java.util.Map;

/**

* ETL: extract the key fields from each log record

*/

public class etl {

public static void main(String[] args) throws Exception {

Configuration configuration = new Configuration();

FileSystem fileSystem = FileSystem.get(configuration);

Path outputPath = new Path("E:\\IdeaProject\\hadoop\\project\\src\\main\\java\\com\\data\\outInfo_out");

if (fileSystem.exists(outputPath)) {

fileSystem.delete(outputPath, true);

}

Job job = Job.getInstance(configuration);

job.setJarByClass(etl.class);

job.setMapperClass(MyMapper.class);

job.setReducerClass(MyReduce.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Text.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

FileInputFormat.setInputPaths(job, new Path("E:\\IdeaProject\\hadoop\\project\\src\\main\\java\\com\\data\\trackinfo_20130721.txt"));

FileOutputFormat.setOutputPath(job, new Path("E:\\IdeaProject\\hadoop\\project\\src\\main\\java\\com\\data\\outInfo_out"));

job.waitForCompletion(true);

}

static class MyMapper extends Mapper<LongWritable, Text, Text, Text> {

private LongWritable ONE = new LongWritable(1);

private LogParser logParser;

@Override

protected void setup(Context context) throws IOException, InterruptedException {

logParser = new LogParser();

}

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String log = value.toString();

Map<String, String> info = logParser.parse(log);

String ip = info.get("ip");

String url = info.get("url");

// getPageId is static, so call it on the class rather than on a new instance per record

String pageId = GetPageId.getPageId(url);

String country = info.get("country");

String province = info.get("province");

String city = info.get("city");

String time = info.get("time");

String out = "," + pageId + "," + ip + "," + country + "," + province + "," + city + "," + time;

context.write(new Text(url) , new Text(out));

}

}

static class MyReduce extends Reducer<Text, Text, Text, Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

for (Text value : values) {

context.write(key,value);

}

}

}

}

GetPageId.java

package utils;

import org.apache.commons.lang3.StringUtils;

import java.util.regex.Matcher;

import java.util.regex.Pattern;

public class GetPageId {

public static String getPageId(String url) {

String pageId = "";

if (StringUtils.isBlank(url)) {

return pageId;

}

Pattern pat = Pattern.compile("topicId=[0-9]+");

Matcher matcher = pat.matcher(url);

if (matcher.find()) {

pageId = matcher.group().split("topicId=")[1];

}

return pageId;

}

public static void main(String[] args) {

System.out.println(getPageId("http://www.yihaodian.com/cms/view.do?topicId=14572"));

System.out.println(getPageId("http://www.yihaodian.com/cms/view.do?topicId=22372&merchant=1"));

}

}
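`getPageId` finds `topicId=<digits>` and then splits the match to isolate the digits. As a sketch of an alternative, a capturing group returns the digits directly, and the pattern can be compiled once instead of on every call. The class name `PageIdSketch` is an illustrative assumption; the URLs are the ones from the `main` method above.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical variant of GetPageId, shown for illustration only.
class PageIdSketch {

    // Compiled once and reused; group 1 holds only the digits after "topicId=".
    private static final Pattern TOPIC_ID = Pattern.compile("topicId=([0-9]+)");

    // Returns the topic id, or an empty string when the URL is blank or has no topicId.
    static String getPageId(String url) {
        if (url == null || url.trim().isEmpty()) {
            return "";
        }
        Matcher matcher = TOPIC_ID.matcher(url);
        return matcher.find() ? matcher.group(1) : "";
    }

    public static void main(String[] args) {
        System.out.println(getPageId("http://www.yihaodian.com/cms/view.do?topicId=14572"));
        System.out.println(getPageId("http://www.yihaodian.com/cms/view.do?topicId=22372&merchant=1"));
    }
}
```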

IPParser.java

package utils;

public class IPParser extends IPSeeker {

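// This relative path only works when running inside Eclipse. When deploying to a server, first copy qqwry.dat

// to a readable directory on every node of the cluster, then set the full path here.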

private static final String ipFilePath = "ip/qqwry.dat";

// Path to use when deployed on a server

//private static final String ipFilePath = "/opt/datas/qqwry.dat";

private static IPParser obj = new IPParser(ipFilePath);

protected IPParser(String ipFilePath) {

super(ipFilePath);

}

public static IPParser getInstance() {

return obj;

}

/**

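* Resolves an IP address to its region.

*

* @param ip the IP address to look up

* @return the region info, or null if ip is null or blank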

*/

public RegionInfo analyseIp(String ip) {

if (ip == null || "".equals(ip.trim())) {

return null;

}

RegionInfo info = new RegionInfo();

try {

String country = super.getCountry(ip);

if ("局域网".equals(country) || country == null || country.isEmpty() || country.trim().startsWith("CZ88")) {

// fall back to default values

info.setCountry("中国");

info.setProvince("上海市");

} else {

int length = country.length();

int index = country.indexOf('省');

if (index > 0) { // the string names a province of China (ends in 省)

info.setCountry("中国");

info.setProvince(country.substring(0, Math.min(index + 1, length)));

int index2 = country.indexOf('市', index);

if (index2 > 0) {

// set the city

info.setCity(country.substring(index + 1, Math.min(index2 + 1, length)));

}

} else {

String flag = country.substring(0, 2);

switch (flag) {

case "内蒙":

info.setCountry("中国");

info.setProvince("内蒙古自治区");

country = country.substring(3);

if (country != null && !country.isEmpty()) {

index = country.indexOf('市');

if (index > 0) {

// set the city

info.setCity(country.substring(0, Math.min(index + 1, length)));

}

// TODO: banners (旗) and leagues (盟) are not handled yet

}

break;

case "广西":

case "西藏":

case "宁夏":

case "新疆":

info.setCountry("中国");

info.setProvince(flag);

country = country.substring(2);

if (country != null && !country.isEmpty()) {

index = country.indexOf('市');

if (index > 0) {

// set the city

info.setCity(country.substring(0, Math.min(index + 1, length)));

}

}

break;

case "上海":

case "北京":

case "重庆":

case "天津":

info.setCountry("中国");

info.setProvince(flag + "市");

country = country.substring(3);

if (country != null && !country.isEmpty()) {

index = country.indexOf('区');

if (index > 0) {

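// set the city (a district, for the municipalities)

char ch = country.charAt(index - 1);

// skip matches that are part of 小区 (residential compound) or 校区 (campus)

if (ch != '小' && ch != '校') {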

info.setCity(country.substring(0, Math.min(index + 1, length)));

}

}

if (info.getCity() == null) {

// the city is still unset (RegionInfo fields default to null), so fall back to the county

index = country.indexOf('县');

if (index > 0) {

// set the city

info.setCity(country.substring(0, Math.min(index + 1, length)));

}

}

}

break;

case "香港":

case "澳门":

info.setCountry("中国");

info.setProvince(flag + "特别行政区");

break;

default:

info.setCountry(country); // anything else is treated as a foreign IP

}

}

}

} catch (Exception e) {

// nothing

}

return info;

}

/**

* Region information (country, province, city) for an IP address

*

*/

public static class RegionInfo {

private String country ;

private String province ;

private String city ;

public String getCountry() {

return country;

}

public void setCountry(String country) {

this.country = country;

}

public String getProvince() {

return province;

}

public void setProvince(String province) {

this.province = province;

}

public String getCity() {

return city;

}

public void setCity(String city) {

this.city = city;

}

@Override

public String toString() {

return "RegionInfo [country=" + country + ", province=" + province + ", city=" + city + "]";

}

}

}
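The first branch of `analyseIp` splits a region string such as `广东省深圳市` at the characters `省` and `市`. The following is a minimal, self-contained sketch of just that branch, so the index arithmetic can be tried without `qqwry.dat` or `IPSeeker`. The class name `RegionSplitSketch` and the array return type are illustrative assumptions; the autonomous regions, municipalities and foreign addresses handled by the `switch` above are deliberately left out.

```java
// Hypothetical helper, not part of the original project.
class RegionSplitSketch {

    // Returns {province, city}; an element stays null when it cannot be found.
    static String[] split(String region) {
        String[] out = new String[2];
        if (region == null) {
            return out;
        }
        int provinceEnd = region.indexOf('省');
        if (provinceEnd <= 0) {
            return out; // no province name ending in 省 at the start of the string
        }
        out[0] = region.substring(0, provinceEnd + 1);
        int cityEnd = region.indexOf('市', provinceEnd);
        if (cityEnd > provinceEnd + 1) {
            // the city is the text between the province and the first 市, inclusive
            out[1] = region.substring(provinceEnd + 1, cityEnd + 1);
        }
        return out;
    }

    public static void main(String[] args) {
        String[] parts = split("广东省深圳市");
        System.out.println(parts[0] + " / " + parts[1]);
    }
}
```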

LogParser.java

package utils;

import org.apache.commons.lang3.StringUtils;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import java.util.HashMap;

import java.util.Map;

public class LogParser {

private Logger logger = LoggerFactory.getLogger(LogParser.class);

public Map<String, String> parse2(String log) {

Map<String, String> logInfo = new HashMap<String,String>();

IPParser ipParse = IPParser.getInstance();

if(StringUtils.isNotBlank(log)) {

String[] splits = log.split("\t");

String ip = splits[0];

String url = splits[1];

String sessionId = splits[2];

String time = splits[3];

String country = splits[4];

String province = splits[5];

String city = splits[6];

logInfo.put("ip",ip);

logInfo.put("url",url);

logInfo.put("sessionId",sessionId);

logInfo.put("time",time);

logInfo.put("country",country);

logInfo.put("province",province);

logInfo.put("city",city);

} else{

logger.error("Malformed log record: " + log);

}

return logInfo;

}

public Map<String, String> parse(String log) {

Map<String, String> logInfo = new HashMap<String,String>();

IPParser ipParse = IPParser.getInstance();

if(StringUtils.isNotBlank(log)) {

String[] splits = log.split("\001");

String ip = splits[13];

String url = splits[1];

String sessionId = splits[10];

String time = splits[17];

logInfo.put("ip",ip);

logInfo.put("url",url);

logInfo.put("sessionId",sessionId);

logInfo.put("time",time);

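IPParser.RegionInfo regionInfo = ipParse.analyseIp(ip);

// analyseIp returns null for a blank IP, so guard against a NullPointerException

if (regionInfo != null) {

logInfo.put("country",regionInfo.getCountry());

logInfo.put("province",regionInfo.getProvince());

logInfo.put("city",regionInfo.getCity());

}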

} else{

logger.error("Malformed log record: " + log);

}

return logInfo;

}

}
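Both `parse` methods index straight into the split array, so a short or truncated line raises `ArrayIndexOutOfBoundsException`. Below is a minimal sketch of a bounds-checked variant, assuming the same `\001`-delimited layout and field positions (1, 10, 13, 17) that `parse` uses. The class name `SafeSplitSketch` is hypothetical, and the IP lookup is omitted so the example stays self-contained.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper, not part of the original project.
class SafeSplitSketch {

    // Field positions copied from LogParser.parse.
    private static final int URL = 1;
    private static final int SESSION_ID = 10;
    private static final int IP = 13;
    private static final int TIME = 17;

    // Returns an empty map for null or too-short lines instead of throwing.
    static Map<String, String> parse(String log) {
        Map<String, String> info = new HashMap<>();
        if (log == null) {
            return info;
        }
        // A limit of -1 keeps trailing empty fields, which split() drops by default.
        String[] fields = log.split("\001", -1);
        if (fields.length <= TIME) {
            return info; // not enough fields to read the highest index safely
        }
        info.put("url", fields[URL]);
        info.put("sessionId", fields[SESSION_ID]);
        info.put("ip", fields[IP]);
        info.put("time", fields[TIME]);
        return info;
    }

    public static void main(String[] args) {
        // Build a synthetic 18-field record: f0, f1, ..., f17.
        String[] fields = new String[18];
        for (int i = 0; i < fields.length; i++) {
            fields[i] = "f" + i;
        }
        System.out.println(parse(String.join("\001", fields)));
    }
}
```

A caller such as the mapper could then skip records for which the returned map is empty, rather than failing the whole task on one bad line.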
