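This post walks through a PyTorch implementation of ResNet (residual network) that is trained and tested on the CIFAR-10 dataset. ResNet is a deep learning model whose "skip connections" let the input of a block bypass its stacked layers, which mitigates the vanishing-gradient problem and makes deep networks practical to train. CIFAR-10 is a widely used image-classification dataset of 60,000 32x32 color images in 10 classes (50,000 for training and 10,000 for testing).

Let's go through the code step by step.

First, the script imports the PyTorch libraries and modules it needs: neural network layers (`nn`), optimizers (`optim`), learning-rate schedulers (`lr_scheduler`), datasets (`datasets`), data transforms (`transforms`), the data loader (`DataLoader`), and so on.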

```python
import os

import torch
import torch.nn as nn
import torch.optim as optim
import torch.nn.functional as F
import torch.backends.cudnn as cudnn
# ReduceLROnPlateau is imported here but not used later in the script.
from torch.optim.lr_scheduler import StepLR, ReduceLROnPlateau
from torch.utils.data import DataLoader
from torchvision import datasets, transforms
```

Next comes a function named `progress_bar`, which prints the progress of training or testing to the console.

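```python
def progress_bar(current, total, msg=None):
    # `current` is a zero-based batch index, so add 1 to let the bar reach 100%.
    progress = (current + 1) / total
    bar_length = 20  # Length of progress bar to display
    filled_length = int(round(bar_length * progress))
    bar = '=' * filled_length + '-' * (bar_length - filled_length)
    # '\r' moves the cursor back to the start of the line so the bar redraws in place.
    if msg:
        print(f'\r[{bar}] {progress * 100:.1f}% {msg}', end='')
    else:
        print(f'\r[{bar}] {progress * 100:.1f}%', end='')
    # After the last batch, emit a newline so later output starts on a fresh line.
    if current == total - 1:
        print()
```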
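To see what the bar looks like at a given point, the string-building step can be pulled out into a small helper. This is only an illustrative sketch: `render_bar` is a hypothetical name that does not appear in the original script, and it takes the progress fraction directly rather than a batch index.

```python
def render_bar(progress, bar_length=20):
    """Return the bar string for a progress fraction between 0.0 and 1.0."""
    filled = int(round(bar_length * progress))
    return '[' + '=' * filled + '-' * (bar_length - filled) + ']'


half = render_bar(0.5)   # 10 filled cells, 10 empty cells
done = render_bar(1.0)   # all 20 cells filled
print(half)
print(done)
```

Printing such a string with a leading `\r` and `end=''` is what makes the bar update in place instead of scrolling.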

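The next part defines the ResNet model. `conv3x3` creates a 3x3 convolution layer, the `BasicBlock` class is the basic residual block, and the `ResNet` class assembles the whole network. `ResNet18` returns an 18-layer ResNet, counting the convolution layers on the main path and the final fully connected layer.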
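As a rough sanity check of the "18 layers" figure and of the feature-map sizes the network produces on 32x32 inputs, the arithmetic can be sketched in plain Python. The helper name `conv_out_size` is hypothetical; the block counts and strides are the ones passed to `ResNet` in the code that follows, and the 1x1 shortcut convolutions are left out of the count by convention.

```python
def conv_out_size(size, kernel, stride, padding):
    """Spatial output size of a convolution (standard floor formula)."""
    return (size + 2 * padding - kernel) // stride + 1


num_blocks = [2, 2, 2, 2]      # blocks per stage, as passed by ResNet18()
stage_strides = [1, 2, 2, 2]   # stride of the first block in each stage

# Stem conv + two 3x3 convs per block + final linear layer.
layer_count = 1 + 2 * sum(num_blocks) + 1

# Spatial size after the stem conv and after each of the four stages.
size = conv_out_size(32, kernel=3, stride=1, padding=1)
sizes = [size]
for stride in stage_strides:
    # Only the first conv of a stage can downsample; later convs keep the size.
    size = conv_out_size(size, kernel=3, stride=stride, padding=1)
    sizes.append(size)

print(layer_count)  # 18
print(sizes)        # [32, 32, 16, 8, 4]
```

The final 4x4 map is why the forward pass can call `F.avg_pool2d(out, 4)`: pooling with a 4x4 window leaves a 1x1 map with 512 channels, which is then flattened and fed to the linear layer.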

```python
# Model definition
def conv3x3(in_planes, out_planes, stride=1):
    # 3x3 convolution with padding 1; bias is omitted because BatchNorm follows.
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride, padding=1, bias=False)


class BasicBlock(nn.Module):
    expansion = 1

    def __init__(self, in_planes, planes, stride=1):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(in_planes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)

        # Identity shortcut by default; use a 1x1 projection when the
        # spatial size or channel count changes.
        self.shortcut = nn.Sequential()
        if stride != 1 or in_planes != self.expansion*planes:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_planes, self.expansion*planes, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(self.expansion*planes)
            )

    def forward(self, x):
        out = torch.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        out += self.shortcut(x)  # skip connection
        out = torch.relu(out)
        return out
```
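The effect of the `out += self.shortcut(x)` line can be illustrated with a stripped-down block. This is a minimal sketch, not the block used above: `TinyResidual` is a hypothetical class with a single convolution and no batch normalization, and its weights are zeroed on purpose to show that the input still passes through the skip path unchanged (up to the final ReLU).

```python
import torch
import torch.nn as nn


class TinyResidual(nn.Module):
    """A one-convolution residual block, for illustration only."""

    def __init__(self, channels):
        super().__init__()
        self.conv = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)

    def forward(self, x):
        # Residual branch plus identity shortcut, then ReLU.
        return torch.relu(self.conv(x) + x)


block = TinyResidual(4)
nn.init.zeros_(block.conv.weight)  # make the residual branch output zero

x = torch.randn(2, 4, 8, 8)
y = block(x)

# With a zero residual branch the block reduces to relu(x).
identity_preserved = torch.allclose(y, torch.relu(x))
print(identity_preserved)
```

Because the shortcut adds `x` directly to the output, the gradient of the output with respect to `x` always contains an identity term, which is the usual explanation for why residual networks are easier to train at depth.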

```python
class ResNet(nn.Module):
    def __init__(self, block, num_blocks, num_classes=10):
        super(ResNet, self).__init__()
        self.in_planes = 64

        self.conv1 = conv3x3(3, 64)
        self.bn1 = nn.BatchNorm2d(64)
        self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)
        self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)
        self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)
        self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)
        self.linear = nn.Linear(512*block.expansion, num_classes)

    def _make_layer(self, block, planes, num_blocks, stride):
        # Only the first block of a stage uses the given stride; the rest use 1.
        strides = [stride] + [1]*(num_blocks-1)
        layers = []
        for stride in strides:
            layers.append(block(self.in_planes, planes, stride))
            self.in_planes = planes * block.expansion
        return nn.Sequential(*layers)

    def forward(self, x):
        out = torch.relu(self.bn1(self.conv1(x)))
        out = self.layer1(out)
        out = self.layer2(out)
        out = self.layer3(out)
        out = self.layer4(out)
        out = F.avg_pool2d(out, 4)       # 4x4 feature map -> 1x1
        out = out.view(out.size(0), -1)  # flatten to (batch, 512)
        out = self.linear(out)
        return out


def ResNet18():
    return ResNet(BasicBlock, [2, 2, 2, 2])
```

Then come the `train` and `test` functions, which handle training and evaluation. In `train`, the model makes one full pass over the training data per epoch, running a forward and a backward pass on every batch. Calling `progress_bar` shows the running loss and accuracy while training proceeds. In `test`, the model runs a forward pass over the test data after each epoch to check how well it generalizes.

```python
# Training
def train(epoch):
    print('\nEpoch: %d' % epoch)
    model.train()
    train_loss = 0
    correct = 0
    total = 0
    for batch_idx, (inputs, targets) in enumerate(trainloader):
        inputs, targets = inputs.to(device), targets.to(device)
        optimizer.zero_grad()
        outputs = model(inputs)
        loss = criterion(outputs, targets)
        loss.backward()
        optimizer.step()

        train_loss += loss.item()
        _, predicted = outputs.max(1)
        total += targets.size(0)
        correct += predicted.eq(targets).sum().item()

        progress_bar(batch_idx, len(trainloader), 'Loss: %.3f | Acc: %.3f%% (%d/%d)'
                     % (train_loss/(batch_idx+1), 100.*correct/total, correct, total))

    # Step the scheduler once per epoch, after all batches.
    scheduler.step()
```
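The accuracy bookkeeping in the loop relies on `outputs.max(1)`, which returns both the largest logit and its index along the class dimension. A small worked example with made-up logits (the numbers below are invented for illustration and do not come from a real run):

```python
import torch

# Four samples, three classes; values are arbitrary logits.
outputs = torch.tensor([[2.0, 0.5, 0.1],
                        [0.2, 0.3, 1.5],
                        [1.0, 3.0, 0.0],
                        [0.9, 0.1, 0.2]])
targets = torch.tensor([0, 2, 0, 0])

# max over dim 1 returns (values, indices); the indices are the predicted classes.
_, predicted = outputs.max(1)

correct = predicted.eq(targets).sum().item()
accuracy = 100.0 * correct / targets.size(0)

print(predicted.tolist())  # [0, 2, 1, 0]
print(accuracy)            # 75.0
```

In the training loop the same counts are accumulated across batches, so the percentage shown in the progress bar is a running average over the epoch so far.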

```python
# Testing
def test(epoch):
    global best_acc
    model.eval()
    test_loss = 0
    correct = 0
    total = 0
    with torch.no_grad():
        for batch_idx, (inputs, targets) in enumerate(testloader):
            inputs, targets = inputs.to(device), targets.to(device)
            outputs = model(inputs)
            loss = criterion(outputs, targets)

            test_loss += loss.item()
            _, predicted = outputs.max(1)
            total += targets.size(0)
            correct += predicted.eq(targets).sum().item()

            progress_bar(batch_idx, len(testloader), 'Loss: %.3f | Acc: %.3f%% (%d/%d)'
                         % (test_loss/(batch_idx+1), 100.*correct/total, correct, total))

    # Save checkpoint.
    acc = 100.*correct/total
    if acc > best_acc:
        print('Saving..')
        state = {
            'model': model.state_dict(),
            'acc': acc,
            'epoch': epoch,
        }
        if not os.path.isdir('checkpoint'):
            os.mkdir('checkpoint')
        torch.save(state, './checkpoint/ckpt.pth')
        best_acc = acc
```
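The script writes a checkpoint but never reads one back. One possible way to restore it is sketched below, under a few assumptions: a small `nn.Linear` stands in for the real network, the file goes to a temporary directory rather than `./checkpoint/ckpt.pth`, and `strip_module_prefix` is a hypothetical helper. The helper is relevant because a model wrapped in `nn.DataParallel` stores its parameters under names that start with `module.`, which an unwrapped model will not recognize.

```python
import os
import tempfile

import torch
import torch.nn as nn


def strip_module_prefix(state_dict):
    """Remove the 'module.' prefix that nn.DataParallel adds to parameter names."""
    prefix = 'module.'
    return {(k[len(prefix):] if k.startswith(prefix) else k): v
            for k, v in state_dict.items()}


model = nn.Linear(4, 2)  # stand-in for the trained network

# Simulate the key names a DataParallel-wrapped model would save.
saved_weights = {'module.' + k: v for k, v in model.state_dict().items()}
state = {'model': saved_weights, 'acc': 91.5, 'epoch': 7}  # example values only

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, 'ckpt.pth')
    torch.save(state, path)
    # map_location='cpu' lets a GPU-trained checkpoint load on a CPU-only machine.
    checkpoint = torch.load(path, map_location='cpu')

restored = nn.Linear(4, 2)
restored.load_state_dict(strip_module_prefix(checkpoint['model']))
start_epoch = checkpoint['epoch'] + 1  # resume from the following epoch

print(torch.equal(restored.weight, model.weight))
```

If the model being restored is itself wrapped in `nn.DataParallel`, the prefix should be kept instead, since the wrapped model expects the `module.` names.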

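Finally, the main block begins with preprocessing: it defines the data transforms, loads the training and test sets, and wraps them in `DataLoader`s. It then creates a ResNet18 model and moves it to the GPU if one is available. Next it defines the loss function (cross-entropy) and the optimizer (stochastic gradient descent with momentum and weight decay), and sets up a learning-rate scheduler that multiplies the learning rate by 0.1 every 50 epochs. Last, it runs 200 epochs of training and testing; after each epoch, if the test accuracy reaches a new best, the current model parameters are saved.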
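The learning-rate schedule described above can be worked out by hand. The sketch below reproduces the arithmetic in plain Python rather than calling the scheduler; `lr_at_epoch` is a hypothetical helper, and it assumes the scheduler is stepped exactly once at the end of every epoch with a base rate of 0.1, a step size of 50, and a decay factor of 0.1.

```python
def lr_at_epoch(epoch, base_lr=0.1, step_size=50, gamma=0.1):
    """Learning rate used while training the given zero-based epoch."""
    return base_lr * gamma ** (epoch // step_size)


# One entry per 50-epoch phase of the 200-epoch run.
schedule = [lr_at_epoch(e) for e in (0, 50, 100, 150)]
for start, lr in zip((0, 50, 100, 150), schedule):
    print(f'epochs {start}-{start + 49}: lr = {lr:.4f}')
```

Under these assumptions, training starts at 0.1 and ends at 0.0001, dropping by a factor of ten at epochs 50, 100, and 150.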

```python
if __name__ == '__main__':
    best_acc = 0  # Start with 0 accuracy

    # Training data: random crop and horizontal flip for augmentation, then normalize.
    transform_train = transforms.Compose([
        transforms.RandomCrop(32, padding=4),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor(),
        transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
    ])

    # Test data: no augmentation, same normalization.
    transform_test = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
    ])

    trainset = datasets.CIFAR10(root='./data', train=True, download=True, transform=transform_train)
    trainloader = DataLoader(trainset, batch_size=128, shuffle=True, num_workers=2)

    testset = datasets.CIFAR10(root='./data', train=False, download=True, transform=transform_test)
    testloader = DataLoader(testset, batch_size=100, shuffle=False, num_workers=2)

    # Instantiate model
    device = 'cuda' if torch.cuda.is_available() else 'cpu'
    model = ResNet18().to(device)
    if device == 'cuda':
        model = torch.nn.DataParallel(model)
        cudnn.benchmark = True

    # Loss function and optimizer
    criterion = nn.CrossEntropyLoss()
    optimizer = optim.SGD(model.parameters(), lr=0.1, momentum=0.9, weight_decay=5e-4)
    scheduler = StepLR(optimizer, step_size=50, gamma=0.1)

    start_epoch = 0

    # Run training and testing
    for epoch in range(start_epoch, start_epoch+200):
        train(epoch)
        test(epoch)
```

This code shows the basic steps of implementing, training, and testing a deep learning model in PyTorch: defining the model architecture, setting up the loss function and optimizer, running forward and backward passes, adjusting the learning rate, and saving model checkpoints.

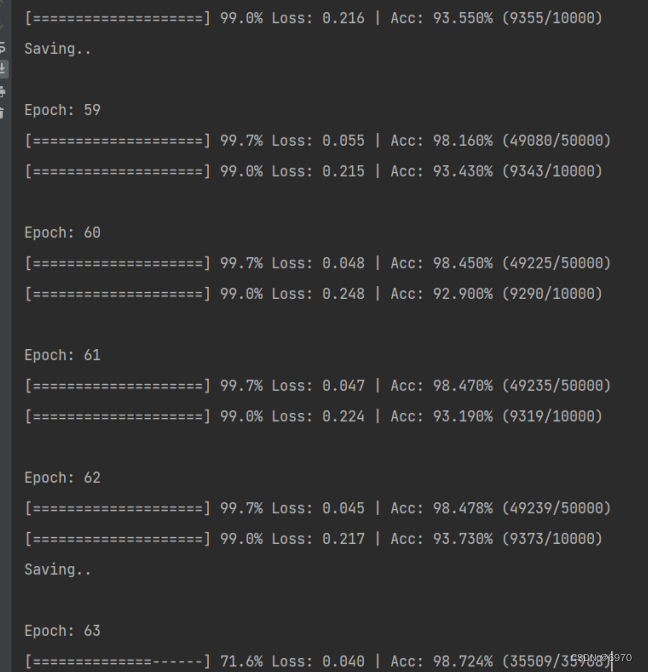

Below is the output from my run.

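I'd also like to invite you to join our neural network study group (group number 732818397), where we discuss questions and challenges related to neural networks. Beginners and experienced practitioners are both welcome, and I hope to see you there.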
