1. 爬取单个站点数据

1.1 对AERONET站点网址进行解析,并提取该网址下所有站点信息

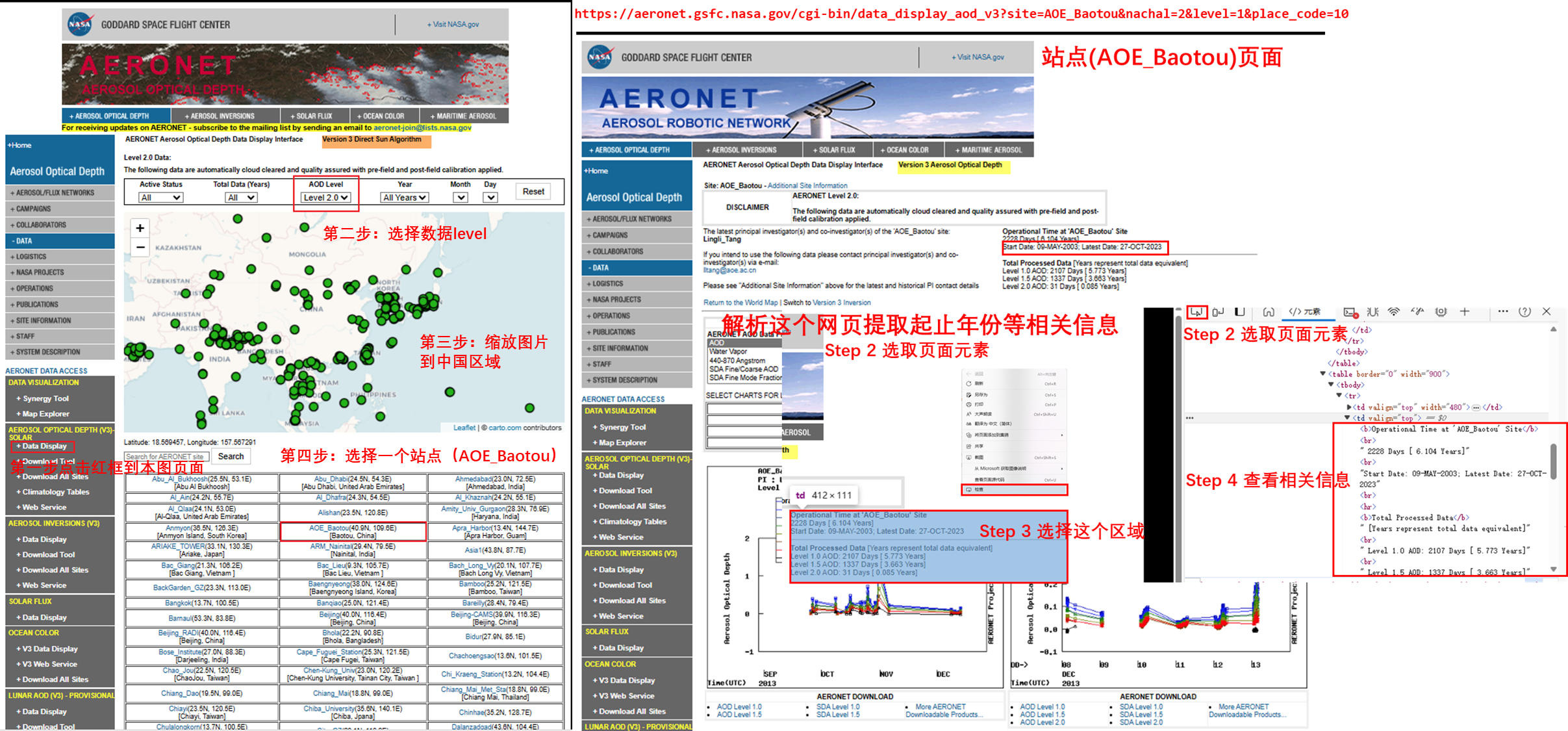

手动点击步骤:

点击AERONET官网https://aeronet.gsfc.nasa.gov/

点击左下 AEROSOL OPTICAL DEPTH (V3)-SOLAR -> Data Display

选择你想要的level(本文选择level2): AOD level:level2

缩放图中地图大小到你需要的范围,该页面下的站点信息会根据图中范围显示相应的站点

本文选择以下载AOE_Baotou站点数据为例

代码:该站点网站提取站点信息和起止时间 (利用代码解析右图Step4结果)

import re

import pandas as pd

from bs4 import BeautifulSoup

import requests

from pathlib import Path

# 单个链接

single_url = 'https://aeronet.gsfc.nasa.gov/cgi-bin/data_display_aod_v3?site=AOE_Baotou&nachal=2&level=1&place_code=10'

# 发起请求

response = requests.get(single_url)

beautifulSoup = BeautifulSoup(response.text, 'html.parser')

# 解析数据

station = 'AOE_Baotou' # 从URL或页面中获取

geoInfo = 'N/A' # 如果地理信息不在页面中,可以手动设置或设为'N/A'

# 使用'string'参数代替'text'参数,应对DeprecationWarning

pageUrl = beautifulSoup.find('a', string=re.compile(r'More AERONET Downloadable Products\.{3}')).get('href')

date = beautifulSoup.find(string=re.compile(r'Start Date.+')).split('-')

start_year = re.sub(r'\;.+', '', date[2])

latest_year = date[4]

# 准备数据

results = [[station, geoInfo, pageUrl, start_year, latest_year]]

输出结果

[['AOE_Baotou',

'N/A',

'webtool_aod_v3?stage=3®ion=Asia&state=China&site=AOE_Baotou&place_code=10',

'2003',

'2023']]

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?